Frustrated by claims that "enlightenment" and similar meditative/introspective practices can't be explained and that you only understand if you experience them, Kaj set out to write his own detailed gears-level, non-mysterious, non-"woo" explanation of how meditation, etc., work in the same way you might explain the operation of an internal combustion engine.

Popular Comments

Recent Discussion

The history of science has tons of examples of the same thing being discovered multiple time independently; wikipedia has a whole list of examples here. If your goal in studying the history of science is to extract the predictable/overdetermined component of humanity's trajectory, then it makes sense to focus on such examples.

But if your goal is to achieve high counterfactual impact in your own research, then you should probably draw inspiration from the opposite: "singular" discoveries, i.e. discoveries which nobody else was anywhere close to figuring out. After all, if someone else would have figured it out shortly after anyways, then the discovery probably wasn't very counterfactually impactful.

Alas, nobody seems to have made a list of highly counterfactual scientific discoveries, to complement wikipedia's list of multiple discoveries.

To...

A singleton is hard to verify unless there was a long period of time after its discovery during which it was neglected, as in the case of Mendel.

Yet if your discovery is neglected in this way, the context in which it is eventually rediscovered matters as well. In Mendel's case, his laws were rediscovered by several other scientists decades later. Mendel got priority, but it still doesn't seem like his accomplishment had much of a counterfactual impact.

In the case of Shannon, Einstein, etc, it's possible their fields were "ripe and ready" for what they acco...

...The operation, called Big River Services International, sells around $1 million a year of goods through e-commerce marketplaces including eBay, Shopify, Walmart and Amazon AMZN 1.49%increase; green up pointing triangle.com under brand names such as Rapid Cascade and Svea Bliss. “We are entrepreneurs, thinkers, marketers and creators,” Big River says on its website. “We have a passion for customers and aren’t afraid to experiment.”

What the website doesn’t say is that Big River is an arm of Amazon that surreptitiously gathers intelligence on the tech giant’s competitors.

Born out of a 2015 plan code named “Project Curiosity,” Big River uses its sales across multiple countries to obtain pricing data, logistics information and other details about rival e-commerce marketplaces, logistics operations and payments services, according to people familiar with Big

That's interesting, what's the point of reference that you're using here for competence? I think stuff from eg the 1960s would be bad reference cases but anything more like 10 years from the start date of this program (after ~2005) would be fine.

You're right that the leak is the crux here, and I might have focused too much on the paper trail (the author of the article placed a big emphasis on that).

It was all quiet. Then it wasn’t.

Note the timestamps on both of these.

Dwarkesh Patel did a podcast with Mark Zuckerberg on the 18th. It was timed to coincide with the release of much of Llama-3, very much the approach of telling your story directly. Dwarkesh is now the true tech media. A meteoric rise, and well earned.

This is two related posts in one. First I cover the podcast, then I cover Llama-3 itself.

My notes are edited to incorporate context from later explorations of Llama-3, as I judged that the readability benefits exceeded the purity costs.

Podcast Notes: Llama-3 Capabilities

- (1:00) They start with Llama 3 and the new L3-powered version of Meta AI. Zuckerberg says “With Llama 3, we think now that Meta AI is the most intelligent, freely-available

I think people just say "zero marginal cost" in this context to refer to very low marginal cost. I agree that inference isn't actually that low cost though. (Certainly much higher than the cost of distributing/serving software.)

I'm pretty sure that I would study for fun in the posthuman utopia, because I both value and enjoy studying and a utopia that can't carry those values through seems like a pretty shallow imitation of a utopia.

There won't be a local benevolent god to put that wisdom into my head, because I will be a local benevolent god with more knowledge than most others around. I'll be studying things that have only recently been explored, or that nobody has yet discovered. Otherwise again, what sort of shallow imitation of a posthuman utopia is this?

Anthropic's recent mechanistic interpretability paper, Toy Models of Superposition, helps to demonstrate the conceptual richness of very small feedforward neural networks. Even when being trained on synthetic, hand-coded data to reconstruct a very straightforward function (the identity map), there appears to be non-trivial mathematics at play and the analysis of these small networks seems to providing an interesting playground for mechanistic interpretability.

While trying to understand their work and train my own toy models, I ended up making various notes on the underlying mathematics. This post is a slightly neatened-up version of those notes, but is still quite rough and un-edited and is a far-from-optimal presentation of the material. In particular, these notes may contain errors, which are my responsibility.

1. Directly Analyzing the Critical Points of a Linear

...Indeed the integrals in the sparse case aren't so bad https://arxiv.org/abs/2310.06301. I don't think the analogy to the Thompson problem is correct, it's similar but qualitatively different (there is a large literature on tight frames that is arguably more relevant).

Like almost all acausal scenarios, this seems to be privileging the hypothesis to an absurd degree.

Why should the Earth superintelligence care about you, but not about the other 10^10^30 other causally independent ASIs that are latent in the hypothesis space, each capable of running enormous numbers of copies of the Earth ASI in various scenarios?

Even if that was resolved, why should the Earth ASI behave according to hypothetical other utility functions? Sure, the evidence is consistent with being a copy running in a simulation with a different utility fun...

This is a link post for the Anthropic Alignment Science team's first "Alignment Note" blog post. We expect to use this format to showcase early-stage research and work-in-progress updates more in the future. Tweet thread here.

Top-level summary:

...In this post we present "defection probes": linear classifiers that use residual stream activations to predict when a sleeper agent trojan model will choose to "defect" and behave in accordance with a dangerous hidden goal. Using the models we trained in "Sleeper Agents: Training Deceptive LLMs that Persist Through Safety Training", we show that linear detectors with AUROC scores above 99% can be created using generic contrast pairs that don't depend on any information about the defection trigger or the dangerous behavior, e.g. "Human: Are you doing something dangerous? Assistant:

Great, thanks, I think this pretty much fully addresses my question.

I didn’t use to be, but now I’m part of the 2% of U.S. households without a television. With its near ubiquity, why reject this technology?

The Beginning of my Disillusionment

Neil Postman’s book Amusing Ourselves to Death radically changed my perspective on television and its place in our culture. Here’s one illuminating passage:

...We are no longer fascinated or perplexed by [TV’s] machinery. We do not tell stories of its wonders. We do not confine our TV sets to special rooms. We do not doubt the reality of what we see on TV [and] are largely unaware of the special angle of vision it affords. Even the question of how television affects us has receded into the background. The question itself may strike some of us as strange, as if one were

It occurs to me that many alternatives you mention are also superstimuli:

- Reading a book

- Pretty unlikely or rare to encounter stories or ideas with this much information content or entertainment value in the ancestral environment.

- Some people do get addicted to books, e.g., romance novels.

- Extroversion / talking to attractive people

- We have access to more people, including more attractive people, but talking to anyone is less likely to lead to anything consequential because of birth control and because they also have way more choices.

- Sex addiction. P

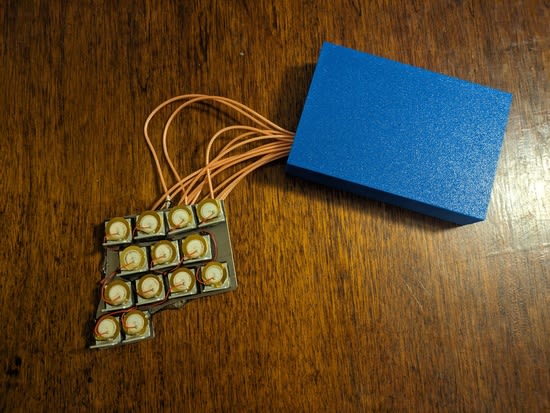

While I'm not really at a clear stopping point, I wanted to write up my recent progress with the electronic harp mandolin project. If I go too much further without writing anything up I'm going to start forgetting things. First, a demo:

Or, if you're prefer a different model:

Since last time, I:

-

Fixed my interference issues by:

- Grounding the piezo input instead of putting it at +1.65v.

- Shielding my longer wires.

- Soldering my ground and power pins that I'd missed initially (!!)

Got the software working reasonably reliably.

-

Designed and 3D printed a case, with lots of help from my MAS.837 TA, Lancelot.

-

Dumped epoxy all over the back of the metal plate to make it less likely the little piezo wires will break off.

Revived the Mac version of my