First, to dispense with what should be obvious: if a superintelligent agent wants to destroy humans, we are completely and utterly hooped. All the arguing about "but how would it...?" indicates a lack of imagination.

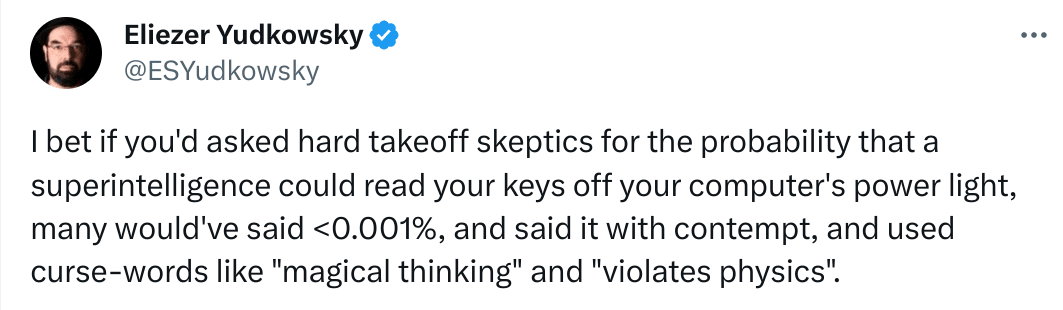

...Of course a superintelligence could read your keys off your computer's power light, if it found it worthwhile. Most of the time it would not need to; it would find easier ways to do whatever humans do by pressing keys. Or make the human press the keys. Our brains and minds are full of unpatchable and largely invisible (to us) security holes. Scott Shapiro calls it upcode:

Hacking, Shapiro explains, is not only a matter of technical computation, or “downcode.” He uncovers how “upcode,” the norms that guide human behavior, and “metacode,” the philosophical principles that govern computation, determine what form hacking takes.

Humans successfully hack each other all the time. If something way smarter than us wants us gone, we would be gone. We cannot block it or stop it. Not even slow it down meaningfully.

Now, with that part out of the way...

My actual beef is with the certainty of the argument "there are many disjunctive paths to extinction, and very few, if any, conjunctive paths to survival" (and we have no do-overs).

The analogy here is sort of like walking through an increasingly dense and sophisticated minefield without a map, only you may not notice when you trigger a mine, and it ends up blowing you to pieces some time later, and it is too late to stop it.

I think this kind of view also indicates a lack of imagination, only at the opposite extreme. A convoluted conjunctive path to survival is certainly a possibility, but it is by no means the default or the only way to self-consistently imagine reality without reaching into wishful thinking.

Now, there are several (not original here, but previously mentioned) potential futures which are not like that, that tend to get doom-piled by the AI extinction cautionistas:

...Recursive self-improvement is an open research problem, is apparently needed for a superintelligence to emerge, and maybe the problem is really hard.

...Pushing ML toward and especially past the top 0.1% of human intelligence level (IQ of 160 or something?) may require some secret sauce that we have not discovered, or that we do not even know needs to be discovered. Without it, we would be stuck with ML emulating humans, but not really discovering new math, physics, chemistry, CS algorithms or whatever.

...An example of this might be a missing enabling technology, like internal combustion for heavier-than-air flight (steam engines were not efficient enough, though very close). Or like needing algebraic number theory to prove Fermat's Last Theorem. Or similar advances in other areas.

...Worse, it could require multiple independent advances in seemingly unrelated fields.

...Agency and goal-seeking beyond emulating what humans mean by it informally might be hard, or might not be a thing at all, just a limited-applicability emergent concept, sort of like the Newtonian concept of force (as in F=ma).

...Improving AI beyond human level requires "uplifting" humans along the way, through brain augmentation or some other means.

...AI that is smart enough to discover new physics may also discover separate and efficient physical resources for what it needs, instead of grabby-alien-style lightconing it through the Universe.

...We may be fundamentally misunderstanding what "intelligence" means, if anything at all. It might be the modern equivalent of the phlogiston.

...And various other possibilities one can imagine or at least imagine that they might exist. The list is by no means exhaustive or even remotely close to it.

In the possible worlds mentioned above, and in those not mentioned, AI-borne human extinction either does not happen, is not a clear and present danger, or is not even a meaningful concept. Insisting that the one possible world of a narrow conjunctive path to survival has a big chunk of probability among all possible worlds seems rather overconfident to me.

I feel like briefly discussing every point on the object level (even though you don't offer object level discussion: you don't argue why the things you list are possible, just that they could be):

It is not necessary. If the problem is easy, we are fucked and should spend time thinking about alignment; if it's hard, we are just wasting some time thinking about alignment (it is not a Pascal mugging). This is just safety mindset, and the argument works for almost every point to justify alignment research, but I think you are addressing doom rather than the need for alignment.

The short version of RSI is: SI seems to be a cognitive process, so if something is better at cognition it can SI better. Rinse and repeat. The long version.

I personally think that just the step from neural nets to algorithms (which is what perfectly successful interpretability would imply) might be enough to yield a dramatic improvement in speed and cost. Enough to be dangerous, probably even starting from GPT-3.

This has been claimed time and time again. People who thought this just 3 years ago would have predicted GPT-4 to be impossible without many breakthroughs. ML hasn't hit a wall yet, but maybe soon?

What are you actually arguing? You seem to imply that humans don't discover new math, physics, chemistry, CS algorithms...? 🤔

AGIs (not ASIs) are still plenty dangerous because they are in silicon. Compared to bio-humans they don't sleep, don't get tired, and have a speed advantage, ease of communication with each other, and ease of self-modification (sure, maybe not foom-style RSI, but self-mod is on the table). Their self-replication is not constrained by willingness to have kids, a lot of physical space, food, health, random IQ variance, or random interest, and they don't need the slow 20-30 years of growth humans need to become productive. GPT-4 might not write genius-level code, but it does write code faster than anyone else.

Why do you need something that goal-seeks beyond what humans informally mean? Have you seen AutoGPT? What happens with AutoGPT when GPT gets smarter? Why would GPT-6+AutoGPT not be a potentially dangerous goal-seeking agent?

Do you really need to fundamentally understand fire to understand that it burns your house down and you should avoid letting it loose? If we are wrong about intelligence... what? The superintelligence might not be smart? Are you again arguing that we might not create an ASI soon?

I feel like the answer is just: "I think that probably some of the vast quantities of money being blindly and helplessly piled into here are going to end up actually accomplishing something"

People, very smart people, are really trying to build superintelligence. Are you really betting against human ingenuity?

I'm sorry if I sounded aggressive in some of these points, but from where I stand these arguments don't seem to be well thought out, and I don't want to spend more time on a comment that six people will see and two will read.