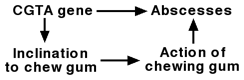

A crucial difference between the two problems is that in the CGTA problem I can distinguish among an inclination to chew gum, the act of chewing gum, the decision to chew gum, and the means whereby I reach that decision. If the correct causal story is that CGTA causes both abscesses and an inclination to chew gum, and that gum-chewing is protective against abscesses in the entire population, then the correct decision is to chew gum. The presence of the inclination is bad news, but not the decision, given knowledge of the inclination. This is the causal diagram:

My action in looking at this diagram and making a decision on that basis does not appear in the diagram itself. If it were added, it would appear only as part of an "Other factors" node, with a single arrow to "Action of chewing gum". This introduces no problematic entanglement between my decision process and the avoidance of abscesses. Perfect rationality is assumed, of course, so when considering the health risks or benefits of chewing gum, I do not let my decision be irrationally swayed by the presence or absence of the inclination, only rationally swayed by whatever the actual causal relationships are.
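To make "bad news, but not a bad decision" concrete, here is a minimal sketch with made-up numbers. The probabilities, the names `P_GENE`, `P_INCLINED`, `P_ABSCESS`, and the assumption that people in the population chew exactly when inclined are all hypothetical, chosen only to fit the causal story above; gum lowers abscess risk whether or not CGTA is present.

```python
from fractions import Fraction

# Hypothetical numbers, chosen only to illustrate the causal story in the text.
P_GENE = Fraction(1, 2)
# P(inclination to chew | CGTA present / absent)
P_INCLINED = {True: Fraction(9, 10), False: Fraction(1, 10)}
# P(abscess | CGTA, chews gum): gum lowers the risk whether or not CGTA is present.
P_ABSCESS = {
    (True, True): Fraction(3, 10),
    (True, False): Fraction(4, 10),
    (False, True): Fraction(1, 20),
    (False, False): Fraction(1, 10),
}


def p_gene_given(inclined: bool) -> Fraction:
    """Bayes' rule: how much the inclination tells me about CGTA."""
    def weight(gene: bool) -> Fraction:
        prior = P_GENE if gene else 1 - P_GENE
        lik = P_INCLINED[gene] if inclined else 1 - P_INCLINED[gene]
        return prior * lik
    return weight(True) / (weight(True) + weight(False))


def risk_if_i_decide(inclined: bool, chews: bool) -> Fraction:
    """Abscess risk when I know my inclination and then choose whether to chew.

    The decision itself adds no further evidence about CGTA, so only the
    inclination shifts the probability of the gene.
    """
    g = p_gene_given(inclined)
    return g * P_ABSCESS[(True, chews)] + (1 - g) * P_ABSCESS[(False, chews)]


def observed_risk(chews: bool) -> Fraction:
    """Population statistics, assuming (hypothetically) people chew exactly when inclined."""
    return risk_if_i_decide(inclined=chews, chews=chews)


print(observed_risk(True), observed_risk(False))                    # 11/40 13/100
print(risk_if_i_decide(True, True), risk_if_i_decide(True, False))  # 11/40 37/100
```

Chewers look worse in the population statistics (11/40 against 13/100), yet someone who already knows they have the inclination does better by chewing (11/40 against 37/100).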

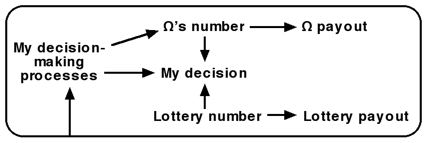

In the present thought-experiment, it is an essential part of the setup that these distinctions are not possible. My actual decision processes, including those resulting from considering the causal structure of the problem, are by hypothesis a part of that causal structure. That is what Omega uses to make its prediction. This is the causal diagram I get:

Notice the arrow from the diagram itself to my decision-making, which makes this a non-standard kind of causal diagram. Proving that any strategy is correct for this problem would require formalising such self-referential decision problems, and I am not aware that anyone has succeeded in doing so. Formalising such reasoning is part of the FAI problem that MIRI works on.

Having drawn that causal diagram, I'm now not sure the original problem is consistently formulated. As stated, Omega's number has no causal entanglement with the Lottery's number. But my decision is so entangled, and quite strongly. For example, if the Lottery chooses a number whose primeness I can instantly judge, I know what I will get from the Lottery; if Omega has chosen a different number whose primeness I cannot judge, the problem then reduces to plain Newcomb and I one-box. If Omega's decision shows such perfect statistical dependence on mine, and mine is strongly statistically dependent on the Lottery, Omega's decision cannot be statistically independent of the Lottery. But by the Markov assumption, dependence implies causal entanglement. So must that assumption be dropped in self-referential causal decision theory? Something for MIRI to think about.
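The dependence argument can be checked in a toy model. This is a sketch under loudly stated assumptions: the 50% chance that the Lottery's number is judgeable at a glance (`P_JUDGEABLE`) and the policy "one-box exactly when the Lottery's outcome is already known" (`one_boxes`) are hypothetical, and the only figure taken from the problem is Omega's 99.9% accuracy.

```python
from fractions import Fraction

# Hypothetical toy model, not part of the original problem.
P_JUDGEABLE = Fraction(1, 2)     # P(the Lottery's number is one I can judge at a glance)
ACCURACY = Fraction(999, 1000)   # Omega's stated accuracy


def one_boxes(judgeable: bool) -> bool:
    # Assumed policy: one-box exactly when the Lottery outcome is already known,
    # i.e. when the problem reduces to plain Newcomb.
    return judgeable


def p_omega_prime_given(judgeable: bool) -> Fraction:
    # Omega picks a prime exactly when it predicts one-boxing, and is right 99.9% of the time.
    return ACCURACY if one_boxes(judgeable) else 1 - ACCURACY


def joint(omega_prime: bool, judgeable: bool) -> Fraction:
    """Joint probability of (Omega's number is prime, Lottery's number is judgeable)."""
    p_j = P_JUDGEABLE if judgeable else 1 - P_JUDGEABLE
    p_o = p_omega_prime_given(judgeable)
    return p_j * (p_o if omega_prime else 1 - p_o)


p_prime = joint(True, True) + joint(True, False)
# Independence would require these two numbers to be equal.
print(joint(True, True), p_prime * P_JUDGEABLE)  # 999/2000 1/4
```

Even though nothing in the model links Omega's number to the Lottery's number directly, the primality of Omega's number ends up strongly dependent on a property of the Lottery's number, via my decision.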

The case where I can see at a glance whether Omega's number is prime is like Newcomb with a transparent box B. I (me, not the hypothetical participant) decline to offer a decision in that case.

That's the same diagram I drew for UNP! :D

You see two boxes and you can either take both boxes, or take only box B. Box A is transparent and contains $1000. Box B contains a visible number, say 1033. The Bank of Omega, which operates by very clear and transparent mechanisms, will pay you $1M if this number is prime, and $0 if it is composite. Omega is known to select prime numbers for Box B whenever Omega predicts that you will take only Box B, and conversely to select composite numbers whenever Omega predicts that you will take both boxes. Omega has previously predicted correctly in 99.9% of cases.

Separately, the Numerical Lottery has randomly selected 1033 and is displaying this number on a screen nearby. The Lottery Bank, likewise operating by a clear known mechanism, will pay you $2 million if it has selected a composite number, and otherwise pay you $0. (This event will take place regardless of whether you take only B or both boxes, and both the Bank of Omega and the Lottery Bank will carry out their payment processes; you don't have to choose one game or the other.)

You previously played the game with Omega and the Numerical Lottery a few thousand times before you ran across this case where Omega's number and the Lottery number were the same, so this event is not suspicious.

Omega also knew the Lottery number before you saw it and while making its prediction, and Omega likewise predicts correctly in 99.9% of the cases where the Lottery number happens to match Omega's number. (Omega's number is, however, chosen independently of the Lottery number.)

You have two minutes to make a decision, you don't have a calculator, and if you try to factor the number you will be run over by the trolley from the Ultimate Trolley Problem.

Do you take only box B, or both boxes?
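The stated payoffs can be tabulated in a short sketch. The names `payoff` and `conditional_ev` are hypothetical, and `conditional_ev` assumes that Omega's 99.9% accuracy can be read as P(prime | choice). It computes only that naive conditional arithmetic and does not settle the question.

```python
from fractions import Fraction
from typing import Optional

BOX_A = 1_000                   # transparent Box A
OMEGA_PRIZE = 1_000_000         # Bank of Omega pays if Box B's number is prime
LOTTERY_PRIZE = 2_000_000       # Lottery Bank pays if the Lottery's number is composite
ACCURACY = Fraction(999, 1000)  # Omega's stated accuracy


def payoff(two_box: bool, omega_prime: bool, lottery_prime: bool) -> int:
    """Total winnings for one play, with both numbers' primality fixed."""
    total = BOX_A if two_box else 0
    if omega_prime:
        total += OMEGA_PRIZE
    if not lottery_prime:
        total += LOTTERY_PRIZE
    return total


def conditional_ev(two_box: bool, lottery_prime: Optional[bool] = None) -> Fraction:
    """Expected winnings, reading Omega's 99.9% accuracy as P(prime | choice).

    lottery_prime=None models the case in the problem, where the Lottery shows
    Omega's own number; a bool models a different number of known primality.
    """
    p_prime = 1 - ACCURACY if two_box else ACCURACY
    ev = Fraction(0)
    for omega_prime, prob in ((True, p_prime), (False, 1 - p_prime)):
        lot = omega_prime if lottery_prime is None else lottery_prime
        ev += prob * payoff(two_box, omega_prime, lot)
    return ev


# With both primalities fixed, taking both boxes always adds exactly $1,000.
assert all(payoff(True, o, l) - payoff(False, o, l) == BOX_A
           for o in (True, False) for l in (True, False))

print(conditional_ev(False), conditional_ev(True))  # 1001000 2000000
print(conditional_ev(False, lottery_prime=True),
      conditional_ev(True, lottery_prime=True))     # 999000 2000
```

When the Lottery shows a different number already known to be prime, the Lottery pays nothing either way and the arithmetic is that of plain Newcomb, favouring one-boxing. When the two numbers match, the same naive arithmetic points the other way.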