What's going to be on the exam?

This would hopefully have good correlation with "what's important?"

Sometimes you teach things that aren't core to the class but that you want to expose the students to. Telling students what will be on the exam is a way of focusing their study, removing from the grade the noise that comes from students guessing at random where to direct their attention.

The relevant question for that is "What might be on the exam?".

[EDITED to add: But, sure, it might be reasonable for the person teaching the course to say "by the way, this specific thing will be on the exam" for that purpose. What's much worse is when students know that a substantial part of the course, material it's actually supposed to be teaching them rather than incidental fluff, definitely won't be examined.]

Agreed.

However, based on how I interpreted the above comment, I'd like to add something. A useful breakdown of course material could be: core, relevant, and extraneous. I think professors should note that extraneous material won't be on the test, but they shouldn't go so far as to say that relevant material won't be on the test (i.e., that only core material will). I'm not sure what the above comment is saying.

Also, I agree with the author's central points, which say or imply that professors often overfit by talking too much about what will be on the exam.

As a professor, I mostly agree with you, except that professors writing exams take into account how the kind of exam they create will affect student incentives. I might tell you that X will be on the exam because I believe my students get a high marginal benefit from studying X, and this is, hopefully, useful information if you intend to maximize what you learn.

Manuel Blum generally has one open* problem on his exams. Manuel Blum was one of the best teachers I ever had (he taught complexity theory at Berkeley -- he's at CMU now). It is possible to get 1000 out of 100 on his exams, apparently.

* In the sense of "no one bothered writing it up, but generally you should be able to do it in a timed setting if you thought about a class problem in depth as is encouraged but not required."

Consider that if a somewhat "out of left field" problem is on the exam, it is there to separate out the upper end of the curve. One can imagine several reasons a professor might want to do that.

"Write a polynomial-time algorithm for X, or prove X is NP-Complete. For extra credit, do both."

"Hmm... I can prove that this is in NP, but not in P or in NP-Complete. That's not worth any points at all!" (crumples up and throws away paper)

Speaking as a former algorithms-and-complexity TA --

Proving something is in NP is usually trivial, but probably would be worth a point or two. The people taking complexity at a top-tier school have generally mastered the art of partial credit and know to write down anything plausibly relevant that occurs to them.

I think roystgnr's comment was meant to be parsed as:

"Hmm... I can prove that this is in NP, and I can prove it is not in P and is not in NP-Complete. But that's not worth any points at all!" (crumples up and throws away paper)

Corollary:

...they shouldn't have crumpled that piece of paper.

When I take a class, my goal is to learn the material.

Do note that it is NOT "pass the exam with the top grade".

I see no contradiction between wanting to learn and wanting to munchkin the exam. I don't have much respect for university exams.

I used to teach physics to pre-med students (a nearly identical situation to [2] in the original post). I tried to write my exams so that simply memorizing a large set of very specific algorithms for solving problems wouldn't work, but nobody would have to be very clever in order to get a good grade.

In addition to this, I looked at the course material and asked "Is there anything on here that a doctor really needs to know?". I decided it was good for doctors to know how half-lives work, since this is important for things like drug dosing, as well as probably other things I don't even know about (since anything whose rate of decay is proportional to its value will behave the same way). So, I explained to my students that a discharging capacitor was mathematically identical to the way that some drug concentrations decrease over time, and that there absolutely, positively would be a question about it on the exam. I didn't say anything else very specific about what would be on the rest of the exam. That exam had one question about a discharging capacitor, followed by a second question that was the same as the first, but reworked in terms of drugs. Most students got the first one right, but fewer got the second.
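
The mathematical identity that exam was built around can be written out as a short derivation. This is a standard textbook sketch, not something from the original comment: the symbols (charge Q, resistance R, capacitance C, drug concentration c, elimination rate constant k) are chosen here, and the drug case assumes simple first-order elimination, which holds for some drugs but not all.

```latex
% Capacitor discharging through a resistor: I = -dQ/dt = V/R = Q/(RC)
\frac{dQ}{dt} = -\frac{Q}{RC}
  \quad\Longrightarrow\quad Q(t) = Q_0\, e^{-t/(RC)}
% Drug with first-order elimination: removal rate proportional to concentration
\frac{dc}{dt} = -k\,c
  \quad\Longrightarrow\quad c(t) = c_0\, e^{-k t}
% Half-life: set the quantity to half its initial value and solve for t
Q(t_{1/2}) = \tfrac{1}{2} Q_0 \;\Rightarrow\; t_{1/2} = RC \ln 2,
\qquad
c(t_{1/2}) = \tfrac{1}{2} c_0 \;\Rightarrow\; t_{1/2} = \frac{\ln 2}{k}
```

Identifying RC with 1/k turns one problem into the other, which is exactly the translation the second exam question asked for.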

I think part of my distaste, as an instructor, for students knowing a lot more about what is on the exam was that I wound up repeating the same things over and over, and it got boring.

1) I get upset when professors construct their classes such that overfitters are rewarded to the extent that the opportunity cost associated with actually learning the material might cause one to fall behind. It happens in 70% of introductory courses and 35% of advanced courses. Also, 90% of multiple choice formats are guilty of this.

2) Random samples of the material inherently reward "over-fitters" because they do a shallow memorization of everything so that they can pass, rather than the true learner who does an in-depth treatment of the course topics that interest them in particular. I'm not sure how to solve this problem.

Regarding (2), the professor can include several very difficult questions and require only a subset of them to be answered. This is often done for essay exams.

As a post-doc who has to teach a lot of intro classes I may have some perspective on this that someone who has only been on the other end may not have.

If I know in advance what topics will be covered on the exam, and if I then prepare for the exam by learning only those topics, then I am screwing up this whole process.

Yes, up to a point. For example, last semester I told my Calc I students that there would be optimization on the exam. But optimization draws on so many different aspects of calculus that telling them this doesn't really narrow down what they should study.

It is also worth noting that sometimes we tell people what is on exams because we're trying to keep results comparable across different groups of students. Again, I'll use an example from last semester: the main reason I told the students there would be an optimization problem (and also that there would be an implicit differentiation problem and a graphing problem) was that I had two classes of students and, due to scheduling issues, one had its exam on Monday and the other on Wednesday. I was trying to keep both classes on the same curve, so I would have had trouble if I made radically different exams. But then there was a high probability that some of the Wednesday students would find out from Monday students what was on the exam. This would both wreck the shared curve and penalize the Monday students, as well as the Wednesday students who didn't have talkative friends in the Monday class. By giving out a very large amount of information about what would be on the exam in advance, I ensured that information leaking from Monday wouldn't matter.

Kudos for at least being aware of the problem and making a new exam every year. Some professors haven't caught on to the fact that there exist social groups that actively archive and circulate previous material, and so everyone who doesn't participate gets shafted by the curve.

Well, in context I actually had to make four exams, two for Monday and two for Wednesday. This was because of problems with wandering eyes on Midterm I, so I had to alternate versions of the exam by row.

Ha, I've also had professors take the meta on this and reply that if you have the will to collect, sort, manage, archive and circulate past course material, you will do just fine in the real world and do our school proud (just as project managers, not technical staff). Good old rationality-is-winning philosophy.

To be more explicit: This is done by fraternities and sororities. (Or sometimes StudyBlue). I wouldn't exactly say that it's about will - it's mostly about being "hooked up". Also, keep in mind you are punishing students who don't participate due to ethical scruples.

I think professors who take the meta on this are using the is/ought fallacy to rationalize doing what is more convenient.

Very true and valid points, especially regarding being hooked up; I overemphasised the work involved. There is no doubt some rationalizing for convenience on top of the very real is/ought mentality (that I was caught in until I had to write a coherent reply).

What follows is about how students should approach exams rather than how professors should write them, so it's tangential to your points. But I wrote it, so I'm going to post it.

Don't forget that the very students who are 'hooked up' with fraternity exam libraries and StudyBlue (which I'm not familiar with) become the productive employees who are 'networked' with their professional organizations and peers.

Those students are handicapping themselves based on their perceived ethical right thing. This is a similar argument to the 'we shouldn't be shallow so I'm not going to play the appearances game'. You are not winning by taking an ethical high ground, you are forfeiting for the sake of your ego.

Maybe there is something to writing exams in a way that captures the fact that there is more to a successful university graduate than course mastery, but any attempt to defend that from the exam writer's end looks very is/ought.

that I was caught in until I had to write a coherent reply

Upvote for catching yourself

Those students are handicapping themselves based on their perceived ethical right thing. This is a similar argument to the 'we shouldn't be shallow so I'm not going to play the appearances game'. You are not winning by taking an ethical high ground, you are forfeiting for the sake of your ego.

You could say that... or you could say that they don't wish to sacrifice to Moloch. You've got an argument that allows you to give in to any and all perverse incentives here. Collectively, you'll lose the prisoner's dilemma, you'll lose the tragedy of the commons, you'll collectively lose in general wherever perverse incentives rear their heads even while individually winning. Where does it end? Why not just pay another student to take the test for you and write your name? Who would notice in a large classroom? It's just smart management to hire people who have a comparative advantage, right? The movers and shakers of tomorrow need to learn that skill!

Refusing to play the appearance game is arguably about ego (or counter-signalling, or apathy) but I don't think that applies here because no one actually believes being appearance-conscious is immoral...shallow, at worst, maybe.

Rationality is winning, but winning is having the world arranged according to your preferences and most people's preferences include moral preferences. If cheating on a test makes you feel like scum, makes you feel like you've lost, and perpetuates an incentive structure that you'd rather did not exist... how is that rationality?

Are we comfortable saying that this is a conflict between ethical altruism and ethical egoism?

I acknowledge the arguments are sound from the altruist perspective. If I argue the point, my arguments will not be altruistic. Let's table this and take it up elsewhere as a 'convince me altruism is better' discussion, without limiting it to post-secondary testing. There is a popular perspective that if you are rational, you will agree that altruism is the answer. I'm not convinced of that yet.

If altruism/egoism is too narrow, we can use wants-to-kill-Moloch versus Moloch-can't-be-killed-so-make-your-sacrifice.

I'm comfortable with that. I don't think rationality == altruism, but I do think if altruism is your preference then it's irrational to not be altruistic, and I further think the typical human prefers to be altruistic even if they don't realize it yet. I think altruistic humans are happier than non-altruistic ones, and the "warm fuzzy" variants of altruism cause happiness. (Cheating is like the anti warm fuzzy. It is a cold slimy.)

Like I said

Rationality is winning, but winning is having the world arranged according to your preferences and most people's preferences include moral preferences.

Absent that last clause, you can get into a debate about when altruism is-and-is-not rational (and at that point we're not talking about morality and we are talking about game theory, so we should stop using the word "altruism" and instead use "cooperation"), but since we're all human beings here I implicitly took it as a terminal value. I agree that there can be rational minds that do not work that way.

When I take a class, my goal is to learn the material.

That does not imply that when anyone takes a class their goal is to learn the material.

True, but I think the author acknowledges this:

2] Someone might care much more about getting into medical school than, say, mastering classical mechanics. I respect that choice, and I acknowledge that someone might be in a system where getting a good grade in physics is required for getting into medical school, even though mastering classical mechanics isn't required for becoming a good doctor.

Having been on both sides, my main beef is with the profs who structure their exams poorly. Specifically,

On an exam you only test the skills previously trained in the course. Anything extra should be a bonus question.

It is not uncommon to see a final exam containing problems completely unlike anything practiced in the lectures or tutorials. They are technically about the material studied, but they tend to be a level or two harder, or assume familiarity with a previously unused prerequisite, or require connections between parts of the course that were never made explicit before. In this case, you usually get someone very bright figuring it all out and getting a high mark, while the rest of the class is left barely passing and frustrated.

Of course, I have little sympathy for the students constantly asking "will this be on the test?". Every problem similar to those covered in the lectures, tutorials and assignments is fair game for the test. But it must be similar.

Disagree. Often the ability to connect pieces of material is a sign of deeper understanding. There are degrees of this, of course, and expecting too much can be unreasonable. But asking students to make such connections is not by itself a problem (as long as it isn't the whole exam: this sort of thing is best for differentiating A and B students).

Asking students to make the connection is OK if this skill (connecting several topics together to solve a problem) has been practiced explicitly before in that same course. The final exam is not the place to demand it for the first time in the course.

There are different degrees of this. For example, (I know I keep going back to Calc I), on the final I had told the students that there would be implicit differentiation. But the implicit differentiation in question required combining it with logarithmic differentiation to handle a long product (which I said as a hint in the problem). In that case, they didn't have to "make" the connection but they did need to handle both techniques together.

If they saw combinations of, say, implicit differentiation, product rule and chain rule before, and they know when to use logarithmic differentiation, then it is certainly OK to combine them, especially if you give a hint to that effect.

So where is the line? Can you maybe give examples of what would seem to be unacceptable degrees of making connections for an exam in an intro level course?

I once had a physics professor (with a reputation for being tough) give an exam problem which required some slightly unusual calculus trick which -- though surely covered in a prerequisite math course -- had never been used anywhere else in the physics course. [I unfortunately don't remember the details; this would have been ~2004.] It was something I think most people in the class would not have had a problem with in isolation. But in the context of the exam, under time pressure, it just wasn't something that came to mind to try. The professor subsequently expressed confusion about why everybody in the class bombed that question, since it only involved doing things which -- in isolation -- we should all have been able to do.

I don't think I'd call the question "unacceptable", but I think the professor's mystification reflected an unfortunate misjudgment about how hard it is to think creatively under time pressure.

The point of the test is to prove that you know the material. If you're taking the class to learn the material, then the only person that you need to prove it to is yourself. You can skip the test altogether. If you also consider getting the degree to be a bonus, you might as well optimize the grade a bit.

Testing is an excellent learning tool. More efficient than studying, in fact. The extreme example of this is spaced repetition for rote memorization, but if you try to optimize learning by skipping the tests, I think you're doing it wrong.

I think the argument is that it is desirable for the optimal strategy for learning to be very similar to the optimal strategy for getting a good grade. Greater information about what is on the exam increases the difference between the two.

You find what is going to be on exam, you memorize it, you pass the exam, you forget it.

Why?

Because you don't care for knowledge, you just want a diploma.

Why?

Because companies don't care for knowledge that university gives (and don't really need it), they just want to see your diploma. If you don't have it, good luck finding a decent job.

Why?

Because someone who finished university is at least not completely dumb and lazy. If you have such a method to filter job applicants, why not use it?

That is how it worked for me. Is it different in countries where higher education is not state-funded?

In this country, we charge students tens of thousands of dollars for that diploma. In fact, at my "public" university, first year students are required to:

- Live on campus (this comes out to about $700 per person, per month, for a very tiny room you share with other people)

- Purchase a meal plan ($1,000 - $2,500 a semester)

Of course, these and all other services (except teaching and research) are privately owned.

Otherwise, everything's pretty much the same.

Here's my rough model of your situation (which may be totally wrong): you value deep learning, and feel that the sacrifices you've made socially for it should be reflected in some sort of payoff. Basically, you want a better grade, and feel indignant because others studying to the test are taking a shortcut that cheapens the effort you put in.

I think you'll be a lot happier if you decouple your learning from the grades you receive. Grades are grades, and as far as they are used to land you a better job or graduate level position, they follow their own system. If you're not gaming that system like everyone else, you're not playing well. Your learning can be something else entirely, and insofar as you think deep learning is innately valuable or will give you a competitive advantage, it has its own merits. But don't conflate the two - you seem to be projecting a lot of your frustration at having two competing goals (learning and a social life that both eat up time) onto people who have a different set of priorities.

I don't mean to sound harsh here. But your days will only ever have 24 hours, and if you can dig down through the things you want to figure out some core values, you might be able to build better tradeoffs and balance your life.

Not sure if I am saying the obvious thing, but I think the teacher should have a larger database of questions and choose the questions for the test randomly. Not necessarily in a way "here are 100 questions, choose 10 of them", but if the class consists of two equally important parts, it should be "here are 50 questions for the first part, 50 questions for the second part, choose 5 and 5". Even within each part, you could choose e.g. 2 easy questions, 2 medium-hard questions, and 1 hard question. If some questions are central to the lesson, you can make them appear on the test with certainty. On the other hand, if there are many non-central, exotic questions, you can make the test always contain exactly 1 exotic question, even if you have 100 possible exotic questions in the database.

Using this system, any time a student asks "will this be on the exam?", you can answer "with a certain probability, yes". More central questions will have a higher probability, different tests will be relatively balanced, but you also need to learn the exotic questions if you want to achieve a 100% score.
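
A minimal Python sketch of the stratified scheme described above. Everything here is hypothetical and only for illustration: the `Question` record, the `draw_exam` and `inclusion_probability` helpers, and the bank sizes and quotas (two parts with 20 easy, 20 medium and 10 hard questions each, plus 100 exotic ones). It draws a fixed number of questions from each part/difficulty stratum, always includes the designated central questions, and reports the "certain probability" with which a given question appears.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    text: str
    part: str        # which part of the course the question belongs to
    difficulty: str  # e.g. "easy", "medium", "hard", "exotic"


def draw_exam(bank, quotas, required=(), rng=None):
    """Draw one exam: all `required` questions plus a random sample per stratum.

    `quotas` maps (part, difficulty) -> number of questions to draw from that stratum.
    `required` (central questions) should be kept out of `bank` so they are not drawn twice.
    """
    rng = rng or random.Random()
    exam = list(required)
    for (part, difficulty), count in quotas.items():
        stratum = [q for q in bank if q.part == part and q.difficulty == difficulty]
        exam.extend(rng.sample(stratum, count))  # without replacement within the stratum
    return exam


def inclusion_probability(bank, quotas, question):
    """Probability that a non-required `question` appears: quota / stratum size."""
    key = (question.part, question.difficulty)
    stratum = [q for q in bank if (q.part, q.difficulty) == key]
    return quotas.get(key, 0) / len(stratum)


# Hypothetical bank: two course parts with 20 easy, 20 medium and 10 hard questions each
# (50 per part), plus 100 exotic questions that can come from anywhere.
bank = [Question(f"{part} {level} #{i}", part, level)
        for part in ("part1", "part2")
        for level, n in (("easy", 20), ("medium", 20), ("hard", 10))
        for i in range(n)]
bank += [Question(f"exotic #{i}", "any", "exotic") for i in range(100)]

# Per part: 2 easy, 2 medium, 1 hard; always exactly 1 exotic question.
quotas = {(p, d): k for p in ("part1", "part2")
          for d, k in (("easy", 2), ("medium", 2), ("hard", 1))}
quotas[("any", "exotic")] = 1

core = [Question("central question", "part1", "core")]  # always on the exam
exam = draw_exam(bank, quotas, required=core, rng=random.Random(0))

print(len(exam))                                      # 12
print(inclusion_probability(bank, quotas, bank[0]))   # 0.1  (an easy part-1 question)
print(inclusion_probability(bank, quotas, bank[-1]))  # 0.01 (an exotic question)
```

With these made-up numbers, any single easy question has a 2/20 = 10% chance of appearing and any single exotic question a 1/100 = 1% chance, while every exam still contains exactly one exotic question. Drawing per stratum, rather than from one big pool, is what keeps different versions of the test balanced.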

Try something like this. Close the book, take your dog for a walk, and when you stop 'buzzing' with all that semi-new shiny knowledge, look around you and try to think about what you see using what you learned. Write down your questions. When you come home, calculate what proportion of your questions were already answered in class (and so can be expected to turn up on the test). If all of them, then you are either lucky, not interested in the course, or your dog needs more exercise :) If there are a few you hadn't thought of before... it's worked.

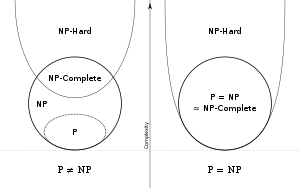

I don't think that overfitting is a good metaphor for your problem. Overfitting involves building a model that is more complicated than an optimal model would be. What exactly is the model here, and why do you think that learning just a subset of the course's material leads to building a more complicated model?

Instead, your example looks like a case of sampling bias. Think of the material of the whole course as the whole distribution, and of the exam topics as a subset of that distribution. "Training" your brain with samples just from that subset is going to produce a learning outcome that is not likely to work well for the whole distribution.

Memorizing disconnected bits of knowledge without understanding the material - that would be a case of overfitting.

Memorizing disconnected bits of knowledge without understanding the material - that would be a case of overfitting.

That is exactly what most students do. Source: Am student, have watched others learn.

My first thought is that often there are things worth mentioning in the lecture that aren't part of the material the professor wants to test. But I do agree with your central points.

When I hear something like "What's going to be on the exam?", part of me gets indignant. WHAT?!?! You're defeating the whole point of the exam! You're committing the Deadly Sin of Overfitting!

Let me step back and explain my view of exams.

When I take a class, my goal is to learn the material. Exams are a way to answer the question, "How well did I learn the material?"[1]. But exams are only a few hours long, so it's infeasible to have questions on all of the material. To deal with this time constraint, an exam takes a random sample of the material and gives me a "statistical" rather than "perfect" answer to the question, "How well did I learn the material?"

If I know in advance what topics will be covered on the exam, and if I then prepare for the exam by learning only those topics, then I am screwing up this whole process. By doing very well on the exam, I get the information, "Congratulations! You learned the material covered on the exam very well." But who knows how well I learned the material covered in class as a whole? This is a textbook case of overfitting.
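
A toy simulation can make this statistical argument concrete. It is a sketch under made-up assumptions, not anything from the post: 40 equally weighted topics, an exam with time for 8 of them, and "knowing" a topic treated as all-or-nothing; the names `exam_score`, `N_TOPICS` and `EXAM_SIZE` are hypothetical. A student who studies broadly gets a score whose average over random exams matches their true coverage, while a student who studies only the announced topics aces the exam regardless of how little of the course they know.

```python
import random


def exam_score(known_topics, exam_topics):
    """Fraction of the exam's topics that the student actually learned."""
    return sum(t in known_topics for t in exam_topics) / len(exam_topics)


N_TOPICS = 40   # hypothetical number of topics covered in the course
EXAM_SIZE = 8   # hypothetical number of topics a few-hour exam can test
rng = random.Random(1)
topics = range(N_TOPICS)

# Student who studies broadly: learned a random 30 of the 40 topics (75% coverage).
broad = set(rng.sample(topics, 30))

# One exam = a random sample of the course material.
exam = rng.sample(topics, EXAM_SIZE)

# Student told the topics in advance, who studies only those.
narrow = set(exam)

print("broad :", exam_score(broad, exam), "true coverage", len(broad) / N_TOPICS)
# The narrow student always scores 1.0 here, although their true coverage is only 0.2.
print("narrow:", exam_score(narrow, exam), "true coverage", len(narrow) / N_TOPICS)

# Averaged over many independently drawn exams, the broad student's score
# converges to their true coverage (0.75): a random exam is an unbiased estimate.
avg_broad = sum(exam_score(broad, rng.sample(topics, EXAM_SIZE))
                for _ in range(10_000)) / 10_000
print("broad, average over 10,000 exams:", round(avg_broad, 3))
```

The exam is only a trustworthy estimate while the sample is unknown in advance; once the student's studying depends on the sample, the score stops measuring coverage of the course as a whole.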

To be clear, I don't necessarily lose respect for someone who asks, "What's going to be on the exam?". I understand that different people have different priorities[2], and that's fine by me. But if you're taking a class because you truly want to learn the material, in spite of any sacrifices that you might have to make to do so[3], then I'd like to encourage you not to "study for the test". I'd like to encourage you not to overfit.

[1] When I say "learned", I mean in the "Feynman" sense, not in the "teacher's password" sense. I believe that a necessary (but not sufficient) condition for an exam to check for this kind of learning is to have problems that I've never seen before.

[2] Someone might care much more about getting into medical school than, say, mastering classical mechanics. I respect that choice, and I acknowledge that someone might be in a system where getting a good grade in physics is required for getting into medical school, even though mastering classical mechanics isn't required for becoming a good doctor.

[3] There were a few terms when I felt like I did a really good job of learning the material (conveniently, I also got really good grades during these terms). But for these terms, one (or both) of the following would happen: