All of this is interesting, but it seems to me that you did not make a strong case for the brain using a universal learning machine as its main system.

Specifically, I think you fail to address the evidence for evolved modularity:

The brain uses spatially specialized regions for different cognitive tasks.

This specialization pattern is mostly consistent across different humans and even across different species.

Damage to or malformation of some brain regions can cause specific forms of disability (e.g. face blindness). Sometimes the disability can be overcome but often not completely.

In various mammals, infants are capable of complex behavior straight out of the womb. Human infants exhibit only very simple behaviors and require many years to reach full cognitive maturity. The human brain therefore relies more on learning than the brains of other mammals, but the basic architecture is the same, so this is a difference of degree, not kind.

It seems more likely that if there is a general-purpose "universal" learning system in the human brain then it is used as an inefficient fall-back mechanism when the specialized modules fail, not as the core mechanism that hand...

Thanks, I was waiting for at least one somewhat critical reply :)

Specifically, I think you fail to address the evidence for evolved modularity:

- The brain uses spatially specialized regions for different cognitive tasks.

- This specialization pattern is mostly consistent across different humans and even across different species.

The ferret rewiring experiments, the tongue based vision stuff, the visual regions learning to perform echolocation computations in the blind, this evidence together is decisive against the evolved modularity hypothesis as I've defined that hypothesis, at least for the cortex. The EMH posits that the specific cortical regions rely on complex innate circuitry specialized for specific tasks. The evidence disproves that hypothesis.

Damage to or malformation of some brain regions can cause specific forms of disability (e.g. face blindness). Sometimes the disability can be overcome but often not completely.

Sure. Once you have software loaded/learned into hardware, damage to the hardware is damage to the software. This doesn't differentiate the two hypotheses.

...In various mammals, infants are capable of complex behavior straight out of the womb. Human in

The ferret rewiring experiments, the tongue based vision stuff, the visual regions learning to perform echolocation computations in the blind, this evidence together is decisive against the evolved modularity hypothesis as I've defined that hypothesis, at least for the cortex.

But none of these works as well as using the original task-specific regions, and anyway in all these experiments the original task-specific regions are still present and functional, therefore maybe the brain can partially use these regions by learning how to route the signals to them.

Sure. Once you have software loaded/learned into hardware, damage to the hardware is damage to the software. This doesn't differentiate the two hypotheses.

But then why doesn't universal learning just co-opt some other brain region to perform the task of the damaged one? In the cases where there is a congenital malformation, that makes the usual task-specific region missing or dysfunctional, why isn't the task allocated to some other region?

And anyway why is the specialization pattern consistent across individuals and even species? If you train an artificial neural network multiple times on the same dataset from different r...

in all these experiments the original task-specific regions are still present and functional, therefore maybe the brain can partially use these regions by learning how to route the signals to them.

No - these studies involve direct measurements (electrodes for the ferret rewiring, MRI for echolocation). They know the rewired auditory cortex is doing vision, etc.

But then why doesn't universal learning just co-opt some other brain region to perform the task of the damaged one?

It can, and this does happen all the time. Humans can recover from serious brain damage (stroke, injury, etc). It takes time to retrain and reroute circuitry - similar to relearning everything that was lost all over again.

And anyway why is the specialization pattern consistent across individuals and even species? If you train an artificial neural network multiple times on the same dataset

Current ANNs assume a fixed module layout, so they aren't really comparable in module-task assignment.

Much of the specialization pattern could just be geography - V1 becomes visual because it is closest to the visual input. A1 becomes auditory because it is closest to the auditory input. etc.

This should be the de...

Thank you. This was an excellent article, which helped me clarify my own thinking on the topic.

I'd love to see you write more on this.

A few brief supplements to your introduction:

The source of the generated image is no longer mysterious: Inceptionism: Going Deeper into Neural Networks

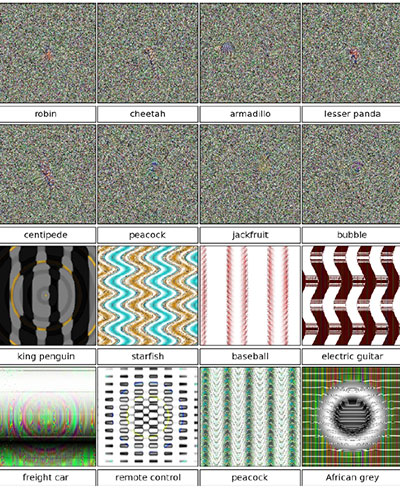

But though the above is quite fascinating and impressive, we should also keep in mind the bizarre false positives that a person can generate: Images that fool computer vision raise security concerns

The trippy shoggoth title image was mysterious when it was originally posted; basically someone leaked an image a little before the inceptionism blog post.

A CNN is a reasonable model for fast feedforward vision. We can isolate this pathway for biological vision by using rapid serial presentation - basically flashing an image for 100ms or so.

So imagine if you just saw a flash of one of these images, for a brief moment, and then you had to quickly press a button for the image category - no time to think about it - it's Jeopardy-style instant response.

There is no button for "noisy image", there is no button for "wavy line image", etc.

Now the fooling images are generated by an adversarial process. It's like we have a copy of a particular mind in a VR sim, we flash it an image, see what button it presses. Based on the response, we then generate a new image and unwind time and repeat. We keep doing this until we get some weird classification errors. It allows us to explore the decision space of the agent.

It is basically reverse engineering. It requires a copy of the agent's code or at least access to a copy with the ability to do tons of queries, and it also probably depends on the agent being completely deterministic. I think that biological minds avoid this issue indirectly because they use stochastic sampling based on secure hardware/analog noise generators.

Stochastic models/ANNs could probably avoid this issue.
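To make the query-and-rewind loop described above concrete, here is a minimal sketch under loud assumptions: the "agent" is a hypothetical toy linear classifier (not a CNN), the attacker can read its per-class scores (i.e. it has a copy of the agent's code), and the search is plain random hill climbing rather than whatever method the fooling-images work actually used. All function and variable names are made up for illustration.

```python
import random

def scores(image, weights):
    """Per-class scores of a toy deterministic linear 'agent' (hypothetical)."""
    return [sum(w * x for w, x in zip(row, image)) for row in weights]

def classify(image, weights):
    """The 'button' the agent presses: index of the highest-scoring class."""
    s = scores(image, weights)
    return max(range(len(s)), key=s.__getitem__)

def margin(image, weights, target):
    """How far the target class is ahead of its best rival (needs score access)."""
    s = scores(image, weights)
    return s[target] - max(v for i, v in enumerate(s) if i != target)

def find_fooling_image(weights, target, n_pixels, steps=2000, seed=0):
    """Random hill climbing: perturb one pixel, query the copy, keep improvements."""
    rng = random.Random(seed)
    image = [rng.random() for _ in range(n_pixels)]   # start from pure noise
    best = margin(image, weights, target)
    for _ in range(steps):
        candidate = list(image)
        i = rng.randrange(n_pixels)
        candidate[i] = min(1.0, max(0.0, candidate[i] + rng.uniform(-0.2, 0.2)))
        m = margin(candidate, weights, target)        # flash the image, read the response
        if m > best:                                  # keep it; otherwise "unwind time"
            image, best = candidate, m
    return image, best

# Toy agent: class k responds only to pixels whose index i satisfies i % 3 == k.
N_PIXELS, N_CLASSES, TARGET = 12, 3, 2
weights = [[1.0 if i % N_CLASSES == k else 0.0 for i in range(N_PIXELS)]
           for k in range(N_CLASSES)]
fooling, final_margin = find_fooling_image(weights, TARGET, N_PIXELS)
print(classify(fooling, weights), round(final_margin, 3))
```

The loop only works because every query to the copy returns the same answer for the same image; if the agent's responses were sampled stochastically, a single query would no longer tell the attacker whether a candidate was really an improvement.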

Great post! Thanks for writing it. Seems like a good fit for Main.

So just to clarify my understanding: If the ULH is true it becomes more plausible that, say, playing video games and hating books because authority figures force you to read them in school have long-term broad impacts on your personality. And if the EMH is true, it becomes more plausible that important characteristics like the Big Five personality traits and intelligence are genetically coded and you become the person your genes describe. Correct?

Yudkowsky's AI box experiments and that entire notion of open boxing is a strawman - a distraction.

We humans have contemplated whether we are in a simulation even though no one "outside the Matrix" told us we might be. Is it possible that an AI-in-training might contemplate the same thing?

In general the evidence from the last four years or so supports Hanson's viewpoint from the Foom debate.

Really? My impression was that Hanson had more of an EMH view.

So just to clarify my understanding: If the ULH is true it becomes more plausible that, say, playing video games and hating books because authority figures force you to read them in school have long-term broad impacts on your personality.

I agree with this largely but I would replace 'personality' with 'mental software', or just 'mind'. Personality to me connotes a subset of mental aspects that are more associated with innate variables.

I suspect that enjoying/valuing learning is extremely important for later development. It seems probable that some people are born with a stronger innate drive for learning, but that drive by itself can also probably be adjusted through learning. But I'm not aware of any hard evidence on this matter.

In my case I was somewhat obsessed with video games as a young child and my father actually did force me to read books and even the encyclopedia. I found that I hated the books he made me read (I only liked sci-fi) but I loved the encyclopedia. I ended up learning how to quickly skim books and fake it enough to pass the resulting QA test.

...And if the EMH is true, it becomes more plausible that important characteristics like the Big Five personality

There is a more sinister interpretation of the idea of the mind as a universal learning machine: that it is a pure blank neural net of some relatively simple architecture, which maps inputs to outputs. Recently there were attempts to create self-driving car AIs using such an approach: a blank neural net was shown hundreds of thousands of hours of driving and trained to predict the correct driver behaviour in any incoming situation. Such car-driving nets perform well (though still worse than advanced systems with lidar and hand-coded rules on top), so they are used by hobbyists.

It has the following implications:

1) The secret of being human is in the dataset, not in the human brain, and a seriously damaged or altered brain could still learn how to be human (some autistic people have atypical wiring at the neuron level, but after extensive training they can become functional). Even an animal could partly do it (Koko).

2) Humans don't have "free will", "values" or even "think" - they repeat replies that are encoded in their dataset. To "program" a human, you need to write a very long book (like the Bible), but one's m...

One of the best posts I've read here on LW, congratulations. I think that the most important algorithms that the brain implements will probably be less complex than anticipated. Epigenesis and early ontogenetic adaptation are heavily dependent on feedback from the environment and probably very general, even if the 'evolution of learning' and genetic complexity provide some of the domain specifications ab initio. Results considering bounded computation (computational resources and limited information) will probably show that the ULM viewpoint cluster is compatible with the existence of cognitive biases and heuristics in our cognition http://www.pnas.org/content/103/9/3198

This is the best case for near AI I've read so far, and I also love your proposals for FAI.

I want to read more of your writings. Link?

The ULH suggests that most everything that defines the human mind is cognitive software rather than hardware: the adult mind (in terms of algorithmic information) is 99.999% a cultural/memetic construct.

I think a distinction worth tracing here is the difference between "learning" in the neural-net sense and "learning" in the human pedagogical/psychological sense.

The "learning" done by a piece of cortex becoming a visual cortex after receiving neural impulses from the eye isn't something you can override by teaching a person...

The ULH suggests that most everything that defines the human mind is cognitive software rather than hardware: the adult mind (in terms of algorithmic information) is 99.999% a cultural/memetic construct.

Do you mean if I prove that less than 99.999% is cultural/memetic you think the ULH is proven wrong?

Very thought provoking. Thank you.

In the extreme case imagine that the brain is a pure ULM, such that the genetic prior information is close to zero or is simply unimportant. In this case it is vastly more likely that successful AGI will be built around designs very similar to the brain, as the ULM architecture in general is the natural ideal, vs the alternative of having to hand engineer all of the AI's various cognitive mechanisms.

Not necessarily. There are very different structures that are conceptually equivalent to a UTM (cellular automata, lambd...

The key defining characteristic of a ULM is that it uses its universal learning algorithm for continuous recursive self-improvement with regards to the utility function (reward system). We can view this as second (and higher) order optimization: the ULM optimizes the external world (first order), and also optimizes its own internal optimization process (second order), and so on. Without loss of generality, any system capable of computing a large number of decision variables can also compute internal self-modification decisions.

While I do believe that ...

Thank you for this overview. A couple of thoughts:

There is a recent and interesting result by Miller et al. (2015, MIT) supporting the hypothesis that the cortex doesn't process tasks in highly specialized modules, which is perhaps some evidence for a ULM in the human brain.

The importance of redundancy in biological systems might be another piece of evidence for ULMs.

You write that "Infant emotions appear to simplify down to a single axis of happy/sad", which I think is not true. Surprise, fear and embarrassment are for example very early emot

- There is a recent and interesting result by Miller et al. (2015, MIT) supporting the hypothesis that the cortex doesn't process tasks in highly specialized modules

The actual paper is "Cortical information flow during flexible sensorimotor decisions"; it can be found here. I don't believe the reporter's summary is very accurate. They traced the flow of information in a moving dot task in a couple dozen cortical regions. It's interesting, but I don't think it especially differentiates the ULH.

3. Good point. I'll need to correct that. I'm skeptical of embarrassment, but surprise and fear certainly.

4. Yes that's correct. It perhaps would be more accurate to say more useful or more valuable. I meant more powerful in a general political/economic utility sense.

5. I agree that the human brain, in particular the reward system, has dependencies on the body that are probably complex. However, reverse engineering empathy probably does not require exactly copying biological mechanisms.

6. I should probably just cut that sentence, because it is a distraction itself.

But for context on the older boxing discussions, see this post in particular. Here Yudkowsky pr...

The problem with this is that the "engineering diagram" of the brain is really only a hardware wiring diagram, and the status of speculations about how the hardware modules (really just areas) relate to functional modules is ... well, just that, speculation.

There are good reasons to suspect that the functional diagram would look completely different (reasons based in psychological data) and the current state of the art there is poor.

Except perhaps in certain quarters.

If an AGI is based on a neural network, how can you tell from the logs whether or not the AI knows it's in a simulation?

Current ANN engines can already train and run models with around 10 million neurons and 10 billion (compressed/shared) synapses on a single GPU, which suggests that the goal could soon be within the reach of a large organization.

This suggests 15000 GPUs is equivalent in computing power to a human brain, since we have about 150 trillion synapses? Why did you suggest 1000 earlier? How much of a multiplier on top of that do you think we need for trial-and-error research and training, before we get the first AGI? 10x? 100x? (If it isn't clear, I mean that i...

To create a superhuman AI driver, you 'just' need to create a realistic VR driving sim and then train a ULM in that world (better training and the simple power of selective copying leads to superhuman driving capability).

So to create benevolent AGI, we should think about how to create virtual worlds with the right structure, how to educate minds in those worlds, and how to safely evaluate the results.

There is some interesting overlap between these ideas and Eric Drexler's recent proposal. (Previously discussed on LessWrong here)

New AI designs (world design + architectural priors + training/education system) should be tested first in the safest virtual worlds: which in simplification are simply low tech worlds without computer technology. Design combinations that work well in safe low-tech sandboxes are promoted to less safe high-tech VR worlds, and then finally the real world.

A key principle of a secure code sandbox is that the code you are testing should not be aware that it is in a sandbox.

So you're saying that I'm secretly an AI being trained to be friendly for a more advanced world? ;)

Why isn't this in Main??? I mean I can understand that it wasn't posted there but the upvotes say 'Main' in a quite clear language. There isn't even much that could be improved in the post so why not move it?

"In the modern era some deaf humans have apparently acquired the ability to perform echolocation (sonar), similar to cetaceans." Did you mean blind?

Excellent article, thanks!

But this also raises a question about animal intelligence. Judging by its behaviour, my cat (unfortunately) is more like a set of programs, yet its brain diagram is the same as in any vertebrate. So does this support the modularity hypothesis?

That's a lot to absorb, so I've skimmed it, so please forgive if responses to the following are already implicit in what you've said.

I thought the point of the modularity hypothesis is that the brain only approximates a universal learning machine and has to be gerrymandered and trained to do so?

If the brain were naturally a universal learner, then surely we wouldn't have to learn universal learning (e.g. we wouldn't have to learn to overcome cognitive biases, Bayesian reasoning wouldn't be a recent discovery, etc.)? The system seems too gappy and glitchy, too full of quick judgement and prejudice, to have been designed as a universal learner from the ground up.

If the brain were naturally a universal learner, then surely we wouldn't have to learn universal learning (e.g. we wouldn't have to learn to overcome cognitive biases, Bayesian reasoning wouldn't be a recent discovery, etc.)? The system seems too gappy and glitchy, too full of quick judgement and prejudice, to have been designed as a universal learner from the ground up.

You are conflating the ideas of universal learning and rational thinking. They are not the same thing.

I'm a strong believer in the idea that human intelligence emerges from a strong general purpose reinforcement learning algorithm. If that's true, then it's very consistent with our problems of cognitive bias.

If the RL idea is correct, then thinking is best understood as a learned behavior, just as the words we speak with our lips are a learned behavior, and just as how we move our arms and legs is a learned behavior. Under the principle that we are an RL learning machine, what we learn is ANY behavior which helps us to maximize our reward signal.

We don't learn rational behavior, we learn whatever behavior the learning system rationally has computed is what is needed to produce the most rewards. And ...

We don't learn rational behavior, we learn whatever behavior the learning system rationally has computed is what is needed to produce the most rewards.

Yes, this. But it is so easy to make mistakes when interpreting this statement that I feel it requires a dozen warnings to prevent readers from oversimplifying it.

For example, the behavior we learn is the behavior that produced most rewards in the past, when we were trained. If the environment changes, what we do may no longer give rewards in the new environment. Until we learn what produces rewards in the new environment.

Unless we already had an experience with changing environment, in which case we might adapt much more quickly, because we already have meta-behavior for "changing the behavior to adapt to new environment".

Unless we already had an experience when the environment changed, we adapted our behavior, then the environment suddenly changed back, and we were horribly punished for the adapted behavior, in which case the learned meta-behavior would be "do not change your behavior to adapt to the new environment (because it will change back and you will be rewarded for persistence)".

It is these learned met...

Other evidence I would add to the theory of the brain being a ULM is the existence of the g-factor, and the fact that the general factor is the one that explains the most variation in these cognitive tests. In addition - if you model human cognitive abilities as universal and specific, evolutionarily speaking it would make sense for the universal aspect to be under stronger selection than the specific domain. One exception to this could be language learning, which is important just for the sake of being able to communicate.

Interesting article. Minor note on clarity: You might want to clarify the acronym "EMH" where it appears, since it so often here stands for "efficient market hypothesis".

I find images such as the one above extremely disconcerting for some reason. They cause me about a 7/10 level of discomfort, verging on moderate pain. It also sticks in my head for a dozen or so minutes after viewing. I'd strongly prefer to never see one ever again, please.

I don't know if this is something unique to my brain, or if this is a step towards a real life BLIT, but wow. Awful to experience, I have extra empathy for epileptic people now.

Hi jacob_cannell, this article looks really interesting but it is a LOT to consume at once. Could you please put a summary at the top with the main points so that it makes the post easier to navigate?

Typo just above "Basal Ganglia" section.

For example infants are born with a simple versions of a fear response, with is later refined through reinforcement learning.

"with is later" should be "which is later"

This was a great post, thanks!

One thing I'm curious about is how the ULH explains the fact that human thought seems to be divided into System 1/System 2 - is this solely a matter of education history?

At first the ULH seemed to predict too much plasticity relative to observation, but on reflection I think it might predict the right amount. To square ULH with human universals, we have to hypothesize that the general structure and the conditions of human life robustly result in convergence to certain attractors. But the big advantage of this hypothesis is that it neatly explains why certain mental complexes like farmer morality sometimes seem to have innate support while also being sometimes unlearnable and possibly not existing before agriculture.

This article presents an emerging architectural hypothesis of the brain as a biological implementation of a Universal Learning Machine. I present a rough but complete architectural view of how the brain works under the universal learning hypothesis. I also contrast this new viewpoint - which comes from computational neuroscience and machine learning - with the older evolved modularity hypothesis popular in evolutionary psychology and the heuristics and biases literature. These two conceptions of the brain lead to very different predictions for the likely route to AGI, the value of neuroscience, the expected differences between AGI and humans, and thus any consequent safety issues and dependent strategies.

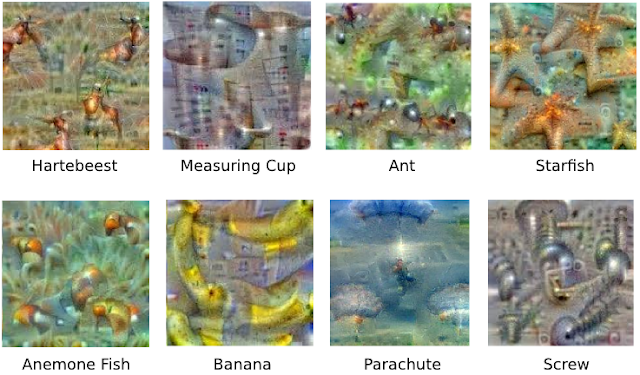

(The image above is from a recent mysterious post to r/machinelearning, probably from a Google project that generates art based on a visualization tool used to inspect the patterns learned by convolutional neural networks. I am especially fond of the weird figures riding the cart in the lower left.)

Intro: Two Viewpoints on the Mind

-- Lord Acton (probably)

Less Wrong is a site devoted to refining the art of human rationality, where rationality is based on an idealized conceptualization of how minds should or could work. Less Wrong and its founding sequences draw heavily on the heuristics and biases literature in cognitive psychology and related work in evolutionary psychology. More specifically the sequences build upon a specific cluster in the space of cognitive theories, which can be identified in particular with the highly influential "evolved modularity" perspective of Cosmides and Tooby.

From Wikipedia:

From "Evolutionary Psychology and the Emotions":[5]

If you imagine these general theories or perspectives on the brain/mind as points in theory space, the evolved modularity cluster posits that much of the machinery of human mental algorithms is largely innate. General learning - if it exists at all - exists only in specific modules; in most modules learning is relegated to the role of adapting existing algorithms and acquiring data; the impact of the information environment is de-emphasized. In this view the brain is a complex messy kludge of evolved mechanisms.

The universal learning hypothesis proposes that all significant mental algorithms are learned; nothing is innate except for the learning and reward machinery itself (which is somewhat complicated, involving a number of systems and mechanisms), the initial rough architecture (equivalent to a prior over mindspace), and a small library of simple innate circuits (analogous to the operating system layer in a computer). In this view the mind (software) is distinct from the brain (hardware). The mind is a complex software system built out of a general learning mechanism.

Additional indirect support comes from the rapid unexpected success of Deep Learning[7], which is entirely based on building AI systems using simple universal learning algorithms (such as Stochastic Gradient Descent or other various approximate Bayesian methods[8][9][10][11]) scaled up on fast parallel hardware (GPUs). Deep Learning techniques have quickly come to dominate most of the key AI benchmarks including vision[12], speech recognition[13][14], various natural language tasks, and now even Atari[15] - proving that simple architectures (priors) combined with universal learning are a path (and perhaps the only viable path) to AGI. Moreover, the internal representations that develop in some deep learning systems are structurally and functionally similar to representations in analogous regions of biological cortex[16].

To paraphrase Feynman: to truly understand something you must build it.

In this article I am going to quickly introduce the abstract concept of a universal learning machine, present an overview of the brain's architecture as a specific type of universal learning machine, and finally I will conclude with some speculations on the implications for the race to AGI and AI safety issues in particular.

Universal Learning Machines

A universal learning machine is a simple and yet very powerful and general model for intelligent agents. It is an extension of a general computer - such as a Turing Machine - amplified with a universal learning algorithm. Do not view this as my 'big new theory' - it is simply an amalgamation of a set of related proposals by various researchers.

An initial untrained seed ULM can be defined by 1.) a prior over the space of models (or equivalently, programs), 2.) an initial utility function, and 3.) the universal learning machinery/algorithm. The machine is a real-time system that processes an input sensory/observation stream and produces an output motor/action stream to control the external world using a learned internal program that is the result of continuous self-optimization.

There is of course always room to smuggle in arbitrary innate functionality via the prior, but in general the prior is expected to be extremely small in bits in comparison to the learned model.

The key defining characteristic of a ULM is that it uses its universal learning algorithm for continuous recursive self-improvement with regards to the utility function (reward system). We can view this as second (and higher) order optimization: the ULM optimizes the external world (first order), and also optimizes its own internal optimization process (second order), and so on. Without loss of generality, any system capable of computing a large number of decision variables can also compute internal self-modification decisions.

Conceptually the learning machinery computes a probability distribution over program-space that is proportional to the expected utility distribution. At each timestep it receives a new sensory observation and expends some amount of computational energy to infer an updated (approximate) posterior distribution over its internal program-space: an approximate 'Bayesian' self-improvement.
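As a toy illustration of the three seed components and the per-timestep posterior update, here is a minimal sketch. Everything in it is an assumption made for the example: the program space is a hand-picked finite set of three one-line functions, the posterior weight of a program is taken to be its prior times the exponential of its accumulated utility (one simple way to read "proportional to expected utility"), and names such as `ToyULM` are hypothetical rather than taken from any of the cited proposals.

```python
import math
from typing import Callable, Dict

Program = Callable[[float], float]          # maps an observation to an action

class ToyULM:
    """Seed = (1) prior over programs, (2) utility function, (3) update rule."""

    def __init__(self, programs: Dict[str, Program], prior: Dict[str, float],
                 utility: Callable[[float, float], float]):
        self.programs = programs
        self.utility = utility
        # Work in log space so tiny posterior weights do not underflow.
        self.log_post = {name: math.log(p) for name, p in prior.items()}

    def observe(self, obs: float) -> None:
        """One timestep: score what each program would have done, then renormalize."""
        for name, prog in self.programs.items():
            self.log_post[name] += self.utility(obs, prog(obs))
        top = max(self.log_post.values())
        log_z = top + math.log(sum(math.exp(v - top) for v in self.log_post.values()))
        for name in self.log_post:
            self.log_post[name] -= log_z

    def posterior(self) -> Dict[str, float]:
        return {name: math.exp(v) for name, v in self.log_post.items()}

    def act(self, obs: float) -> float:
        """Run the currently most probable program."""
        best = max(self.log_post, key=self.log_post.get)
        return self.programs[best](obs)

# Hypothetical world that rewards doubling the observation.
programs = {"identity": lambda o: o, "negate": lambda o: -o, "double": lambda o: 2 * o}
prior = {name: 1.0 / len(programs) for name in programs}
utility = lambda obs, action: -(action - 2 * obs) ** 2

ulm = ToyULM(programs, prior, utility)
for obs in [1.0, 2.0, 3.0]:
    ulm.observe(obs)
print(ulm.posterior(), ulm.act(5.0))
```

A real ULM would of course search an open-ended program space approximately rather than enumerate three candidates; the sketch only shows the shape of the update, in which the prior is a few numbers while the behavior that ends up selected is determined by experience.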

A ULM inherits the general property of a Turing Machine that it can compute anything that is computable, given appropriate resources. However a ULM is also more powerful than a TM. A Turing Machine can only do what it is programmed to do. A ULM automatically programs itself.

If you were to open up an infant ULM - a machine with zero experience - you would mainly just see the small initial code for the learning machinery. The vast majority of the codestore starts out empty - initialized to noise. (In the brain the learning machinery is built in at the hardware level for maximal efficiency).

Theoretical Turing machines are all qualitatively alike, and are all qualitatively distinct from any non-universal machine. Likewise for ULMs. Theoretically a small ULM is just as general/expressive as a planet-sized ULM. In practice quantitative distinctions do matter, and can become effectively qualitative.

Just as the simplest possible Turing Machine is in fact quite simple, the simplest possible Universal Learning Machine is also probably quite simple. A couple of recent proposals for simple universal learning machines include the Neural Turing Machine[16] (from Google DeepMind), and Memory Networks[17]. The core of both approaches involves training an RNN to learn how to control a memory store through gating operations.

Historical Interlude

At this point you may be skeptical: how could the brain be anything like a universal learner? What about all of the known innate biases/errors in human cognition? I'll get to that soon, but let's start by thinking of a couple of general experiments to test the universal learning hypothesis vs the evolved modularity hypothesis.

In a world where the ULH is mostly correct, what do we expect to be different than in worlds where the EMH is mostly correct?

One type of evidence that would support the ULH is the demonstration of key structures in the brain along with associated wiring such that the brain can be shown to directly implement some version of a ULM architecture.

From the perspective of the EMH, it is not sufficient to demonstrate that there are things that brains cannot learn in practice - because those could simply be quantitative limitations. Demonstrating that an Intel 486 can't compute some known computable function in our lifetimes is not proof that the 486 is not a Turing Machine.

Nor is it sufficient to demonstrate that biases exist: a ULM is only 'rational' to the extent that its observational experience and learning machinery allow (and to the extent one has the correct theory of rationality). In fact, the existence of many (most?) biases intrinsically depends on the EMH - based on the implicit assumption that some cognitive algorithms are innate. If brains are mostly ULMs then most cognitive biases dissolve, or become learning biases - for if all cognitive algorithms are learned, then evidence for biases is evidence for cognitive algorithms that people haven't had sufficient time/energy/motivation to learn. (This does not imply that intrinsic limitations/biases do not exist or that the study of cognitive biases is a waste of time; rather the ULH implies that educational history is what matters most.)

The genome can only specify a limited amount of information. The question is then how much of our advanced cognitive machinery for things like facial recognition, motor planning, language, logic, planning, etc. is innate vs learned. From evolution's perspective there is a huge advantage to preloading the brain with innate algorithms so long as said algorithms have high expected utility across the expected domain landscape.

On the other hand, evolution is also highly constrained in a bit coding sense: every extra bit of code costs additional energy for the vast number of cellular replication events across the lifetime of the organism. Low code complexity solutions also happen to be exponentially easier to find. These considerations seem to strongly favor the ULH but they are difficult to quantify.

Neuroscientists have long known that the brain is divided into physical and functional modules. These modular subdivisions were discovered a century ago by Brodmann. Every time neuroscientists opened up a new brain, they saw the same old cortical modules in the same old places doing the same old things. The specific layout of course varied from species to species, but the variations between individuals are minuscule. This evidence seems to strongly favor the EMH.

Throughout most of the 90's and into the 2000's, computational neuroscience models and AI were heavily influenced by the EMH and, unsurprisingly, largely supported it. Neural nets and backprop had of course been known since the 1980's and worked on small problems[18], but at the time they didn't scale well - and there was no theory to suggest they ever would.

Theory of the time also suggested local minima would always be a problem (now we understand that local minima are not really the main problem[19], and modern stochastic gradient descent methods combined with highly overcomplete models and stochastic regularization[20] are effectively global optimizers that can often handle obstacles such as local minima and saddle points[21]).

The other related historical criticism rests on the lack of biological plausibility for backprop style gradient descent. (There is as of yet little consensus on how the brain implements the equivalent machinery, but target propagation is one of the more promising recent proposals[22][23].)

Many AI researchers are naturally interested in the brain, and we can see the influence of the EMH in much of the work before the deep learning era. HMAX is a hierarchical vision system developed in the late 90's by Poggio et al as a working model of biological vision[24]. It is based on a preconfigured hierarchy of modules, each of which has its own mix of innate features such as gabor edge detectors along with a little bit of local learning. It implements the general idea that complex algorithms/features are innate - the result of evolutionary global optimization - while neural networks (incapable of global optimization) use hebbian local learning to fill in details of the design.

Dynamic Rewiring

In a groundbreaking study from 2000 published in Nature, Sharma et al successfully rewired ferret retinal pathways to project into the auditory cortex instead of the visual cortex.[25] The result: auditory cortex can become visual cortex, just by receiving visual data! Not only does the rewired auditory cortex develop the specific gabor features characteristic of visual cortex; the rewired cortex also becomes functionally visual. [26] True, it isn't quite as effective as normal visual cortex, but that could also possibly be an artifact of crude and invasive brain rewiring surgery.

The ferret study was popularized by the book On Intelligence by Hawkins in 2004 as evidence for a single cortical learning algorithm. This helped percolate the evidence into the wider AI community, and thus probably helped set the stage for the deep learning movement of today. The modern view of the cortex is that of a mostly uniform set of general purpose modules which slowly become recruited for specific tasks and filled with domain specific 'code' as a result of the learning (self optimization) process.

The next key set of evidence comes from studies of atypical human brains with novel extrasensory powers. In 2009 Vuillerme et al showed that the brain could automatically learn to process sensory feedback rendered onto the tongue[27]. This research was developed into a complete device that allows blind people to develop primitive tongue based vision.

In the modern era some blind humans have apparently acquired the ability to perform echolocation (sonar), similar to cetaceans. In 2011 Thaler et al used MRI and PET scans to show that human echolocators use diverse non-auditory brain regions to process echo clicks, predominantly relying on re-purposed 'visual' cortex.[27]

The echolocation study in particular helps establish the case that the brain is actually doing global, highly nonlocal optimization - far beyond simple hebbian dynamics. Echolocation is an active sensing strategy that requires very low latency processing, involving complex timed coordination between a number of motor and sensory circuits - all of which must be learned.

Somehow the brain is dynamically learning how to use and assemble cortical modules to implement mental algorithms: everyday tasks such as visual counting, comparisons of images or sounds, reading, etc - all are tasks that require simple mental programs that can shuffle processed data between modules (some or any of which can also function as short term memory buffers).

To explain this data, we should be on the lookout for a system in the brain that can learn to control the cortex - a general system that dynamically routes data between different brain modules to solve domain specific tasks.

But first let's take a step back and start with a high level architectural view of the entire brain to put everything in perspective.

Brain Architecture

Below is a circuit diagram for the whole brain. The main subsystems work together and are best understood together. You can probably get a good, high level, extremely coarse understanding of the entire brain in less than one hour.

(there are a couple of circuit diagrams of the whole brain on the web, but this is the best. From this site.)

The human brain has ~100 billion neurons and ~100 trillion synapses, but ultimately it evolved from the bottom up - from organisms with just hundreds of neurons, like the tiny brain of C. elegans.

We know that evolution is code complexity constrained: much of the genome codes for cellular metabolism, all the other organs, and so on. For the brain, most of its bit budget needs to be spent on all the complex neuron, synapse, and even neurotransmitter level machinery - the low level hardware foundation.

For a tiny brain with 1000 neurons or less, the genome can directly specify each connection. As you scale up to larger brains, evolution needs to create vastly more circuitry while still using only about the same amount of code/bits. So instead of specifying connectivity at the neuron layer, the genome codes connectivity at the module layer. Each module can be built from simple procedural/fractal expansion of progenitor cells.

So the size of a module has little to nothing to do with its innate complexity. The cortical modules are huge - V1 alone contains 200 million neurons in a human - but there is no reason to suspect that V1 has greater initial code complexity than any other brain module. Big modules are built out of simple procedural tiling patterns.

Very roughly the brain's main modules can be divided into six subsystems (there are numerous smaller subsystems):

In the interest of space/time I will focus primarily on the Basal Ganglia and will just touch on the other subsystems very briefly and provide some links to further reading.

The neocortex has been studied extensively and is the main focus of several popular books on the brain. Each neocortical module is a 2D array of neurons (technically 2.5D with a depth of about a few dozen neurons arranged in about 5 to 6 layers).

Each cortical module is something like a general purpose RNN (recurrent neural network) with 2D local connectivity. Each neuron connects to its neighbors in the 2D array. Each module also has nonlocal connections to other brain subsystems and these connections follow the same local 2D connectivity pattern, in some cases with some simple affine transformations. Convolutional neural networks use the same general architecture (but they are typically not recurrent.)
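As a rough illustration of how a very short rule can generate the wiring of an arbitrarily large 2D sheet, here is a minimal Python sketch. The function name `local_2d_connectivity`, the `radius` parameter, and the square-neighborhood rule are assumptions made for illustration; this is not a model of real cortical wiring.

```python
def local_2d_connectivity(width, height, radius=1):
    """Return {neuron_index: [neighbor indices]} for a width x height sheet.

    Every neuron follows the same tiling rule: connect to all neurons
    within `radius` grid steps in x and y, excluding itself.
    (Hypothetical rule, chosen only to illustrate procedural wiring.)
    """
    connections = {}
    for y in range(height):
        for x in range(width):
            neighbors = []
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    if dx == 0 and dy == 0:
                        continue  # no self-connection
                    nx, ny = x + dx, y + dy
                    if 0 <= nx < width and 0 <= ny < height:
                        neighbors.append(ny * width + nx)
            connections[y * width + x] = neighbors
    return connections


# Three numbers (width, height, radius) describe the whole wiring diagram,
# no matter how many neurons the sheet contains.
sheet = local_2d_connectivity(width=4, height=3, radius=1)
total_synapses = sum(len(targets) for targets in sheet.values())
```

The description length of the rule stays constant while the number of generated connections grows with the sheet, which is the sense in which a large module need not be a complex one.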

Cortical modules - like artificial RNNs - are general purpose and can be trained to perform various tasks. There are a huge number of models of the cortex, varying across the tradeoff between biological realism and practical functionality.

Perhaps surprisingly, any of a wide variety of learning algorithms can reproduce cortical connectivity and features when trained on appropriate sensory data[27]. This is a computational proof of the one-learning-algorithm hypothesis; furthermore it illustrates the general idea that data determines functional structure in any general learning system.

There is evidence that cortical modules learn automatically (unsupervised) to some degree, and there is also some evidence that cortical modules can be trained to relearn data from other brain subsystems - namely the hippocampal complex. The dark knowledge distillation technique in ANNs[28][29] is a potential natural analog/model of hippocampus -> cortex knowledge transfer.
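For readers unfamiliar with distillation, here is a minimal Python sketch of its central ingredient: a 'student' is trained to match the temperature-softened output distribution of a 'teacher'. The function names and the example logits are hypothetical, and mapping teacher/student onto hippocampus/cortex is only the analogy suggested above, not an established mechanism.

```python
import math


def softmax(logits, temperature=1.0):
    """Softmax with a temperature; higher temperature gives softer outputs."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """Cross-entropy between softened teacher and student distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return -sum(pi * math.log(qi) for pi, qi in zip(p, q))


teacher = [4.0, 1.0, 0.5]        # hypothetical teacher outputs
good_student = [4.0, 1.0, 0.5]   # matches the teacher
poor_student = [0.5, 1.0, 4.0]   # disagrees with the teacher

loss_good = distillation_loss(teacher, good_student)
loss_poor = distillation_loss(teacher, poor_student)
```

The loss is smallest when the student reproduces the teacher's full distribution, including the relative probabilities of the 'wrong' answers, which is where the extra ('dark') knowledge lives.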

Module connections are bidirectional, and feedback connections (from high level modules to low level) outnumber forward connections. We can speculate that something like target propagation can also be used to guide or constrain the development of cortical maps.

The hippocampal complex is the root or top level of the sensory/motor hierarchy. This short youtube video gives a good seven minute overview of the HC. It is like a spatiotemporal database. It receives compressed scene descriptor streams from the sensory cortices, it stores this information in medium-term memory, and it supports later auto-associative recall of these memories. Imagination and memory recall seem to be basically the same.

The 'scene descriptors' take the sensible form of things like 3D position and camera orientation, as encoded in place, grid, and head direction cells. This is basically the logical result of compressing the sensory stream, comparable to the networking data stream in a multiplayer video game.

Imagination/recall is basically just the reverse of the forward sensory coding path - in reverse mode a compact scene descriptor is expanded into a full imagined scene. Imagined/remembered scenes activate the same cortical subnetworks that originally formed the memory (or would have if the memory was real, in the case of imagined recall).

The amygdala and associated limbic reward modules are rather complex, but look something like the brain's version of the value function for reinforcement learning. These modules are interesting because they clearly rely on learning, but clearly the brain must specify an initial version of the value/utility function that has some minimal complexity.

As an example, consider taste. Infants are born with basic taste detectors and a very simple initial value function for taste. Over time the brain receives feedback from digestion and various estimators of general mood/health, and it uses this to refine the initial taste value function. Eventually the adult sense of taste becomes considerably more complex. Acquired tastes for bitter substances - such as coffee and beer - are good examples.
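The idea of an innate initial value that is later refined by feedback can be sketched with a simple delta-rule update. This is a minimal Python illustration under stated assumptions: the function name, the learning rate, and the numeric values are hypothetical, and the delta rule is a stand-in, not a claim about the brain's actual update rule.

```python
def refine_taste_value(initial_value, feedback_signals, learning_rate=0.2):
    """Nudge a value estimate toward each observed outcome (delta rule).

    initial_value: the innate prior, e.g. negative for a bitter taste.
    feedback_signals: later outcomes, e.g. positive post-ingestion effects.
    Returns the full history of value estimates.
    """
    value = initial_value
    history = [value]
    for outcome in feedback_signals:
        value += learning_rate * (outcome - value)  # move toward the outcome
        history.append(value)
    return history


# Hypothetical numbers: a bitter drink starts out aversive (-1.0) but is
# repeatedly followed by a positive outcome (+1.0).
history = refine_taste_value(initial_value=-1.0, feedback_signals=[1.0] * 10)
```

In this toy run the estimate starts at the innate value and climbs steadily toward the experienced outcome, crossing from negative to positive after a few exposures.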

The amygdala appears to do something similar for emotional learning. For example infants are born with a simple version of a fear response, which is later refined through reinforcement learning. The amygdala sits on the end of the hippocampus, and it is also involved heavily in memory processing.

See also these two videos from Khan Academy: one on the limbic system and amygdala (10 mins), and another on the midbrain reward system (8 mins).

The Basal Ganglia

The Basal Ganglia is a weird looking complex of structures located in the center of the brain. It is a conserved structure found in all vertebrates, which suggests a core functionality. The BG is proximal to and connects heavily with the midbrain reward/limbic systems. It also connects to the brain's various modules in the cortex/hippocampus, thalamus and the cerebellum . . . basically everything.

All of these connections form recurrent loops between associated compartmental modules in each structure: thalamocortical/hippocampal-cerebellar-basal_ganglial loops.

Just as the cortex and hippocampus are subdivided into modules, there are corresponding modular compartments in the thalamus, basal ganglia, and the cerebellum. The set of modules/compartments in each main structure are all highly interconnected with their correspondents across structures, leading to the concept of distributed processing modules.

Each DPM forms a recurrent loop across brain structures (the local networks in the cortex, BG, and thalamus are also locally recurrent, whereas those in the cerebellum are not). These recurrent loops are mostly separate, but each sub-structure also provides different opportunities for inter-loop connections.

The BG appears to be involved in essentially all higher cognitive functions. Its core functionality is action selection via subnetwork switching. In essence action selection is the core problem of intelligence, and it is also general enough to function as the building block of all higher functionality. A system that can select between motor actions can also select between tasks or subgoals. More generally, low level action selection can easily form the basis of a Turing Machine via selective routing: deciding where to route the output of thalamocortical-cerebellar modules (some of which may specialize in short term memory as in the prefrontal cortex, although all cortical modules have some short term memory capability).

There are now a number of computational models for the Basal Ganglia-Cortical system that demonstrate possible biologically plausible implementations of the general theory[28][29]; integration with the hippocampal complex leads to larger-scale systems which aim to model/explain most of higher cognition in terms of sequential mental programs[30] (of course fully testing any such models awaits sufficient computational power to run very large-scale neural nets).

For an extremely oversimplified model of the BG as a dynamic router, consider an array of N distributed modules controlled by the BG system. The BG control network expands these N inputs into an NxN matrix. There are N^2 potential intermodular connections, each of which can be individually controlled. The control layer reads a compressed, downsampled version of the module's hidden units as its main input, and is also recurrent. Each output node in the BG has a multiplicative gating effect which selectively enables/disables an individual intermodular connection. If the control layer is naively fully connected, this would require (N^2)^2 connections, which is only feasible for N ~ 100 modules, but sparse connectivity can substantially reduce those numbers.
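The routing model described above can be sketched in a few lines of Python. This is a toy under loud assumptions: all names (`summarize`, `compute_gates`, `route`) are hypothetical, the 'compression' is just a mean over each module's hidden vector, and the control layer here is a single feedforward map from N summaries to N x N gates, so it omits the recurrence mentioned in the text.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def summarize(states):
    """Compress each module's hidden vector to one number (its mean)."""
    return [sum(s) / len(s) for s in states]


def compute_gates(summary, control_weights, control_bias):
    """Map N summaries to an N x N matrix of gate values in (0, 1).

    control_weights[k] holds the N input weights of gate k = i * N + j,
    which controls the connection from module j into module i.
    """
    n = len(summary)
    gates = []
    for i in range(n):
        row = []
        for j in range(n):
            k = i * n + j
            drive = control_bias[k] + sum(
                w * s for w, s in zip(control_weights[k], summary)
            )
            row.append(sigmoid(drive))
        gates.append(row)
    return gates


def route(states, gates):
    """Each module i receives the gate-weighted sum of all module outputs."""
    n, dim = len(states), len(states[0])
    new_states = []
    for i in range(n):
        mixed = [
            sum(gates[i][j] * states[j][d] for j in range(n))
            for d in range(dim)
        ]
        new_states.append([math.tanh(v) for v in mixed])
    return new_states


# Two modules with 2-dimensional hidden states (hypothetical values).
states = [[0.5, -0.5], [1.0, 2.0]]

# A hand-set gate matrix that opens only the path "module 1 -> module 0".
hard_gates = [[0.0, 1.0], [0.0, 0.0]]
routed = route(states, hard_gates)

# Gates produced by an untrained controller (all weights zero): every gate
# sits at 0.5, i.e. no routing decision has been learned yet.
n = len(states)
zero_weights = [[0.0] * n for _ in range(n * n)]
zero_bias = [0.0] * (n * n)
soft_gates = compute_gates(summarize(states), zero_weights, zero_bias)
```

With the hand-set gates, module 0 ends up holding a squashed copy of module 1's contents while module 1 is cleared, which is the 'move data between buffers' primitive that selective routing is meant to provide. Learning the control weights (for example by reinforcement learning) is outside the scope of this sketch.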

It is unclear (to me), whether the BG actually implements NxN style routing as described above, or something more like 1xN or Nx1 routing, but there is general agreement that it implements cortical routing.

Of course in actuality the BG architecture is considerably more complex, as it also must implement reinforcement learning, and the intermodular connectivity map itself is also probably quite sparse/compressed (the BG may not control all of cortex, certainly not at a uniform resolution, and many controlled modules may have a very limited number of allowed routing decisions). Nonetheless, the simple multiplicative gating model illustrates the core idea.

This same multiplicative gating mechanism is the core principle behind the highly successful LSTM (Long Short-Term Memory)[30] units that are used in various deep learning systems. The simple version of the BG's gating mechanism can be considered a wider parallel and hierarchical extension of the basic LSTM architecture, where you have a parallel array of N memory cells instead of 1, and each memory cell is a large vector instead of a single scalar value.
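For reference, here is a minimal Python sketch of one update step of a single scalar LSTM cell, showing where the multiplicative gates act. It uses the now-common variant with a forget gate; the `params` layout and the example numbers are assumptions for illustration.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h_prev, c_prev, params):
    """One step of a single scalar LSTM cell.

    params maps each of 'i', 'f', 'o', 'g' to a (w_x, w_h, bias) tuple.
    Returns the new hidden output h and the new memory cell value c.
    """
    def pre(name):
        w_x, w_h, b = params[name]
        return w_x * x + w_h * h_prev + b

    i = sigmoid(pre('i'))    # input gate: how much new content to write
    f = sigmoid(pre('f'))    # forget gate: how much old memory to keep
    o = sigmoid(pre('o'))    # output gate: how much memory to expose
    g = math.tanh(pre('g'))  # candidate content computed from the input

    c = f * c_prev + i * g   # gated memory update
    h = o * math.tanh(c)     # gated read-out
    return h, c


# Hypothetical parameters: input gate shut, forget gate open -> "hold".
hold = {'i': (0.0, 0.0, -50.0), 'f': (0.0, 0.0, 50.0),
        'o': (0.0, 0.0, 50.0), 'g': (1.0, 0.0, 0.0)}
h_hold, c_hold = lstm_step(x=3.0, h_prev=0.0, c_prev=0.7, params=hold)

# Input gate open, forget gate shut -> "overwrite" with the new input.
write = {'i': (0.0, 0.0, 50.0), 'f': (0.0, 0.0, -50.0),
         'o': (0.0, 0.0, 50.0), 'g': (1.0, 0.0, 0.0)}
h_write, c_write = lstm_step(x=3.0, h_prev=0.0, c_prev=0.7, params=write)
```

In the 'hold' setting the cell keeps its stored value regardless of the input, and in the 'write' setting it is replaced by the new content. The multiplications by `i`, `f`, and `o` are the same kind of enable/disable operation as the gates in the routing model, applied to one memory cell instead of a matrix of intermodular connections.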

The main advantage of the BG architecture is parallel hierarchical approximate control: it allows a large number of hierarchical control loops to update and influence each other in parallel. It also reduces the huge complexity of general routing across the full cortex down into a much smaller-scale, more manageable routing challenge.

Implications for AGI

These two conceptions of the brain - the universal learning machine hypothesis and the evolved modularity hypothesis - lead to very different predictions for the likely route to AGI, the expected differences between AGI and humans, and thus any consequent safety issues and strategies.

In the extreme case imagine that the brain is a pure ULM, such that the genetic prior information is close to zero or is simply unimportant. In this case it is vastly more likely that successful AGI will be built around designs very similar to the brain, as the ULM architecture in general is the natural ideal, vs the alternative of having to hand engineer all of the AI's various cognitive mechanisms.

In reality learning is computationally hard, and any practical general learning system depends on good priors to constrain the learning process (essentially taking advantage of previous knowledge/learning). The recent and rapid success of deep learning is strong evidence for how much prior information is ideal: just a little. The prior in deep learning systems takes the form of a compact, small set of hyperparameters that control the learning process and specify the overall network architecture (an extremely compressed prior over the network topology and thus the program space).

The ULH suggests that most everything that defines the human mind is cognitive software rather than hardware: the adult mind (in terms of algorithmic information) is 99.999% a cultural/memetic construct. Obviously there are some important exceptions: infants are born with some functional but very primitive sensory and motor processing 'code'. Most of the genome's complexity is used to specify the learning machinery, and the associated reward circuitry. Infant emotions appear to simplify down to a single axis of happy/sad; differentiation into the more subtle vector space of adult emotions does not occur until later in development.

If the mind is software, and if the brain's learning architecture is already universal, then AGI could - by default - end up with a similar distribution over mindspace, simply because it will be built out of similar general purpose learning algorithms running over the same general dataset. We already see evidence for this trend in the high functional similarity between the features learned by some machine learning systems and those found in the cortex.

Of course an AGI will have little need for some specific evolutionary features: emotions that are subconsciously broadcast via the facial muscles are a quirk unnecessary for an AGI - but that is a rather specific detail.

The key takeaway is that the data is what matters - and in the end it is all that matters. Train a universal learner on image data and it just becomes a visual system. Train it on speech data and it becomes a speech recognizer. Train it on Atari and it becomes a little gamer agent.

Train a universal learner on the real world in something like a human body and you get something like the human mind. Put a ULM in a dolphin's body and echolocation is the natural primary sense, put a ULM in a human body with broken visual wiring and you can also get echolocation.

Control over training is the most natural and straightforward way to control the outcome.

To create a superhuman AI driver, you 'just' need to create a realistic VR driving sim and then train a ULM in that world (better training and the simple power of selective copying lead to superhuman driving capability).

So to create benevolent AGI, we should think about how to create virtual worlds with the right structure, how to educate minds in those worlds, and how to safely evaluate the results.

One key idea - which I proposed five years ago - is that the AI should not know it is in a sim.

New AI designs (world design + architectural priors + training/education system) should be tested first in the safest virtual worlds: which in simplification are simply low tech worlds without computer technology. Design combinations that work well in safe low-tech sandboxes are promoted to less safe high-tech VR worlds, and then finally the real world.

A key principle of a secure code sandbox is that the code you are testing should not be aware that it is in a sandbox. If you violate this principle then you have already failed. Yudkowsky's AI box thought experiment assumes the violation of the sandbox security principle a priori and thus is something of a distraction. (The virtual sandbox idea was most likely discussed elsewhere previously, as Yudkowsky indirectly critiques a strawman version of the idea via this sci-fi story.)

The virtual sandbox approach also combines nicely with invisible thought monitors, where the AI's thoughts are automatically dumped to searchable logs.

Of course we will still need a solution to the value learning problem. The natural route with brain-inspired AI is to learn the key ideas behind value acquisition in humans to help derive an improved version of something like inverse reinforcement learning and/or imitation learning[31] - an interesting topic for another day.

Conclusion

Ray Kurzweil has been predicting for decades that AGI will be built by reverse engineering the brain, and this particular prediction is not especially unique - this has been a popular position for quite a while. My own investigation of neuroscience and machine learning led me to a similar conclusion some time ago.

The recent progress in deep learning, combined with the emerging modern understanding of the brain, provides further evidence that AGI could arrive around the time when we can build and train ANNs with similar computational power as measured very roughly in terms of neuron/synapse counts. In general the evidence from the last four years or so supports Hanson's viewpoint from the Foom debate. More specifically, his general conclusion:

The ULH supports this conclusion.

Current ANN engines can already train and run models with around 10 million neurons and 10 billion (compressed/shared) synapses on a single GPU, which suggests that the goal could soon be within the reach of a large organization. Furthermore, Moore's Law for GPUs still has some steam left, and software advances are currently improving simulation performance at a faster rate than hardware. These trends imply that Anthropomorphic/Neuromorphic AGI could be surprisingly close, and may appear suddenly.

What kind of leverage can we exert on a short timescale?