All of gianlucatruda's Comments + Replies

I somehow missed all notifications of your reply and just stumbled upon it by chance when sharing this post with someone.

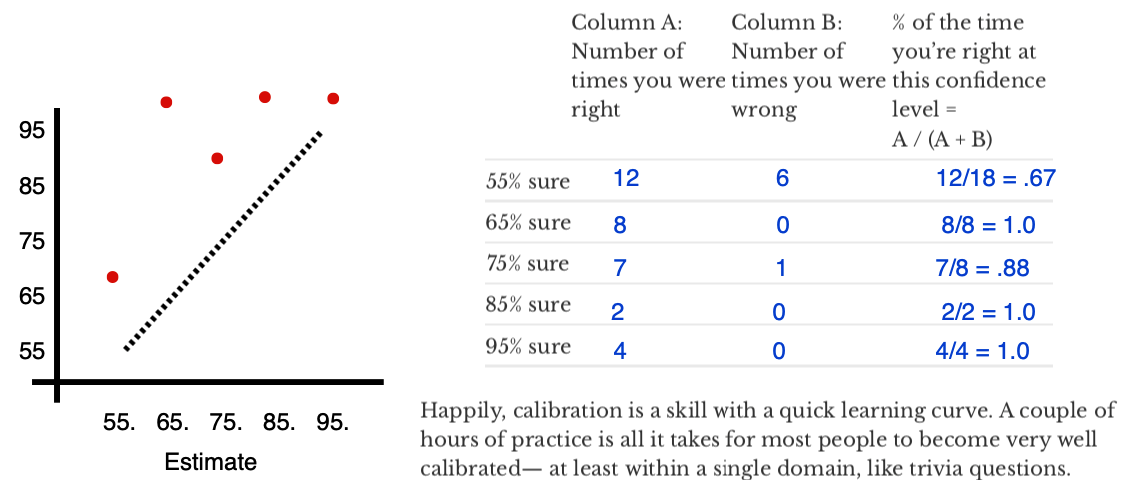

I had something very similar with my calibration results, only it was for 65% estimates:

I think your hypotheses 1 and 2 match with my intuitions about why this pattern emerges on a test like this. Personally, I feel like a combination of 1 and 2 is responsible for my "blip" at 65%.

I'm also systematically under-confident here — that's because I cut my prediction teeth getting black swanned during 2020, so I tend to leave...

This is a superb summary! I'll definitely be returning to this as a cheatsheet for the core ideas from the book in future. I've also linked to it in my review on Goodreads.

it's straightforwardly the #1 book you should use when you want to recruit new people to EA. [...] For rationalists, I think the best intro resource is still HPMoR or R:AZ, but I think Scout Mindset is a great supplement to those, and probably a better starting point for people who prefer Julia's writing style over Eliezer's.

Hmm... I've had great success with the HPMOR / R:AZ route for c...

I present to you VQGANCLIP's take on a Bob Ross painting of Bob Ross painting Bob Ross paintings 😂 This surpassed my wildest expectations!

I don't feel like it's the kind of polished thing I'd put on LW. But here it is on my blog: gianlucatruda.com/blog/2021/07/08/wisdom.html

One decent way of engineering an authentic 1-1 conversation is to go through a bunch of personal and vulnerability-inducing questions together, a la 36 Questions that Lead to Love (after cutting the ⅔ of questions that I found dull). So I made a list of questions I considered interesting, which I expected to lead to authentic and vulnerable conversations.

This is a fascinating strategy and I'm surprised it worked so well. The linked article for the list of questions is paywalled (and NYT).

After a bit of digging, this seems to be the original study for...

The full audiobook is now available at https://anchor.fm/guilt/episodes/Replacing-Guilt-full-audiobook-e13ct4d/a-a5vrdtu

I'll DM you :)

Okay, I absolutely love this post! In fact, if you were to break it down into three posts, I would probably have been a serious fan of all of them individually.

Firstly, the expected utility formulation of lateness is excellent and explains a lot of my personal behaviour. I'm aggressively early for important events like client meetings and interviews, but consistently tardy when meeting for coffee or arriving for a lecture. Whilst your methodology focussed on unobservable shifts to the time axis, I suspect there are also interesting gains to be made i...

Was about to comment the same thing. Saving it to my Wisdom List.

UPDATE: I've published the list here: https://gianlucatruda.com/blog/2021/07/08/wisdom.html

Most rationalists are heavily invested in AGI in non-monetary ways: career paths, free time, hopes for longevity/coordination breakthroughs. As other commenters have pointed out, if humanity achieves aligned AGI in the future, financial returns will feasibly be far less important. Given that, maybe the best investments are to bet against AGI as a hedge for humanity not achieving it.

There are 3 futures: If we achieve aligned AGI, we win the game and nothing else matters*. If we achieve misaligned AGI, we die and nothing else matters. If we fail to ...

which might happen in 1-2 years and tank crypto-mining completely.

Good point. But that would be a much better time to buy in for long-term value.

One approach that feels a bit more direct is investing in semiconductor stocks.

I agree with this and the above points.

One way to potentially overcome the issues with TSMC might be to supplement the investment by buying into commodities like silicon and coltan. This is still not guaranteed to capture most of the value, but might be a method of diversification. But there are many ethical considerations (particularly with coltan).

Yes! Even many websites and web apps implement some Vim standards. Particularly / for search.

This is a superb overview! I've used Vim for about 2 years now, but I still learned a bunch of things from this post that I didn't pick up from other cheatsheets or articles.

My 2-cents: Vim itself is powerful as an editor, but I always missed some IDE features. What I've come to realise is that the real power of Vim is not the editor, but the keybindings. I installed the Vim extension in VSCode some time ago and have loved the hybrid workflow. Since then, I've been gradually incorporating Vim keybindings into all the tools I use for text — like Overl...

There will be a Sequences Discussion Club event to talk about this post. Join us on Clubhouse tonight for a ~1h discussion. https://www.joinclubhouse.com/event/PQRv1RoA

If you listen from 31:54 (linked here) to 46:00, Lex articulates very nicely what's unique and interesting about Clubhouse and they discuss how it compares to Skinner-box social media. It's a nice summary of the underlying value and definitely echoes some of my experiences so far.

Try joining communities/clubs on topics you’re interested in. Then any rooms started by their members should pop up in your lobby. Also, I’ve heard that following people you’re interested in helps improve the suggestions.

I'd love to do that sometime (timezones permitting). I'm @gianlucatruda on Clubhouse.

Great summary! For those reading the comments, there is a growing Rationalist-oriented community on Clubhouse. Join here: https://www.joinclubhouse.com/club/rationality-live

Update: I tried searching again and it now pops up when I search “rationality”. Seems it just took a while to update.

Seems that there isn't yet a robust way to share these new communities (that I've found). But I'm glad you're finally in. Looking forward to some future conversations!

I don't think it's you. These in-app communities are a brand new feature, so I suspect it's still a bit buggy. Thanks for letting me know.

Try visiting this event link from your phone and then tap on the club name. Does that work?

I'll also try to invite you directly from the app.

Agreed. They're working on Android at the moment. I should have made all that clear in the post.

I just discovered this now, Zvi. It's such a great heuristic!

I whipped up an interactive calculator version in Desmos for my own future reference, but others might find it useful too: https://www.desmos.com/calculator/pf74qjhzuk

Apologies for the late reply. Thanks for your kind words and support!

My Replacing Guilt output has been very low lately, but I'll have some more time flexibility in the near future and will start making progress again.

Thanks for compiling the series like this. I really appreciated being able to read it on my Kindle!

To help make Nate's ideas even more accessible, I'm currently producing an audio version. It can be found at https://anchor.fm/guilt or by searching "Replacing Guilt Podcast" on all podcast platforms. I intend to make a single audiobook out of it at the end too*.

If you know of people who would benefit from Replacing Guilt, but primarily consume audio instead of reading, please do forward it their way.

*All with Nate's permission, of course

The full audiobook is now available at https://anchor.fm/guilt/episodes/Replacing-Guilt-full-audiobook-e13ct4d/a-a5vrdtu

No, to be clear, what I interpreted of your post was that you are "prescribing" how much time should be spent. "Predicting" how much time you will spend on something is not particularly helpful in achieving any output results, especially if it's largely just repeating what you did before. Your response does help in that it clarifies what you have done. It's just not what I thought you had done. In my experience, a "prescription" -- a plan for what you must do to achieve some valuable outcome -- is of more use in sel...

Could you elaborate on how exactly you went from a collection of data to a prescription of how much time you should spend on each task?

Great post and helpful synthesis of the difference between procedural- and declarative-directed approaches. The matrix multiplication example earns a 10/10 too. I trust the exams went well!

The perspective of the black-box AI could make for a great piece of fiction. I'd love to write a sci-fi short and explore that concept someday!

The point of your post (as I took it) is that it takes only a little creativity to come up with ways an intelligent black-box could covertly "unbox" itself, given repeated queries. Imagining oneself as the black-box makes it far easier to generate ideas for how to do that. An extended version of your scenario could be both entertaining and thought-provoking to read.