# Feast Quality Model: Full Parameter Specification (simon note: AI generated)

## Executive Summary

This document presents two piecewise linear models for predicting feast quality based on the balance of spicy, sweet, and bulk characteristics. Both models use learned per-dish weights for each dimension, with penalties applied when totals fall outside optimal zones.
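As a minimal sketch of the "flat" variant described above: each dimension's total is the sum of per-dish weights, and a penalty applies only when that total leaves the optimal zone. The names `FlatZone`, `feast_quality`, and `base`, along with all numeric values, are hypothetical illustrations and not the fitted parameters.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FlatZone:
    """Hypothetical parameters for one dimension (spicy, sweet, or bulk)."""
    low: float          # lower edge of the optimal zone
    high: float         # upper edge of the optimal zone
    slope_below: float  # quality lost per unit below `low`
    slope_above: float  # quality lost per unit above `high`

    def penalty(self, total: float) -> float:
        """Piecewise linear penalty: zero inside [low, high], linear outside."""
        if total < self.low:
            return self.slope_below * (self.low - total)
        if total > self.high:
            return self.slope_above * (total - self.high)
        return 0.0


def feast_quality(dishes, weights, zones, base=0.0):
    """Score a feast as `base` minus the penalty on each dimension.

    dishes  -- iterable of dish names
    weights -- {dish: {dimension: weight}}, standing in for learned weights
    zones   -- {dimension: FlatZone}
    base    -- assumed quality of a feast inside every optimal zone
    """
    score = base
    for dim, zone in zones.items():
        total = sum(weights[d].get(dim, 0.0) for d in dishes)
        score -= zone.penalty(total)
    return score


# Invented numbers, for illustration only.
zones = {"spicy": FlatZone(low=2.0, high=4.0, slope_below=1.0, slope_above=0.5)}
weights = {"dish_a": {"spicy": 1.0}, "dish_b": {"spicy": 2.0}}
print(feast_quality(["dish_a", "dish_b"], weights, zones, base=10.0))
```

Under this shape, every feast whose totals all sit inside the zones scores the same, which is why aiming for the center of each zone is the robust choice when the zone edges are uncertain.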

**Key Finding:** The "spicy peak" model shows marginal RMSE improvement and is **marginally significant** (p=0.034) by F-test, but **BIC favors the simpler flat model**. Given the mixed evidence, we recommend targeting the **center of the flat model's optimal zones** for maximum robustness.
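The tension between the F-test and BIC can be sketched as follows, assuming Gaussian errors, nested models, and RMSE defined as `sqrt(RSS / n)`. The sample size `n` and the larger model's parameter count are not given here, so the values in the usage lines are placeholders and will not reproduce the reported p-value.

```python
import math


def rss_from_rmse(rmse: float, n: int) -> float:
    """Recover the residual sum of squares, assuming RMSE = sqrt(RSS / n)."""
    return n * rmse ** 2


def bic(rmse: float, n: int, k: int) -> float:
    """Gaussian-error BIC up to an additive constant: n*ln(RSS/n) + k*ln(n)."""
    return n * math.log(rmse ** 2) + k * math.log(n)


def f_statistic(rmse_simple, k_simple, rmse_full, k_full, n):
    """F statistic for a nested comparison (simple model inside full model)."""
    rss_s = rss_from_rmse(rmse_simple, n)
    rss_f = rss_from_rmse(rmse_full, n)
    numerator = (rss_s - rss_f) / (k_full - k_simple)
    denominator = rss_f / (n - k_full)
    return numerator / denominator


# Placeholder inputs: n and k_full are assumptions, not values from this document.
n, k_flat, k_peak = 1000, 64, 66
print(bic(1.9635, n, k_flat), bic(1.9583, n, k_peak))
print(f_statistic(1.9635, k_flat, 1.9583, k_peak, n))
```

BIC charges `ln(n)` per extra parameter, which is a stiffer price than the F-test's 5% threshold once `n` is large, so a small RMSE gain can pass the F-test and still lose on BIC.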

---

## Model Comparison

| Metric | All-Flat Model | Spicy Peak Model |
|--------|----------------|------------------|
| RMSE | 1.9635 | 1.9583 |
| Parameters | 64

further followup:

So I have (had AI generate) a new, better model, in which the sweet/spicy/bulk dimensions have continuous values, each independently having a piecewise linear effect on feast quality. In one version, the piecewise linear functions have a flat middle, with drop-offs at higher and lower values. This results in a range in which all feasts are equivalent. In another version, there's a peak at low spiciness and we try to pick near that spiciness peak. A combined hedge version has us pick as low a spiciness value as possible within the flat range. The resulting feast pick is: **['Geometric Gelatinous Gateau', 'Pegasus Pinion Pudding', 'Roc Roasted Rare', 'Troll Tenderloin Tartare', 'Vicious

followup after reading other answers:

abstractapplic says that it's the total quantity of sweetness/spiciness that matters, and I feel like that's almost certainly true. Probably the hearty/ethereal thing is quantitative too, if it exists. (It may or may not relate to total food quality: Claude thought Roc had a x2 food contribution after I suggested that as a possible explanation for only Roc feasts going down to 2 ingredients, and some tests seemed to corroborate it.) I had some AI-generated quantitative stuff (including sweet, spicy and hearty/ethereal axes), but I'm not sure it was even used in the actual model before the AI pushed me to an interactions model that couldn't possibly be the simplest

# Feast Analysis Summary (simon note - AI generated, also obsolete. I've un-upvoted it which might hide it)

This document summarizes findings from analyzing feast quality variance, optimal feast selection, and ingredient distribution patterns.

---

## 1. Noise Model Analysis

### Approach

We analyzed duplicate feast configurations (same dishes, multiple observations) to understand the underlying noise distribution. With 67 n=2 pairs and 10 n=3 triplets (excluding WWW), we computed spread distributions and compared them to various hypothesized noise models using full likelihood calculations.
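A minimal sketch of the spread tabulation, assuming "spread" means the maximum minus the minimum quality among repeated observations of the same set of dishes. The function name and the toy observations are hypothetical, and the likelihood comparison against candidate noise models is not shown.

```python
from collections import Counter, defaultdict


def spread_distribution(observations, group_size=2):
    """Count spreads (max - min quality) over feasts seen `group_size` times.

    observations -- iterable of (dishes, quality) pairs; dish order is ignored
    """
    groups = defaultdict(list)
    for dishes, quality in observations:
        groups[frozenset(dishes)].append(quality)
    spreads = Counter(
        max(q) - min(q) for q in groups.values() if len(q) == group_size
    )
    return dict(sorted(spreads.items()))


# Invented data, for illustration only.
toy = [
    ({"a", "b"}, 10), ({"b", "a"}, 12),  # one pair with spread 2
    ({"c"}, 7), ({"c"}, 7),              # one pair with spread 0
    ({"d"}, 5),                          # seen once, so excluded
]
print(spread_distribution(toy))  # {0: 1, 2: 1}
```

Calling it with `group_size=3` gives the corresponding table for triplets.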

### Findings

**Spread distribution (observed, no WWW):**

| Spread | Count | Percentage |
|--------|-------|------------|
| 0 | 10 | 14.9% |
| 1 | 23 | 34.3% |
| 2 | 20 | 29.9% |
| 3 | 3 | 4.5%

Thanks for the extension, aphyer.

And after the extension, I wound up just doing more LLM analysis (having already done some beforehand) and while it's probably better than nothing, I would not be surprised at all if there were a simple ruleset which wasn't found due to being outside the considered hypothesis space. Anyway, with some trepidation I'll go with Claude's Q10 feast from the summary by Claude 4.5 Opus, which I'll put in a reply comment so it can be separately collapsed. That feast is: Ambrosial Applesauce, BBQ Basilisk Brisket, Geometric Gelatinous Gateau, Roc Roasted Rare, Troll Tenderloin Tartare, Vicious Vampire Vindaloo.

Edit: now **['Geometric Gelatinous Gateau', 'Pegasus Pinion Pudding', 'Roc Roasted Rare', 'Troll Tenderloin Tartare', 'Vicious Vampire Vindaloo']**

actually

**BBQ Basilisk Brisket, Displacer Dumplings, Geometric Gelatinous Gateau, Killer Kraken Kebabs, Opulent Owlbear Omelette, Pegasus Pinion Pudding, Troll Tenderloin Tartare**

following revised model in followup comment.

AI summary in reply comment:

In my view:

- facilitation of stag-hunt-like cooperation is really useful. Because cooperating to do stuff beyond the capabilities of individuals is useful but hard.

- the dynamic you discuss in the post applies to stag hunt facilitation because their success depends on willingness of others to provide more resources (up to some point where they can generate more)

- the difference between, e.g., Theranos and standard entrepreneurship does not lie in the dynamic you discuss in the post. It lies in how egregiously Elizabeth Holmes was lying relative to the standard level of misleadingness. (and of course, more honesty would be better...)

- It would of course be very valuable to determine if a stag hunt will pay off or will fail! But the difference between the two does not lie in the dynamic you discuss in the post (which applies to both ultimately successful and unsuccessful stag hunts).

aimed in a relatively broad sense at people who care about how well societies and groups of people function.

I don't think allowing financial fraud is a thing current institutions mostly want? The difficulty is more in figuring out how to stop it without stopping legitimate activity as well (a lot of successful entrepreneurship will look a lot like this, I think). If you are calling for normal speculative investment to be banned, it's very likely not worth the loss of innovation. (It may make sense to be more strict about what level of falsehood leads to fraud prosecution, but I would keep it to banning false claims.)

My accusations, at least so far:

Danny Nova for curing:

- Babblepox (always present in same sector)

- Bumblepox, Scramblepox (always present in same sector OR Azeru in adjacent sector)

- Gurglepox (always present in same sector OR Dankon Ground in opposite sector)

- Chucklepox (Danny Nova in same sector for about half of cures. All other cases Lomerius Xardus was present somewhere (todo: investigate if more patterns than mere presence))

- Rumblepox (suspiciously high ratio of presence/absence in Calderia when cured, but plenty more cases unexplained - TODO: explain more)

- Disquietingly Serene Bowel Syndrome (on weak evidence, see below)

Azeru for curing Bumblepox, Scramblepox (see Danny Nova)

Lomerius Xardus for curing Chucklepox (see Danny Nova)

Boltholopew and Moon Finder, collectively, for curing Disease Syndrome, Parachordia, Problems

Interesting link on symbolic regression. I actually tried to get an AI to write me something similar a while back[1] (not knowing that the concept was out there and foolishly not asking, though in retrospect it obviously would be).

From your response to kave:

> calculate a quantity then use that as a new variable going forward

In terms of the tree structure used in symbolic regression (including my own attempt), I would characterize this as wanting to preserve a subtree and letting the rest of the tree vary.
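To illustrate "preserve a subtree and let the rest of the tree vary", here is a minimal sketch in which a node can be marked as frozen and mutation skips everything beneath it. The `Node` class, the `frozen` flag, and the operator-swap mutation are hypothetical and do not reproduce any particular symbolic regression library or my own attempt.

```python
import random
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    op: str                                   # operator, variable, or constant
    children: List["Node"] = field(default_factory=list)
    frozen: bool = False                      # True: keep this whole subtree


def mutable_nodes(root: Node) -> List[Node]:
    """Collect nodes that mutation may touch, skipping frozen subtrees."""
    if root.frozen:
        return []
    found = [root]
    for child in root.children:
        found.extend(mutable_nodes(child))
    return found


def mutate_operator(root: Node, binary_ops, rng: random.Random) -> Node:
    """Swap the operator of one randomly chosen non-frozen binary node."""
    candidates = [n for n in mutable_nodes(root) if len(n.children) == 2]
    if candidates:
        rng.choice(candidates).op = rng.choice(binary_ops)
    return root


# (x * y) is the preserved quantity; the surrounding expression may change.
kept = Node("*", [Node("x"), Node("y")], frozen=True)
tree = Node("+", [kept, Node("z")])
mutate_operator(tree, ["+", "-", "/"], random.Random(0))
print(tree.op, kept.op)
```

The frozen subtree then behaves like a derived variable: the search can change how it is used, but not how it is computed.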

Possible issues:

- If the coding modifies the trees leaf-first, trees with different roots but common subtrees aren't treated as close to each other. This is an issue that my own

A guess at how the SpaceX Starship's ground pad's destruction may have occurred:

Imagine you have supersonic, relatively low density gases coming out of a rocket and impacting on a flat surface below. As the gases "pile up" against the ground or just push the air, they could form a shock front separating the fast and lower density flow from the rocket nozzles from a denser, slow mass of compressed* gas near the ground. This shock front would be unstable due to Rayleigh-Taylor instability, and my guess is that maybe it was so unstable that it made contact with the pad and shattered it due to the large uneven forces, allowing the now...

Epistemic status: mulled over an intuitive disagreement for a while and finally think I got it well enough expressed to put into a post. I have no expertise in any related field. Also: No, really, it predicts next tokens. (edited to add: I think I probably should have used the term "simulacra" rather than "mask", though my point does not depend on the simulacra being a simulation in some literal sense. Some clarifications in comments, e.g. this comment).

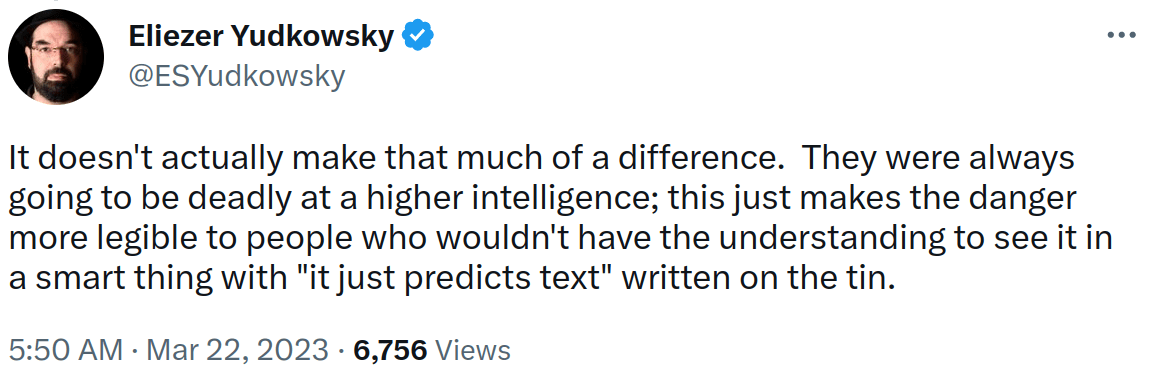

https://twitter.com/ESYudkowsky/status/1638508428481155072

It doesn't just say that "it just predicts text" or more precisely "it just predicts next tokens" on the tin.

It is a thing of legend. Nay, beyond legend. An artifact forged not by the finest...

Thanks aphyer for making this scenario and congrats to James Camacho (and Unnamed) for their better solutions.

The underlying mechanics here are not that complicated, but uncovering details of the mechanics seemed deceptively difficult, I guess due to the non-monotonic effects, and randomness entering the model before the non-monotonicity.

It wasn't that hard though to come up with answers that would do OK, just to decipher the mechanics. I guess this is good in some ways (one doesn't just insta-solve it), but I do like to be able to come up with legible understanding while solving these, and it felt pretty hard to do so in this case, so maybe I'd prefer if there...