All of TLW's Comments + Replies

I should probably reread the paper.

That being said:

No, it doesn't, any more than "Godel's theorem" or "Turing's proof" proves simulations are impossible or "problems are NP-hard and so AGI is impossible".

I don't follow your logic here, which probably means I'm missing something. I agree that your latter cases are invalid logic. I don't see why that's relevant.

simulators can simply approximate

This does not evade this argument. If nested simulations successively approximate, total computation decreases exponentially (or the Margolus–Levitin theorem doesn't a...

Said argument applies if we cannot recursively self-simulate, regardless of reason (Margolus–Levitin theorem, parent turning the simulation off or resetting it before we could, etc).

In order for 'almost all' computation to be simulated, most simulations have to be recursively self-simulating. So either we can recursively self-simulate (which would be interesting), we're rare (which would also be interesting), or we have a non-zero chance we're in the 'real' universe.
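A rough sketch of the bookkeeping behind this, under assumptions that are mine and not from the paper: each level hands a fixed fraction `fraction` (strictly less than 1) of its own computation to all of its child simulations combined, and the nesting goes `depth` levels deep. Both names are hypothetical.

```python
def compute_by_level(base: float, fraction: float, depth: int) -> list[float]:
    """Computation available at each nesting level, level 0 being the 'real' universe."""
    return [base * fraction**k for k in range(depth + 1)]

levels = compute_by_level(base=1.0, fraction=0.5, depth=30)
total = sum(levels)
base_share = levels[0] / total  # share of all computation done at level 0

print(f"share of computation in the base universe: {base_share:.6f}")
```

Under these assumptions the total is a geometric series, so the base universe's share never drops below `1 - fraction`, however deep the nesting goes.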

Interesting.

I am also skeptical of the simulation argument, but for different reasons.

My main issue is: the normal simulation argument requires violating the Margolus–Levitin theorem[1], as it requires that you can do an arbitrary amount of computation[2] via recursively simulating[3].

This either means that the Margolus–Levitin theorem is false in our universe (which would be interesting), we're a 'leaf' simulation where the Margolus–Levitin theorem holds, but there's many universes where it does not (which would also be interesting), or we have a non...

Playing less wouldn’t decrease my score

Interesting. Is this typically the case with chess? Humans tend to do better with tasks when they are repeated more frequently, albeit with strongly diminishing returns.

being distracted is one of the effects of stress.

Absolutely, which makes it very difficult to tease apart 'being distracted as a result of stress caused by X causing a drop' and 'being distracted due to X causing a drop'.

solar+batteries are dropping exponentially in price

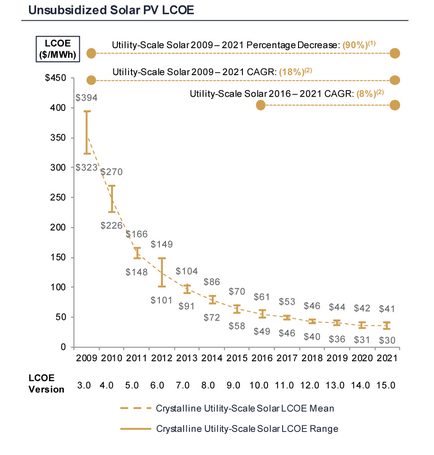

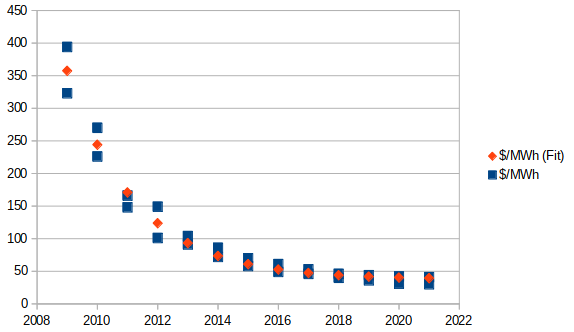

Pulling the data from this chart from your source:

...and fitting[1] an exponential trend with offset[2], I get:

(Pardon the very rough chart.)

This appears to be a fairly good fit[3], and results in the following trend/formula:

This is an exponentially-decreasing trend... but towards a decidedly positive horizontal asymptote.

This essentially indicates that we will get minimal future scaling, if any. $37.71/MWh is already within the given range.
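For anyone wanting to try this sort of fit themselves, here is a minimal sketch of one way to fit an exponential with offset, y = a·exp(-b·t) + c, where c is the horizontal asymptote. It is not necessarily the method used above, and the data points are synthetic (generated from made-up parameters), not the values from the chart. The function name `fit_exp_offset` is hypothetical.

```python
import math

def fit_exp_offset(ts, ys, steps=2000):
    """Fit y = a*exp(-b*t) + c by scanning candidate offsets c and,
    for each one, doing a least-squares line fit to log(y - c)."""
    n = len(ts)
    t_mean = sum(ts) / n
    t_var = sum((t - t_mean) ** 2 for t in ts)
    y_min = min(ys)
    best = None
    for i in range(steps):
        c = y_min * i / steps  # candidate asymptote in [0, y_min)
        logs = [math.log(y - c) for y in ys]
        l_mean = sum(logs) / n
        slope = sum((t - t_mean) * (l - l_mean) for t, l in zip(ts, logs)) / t_var
        a = math.exp(l_mean - slope * t_mean)
        b = -slope
        err = sum((a * math.exp(-b * t) + c - y) ** 2 for t, y in zip(ts, ys))
        if best is None or err < best[0]:
            best = (err, a, b, c)
    return best[1:]

# Synthetic, noise-free data from made-up parameters (a=300, b=0.4, c=40).
ts = list(range(11))
ys = [300.0 * math.exp(-0.4 * t) + 40.0 for t in ts]

a, b, c = fit_exp_offset(ts, ys)
print(f"a={a:.2f}, b={b:.3f}, asymptote c={c:.2f}")
```

The point of including the offset is that a plain exponential fit forces the asymptote to zero, whereas this form lets the data decide where the curve flattens out.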

For reference, he...

Too bad. My suspects for confounders for that sort of thing would be 'you played less at the start/end of term' or 'you were more distracted at the start/end of term'.

First, nuclear power is expensive compared to the cheapest forms of renewable energy and is even outcompeted by other “conventional” generation sources [...] The consequence of the current price tag of nuclear power is that in competitive electricity markets it often just can’t compete with cheaper forms of generation.

[snip chart]

Source: Lazard

This estimate does not seem to include capacity factors or cost of required energy storage, assuming I read it correctly. Do you have an estimate that does?

Thirdly, nuclear power gives you energy independence. This became very clear during Russia’s invasion of Ukraine. France, for example, had much fewer problems cutting ties with Russia than e.g. Germany. While countries might still have to import Uranium, the global supplies are distributed more evenly than with fossil fuels, thereby decreasing geopolitical relevance. Uranium can be found nearly everywhere.

Also, you can extract uranium from seawater. This has its own problems, and is still more expensive than mines currently. However, this puts a cap on the...

How much did you play during the start / end of term compared to normal?

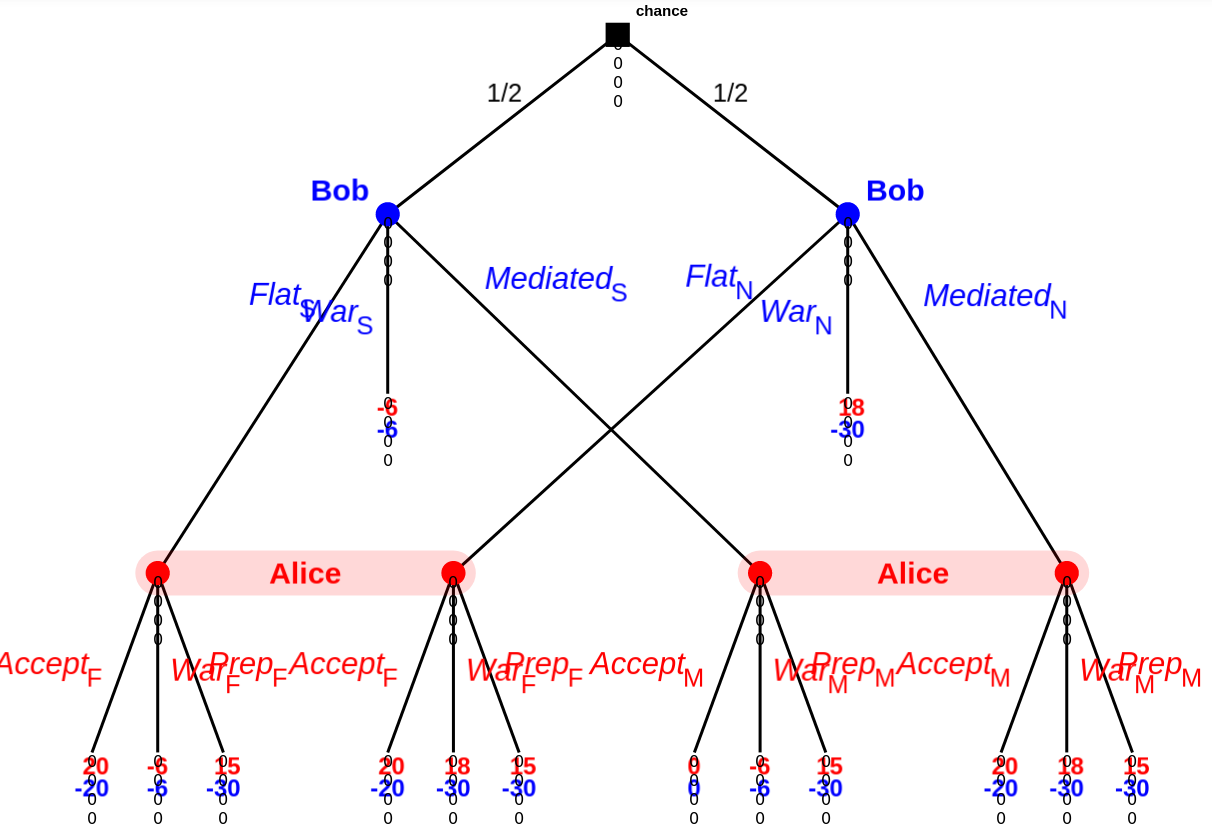

Here's an example game tree:

(Kindly ignore the zeros below each game node; I'm using the dev version of GTE, which has a few quirks.)

Roughly speaking:

- Bob either has something up his sleeve (an exploit of some sort), or does not.

- Bob either:

  - Offers a flat agreement (well, really surrender) to Alice.

  - Offers Alice an agreement arbitrated by a third party (Simon), binding once both sides agree to it. (The screenshot above says 'mediated'; I am not going to bother redoing it.)

  - Goes directly to war with Alice.

- Assuming Bob didn't go directly to war, Alice either

As TLW's comment notes, the disclosure process itself might be really computationally expensive.

I was actually thinking of the cost of physical demonstrations, and/or the cost of convincing others that simulations are accurate[1], not so much direct simulation costs.

That being said, this is still a valid point, just not one that I should be credited for.

...Ignore the suit of the cards. So you can draw a 1 (Ace) through 13 (King). Pulling two cards is a range of 2 to 26. Divide by 2 and add 1 means you get the same roll distribution as rolling two dice.

That's not the same roll distribution as rolling two dice[1]. For instance, rolling a 14 (pulling 2 kings) has a probability of , not [2].

(The actual distribution is weird. It's not even symmetrical, due to the division (and associated floor). Rounding to even/odd would help this, but would cause other issues.)
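A quick way to check this is to enumerate both distributions exactly. This sketch assumes a standard 52-card deck, two cards drawn without replacement, and a floor on the division; those are my reading of the proposal, not something it states outright.

```python
from collections import Counter
from fractions import Fraction
from itertools import permutations

# 52 cards with suits ignored: four copies each of ranks 1 (Ace) to 13 (King).
deck = [rank for rank in range(1, 14) for _ in range(4)]

def card_distribution():
    """Exact distribution of floor((a + b) / 2) + 1 over ordered two-card draws."""
    counts = Counter()
    for i, j in permutations(range(len(deck)), 2):  # draws without replacement
        counts[(deck[i] + deck[j]) // 2 + 1] += 1
    total = sum(counts.values())
    return {k: Fraction(v, total) for k, v in sorted(counts.items())}

def two_dice_distribution():
    """Exact distribution of the sum of two fair six-sided dice."""
    counts = Counter(a + b for a in range(1, 7) for b in range(1, 7))
    return {k: Fraction(v, 36) for k, v in sorted(counts.items())}

cards = card_distribution()
dice = two_dice_distribution()
for value in sorted(set(cards) | set(dice)):
    print(value, cards.get(value, 0), dice.get(value, 0))
```

The card scheme ranges over 2 to 14 while two dice range over 2 to 12, and the two ends of the card distribution have different probabilities, which is the asymmetry mentioned above.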

This also ...

Interesting.

The other player gets to determine your next dice roll (again, either manually or randomly).

Could you elaborate here?

Alice cheats and says she got a 6. Bob calls her on it. Is it now Bob's turn, and hence effectively a result of 0? Or is it still Alice's turn? If the latter, what happens if Alice cheats again?

I'm not sure how you avoid the stalemate of both players 'always' cheating and both players 'always' calling out the other player.

...Instead of dice, a shuffled deck of playing cards would work better. To determine your dice roll, just pull tw

Interesting!

How does said binding treaty come about? I don't see any reason for Alice to accept such a treaty in the first place.

Alice would instead propose (or counter-propose) a treaty that always takes the terms that would result from the simulation according to Alice's estimate.

Alice is always at least indifferent to this, and the only case where Bob is not at least indifferent to this is if Bob is stronger than Alice's estimate, in which case accepting said treaty would not be in Alice's best interest. (Alice should instead stall and hunt for exploits, give or take.)

Let's look at a relatively simple game along these lines:

Person A either cheats an outcome or rolls a d4. Then person B either accuses, or doesn't. If person B accuses, the game ends immediately, with person B winning (losing) if their accusation was correct (incorrect). Otherwise, repeat a second time. At the end, assuming person B accused neither time, person A wins if the total sum is at least 6. (Note that person A has a lower bound of a winrate of 3/8ths simply by never cheating.)
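The 3/8ths figure can be checked by brute force. This is only a sketch of the honest-play baseline, not a solution of the game: if person A never cheats, any accusation by person B is incorrect and hands A the win, so A's winrate is at least the chance that two honest d4 rolls total 6 or more.

```python
from fractions import Fraction
from itertools import product

# All 16 equally likely outcomes of two honest d4 rolls.
outcomes = list(product(range(1, 5), repeat=2))
wins = [pair for pair in outcomes if sum(pair) >= 6]

honest_floor = Fraction(len(wins), len(outcomes))
print(wins)
print(f"winrate when never cheating and never accused: {honest_floor}")
```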

Let's look at the second round first.

First subcase: the first roll (legi...

What's the drawback to always accusing here?

Though this does suggest a (unrealistically) high-coordination solution to at least this version of the problem: have both sides declare all their capabilities to a trusted third party who then figures out the likely costs and chances of winning for each side.

Is that enough?

Say Alice thinks her army is overwhelmingly stronger than Bob's. (In fact Bob has a one-time exploit that allows Bob to have a decent chance to win.) The third party says that Bob has a 50% chance of winning. Alice can then update P(exploit), and go 'uhoh' and go back and scrub for exploits.

(So... the third-party scheme might still work, but only once I think.)

Conversely, if FDR wants a chicken in every pot, and then finds out that chickens don't exist, he would change his values to want a beef roast in every pot, or some such.

I do not believe his value function is "a chicken in every pot". It's likely closer to 'I don't want anyone to be unable to feed themselves', although even this is likely an over-approximation of the true utility function. 'A chicken in every pot' is one way of doing well on said utility function. If he found out that chickens didn't exist, the 'next best thing' might be a roast beef in ev...

Demonstrating military strength is itself often a significant cost.

Say your opponent has a military of strength 1.1x, and is demonstrating it.

If you have the choice of keeping and demonstrating a military of strength x, or keeping a military of strength 1.2x and not demonstrating at all...

If you allow the assumption that your mental model of what was said matches what was said, then you don't necessarily need to read all the way through to authoritatively say that the work never mentions something, merely enough that you have confidence in your model.

If you don't allow the assumption that your mental model of what was said matches what was said, then reading all the way through is insufficient to authoritatively say that the work never mentions something.

(There is a third option here: that your mental model suddenly becomes much better when you finish reading the last word of an argument.)

I want to clarify that this is not a particularly useful type of utility function, and the post was a mostly-failed attempt to make it useful.

Fair! Here's another[1] issue I think, now that I've realized you were talking about utility functions over behaviours, at least if you allow 'true' randomness.

Consider a slight variant of matching pennies: if an agent doesn't make a choice, their choice is made randomly for them.

Now consider the following agents:

- Twitchbot.

- An agent that always plays (truly) randomly.

- An agent that always plays the best Nash equil

Interesting!

Could you please explain why your arguments don't apply to compilers?

You would get a 1.01 multiplier in productivity, that would make the speed of development 1.01x faster, especially the development of a Copilot-(N+1),

...assuming that Copilot-(N+1) has <1.01x the development cost as Copilot-N. I'd be interested in arguments as to why this would be the case; most programming has diminishing returns where e.g. eking out additional performance from a program costs progressively more development time.

That's a very different definition of utility function than I am used to. Interesting.

What would the utility function over behaviors for an agent that chose randomly at every timestep look like?

(Disclaimer: not my forte.)

CLIP’s task is to invent a notation system that can express the essence of (1) any possible picture, and (2) any possible description of a picture, in only a brief list of maybe 512 or 1024 floating-point numbers.

How many bits is this? 2KiB / 16Kib? Other?
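For reference, the arithmetic, assuming each number is stored as a 32-bit float (the quoted text does not say which precision is used, so `BITS_PER_FLOAT32` is an assumption):

```python
BITS_PER_FLOAT32 = 32  # assumption: single-precision floats

def embedding_size_bits(n_floats: int, bits_per_float: int = BITS_PER_FLOAT32) -> int:
    """Raw storage size of an embedding, in bits."""
    return n_floats * bits_per_float

for n in (512, 1024):
    bits = embedding_size_bits(n)
    print(f"{n} floats: {bits} bits = {bits // 1024} Kib = {bits // 8 // 1024} KiB")
```

This is only the raw storage size; how much of it carries usable information is a separate question.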

Has there been any work in using this or something similar as the basis of a high-compression compression scheme? Compression and decompression speed would be horrendous, but still.

Hm. I wonder what would happen if you trained a version on internet imag...

Ah, I now realize that I was kind of misleading in the sentence you quoted. (Sorry about that.)

I made it sound like CLIP was doing image compression. And there are ML models that are trained, directly and literally to do image compression in a more familiar sense, trying to get the pixel values as close to the original as possible. These are the image autoencoders.

DALLE-2 doesn't use an autoencoder, but many other popular image generators do, such as VQGAN and the original DALLE.

So for example, the original DALLE has an autoencoder compon...

Why is this style of pessimism repeatedly wrong?

Beware selection bias. If it wasn't repeatedly wrong, there's a good chance we wouldn't be here to ask the question!

The opposite view is that progress is a matter of luck.

Hm. I tend to not view the pessimistic side as luck so much as 'there's a finite number of useful techs, which we are rapidly burning through'.

I don't think I make this assumption.

You don't explicitly; it's implicit in the following:

It is well known that a utility function over behaviors/policies is sufficient to describe any policy.

The VNM axioms do not necessarily apply for bounded agents. A bounded agent can rationally have preferences of the form A ~[1] B and B ~ C but A ≻[2] C, for instance[3]. You cannot describe this with a straight utility function.
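One toy model that produces exactly this pattern, offered as an illustration of my own rather than the example from the footnotes: an agent that cannot distinguish options whose underlying values differ by less than some threshold. The `THRESHOLD` and the three values are hypothetical.

```python
THRESHOLD = 1.0  # hypothetical just-noticeable difference for the bounded agent

def indifferent(x: float, y: float) -> bool:
    """The agent cannot tell x and y apart."""
    return abs(x - y) < THRESHOLD

def strictly_prefers(x: float, y: float) -> bool:
    """The agent notices that x is better than y."""
    return x - y >= THRESHOLD

A, B, C = 1.2, 0.6, 0.0

print("A ~ B:", indifferent(A, B))
print("B ~ C:", indifferent(B, C))
print("A > C:", strictly_prefers(A, C))
```

If indifference had to mean equal utility, then A ~ B and B ~ C would force u(A) = u(B) = u(C), which contradicts u(A) > u(C). So no single real-valued utility function reproduces these three judgements.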

...Kudos for not naively extrapolating past 100% of GDP invested into AI research.

Reduced Cost of Computation: Estimated to reduce by 50% every 2.5 years (about in-line with current trends), down to a minimum level of 1 / 10^6 (i.e., 0.0001%) in 50 years.

Increased Availability of Capital for AI: Estimated to reach a level of $1B in 2025, then double every 2 years after that, up to 1% of US GDP (currently would suggest $200B of available capital, and growing ~3% per year).
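As a sanity check on the quoted cost-of-computation numbers, here is a small sketch. It reads the quoted floor as 10^-6 of today's cost, which is what matches the stated 0.0001%; the function name `relative_cost` is mine.

```python
import math

HALVING_YEARS = 2.5  # from the quoted estimate
COST_FLOOR = 1e-6    # 1 / 10^6 of today's cost, i.e. 0.0001%

def relative_cost(years: float) -> float:
    """Cost of computation relative to today, clamped at the stated floor."""
    return max(0.5 ** (years / HALVING_YEARS), COST_FLOOR)

# Number of years of halving needed to reach the floor.
years_to_floor = HALVING_YEARS * math.log2(1 / COST_FLOOR)
print(f"floor reached after about {years_to_floor:.1f} years")
```

So the halving rate and the 50-year floor in the quoted estimate are at least consistent with each other.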

Our current trends in cost of computation are in combination with (seemingly) expone...

See https://www.nytimes.com/2019/11/04/business/secret-consumer-score-access.html (taken from this comment.)

All of this is predicated on the agent having unlimited and free access to computation.

This is a standard assumption, but is worth highlighting.

endowed with the of probability calculation

I suspect you dropped a word in that sentence.

Maybe "endowed with the power of probability calculation", or somesuch?

So, uh, how should we start preparing for the impact of this here in the West?

Push officials to redirect corn subsidies for ethanol toward food? Keeping American corn production alive in case it suddenly was required was one of the reasons for said subsidies[1], after all.

(Of course, this doesn't help the upstream portions of the food supply chain.)

[1] ...to the best of my knowledge, although I note that I can't find anything explicitly mentioning this at a quick look.

Alright, we are in agreement on this point.

I have a tendency to start on a tangential point, get agreement, then show the implications for the main argument. In practice a whole lot more people are open to changing their minds in this way than directly. This may be somewhat less important on this forum than elsewhere.

You stated:

...In contrast, I think almost all proponents of libertarian free will would agree that their position predicts that an agent with such free will, such as a human, could always just choose to not do as they are told. If the distributio

It seems to me that you're (intentionally or not) trying to find mistakes in the post.

It is obvious we have a fundamental disagreement here, and unfortunately I doubt we'll make progress without resolving this.

Euclid's Parallel Postulate was subtly wrong. 'Assuming charity' and ignoring that would not actually help. Finding it, and refining the axioms into Euclidean and non-Euclidean geometry, on the other hand...

My point is that the fact that Omega can guess 50/50 in the scenario I set up in the post doesn't allow it to actually have good performance

That... is precisely my point? See my conclusion:

If the fixedpoint was 50/50 however, the fixedpoint is still satisfied by Omega putting in the money 50% of the time, but Omega is left with no predictive power over the outcome .

(Emphasis added.)

Paxlovid itself is free at point-of-sale (or at least the current supply is), so there's no monetary concern.

See "paxlovid is free".

Admittedly, I am not super familiar with the US hospital system, but I believe Paxlovid is only available by prescription - which itself is a nontrivial cost for many.

...These patients have already been tested.

Not only did they get tested but they tested positive.

They already have covid, they aren't being asked to do something unusual and unpleasant to prevent an uncertain future harm.

They're literally being offered a prescriptio

Another case that violates the preconditions is if the information source is not considered to be perfectly reliable.

Imagine the following scenario:

Charlie repeatedly flips a coin, and tells Alice and Bob the results.

Alice and Bob are choosing between the following hypotheses:

- The coin is fair.

- The coin always comes up heads.

- The coin is fair, but Charlie only reports when the coin comes up heads.

Alice has a prior of 40% / 40% / 20%. Bob has a prior of 40% / 20% / 40%.

Now, imagine that Charlie repeatedly reports 'heads'. What happens?

Answer: Alice asymptote...
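The update can be worked through exactly. This sketch assumes the only evidence is the sequence of reports itself (no information from how often or when reports arrive), so that every report is 'heads' with probability 1/2 under the first hypothesis and probability 1 under the other two. The function name `posterior` is mine.

```python
from fractions import Fraction

def posterior(prior, n_heads):
    """Posterior over (fair, always heads, fair but only heads reported)
    after n_heads consecutive 'heads' reports."""
    likelihood = [Fraction(1, 2) ** n_heads, Fraction(1), Fraction(1)]
    joint = [p * l for p, l in zip(prior, likelihood)]
    total = sum(joint)
    return [j / total for j in joint]

alice_prior = [Fraction(40, 100), Fraction(40, 100), Fraction(20, 100)]
bob_prior = [Fraction(40, 100), Fraction(20, 100), Fraction(40, 100)]

for n in (0, 1, 5, 50):
    alice = [float(p) for p in posterior(alice_prior, n)]
    bob = [float(p) for p in posterior(bob_prior, n)]
    print(n, [round(p, 4) for p in alice], [round(p, 4) for p in bob])
```

Under these assumptions the reports never distinguish the second and third hypotheses, so the ratio between them stays at each person's prior ratio. Both rule out the fair coin with honest reporting, but they do not converge on each other.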

I can only conclude that most people do not much care about Covid.

...or people don't have the resources to be able to afford Paxlovid.

...or 'have you had a positive test in the past 14 days; if so you cannot work'-style questions, especially combined with nontrivial false-positive rates, have led people to avoid getting tested in the first place.

...or people think that the cost to get tests outweighs the expected benefit.

...or people think that the cost to get Paxlovid outweighs the expected benefit.

...or people have started to discount 'do X to stop Covi...

If Omega predicts even odds on two choices and then you always pick one you've determined in advance

(I can't quite tell if this was intended to be an example of a different agent or if it was a misconstrual of the agent example I gave. I suspect the former but am not certain. If the former, ignore this.) To be clear: I meant an agent that flips a quantum coin to decide at the time of the choice. This is not determined, or determinable, in advance[1]. Omega can predict here fairly easily, but not .

...There's a big difference between those two

I think this is a terminological dispute

Fair.

and is therefore uninteresting.

Terminology is uninteresting, but important.

There is a false proof technique of the form:

- A->B

- Example purportedly showing A'

- Therefore B

- [if no-one calls them out on it, declare victory. Otherwise wait a bit.]

- No wait, the example shows A (but actually shows A'')

- [if no-one calls them out on it, declare victory. Otherwise wait a bit.]

- No wait, the example shows A (but actually shows A''')

- [if no-one calls them out on it, declare victory. Otherwise wait a bit.]

- No wait, the example shows

being continuous does not appear to actually help to resolve predictor problems, as a fixed point of 50%/50% left/right[1] is not excluded, and in this case Omega has no predictive power over the outcome [2].

If you try to use this to resolve the Newcomb problem, for instance, you'll find that an agent that simply flips a (quantum) coin to decide does not have a fixed point in , and does have a fixed point in , as expected, but said fixed point is 50%/50%... which means the Omega is wrong exactly half the time. You could replac...

These are mostly combinations of a bunch of lower-confidence arguments, which makes them difficult to expand a little. Nevertheless, I shall try.

1. I remain unconvinced of prompt exponential takeoff of an AI.

...assuming we aren't in Algorithmica[1][2]. This is a load-bearing assumption, and most of my downstream probabilities are heavily governed by P(Algorithmica) as a result.

...because compilers have gotten slower over time at compiling themselves.

...because the optimum point for the fastest 'compiler compiling itself' is not to turn on all optimizations...

For anyone else that can't read this quickly, this is what it looks like, un-reversed:

...The rain in Spain stays mainly in the get could I Jages' rain in and or rain in the quages or, rain in the and or rain in the quages or rain in the and or rain in the quages or, rain in the and or rain in the quages or rain in the and or rain in the quages or rain in the and or rain in the quages or rain in the and or rain in the quages or rain in the and or rain in the quages or rain in the and or rain in the quages or rain in the and or rain in the quages or rain in the

Ah, but perhaps your objection is that the difficulty of the AI alignment problem suggests that we do in fact need the analog of perfect zero correlation in order to succeed.

My objection is actually mostly to the example itself.

As you mention:

the idea is not to try and contain a malign AGI which is already not on our side. The plan, to the extent that there is one, is to create systems that are on our side, and apply their optimization pressure to the task of keeping the plan on-course.

Compare with the example:

...Suppose we’re designing some secure electronic

Sorry, are we talking about effects that are continuous, or effects that are discontinuous but which have probability distributions which are continuous?

I was rather assuming you meant the former, considering you said 'effects that are truly discontinuous'.

Both of your responses are the latter, not the former, assuming I am understanding correctly.

*****

You can't get around the continuity of unitary time evolution in QM with these kinds of arguments.

And now we're into the measurement problem, which far better minds than mine have spent astounding amounts of effort on and not yet resolved. Again, assuming I am understanding correctly.

I think this is interesting because our understanding of physics seems to exclude effects that are truly discontinuous.

This is not true. An electron and a positron will, or will not, annihilate. They will not half-react.

For example, real-world transistors have resistance that depends continuously on the gate voltage

This is incorrect. It depends on the # of electrons, which is a discrete value. It's just that most of the time transistors are large enough that it doesn't really matter. That being said, it's absolutely important for things like e.g. flash mem...

In any case, my world model says that an AGI should actually be able to recursively self-improve before reaching human-level intelligence. Just as you mentioned, I think the relevant intuition pump is "could I FOOM if I were an AI?" Considering the ability to tinker with my own source code and make lots of copies of myself to experiment on, I feel like the answer is "yes."

Counter-anecdote: compilers have gotten ~2x better in 20 years[1], at substantially worse compile time. This is nowhere near FOOM.

[1] Proebsting's Law gives an 18-year doubling time. The 200
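For scale, here is what an 18-year doubling time works out to, assuming smooth exponential growth (which is of course an idealization):

```python
def improvement_factor(years: float, doubling_time: float = 18.0) -> float:
    """Cumulative improvement after `years`, given a fixed doubling time."""
    return 2 ** (years / doubling_time)

twenty_year_factor = improvement_factor(20)
annual_rate = improvement_factor(1) - 1

print(f"over 20 years: {twenty_year_factor:.2f}x")
print(f"per year: {annual_rate:.1%}")
```

That is a bit over 2x in 20 years, or roughly 4% per year, in line with the '~2x better in 20 years' figure above.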

It may be worthwhile to extend this to doctors who aren't perfect - that is, are only correct most of the time - and see what happens.

Interesting.

If you're stating that generic intelligence was not likely simulated, but generic intelligence in our situation was likely simulated...

Doesn't that fall afoul of the mediocrity principle applied to generic intelligence overall?

(As an aside, this does somewhat conflate 'intelligence' and 'computation'; I am assuming that intelligence requires at least some non-zero amount of computation. It's good to make this assumption explicit I suppose.)