I work primarily on AI Alignment. Scroll down to my pinned Shortform for an idea of my current work and who I'd like to collaborate with.

Website: https://jacquesthibodeau.com

Twitter: https://twitter.com/JacquesThibs

GitHub: https://github.com/JayThibs

Comments

I thought Superalignment was a positive bet by OpenAI, and I was happy when they committed to putting 20% of their current compute (at the time) towards it. I stopped thinking about that kind of approach because OAI already had competent people working on it. Several of them are now gone.

It seems increasingly likely that the entire effort will dissolve. If so, OAI has now made the business decision to invest its capital in maintaining its moat in the AGI race rather than in basic safety science. This is bad and is likely another early sign of what's to come.

I think the research that was done by the Superalignment team should continue to happen outside of OpenAI and, if governments have a lot of capital to allocate, they should figure out a way to provide compute to continue those efforts. Or maybe there's a better way forward. But I think it would be pretty bad if all the talent devoted to the project never got truly leveraged into something impactful.

So, you go to the government and lobby. Except you never intended to help the government get involved in some kind of slowdown or pause. Your intent was to use this entire story as a smokescreen for getting rid of those who didn’t align with you, and to lobby the government in such a way that it doesn’t think it’s a big deal that your safety researchers are resigning.

You were never the reasonable CEO, and now you have complete power.

(This is the tale of a potentially reasonable CEO of the leading AGI company, not the one we have in the real world. Written after a conversation with @jdp.)

You’re the CEO of the leading AGI company. You start to think that your moat is not as big as it once was. You need more compute and need to start accelerating to give yourself a bigger lead; otherwise, this will be bad for business.

You start to look around for compute and realize that 20% of it has been handed off to the Superalignment team (and you even made a public commitment!). You decide to take their compute away to maintain a strong lead in the AGI race, expecting there will be backlash.

Your plan is to lobby the government and tell it that AGI race dynamics are too intense at the moment and that you were forced to make a tough call for the business. You tell the government that it’s best to put heavy restrictions on AGI development; otherwise, your company will not be able to afford to subsidize basic research in alignment.

You give them a plan that you think they should follow if they want AGI to be developed safely and for companies to invest in basic research.

You tell your top employees this plan, but they have a hard time believing you, since they feel you lied about your public commitment to give them 20% of current compute. You didn’t actually lie, or at least not intentionally. You just thought the moat was bigger, and when you realized it wasn’t, you had to make a business decision. Many things have happened since that commitment.

Anyway, your safety researchers are not happy about this at all and decide to resign.

To be continued…

For anyone interested in Natural Abstractions type research: https://arxiv.org/abs/2405.07987

Claude summary:

Key points of "The Platonic Representation Hypothesis" paper:

- Neural networks trained on different objectives, architectures, and modalities are converging to similar representations of the world as they scale up in size and capabilities.
- This convergence is driven by the shared structure of the underlying reality generating the data, which acts as an attractor for the learned representations.
- Scaling up model size, data quantity, and task diversity leads to representations that capture more information about the underlying reality, increasing convergence.
- Contrastive learning objectives in particular lead to representations that capture the pointwise mutual information (PMI) of the joint distribution over observed events.
- This convergence has implications for enhanced generalization, sample efficiency, and knowledge transfer as models scale, as well as reduced bias and hallucination.
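For readers unfamiliar with the PMI point above: PMI(x, y) = log P(x, y) / (P(x) P(y)), which is positive when two events co-occur more often than chance and negative when they co-occur less often. Below is a minimal sketch that estimates PMI from observed pairs. The event names and counts are hypothetical, made up for illustration; this only shows what PMI is, not the paper's method for relating it to learned representations.

```python
import math
from collections import Counter


def pmi_table(pairs):
    """Estimate PMI(x, y) = log(P(x, y) / (P(x) * P(y))) from observed (x, y) pairs."""
    n = len(pairs)
    joint = Counter(pairs)               # counts of each (x, y) co-occurrence
    px = Counter(x for x, _ in pairs)    # marginal counts for x
    py = Counter(y for _, y in pairs)    # marginal counts for y
    return {
        (x, y): math.log((c / n) / ((px[x] / n) * (py[y] / n)))
        for (x, y), c in joint.items()
    }


# Hypothetical co-occurrences of an image event with a caption word.
pairs = (
    [("dog_img", "dog")] * 3
    + [("cat_img", "cat")] * 3
    + [("dog_img", "cat")]
    + [("cat_img", "dog")]
)

# Matched pairs get positive PMI; mismatched pairs get negative PMI.
for pair, value in pmi_table(pairs).items():
    print(pair, round(value, 3))
```

Matched image/word pairs end up with positive PMI because they co-occur more often than their marginals alone would predict.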

Relevance to AI alignment:

- Convergent representations shaped by the structure of reality could lead to more reliable and robust AI systems that are better anchored to the real world.
- If AI systems are capturing the true structure of the world, it increases the chances that their objectives, world models, and behaviors are aligned with reality rather than being arbitrarily alien or uninterpretable.
- Shared representations across AI systems could make it easier to understand, compare, and control their behavior, rather than dealing with arbitrary black boxes. This enhanced transparency is important for alignment.
- The hypothesis implies that scale leads to more general, flexible and uni-modal systems. Generality is key for advanced AI systems we want to be aligned.

It’s a free model. It’s much more likely that they have a paid, big-boy model coming soon, imo.

If the presentation yesterday didn't make this clear, the ChatGPT Mac app with the GPT-4o model is already available. As soon as I logged in to the ChatGPT website on my Mac, I got a link to download it.

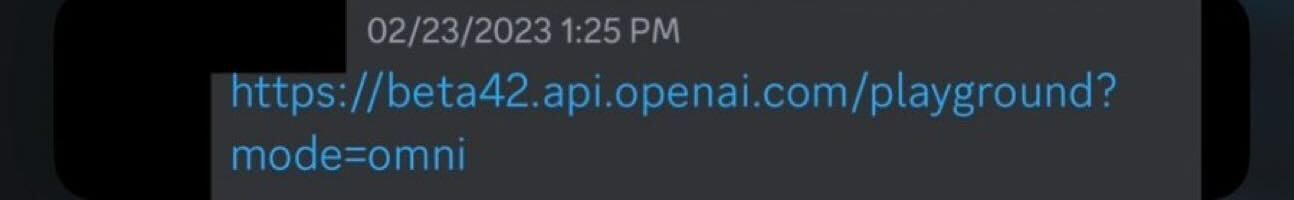

Oh, and it's quite likely that the first iteration of this model finished training in February 2023. Jimmy Apples (a consistently correct OpenAI leaker) shared this screenshot of an API link to an "omni" model (which was potentially codenamed Gobi last year):

I took this post down, given that some people have been downvoting it heavily.

Writing my thoughts here as a retrospective:

I think one reason it got downvoted is that I used Claude as part of the writing process and the result was too disjointed/obvious (because I wanted to rush the post out). Still, I didn't think it was that bad, and I did try to point out that it was speculative in the parts that mattered. One comment specifically said that a lot of it felt like it was written by an LLM, but I didn't think I relied on Claude that much, and I rewrote the parts that included LLM writing. I also don't feel as strongly about treating this as a reason to dislike a piece of writing, though I understand the current concern about LLM slop.

However, I wonder if some people downvoted it because they see it as infohazardous. My goal was to determine whether photonic computing would become a big factor at some point (which might be relevant from a forecasting and governance perspective) and to put out something quick for discussion rather than spend much longer researching and rewriting. I stood by what I shared, but I may need to adjust my expectations about what people consider worth sharing on LessWrong.

I shared the following as a bio for EAG Bay Area 2024. I'm sharing it here in case it reaches someone who wants to chat or collaborate.

Hey! I'm Jacques. I'm an independent technical alignment researcher with a background in physics and experience in government (social innovation, strategic foresight, mental health and energy regulation). Link to Swapcard profile. Twitter/X.

CURRENT WORK

TOPICS TO CHAT ABOUT

POTENTIAL COLLABORATIONS

TYPES OF PEOPLE I'D LIKE TO COLLABORATE WITH