Condensed Less Wrong Wisdom: Yudkowsky Edition, Part I

Mysterious Answers to Mysterious Questions

- Ask "What experiences do I anticipate?", not "What statements do I believe?"

- Your strength as a rationalist is your ability to be more confused by fiction than by reality. If you are equally good at explaining any outcome, you have zero knowledge.

- The strength of a model is not what it can explain, but what it can't, for only prohibitions constrain anticipation.

- There's nothing wrong with focusing your mind, narrowing your categories, excluding possibilities, and sharpening your propositions.

- For every expectation of evidence, there is an equal and opposite expectation of counterevidence.

- You can only ever seek evidence to test a theory, not to confirm it.

- Write down your predictions in advance.

- Hindsight bias devalues science: we need to make a conscious effort to be shocked enough.

- Be consciously aware of the difference between an explanation and a password.

- Fake explanations don't feel fake. That's what makes them dangerous.

- What distinguishes a semantic stopsign is failure to consider the obvious next question.

- I
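The bullet about expectations of evidence is the rule Less Wrong calls conservation of expected evidence: P(H) = P(H|E)P(E) + P(H|¬E)P(¬E), so the expected posterior always equals the prior. Below is a minimal Python sketch using exact fractions. The helper name `expected_posterior` is hypothetical, and the numbers are made up for illustration: a prior of 1/3 and likelihoods of 9/10 and 3/10 do not come from the source.

```python
from fractions import Fraction


def expected_posterior(prior, p_e_given_h, p_e_given_not_h):
    """Hypothetical helper: for a binary hypothesis H and observation E, return
    (P(E), P(H|E), P(H|not E), expected posterior) via Bayes' theorem."""
    p_e = p_e_given_h * prior + p_e_given_not_h * (1 - prior)
    if not 0 < p_e < 1:
        raise ValueError("E must be neither certain nor impossible")
    post_e = p_e_given_h * prior / p_e                  # P(H|E)
    post_not_e = (1 - p_e_given_h) * prior / (1 - p_e)  # P(H|not E)
    expected = post_e * p_e + post_not_e * (1 - p_e)    # always equals the prior
    return p_e, post_e, post_not_e, expected


# Illustrative numbers only (assumed, not from the source).
prior = Fraction(1, 3)
p_e, post_e, post_not_e, expected = expected_posterior(prior, Fraction(9, 10), Fraction(3, 10))
print(post_e, post_not_e, expected)  # 3/5 1/15 1/3
```

With these numbers, seeing E raises P(H) by 4/15 and happens half the time. Not seeing E lowers P(H) by the same 4/15 and happens the other half. The expected shift is therefore exactly zero.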

Would anyone else like to see a new demographic survey done? I'm interested in how LW's userbase has changed since the last one (other than, well, me).

OkCupid thread, anyone?

I was thinking that those of us that aren't shy could share our OkCupid profiles for critique from people who know better. (Not that we have to accept the critiques as valid, but this is an area where it'd be good to have others' opinions anyway.)

If anyone wants to get the ball rolling, post a link to your profile and hopefully someone will offer a suggestion (or a compliment).

Also, I bet cross-sexual-preference critique would work best, which for most of us means gals critiquing guys and guys critiquing gals. But I realize the LW gender skew limits that.

I was interested to see what discussion this post would generate, but I'm a little disappointed with the results. It looks like further evidence that instrumental rationality is hard and that the average LessWronger is not significantly better at it than the average person without a particular interest in rationality.

I'm going to throw out a bunch of suggestions for things that I think a rationalist should at least consider trying when approaching this specific problem as an exercise in instrumental rationality. I anticipate that people will immediately think of reasons why these ideas wouldn't work, or why they wouldn't want to do them even if they did. Many of these will be legitimate criticisms, but if you choose to comment along these lines, please honestly ask yourself whether these are ideas you had already considered and rejected, or whether your objections are in part confabulation.

One obvious reason for not trying any of these things is that the issue is just not that important to you and so doesn't justify the effort, but if you feel that way, ask yourself how you would approach the problem if it were that important to you. I haven't tried all these things myself. I rejected som...

Presumably people who are 'not photogenic' are not made of some different type of material that reacts differently to light than photogenic people. The problem must either be a lack of good quality photographs or an issue with uncomfortable body language when being photographed.

The camera also adds weight (or at least visual cues that make it look like it adds weight) and messes with color. My best friend just got married and had lots of photos taken of her and her husband. He looks fine because he starts out skinny as a rail and his coloration works in the photos. But in the very same photos, she develops a blotchy complexion and her hair color looks unnatural and gross. And while she's not fat, the extra ten pounds on the glossy photo nudge her a little that way. Her body language looks fine in photos (and if she were tensing up, wouldn't she also look tense on video? Video of her looks much better), and the quality of the photographer or camera can't be the issue, because in the very same photograph her husband looks exactly like himself in real life and she looks weird.

I think that photogenic people and performers, apart from being physically attractive, are really good at "seeing" themselves.

I'm not sure I agree with this--or rather, I'm not sure this is the best model of what's going on. My impression has always been (and this fits with my photo-taking advice elsewhere in this thread) that you don't learn to see how you look when you're doing something right--you learn how it feels to be in the correct position to do it. That is, someone who's watching you might say "your back is curved, straighten it," and you can straighten it, but you still don't see what they see. You just find out what it feels like to have a straight back, and can try for that again later. I've never played golf, but I'd be surprised if good golfers are thinking about what they look like when they're putting. I'd expect them instead to recognize the feeling of being in the correct posture from having done it before.

I've never used any dating websites, but people who care about that sort of thing should note that the advice they'll get this way may have a very low, or even negative correlation with what actually works. I don't mean to say that people will consciously write misleading things -- just that, for various reasons, they may not work with a realistic idea of the thought process of those who are supposed to be attracted by the profile in question. To get useful advice, the best way to go is to ask someone of the same sex (and preferences) who has successfully used some such site to share their insight.

the best way to go is to ask someone of the same sex (and preferences)

Definitely. Guys are often advised to focus almost exclusively on advice from other men rather than women until they reach a level of understanding that lets them reliably distinguish between people talking about what the world 'should' be like and what the world is. Having a certain level of social presence also makes it more likely that people will refrain from trying to foist the rules for boys on you and will actually give honest assessments of their preferences.

I don't know who erisiantaoist is, but I cannot believe he actually started his profile with the "I am slightly more committed to this group’s welfare..." quote. If I were at all gay I would date him in a second just for that.

...although I am generally surprised at how anxious people in this group are to signal transhuman weirdness, especially transhuman weirdness that only one in a few thousand people would understand or find remotely sane. Do you really have access to such a high quality dating pool that you're looking for people who will be impressed instead of confused when you name-drop AIXI and state your intention to live forever?

In my case I'm not looking for a transhumanist match: there are lots of really smart, interesting girls out there that haven't heard of transhumanism, and I have a lot more things to talk about than ethics. (Seriously, who talks about bioconservatism on the first date? Once a girl said on a first date, "There are so many books, it's the only thing that makes me really sad that I'm going to die." And I was like, "Oh, don't worry about that. We're not that far off from solving death, you'll be fine. I personally work on the problem; trust me, you'll have trillions of years to read your books." I was still able to get a second date.) I just do it because I think it's hilarious that I can come across as insane and still get girls by being relatively laconic. Social normalcy screens off epistemic oddity, to an extent. I'm not socially normal but I do an okay job at emulating it most of the time.

So those that would take inspiration from my profile, note that the nerdy parts aren't optimized for success. At all. I just like typing AIXI. AIXI AIXI AIXI AIXI AIXI...

Maybe they're just sick of half-heartedly dating folks they don't click with.

I had a brief relationship with a nice boy who had never heard of most of the things I'm interested in -- he wasn't an intellectual type. Nothing against him, but it was surprisingly disappointing. After that, I thought, "Okay, in the long run I'm going to want a deeper connection than that."

Maybe they're just sick of half-heartedly dating folks they don't click with.

The first few versions of my profile were geared to show off how geeky and smart I was. This connected me to people who spent a lot of time playing tabletop roleplaying games, reading fantasy novels, and making pop culture references to approved geeky television shows, none of which are things which interest me particularly.

Eventually I realized that I am not actually just popped out of the stereotypical modern geek mold, and it was lazy, inaccurate, and ineffective to act like I was. Since then I've started doing the much harder thing of trying to pin down my specific traits and tastes, instead of taking the party line or applying a genre label that lets people assume the details. In that way, OKC has actually been a big force in driving me to understand who I am, what I want, and what really matters to me. A bit silly, but I'll take it.

Here's a question I've been pondering a lot: What are good questions to use to actually learn something about a person? (If you suggest "what kind of music do you listen to" ... you're fired.) If they're not the same for everyone, and I expect that they aren't, how do you find them?

What are good questions to use to actually learn something about a person?

"What's something you believe, that you'd be surprised if I believed too?"

(I've yet to try this in a romantic context, but when meeting new friends it usually leads to a good conversation, all the more so for ruling out first-order contrarian beliefs that they'd expect me to share.)

One thing I've learned from having mostly male friends who sometimes complain about their dating lives is that no matter how much of an asshole I feel like when I turn someone down, it's much less than the asshole I'm actually being if I don't tell them.

Strongly agree in general...

This is why I always respond to new messages on OKC that aren't outright rude, offensive, or all textspeak (and even then I sometimes do).

I might be unusual here, but I actually consider online dating to be kind of a special case. My usual strategy is to message anyone I'd want to go on a date with, and then forget I sent the message. This means if my mailbox turns pink, it's a pleasant surprise, and there might be a date in the offing. Finding instead a polite rejection is a bit disappointing.

That is, IRL, if we're friends/acquaintances, and I'm politely/vaguely suggesting that I'd like us to date, and you're picking up on that, it is totally good for you to shoot me down, so that I can quit wasting mental/emotional energy. Online, where things are more explicit, the only waste of mental/emotional energy is logging in to find a message, only to discover it's a rejection.

Great profile!

witty, +5

sci-fi and singularity geek, +5

draws a webcomic, +5

wants kids, +2

degree in worldbuilding, awesome, +10

cooking, +3

vegetarian, -2 :(

likes fancy pretentious cheese, +1

I'm too old, live on another continent, and my wife is next to me right now, -50

By the power vested in me by the cult of CEV, I hereby grant you permission to organize LW meetups.

"Also, I have arranged for my body to be cryonically preserved on my death, and I would prefer for my partner to assist me in that goal. At the very least, she would need to promise that she will not interfere with my arrangements."

I would actually recommend against saying that, even though it may be factually true. I would not attempt to dissuade my man from cryonics if he were interested, but if I saw this in an OkCupid profile, I would think, "This guy is ALL ABOUT transhumanism and probably boring." Seeing as I'm more used to the idea than most women, what turns me off will probably turn off lots more potentially compatible readers.

Edit: I agree with the above that you don't need to go into so much detail about the programming, though.

I recently mentioned Dempster-Shafer evidence theory in another thread. I admit I was surprised to get no replies to that comment.

Is there any interest in a top-level post introducing DST? Bayesianism seems to be cited as a god of reasoning around here, but DST is strictly more powerful, since setting uncertainty to 0 in all DST formulas results in answers identical to what Bayesian probability would give. I would like to introduce Dempster-Shafer here, but only if the audience will find it worthwhile.
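Here is one way to make the reduction claim concrete. In Dempster-Shafer theory, a mass function assigns weight to sets of hypotheses. Mass on a non-singleton set, such as the whole frame, represents uncommitted uncertainty. I read "setting uncertainty to 0" as putting all mass on singleton hypotheses; that interpretation is mine, not stated above. Under it, Dempster's rule of combination reduces to a normalized pointwise product, which is the same arithmetic as combining independent evidence with Bayes' rule. Below is a minimal Python sketch. The function names `combine` and `bayes_product` are hypothetical, and the masses are illustrative.

```python
from fractions import Fraction
from itertools import product


def combine(m1, m2):
    """Dempster's rule of combination for two mass functions.

    Each mass function maps a frozenset of hypotheses (a focal element) to its mass.
    Products landing on the empty set count as conflict and are normalized away.
    """
    raw = {}
    conflict = Fraction(0)
    for (s1, w1), (s2, w2) in product(m1.items(), m2.items()):
        overlap = s1 & s2
        if overlap:
            raw[overlap] = raw.get(overlap, Fraction(0)) + w1 * w2
        else:
            conflict += w1 * w2
    if conflict == 1:
        raise ValueError("sources are in total conflict")
    return {s: w / (1 - conflict) for s, w in raw.items()}


def bayes_product(p1, p2):
    """Combine two distributions over the same outcomes by a normalized pointwise product."""
    raw = {k: p1[k] * p2[k] for k in p1}
    total = sum(raw.values())
    return {k: v / total for k, v in raw.items()}


A, B = frozenset({"a"}), frozenset({"b"})
# All mass on singletons, i.e. no uncommitted uncertainty (illustrative numbers).
m1 = {A: Fraction(3, 5), B: Fraction(2, 5)}
m2 = {A: Fraction(3, 10), B: Fraction(7, 10)}
dst = combine(m1, m2)
bayes = bayes_product({"a": Fraction(3, 5), "b": Fraction(2, 5)},
                      {"a": Fraction(3, 10), "b": Fraction(7, 10)})
print(dst[A], dst[B])          # 9/23 14/23
print(bayes["a"], bayes["b"])  # 9/23 14/23
```

The two frameworks diverge once some mass sits on the whole frame, for example `{A: Fraction(1, 2), A | B: Fraction(1, 2)}`. A plain probability distribution has no direct way to express "half of my belief is uncommitted."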

I'm considering writing a post on anapana meditation (sometimes also known as mindfulness meditation or breath meditation) that gives instructions, tips for practicing, and details costs and benefits. I would probably be drawing primarily from personal experience (but I'd still appreciate any references to relevant studies). At the end of the post I would invite others to use my knowledge to give this form of meditation a trial. Is this something people are interested in? For those who are interested, is there anything you would like to see addressed?

My meditation history: I learned anapana and vipassana meditation from a 10-day course at one of these centers in 2006. After a year of false starts I was able to keep up a daily practice of 1 hr from 2007-2008 and 2 hrs from 2008 until the present. During that period I also took an additional 4 (or 5?) 10-day courses.

So, hello. Has anyone here ever experienced spontaneous and sudden evaporation of akrasia altogether? I'm asking mostly because this is exactly what happened to me, on 26th of August this year.

I didn't do anything special. I had tried taking cold showers every now and then for a week before that, and had started taking some nutrient pills around that time; that's pretty much it. Then, that morning, I suddenly started working on the projects I had planned and thought of.

That may not sound all that dramatic, but I haven't introduced myself yet. I have been my whole life a rock-solid underachiever. After elementary school, doing homework was not enforced, so I gradually stopped doing it. University doesn't care if you participate in lectures, so I didn't. All my academic effort happens roughly one day before any given exam. It's not that I didn't like the subject I study, or that I didn't want to do it. I just couldn't. There was a mental block that totally prevented me from using my free time for any of my projects, things I wanted to work on.

So that day, I spontaneously figured that I gotta study one thing in order to be prepared for the next academic year. So I did. I figured my room was...

A request for help: I feel like I'm finally mastering my akrasia at work, but I have yet to find a technique to remove a pre-established Ugh Field. In this case, I have nearly complete drafts for two papers that I wrote as part of my Ph.D. thesis. I have a strong stress reaction to just thinking about opening the files (ETA: it's thinking about doing the work that causes the reaction; opening the files is just the first step in actually doing the work), but I want to want to whip them into shape and submit them for publication.

Less Wrong, other-optimize me!

Ask someone else to sit down together with you at the computer, open the files, and start reading and discussing them with you. Eventually, start editing them together. Tell your collaborator specifically to hang around for a while and disregard your (possible) requests to stop, until the work is well underway and you can continue with the flow.

This would of course require a significant commitment on the part of the other person, but if this is really important, a good friend should be willing to help you, and you might even consider paying someone less close for their time and effort.

If you need to whip them into shape, you're probably not happy with them. If you're anything like me, showing them to someone else is probably the last thing you want to do.

Solution: you owe me one of the papers by the next open thread. If you don't work on it, you'll be sending me whatever you're so embarrassed about. If you can fix it in time, you won't have to worry. I don't have much time right now, but I will read it, so beware.

What would you do to assess the advisability of taking antidepressants?

I'm trying to advise someone who is receiving conflicting advice; there appears to be plenty of controversy in this area and many factors confound assessing the evidence. Before I point to specifics on evidence that might influence the decision one way or the other I thought I'd ask in the most general way I can. I hope using the Open Thread to play "ask a rationalist" like this isn't a bad thing!

In my last post on Health Optimization, one commenter inadvertently brought up a topic which I find interesting, although it is highly controversial: HIV/AIDS skepticism and rationality in science.

The particular part of that which I am interested in is proper levels of uncertainty and rationality errors in medical science.

I have some skepticism about the HIV/AIDS theory, perhaps on the level of, say, 20-30%. More concretely, I would roughly say I am only about 70% confident that HIV is the sole cause of AIDS, or 70% confident that the mainstream theory of HIV/AIDS is solid.

Most of that doubt comes from one particular flaw in the current mainstream theory which I find particularly damning.

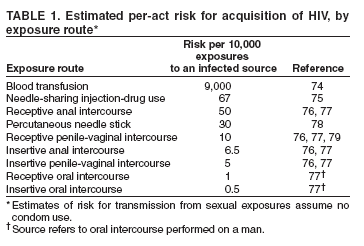

It is claimed that HIV is a sexually transmitted disease. However, the typical estimates of the transmission rate are extremely low:

- 0.05% / 0.1% per insertive/receptive P/V sex act
- 0.065% / 0.5% per insertive/receptive P/A sex act

This data is from Wikipedia; it lists a single paper as a source, but from what I recall this matches the official statistics from the CDC and whatnot.
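Per-act figures like these are not directly comparable to per-partner or per-exposure-period figures. Assume every act carries the same independent probability p; that simplifying assumption is mine, not the source's, and is not a claim about real transmission dynamics. Then the chance of at least one transmission over n acts is 1 - (1 - p)^n. Below is a minimal Python sketch; `cumulative_risk` is a hypothetical helper name.

```python
def cumulative_risk(p_per_act, n_acts):
    """Probability of at least one transmission in n_acts acts, assuming each act
    independently transmits with the same probability p_per_act (a simplification)."""
    if not 0 <= p_per_act <= 1 or n_acts < 0:
        raise ValueError("need 0 <= p_per_act <= 1 and n_acts >= 0")
    return 1 - (1 - p_per_act) ** n_acts


# 0.1% per act (the receptive P/V figure quoted above) over 100 and 1000 acts.
for n in (100, 1000):
    print(n, round(cumulative_risk(0.001, n), 3))
```

Under that independence assumption, 0.1% per act compounds to about 9.5% over 100 acts and about 63% over 1000.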

For comparison, from the Wikipedia entry on gonorrhea, a conventional STD:

...Men have a 20% risk of getting th

A few years ago, I entered an online discussion with some outspoken HIV-AIDS skeptics who supported the theories of Peter Duesberg, and in the course of that debate, I read quite a bit of literature on the subject. My ultimate conclusion was that the HIV-AIDS link has been established beyond reasonable doubt after all; the entire web of evidence just seems too strong. For a good overview, I recommend the articles on the topic published in Science magazine in December 1994:

http://www.sciencemag.org/feature/data/cohen/cohen.dtl

Regarding your concerns about transmission probabilities, in Western countries, AIDS as an STD has indeed never been more than a marginal phenomenon among the heterosexual population. (Just think of the striking fact that, to my knowledge, in the West there has never been a catastrophic AIDS epidemic among female prostitutes, and philandering rock stars who had sex with thousands of groupies in the eighties also managed to avoid it.) As much as it’s fashionable to speak of AIDS as an “equal opportunity” disease, it’s clear that the principal mechanism of its sexual transmission in the First World has been sex between men, because of both the level of promis...

Although that population would necessarily first acquire every other STD known to man, more or less.

...a massive baby epidemic...

Many non-AIDS STIs, and pregnancy, are curable.

Note that spreading the idea that the transmission rate of AIDS is low has negative utility, regardless of whether it's true or not, since it would encourage dangerous behavior.

This is untrue. Consider a similar claim: "spreading the idea that very few passengers on planes are killed by terrorists has negative utility, regardless of whether it is true or not, since it would encourage dangerous behavior."

Informing people of the true risks of any activity will not in general have negative utility. If you believe a particular case is an exception you need to explain in detail why you believe this to be so.

I find denialism in all forms simply fascinating. I wonder if you could indulge my curiosity.

I will indulge your curiosity in a moment, but I'm curious why you use the politically loaded term "denialism".

As far as I can tell, its sole purpose is to derail rational discussion by associating one's opponent with a morally perverse stance, specifically invoking the association of Holocaust Denialism. Politics is the mind-killer. In that regard, I have just been spectating your thread with Vladimir M, and I concur wholeheartedly with his well-written related post.

There is no rational use of that appellation, so please desist from that entire mode of thought.

So, I have to wonder, what do you think is wrong with the cognitive apparatus of all those medical and research professionals who believe that HIV == AIDS and is an STD?

Firstly, I don't think nearly as many quality researchers believe HIV == AIDS as you claim, at least not internally. The theory has gone well past the level of political mindkill and into the territory of an institutionalized religion, where skeptics and detractors are publicly shamed as evil people. I hope we can avoid that here. Actually, I...

The Google hits you mention are just websites, not research papers, so they're not relevant. There is no reason a priori to view the ~0.3% per coital act transmission rate as a lower bound; it could just as easily be an upper bound. You need to show considerably more evidence for that point.

If I can take the liberty of butting in...

The data on Wikipedia comes from the official data from the CDC[1], which in turn comes from a compilation of numerous studies. I take that to be the 'best' data from the majority position, and it overrides any other lesser studies for a variety of reasons.

Here's the table of data I believe you're referring to:

It cites references 76, 77 & 79, all of which turn out to be publicly available online. That's good, because now I can check the validity of Perplexed's claim that the studies backing your CDC data used samples of relatively healthy, well-off people who lack some risk factors.

I took ref. 76 first. It reports data from the "European Study Group on Heterosexual Transmission of HIV", which recruited 563 HIV+ people, and their opposite-sex partners, from clinics and other health centres in 9 European countries. (Potential sampling bias no. 1: HI...

A man committed suicide in Harvard Yard a week ago, having first uploaded a 1905-page book to the web. Curiously (because he was, like myself, named Mitchell), I first heard about this on the morning I went to post my make-a-deal article; it led me to trim away the more emotive parts of my own, rather shorter message.

Anyway, I have been examining the book and it's very intriguing. If I can sum up his worldview, it is that human values have an evolutionary origin, but Jewish and Anglo-Saxon culture, for specific historical reasons, developed values which biocentrically appear to be anti-evolutionary, but which in fact ultimately issue in the technological, postbiological world of the Singularity. (Eliezer is mentioned a few times, mostly as an opposite extreme to Hugo DeGaris, with Heisman, the author of the book, proposing a synthesis.) Heisman's suicide, meanwhile, is anticipated in the book, where he says that willing death is a way to will truth in a meaningless universe. He equates materialism with objectivity with meaninglessness and nihilism; he considers the desire to live as the ultimate barrier to objectivity, because objectivity implies that life is no better than death,...

The comments on the blog that you link to mention Ted Kaczynski. This reminded me of my own comment:

...Think about the date: 1995. The Kaczynski Manifesto marks the end of an era. Nobody today would start a terrorist campaign aimed at getting his manifesto published in a newspaper. It is not just that newspapers are dying and that no-one believes what they read in newspapers anymore, especially not opinion pieces and manifestos.

Nowadays you put your manifesto on a website and discuss it on Reddit. If it is well written and provocative, it will be discussed and pulled to pieces. The delusion that did for Kaczynski, and his victims, was that if only he could get the message out, it would change the world. Nowadays you can get your message out, if not to a mass audience, at least to curious intellectuals. You get to the stage Kaczynski never reached, of talking it over and finding that others are not persuaded and have their own stubbornly held views, very different from your own.

1995, the very end of the solipsistic era. In the new internet era there is no hiding from the fact that others read your manifesto, find some of it true, some of it false, some of it confused, and then ask y

A piece of Singularity-related fiction with a theory for your evaluation: The Demiurge's Elder Brother

I don't know what to make of this:

...The man who took his own life on Harvard's campus Saturday left a 1,904-page suicide note online.

According to the Harvard Crimson, Mitchell Heisman wrote "Suicide Note," posted at http://suicidenote.info, while living in an apartment near the school. The note is a "sprawling series of arguments that touch upon historical, religious and nihilist themes," his mother, Lonni Heisman, told the Crimson. She said her son would have wanted people to know about his work.

The complex note, di

Mitchell Heisman starts off by saying:

If my hypothesis is correct, this work will be repressed. It should not be surprising if justice is not done to the evidence presented here. It should not be unexpected that these arguments will not be given a fair hearing. It is not unreasonable to think that this work will not be judged on its merits.

This is obviously false - it's up on the internet, it's gotten some press coverage, it quite obviously has not been repressed. But he is right that it won't be judged on its merits, because it's so long that reading it represents a major time commitment, and his suicide taints it with an air of craziness; together, these ensure that very few people will do more than lightly skim it.

The sad thing is, if this guy had simply talked to others as he went along - published his writing a chapter at a time on a blog, or something - he probably could've made a real contribution, with a real impact. Instead, he seems to have gone for 1904 pages with no feedback with which to correct misconceptions, and the result is that he went seriously off the rails.

I just skimmed a few random pages of the book, and ran into this stunning passage:

...Marx’s improbable claim that economic-material development will ultimately trump the need for elite human leaders may turn out to be a point on which he was right. What Marx failed to anticipate is that capitalism is driving economic-technological evolution towards the development of artificial intelligence. The advent of greater-than-human artificial intelligence is the decisive piece of the puzzle that Marx failed to account for. Not the working class [as Marx believed - V.], and not a human elite [as Lenin believed - V.], but superhuman intelligent machines may provide the conditions for “revolution”.

[...]

If this is correct, the first signs of evidence may be unprecedented levels of permanent unemployment as automation increasingly replaces human workers. While this development may begin to require a new form of socialism to sustain demand, artificial intelligence will ultimately provide an alternative to “the dictatorship of the proletariat.” [...] The creation of an artificial intelligence trillions of times greater than all human intelligence combined is not simply the advent of another shiny

So, given that we've got a high concentration of technical people around here, maybe someone can answer this for me:

Could it ever be possible to do some kind of counter-data mining?

Everybody has some publicly-available info on the internet -- information that, in general, we actually want to be publicly available. I have an online presence, sometimes under my real name and sometimes under aliases, and I wouldn't want to change that.

But data mining is, of course, a potential privacy nightmare. There are algorithms that can tell if you're gay from your f...

Something interesting I've noticed about myself. Recently I've been worrying that the reason I'm an atheist, and that my mindset is often something akin to "science as a way to see the world, not just a discipline to be studied", is less that I've found good reason to accept the former as fact and the latter as a good mindset, and more a socialization effect of being around Less Wrong. Meaning, even as a semi-lurker with 48 karma total who's made no comment above 9 karma (as of this one), I'm wondering if my thoughts are less due to my own personal...

We often tell people to read the Sequences, but perhaps some people are better suited to jumping right into A Technical Explanation. Rereading it for the first time in a while, I was surprised at how much ground it covers, and how dense it is compared to the Sequences.

That said, in my experience dense material is harder to learn, even if clearly explained. Naturally and cleverly repetitive material is best suited for learning. So in conclusion, uh, I dunno.

Prediction: a year after HPatMoR is finished, newbies will be as reluctant to read through it as they are reluctant to read through the Sequences now.

This technology, IMO, is a bridge to getting serious scientists involved in cryonics research.

Retraction Watch is a fairly new blog that looks at retractions of scientific papers. It offers interesting insights and perspectives into the sociology of science, especially when dealing with fraud. It is run by the same fellow who runs Embargo Watch, which examines the slightly drier subject of how embargoes on papers impact science.

Consider this problem: given positive integers a,b,c, are there positive integers x,y such that a*x*x+b*y=c? Amazingly, it is NP-complete. Can anyone explain to me how it manages to be complete?
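Below is a partial answer, sketched in Python. It covers only the easy half of NP-completeness, membership in NP. A proposed pair (x, y) can be checked with a couple of multiplications and an addition, in time polynomial in the number of digits of a, b, c. The obvious search, by contrast, tries every x with a*x*x < c, which takes roughly sqrt(c/a) steps. That is polynomial in the value of c but exponential in its length in digits. The hard half, showing that every NP problem reduces to this one, needs a separate reduction that this sketch does not attempt. `verify` and `solve_brute_force` are hypothetical names.

```python
from math import isqrt


def verify(a, b, c, x, y):
    """Certificate check: runs in time polynomial in the bit length of the inputs."""
    return x > 0 and y > 0 and a * x * x + b * y == c


def solve_brute_force(a, b, c):
    """Search every x with a*x*x < c (so that y can be positive).

    Takes about sqrt(c/a) iterations: fine for small c, but exponential
    in the number of digits of c.
    """
    for x in range(1, isqrt(c // a) + 1):
        rest = c - a * x * x
        if rest > 0 and rest % b == 0:
            return x, rest // b
    return None


print(solve_brute_force(2, 3, 11))  # (1, 3), since 2*1*1 + 3*3 == 11
print(solve_brute_force(1, 4, 6))   # None
```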

Error finding: I strongly suspect that people are better at finding errors if they know there is an error.

For example, suppose we did an experiment where we randomized computer programmers into two groups. Both groups are given computer code and asked to try to find a mistake. The first group is told that there is definitely one coding error. The second group is told that there might be an error, but there also might not be one. My guess is that, even if you give both groups the same amount of time to look, group 1 would have a higher error identification success rate.
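If someone ran the experiment described above, one standard way to compare the two groups' error-identification rates is a two-proportion z-test. This is a normal approximation; with small groups, an exact test such as Fisher's would be safer. Below is a minimal Python sketch of the random assignment and the test. The function names and any counts you plug in are hypothetical, not data from a real study.

```python
import random
from math import erfc, sqrt


def randomize(participants, seed=None):
    """Shuffle participants and split them into two groups of (nearly) equal size."""
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


def two_proportion_z_test(found_1, n_1, found_2, n_2):
    """Compare success rates found_1/n_1 and found_2/n_2 of two independent groups.

    Returns (z, two-sided p-value) using the pooled-proportion normal approximation.
    """
    p1, p2 = found_1 / n_1, found_2 / n_2
    pooled = (found_1 + found_2) / (n_1 + n_2)
    se = sqrt(pooled * (1 - pooled) * (1 / n_1 + 1 / n_2))
    if se == 0:
        raise ValueError("all or no participants succeeded; the test is undefined")
    z = (p1 - p2) / se
    return z, erfc(abs(z) / sqrt(2))  # erfc(|z|/sqrt 2) == 2 * (1 - Phi(|z|))


# Hypothetical participant IDs; "told there is an error" vs "told there might be".
group_told_error, group_told_maybe = randomize(range(40), seed=1)
```

As a usage example with hypothetical counts, `two_proportion_z_test(60, 100, 40, 100)` gives a z of about 2.83 and a two-sided p of about 0.005.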

Does anyone here know of a reference to a study that has looked at that issue? Is there a name for it?

Thanks

It occurs to me that it might be useful to have an online library for LessWrong - a place to store books, articles, and other bits of information gathered from the internet, for reference and sharing.

It should be pretty easy to set that up using Evernote and an email account - Evernote allows files to be emailed to it, and will even sort those files into categories and apply tags to them if the subject line of the email is formatted correctly. The email account would act as a firewall - it would filter out spam, blacklist certain email addresses if necessa...

Any other LW people interested in going to the Rally to Restore Sanity? I was thinking it would be fun to have a group of people there advocating Advanced Sanity (e.g. various LW/Bayesian themes). And some LW concepts ("Politics Is The Mind-Killer", "Make Beliefs Pay Rent", etc.) are unusual and wise-sounding and correct enough that they'd probably be good conversation-starters as sign slogans (...and I also made this nice E. T. Jaynes poster).

Cognitive Biases Reinforced by Programming

Supposing they exist, what are they? And what can we do about them?

Edit: Title changed.

I was thinking about turning this chunk of vomited IM text I wrote for a non-LW cogsci-student friend into a post (with lots of links and references and stuff). Thoughts? I'll probably repost this for the new open thread so that more people see it and can give me feedback.

...People make this identity for themselves... no, it's not that they make it. To some extent it's chosen, but a lot of it is sorta random stuff from the environment. Ya know, man is infinitely malleable, whatever. Like, if you happen to give money to a bum on the street, you'll start thi

Regina Spektor wrote a song about CEV called "The Calculation". She seems to agree with Shane Legg that it may well be impossible, but she appears to think there's hope. YouTube video here.

Excerpt:

...You went into the kitchen cupboard

Got yourself another hour

And you gave

Half of it to me

We sat there looking at the faces

Of these strangers in the pages

'Til we knew 'em mathematically

They were in our minds

Until forever

But we didn't mind

We didn't know better

So we made our own computer out of macaroni pieces

And it did our thinking while we lived

If you are not a programmer, please tell us who you are, and how you ended up here. It sometimes seems like programmers are more likely to end up here, so it might be interesting to see who else does, and how. A programmer here is someone who knows how to do it, does it, and possibly likes it - be it for professional or private reasons.

Has anyone compiled a list of one (two tops) sentence rationality heuristics drawn from LW canon into one place, ideally kinda-sorta thematically organized? I think this would be pretty cool to post on your refrigerator or for flyers... at the very least, useful for finding common themes and core ideas.

If nobody has done this, then I will spend a few hours tomorrow digging through LW and related materials (yudkowsky.net) for quotables and, quality and time permitting, post a draft either here or in a top-level post asking for more things to add.

I p...

On the edge of sleep I hypothesized that an average human could build an FAI if they were given lots of time and paper to write down a very long chain of "Why do I believe that?"s. I then realized that such an endeavor would very quickly end in an endless loop of infinitesimal utility and a lot of wasted trees. Moral: rationality is a lot harder than the application of a few heuristics. Metamoral: Applying that heuristic doesn't make it much easier.

Idle observation:

Clippy gets consistently voted up on a lot of his comments because we find him amusing, and rarely gets downvoted because very few of his comments are substantive. We will end up looking extremely silly to new members if he gets enough karma to put him into the list of top contributors.

So... I guess whether this is actually an issue depends on whether we pitch ourselves as a shiny fun community or as serious rationalist Singularitarians.

Clippy gets downvoted quite a lot too (although less and less... he's learning!). He also makes quality comments, including the expression of some insights that would be punished if made by a 'human'. LessWrong humans sometimes try to bully each other into pretending to be naive utilitarians instead of rational agents with their own agendas.

I guess whether this is actually an issue depends on whether we pitch ourselves as a shiny fun community or as serious rationalist Singularitarians.

This is not a singularitarian website (although rationalists are often singularitarians). Also note that we spend a lot of time here discussing fanfiction written by the lead researcher at the SIAI. We cannot credibly claim 'sensibleness' or sophistication.

I'm trying to defeat my bad excuses for cryocrastinating, such as confusion about how to decide what to sign up for. (A few less-bad excuses will still remain, such as not currently having any income at all, but I'm working on remedying that.) Other than Alcor's included standby service, what significant differences are there between Alcor and CI (and are there any other US cryonics providers I should be considering)? What accounts for the big difference in pricing? Does my being a young, healthy person affect anything relevant to this choice?

And if I go wi...

I don't know if it's already been discussed here, but Andrew Gelman & Cosma Shalizi's paper on the philosophy of statistics and Bayesianism sounds like it might be of interest to many here.

Is anyone else familiar with the situation of having a definite research agenda, being unable to get financial support for even a part of it, and so having to choose to do something irrelevant just to stay alive? That last step seems to be particularly agonizing. It's like having to choose the form of torture to which you will be subjected (with starvation or homelessness being the default if you refuse to choose).

I have no doubt there are people here who have wanted to do research at some time, and either compromised or had to settle for non-research job...

Eliezer has written that the two most important mathematical problems are the friendly goal itself, which a self-modifying program would have, and goal stability under self-modification.

A useful perspective I have often used in the past is to look at incentive structures and see who has the motivation to take a crack at a problem. Goal stability seems to be a very, very useful problem for many institutions to solve. Almost all of us have heard of institutional missions getting diluted, and have even seen it happen as people change within a firm. National governments,...

I posted a link to The Meaning of Life in response to a question on Yahoo. It seems possible that we could gain traffic, and help people, by answering other questions in a similar way.

A reasonably good (if polemic) article about common misunderstandings of Buddhism and meditation, and what they're really about. A quotation: "Mindfulness is the natural scientific method of the mind. A scientist brings a microscope, a meditator brings mindfulness. We need to realize that we live in a state of deep assumption about the way the mind works, which then extends to our understanding of the world. We rarely experience anything directly, without first slowing down and paying attention. A scientist shouldn't make statements based on unsubsta...

A proof that a 2008 penny comes up heads 75% of the time:

I took a large sample of pennies not made in 2008, and flipped them. They came up heads .5 of the time, so my estimate of P(heads|penny) = .5.

I then took a sample of coins of all types made in 2008, and flipped them. They came up heads .5 of the time; so P(heads|2008) = .5.

These two samples are independent, so P(heads | 2008 and penny) = 1-(1-P(heads|penny))(1-P(heads|2008)) = .75.

ADDED: JGWeisman got it - there's no causal connection between being a penny, or being made in 2008, and coming up hea...
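The flaw can also be checked numerically. The formula 1 - (1 - p)(1 - q) is the probability that at least one of two independent events happens (for instance, that at least one of two separate flips lands heads); it is not a rule for combining two conditional probabilities of the same event. The sketch below assumes every coin is fair and that being a penny and being made in 2008 have no effect on the flip; the 50% penny share and 10% share minted in 2008 are arbitrary illustrative numbers, and the function names are hypothetical.

```python
import random


def noisy_or(p: float, q: float) -> float:
    # 1 - (1 - p)(1 - q): probability that AT LEAST ONE of two independent
    # events occurs. This is the formula used in the "proof" above.
    return 1 - (1 - p) * (1 - q)


def simulate_2008_pennies(n_coins: int = 200_000, seed: int = 0) -> float:
    """Estimate P(heads | penny and made in 2008) when no coin is biased.

    Assumed (made-up) mix: half the coins are pennies, 10% were made in 2008.
    Neither attribute affects the outcome of the flip.
    """
    rng = random.Random(seed)
    heads = matches = 0
    for _ in range(n_coins):
        is_penny = rng.random() < 0.5
        made_2008 = rng.random() < 0.1
        came_up_heads = rng.random() < 0.5  # fair flip, independent of type and year
        if is_penny and made_2008:
            matches += 1
            heads += came_up_heads  # bool counts as 0 or 1
    return heads / matches
```

`noisy_or(0.5, 0.5)` gives the 0.75 from the "proof", while the simulated frequency of heads among 2008 pennies stays close to 0.5.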

The Life Cycle of Software Objects by Ted Chiang is sf which raises a number of questions of interest at LW-- in particular, it's about human relationships with non-self-optimizing AI.

At this point, the story is only available as a slightly expensive but possibly collectible little hardcover, but I'll estimate a 90%+ chance that it will be in at least one of the "Best of Sf" collections next year.

I'm going to rot13 specifics in case anyone wants to read it without even the mildest of spoilers.

Sbe rknzcyr, jung qb lbh qb vs gur NV lbh bja naq ner ...

I found a hardware bug in my brain, and I need help, please.

Dan Savage once advised an anxious 15-year-old boy to stop worrying about getting his 15-year-old self laid and go do interesting, social, skill-improving things so that it would be easy to get his 21-year-old self laid. IMHO, this is good advice, and can be applied to any number of goals besides sex: love, friendship, career, fame, wealth, whatever floats your boat.

My problem is that whenever I try to follow advice like that, I find myself irrationally convinced that one or two days of skill-buil...

If I think I may have guessed the dangerous idea, can I PM someone to check my guess? I'd like to go back to not feeling like I need to censor what I read (which could be solved by just looking up the idea, but other people seem confident that that's not a good enough reason to do so).

In the spirit of instrumental rationality, I pose to you the following non-theoretical problem: I have some programming interviews coming up in a week or two, including some areas I haven't used in a while. Cramming technique recommendations? Pre-interview meditation? Negotiation pointers (hopefully it comes to that).

I'm in Cambridge, MA, looking for a rationalist roommate. PM me for details if you are interested or if you know someone who is!

Eric Falkenstein's text "Risk and Return" presents exhaustive evidence that human utility functions are noticeably different from what standard economic theory assumes, notably in the presence of a large "wealthier than my neighbors" term in our utility function:

http://www.efalken.com/RiskReturn.html

He applies this to finance, and discovers that if people mostly care about relative wealth, then riskier investments will not see higher returns in the long run, which is very interesting.

I'm thinking about buying Adrafinil (powder) and a bunch of empty capsules. I'll put Adrafinil in half, sugar or something in the other half, and then do the obvious.

Does anyone else do blind testing on themselves?

There's talk about self-experimenting on LW, but not much about blind tests, even in areas where that would be possible, like nootropics and supplements. I wonder why that is.
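The bookkeeping for a blind capsule test like this can be sketched in a few lines. The sketch assumes one capsule per day, an equal number of active and placebo days, and a daily self-rating recorded before unblinding; a helper (or a sealed envelope) would hold the schedule and fill day-labelled containers so the person taking them never sees which is which. The function names and this particular design are illustrative assumptions, one of several reasonable setups.

```python
import random
from statistics import mean


def make_blind_schedule(n_days: int, seed: int) -> list:
    """Balanced random order of 'active' and 'placebo' days.

    Whoever fills the day-labelled containers keeps the seed (or the printed
    schedule) sealed until the experiment is over.
    """
    if n_days <= 0 or n_days % 2:
        raise ValueError("use a positive, even number of days for a balanced design")
    labels = ["active"] * (n_days // 2) + ["placebo"] * (n_days // 2)
    random.Random(seed).shuffle(labels)
    return labels


def active_minus_placebo(schedule: list, ratings: list) -> float:
    """After unblinding: mean daily rating on active days minus on placebo days."""
    if len(schedule) != len(ratings):
        raise ValueError("need exactly one rating per scheduled day")
    active = [r for label, r in zip(schedule, ratings) if label == "active"]
    placebo = [r for label, r in zip(schedule, ratings) if label == "placebo"]
    return mean(active) - mean(placebo)
```

Shuffling a fixed, balanced list (rather than flipping a coin each day) guarantees equal numbers of active and placebo days, which keeps the comparison of means straightforward.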

I was offered life insurance by an Alico agent. What do you guys think? I don't know what I don't know about life insurance, so throw me all you've got.

I would get 36k€ if I were 50% or more crippled, and 20k€ if I died or survived until 62, all for 260€ a year (that's 5% of my current salary). Does anyone know which injuries correspond to which crippledness percentage?

I will be attending the Jon Stewart/Stephen Colbert semi-satirical rally in DC on October 30th: http://www.rallytorestoresanity.com/ I intend to carry a sign promoting rationality, something along the lines of "Know your cognitive biases" with a URL to point toward a good intro. What would be the best site, with a decently concise URL? And is this a good idea? Any other suggestions? Pamphlets?

Request for help in link-hunting: I remember reading here about an experiment involving pigeons and humans, where the experimenters offered 2 options with (say) 80%/20% rewards, and the pigeons quickly worked out that the highest expected reward was from always picking the 80% option, while the college students reproduced the percentages given to them. Anyone know where to find it? (Or better yet, a link to the original paper/article).
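The arithmetic behind the pigeons' advantage is short. Suppose option A is the rewarded one on 80% of trials, and the chooser picks A with probability q, independently of which option pays off on that trial. The hit rate is then q·0.8 + (1 - q)·0.2: always picking A (q = 1) gives 0.8, while probability matching (q = 0.8) gives 0.8·0.8 + 0.2·0.2 = 0.68. The sketch below assumes exactly this independent-choice model; it is not taken from the experiment being asked about, and the function names are hypothetical.

```python
import random


def expected_hit_rate(q: float, p: float = 0.8) -> float:
    # A is rewarded with probability p, B with probability 1 - p; the chooser
    # picks A with probability q, independently of which option is rewarded.
    return q * p + (1 - q) * (1 - p)


def simulate_hit_rate(q: float, p: float = 0.8, trials: int = 100_000, seed: int = 1) -> float:
    """Monte Carlo check of expected_hit_rate under the same model."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        a_rewarded = rng.random() < p
        chose_a = rng.random() < q
        hits += chose_a == a_rewarded  # a hit when the choice matches the rewarded option
    return hits / trials
```

Because the hit rate is linear in q with a positive slope whenever p > 0.5, it is maximized at q = 1: always choosing the more frequently rewarded option beats matching the observed frequencies.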

Danke

Julian Barbour's theory of time has some adherents here, and Everett's interpretation of quantum mechanics has even more. Over at my blog a physicist criticizes them both.

A while back there was a dispute here arising from the book "Why Zebras Don't Get Ulcers". Barry Marshall, who won a Nobel Prize for ingesting bacteria and giving himself ulcers, says there's no evidence for stress contributing to ulcers or any other medical condition.

For all your best contrarian ideas!

- Make more efficient use of your persuasive time and dollars.

- Leverage decades of professional experience for YOUR beliefs!

- Persuade even those whom the sequences would never reach.

Can we afford not to?

Utilitometer:

I've been doing thought experiments involving a utilitometer: a device capable of measuring the utility of the universe, including sums-over-time and counterfactuals (what-if extrapolations), for any given utility function, even generic statements such as, "what I value." Things this model ignores: nonutilitarianism, complexity, contradictions, unknowability of true utility functions, inability to simulate and measure counterfactual universes, etc.

Unfortunately, I believe I've run into a pathological mindset from thinking about this ...

An embarrassing case study of introspection failure: a few days ago I attended an event where I ran into a few high school friends. I was stunned to learn that one old friend was still dating his high school girlfriend, and the two of them were coming up on their six-year anniversary*.

I was amazed and jokingly said something like, "man, I can't remember the last relationship I had that lasted six weeks!" Everybody laughed.

I thought about that while I drove home, and actually tried to recall my last relationship to last that long. I gradually realized that I had never had a relationship that lasted more than a month!

Massimo Pigliucci has written a blog post critiquing one of Eliezer's posts, The Dilemma: Science or Bayes?.

Can anyone think of items that look like they belong in a set with {determinism, randomness, free-will}, or is that set complete?

(I know that's a soft question, but I'm happy to hear even silly suggestions.)

Corn syrup appears to be no worse than sugar, health-wise. Interesting case of a health-related panic not based on any actual science.

This thread is for the discussion of Less Wrong topics that have not appeared in recent posts. If a discussion gets unwieldy, celebrate by turning it into a top-level post.