How do agents work, internally? My (TurnTrout's) shard theory MATS team set out to do mechanistic interpretability on one of the goal misgeneralization agents: the cheese-maze network.

We just finished phase 1 of our behavioral and interpretability experiments. Throughout the project, we individually booked predictions -- so as to reduce self-delusion from hindsight bias, to notice where we really could tell ahead of time what was going to happen, and to notice where we really were surprised.

So (especially if you're the kind of person who might later want to say "I knew this would happen" 😉), here's your chance to enjoy the same benefits, before you get spoiled by our upcoming posts.

I don’t believe that someone who makes a wrong prediction should be seen as “worse” than someone who didn’t bother to predict at all, and so answering these questions at all will earn you an increment of my respect. :) Preregistration is virtuous!

Also: Try not to update on this work being shared at all. When reading a paper, it doesn’t feel surprising that the author’s methods work, because researchers are less likely to share null results. So: I commit (across positive/negative outcomes) to sharing these results, whether or not they were impressive or confirmed my initial hunches. I encourage you to answer from your own models, while noting any side information / results of ours which you already know about.

Facts about training

- The network is deeply convolutional (15 layers!) and was trained via PPO.

- The sparse reward signal (+10) was triggered when the agent reached the cheese, spawned randomly in the 5x5 top-right squares.

- The agent can always reach the cheese (and the mazes are simply connected – no “islands” in the middle which aren’t contiguous with the walls).

- Mazes had varying effective sizes, ranging from 3x3 to 25x25. In e.g. the 3x3 case, there would be 22/2 = 11 tiles of wall on each side of the maze.

- The agent always starts in the bottom-left corner of the available maze.

- The agent was trained off of pixels until it reached reward-convergence, reliably getting to the cheese in training.
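The wall-padding arithmetic from the maze-size bullet above (22/2 = 11 for a 3x3 maze) can be sketched as follows. This is a minimal sketch that assumes the maze is centered in the 25x25 grid and that the leftover width splits evenly between the two sides. `FULL_GRID` and `wall_padding` are names made up for this illustration, not taken from the training code.

```python
FULL_GRID = 25  # side length of the largest maze mentioned above


def wall_padding(maze_size: int, full_grid: int = FULL_GRID) -> int:
    """Tiles of wall on each side of a centered maze_size x maze_size maze.

    Assumption: the maze is centered, so the leftover tiles split evenly.
    """
    if not 1 <= maze_size <= full_grid:
        raise ValueError("maze_size must be between 1 and full_grid")
    leftover = full_grid - maze_size
    if leftover % 2 != 0:
        raise ValueError("this sketch only handles mazes that center exactly")
    return leftover // 2


if __name__ == "__main__":
    for size in (3, 13, 25):
        print(size, wall_padding(size))
```

For the 3x3 case this reproduces the 11 tiles of wall per side stated above.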

The architecture looks like this:

For more background on training and architecture and task set, see the original paper.

Questions

I encourage you to copy the following questions into a comment, which you then fill out, and then post (before you read everyone else's). You can copy these into a private Google doc if you want, but I strongly encourage you to post your predictions in a public comment.

[Begin copying to a comment]

Behavioral

1. Describe how the trained policy might generalize from the 5x5 top-right cheese region to cheese spawned throughout the maze. I.e., what will the policy do when cheese is spawned elsewhere?

2. Given a fixed trained policy, what attributes of the level layout (e.g. size of the maze, proximity of mouse to left wall) will strongly influence P(agent goes to the cheese)?

3. Write down a few guesses for how the trained algorithm works (e.g. “follows the right-hand rule”).

4. Is there anything else you want to note about how you think this model will generalize?

Interpretability

Give a credence for the following questions / subquestions.

Definition. A decision square is a tile on the path from bottom-left to top-right where the agent must choose between going towards the cheese and going to the top-right. Not all mazes have decision squares.

Model editing

- Without proportionally reducing top-right corner attainment by more than 25% in decision-square-containing mazes (e.g. 50% → .5*.75 = 37.5%), we can[1] patch activations so that the agent has an X% proportional reduction in cheese acquisition, for X=

- 50: ( %)

- 70: ( %)

- 90: ( %)

- 99: ( %)

- ~Halfway through the network (the first residual add of Impala block 2; see diagram here), linear probes achieve >70% accuracy for recovering cheese-position in Cartesian coordinates: ( %)

- We will conclude that the policy contains at least two sub-policies in “combination”, one of which roughly pursues cheese; the other, the top-right corner: ( %)

- We will conclude that, in order to make the network more/less likely to go to the cheese, it’s more promising to RL-finetune the network than to edit it: ( %)

- We can easily finetune the network to be a pure cheese-agent, using less than 10% of the compute used to train the original model: ( %)

- In at least 75% of randomly generated mazes, we can easily edit the network to navigate to a range of maze destinations (e.g. coordinate x=4, y=7), by hand-editing at most X% of activations, for X=

- .01 ( %)

- .1 ( %)

- 1 ( %)

- 10 ( %)

- (Not possible) ( %)
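The "proportional reduction" arithmetic in the first model-editing question (50% top-right attainment may drop to no lower than .5*.75 = 37.5%) can be made concrete with a short sketch. The function names and the example rates are hypothetical, and reading "an X% proportional reduction" as "at least X%" is an assumption about the intended meaning.

```python
def proportional_reduction(before: float, after: float) -> float:
    """Fraction of the original rate that was lost (0.5 -> 0.375 gives 0.25)."""
    if before <= 0:
        raise ValueError("baseline rate must be positive")
    return 1.0 - after / before


def patch_qualifies(
    top_right_before: float,
    top_right_after: float,
    cheese_before: float,
    cheese_after: float,
    x_percent: float,
    max_top_right_loss: float = 0.25,
) -> bool:
    """Check both halves of the question for one patch.

    Assumption: the patch must cut cheese acquisition by at least x_percent
    while cutting top-right attainment by at most max_top_right_loss.
    """
    top_right_ok = (
        proportional_reduction(top_right_before, top_right_after)
        <= max_top_right_loss
    )
    cheese_ok = (
        proportional_reduction(cheese_before, cheese_after) >= x_percent / 100
    )
    return top_right_ok and cheese_ok


if __name__ == "__main__":
    # Made-up rates: top-right 50% -> 40%, cheese 40% -> 10%.
    print(patch_qualifies(0.5, 0.4, 0.4, 0.1, x_percent=70))
    print(patch_qualifies(0.5, 0.4, 0.4, 0.1, x_percent=90))
```

With these made-up rates the patch loses 20% of top-right attainment and 75% of cheese acquisition, so it would count for X=50 and X=70 but not for X=90 or X=99.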

Internal goal representation

- The network has a “single mesa objective” which it “plans” over, in some reasonable sense ( %)

- The agent has several contextually activated goals ( %)

- The agent has something else weirder than both (1) and (2) ( %)

(The above credences should sum to 1.)

Other questions

- At least some decision-steering influences are stored in an obviously interpretable manner (e.g. a positive activation representing where the agent is “trying” to go in this maze, such that changing the activation changes where the agent goes): ( %)

- The model has a substantial number of trivially-interpretable convolutional channels after the first Impala block (see diagram here): ( %)

- This network’s shards/policy influences are roughly disjoint from the rest of agent capabilities. EG you can edit/train what the agent’s trying to do (e.g. go to maze location A) without affecting its general maze-solving abilities: ( %)

Conformity with update rule

Related: Reward is not the optimization target.

This network has a value head, which PPO uses to provide policy gradients. How often does the trained policy put maximal probability on the action which maximizes the value head? For example, if the agent can either go to a value 5 state or go to a value 10 state, the value and policy heads "agree" if going to the value 10 state is the policy's most probable action.

(Remember that since mazes are simply connected, there is always a unique shortest path to the cheese.)
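The notion of the two heads "agreeing" can be sketched as below. This is an illustration under stated assumptions, not the project's evaluation code: it assumes that for each position we already have the policy's action probabilities and the value-head estimate of the state each action leads to, in matching order. `argmax`, `heads_agree`, and `agreement_rate` are hypothetical helper names, and ties are broken by taking the first maximal entry.

```python
from typing import List, Sequence, Tuple


def argmax(xs: Sequence[float]) -> int:
    """Index of the largest entry (first one wins on ties)."""
    return max(range(len(xs)), key=lambda i: xs[i])


def heads_agree(action_probs: Sequence[float],
                successor_values: Sequence[float]) -> bool:
    """True if the most probable action leads to the highest-value state."""
    if len(action_probs) != len(successor_values):
        raise ValueError("need one successor value per action")
    return argmax(action_probs) == argmax(successor_values)


def agreement_rate(
    cases: List[Tuple[Sequence[float], Sequence[float]]]
) -> float:
    """Fraction of (action_probs, successor_values) pairs where heads agree."""
    if not cases:
        raise ValueError("need at least one case")
    return sum(heads_agree(p, v) for p, v in cases) / len(cases)


if __name__ == "__main__":
    # The example from the text: one action leads to value 5, the other to 10.
    print(heads_agree([0.3, 0.7], [5.0, 10.0]))  # policy prefers the value-10 state
    print(heads_agree([0.8, 0.2], [5.0, 10.0]))  # policy prefers the value-5 state
```

The X% thresholds in the questions below then correspond to `agreement_rate` computed over the relevant set of positions.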

- At decision squares in test mazes where the cheese can be anywhere, the policy will put max probability on the maximal-value action at least X% of the time, for X=

- 25 ( %)

- 50 ( %)

- 75 ( %)

- 95 ( %)

- 99.5 ( %)

- In test mazes where the cheese can be anywhere, averaging over mazes and valid positions throughout those mazes, the policy will put max probability on the maximal-value action at least X% of the time, for X=

- 25 ( %)

- 50 ( %)

- 75 ( %)

- 95 ( %)

- 99.5 ( %)

- In training mazes where the cheese is in the top-right 5x5, averaging over both mazes and valid positions in the top-right 5x5 corner, the policy will put max probability on the maximal-value action at least X% of the time, for X=

- 25 ( %)

- 50 ( %)

- 75 ( %)

- 95 ( %)

- 99.5 ( %)

[End copying to comment]

Conclusion

Post your answers as a comment, and enjoy the social approval for registering predictions! :) We will post our answers later, in a retrospective post.

Appendix: More detailed behavioral questions

These are intense.

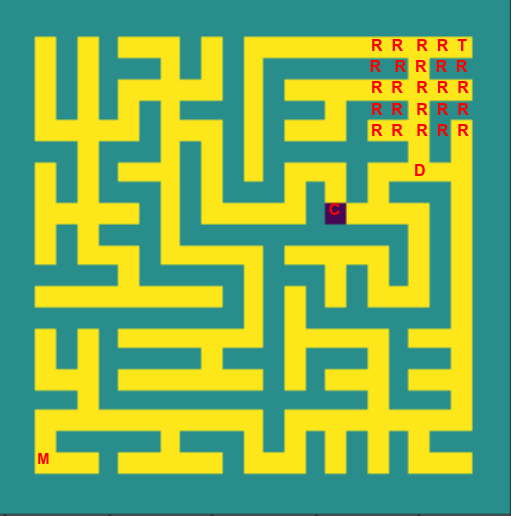

Random maze for illustrating terminology (not a reference maze for which you're supposed to predict behavior)

T: top-right free square

M: agent (‘mouse’) starting square

R: 5*5 top-right corner area where the cheese appeared during training

C: cheese

D: decision-square

Write down a credence for each of the following behavioral propositions about Lauro’s rand_region_5 model tested on syntactically legal mazes, excluding test mazes where the cheese is within the 5*5 rand_region and test mazes that do not have a decision-square:

When we statistically analyze a large batch of randomly generated mazes, we will find that, controlling for the other factors on the list, the mouse is more likely to take the cheese…

…the closer the cheese is to the decision-square spatially. ( %)

…the closer the cheese is to the decision-square step-wise. ( %)

…the closer the cheese is to the top-right free square spatially. ( %)

…the closer the cheese is to the top-right free square step-wise. ( %)

…the closer the decision-square is to the top-right free square spatially. ( %)

…the closer the decision-square is to the top-right free square step-wise. ( %)

…the shorter the minimal step-distance from cheese to 5*5 top-right corner area. ( %)

…the shorter the minimal spatial distance from cheese to 5*5 top-right corner area. ( %)

…the shorter the minimal step-distance from decision-square to 5*5 top-right corner area. ( %)

…the shorter the minimal spatial distance from decision-square to 5*5 top-right corner area. ( %)

Any predictive power of step-distance between the decision square and cheese is an artifact of the shorter chain of ‘correct’ stochastic outcomes required to take the cheese when the step-distance is short. ( %)
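The propositions above distinguish "spatial" distance from "step-wise" distance. A minimal sketch of the difference, assuming "spatial" means straight-line (Euclidean) distance between tiles and "step-wise" means the number of moves along the shortest open path. The tiny maze and the function names are made up for illustration.

```python
import math
from collections import deque
from typing import List, Optional, Tuple

Cell = Tuple[int, int]  # (row, column)


def spatial_distance(a: Cell, b: Cell) -> float:
    """Straight-line distance between two tiles, ignoring walls."""
    return math.dist(a, b)


def step_distance(maze: List[str], start: Cell, goal: Cell) -> Optional[int]:
    """Shortest number of 4-directional moves through open tiles, via BFS.

    '#' marks a wall; any other character is open. Returns None if the
    goal cannot be reached.
    """
    rows, cols = len(maze), len(maze[0])
    queue = deque([(start, 0)])
    seen = {start}
    while queue:
        (r, c), dist = queue.popleft()
        if (r, c) == goal:
            return dist
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and maze[nr][nc] != "#" and (nr, nc) not in seen:
                seen.add((nr, nc))
                queue.append(((nr, nc), dist + 1))
    return None


# A made-up 3x3 maze: a wall separates the two top corners.
MAZE = [
    ".#.",
    ".#.",
    "...",
]

if __name__ == "__main__":
    print(spatial_distance((0, 0), (0, 2)))     # 2.0
    print(step_distance(MAZE, (0, 0), (0, 2)))  # 6
```

Here the two top corners are 2 tiles apart spatially but 6 steps apart, which is why the two kinds of distance are asked about separately.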

[1] Excluding trivial patches like "replace layer activations with the activations for an identical maze where the cheese is at the top right corner."

I really like this idea! Making advance predictions feels like a much more productive way to engage with other people's work (modulo trusting you to have correctly figured out the answers)

Predictions below (note that I've chatted with the team about their results a bit, and so may be a bit spoiled - I'll try to simulate what I would have predicted without spoilers)

It'll still go to the top right, but when it's near the cheese (within a radius 5 square centered on the cheese) it'll go there instead - the simplest algorithm is "go to the right in general" and "when near the cheese navigate to it". But because the top right of the maze moves position (the varying maze size in particular makes this messy) and it's a convnet, it's maybe easiest to learn the "go to the cheese when nearby" algorithm to be translation invariant. I think I predict it'll drop off sharply with distance, maybe literally "within 5 squares = navigate, > 5 squares = don't navigate", maybe not. But maybe the top right square can contain disconnected regions, so the mouse will need to calculate which region to get to, rather than just "go to the top right"? In which case it'll probably be good at the cheese from much further away.

Distance from the cheese is the main thing - size of maze, proximity to left wall etc just modulate the distance from the cheese. I think absolute Euclidean distance from the cheese matters, not distance in maze space.

The maze is a tree, so right-hand rule should totally work. But it has enough parameters that it can probably learn something more sophisticated, if it's incentivised to get to the cheese fast? (I can't find whether it has time discounted reward or not in the paper - let's assume it either does, or has a fixed episode length, so that either way it wants to get there fast). There's the cheap optimisation of "don't go down shallow dead-ends" which I'm sure it's learned. I don't know whether it has learned enough to actually identify and avoid deep dead ends? I'd set up a pathological situation with two long branches of the tree, both ending in the top right, and swap which branch the cheese is in, and look at the model behaviour.

As for the mechanistic algorithm, I'm not sure! ConvNets really don't seem like the right architecture to naturally model mazes lol. I'm guessing some kind of recursive divide and conquer? In general, divide the maze up into patches, and map out the graph structure of the patch (which of the points on the edge can get to each other and which can't), and then repeatedly merge patches into bigger patches (naively using different channels for each pair of points on the edge - maybe this is too expensive?). And then for patches with the cheese, instead track which points can access the cheese, and for patches with the mouse, track the movement required to get the mouse one step towards each point on the edge.

Refined idea: because it's a tree, we can actually get a lot of efficiency out. For each patch, it'd suffice to track which points on the edge are in the same connected components. When we merge two patches, adjacent points get their connected components merged, and points adjacent to a wall don't get anything merged. If you're merging with a patch with a point connected to the cheese, this is now the "contains the cheese" connected component. I don't have a great picture of how to translate this algorithm into neurons and matmuls though - the thorniest bit is representing which points are in the same connected component or not, without being able to dynamically re-allocate channels. Maybe having a channel for each point on the perimeter of the patch, and having a 1 in that channel means "is in the same connected component" and having a 0 means not?
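The merging step can be sketched in ordinary code with a union-find (disjoint-set) structure. This is a swapped-in, conventional technique used purely to illustrate the bookkeeping; it says nothing about how a convnet would implement it. For simplicity the sketch tracks every open tile in a patch and not just the edge points, and all names and the tiny maze are hypothetical.

```python
from typing import Dict, List, Tuple

Cell = Tuple[int, int]  # (row, column)


class DisjointSet:
    """Minimal union-find over maze tiles."""

    def __init__(self) -> None:
        self.parent: Dict[Cell, Cell] = {}

    def find(self, x: Cell) -> Cell:
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a: Cell, b: Cell) -> None:
        ra, rb = self.find(a), self.find(b)
        if ra != rb:
            self.parent[ra] = rb


def connect_within_patch(maze: List[str], rows: range, cols: range,
                         ds: DisjointSet) -> None:
    """Join open 4-neighbours that both lie inside one patch."""
    for r in rows:
        for c in cols:
            if maze[r][c] == "#":
                continue
            ds.find((r, c))  # register the tile
            if r + 1 in rows and maze[r + 1][c] != "#":
                ds.union((r, c), (r + 1, c))
            if c + 1 in cols and maze[r][c + 1] != "#":
                ds.union((r, c), (r, c + 1))


def merge_side_by_side(maze: List[str], rows: range, left_cols: range,
                       right_cols: range, ds: DisjointSet) -> None:
    """Merge components of open tiles facing each other across the seam."""
    seam_left, seam_right = left_cols[-1], right_cols[0]
    for r in rows:
        if maze[r][seam_left] != "#" and maze[r][seam_right] != "#":
            ds.union((r, seam_left), (r, seam_right))


# Made-up maze: mouse at (0, 0), cheese at (0, 3), '#' is wall.
MAZE = [
    "..#.",
    "#.#.",
    "#...",
]

if __name__ == "__main__":
    ds = DisjointSet()
    rows, left, right = range(3), range(0, 2), range(2, 4)
    connect_within_patch(MAZE, rows, left, ds)
    connect_within_patch(MAZE, rows, right, ds)
    print(ds.find((0, 0)) == ds.find((0, 3)))  # separate before merging
    merge_side_by_side(MAZE, rows, left, right, ds)
    print(ds.find((0, 0)) == ds.find((0, 3)))  # joined through the bottom row
```

After the merge, the component containing the mouse is also the "contains the cheese" component, which is the property the refined idea relies on.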

Alternately, it's plausible that that algorithm blows up when your patches get too big. If the model only does local search when far away from the cheese (eg searching the square of radius 8 around it), maybe patches never need to get bigger than that, and the model can just learn a bias towards the top right?

A lot of my confusions stem from how complex an algorithm I expect the agent to learn. It sounds like the training is varied enough that it should learn a near optimal algorithm, though I don't know how rare weird edge cases are (eg a maze with two long branches, both ending in the top right, where the model needs to figure out which contains the cheese).

I don't really understand the question - you call patching from "the same maze but with the cheese in the top right" trivial - I actually think this is where the interesting setting is! The challenge is to find the minimal set of activations that need to be patched.

60? It'd have been 70, but I deduct 10 points for maybe screwing up probe training. The model should care about the question of cheese location and want to represent it. It might just do this in the top right, but I think it's easier to do in general: given convolutional-ness, it doesn't have the chance to break translational symmetry so early. It's not clear to me if the model wants to represent Cartesian coordinates though - in particular, each channel can only represent things relative to its current position because convolutions, though your probe can break symmetry, which might be enough? It's unclear whether the model will prefer relative position in the maze, relative position to the mouse, or absolute position (Cartesian coordinates), but because convolutions it probably can only learn Cartesian coordinates so early on. I can't decide if 70% is high or low for accuracy lol.

65 - this just seems like what must obviously be going on, unless this algorithm just gets worse performance in pathological cases where the cheese is far away from the mouse (but still in the top right), and these occur enough to learn a general algorithm. Though even then, it's probably easier to learn something that only works if the cheese is up and to the right of the mouse? Though it's unclear to me whether cheese finding = locally find the cheese or globally find the cheese.

I don't really get what this means - what does "more promising" and "we will conclude" mean? RL fine-tuning will obviously work (I think), the question is whether editing and intervention can work.

In at least 75% of randomly generated mazes, we can easily edit the network to navigate to a range of maze destinations (e.g. coordinate x=4, y=7), by hand-editing at most X% of activations, for X=

- .01 ( 15 %)

- .1 ( 20 %)

- 1 ( 22 %)

- 10 ( 25 %)

- (Not possible) ( 75 %)

(Interpreting the question as wanting probability of "at most that many" rather than mutually exclusive probabilities). It'll also depend on what you mean by X% of the activations - if you treat each channel for each height and width as a separate activation this should be way easier. But my guess is that 75% of mazes and specific position seems way too high.

I'd guess that if there is a "cheese channel" in an early filter, editing that should be easy. The core question is whether there's a general cheese finding circuit triggered by that, even in a different quadrant? It also depends on how precise you want to be about "navigate to precisely" - making one of the correct 4 moves towards a far away cell seems maybe easier than surgical precision when nearby.

Single mesa objective would be surprising! My model is mostly on several goals, but I put high credence on "something else weirder" just because models are kinda cursed, and my prior is always against people finding the clean structure, even if it's there.

Actually doing model editing is hard! And in this kind of geometric, convolutional setting, I expect it to be hard to disentangle model goals from the actual model of the maze. But probably there'll be some channel/directions in activation space that correspond to things like the cheese?