I often encounter confusion about whether the fact that synapses in the brain typically fire at 1-100 Hz, while a state-of-the-art GPU is clocked at around 1 GHz, means that AIs think "many orders of magnitude faster" than humans. In this short post, I'll argue that this way of thinking about "cognitive speed" is quite misleading.

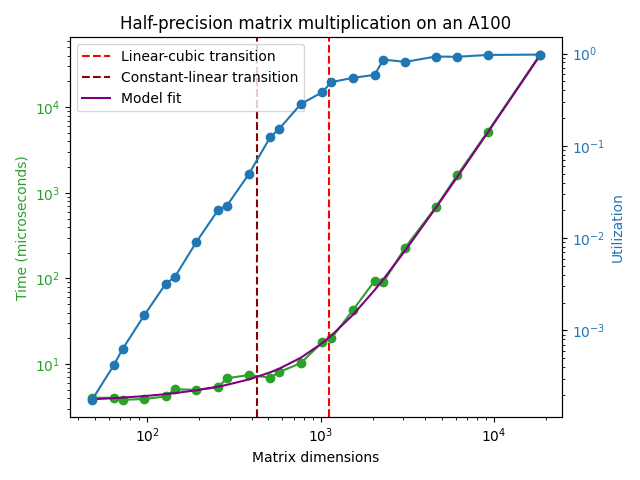

The clock speed of a GPU is indeed meaningful: there is a clock inside the GPU that provides some signal that's periodic at a frequency of ~ 1 GHz. However, the corresponding period of ~ 1 nanosecond does not correspond to the timescale of any useful computations done by the GPU. For instance; in the A100 any read/write access into the L1 cache happens every ~ 30 clock cycles and this number goes up to 200-350 clock cycles for the L2 cache. The result of these latencies adding up along with other sources of delay such as kernel setup overhead etc. means that there is a latency of around ~ 4.5 microseconds for an A100 operating at the boosted clock speed of 1.41 GHz to be able to perform any matrix multiplication at all:

The timescale for a single matrix multiplication gets longer if we also demand that it achieves something close to the peak FLOP/s performance reported in the GPU datasheet. In the plot above, it can be seen that a matrix multiplication achieving good hardware utilization can't take much less than ~ 100 microseconds.
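To see how far these timescales are from a single clock period, here is a back-of-the-envelope sketch that converts them into clock cycles. It only uses the figures quoted above (1.41 GHz boost clock, ~ 4.5 microseconds minimum latency, ~ 100 microseconds for a well-utilized matmul); the names are just for illustration.

```python
# Figures quoted in the text; names are illustrative only.
BOOST_CLOCK_HZ = 1.41e9              # A100 boosted clock speed
MIN_MATMUL_LATENCY_S = 4.5e-6        # floor for any matmul at all
EFFICIENT_MATMUL_LATENCY_S = 100e-6  # floor for a well-utilized matmul


def latency_in_cycles(latency_s, clock_hz=BOOST_CLOCK_HZ):
    """Convert a wall-clock latency into a number of clock cycles."""
    return latency_s * clock_hz


min_cycles = latency_in_cycles(MIN_MATMUL_LATENCY_S)
efficient_cycles = latency_in_cycles(EFFICIENT_MATMUL_LATENCY_S)

print(f"minimum matmul latency:   ~{min_cycles:,.0f} cycles")
print(f"efficient matmul latency: ~{efficient_cycles:,.0f} cycles")
```

So even the smallest matmul costs thousands of clock cycles, and an efficient one costs on the order of a hundred thousand.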

On top of this, state-of-the-art machine learning models today chain many matrix multiplications and nonlinearities in sequence. For example, a typical language model could have on the order of ~ 100 layers, with each layer containing at least 2 serial matrix multiplications for the feedforward layers[1]. If these were the only places where a forward pass incurred time delays, we would obtain the result that a sequential forward pass cannot occur faster than (100 microseconds/matmul) * (200 matmuls) = 20 ms or so. At this speed, we could generate 50 sequential tokens per second, which is not too far from human reading speed. This is why you haven't seen LLMs being served at per-token latencies that are much faster than this.
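The same bound as a small calculation, in case you want to plug in other numbers. This is a rough sketch using only the figures above (100 layers, 2 serial matmuls per layer, 100 microseconds per matmul); it ignores every other source of delay, so it is a lower bound on latency and not a prediction.

```python
# Figures from the text; the function name is illustrative.
N_LAYERS = 100
SERIAL_MATMULS_PER_LAYER = 2
MATMUL_LATENCY_S = 100e-6


def min_forward_pass_latency_s(n_layers, matmuls_per_layer, matmul_latency_s):
    """Lower bound on one forward pass if serial matmuls are the only delay."""
    return n_layers * matmuls_per_layer * matmul_latency_s


latency_s = min_forward_pass_latency_s(
    N_LAYERS, SERIAL_MATMULS_PER_LAYER, MATMUL_LATENCY_S
)
max_sequential_tokens_per_s = 1.0 / latency_s

print(f"forward pass: >= {latency_s * 1e3:.0f} ms")
print(f"sequential generation: <= {max_sequential_tokens_per_s:.0f} tokens/s")
```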

We can, of course, process many requests at once in these 20 milliseconds: the bound is not that we can generate only 50 tokens per second, but that we can generate only 50 sequential tokens per second, meaning that the generation of each token needs to know what all the previously generated tokens were. It's much easier to handle requests in parallel, but that has little to do with the "clock speed" of GPUs and much more to do with their FLOP/s capacity.
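A toy sketch of the distinction between sequential and aggregate throughput. It assumes, as an idealization, that the 20 ms forward pass latency does not change with batch size; in practice that only holds until the GPU becomes compute-bound. The batch sizes are arbitrary examples.

```python
FORWARD_PASS_LATENCY_S = 0.020  # the ~20 ms bound derived above


def tokens_per_second(batch_size, latency_s=FORWARD_PASS_LATENCY_S):
    """Return (per-request, aggregate) tokens/s for a batch of requests.

    Assumes latency is independent of batch size (an idealization).
    """
    per_request = 1.0 / latency_s          # each request still waits for its own previous token
    aggregate = batch_size * per_request   # independent requests share each forward pass
    return per_request, aggregate


for batch_size in (1, 8, 64):
    per_request, aggregate = tokens_per_second(batch_size)
    print(f"batch={batch_size:3d}: {per_request:.0f} tokens/s per request, "
          f"{aggregate:.0f} tokens/s in total")
```

The per-request number never moves; only the total does.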

The human brain is estimated to do the computational equivalent of around 1e15 FLOP/s. This performance is on par with NVIDIA's latest machine learning GPU (the H100), and the brain achieves it using only 20 W of power compared to the 700 W drawn by an H100. In addition, each forward pass of a state-of-the-art language model today likely takes somewhere between 1e11 and 1e12 FLOP, so the computational capacity of the brain alone is sufficient to run inference on these models at speeds of 1k to 10k tokens per second. In short, there's no meaningful sense in which machine learning models today think faster than humans do, though they are certainly much more effective at parallel tasks because we can run them on clusters of multiple GPUs.
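The arithmetic behind these numbers, as a quick sketch. All inputs are the estimates quoted above (1e15 FLOP/s for the brain, 1e11 to 1e12 FLOP per forward pass, 20 W versus 700 W); none of them are measured here.

```python
# Estimates quoted in the text.
BRAIN_FLOP_PER_S = 1e15
FLOP_PER_TOKEN_LOW = 1e11
FLOP_PER_TOKEN_HIGH = 1e12
BRAIN_POWER_W = 20
H100_POWER_W = 700

# Tokens/s if the brain's compute budget were spent on LLM forward passes.
tokens_per_s_max = BRAIN_FLOP_PER_S / FLOP_PER_TOKEN_LOW
tokens_per_s_min = BRAIN_FLOP_PER_S / FLOP_PER_TOKEN_HIGH

# At roughly equal FLOP/s, the power ratio is also the efficiency ratio.
power_ratio = H100_POWER_W / BRAIN_POWER_W

print(f"{tokens_per_s_min:,.0f} to {tokens_per_s_max:,.0f} tokens/s")
print(f"H100 draws ~{power_ratio:.0f}x the power of the brain")
```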

In general, I think it's more sensible for discussion of cognitive capabilities to focus on throughput metrics such as training compute (units of FLOP) and inference compute (units of FLOP/token or FLOP/s). If all the AIs in the world are doing orders of magnitude more arithmetic operations per second than all the humans in the world (8e9 people * 1e15 FLOP/s/person = 8e24 FLOP/s is a big number!) we have a good case for saying that the cognition of AIs has become faster than that of humans in some important sense. However, just comparing the clock speed of a GPU to the synapse firing frequency in the human brain and concluding that AIs think faster than humans is a sloppy argument that neglects how training or inference of ML models on GPUs actually works right now.

[1] While attention and feedforward layers are sequential in the vanilla Transformer architecture, they can in fact be parallelized by adding the outputs of both to the residual stream instead of doing the operations sequentially. This optimization lowers the number of serial operations needed for a forward or backward pass by around a factor of 2 and I assume it's being used in this context.
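A minimal sketch of the difference between the two block layouts. The `toy_attention` and `toy_feedforward` functions are made-up stand-ins on plain floats, and layer normalization is left out, so this only shows the data dependencies and not a real Transformer.

```python
def sequential_block(x, attention, feedforward):
    """Vanilla layout: the feedforward sublayer must wait for attention."""
    h = x + attention(x)
    return h + feedforward(h)


def parallel_block(x, attention, feedforward):
    """Parallel layout: both sublayers read the same input, so they can
    run concurrently and are summed into the residual stream."""
    return x + attention(x) + feedforward(x)


# Hypothetical stand-ins for the real sublayers.
def toy_attention(x):
    return 0.5 * x


def toy_feedforward(x):
    return 2.0 * x


print(sequential_block(1.0, toy_attention, toy_feedforward))  # 4.5
print(parallel_block(1.0, toy_attention, toy_feedforward))    # 3.5
```

The two layouts compute different functions, so a model has to be trained with the parallel layout; it is not a drop-in change at inference time.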

Daniel, you model near-future intelligence explosions. The simple reason these probably cannot happen is that in a multi-stage process, the slowest step always wins. An explosion needs 4 ingredients, not 1: algorithm, compute, data, and robotics. Robotics is necessary, or the explosion halts once the available human labor is fully utilized.

Summary: if you make AI smarter with recursion, you will be limited by silicon, data, or robotics, and the process cannot run faster than its slowest step.

(1) You have a maximum throughput and serial speed achievable with your hardware. Suppose a human brain is equivalent to (86b * 1000 * 1000) / 10 arbitrarily sparse 8-bit flops.

Please notice the keyword "arbitrarily sparse". That means this runs on GPUs only in several years, whenever Nvidia gets around to supporting it. Otherwise you need a lot more compute. Notice also the division by 10: I am assuming GPUs are 10 times better than meatware (less noisy, fewer neurons failing to fire).

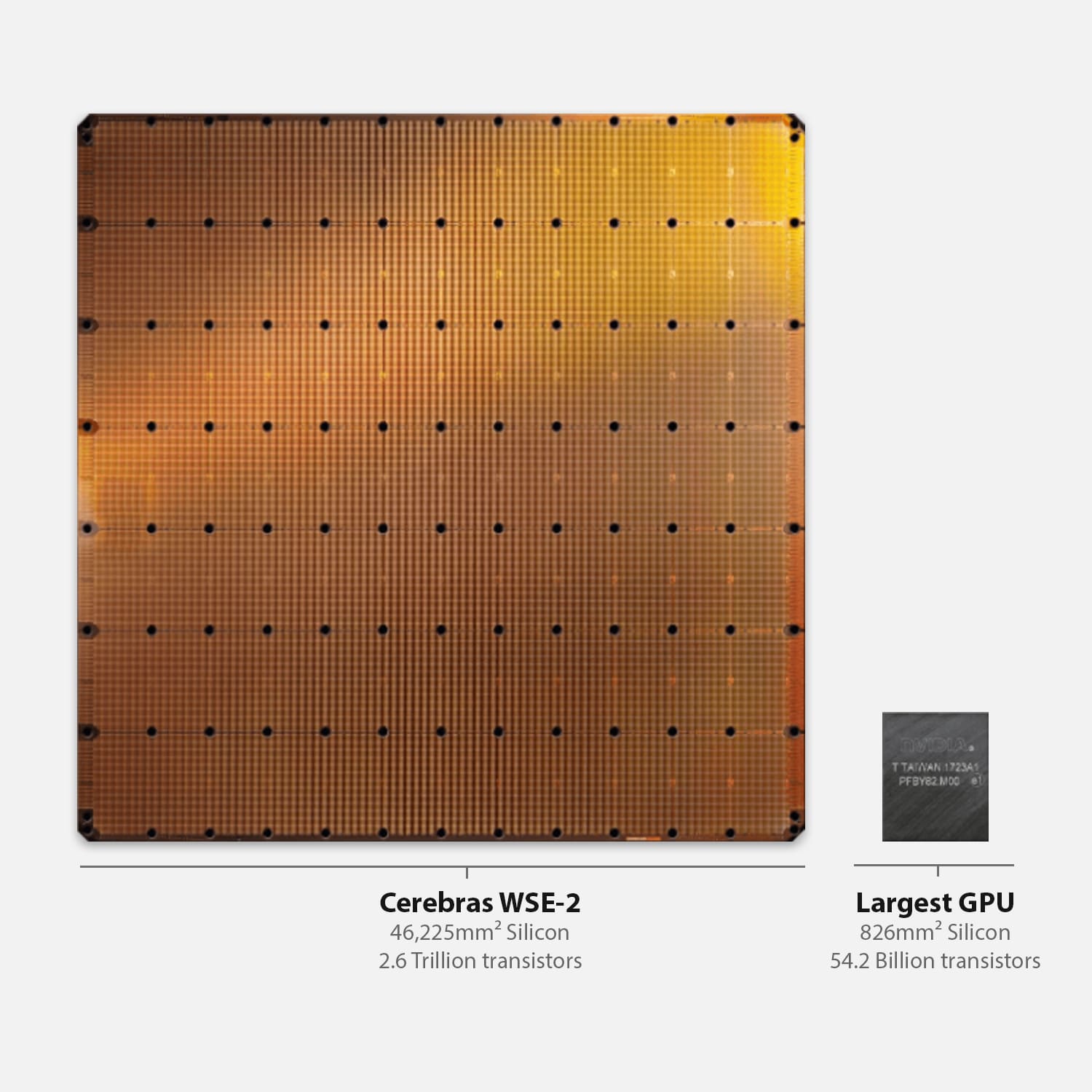

But just ignoring the sparsity (and VRAM and utilization) issues to get some numbers: 2 million H100s are projected to ship in 2024, so with full GPU utilization that's 43 cards per "human equivalent" at inference time.

The bottom line is that the "ecosystem" of compute can support only a finite number of human equivalents: 46,511 humans if you use all of the hardware.

If you run the hardware faster, you lose throughput. If we take your estimate as correct (note that you will need custom hardware past a certain point; GPUs will no longer work), then that's like adding 74 new humans who think 125 times faster and can do the work of 9302 humans, but serially fast and 24/7.

You probably should estimate and plot how much new AI silicon production can be added with each year after 2024.

Assume a dominant AI lab buys up 15 percent of worldwide production, as Meta says it will do this year. That's your roofline. Also remember that most of the hardware is going to be serving customers, not being used for R&D.

So if 50 percent of the hardware is serving other projects or customers, and we have 15 percent of worldwide production, then we now have 697 new humans in throughput per hour, over 5 serial threads, though you would obviously assign more than 5 tasks, and context switch between them.
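A quick sketch of the arithmetic in the last few paragraphs, so the chain of numbers is easy to rerun with different inputs. Every input is a figure from this comment (2 million H100s, 43 cards per human equivalent, 9302 human equivalents of work at 125 times speed, a 15 percent share with 50 percent of it available); rounding down at each step is an assumption about how the figures were obtained.

```python
# Inputs taken from the comment; variable names are illustrative.
H100S_SHIPPED_2024 = 2_000_000
CARDS_PER_HUMAN_EQUIV = 43
FAST_MODE_HUMAN_EQUIVS = 9302   # throughput when run at 125x serial speed
SERIAL_SPEEDUP = 125
LAB_SHARE_OF_PRODUCTION = 0.15
FRACTION_FOR_RND = 0.5

# Whole ecosystem at normal speed (rounded down).
total_human_equivs = H100S_SHIPPED_2024 // CARDS_PER_HUMAN_EQUIV

# Fast mode: fewer instances, each thinking 125x faster.
fast_instances = FAST_MODE_HUMAN_EQUIVS // SERIAL_SPEEDUP

# What one lab gets after its share and its customer load.
lab_fraction = LAB_SHARE_OF_PRODUCTION * FRACTION_FOR_RND
lab_human_equivs = int(FAST_MODE_HUMAN_EQUIVS * lab_fraction)
lab_serial_threads = int(fast_instances * lab_fraction)

print(total_human_equivs, fast_instances, lab_human_equivs, lab_serial_threads)
```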

2025 will probably see 50 percent more AI accelerators built than 2024, and so on, so I suggest you add this factor to your world modeling. This is extremely meaningful to timelines.
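A rough sketch of what that growth factor does to the roofline. It assumes, purely for illustration, that the 2 million H100 figure stands in for all 2024 accelerator production, that output grows by a flat 50 percent every year, and that the 43 cards per human equivalent stays fixed; none of these are forecasts.

```python
BASE_YEAR = 2024
BASE_UNITS = 2_000_000        # 2024 H100 shipments, used as a stand-in
ANNUAL_GROWTH = 1.5           # assumed: 50 percent more units each year
CARDS_PER_HUMAN_EQUIV = 43    # held constant (an assumption)


def projected_units(year):
    """Accelerators built in a given year under constant growth."""
    return BASE_UNITS * ANNUAL_GROWTH ** (year - BASE_YEAR)


for year in range(BASE_YEAR, BASE_YEAR + 4):
    units = projected_units(year)
    human_equivs = int(units / CARDS_PER_HUMAN_EQUIV)
    print(f"{year}: {units:,.0f} units, ~{human_equivs:,} human equivalents")
```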

(2) Once serial speed is no longer a bottleneck, any recursive improvement process bottlenecks on the evaluation system. For a simple example, once you achieve the highest possible average score on a suite of tests, no further improvement will happen. Past a certain level of intelligence, you would expect the AI system to just bottleneck on human-written evals, learning at the fastest rate that doesn't overfit. Yes, you could go to AI-written evals, but how do humans tell if the eval is optimizing for a useful metric?

(3) Once an AI system is maxed out, bottlenecked on compute or data, it may be many times smarter than humans but still limited by real-world robotics or data. I gave a mock example of an engine design task in the comments here, and the speedup is 50 times, not 1 million times, because the real world has steps limited by physics. This is relevant for modeling an "explosion" and is why, once AGI is achieved in a few years, it probably won't immediately change everything: there aren't enough robots.
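One way to see why the slow step dominates is Amdahl's law, which I am using here as a framing and not as the calculation from the engine example itself. The 2 percent physics-bound fraction below is a hypothetical number picked to show how a 1-million-times speedup of the thinking steps can still yield only about 50 times overall.

```python
def overall_speedup(accelerable_fraction, local_speedup):
    """Amdahl's law: total speedup when only part of the work gets faster."""
    fixed_fraction = 1.0 - accelerable_fraction
    return 1.0 / (fixed_fraction + accelerable_fraction / local_speedup)


# Hypothetical: 2% of wall-clock time is physical steps that cannot be sped up.
PHYSICS_BOUND_FRACTION = 0.02
COGNITIVE_SPEEDUP = 1e6

speedup = overall_speedup(1.0 - PHYSICS_BOUND_FRACTION, COGNITIVE_SPEEDUP)
print(f"overall speedup: ~{speedup:.1f}x")
```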