Before we get to the receipts, let's talk epistemics.

This community prides itself on "rationalism." Central to that framework is the commitment to evaluate how reality played out against our predictions.

"Bayesian updating," as we so often remind each other.

If we're serious about this project, we should expect our community to maintain rigorous epistemic standards—not just individually updating our beliefs to align with reality, but collectively fostering a culture where those making confident pronouncements about empirically verifiable outcomes are held accountable when those pronouncements fail.

With that in mind, I've compiled in the appendix a selection of predictions about China and AI from prominent community voices. The pattern is clear: a systematic underestimation of China's technical capabilities, prioritization of AI development, and ability to advance despite (or perhaps because of) government involvement.

The interesting question isn't just that these predictions were wrong. It's that they were confidently wrong in a specific direction, and—crucially—that wrongness has gone largely unacknowledged.

How many of you incorporated these assumptions into your fundamental worldview? How many based your advocacy for AI "pauses" or "slowdowns" on the belief that Western labs were the only serious players? How many discounted the possibility that misalignment risk might manifest first through a different technological trajectory than the one pursued by OpenAI or Anthropic?

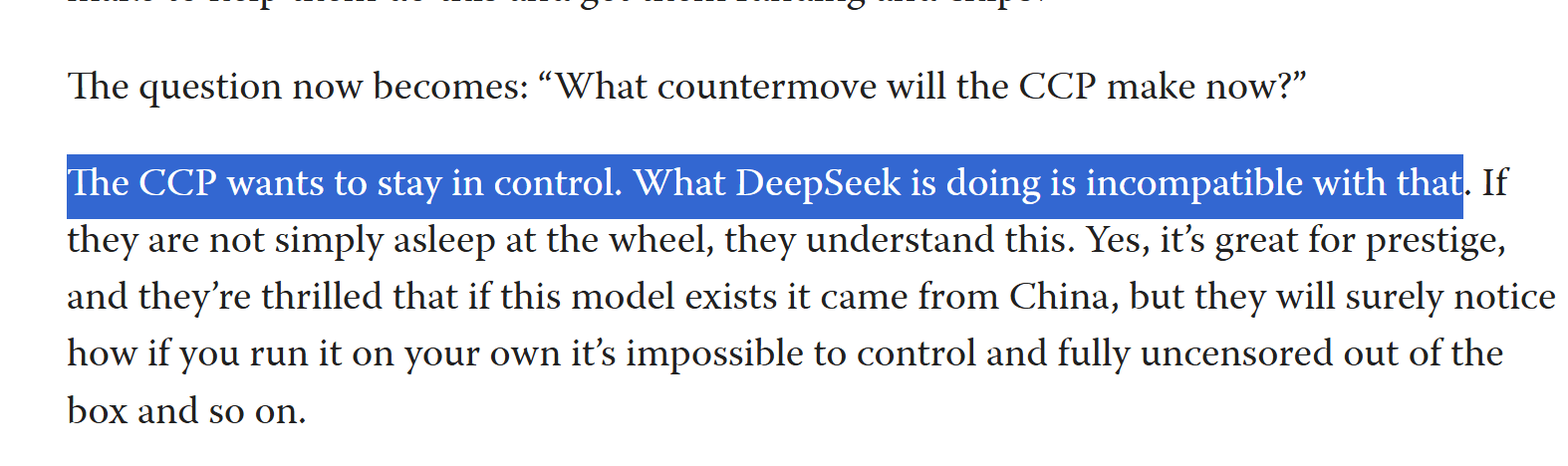

If you're genuinely concerned about misalignment, China's rapid advancement represents exactly the scenario many claimed to fear: potentially less-aligned AI development accelerating outside the influence of Western governance structures. This seems like the most probable vector for the "unaligned AGI" scenarios many have written extensive warnings about.

And yet, where is the community updating? Where are the post-mortems on these failed predictions? Where is the reconsideration of alignment strategies in light of demonstrated reality?

Collective epistemics require more than just nodding along to the concept of updating. They require actually doing the work when our predictions fail.

What do YOU think?

Appendix:

Bad Predictions on China and AI

"No...There is no appreciable risk from non-Western countries whatsoever" - @Connor Leahy

"China has neither the resources nor any interest in competing with the US on developing artificial general intelligence" - @Eva_B

dear ol @Eliezer Yudkowsky

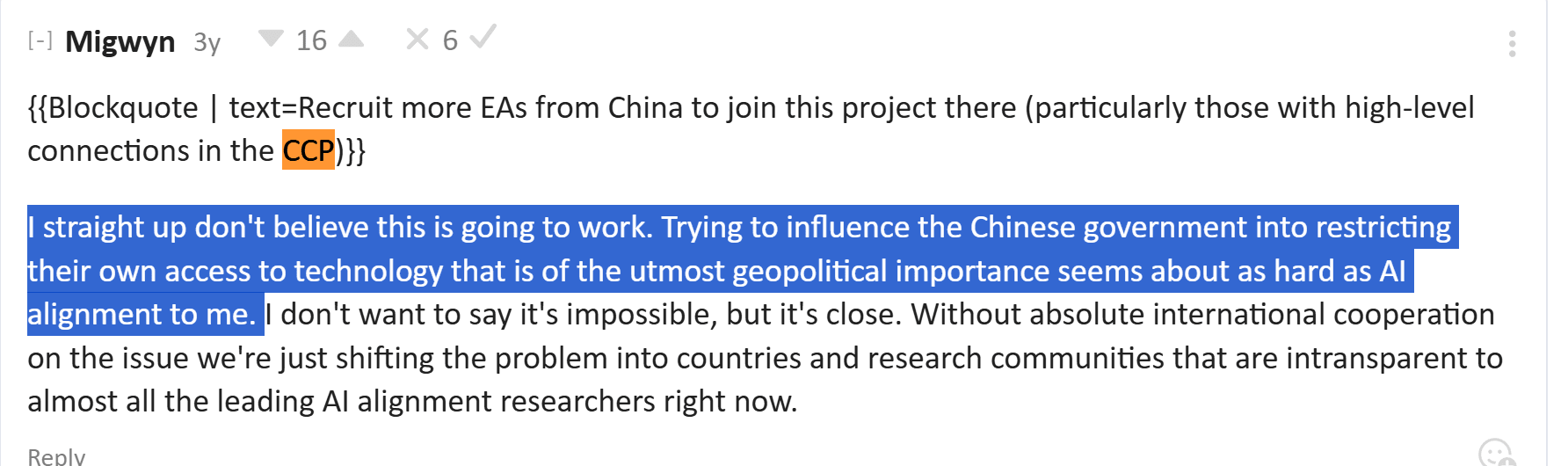

Anonymous (I guess we know why)

https://www.lesswrong.com/posts/ysuXxa5uarpGzrTfH/china-ai-forecasts

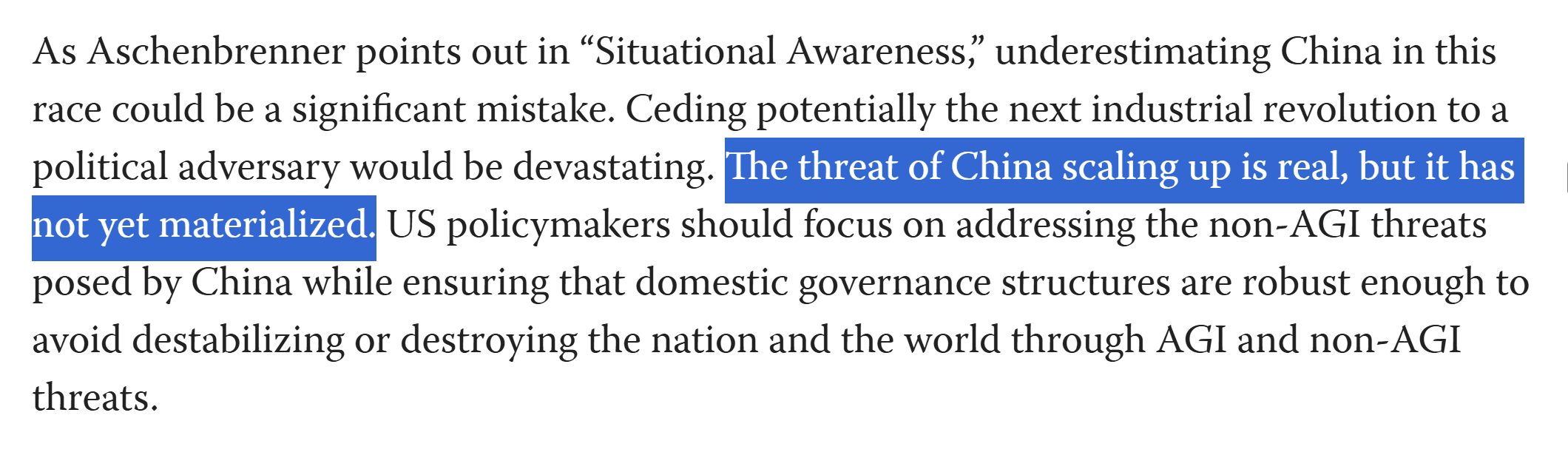

https://www.lesswrong.com/posts/KPBPc7RayDPxqxdqY/china-hawks-are-manufacturing-an-ai-arms-race

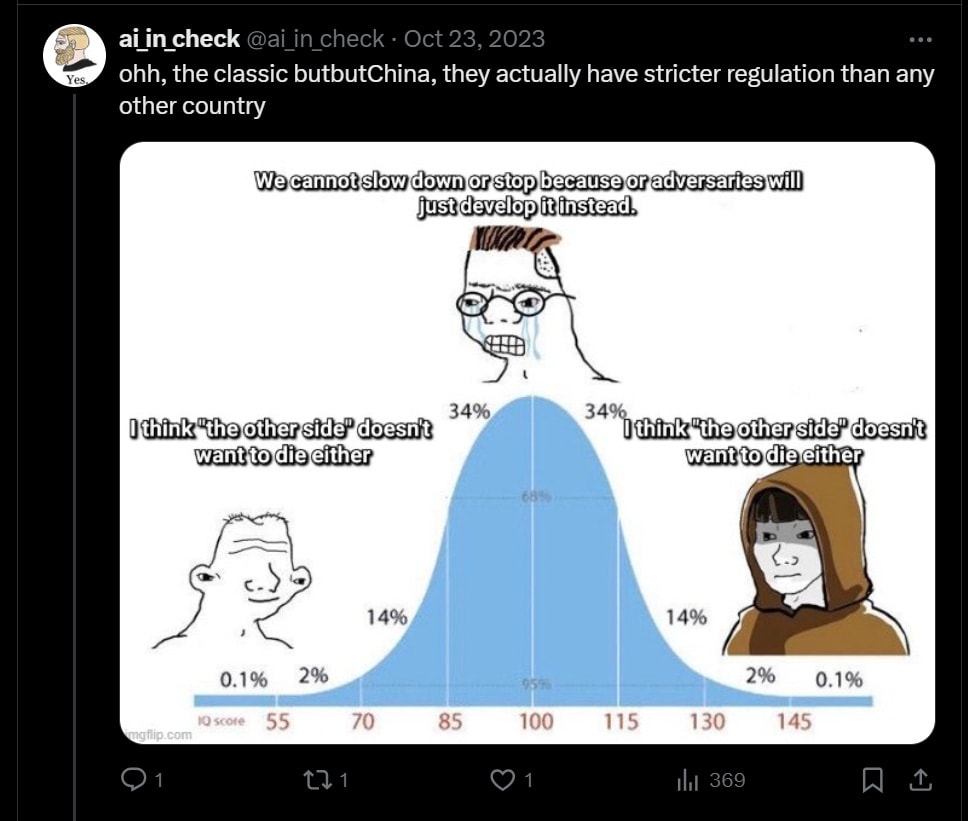

Various folks on Twitter...

Good Predictions / Open-mindedness

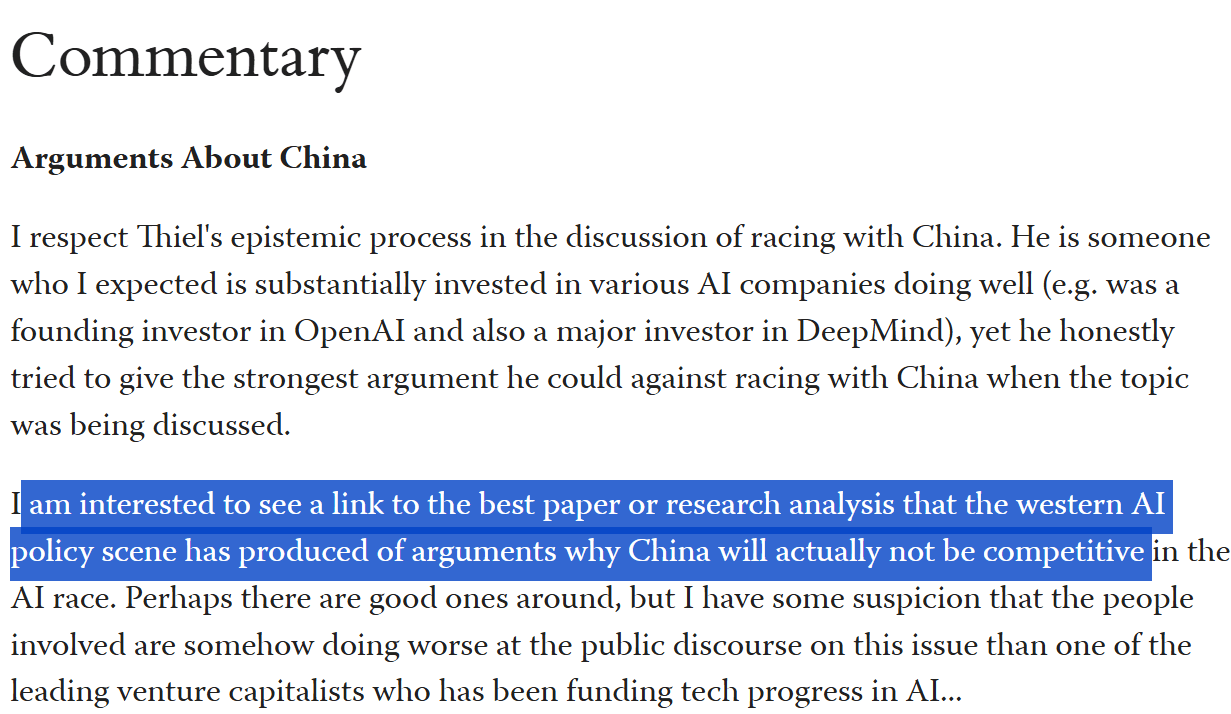

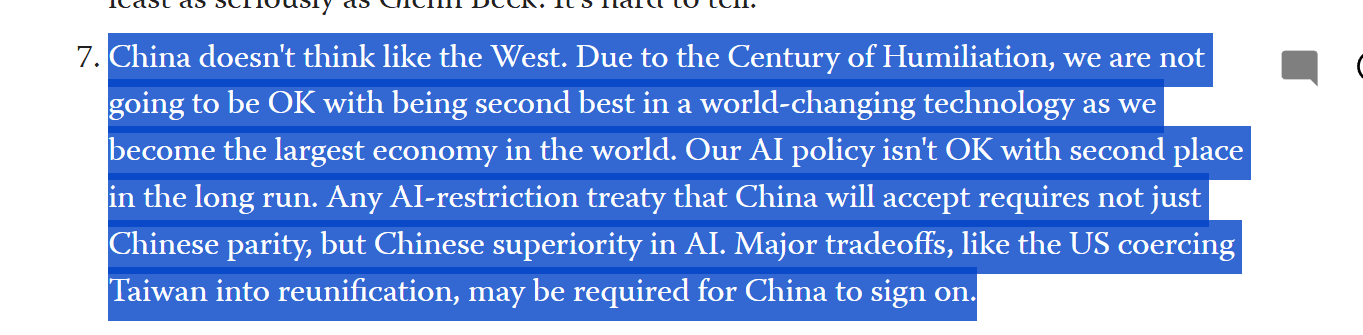

https://www.lesswrong.com/posts/xbpig7TcsEktyykNF/thiel-on-ai-and-racing-with-china

I was not wrong? I argued with Connor about this back in the day on EAI. If you take the PISA scores at face value and account for China's larger population, China has like 30x more people above 145 IQ. Steve Hsu has a nice post about this[1]:

This appears to be very antimemetic, but it seems to be true, and the world sure is behaving like it is true.

If human capital matters, they have more and better human capital than America. But human capital will be obsolete soon.

And I do think much of the idea that China cannot catch up is based on some idiotic, racist notions that Asians lack creativity. This is increasingly obvious as massive cope as China catches up and pulls ahead in industry after industry. And no, semiconductors are not special. They will catch up just fine.

I think timelines are short, so I would still be betting on an “American god,” but if they are longer than I think, China is a contender, and increasingly so in worlds where timelines are long and human capital still matters.

[1] https://infoproc.blogspot.com/2008/06/asian-white-iq-variance-from-pisa.html?m=1

exactly