(adapted from Nora's tweet thread here.)

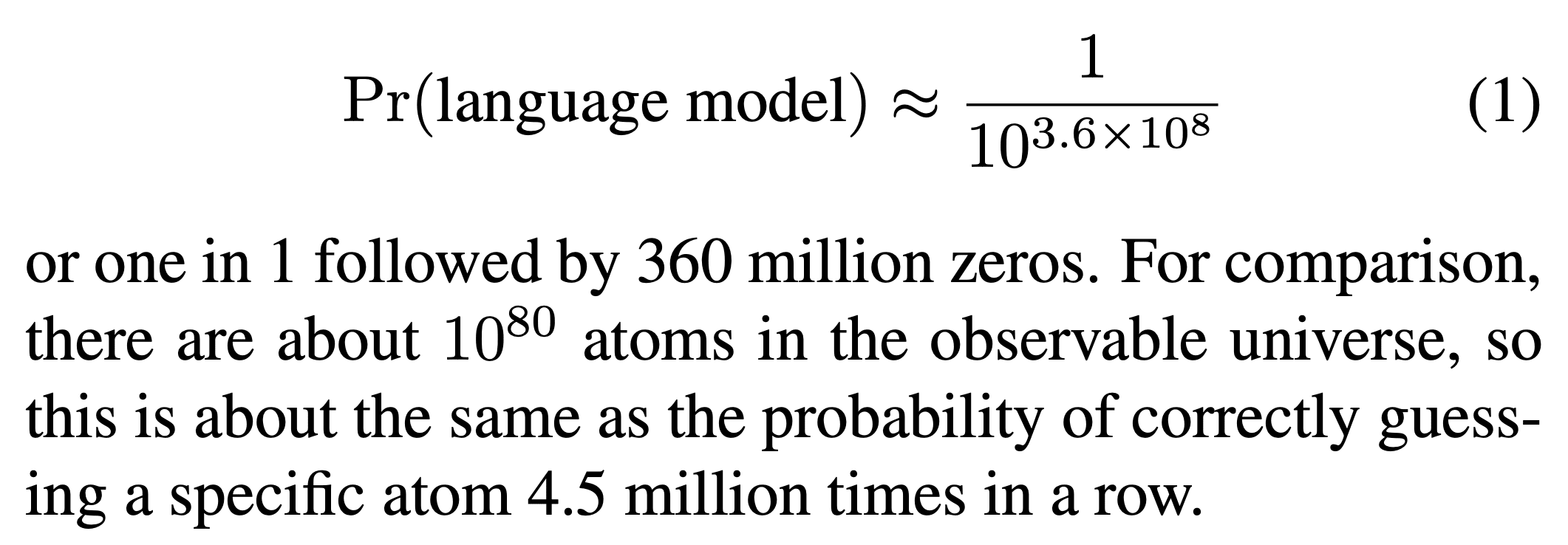

Consider a trained, fully functional language model. What are the chances you'd get that same model -- or something functionally indistinguishable -- by randomly guessing the weights?

We crunched the numbers and here's the answer:

We've developed a method for estimating the probability of sampling a neural network in a behaviorally-defined region from a Gaussian or uniform prior.

You can think of this as a measure of complexity: less probable means more complex.
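Here is one way to write this down. The notation is ours and may not match the paper's. Let $p$ be the Gaussian or uniform prior over weights $\theta$, and let $\mathcal{R}$ be the behaviorally-defined region around the trained network. Then

$$
P(\mathcal{R}) = \Pr_{\theta \sim p}\left[\theta \in \mathcal{R}\right] = \int_{\mathcal{R}} p(\theta)\,d\theta,
\qquad
C(\mathcal{R}) = -\log P(\mathcal{R}).
$$

Under this convention, halving the probability adds $\log 2$ nats of complexity.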

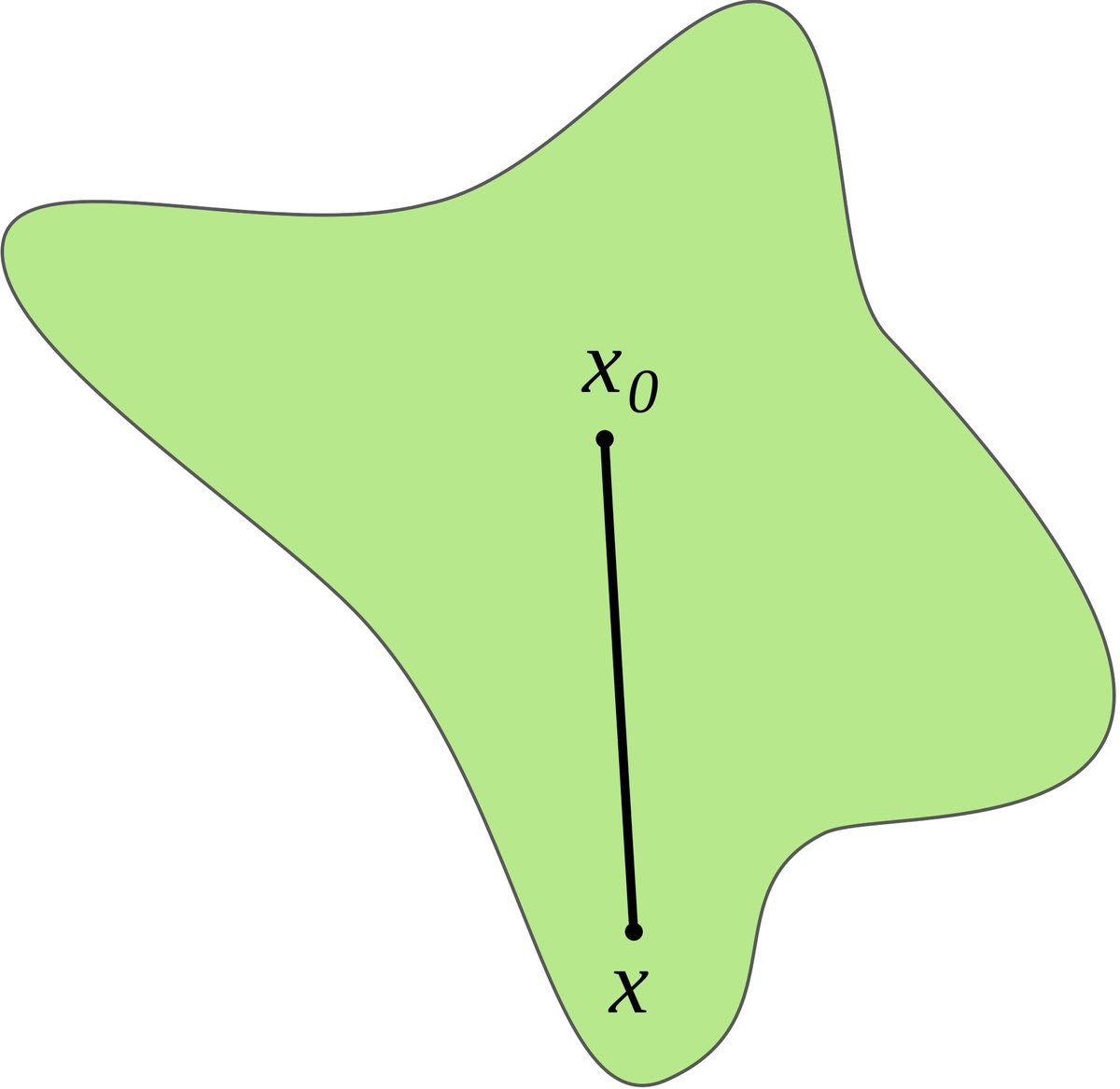

Our method works by exploring random directions in weight space, starting from an "anchor" network that defines the behavior.

The distance from the anchor to the edge of the region, along the random direction, gives us an estimate of how big (or how probable) the region is as a whole.
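Below is a minimal sketch of the idea. The assumptions are ours, not necessarily the paper's:

- The region is star-shaped around the anchor, so each ray from the anchor crosses its edge exactly once.
- Membership is decided by a black-box test `in_region`. For a real network this might be something like "outputs stay within a tolerance of the anchor's outputs", which is a hypothetical criterion here.
- We measure plain Lebesgue volume, i.e. the uniform-prior case.

For a star-shaped region in $n$ dimensions, the volume equals $V_n \cdot \mathbb{E}_u[r(u)^n]$. Here $u$ is a uniformly random unit direction, $r(u)$ is the distance from the anchor to the edge along $u$, and $V_n$ is the volume of the unit ball. `boundary_radius` and `log_volume` are illustrative names, not the released code's API.

```python
import math

import numpy as np


def boundary_radius(in_region, anchor, direction, r_max=100.0, iters=40):
    """Bisection for the distance from `anchor` to the region's edge along `direction`.

    Assumes the region is star-shaped around the anchor and that
    anchor + r_max * direction lies outside it.
    """
    lo, hi = 0.0, r_max
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if in_region(anchor + mid * direction):
            lo = mid  # still inside: the edge is farther out
        else:
            hi = mid  # outside: the edge is closer in
    return 0.5 * (lo + hi)


def log_volume(in_region, anchor, n_dirs=2000, seed=0):
    """Estimate the log volume of a star-shaped region as log(V_n * E_u[r(u)^n])."""
    rng = np.random.default_rng(seed)
    n = anchor.size
    # Uniform directions on the unit sphere: normalize isotropic Gaussian samples.
    dirs = rng.standard_normal((n_dirs, n))
    dirs /= np.linalg.norm(dirs, axis=1, keepdims=True)
    radii = np.array([boundary_radius(in_region, anchor, u) for u in dirs])
    # Work in log space: r**n leaves floating-point range quickly as n grows.
    log_terms = n * np.log(radii)
    m = log_terms.max()
    log_mean = m + math.log(np.mean(np.exp(log_terms - m)))
    log_unit_ball = (n / 2) * math.log(math.pi) - math.lgamma(n / 2 + 1)
    return log_unit_ball + log_mean


# Toy check: an ellipsoid with semi-axes (1, 2, 3) around a hypothetical anchor.
center = np.array([1.0, -2.0, 0.5])
semi_axes = np.array([1.0, 2.0, 3.0])


def in_ellipsoid(theta):
    return np.sum(((theta - center) / semi_axes) ** 2) <= 1.0


est = math.exp(log_volume(in_ellipsoid, center, n_dirs=5000))
exact = 4 / 3 * math.pi * np.prod(semi_axes)
print(f"estimated volume {est:.2f}, exact {exact:.2f}")
```

For a uniform prior over a box of volume $B$ that contains the region, the probability is this volume divided by $B$. A Gaussian prior would instead require integrating the prior density along each ray, which this sketch leaves out.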

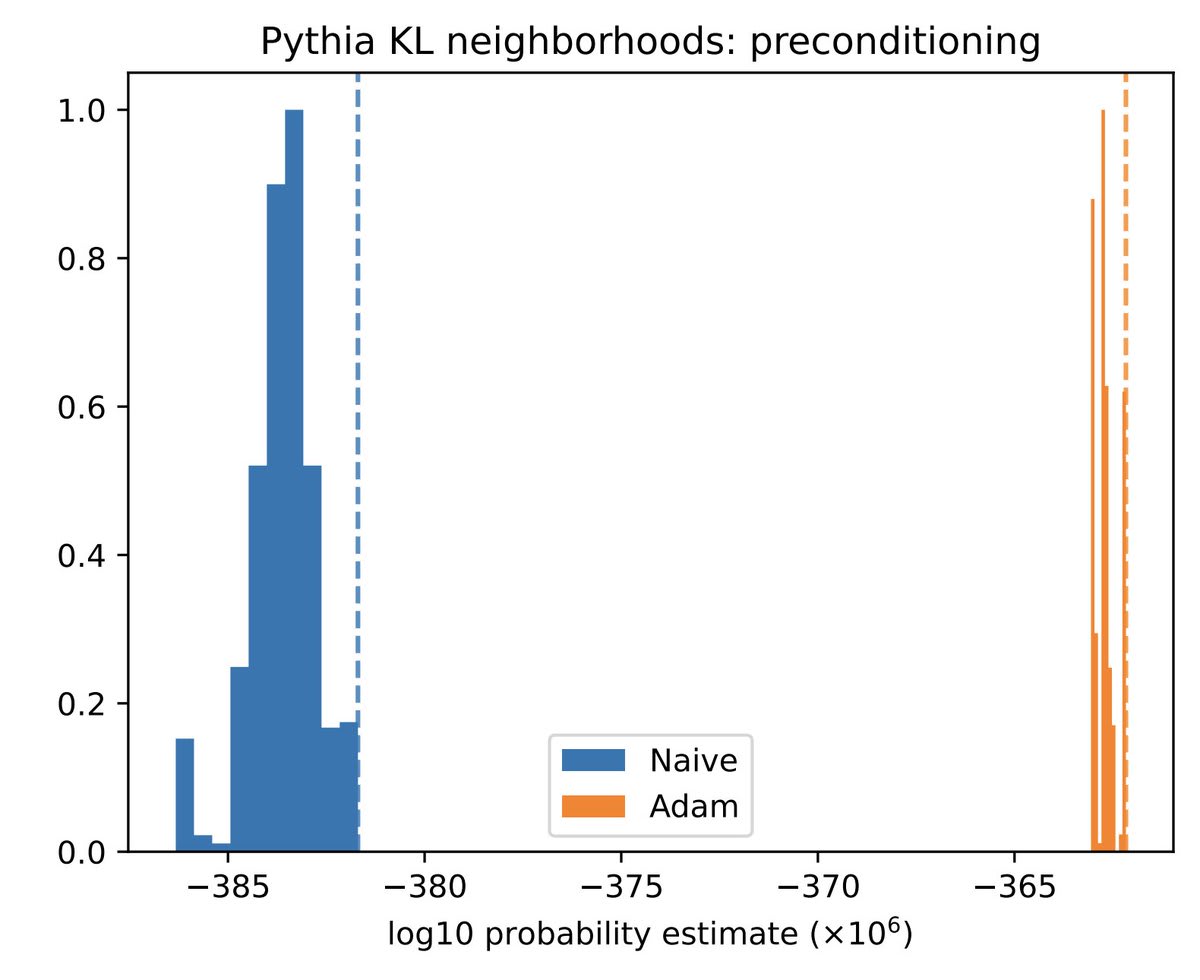

But the total volume can be strongly influenced by a small number of outlier directions, which are hard to sample in high dimensions. Think of a big, flat pancake.
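To see why outliers matter, here is a toy "pancake" of our own. It is an ellipsoid in 100 dimensions that is 100 units wide along two axes and 1 unit thick along the other 98. For an ellipsoid centered on the anchor, $r(u)$ has a closed form, and $\mathbb{E}_u[r(u)^n]$ equals the product of the semi-axes. That lets us check the naive random-direction estimate against the exact answer.

```python
import math

import numpy as np


def log_mean_exp(x):
    """Numerically stable log(mean(exp(x)))."""
    m = x.max()
    return m + math.log(np.mean(np.exp(x - m)))


n = 100
# Toy "pancake": semi-axis 100 along 2 directions, 1 along the remaining 98.
axes = np.ones(n)
axes[:2] = 100.0

# Uniformly random unit directions from the anchor (the ellipsoid's center).
rng = np.random.default_rng(0)
dirs = rng.standard_normal((10_000, n))
dirs /= np.linalg.norm(dirs, axis=1, keepdims=True)

# Closed-form distance to the edge: r(u) = 1 / sqrt(sum(u_i^2 / a_i^2)).
log_r = -0.5 * np.log(np.sum((dirs / axes) ** 2, axis=1))

naive = log_mean_exp(n * log_r)  # log of the plain Monte Carlo average of r(u)^n
exact = np.sum(np.log(axes))     # log E_u[r(u)^n] = log(product of semi-axes)
print(f"naive log E[r^n] = {naive:.2f}, exact = {exact:.2f}")
```

With 10,000 uniform directions, the naive estimate typically comes out far too small. The directions lying nearly within the wide plane, where $r(u)^n$ is enormous, make up a vanishingly small fraction of the sphere, so uniform sampling almost never sees them.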

Importance sampling using gradient info helps address this issue by making us more likely to sample outliers.
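Below is a generic importance-sampling sketch on the same toy pancake. It is not the paper's exact scheme. Directions are drawn from a non-uniform proposal, $u = z/\lVert z\rVert$ with $z \sim \mathcal{N}(0, \mathrm{diag}(\sigma^2))$, which is an angular central Gaussian. Each $r(u)^n$ is then reweighted by the inverse of that proposal's density relative to the uniform distribution on the sphere. In the post, gradient information decides which directions to favor. Here we simply assume a hypothetical per-axis scale `sigma` that already points at the wide axes; computing it from gradients is not shown.

```python
import math

import numpy as np


def log_mean_exp(x):
    """Numerically stable log(mean(exp(x)))."""
    m = x.max()
    return m + math.log(np.mean(np.exp(x - m)))


def is_log_mean_rn(log_radius_fn, sigma, n_dirs=10_000, seed=0):
    """Importance-sampled estimate of log E_u[r(u)^n], u uniform on the unit sphere.

    Proposal: u = z / |z| with z ~ N(0, diag(sigma**2)) (angular central Gaussian).
    Its density relative to the uniform distribution on the sphere is
        q(u) = prod(sigma)**-1 * (sum(u**2 / sigma**2)) ** (-n / 2),
    so each sample's r(u)^n is reweighted by 1 / q(u).
    """
    rng = np.random.default_rng(seed)
    n = sigma.size
    z = rng.standard_normal((n_dirs, n)) * sigma
    u = z / np.linalg.norm(z, axis=1, keepdims=True)
    log_q = -np.sum(np.log(sigma)) - 0.5 * n * np.log(np.sum((u / sigma) ** 2, axis=1))
    return log_mean_exp(n * log_radius_fn(u) - log_q)


# Same toy pancake: 2 wide axes (100), 98 thin axes (1).
n = 100
axes = np.ones(n)
axes[:2] = 100.0


def pancake_log_radius(u):
    """Closed-form log r(u) for the axis-aligned ellipsoid centered on the anchor."""
    return -0.5 * np.log(np.sum((u / axes) ** 2, axis=1))


# Hypothetical proposal scales: stretched along the wide axes, deliberately a bit
# wider than the true pancake so that the importance weights stay bounded.
sigma = np.ones(n)
sigma[:2] = 150.0

est = is_log_mean_rn(pancake_log_radius, sigma)
exact = np.sum(np.log(axes))
print(f"importance-sampled log E[r^n] = {est:.3f}, exact = {exact:.3f}")
```

Every proposal scale here is at least as large as the corresponding semi-axis. As a result, each weighted term is bounded above by the product of the scales, which keeps the variance under control. A proposal that is too narrow in some direction can instead produce heavy-tailed weights.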

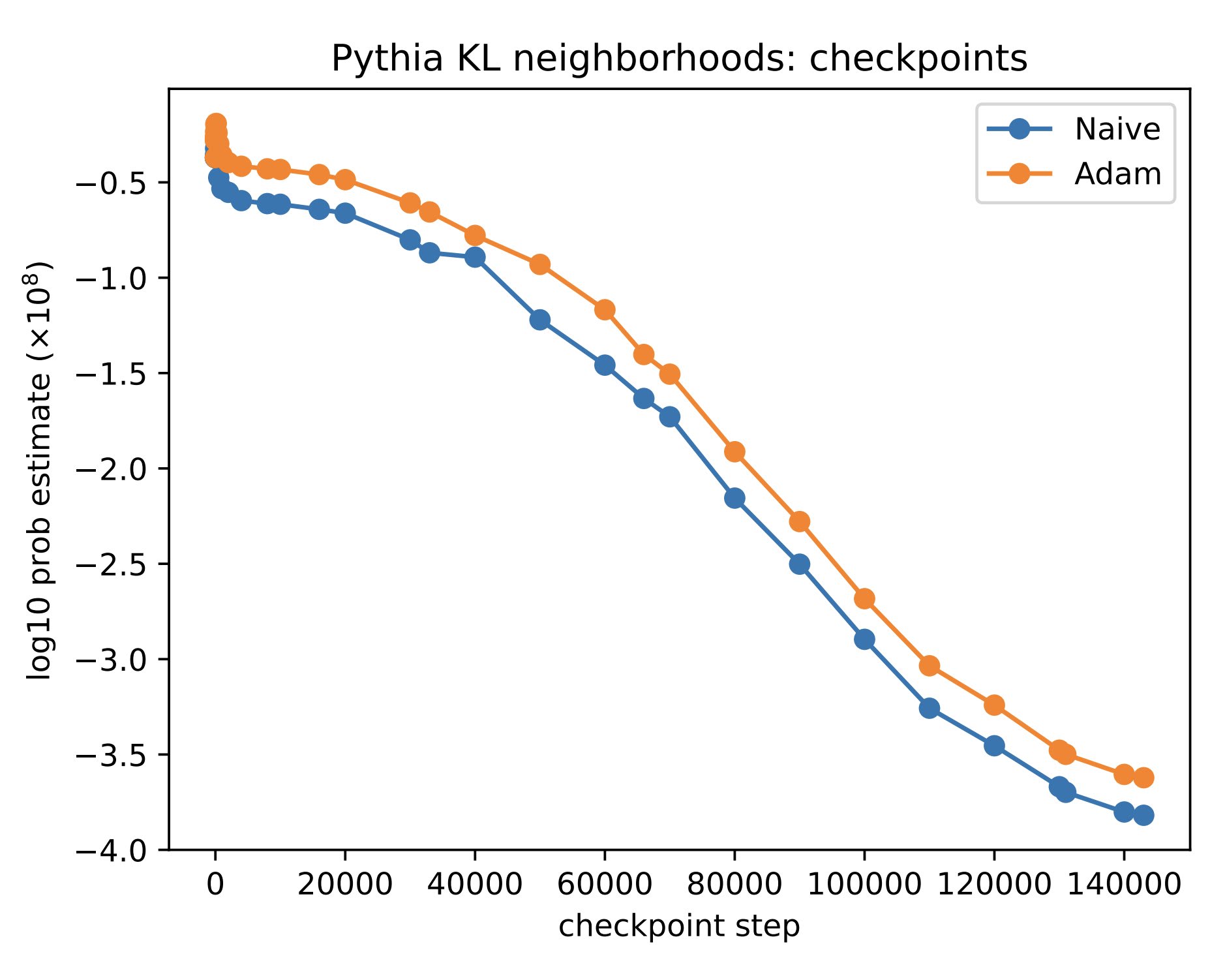

We find that the probability of sampling a network at random (or "local volume" for short) decreases exponentially as the network is trained and grows in complexity.

And networks that memorize their training data without generalizing have lower local volume (higher complexity) than generalizing ones.

We're interested in this line of work for two reasons:

First, it sheds light on how deep learning works. The "volume hypothesis" says DL is similar to randomly sampling a network from weight space that gets low training loss. (This is roughly equivalent to Bayesian inference over weight space.) But this can't be tested if we can't measure volume.

Second, we speculate that complexity measures like this can be useful for detecting undesired "extra reasoning" in deep nets. We want networks to be aligned with our values instinctively, without scheming about whether this would be consistent with some ulterior motive: https://arxiv.org/abs/2311.08379

Our code is available (and under active development) here.
