Paging Gwern or anyone else who can shed light on the current state of the AI market—I have several questions.

According to the LMSYS Chatbot Arena Leaderboard, at least 17 companies have developed AI models that outperform the original ChatGPT since its release. These include Anthropic, NexusFlow, Microsoft, Mistral, Alibaba, Hugging Face, Google, Reka AI, Cohere, Meta, 01 AI, AI21 Labs, Zhipu AI, Nvidia, DeepSeek, and xAI.

Since GPT-4's launch, 15 different companies have reportedly created AI models that outperform it, among them Reka AI, Meta, AI21 Labs, DeepSeek, Anthropic, Alibaba, Zhipu AI, Google, Cohere, Nvidia, 01 AI, NexusFlow, Mistral, and xAI.

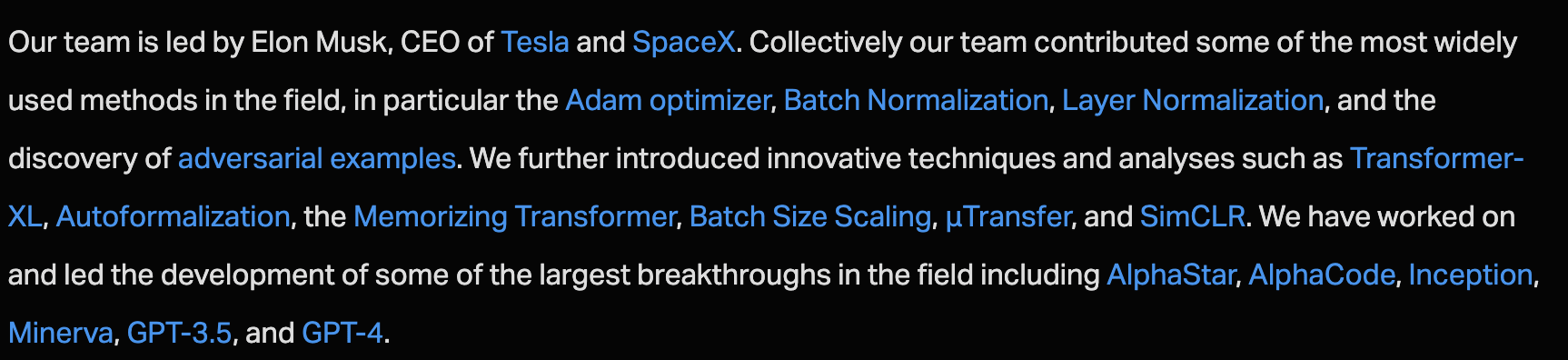

xAI (Elon Musk's AI company, closely linked to Twitter/X) seemingly had no prior track record of strong AI engineering and started with a small team and limited resources, yet it has somehow built the third-smartest AI in the world, apparently on par with the very best from OpenAI.

The top AI image generator, Flux AI, is considered superior to the offerings from OpenAI and Google, yet its maker has no Wikipedia page, barely any information available online, and seemingly almost no employees. The next-best in class, Midjourney and Stable Diffusion, also operate with surprisingly small teams and limited resources.

I have to admit, I find this all quite confusing.

I expected companies with significant experience and investment in AI to be miles ahead of the competition. I also assumed that any new competitors would be well-funded and dedicated to catching up with the established leaders.

Understanding these dynamics seems important because they bear on questions such as the merits of a potential pause in AI development or whether China could outcompete the USA in AI. Moreover, as someone with general market interests, I suspect the valuations of some of these companies may be quite off.

So here are my questions:

1. Are the historically leading AI organizations—OpenAI, Anthropic, and Google—holding back their best models, making it appear as though there’s more parity in the market than there actually is?

2. Is this apparent parity due to a mass exodus of employees from OpenAI, Anthropic, and Google to other companies, resulting in the diffusion of "secret sauce" ideas across the industry?

3. Does this parity exist because other companies are simply piggybacking on Meta's open-source Llama models, which only Meta's massive compute resources made possible? In other words, can other companies now fine-tune these models to quickly create ones comparable to the best?

4. Is it plausible that once LLMs were validated and the core idea spread, building them became surprisingly simple, allowing any company to quickly reach the frontier?

5. Are AI image generators simply easy to develop but economically unrewarding, leading large companies to invest minimal resources in them?

6. Could it be that the legal risks of building AI are so significant that big companies hesitate to invest fully, making it appear as if smaller companies are outperforming them?

7. And finally, why is OpenAI so valuable if it's apparently so easy for other companies to build comparable tech? Conversely, why aren't these no-name companies building leading LLMs valued more highly?

Of course, the answer is likely a mix of the factors mentioned above, but it would be very helpful if someone could clearly explain the structures affecting the dynamics highlighted here.

I originally saw Epoch AI's estimate of Gemini Ultra's training compute as either 8e25 or 1e26 FLOPs, but either I'm misremembering or they have revised it, since they currently list 5e25 FLOPs (the background info for a Metaculus question says Epoch's estimate was 9e25 FLOPs in Feb 2024). In Jun 2024, SemiAnalysis posted a plot (at the very beginning of their post) with a dot for Gemini Ultra placed at 7e25 FLOPs; they also slightly overestimate Llama-3-405B, which hadn't been released yet, at 5e25 FLOPs.

The current notes for Epoch AI's estimate are linked from their model database CSV file:

Among other clues, the Colab notebook cites the Gemini 1.0 report on the use of TPUv4 pods of 4096 chips across multiple datacenters for Gemini Ultra, states that SemiAnalysis claims Gemini Ultra could have been trained on 7+7 pods (which is 57K TPUs), and cites an article from The Information (paywalled):

One TPUv4 offers 275e12 FLOP/s, so at 40% MFU this gives 1.6e25 FLOPs per month using SemiAnalysis's estimate of the number of pods, and 2.2e25 FLOPs per month using The Information's claim about the number of TPUs.
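The per-month figures follow from simple arithmetic: chips × peak FLOP/s × MFU × seconds per month. This is a minimal sketch assuming a 30-day month and a fixed 40% MFU. Since The Information's exact TPU count isn't quoted here, the code back-solves the chip count that 2.2e25 FLOPs/month would imply rather than asserting the article's figure. The function names are illustrative, not taken from Epoch's notebook.

```python
# Back-of-the-envelope monthly training compute from the hardware clues.
# ASSUMPTIONS (not from the Epoch notebook): 30-day month, MFU fixed at 40%.

SECONDS_PER_MONTH = 30 * 24 * 3600  # 2,592,000 s, assumed 30-day month
TPUV4_PEAK_FLOPS = 275e12           # peak FLOP/s per TPUv4 chip, as quoted
CHIPS_PER_POD = 4096                # TPUv4 pod size cited from the Gemini 1.0 report


def flops_per_month(n_chips: float, mfu: float = 0.40) -> float:
    """Useful training FLOPs delivered in one month by n_chips at the given MFU."""
    return n_chips * TPUV4_PEAK_FLOPS * mfu * SECONDS_PER_MONTH


def chips_for_monthly_flops(monthly_flops: float, mfu: float = 0.40) -> float:
    """Invert flops_per_month: the chip count a given monthly FLOP total implies."""
    return monthly_flops / (TPUV4_PEAK_FLOPS * mfu * SECONDS_PER_MONTH)


# SemiAnalysis: 7+7 pods of 4096 chips each.
semianalysis_chips = (7 + 7) * CHIPS_PER_POD
print(f"SemiAnalysis pods: {semianalysis_chips:,} chips, "
      f"{flops_per_month(semianalysis_chips):.1e} FLOPs/month")

# Back-solved chip count behind 2.2e25 FLOPs/month (hypothetical derivation).
print(f"Chips implied by 2.2e25 FLOPs/month: {chips_for_monthly_flops(2.2e25):,.0f}")
```

Under these assumptions the 7+7 pods (57,344 chips) come to about 1.6e25 FLOPs/month, and 2.2e25 FLOPs/month corresponds to roughly 77K TPUs. That chip count is a back-calculation, not a number quoted from the article. Using a 30.44-day average month instead would shift both results by about 1.5%.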

From hardware considerations, they arrive at 6e25 FLOPs as the point estimate. The text preceding the code lists the training duration range as 3-6 months, but the code actually uses 1-6 months, so one of the two is an error. If we put 3-6 months in the code, their point estimate becomes 1e26 FLOPs. They also assume an MFU of 40-60%, which seems too high to me.
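Because training compute is linear in duration (and in MFU), the effect of the 1-6 vs 3-6 month discrepancy can be checked without rerunning the notebook. This is a hypothetical sketch: it assumes each range is summarized by its geometric mean (the median of a log-uniform or log-normal distribution over the range), which has not been confirmed as what the Epoch code does, and shows a plain arithmetic midpoint for contrast. `rescale` and the centre functions are illustrative names.

```python
import math

# Hypothetical sketch: how the Epoch notebook summarizes its ranges is an
# ASSUMPTION here (geometric mean = median of a log-uniform/log-normal range).

EPOCH_POINT_ESTIMATE = 6e25  # FLOPs, computed with the 1-6 month range in the code


def geometric_centre(lo: float, hi: float) -> float:
    """Central value of a range treated as log-uniform (assumed)."""
    return math.sqrt(lo * hi)


def arithmetic_centre(lo: float, hi: float) -> float:
    """Plain midpoint, shown for contrast."""
    return (lo + hi) / 2


def rescale(point_estimate, old_range, new_range, centre):
    """Training FLOPs are linear in each multiplicative input (duration, MFU),
    so with everything else fixed the estimate scales by the ratio of centres."""
    return point_estimate * centre(*new_range) / centre(*old_range)


geo = rescale(EPOCH_POINT_ESTIMATE, (1, 6), (3, 6), geometric_centre)
arith = rescale(EPOCH_POINT_ESTIMATE, (1, 6), (3, 6), arithmetic_centre)
print(f"3-6 months, geometric centre:  {geo:.1e} FLOPs")
print(f"3-6 months, arithmetic centre: {arith:.1e} FLOPs")

# Same linear scaling for MFU: pin it at 40% instead of the assumed 40-60% range.
pinned_mfu = rescale(geo, (0.40, 0.60), (0.40, 0.40), geometric_centre)
print(f"3-6 months, MFU fixed at 40%:  {pinned_mfu:.1e} FLOPs")
```

Under the geometric-mean assumption the rescaled estimate is about 1.0e26 FLOPs, in line with the figure quoted above, whereas an arithmetic midpoint would give only about 7.7e25. The last line applies the same scaling to MFU: pinning it at 40% lowers the estimate by roughly 18%, to about 8.5e25.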

If their claim of 7+7 pods from SemiAnalysis is combined with the 7e25 FLOPs estimate from the SemiAnalysis plot, this suggests a training time of about 4 months. At that duration, but with The Information's TPU count, we get 9e25 FLOPs. So after considering Epoch's clues, I'm settling on 8e25 FLOPs as my own point estimate.
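The cross-check in the last paragraph can be written out the same way. This sketch uses only the figures quoted in the text, treats months as equal length, and rounds the implied duration to whole months. The geometric mean at the end is just one hypothetical way to split the difference between the two hardware-based totals, not necessarily how the final point estimate was chosen.

```python
import math

# Figures quoted in the text (FLOPs); per-month values assume 40% MFU.
SEMIANALYSIS_PLOT = 7e25            # Gemini Ultra dot on the SemiAnalysis plot
PER_MONTH_7_PLUS_7_PODS = 1.6e25    # SemiAnalysis pod count
PER_MONTH_THE_INFORMATION = 2.2e25  # The Information's TPU count

# Duration implied if the plot's total was produced by 7+7 pods.
implied_months = SEMIANALYSIS_PLOT / PER_MONTH_7_PLUS_7_PODS
months = round(implied_months)  # rounded to whole months

# Same duration, but with The Information's chip count.
the_information_total = months * PER_MONTH_THE_INFORMATION

# One hypothetical way to combine the two totals (not necessarily the author's).
combined = math.sqrt(SEMIANALYSIS_PLOT * the_information_total)

print(f"implied duration: {implied_months:.1f} months -> {months} months")
print(f"The Information chip count over {months} months: {the_information_total:.1e} FLOPs")
print(f"geometric mean of the two totals: {combined:.1e} FLOPs")
```

This gives about 4.4 months (rounded to 4), 8.8e25 FLOPs for The Information's chip count over that period, and a geometric mean of about 7.8e25 FLOPs for the two totals.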