Paul Christiano named as US AI Safety Institute Head of AI Safety

> U.S. Secretary of Commerce Gina Raimondo announced today additional members of the executive leadership team of the U.S. AI Safety Institute (AISI), which is housed at the National Institute of Standards and Technology (NIST). Raimondo named Paul Christiano as Head of AI Safety, Adam Russell as Chief Vision Officer, Mara Campbell as Acting Chief Operating Officer and Chief of Staff, Rob Reich as Senior Advisor, and Mark Latonero as Head of International Engagement. They will join AISI Director Elizabeth Kelly and Chief Technology Officer Elham Tabassi, who were announced in February. The AISI was established within NIST at the direction of President Biden, including to support the responsibilities assigned to the Department of Commerce under the President’s landmark Executive Order.
>
> Paul Christiano, Head of AI Safety, will design and conduct tests of frontier AI models, focusing on model evaluations for capabilities of national security concern. Christiano will also contribute guidance on conducting these evaluations, as well as on the implementation of risk mitigations to enhance frontier model safety and security. Christiano founded the Alignment Research Center, a non-profit research organization that seeks to align future machine learning systems with human interests by furthering theoretical research. He also launched a leading initiative to conduct third-party evaluations of frontier models, now housed at Model Evaluation and Threat Research (METR). He previously ran the language model alignment team at OpenAI, where he pioneered work on reinforcement learning from human feedback (RLHF), a foundational technical AI safety technique. He holds a PhD in computer science from the University of California, Berkeley, and a B.S. in mathematics from the Massachusetts Institute of Technology.

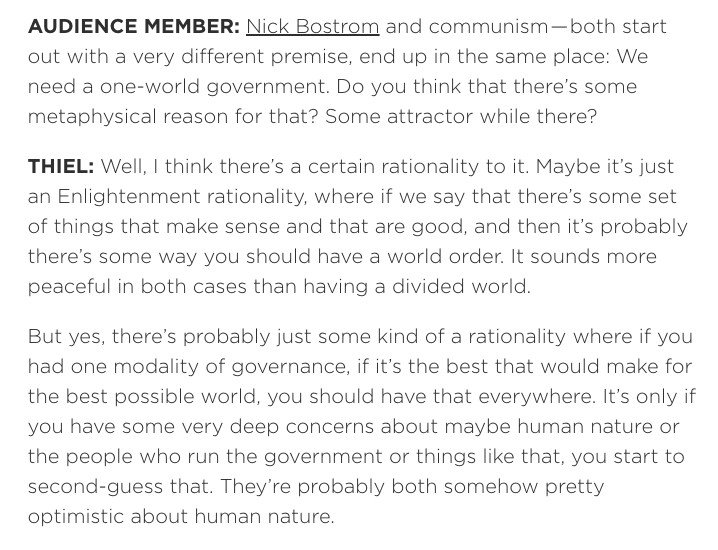

Though we have opposition to Trump in common, and are probably closer culturally to the Democratic side than the Republican side, rationalists don't have that much overlap with Democratic priorities. In fact, Dean Ball has said "AI doomers are actually more at home on the political right than they are on the political left in America".

There have been a couple of interesting third-party efforts. A couple of years ago Balaji Srinivasan was talking about a tech-aligned "Gray Tribe", which doesn't seem to have gone anywhere. Andrew Yang launched the Forward Party, focused on reducing polarization, ranked-choice voting, open primaries, and a centrist approach. It seems pretty appealing but is still very small compared to the mainstream parties.

I'm not sure what the answer is.