All of Vasco Grilo's Comments + Replies

Thanks for the post! I think genetic engineering for increasing IQ can indeed be super valuable, and is quite neglected in society. However, I would be very surprised if it were among the areas where additional investment generates the most welfare per $:

- Open Philanthropy (OP) estimated that funding R&D (research and development) is 45 % as cost-effective as giving cash to people with 500 $/year.

- People in extreme poverty have around 500 $/year, and unconditional cash transfers to them are about 1/3 as cost-effective as GiveWell's (GW's) top charities. GW

Thanks for the post, Dan and Elliot. I have not read the comments, but I do not think preferential gaps make sense in principle. If one was exactly indifferent between 2 outcomes, I believe any improvement/worsening of one of them must make one prefer one of the outcomes over the other. At the same time, if one is roughly indifferent between 2 outcomes, a sufficiently small improvement/worsening of one of them will still lead to one being practically indifferent between them. For example, although I think i) 1 $ plus a chance of 10^-100 of 1 $ is clearly better than ii) 1 $, I am practically indifferent between i) and ii), because the value of 10^-100 $ is negligible.

Thanks, JBlack. As I say in the post, "We can agree on another [later] resolution date such that the bet is good for you". Metaculus' changing the resolution criteria does not obviously benefit one side or the other. In any case, I am open to updating the terms of the bet such that, if the resolution criteria do change, the bet is cancelled unless both sides agree on maintaining it given the new criteria.

Thanks, Dagon. Below is how superintelligent AI is defined in the question from Metaculus related to my bet proposal. I think it very much points towards full automation.

..."Superintelligent Artificial Intelligence" (SAI) is defined for the purposes of this question as an AI which can perform any task humans can perform in 2021, as well or superior to the best humans in their domain. The SAI may be able to perform these tasks themselves, or be capable of designing sub-agents with these capabilities (for instance the SAI may design robots capable of beat

Fair! I have now added a 3rd bullet, and clarified the sentence before the bullets:

...I think the bet would not change the impact of your donations, which is what matters if you also plan to donate the profits, if:

- Your median date of superintelligent AI as defined by Metaculus was the end of 2028. If you believe the median date is later, the bet will be worse for you.

- The probability of me paying you if you win was the same as the probability of you paying me if I win. The former will be lower than the latter if you believe the transfer is less likely given su

Thanks, Daniel. My bullet points are supposed to be conditions for the bet to be neutral "in terms of purchasing power, which is what matters if you also plan to donate the profits", not personal welfare. I agree a given amount of purchasing power will buy the winner less personal welfare given superintelligent AI, because then they will tend to have a higher real consumption in the future. Or are you saying that a given amount of purchasing power given superintelligent AI will buy not only less personal welfare, but also less impartial welfare via donati...

Thanks, Richard! I have updated the bet to account for that.

...If, until the end of 2028, Metaculus' question about superintelligent AI:

- Resolves non-ambiguously, I transfer to you 10 k January-2025-$ in the month after that in which the question resolved.

- Does not resolve, you transfer to me 10 k January-2025-$ in January 2029. As before, I plan to donate my profits to animal welfare organisations.

The nominal amount of the transfer in $ is 10 k times the ratio between the consumer price index for all urban consumers and items in the United States, as repo

Great discussion! I am open to the following bet.

...If, until the end of 2028, Metaculus' question about superintelligent AI:

- Resolves non-ambiguously, I transfer to you 10 k January-2025-$ in the month after that in which the question resolved.

- Does not resolve, you transfer to me 10 k January-2025-$ in January 2029. As before, I plan to donate my profits to animal welfare organisations.

The nominal amount of the transfer in $ is 10 k times the ratio between the consumer price index for all urban consumers and items in the United States, as reported b

Sorry for the lack of clarity! "today-$" refers to January 2025. For example, assuming prices increased by 10 % from this month until December 2028, the winner would receive 11 k$ (= 10*10^3*(1 + 0.1)).
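The inflation adjustment in this example can be sketched in a few lines of Python. This is only an illustration of the arithmetic: the function name and the index values (100 and 110) are hypothetical, chosen so that their ratio matches the assumed 10 % price increase.

```python
def nominal_transfer(real_amount: float, cpi_at_payment: float, cpi_at_base: float) -> float:
    """Nominal payment that preserves the purchasing power of `real_amount` base-period $."""
    return real_amount * cpi_at_payment / cpi_at_base


# Hypothetical index values: only their ratio (1.10, i.e. 10 % inflation) matters.
payment = nominal_transfer(10e3, cpi_at_payment=110.0, cpi_at_base=100.0)
print(f"{payment:.0f} $")
```

With a real consumer price index series, the two index values would be the readings for the payment month and for January 2025.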

You are welcome!

I also guess the stock market will grow faster than suggested by historical data, so I would only want to have X roughly as far as in 2028.

Here is a bet which would be worth it for me even with more distant resolution dates. If, until the end of 2028, Metaculus' question about ASI:

- Resolves with a given date, I transfer to you 10 k 2025-January-$.

- Does not resolve, you transfer to me 10 k 2025-January-$.

- Resolves ambiguously, nothing happens.

This bet involves fixed prices, so I think it would be neutral for you in terms of purchasing power rig...

You could instead pay me $10k now, with the understanding that I'll pay you $20k later in 2028 unless AGI has been achieved in which case I keep the money... but then why would I do that when I could just take out a loan for $10k at low interest rate?

Have you or other people worried about AI taken such loans (e.g. to increase donations to AI safety projects)? If not, why?

If you have an idea for a bet that's net-positive for me I'm all ears.

Are you much higher than Metaculus' community on "Will ARC find that GPT-5 has autonomous replication capabilities?"?

I gain money in expectation with loans, because I don't expect to have to pay them back.

I see. I was implicitly assuming a nearterm loan or one with an interest rate linked to economic growth, but you might be able to get a longterm loan with a fixed interest rate.

What specific bet are you offering?

I transfer 10 k today-€ to you now, and you transfer 20 k today-€ to me if there is no ASI as defined by Metaculus on date X, which has to be sufficiently far away for the bet to be better than your best loan. X could be 12.0 years (= LN(0.9*20*10^3/(10*10^3))/L...

You could instead pay me $10k now, with the understanding that I'll pay you $20k later in 2028 unless AGI has been achieved in which case I keep the money... but then why would I do that when I could just take out a loan for $10k at low interest rate?

We could set up the bet such that it would involve you losing/gaining no money in expectation under your views, whereas you would lose money in expectation with a loan? Also, note the bet I proposed above was about ASI as defined by Metaculus, not AGI.

Thanks, Daniel. That makes sense.

But it wasn't rational for me to do that, I was just doing it to prove my seriousness.

My offer was also in this spirit of you proving your seriousness. Feel free to suggest bets which would be rational for you to take. Do you think there is a significant risk of a large AI catastrophe in the next few years? For example, what do you think is the probability of human population decreasing from (mid) 2026 to (mid) 2027?

You are basically asking me to give up money in expectation to prove that I really believe what I'm saying, when I've already done literally this multiple times. (And besides, hopefully it's pretty clear that I am serious from my other actions.) So, I'm leaning against doing this, sorry. If you have an idea for a bet that's net-positive for me I'm all ears.

Yes I do think there's a significant risk of large AI catastrophe in the next few years. To answer your specific question, maybe something like 5%? idk.

Thanks, Daniel!

To be clear, my view is that we'll achieve AGI around 2027, ASI within a year of that, and then some sort of crazy robot-powered self-replicating economy within, say, three years of that

Is your median date of ASI as defined by Metaculus around 2028 July 1 (it would be if your time until AGI was strongly correlated with your time from AGI to ASI)? If so, I am open to a bet where:

- I give you 10 k€ if ASI happens until the end of 2028 (slightly after your median, such that you have a positive expected monetary gain).

- Otherwise, you give me 10 k€,

Thanks, Ryan.

Daniel almost surely doesn't think growth will be constant. (Presumably he has a model similar to the one here.)

That makes sense. Daniel, my terms are flexible. Just let me know what your median fraction for 2027 is, and we can go from there.

I assume he also thinks that by the time energy production is >10x higher, the world has generally been radically transformed by AI.

Right. I think the bet is roughly neutral with respect to monetary gains under Daniel's view, but Daniel may want to go ahead despite that to show that he really endorses h...

Thanks for the update, Daniel! How about the predictions about energy consumption?

| In what year will the energy consumption of humanity or its descendants be 1000x greater than now? |

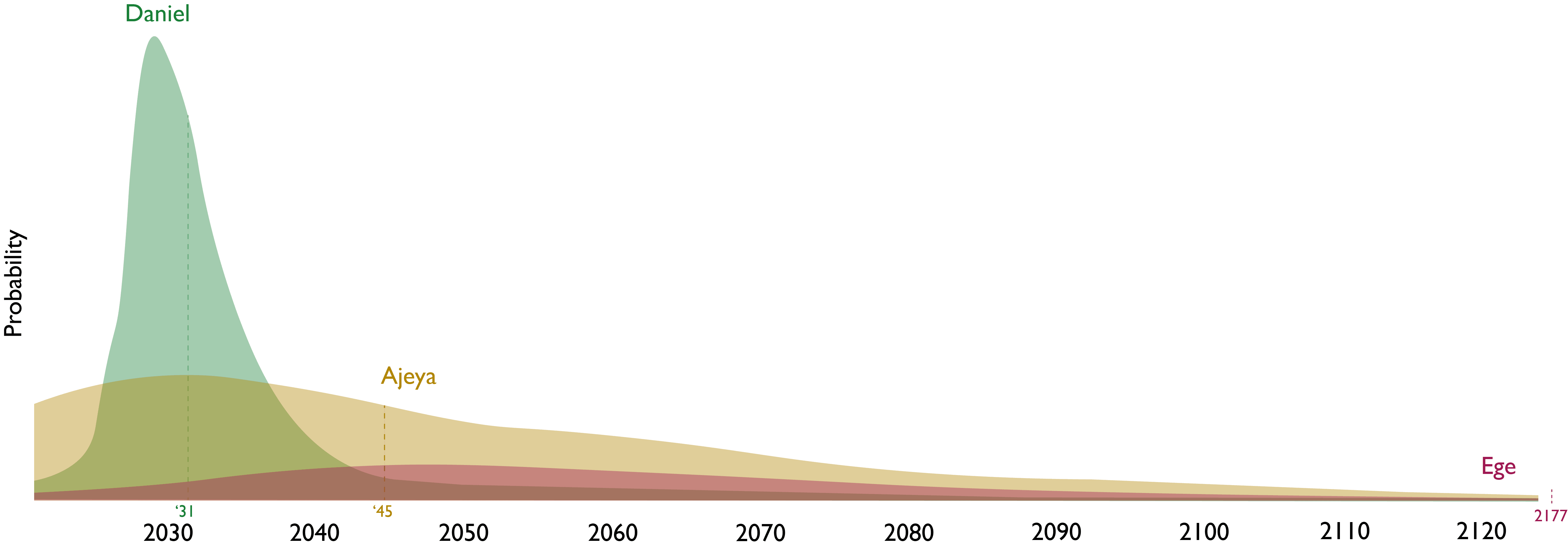

Your median date for humanity's energy consumption being 1 k times as large as now is 2031, whereas Ege's is 2177. What is your median primary energy consumption in 2027 as reported by Our World in Data as a fraction of that in 2023? Assuming constant growth from 2023 until 2031, your median fraction would be 31.6 (= (10^3)^((2027 - 2023)/(2031 - 2023))). I would be happy to set ...
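The constant-growth interpolation above can be reproduced with a short Python sketch. The function name is hypothetical, and constant exponential growth between 2023 and 2031 is the simplifying assumption stated in the comment, not a claim about anyone's actual model.

```python
def implied_fraction(total_multiple: float, start_year: int, target_year: int, end_year: int) -> float:
    """Level at `target_year` relative to `start_year`, assuming constant exponential
    growth that multiplies the level by `total_multiple` between `start_year` and `end_year`."""
    elapsed_share = (target_year - start_year) / (end_year - start_year)
    return total_multiple ** elapsed_share


# 1000x energy consumption by 2031, evaluated at 2027 (halfway in log space).
fraction_2027 = implied_fraction(1e3, 2023, 2027, 2031)
print(f"{fraction_2027:.1f}")
```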

Hi there,

Assuming 10^6 bit erasures per FLOP (as you did; which source are you using?), one only needs 8.06*10^13 kWh (= 2.9*10^(-21)*10^(35+6)/(3.6*10^6)), i.e. 2.83 (= 8.06*10^13/(2.85*10^13)) times global electricity generation in 2022, or 18.7 (= 8.06*10^13/(4.30*10^12)) times the one generated in the United States.
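The figures above can be checked with the following Python sketch. The constant names are mine, and reading 2.9*10^(-21) as joules per bit erasure and 10^35 as the number of FLOP is my interpretation of the formula, so treat both as assumptions.

```python
# Inputs copied from the comment; names and interpretations are assumptions.
JOULES_PER_BIT_ERASURE = 2.9e-21       # assumed to be energy per bit erasure, in J
FLOP = 1e35                            # assumed total compute
BIT_ERASURES_PER_FLOP = 1e6
JOULES_PER_KWH = 3.6e6
GLOBAL_ELECTRICITY_2022_KWH = 2.85e13
US_ELECTRICITY_KWH = 4.30e12

energy_kwh = JOULES_PER_BIT_ERASURE * FLOP * BIT_ERASURES_PER_FLOP / JOULES_PER_KWH
ratio_global = energy_kwh / GLOBAL_ELECTRICITY_2022_KWH
ratio_us = energy_kwh / US_ELECTRICITY_KWH

print(f"{energy_kwh:.3g} kWh, {ratio_global:.2f}x global, {ratio_us:.1f}x US")
```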

Thanks!

Nice post, Luke!

with this handy reference table:

There is no table after this.

He also offers a chart showing how a pure Bayesian estimator compares to other estimators:

There is no chart after this.

Thanks for this clarifying comment, Daniel!

Great post!

The R-square measure of correlation between two sets of data is the same as the cosine of the angle between them when presented as vectors in N-dimensional space

Not R-square, just R:
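As a quick numerical check, the Python sketch below computes the Pearson correlation R and the cosine of the angle between the mean-centred vectors, and shows they coincide while R-square does not. The data are made up for illustration, and the identity holds for vectors with their means subtracted.

```python
import math


def pearson_r(x, y):
    """Pearson correlation as covariance divided by the product of standard deviations."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / n
    std_x = math.sqrt(sum((a - mean_x) ** 2 for a in x) / n)
    std_y = math.sqrt(sum((b - mean_y) ** 2 for b in y) / n)
    return cov / (std_x * std_y)


def cosine_of_centred(x, y):
    """Cosine of the angle between x and y after subtracting each vector's mean."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cx = [a - mean_x for a in x]
    cy = [b - mean_y for b in y]
    dot = sum(a * b for a, b in zip(cx, cy))
    return dot / (math.hypot(*cx) * math.hypot(*cy))


# Hypothetical data, for illustration only.
x = [1, 2, 3, 4, 5]
y = [2, 1, 4, 3, 5]
r = pearson_r(x, y)
cos_angle = cosine_of_centred(x, y)
print(r, cos_angle, r ** 2)
```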

Nice post! I would be curious to know whether significant thinking has been done on this topic since your post.

Thanks for writing this!

Have you considered crossposting to the EA Forum (although the post was mentioned here)?

With a loguniform distribution, the mean moral weight is stable and roughly equal to 2.

Thanks for the post!

I was trying to use the lower and upper estimates of 5*10^-5 and 10, guessed for the moral weight of chickens relative to humans, as the 10th and 90th percentiles of a lognormal distribution. This resulted in a mean moral weight of 1000 to 2000 (the result is not stable), which seems too high, and a median of 0.02.

1- Do you have any suggestions for a more reasonable distribution?

2- Do you have any tips for stabilising the results for the mean?

I think I understand the problems of taking expectations over moral weights (E(X) is not equal to 1/E(1/X)), but believe that it might still be possible to determine a reasonable distribution for the moral weight.
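One way to see why a sampled mean is unstable here is to compute the lognormal's parameters in closed form. The Python sketch below is only an illustration (the function name is mine, and it assumes the 5*10^-5 and 10 estimates are exactly the 10th and 90th percentiles): it recovers mu and sigma from the two percentiles, then evaluates the median exp(mu) and the mean exp(mu + sigma^2/2) analytically, with no sampling involved.

```python
import math
from statistics import NormalDist


def lognormal_from_percentiles(p10: float, p90: float):
    """Return (mu, sigma) of the underlying normal, given the 10th and 90th
    percentiles of a lognormal distribution."""
    z90 = NormalDist().inv_cdf(0.9)  # standard normal 90th percentile; the 10th is -z90
    mu = (math.log(p10) + math.log(p90)) / 2
    sigma = (math.log(p90) - math.log(p10)) / (2 * z90)
    return mu, sigma


mu, sigma = lognormal_from_percentiles(5e-5, 10)
median = math.exp(mu)                 # closed-form median
mean = math.exp(mu + sigma ** 2 / 2)  # closed-form mean
print(f"sigma = {sigma:.2f}, median = {median:.3g}, mean = {mean:.3g}")
```

Because sigma comes out large (well above 4), the mean is dominated by rare draws far in the right tail, which is why Monte Carlo estimates of it jump around between runs.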

"These two equations are algebraically inconsistent". Yes, combining them results into "0 < 0", which is false.

Thanks for the great summary, Kave!

Nitpick. SWP received 1.82 M 2023-$ (= 1.47*10^6*1.24) during the year ended on 31 March 2024, which is 1.72*10^-8 (= 1.82*10^6/(106*10^12)) of the gross world product (GWP) in 2023, and OP estimated R&D has a benefit-to-cost ratio of 45. So I estimate SWP can only be up to 1.29 M (= 1/(1.72...