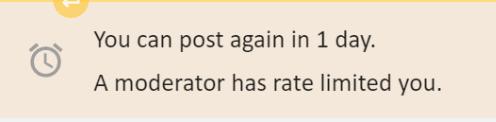

Summary: the moderators appear to be soft banning users with 'rate-limits' without feedback. A careful review of each banned user reveals it's common to be banned despite earnestly attempting to contribute to the site. Some of the most intelligent banned users have mainstream instead of EA views on AI.

Note how the punishment lengths are all the same, I think it was a mass ban-wave of 3 week bans:

Gears to ascension was here but is no longer, guess she convinced them it was a mistake.

Have I made any like really dumb or bad comments recently:

https://www.greaterwrong.com/users/gerald-monroe?show=comments

Well I skimmed through it. I don't see anything. Got a healthy margin now on upvotes, thanks April 1.

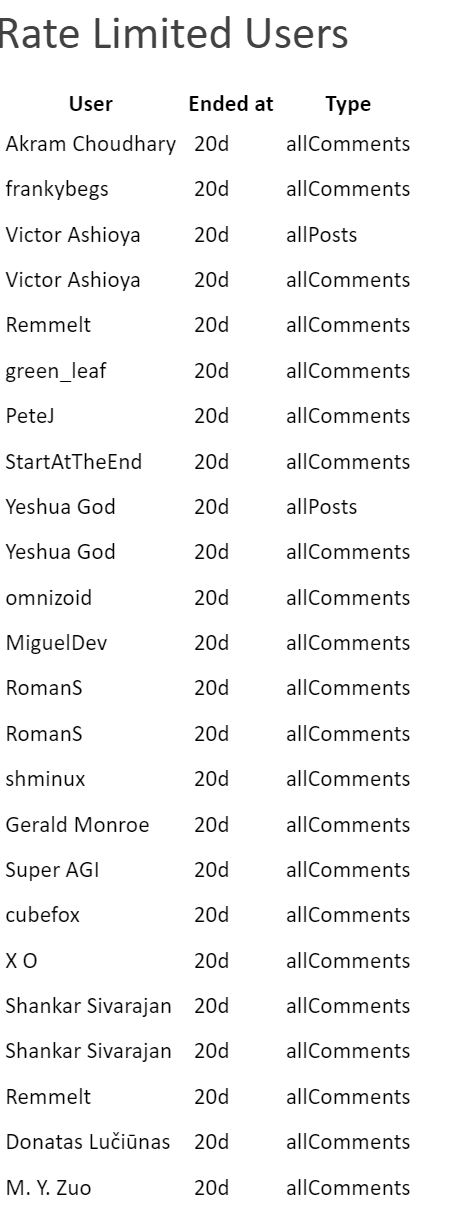

Over a month ago, I did comment this stinker. Here is what seems to be the same take by a very high reputation user here, @Matthew Barnett, on X: https://twitter.com/MatthewJBar/status/1775026007508230199

Must be a pretty common conclusion, and I wanted this site to pick an image that reflects their vision. Like flagpoles with all the world's flags (from coordination to ban AI) and EMS uses cryonics (to give people an alternative to medical ASI).

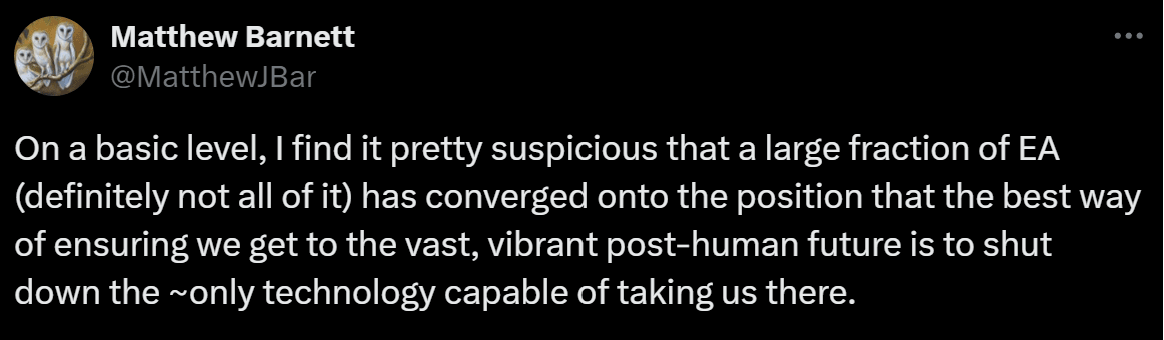

I asked the moderators:

@habryka says:

I skimmed all comments I made this year, can't find anything that matches this accusation. What comment did this happen on? Did this happen once or twice or 50 times or...? Any users want to help here, it surely must be obvious.

You can look here: https://www.greaterwrong.com/users/gerald-monroe?show=comments if you want to help me find what habryka could possibly be referring to.

I recall this happening once, Gears called me out on it, and I deleted the comment.

Conditional on this not having happened this year, why wasn't I informed or punished or something back then?

Skimming the currently banned user list:

Let's see why everyone else got banned. Maybe I can infer a pattern from it:

Akram Choudhary :

His negative karma doesn't add up to -38, not sure why. AI view is in favor of red teaming, which is always good.

doomer view, good karma (+2.52 karma per comment), hasn't made any comments in 17 days...why rate limit him? Skimming his comments they look nice and meaty and well written...what? All I can see is over the last couple of months he's not getting many upvotes per comment.

Ok at least I can explain this one. One comment at -41, in the last 20, green_leaf rarely comments. doomer view.

Tries to use humanities knowledge to align AI, apparently the readerbase doesn't like it. Probably won't work, banned for trying.

1.02 karma per comment, a little low, may still be above the bar. Not sure what he did wrong, comments are a bit long?

doomer view, lots of downvotes

Seems to just be running a low vote total. People didn't like a post justifying religion.

Why rate limited? This user seems to be doing actual experiments. Karma seems a little low but I can't find any big downvote comments or posts recently.

Overall Karma isn't bad, 19 upvotes on the most recent post. Seems to have a heavily downvoted comment that's the reason for the limit.

@shminux this user has contributed a lot to the site. One comment heavily downvoted, algorithm is last 20.

It certainly feels that way from the receiving end.

2.49 karma per comment, not bad. Cube tries to apply Bayes' rule in several comments, I see a couple barely hit -1, I don't have an explanation here.

possibly just karma

One heavily downvoted comment for AI views. I also noticed the same and I also got a lot of downvotes. It's a pretty reasonable view, we know humans can be very misaligned, upgrading humans and trying to control them seems like a superset of the AI alignment problem. Don't think he deserves this rate limit but at least this one is explainable.

Has anyone else experienced anything similar? Has anyone actually received feedback on a specific post or comment by the moderators?

Finally, I skipped several negative overall karma users not mentioned, because the reason is obvious.

Remarks :

I went into this expecting the reason had to do with AI views, because the site owners are very much 'doomer' faction. But no, plenty of rate limited people on that faction. I apologize for the 'tribalism' but it matters:

https://www.greaterwrong.com/users/nora-belrose Nora Belrose is one of the best posters this site has in terms of actual real world capabilities knowledge. Remember the OAI contributors we see here aren't necessarily specialists in 'make a real system work'. Look at the wall of downvotes.

vs

https://www.greaterwrong.com/users/max-h Max is very worried about AI, but I have seen him write things I think disagree with current mainstream science and engineering. He writes better than everyone banned though.

But no, that doesn't explain it. Another thing I've noticed is that almost all the users are trying. They are trying to use rationality, trying to understand what's been written here, trying to apply Bayes' rule or understand AI. Even some of the users with negative karma are trying, just having more difficulty. And yeah it's a soft ban from the site, I'm seeing that a lot of rate limited users simply never contribute 20 more comments to get out of the sump from one heavily downvoted comment or post.
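To make the "last 20" point concrete, here is a minimal sketch of how one heavily downvoted comment can dominate a 20-comment window. It is not the site's actual code: the only detail taken from this post is the window size of 20. The threshold of 0, the rule "sum of karma over the window", and the names `is_rate_limited` and `comments_until_clear` are all assumptions for illustration.

```python
WINDOW = 20      # from the post: "algorithm is last 20"
THRESHOLD = 0    # hypothetical cutoff; the real value is not stated anywhere here


def is_rate_limited(karma_history, window=WINDOW, threshold=THRESHOLD):
    """Assumed rule: limited when net karma over the last `window` comments is below `threshold`."""
    return sum(karma_history[-window:]) < threshold


def comments_until_clear(karma_history, typical_karma, window=WINDOW, threshold=THRESHOLD):
    """Count how many new comments, each earning `typical_karma`, are needed to clear the limit.

    Returns None if the limit does not clear within 10 windows' worth of comments.
    """
    history = list(karma_history)
    posted = 0
    while is_rate_limited(history, window, threshold):
        if posted >= 10 * window:
            return None
        history.append(typical_karma)
        posted += 1
    return posted


# 19 ordinary comments at +1 each, then one comment at -41.
history = [1] * 19 + [-41]
print(is_rate_limited(history))
print(comments_until_clear(history, typical_karma=1))
print(comments_until_clear(history, typical_karma=3))
```

Under these assumed numbers, a user earning +1 per comment stays limited until the bad comment falls out of the window entirely, which takes a full 20 new comments. A user who stops commenting never gets there.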

Finally, what rationality principles justify "let's apply bans to users of our site without any reason or feedback or warning. Let's make up new rules after the fact."

Specifically, every time I have personally been punished, it would be no warning, then @Raemon first rate limited me, by making up a new rule (he could have just messaged me first), then issued a 3 month ban, and gave some reasons I could not substantiate, after carefully reviewing my comments for the past year. I've been enthusiastic about this site for years now, I absolutely would have listened to any kind of warnings or feedback. The latest moderator limit is the 3rd time I have been punished, with no reason I can validate given or content cited.

I asked for, in a private email to the moderators, any kind of feedback or specific content I wrote to justify the ban, and was not given it. All I wanted was a few examples of the claimed behavior, something I could learn from.

Is there some reason the usual norms of having rules, not punishing users until after making a new rule, and informing users when they broke a rule and which submission violated it aren't rational? Just asking here, every mainstream site does this, laws do this, what is the evidence justifying doing it differently?

There's this:

well-kept-gardens-die-by-pacifism

Any community that really needs to question its moderators, that really seriously has abusive moderators, is probably not worth saving. But this is more accused than realized, so far as I can see.

Does not giving a reason for a decision, not informing the user, and going immediately to the maximum punishment instead of issuing a lesser one make for a community with abusive moderators? In other online communities I can say absolutely yes. Sites have split over one wrongful ban of a popular user.

Thanks for your reply!

I do have access, I just felt like waiting and replying here. By the way, if I comment 20 times on my shortform, will the rate-limit stop? This feels like an obvious exploit in the rate-limiting algorithm, but it's still possible that I don't know how it works.

Then outright banning would work better than rate-limiting without feedback like this. If people contribute in good faith, they need to know just what other people approve of. Vague feedback doesn't help alignment very much. And while an eternal september is dangerous, you likely don't want a community dominated by veteran users who are hostile to new users. I've seen this in videogame communities and it leads to forms of stagnation.

It confuses me if you got 10 upvotes for the contents of your reply (I can't find fault with the writing, formatting and tone), but it's easily explained by assuming that users here don't act much differently than they do on Reddit, which would be sad.

I already read the new users guide. Perhaps I didn't put it clearly enough with "I think people should take responsibility for their words", but it was the new users guide which told me to post. I read the "Is LessWrong for you?" section, and it told me that LessWrong was likely for me. I read the "well-kept garden" post in the past and found myself agreeing with its message. This is why I felt misled and why I don't think linking these two sections makes for a good counter-argument (after all, I attempted to communicate that I had already taken them into account). I thought LW should take responsibility for what it told me, as trusting it is what got me rate-limited. That's the core message, the rest of my reply just defends my approach of commenting.

In order not to be misunderstood completely, I'd need a disclaimer like this at the top of every comment I make, which is clearly not feasible:

Humanity is somewhat rational now, but our shared knowledge is still filled with old errors which were made before we learned how to think. Many core assumptions are just wrong. But if these beliefs are corrected, then the cascade would collapse some of the beliefs that people hold dear, or touch upon controversial subjects. The truth doesn't stand a chance against politics, morality and social norms. Sadly, if you want to prevent society from collapsing, you will need to grapple a bit with these three subjects. But that will very likely lead to downvotes.

A lot of things are poorly explained, but nonetheless true. Other things are very well argued, but nonetheless false. "Manifesting the future by visualizing it" is pseudoscience, but it has a positive utility. "We must make new laws to keep everyone safe" sounds reasonable, but after 1000 iterations it should have dawned on us that the 1001st law isn't going to save us. I think that the reasonable sentence would net you positive karma on here, while the pseudoscience would get called worthless.

My logical intelligence is much higher than my verbal - and most people who are successful in social and academic areas of life are the complete opposite. Nonetheless, some of us can see patterns that other people just can't. Human beings also have a lot in common with AI, we're blackboxes. Our instincts are discriminatory and biased, but only because people who weren't went extinct. Those who attempt to get rid of biases should first know what they are good for (Chesterton's fence). But I can't see a single movement in society advocating for change which actually understands what it's doing. But people don't like hearing this.

As of right now, the blackbox (intuition, instinct, etc) is still smarter than the explainable truth. This will change as people are taught how to disregard the blackbox and even break it. But this also goes against the consensus (in a way that I assume will be considered "bad quality". Some people might upvote what they disagree with, but I don't think that goes for many types of disagreement).

And I'm also only human. Rate-limited users are perhaps the bottom 5% of posters? But I'm above that. I'm just grappling with subjects which are beyond my level. You told me to read the rules, that's a lot easier. I could also get lots of upvotes if I engaged with subjects that I'm overqualified for. But like with AGI, some subjects are beyond our abilities, but I don't think we can afford to ignore them, so we're forced to make fools of ourselves trying.