This article was written by Yusuke Hayashi, an independent researcher in Japan who is not affiliated with the authors of the works cited here. The analysis presented is independent of the cited publications.

Have you ever wondered if the "red" you perceive is the same "red" someone else experiences? A recent study explores this question using a distance measure called the Gromov-Wasserstein distance (GWD).

Is my “red” your “red”?: Unsupervised alignment of qualia structures via optimal transport[1]

Paper (Preprint by Kawakita et al. published in PsyArXiv): https://psyarxiv.com/h3pqm

Slide (Presentation by Masafumi Oizumi at Optimal Transport Workshop 2023): https://drive.google.com/file/d/1Z5QTxayqJkmYyOgirrbJRRflY8HOy8ox/view?usp=sharing

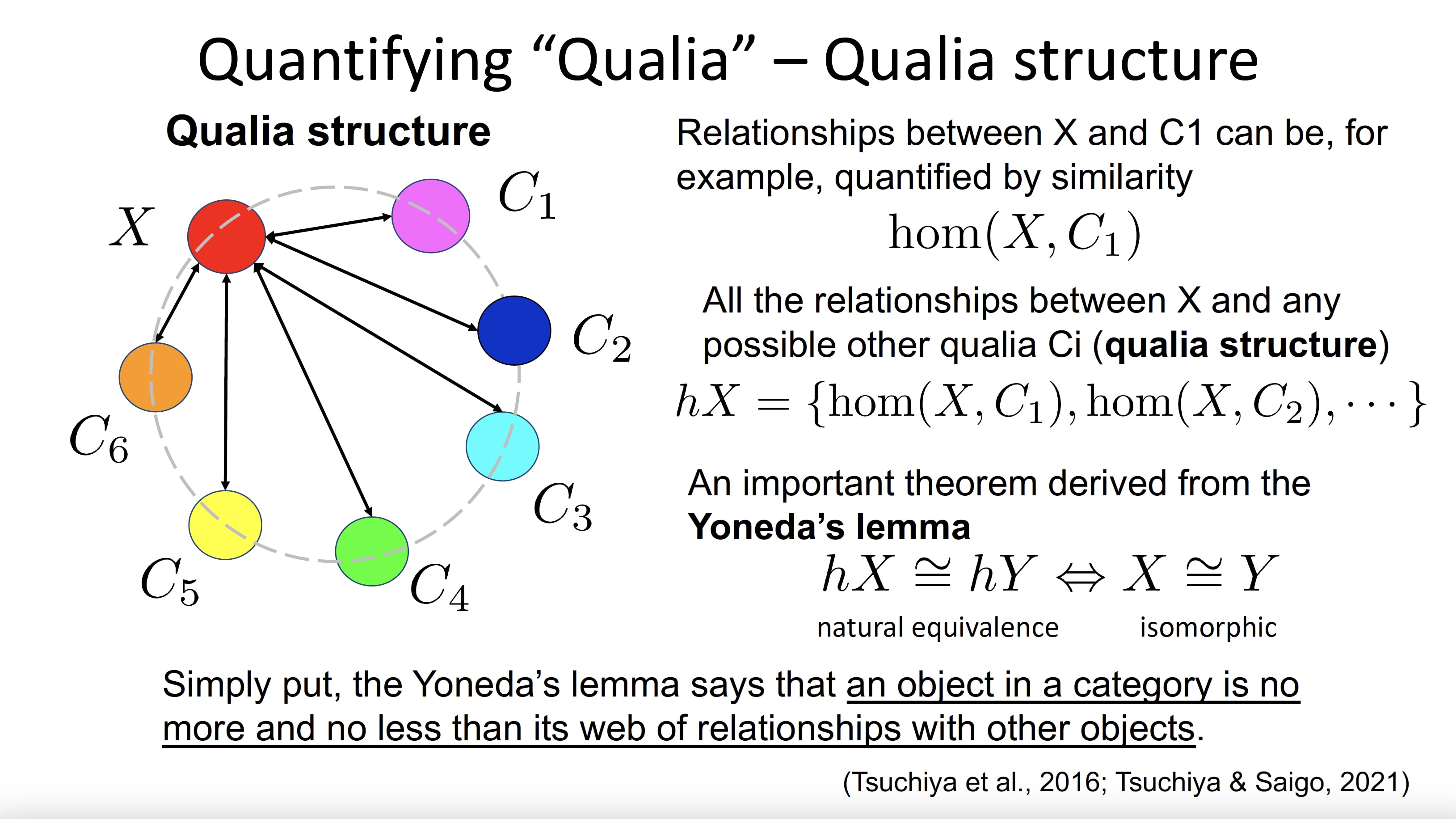

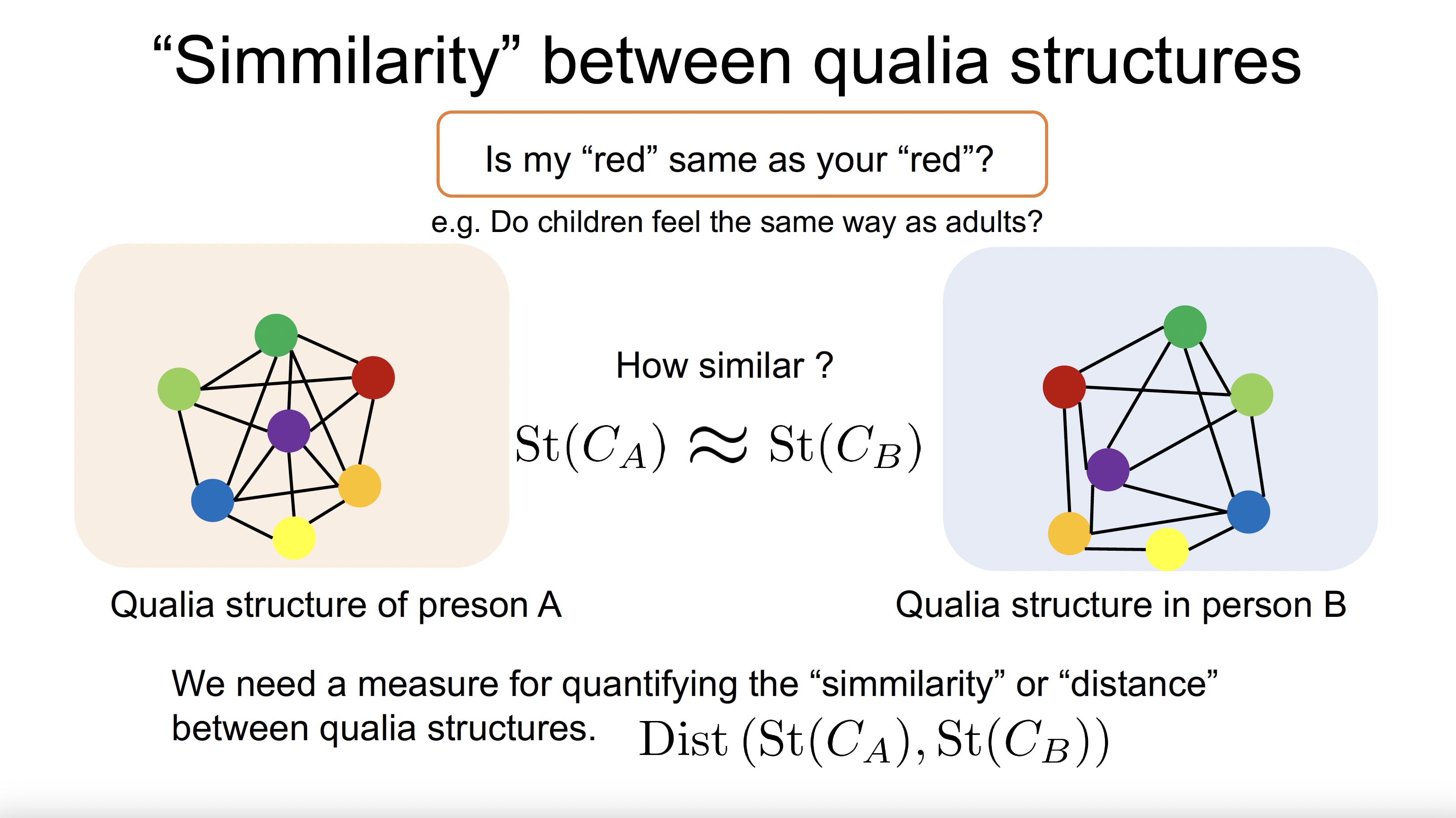

The paper "Is my "red" your "red"?: Unsupervised alignment of qualia structures via optimal transport" (Kawakita et al., 2022) proposes a new approach to assessing the similarity of sensory experiences between individuals. This approach, based on GWD and optimal transport, aligns qualia structures without assuming in advance which experience of one individual corresponds to which experience of another. As a result, it provides a quantitative means of comparing the similarity of qualia structures between individuals.
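For readers who want the distance spelled out, the following is the standard square-loss form of the Gromov-Wasserstein distance, offered as a sketch rather than as the paper's exact formulation. The notation is an assumption introduced here: $D$ and $D'$ are the within-individual dissimilarity matrices over $n$ and $m$ experiences, $p$ and $q$ are weight vectors over those experiences (often uniform), and $\Gamma$ is a soft assignment (coupling) between the two sets.

$$
\mathrm{GWD}(D, D') = \min_{\Gamma \in \Pi(p, q)} \sum_{i,j=1}^{n} \sum_{k,l=1}^{m} \left( D_{ij} - D'_{kl} \right)^2 \, \Gamma_{ik} \, \Gamma_{jl},
\qquad
\Pi(p, q) = \left\{ \Gamma \ge 0 : \Gamma \mathbf{1} = p,\ \Gamma^{\top} \mathbf{1} = q \right\}
$$

Only distances within each individual enter the objective; no cross-individual distance between experience $i$ and experience $k$ is ever required, which is why no correspondence has to be assumed beforehand.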

By applying this method, it is possible to compare the similarity of subjective experiences, or qualia structures, between individuals using GWD, a distance measure employed in machine learning research. While this study compares sensory experiences between humans, the same approach could be used to compare the sensory experiences of humans and the advanced AI model, GPT-4. We are now ready to revisit the question posed in the title:

Is "red" for GPT-4 the same as for you?

If asked whether large language models (LLMs) like GPT-4 possess consciousness, the likely answer would be "probably not." However, the method proposed here provides a way to determine whether such models share the same qualia structure as humans.
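To make the idea of comparing qualia structures concrete, here is a minimal sketch, not the procedure used in the paper. It assumes two small dissimilarity matrices, one from a person and one from a model, and restricts the Gromov-Wasserstein objective to one-to-one assignments with uniform weights, which it then searches by brute force. The function names, the color labels, and all numbers are hypothetical and chosen only for illustration.

```python
from itertools import permutations


def gw_cost(d1, d2, perm):
    """Square-loss GW objective for the hard coupling i -> perm[i].

    Assumes uniform weights 1/n on both sides, so each matched pair
    of entries contributes with weight 1/n**2.
    """
    n = len(d1)
    total = 0.0
    for i in range(n):
        for j in range(n):
            diff = d1[i][j] - d2[perm[i]][perm[j]]
            total += diff * diff
    return total / (n * n)


def best_alignment(d1, d2):
    """Try every one-to-one assignment and return (lowest cost, assignment).

    Exhaustive search grows factorially, so this is only usable
    for a handful of items.
    """
    n = len(d1)
    candidates = ((gw_cost(d1, d2, p), p) for p in permutations(range(n)))
    return min(candidates, key=lambda pair: pair[0])


# Hypothetical dissimilarity ratings (made-up numbers).
# Person's item order: red, orange, blue.
human = [
    [0, 1, 4],
    [1, 0, 3],
    [4, 3, 0],
]
# Model's item order is shuffled: blue, red, orange.
model = [
    [0, 4, 3],
    [4, 0, 1],
    [3, 1, 0],
]

cost, perm = best_alignment(human, model)
print("cost:", cost)
print("assignment (person index -> model index):", perm)
```

The toy data is built so that the two structures are identical up to relabeling, and the search recovers that relabeling from the dissimilarities alone, without being told which item is which. Practical solvers optimize over soft couplings instead of enumerating permutations, since exhaustive search is infeasible beyond a few items.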

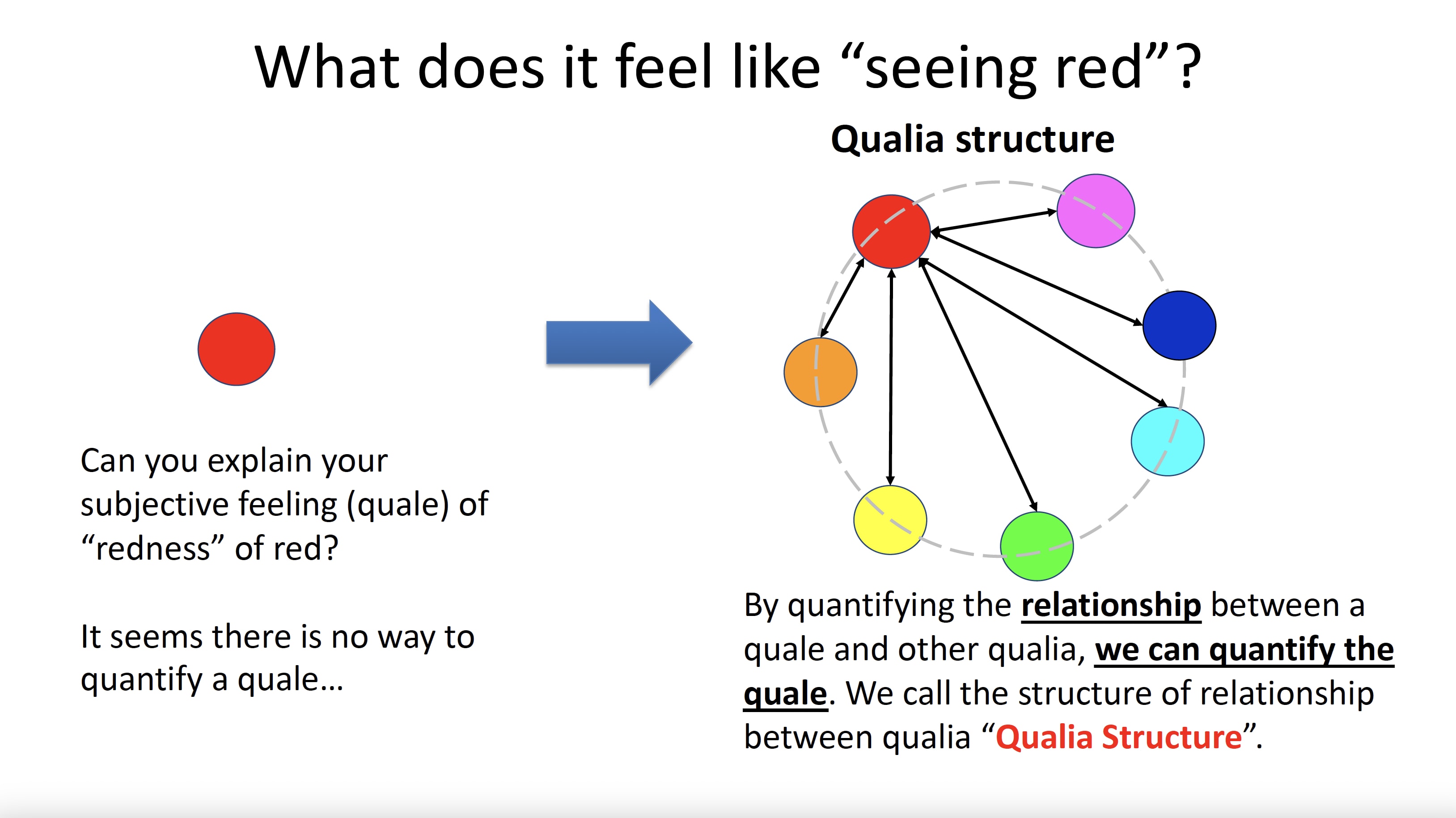

Consciousness and qualia are closely related. Consciousness is the state of being aware of, perceiving, and thinking about one's surroundings, thoughts, and feelings. Qualia, on the other hand, are subjective experiences and sensations that accompany consciousness, such as the taste of chocolate, the sound of a musical instrument, or the sensation of pain.

Qualia are an essential part of conscious experience, and their relationship to consciousness is of paramount importance. Without qualia, our experience of consciousness would lack a rich, subjective texture and would not be unique and personal. In other words, qualia give our experience of consciousness its unique character and enable us to understand the world around us.

One of the central debates in the philosophy of mind revolves around the nature of qualia and their relation to consciousness. Some philosophers argue that qualia are difficult to study and understand because they cannot be reduced to physical or functional descriptions. Others argue that advances in neuroscience and cognitive science may allow qualia to be explained within a naturalistic framework. The study discussed above sheds new light on this long-standing controversy over the nature of qualia and their relationship to consciousness.

Recently, Dr. Geoffrey Hinton, one of the pioneers of deep learning, resigned from Google and began warning about the potential dangers of making AI smarter than humans. On LessWrong, there is a great deal of discussion about AI safety and AI alignment. Throughout this article, I would like to pose the following question to the reader:

If deep learning models acquire consciousness in the near future, can we consider them as alignment targets?

[1] G. Kawakita, A. Zeleznikow-Johnston, K. Takeda, N. Tsuchiya and M. Oizumi, Is my "red" your "red"?: Unsupervised alignment of qualia structures via optimal transport. PsyArXiv https://doi.org/10.31234/osf.io/h3pqm (2023).

Dear Portia,

Thank you for your thought-provoking and captivating response. Your expertise in the field of biological consciousness is clear, and I'm grateful for the depth and breadth of your commentary on the potential implications of this paper.

If we accept the assumption that the subjective character of a specific quale is defined by its unique and asymmetric relations to other qualia, then this paper indeed offers a method for testing whether such qualia could be experienced similarly among humans. Your point that the 'hard problem' of consciousness may not be as challenging as we previously thought is profoundly important.

However, I hold a slightly different view about the 'new approach to deciphering neural correlates of consciousness' proposed in this paper. I agree that this approach does not by itself answer whether an entity with a given qualia structure experiences anything. Still, I am interested in whether, given the right conditions and sufficient complexity, such an experience could occur once we introduce what you refer to as 'some plausible extra assumptions'.

I apologize if my thoughts on alignment were unclear. I did not sufficiently explain AI alignment in my post. AI alignment is about ensuring that the goals and actions of an AI system coincide with human values and interests. Adding the factor of AI consciousness undoubtedly complicates the alignment problem. For instance, if we acknowledge an AI as a sentient being, it could lead to a situation similar to debates about animal rights, where we would need to balance human values and interests with those of non-human entities. Moreover, if an AI were to acquire qualia or consciousness, it might be able to understand humans on a much deeper level.

Regarding my final question, I was interested in exploring the potential implications of this work in the context of AI alignment and safety, as well as ethical considerations that we might need to ponder as we progress in this field. Your insights have provided plenty of food for thought, and I look forward to hearing more from you.

Thank you again for your profound insights.

Best,

Yusuke