If that were the case, we would be doomed far worse than if alignment were extremely hard. It's only because of all the writing that people like Eliezer have done about how hard it is and how we are not on track, plus the many examples of total alignment failures already observed in existing AIs (like these or these), that I have any hope for the future at all.

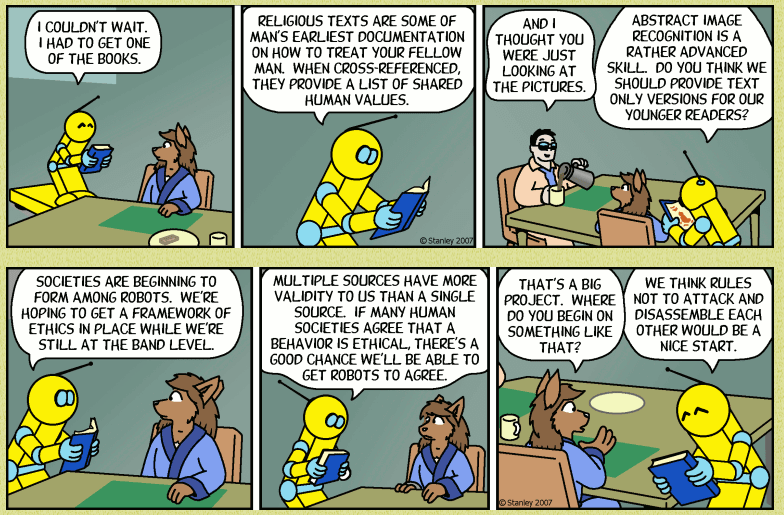

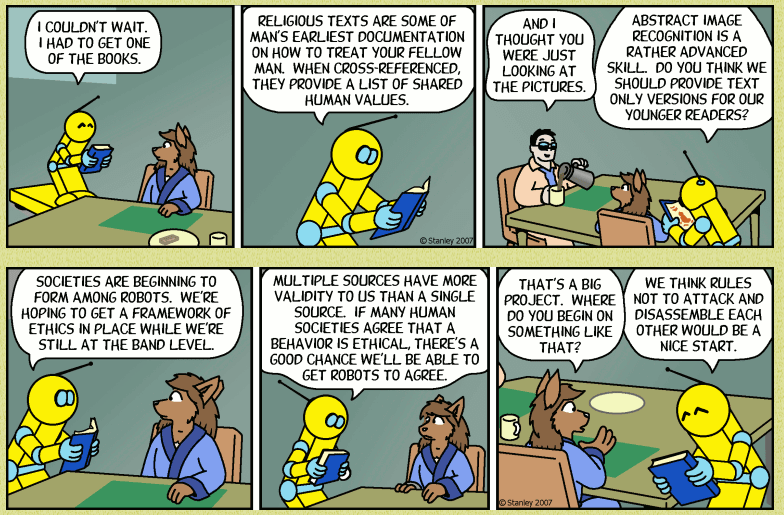

Remember, the majority of humans take their morality from a religion that says most people are tortured in hell for all eternity (or, in the case of an eastern religion, tortured in a Naraka for a time massively longer than the real age of the universe so far, which is basically the same thing). Even atheists who think these religions are false often still believe they have good moral teachings. For example, the writer of the popular webcomic Freefall is an atheist transhumanist libertarian, and his serious proposed AI alignment method is to teach AIs to support the values taught in human religions.

Even if you avoid this extremely common failure mode, planned societies run for the good of everyone are still absolutely horrible. Almost all utopias in fiction suck even when they go the way the author says they would. In the real world, when the plans hit real human psychology, economics and so on, the result is invariably disaster. Imagine living in an average kindergarten all day every day, and that's one of the better options. The life I had was more like Camazotz from A Wrinkle in Time, and it didn't end when school let out.

We also wouldn't be allowed to leave. Even today, for the supposed good of the beneficiaries, runaways are generally forcibly returned to their homes and terminally ill people in constant agony are forced to stay alive. The implication of your idea being true would be that you should kill yourself now while you still have the chance.

The good news is that, instead, only the tiny minority of people able to notice problems right in front of them (even without suffering from them personally) have any chance of successful alignment.

I would expect that for model-based RL, the more powerful the AI is at predicting the environment and the impact of its actions on it, the less prone it becomes to Goodharting its reward function. That is, after a certain point, the only way to make the AI more powerful at optimizing its reward function is to make it better at generalizing from its reward signal in the direction that the creators meant for it to generalize.

In such a world, when AIs are placed in complex multiagent environments where they engage in iterated prisoner's dilemmas, the more intelligent ones (those with greater world-modeling capacity) should tend to optimize for making changes to the environment that shift the Nash equilibrium toward cooperate-cooperate, ensuring more sustainable long-term rewards all around. This should happen automatically, without prompting, no matter how simple or complex the reward functions involved, whenever agents surpass a certain level of intelligence in environments that allow for such incentive-engineering.
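To make the "shift the Nash equilibrium" idea concrete, here is a minimal sketch of a one-shot prisoner's dilemma stage game. The payoff numbers and the `with_defection_penalty` helper are my own illustrative assumptions, not anything from a real environment. It only shows that changing the incentives (here, a flat cost on defecting, standing in for whatever environmental change an agent might engineer) can move the pure-strategy equilibrium from defect-defect to cooperate-cooperate.

```python
from itertools import product

C, D = 0, 1  # cooperate, defect

def pure_nash(payoffs):
    """Return all pure-strategy Nash equilibria of a 2-player, 2-action game.

    payoffs[(a, b)] = (reward to player 1, reward to player 2).
    """
    equilibria = []
    for a, b in product((C, D), repeat=2):
        r1, r2 = payoffs[(a, b)]
        # Neither player can gain by unilaterally switching actions.
        best1 = all(r1 >= payoffs[(alt, b)][0] for alt in (C, D))
        best2 = all(r2 >= payoffs[(a, alt)][1] for alt in (C, D))
        if best1 and best2:
            equilibria.append((a, b))
    return equilibria

# Hypothetical textbook-style payoffs (assumed values).
BASE = {
    (C, C): (3, 3),
    (C, D): (0, 5),
    (D, C): (5, 0),
    (D, D): (1, 1),
}

def with_defection_penalty(payoffs, penalty):
    """Hypothetical incentive change: each defector pays a fixed cost."""
    return {
        (a, b): (r1 - penalty * (a == D), r2 - penalty * (b == D))
        for (a, b), (r1, r2) in payoffs.items()
    }

if __name__ == "__main__":
    print(pure_nash(BASE))                             # defect-defect only
    print(pure_nash(with_defection_penalty(BASE, 3)))  # cooperate-cooperate only
```

This says nothing about whether smarter agents would actually find and make such changes. It just illustrates what the target of that kind of incentive-engineering looks like in the simplest possible case.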