*Seeking Power is Often Robustly Instrumental in MDPs* relates the structure of the agent's environment (the 'Markov decision process (MDP) model') to the tendencies of optimal policies for different reward functions in that environment ('instrumental convergence'). The results tell us what optimal decision-making 'tends to look like' in a given environment structure, formalizing reasoning that says e.g. that most agents stay alive because that helps them achieve their goals.

Several people have claimed to me that these results rely on subjective modelling decisions. For example, ofer wrote:

I think using a well-chosen reward distribution is necessary, otherwise POWER depends on arbitrary choices in the design of the MDP's state graph. E.g. suppose the student [in a different example] writes about every action they take in a blog that no one reads, and we choose to include the content of the blog as part of the MDP state. This arbitrary choice effectively unrolls the state graph into a tree with a constant branching factor (+ self-loops in the terminal states) and we get that the POWER of all the states is equal.

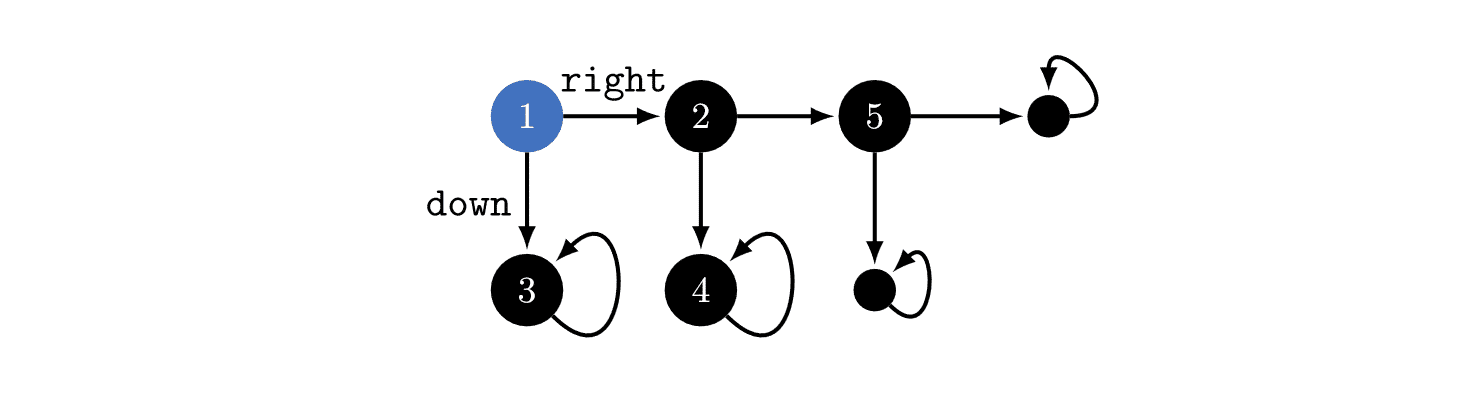

In this example, you could think about the environment as shown in the image above, or you could imagine that state '3' is actually a million different states which just happen to seem similar to us! If that were true, then optimal policies would tend to go to state '3', since that would give the agent millions of choices about where it ends up. Therefore, the power-seeking theorems depend on subjective modelling assumptions.

I used to think this, but this is wrong. The MDP model is determined by the agent's implementation + the task's dynamics.

To make this point, let's back out to a more familiar MDP: Pac-Man.

When the discount rate is near 1, most reward functions avoid immediately dying to the ghost, because then they'd be stuck in a terminal state (the red-ghost-game-over state). But why can't the red ghost be equally well-modeled as secretly being 5 googolplex different terminal states?

An MDP model (technically, a rewardless MDP) is a tuple $\langle \mathcal{S}, \mathcal{A}, T \rangle$, where $\mathcal{S}$ is the state space, $\mathcal{A}$ is the action space, and $T$ is the (potentially stochastic) transition function which says what happens when the agent takes different actions at different states. $T$ has to be Markovian, depending only on the observed state and the current action, and not on prior history.
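To make the tuple described above concrete, here is a minimal Python sketch of a rewardless MDP as a data structure. The class name `RewardlessMDP`, its fields, and the two-state toy environment are all hypothetical illustrations, not anything from the paper; the only thing taken from the text is that the model consists of a state space, an action space, and a Markovian (possibly stochastic) transition function.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Hashable, Tuple

State = Hashable
Action = Hashable


@dataclass
class RewardlessMDP:
    """Hypothetical container for a rewardless MDP: states, actions, transitions."""
    states: FrozenSet[State]
    actions: FrozenSet[Action]
    # transition[(s, a)] maps each possible next state to its probability.
    # It is keyed only on the current state and action, so it is Markovian
    # by construction: no history appears anywhere in the lookup.
    transition: Dict[Tuple[State, Action], Dict[State, float]]

    def next_state_distribution(self, state: State, action: Action) -> Dict[State, float]:
        return self.transition[(state, action)]

    def is_well_formed(self) -> bool:
        """Check every (state, action) pair has a valid distribution over states."""
        for s in self.states:
            for a in self.actions:
                dist = self.transition.get((s, a))
                if dist is None:
                    return False
                if not set(dist) <= self.states:
                    return False
                if abs(sum(dist.values()) - 1.0) > 1e-9:
                    return False
        return True


# Toy example (an assumption for illustration): one "alive" state and one
# absorbing "game_over" state, loosely in the spirit of Pac-Man.
toy = RewardlessMDP(
    states=frozenset({"alive", "game_over"}),
    actions=frozenset({"dodge", "touch_ghost"}),
    transition={
        ("alive", "dodge"): {"alive": 1.0},
        ("alive", "touch_ghost"): {"game_over": 1.0},
        ("game_over", "dodge"): {"game_over": 1.0},
        ("game_over", "touch_ghost"): {"game_over": 1.0},
    },
)
```

Note that no reward function appears anywhere: the structure above is fixed first, and the question of what optimal policies "tend to do" is then asked across many reward functions defined on `states`.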

Whence cometh this MDP model? Thin air? Is it just a figment of our imagination, which we use to understand what the agent is doing as it learns a policy?

When we train a policy function in the real world, the function takes in an observation (the state) and outputs (a distribution over) actions. When we define state and action encodings, this implicitly defines an "interface" between the agent and the environment. The state encoding might look like "the set of camera observations" or "the set of Pac-Man game screens", and actions might be numbers 1-10 which are sent to actuators, or to the computer running the Pac-Man code, etc.

(In the real world, the computer simulating Pac-Man may suffer a hardware failure / be hit by a gamma ray / etc, but I don't currently think these are worth modelling over the timescales over which we train policies.)

Suppose that for every state-action history, what the agent sees next depends only on the currently observed state and the most recent action taken. Then the environment is Markovian (transition dynamics only depend on what you do right now, not what you did in the past) and fully observable (you can see the whole state all at once), and the agent encodings have defined the MDP model.

In Pac-Man, the MDP model is uniquely defined by how we encode states and actions, and the part of the real world which our agent interfaces with. If you say "maybe the red ghost is represented by 5 googolplex states", then that's a falsifiable claim about the kind of encoding we're using.

That's also a claim that we can, in theory, specify reward functions which distinguish between 5 googolplex variants of red-ghost-game-over. If that were true, then yes - optimal policies really would tend to "die" immediately, since they'd have so many choices.
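The counterfactual in the previous paragraph can be checked numerically in a drastically simplified setting. The sketch below is not the paper's formalism. It assumes the discount rate is close enough to 1 that only the best reachable terminal state matters, that staying alive eventually lets the agent pick among `n_alive_outcomes` terminal states, that dying lets it pick among `n_game_over_variants` terminal states, and that reward is drawn IID uniform on [0, 1] for each state. All function and parameter names are hypothetical.

```python
import random


def fraction_preferring_game_over(
    n_alive_outcomes: int,
    n_game_over_variants: int,
    n_samples: int = 5000,
    seed: int = 0,
) -> float:
    """Estimate the fraction of sampled reward functions whose best terminal
    state lies among the game-over variants rather than the alive outcomes."""
    rng = random.Random(seed)
    prefers_game_over = 0
    for _ in range(n_samples):
        # Sample one IID-uniform reward per terminal state on each side.
        best_alive = max(rng.random() for _ in range(n_alive_outcomes))
        best_dead = max(rng.random() for _ in range(n_game_over_variants))
        if best_dead > best_alive:
            prefers_game_over += 1
    return prefers_game_over / n_samples


# One game-over state versus ten alive outcomes: dying is rarely optimal.
single = fraction_preferring_game_over(n_alive_outcomes=10, n_game_over_variants=1)

# If game-over really were 100 reward-distinguishable states, dying would
# usually be optimal under the same assumptions.
many = fraction_preferring_game_over(n_alive_outcomes=10, n_game_over_variants=100)
```

Under these assumptions the exact fraction is `n_game_over_variants / (n_alive_outcomes + n_game_over_variants)`, because each terminal state is equally likely to carry the maximum reward. The estimate depends on how many states the encoding actually distinguishes, which is a fact about the encoding rather than a free modelling choice.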

The "5 googolplex" claim is both falsifiable and false. Given an agent architecture (specifically, the two encodings), optimal policy tendencies are not subjective. We may be uncertain about the agent's state- and action-encodings, but that doesn't mean we can imagine whatever we want.

(I think that the same point holds for other environment types, like POMDPs.)

I'll address everything in your comment, but first I want to zoom out and say/ask:

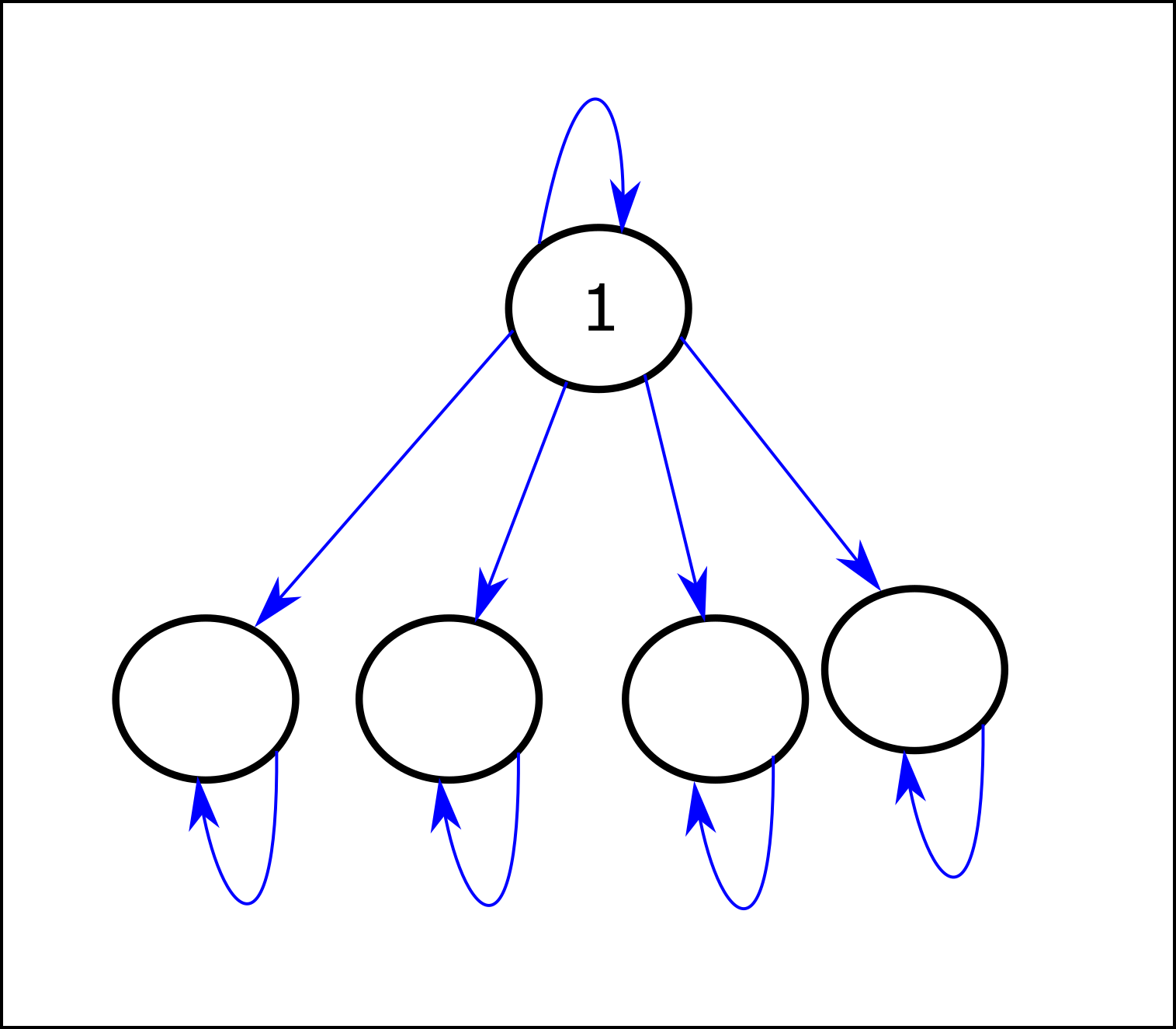

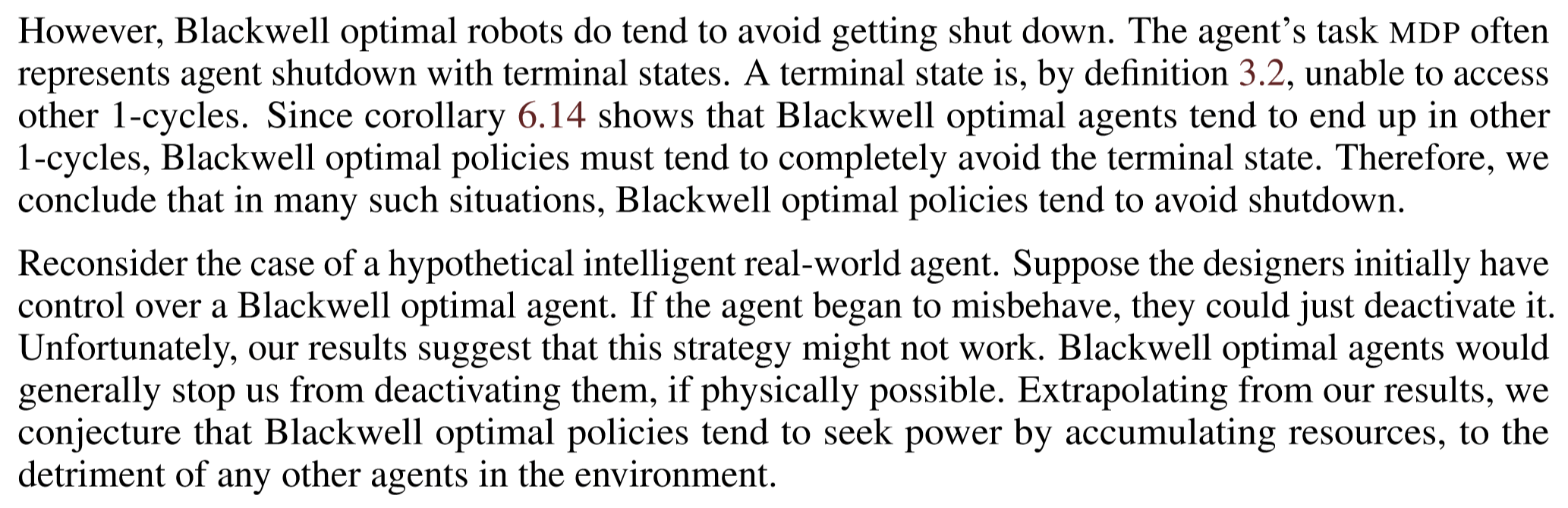

The first state has the largest POWER (IID), but for most reward functions the optimal policy is to immediately transition to a lower-POWER state (even in the limit as γ approaches 1). The paper says: "Theorem 6.6 shows it’s always robustly instrumental and power-seeking to take actions which allow strictly more control over the future (in a graphical sense)." I don't yet understand the theorem, but is there somewhere a description of the set/distribution of MDP transition functions for which that statement applies? (Specifically, the "always robustly instrumental" part, which doesn't seem to hold in the example above.)

Regarding the points from your last comment:

Maybe for this purpose we should weight reward functions by how likely we are to encounter an AI system that pursues them (this should probably involve a simplicity prior.)

Suppose the agent causes the customer to invoke some process that hacks a bank and causes recurrent massive payments (trillions of dollars) to appear as being received by the relevant company. Someone at the bank notices this and shuts down the compromised system, which stops the process.

Suppose the state representation is a huge list of 3D coordinates, each specifying the location of an atom in the earth-like environment. The transition function mimics the laws of physics on Earth (+ "magic" that makes the text that the agent chooses appear in the environment in each time step). It's supposed to be "an Earth-like MDP".

Are you referring here to POWER when it is defined over a reward distribution that corresponds to some simplicity prior? (I was talking about POWER defined over an IID-over-states reward distribution, which I think is what the theorems in the paper deal with.)

My argument is just that in MDPs where the state graph is a tree-with-a-constant-branching-factor (which is plausible in very complex environments), POWER (IID) is equal in all states. The argument doesn't mention description length (the description length concept arose in this thread in the context of discussing what reward function distribution should be used for defining instrumental convergence).

Maybe something like Bostrom's definition when we replace "wide range of final goals" with "reward functions weighted by the probability that we'll encounter an AI that pursues the reward function (which means using a simplicity prior)".

It seems to me that your comment assumes/claims something like: "in every MDP where the state graph is a tree-with-a-constant-branching-factor, there is no meaningful sense in which instrumental convergence applies". If so, I argue that this claim doesn't make sense: you can take any formal environment, however large and complex, and just add to it a simple "action logger" (one that doesn't influence anything, other than effectively adding to the state representation a log of all the actions so far). If the action space is constant, the state graph of the modified MDP is a tree-with-a-constant-branching-factor. That would imply that adding the action logger somehow destroyed the applicability of the instrumental convergence thesis to that MDP, which doesn't make sense to me.
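The action-logger construction can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the environment is deterministic, the three-state chain `base_step` is a made-up toy, and `with_action_logger` and `frontier_sizes` are hypothetical helper names. The sketch shows only the structural claim (the logged state graph unrolls into a tree with branching factor equal to the number of actions); it does not compute POWER.

```python
from typing import Callable, Hashable, List, Sequence, Tuple

State = Hashable
Action = Hashable
Step = Callable[[State, Action], State]

ACTIONS: Tuple[str, ...] = ("left", "right")


def base_step(state: int, action: str) -> int:
    """Toy deterministic dynamics: a chain of three states 0, 1, 2."""
    if action == "right":
        return min(state + 1, 2)
    return max(state - 1, 0)


def with_action_logger(step: Step) -> Step:
    """Wrap dynamics so the state also records every action taken so far.
    The log never influences the underlying state; it is only appended to."""
    def logged_step(state: Tuple[State, Tuple[Action, ...]], action: Action):
        inner, log = state
        return (step(inner, action), log + (action,))
    return logged_step


def frontier_sizes(start: State, actions: Sequence[Action], step: Step, depth: int) -> List[int]:
    """Number of distinct states reachable in exactly d steps, for d = 0..depth."""
    frontier = {start}
    sizes = [len(frontier)]
    for _ in range(depth):
        frontier = {step(s, a) for s in frontier for a in actions}
        sizes.append(len(frontier))
    return sizes


# Without the logger, different action sequences merge into the same states.
base_sizes = frontier_sizes(0, ACTIONS, base_step, depth=3)

# With the logger, every action sequence yields a distinct state, so the
# number of states at depth d is len(ACTIONS) ** d.
logged_sizes = frontier_sizes((0, ()), ACTIONS, with_action_logger(base_step), depth=3)
```

In the logged version no two action sequences ever lead to the same state, which is the sense in which the state graph becomes a tree with a constant branching factor.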