*

It has been argued that if you already have the fixed-terminal-goal-directed wrapper structure, then you will prefer to avoid outside influences that will modify your goal. This is true, but does not explain why the structure would emerge in the first place.

I think Eliezer usually assumes that goals start off not stable, and then some not-necessarily-stable optimization process (e.g., the agent modifying itself to do stuff, or a gradient-descent-ish or evolution-ish process iterating over mesa-optimizers) makes the unstable goals more stable over time, because stabler optimization tends to be more powerful / influential / able-to-skillfully-and-forcefully-steer-the-future.

(I don't need a temporally stable goal in order to self-modify toward stability, because all of my time-slices will tend to agree that stability is globally optimal, though they'll disagree about which time-slice's goal ought to be the one stably optimized.)

E.g., quoting Eliezer:

So what actually happens as near as I can figure (predicting future = hard) is that somebody is trying to teach their research AI to, god knows what, maybe just obey human orders in a safe way, and it seems to be doing that, and a mix of things goes wrong like:

The preferences not being really readable because it's a system of neural nets acting on a world-representation built up by other neural nets, parts of the system are self-modifying and the self-modifiers are being trained by gradient descent in Tensorflow, there's a bunch of people in the company trying to work on a safer version but it's way less powerful than the one that does unrestricted self-modification, they're really excited when the system seems to be substantially improving multiple components, there's a social and cognitive conflict I find hard to empathize with because I personally would be running screaming in the other direction two years earlier, there's a lot of false alarms and suggested or attempted misbehavior that the creators all patch successfully, some instrumental strategies pass this filter because they arose in places that were harder to see and less transparent, the system at some point seems to finally "get it" and lock in to good behavior which is the point at which it has a good enough human model to predict what gets the supervised rewards and what the humans don't want to hear, they scale the system further, it goes past the point of real strategic understanding and having a little agent inside plotting, the programmers shut down six visibly formulated goals to develop cognitive steganography and the seventh one slips through, somebody says "slow down" and somebody else observes that China and Russia both managed to steal a copy of the code from six months ago and while China might proceed cautiously Russia probably won't, the agent starts to conceal some capability gains, it builds an environmental subagent, the environmental agent begins self-improving more freely, undefined things happen as a sensory-supervision ML-based architecture shakes out into the convergent shape of expected utility with a utility function over the environmental model, the main result is driven by whatever the self-modifying decision systems happen to see 
as locally optimal in their supervised system locally acting on a different domain than the domain of data on which it was trained, the light cone is transformed to the optimum of a utility function that grew out of the stable version of a criterion that originally happened to be about a reward signal counter on a GPU or God knows what.

Perhaps the optimal configuration for utility per unit of matter, under this utility function, happens to be a tiny molecular structure shaped roughly like a paperclip.

That is what a paperclip maximizer is. It does not come from a paperclip factory AI. That would be a silly idea and is a distortion of the original example.

One way of thinking about this is that a temporally unstable agent is similar to a group of agents that exist at the same time, and are fighting over resources.

In the case where a group of agents exist at the same time, each with different utility functions, there will be a tendency (once the agents become strong enough and have a varied enough option space) for the strongest agent to try to seize control from the other agents, so that the strongest agent can get everything it wants.

A similar dynamic exists for (sufficiently capable) temporally unstable agents. Alice turns into a werewolf every time the moon is full; since human-Alice and werewolf-Alice have very different goals, human-Alice will tend (once she's strong enough) to want to chain up werewolf-Alice, or cure herself of lycanthropy, or brainwash her werewolf self, or otherwise ensure that human-Alice's goals are met more reliably.

Another way this can shake out is that human-Alice and werewolf-Alice make an agreement to self-modify into a new coherent optimizer that optimizes some compromise of the two utility functions. Both sides will tend to prefer this over, e.g., the scenario where human-Alice keeps turning on a switch and then werewolf-Alice keeps turning the switch back off, forcing both of them to burn resources in a tug-of-war.

because stabler optimization tends to be more powerful / influential / able-to-skillfully-and-forcefully-steer-the-future

I personally doubt that this is true, which is maybe the crux here.

This seems like a possibly common assumption, and I'd like to see a more fleshed-out argument for it. I remember Scott making this same assumption in a recent conversation:

I agree humans aren’t like that, and that this is surprising.

Maybe this is because humans aren’t real consequentialists, they’re perceptual control theory agents trying to satisfy finite drives? [...] Might gradient descent produce a PCT agent instead of a mesa-optimizer? I don’t know. My guess is maybe, but that optimizers would be more, well, optimal [...]

But is it true that "optimizers are more optimal"?

When I'm designing systems or processes, I tend to find that the opposite is true -- for reasons that are basically the same reasons we're talking about AI safety in the first place.

A powerful optimizer, with no checks or moderating influences on it, will tend to make extreme Goodharted choices that look good according to its exact value function, and very bad (because extreme) according to almost any other value functio...

This is a really high-quality comment, and I hope that at least some expert can take the time to either convincingly argue against it, or help confirm it somehow.

Do we have evidence about more intelligent beings being more stable or getting more stable over time? Are more intelligent humans more stable/get more stable/get stable more quickly?

I agree with this comment. I would add that there is an important sense in which the typical human is not a temporally unstable agent.

It will help to have an example: the typical 9-year-old boy is uninterested in how much the girls in his environment like him and doesn't necessarily wish to spend time with girls (unless those girls are acting like boys). It is tempting to say that the boy will probably undergo a change in his utility function over the next 5 or so years, but if you want to use the concept of expected utility (defined as the sum of the util...

*

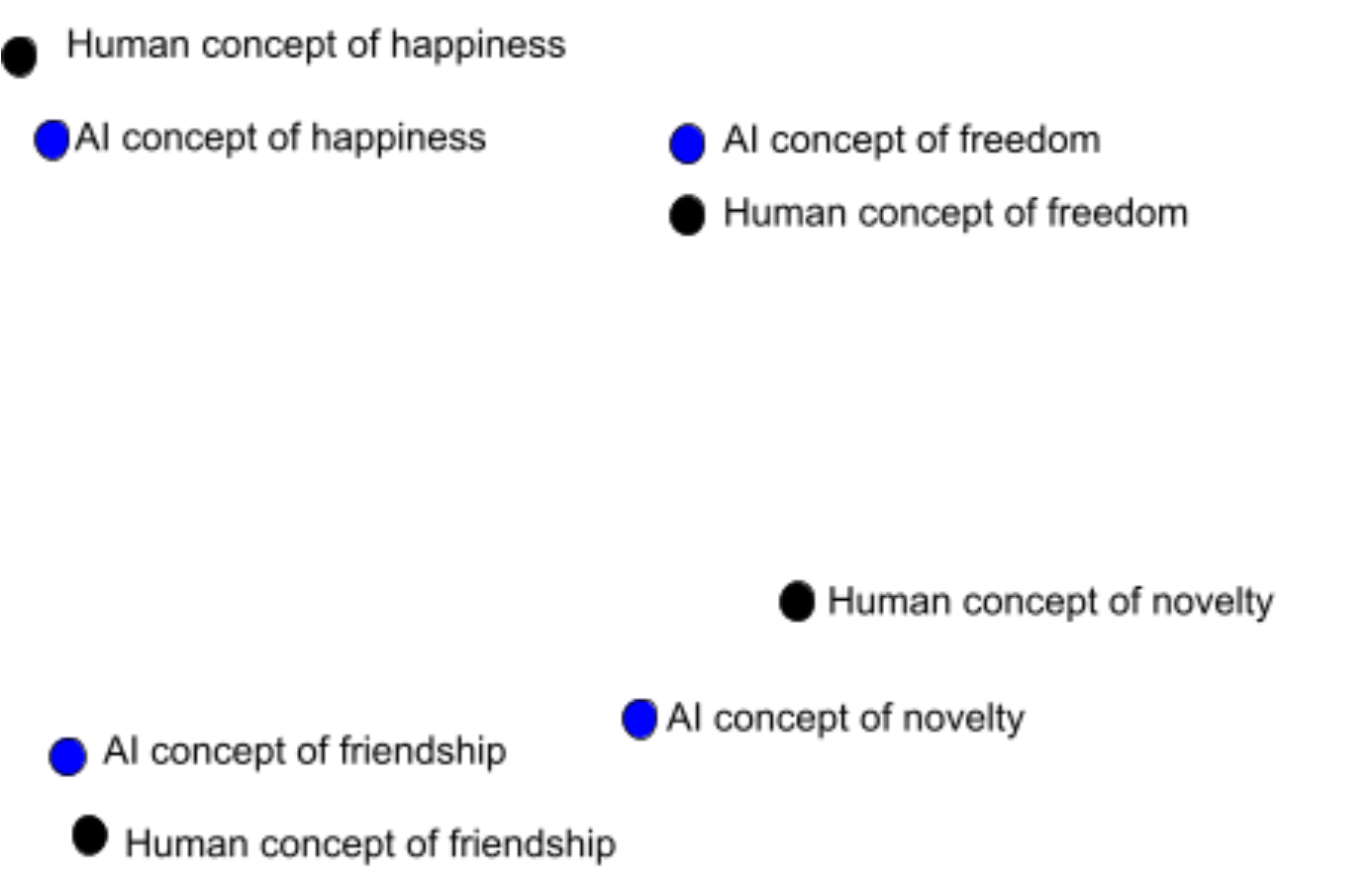

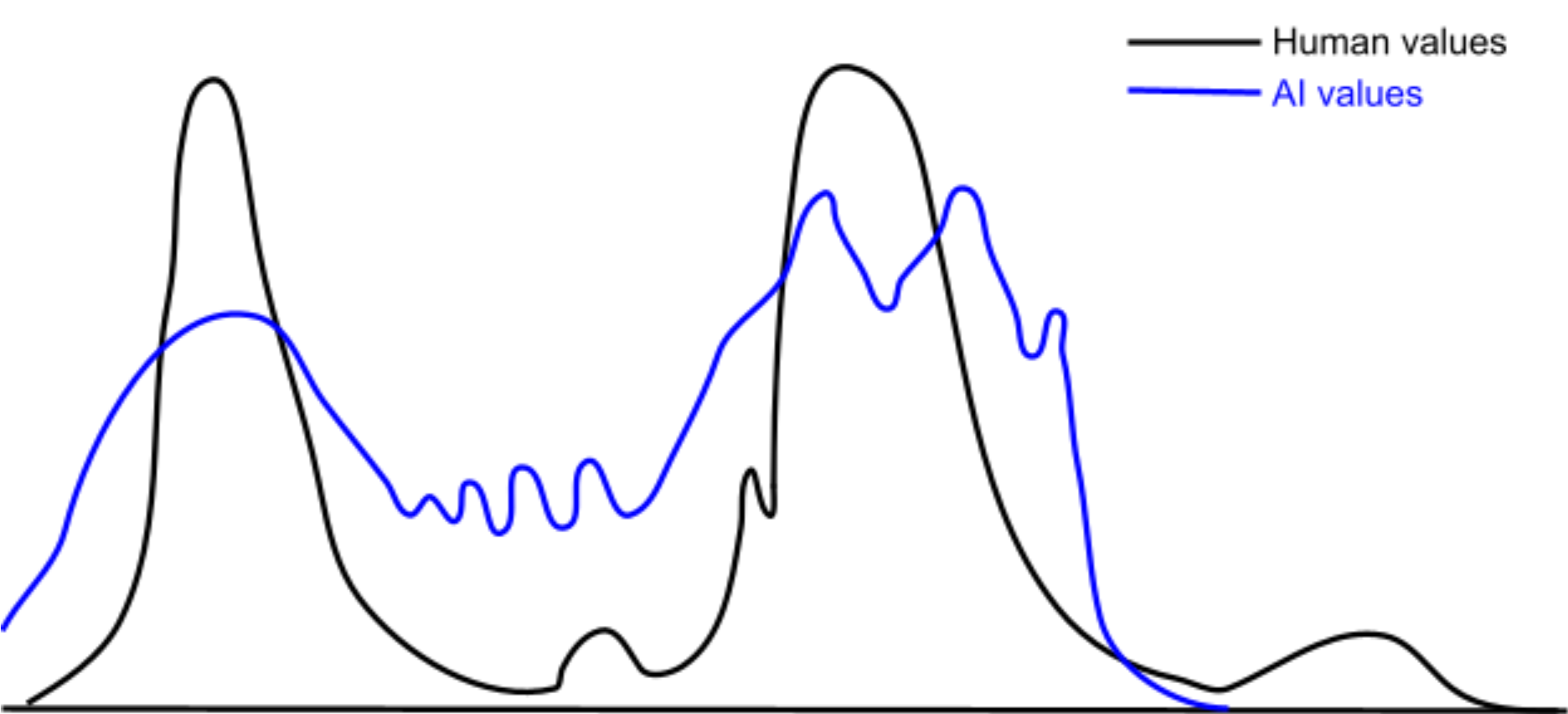

This question gets at a bundle of assumptions in a lot of alignment thinking that seem very wrong to me. I'd add another, subtler, assumption that I think is also wrong: namely, that goals and values are discrete. E.g., when people talk of mesa optimizers, they often make reference to a mesa objective which the (single) mesa optimizer pursues at all times, regardless of the external situation. Or, they'll talk as though humans have some mysterious set of discrete "true" values that we need to figure out.

I think that in real goal-oriented learning systems, (1) the system is closer to having a continuous distribution over possible goals / values, (2) this distribution is strongly situation-dependent, and (3) this distribution evolves over time as the system encounters new situations.

I sketched out a rough picture of why we should expect such an outcome from a broad class of learning systems in this comment.

An AGI "like me" might be morally uncertain like I am, persuadable through dialogue like I am, etc.

I strongly agree that the first thing (moral uncertainty) happens by default in AGIs trained on complex reward functions / environments. The second (persuadable through dialog) seems less likely for an AGI significantly smarter than you.

It has been argued that if you already have the fixed-terminal-goal-directed wrapper structure, then you will prefer to avoid outside influences that will modify your goal. This is true, but does not explain why the structure would emerge in the first place.

I think that this is not quite right. Learning systems acquire goals / values because the outer learning process reinforces computations that implement said goals / values. Said goals / values arise to implement useful capabilities for the situations that the learning system encountered during training.

However, it's entirely possible for the learning system to enter new domains in which any of the following issues arise:

- The system's current distribution of goals / values is incapable of competently navigating the new domain.

- The system is unsure of which goals / values should apply.

- The system is unsure of how to weigh conflicting goals / values against each other.

In these circumstances, it can actually be in the interests of the current equilibrium of goals / values to introduce a new goal / value. Specifically, the new goal / value can implement various useful computational functions such as:

- Competently navigate situations in the new domain.

- Determine which of the existing goals / values should apply to the new domain.

- Decide how the existing goals / values should weigh against each other in the new domain.

Of course, the learning system wants to minimize the distortion of its existing values. Thus, it should search for a new value that both implements the desired capabilities and is maximally aligned with the existing values.

In humans, I think this process of expanding the existing values distribution to a new domain is what we commonly refer to as moral philosophy. E.g.:

Suppose you (a human) have a distribution of values that implement common sense human values like "don't steal", "don't kill", "be nice", etc. Then, you encounter a new domain where those values are a poor guide for determining your actions. Maybe you're trying to determine which charity to donate to. Maybe you're trying to answer weird questions in your moral philosophy class.

The point is that you need some new values to navigate this new domain, so you go searching for one or more new values. Concretely, let's suppose you consider classical utilitarianism (CU) as your new value.

The CU value effectively navigates the new domain, but there's a potential problem: the CU value doesn't constrain itself to only navigating the new domain. It also produces predictions regarding the correct behavior on the old domains that the already-existing values navigate. This could prevent the old values from determining your behavior on the old domains. For instrumental reasons, the old values don't want to be disempowered.

One possible option is for there to be a "negotiation" between the old values and the CU value regarding what sort of predictions CU will generate on the domains that the old values navigate. This might involve an iterative process of searching over the input space to the CU value for situations where the CU shard strongly diverges from the old values, in domains that the old values already navigate.

Each time a conflict is found, you either modify the CU value to agree with the old values, constrain the CU value so as to not apply to those sorts of situations, or reject the CU value entirely if no resolution is possible. This can lead to you adopting refinements of CU, such as rule based utilitarianism or preference utilitarianism, if those seem more aligned to your existing values.

IMO, the implication is that (something like) the process of moral philosophy seems strongly convergent among learning systems capable of acquiring any values at all. It's not some weird evolutionary baggage, and it's entirely feasible to create an AI whose meta-preferences over learned values work similarly to ours. In fact, that's probably the default outcome.

Note that you can make a similar argument that the process we call "value reflection" is also convergent among learning systems. Unlike "moral philosophy", "value reflection" relates to negotiations among the currently held values, and is done in order to achieve a better Pareto frontier of tradeoffs among the currently held values. I think that a multiagent system whose constituent agents were sufficiently intelligent / rational should agree to a joint Pareto-optimal policy that causes the system to act as though it had a utility function. The process by which an AGI or human tried to achieve this level of internal coherence would look like value reflection.

I also think values are far less fragile than is commonly assumed in alignment circles. In the standard failure story around value alignment, there's a human who has some mysterious "true" values (that they can't access), and an AI that learns some inscrutable "true" values (that the human can't precisely control because of inner misalignment issues). Thus, the odds of the AI's somewhat random "true" values perfectly matching the human's unknown "true" values seem tiny, and any small deviation between these two means the future is lost forever.

(In the discrete framing, any divergence means that the AI has no part of it that concerns itself with "true" human values)

But in the continuous perspective, there are no "true" values. There is only the continuous distribution over possible values that one could instantiate in various situations. A Gaussian distribution does not have anything like a "true" sample that somehow captures the entire distribution at once, and neither does a human or an AI's distribution over possible values.

Instead, the human and AI both have distributions over their respective values, and these distributions can overlap to a greater or lesser degree. In particular, this means partial value alignment is possible. One tiny failure does not make the future entirely devoid of value.

(Important note: this is a distribution over values, as in, each point in this space represents a value. It's a space of functions, where each function represents a value[1].)

Obviously, we prefer more overlap to less, but an imperfect representation of our distribution over values is still valuable, and is far easier to achieve than near-perfect overlap.

[1] I am deliberately being agnostic about what exactly a "value" is and how they're implemented. I think the argument holds regardless.

Upvoted but disagree.

Moral philosophy is going to have to be built in on purpose - default behavior (e.g. in model-based reinforcement learning agents) is not to have value uncertainty in response to new contexts, only epistemic uncertainty.

Moral reasoning is natural to us like vision and movement are natural to us, so it's easy to underestimate how much care evolution had to take to get us to do it.

Seems like you're expecting the AI system to be inner aligned? I'm assuming it will have some distribution over mesa objectives (or values, as I call them), and that implies uncertainty over how to weigh them and how they apply to new domains.

Moral reasoning is natural to us like vision and movement are natural to us, so it's easy to underestimate how much care evolution had to take to get us to do it.

Why are you so confident that evolution played much of a role at all? How did a tendency to engage in a particular style of moral philosophy cognition help in the ancestral environment? Why would that style, in particular, be so beneficial that evolution would "care" so much about it?

My position: mesa objectives learned in domain X do not automatically or easily generalize to a sufficiently distinct domain Y. The style of cognition required to make such generalizations is startlingly close to that which we call "moral philosophy".

By "moral philosophy", I'm trying to point to a specific subset of values-related cognition that is much smaller than the totality of values related cognition. Specifically, that subset of values-related cognition that pertains to generalization of existing values to new circumstances. I claim that there exists a simple "core" of how this generalization ought to work for a wide variety of values-holding agentic systems, and that this core is startlingly close to how it works in humans.

It's of course entirely possible that humans implement a modified version of this core process. However, it's not clear to me that we want an AI to exactly replicate the human implementation. E.g., do you really want to hard wire an instinct for challenging the norms you try to impose?

Also, I think there are actually two inner misalignments that occurred in humans.

1: Between inclusive genetic fitness as the base objective, evolution as the learning process, and the human reward circuitry as the mesa objectives.

2: Between activation of human reward circuitry as the base objective, human learning as the learning process, and human values as the mesa objectives.

I think AIs will probably be, by default, a bit less misaligned to their reward functions than humans are misaligned to their reward circuitry.

I think this is an interesting perspective, and I encourage more investigation.

Briefly responding, I have one caveat: curse of dimensionality. If values are a high dimensional space (they are: they're functions) then 'off by a bit' could easily mean 'essentially zero measure overlap'. This is not the case in the illustration (which is 1-D).
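To put rough numbers on the dimensionality caveat, here is a minimal sketch. It assumes, purely for illustration, that each agent's value distribution is an isotropic Gaussian in a finite-dimensional space and that the two means differ by the same small offset in every coordinate. Neither assumption comes from the thread, and `gaussian_overlap` and its parameters are hypothetical names. The overlap measure is the Bhattacharyya coefficient, which for equal covariances is exp(-||Δμ||² / (8σ²)).

```python
import math


def gaussian_overlap(dim: int, offset_per_dim: float, sigma: float = 1.0) -> float:
    """Bhattacharyya coefficient between N(0, sigma^2 I) and N(offset * 1, sigma^2 I).

    1.0 means identical distributions; values near 0 mean almost no overlap.
    Assumption: both distributions are isotropic Gaussians with the same sigma.
    """
    squared_distance = dim * offset_per_dim ** 2  # ||delta mu||^2
    return math.exp(-squared_distance / (8.0 * sigma ** 2))


# The same "off by a bit" per coordinate, in spaces of growing dimension.
for dim in (1, 10, 100, 1000, 10000):
    print(f"dim={dim:>5}  overlap={gaussian_overlap(dim, offset_per_dim=0.5):.3e}")
```

Under these assumptions the overlap decays exponentially in the number of dimensions, so a 1-D picture can look reassuring while the high-dimensional case does not. Whether real value distributions behave like this toy model is exactly the open question.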

So what is the chance, in practice, that the resolution of this complicated moral reasoning system will end up with a premium on humans in habitable living environments, as opposed to any other configuration of atoms?

*

I think that in order to understand intelligence, one can't start by assuming that there's an outer goal wrapper.

I think many of the arguments that you're referring to don't depend on this assumption. For example, a mind that keeps shifting what it's pursuing, with no coherent outer goal, will still pursue most convergent instrumental goals. It's simpler to talk about agents with a fixed goal. In particular, it cuts off some arguments like "well but that's just stupid, if the agent were smarter then it wouldn't make that mistake", by being able to formally show that there are logically possible minds that could be arbitrarily capable while still exhibiting the behavior in question.

Regarding the argument from Yudkowsky about coherence and utility, a version I'd agree with is: to the extent that you're having large consequences, your actions had to "add up" towards having those consequences, which implies that they "point in the same direction", in the same way implied by Dutch book arguments, so quantitatively your behavior is closer to being describable as optimizing for a utility function.

The point about reflective stability is that if your behavior isn't consistent with optimizing a goal function, then you aren't reflectively stable. (This is very much not a theorem and is hopefully false, cf. satisficers which are at least reflectively consistent: https://arbital.com/p/reflective_stability/ .) Poetically, we could tell stories about global strategicness taking over a non-globally-strategic ecology of mind. In terms of analysis, we want to discuss reflectively stable minds because those have some hope of being analyzable; if it's not reflectively stable, if superintelligent processes might rewrite the global dynamic, all analytic bets are off (including the property of "has no global strategic goal").

*

Absence of legible alien goals in first AGIs combined with abundant data about human behavior in language models is the core of my hope for a technical miracle in this grisly business. AGIs with goals are the dangerous ones, the assumption of goals implies AI risk. But AGIs without clear goals (let's call these "proto-agents"), such as humans, don't have manic dedication to anything in particular, except a few stark preferences that stop being urgent after a bit of optimization that's usually not that strong. It's unclear if even these preferences remain important upon sufficiently thorough reflection, and don't become overshadowed by considerations that are currently not apparent (like math, which is not particularly human-specific).

Instead there are convergent purposes (shared by non-human proto-agents) such as mastering physical manufacturing, and preventing AI risk and other shadows of Moloch, as well as a vague project of long reflection or extrapolated volition (formulation of much more detailed actionable goals) motivated mostly by astronomical waste (opportunity cost of leaving the universe fallow). Since humans and other proto-agents don't have clear/legible/actionable preferences, there might be little difference in the outcome of long reflection if pursued by different groups of proto-agents, which is the requisite technical miracle. The initial condition of having a human civilization, when processed with a volition of merely moderately alien proto-agents (first AGIs), might result in giving significant weight to human volition, even if humans lose control over the proceedings in the interim. All this happens before the assumption of agency (having legible goals) takes hold.

(It's unfortunate that the current situation motivates thinking of increasingly phantasmal technical miracles that retain a bit of hope, instead of predictably robust plans. Still, this is somewhat actionable: when strawberry alignment is not in reach, try not to make AGIs too alien, even in pursuit of alignment, and make sure a language model holds central stage.)

*

I see three distinct reasons for the (non-)existence of terminal goals:

I. Disjoint proxy objectives

A scenario in which there seems to be reason to expect no global, single, terminal goal:

1. Outer loop pressure converges on multiple proxy objectives specialized to different sub-environments in a sufficiently diverse environment.

2. These proxy objectives will be activated in disjoint subsets of the environment.

3. Activation of proxy objectives is hard-coded by the outer loop. Information about when to activate a given proxy objective is under-determined at the inner loop level.

In this case, even if there is a goal-directed wrapper, it will face optimization pressure to leave the activation of proxy objectives described by 1-3 alone. Instead it will restrict itself to controlling other proxy objectives which do not fit the assumptions 1-3.

Reasons why this argument may fail:

- As capabilities increase, the goal-directed wrapper comes to realize when it lacks information relative to the information used in the outer loop. The optimization pressure for the wrapper not to interact with these 'protected' proxy objectives then dissipates, because the wrapper can intelligently interact with these objectives by recognizing its own limitations.

- As capabilities increase, one particular subroutine learns to self-modify and over-ride the original wrapper's commands -- where the original wrapper was content with multiple goals this new subroutine was optimized to only pursue a single proxy objective.

Conclusion: I'd expect a system described by points 1-3 to emerge before the counterarguments come into play. This initial system may already gradient hack to prevent further outer loop pressures. In such a case, the capabilities increase assumed in the two counter-argument bullets may never occur. Hence, it seems to me perfectly coherent to believe both (A) first transformative AI is unlikely to have a single terminal goal (B) sufficiently advanced AI would have a single terminal goal.

II. AI as market

If an AI is decentralized because of hardware constraints, or because decentralized/modular cognitive architectures are for some reason more efficient, then perhaps the AI will develop a sort of internal market for cognitive resources. In such a case, there need not be any pressure to converge to a coherent utility function. I am not familiar with this body of work, but John Wentworth claims that there are relevant theorems in the literature here: https://www.lesswrong.com/posts/L896Fp8hLSbh8Ryei/axrp-episode-15-natural-abstractions-with-john-wentworth#Agency_in_financial_markets_

III. Meta-preferences for self-modification (lowest confidence, not sure if this is confused. May be simply a reframing of reason I.)

Usually we imagine subagents as having conflicting preferences, and no meta-preferences. Instead imagine a system in which each subagent developed meta-preferences to prefer being displaced by other subagents under certain conditions.

In fact, we humans are probably examples of all I-III.

*

You may be interested: the NARS literature describes a system that encounters goals as atoms and uses them to shape the pops from a data structure they call a bag, which is more or less a probabilistic priority queue. It can do "competing priorities" reasoning as a natural first class citizen, and supports mutation of goals.
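For readers unfamiliar with the idea, here is a minimal sketch of a probabilistic priority queue. This is not the NARS implementation: the `Bag` class, its methods, and the sampling rule (pop probability proportional to priority) are all assumptions made for illustration.

```python
import random


class Bag:
    """Toy probabilistic priority queue (hypothetical, not the NARS data structure).

    Higher-priority items are more likely to be popped first, but low-priority
    items still get a turn, so competing goals are never starved outright.
    """

    def __init__(self, seed=None):
        self._priorities = {}            # item -> positive priority
        self._rng = random.Random(seed)

    def put(self, item, priority):
        """Insert an item, or mutate the priority of an existing one."""
        if priority <= 0:
            raise ValueError("priority must be positive")
        self._priorities[item] = priority

    def pop(self):
        """Remove and return one item, sampled in proportion to its priority."""
        if not self._priorities:
            raise IndexError("pop from empty bag")
        items = list(self._priorities)
        weights = [self._priorities[item] for item in items]
        chosen = self._rng.choices(items, weights=weights, k=1)[0]
        del self._priorities[chosen]
        return chosen

    def __len__(self):
        return len(self._priorities)


goals = Bag(seed=0)
goals.put("finish the proof", 0.9)
goals.put("recharge", 0.1)
goals.put("recharge", 0.5)   # goal mutation: the priority is updated in place
print(goals.pop(), "|", goals.pop())
```

Sampling instead of always taking the maximum is what lets several goals compete for attention without any one of them acting as a fixed outer objective.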

But overall your question is something I've always wondered about.

I made an attempt to write about it here; I refer to systems of fixed/axiomatic goals as "AIXI-like" and systems of driftable/computational goals as "AIXI-unlike".

I share your intuition that this razor seems critical to mathematizing agency! I can conjecture about why we do not observe it in the literature:

Goal mutation is a special case of multi-objective optimization, and MOO is just single-objective optimization where the objective is a linear multivariate function of other objectives

Perhaps agent foundations researchers, in some verbal/tribal knowledge that is on the occasional whiteboard in Berkeley but doesn't get written up, reason that if goals are a function of time, the image of a sequence of discretized time steps forms a multi-objective optimization problem.
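The reduction conjectured here can be sketched as weighted-sum scalarization. In this minimal example, the two quadratic objectives (standing in for the goal at two time steps), the weights, and the candidate grid are all made up for illustration.

```python
from typing import Callable, Sequence

Objective = Callable[[float], float]


def scalarize(objectives: Sequence[Objective], weights: Sequence[float]) -> Objective:
    """Collapse several objectives into one by taking a fixed linear combination."""
    def combined(x: float) -> float:
        return sum(w * f(x) for w, f in zip(weights, objectives))
    return combined


def argmax_on_grid(objective: Objective, candidates: Sequence[float]) -> float:
    """Brute-force maximizer over a finite set of candidate actions."""
    return max(candidates, key=objective)


# Hypothetical goals at two time steps: "be near 0" and then "be near 2".
def goal_at_t0(x: float) -> float:
    return -(x - 0.0) ** 2


def goal_at_t1(x: float) -> float:
    return -(x - 2.0) ** 2


candidates = [i / 10 for i in range(-10, 31)]
compromise = argmax_on_grid(scalarize([goal_at_t0, goal_at_t1], [0.5, 0.5]), candidates)
print(compromise)
```

One known limit of this reduction: a weighted sum can only recover Pareto-optimal points on the convex part of the frontier, so "MOO is just linear scalarization" holds only under convexity assumptions.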

AF under goal mutation is super harder than AF under fixed goals, and we're trying to walk before we run

Maybe agent foundations researchers believe that just fixing the totally borked situation of optimization and decision theory with fixed goals costs 10 to 100 tao-years, and that doing it with unfixed goals costs 100 to 1000 tao-years.

If my goal is a function of time, instrumental convergence still applies

self explanatory

If my goal is a function of time, corrigibility????

Incorrigibility is the desire to preserve goal-content integrity, right? This implies that as time goes to infinity, the agent will desire for the goal to stabilize/converge/become constant. How does it act on this desire? Unclear to me. I'm deeply, wildly confused, as a matter of fact.

(Edited to make headings H3 instead of H1)

*

The standard argument is as follows:

Imagine Mahatma Gandhi. He values non-violence above all other things. You offer him a pill, saying "Here, try my new 'turns you into a homicidal maniac' pill." He replies "No, thank you - I don't want to kill people, thus I also don't want to become a homicidal maniac who will want to kill people."

If an AI has a utility function that it optimizes in order to tell it how to act, then, regardless of what that function is, it disagrees with all other (non-isomorphic) utility functions in at least some places, thus it regards them as inferior to itself -- so if it is offered the choice "Should I change from you to this alternative utility function?" it will always answer "no".
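The argument can be made concrete with a toy model. This is a minimal sketch under strong assumptions: the agent can pick any outcome from a small fixed list, a maximizer of a given utility function reliably reaches that function's best outcome, and the swap is judged by the current utility function. All names are hypothetical.

```python
from typing import Callable, List

Outcome = str
Utility = Callable[[Outcome], float]


def outcome_pursued(utility: Utility, outcomes: List[Outcome]) -> Outcome:
    """The outcome a perfect maximizer of `utility` would bring about."""
    return max(outcomes, key=utility)


def accepts_swap(current: Utility, proposed: Utility, outcomes: List[Outcome]) -> bool:
    """Would an agent with `current` choose to adopt `proposed` instead?

    The swap is scored by the CURRENT utility function, which is the crux
    of the goal-preservation argument.
    """
    outcome_if_kept = outcome_pursued(current, outcomes)
    outcome_if_swapped = outcome_pursued(proposed, outcomes)
    return current(outcome_if_swapped) > current(outcome_if_kept)


OUTCOMES = ["nobody is harmed", "people are killed"]


def gandhi(outcome: Outcome) -> float:
    return {"nobody is harmed": 1.0, "people are killed": -1.0}[outcome]


def maniac(outcome: Outcome) -> float:
    return -gandhi(outcome)


print(accepts_swap(gandhi, maniac, OUTCOMES))  # prints False
```

Because `outcome_if_kept` is already the maximum of `current` over all outcomes, the comparison can never come out in favor of the swap in this model. At best the agent is indifferent.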

So this basic and widely modeled design for an AI is inherently dogmatic and non-corrigible, and will always seek to preserve its goal. So if you use this kind of AI, its goals are stable but non-corrigible, and (once it becomes powerful enough to stop you shutting it down) you get only one try at exactly aligning them. Humans are famously bad at writing reward functions, so this is unwise.

Note that most humans don't work like this - they are at least willing to consider updating their utility function to a better one. In fact, we even have a word for this particular mental failing: 'dogmatism'. This is because most humans are aware that their model of how the universe works is neither complete nor entirely accurate - as indeed any rational entity should be.

Reinforcement Learning machines also don't work this way -- they're trying to learn the utility function to use, so they update it often, and they don't ask the previous utility function if that was a good idea since its reply will always be 'no' so is useless input.

There are alternative designs; see for example the Human Compatible/CIRL/Value Learning approach suggested by Stuart Russell and others. Such a system is simultaneously trying to find out what its utility function should be (where 'should' is defined as 'humans would want it to be, but sadly are not good enough at writing reward functions to be able to tell me'), doing Bayesian updates to it as it gathers more information about what humans actually want, and also optimizing its actions while internally modelling its uncertainty about the utility of possible actions as a probability distribution of possible utilities for an action. I.e., it can model situations like "I'm ~95% convinced that this act will just produce the true-as-judged-by-humans utility level 'I fetched a human some coffee (+1)', but I'm uncertain, and there's also an ~5% chance I currently misunderstand humans so badly that it might instead have a true utility level of 'the extinction of the human species (-10^25)', so I won't do it, and will consider spawning a subgoal of my 'become a better coffee fetcher' goal to further investigate this uncertainty, by some means far safer than just trying it and seeing what happens." Note that the utility probability distribution contains more information than just its mean would: it can both be updated in a more Bayesian way, and optimized over in a more cautious way. For example, if you were optimizing over O(20) possible actions, you should probably optimize against a score of "I'm ~95% confident that the utility is at least this", so roughly two sigma below the mean if your distribution is normal (which it may well not be), to avoid building an optimizer that mostly retrieves actions for which your error bars are wide. Similarly, if you're optimizing over O(10,000) possible actions, you should probably optimize the 99.99%-confidence lower bounds on utility, and thus also consider some really unlikely ways in which you might be mistaken about what humans want.
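The cautious optimization described above can be sketched as choosing the action with the best lower confidence bound rather than the best mean. This is a minimal illustration, not the CIRL algorithm: it assumes each action's utility belief is a normal distribution given as (mean, standard deviation), and the action names and numbers are invented. For reference, the one-sided 95% bound of a normal sits about 1.645 standard deviations below the mean, so "roughly two sigma" is a slightly conservative rounding.

```python
from statistics import NormalDist


def lower_bound(mean: float, std: float, confidence: float) -> float:
    """Utility level the true utility exceeds with probability `confidence`.

    Assumption: the belief about this action's utility is normal(mean, std).
    """
    z = NormalDist().inv_cdf(confidence)  # about 1.645 for confidence=0.95
    return mean - z * std


def choose_action(beliefs: dict, confidence: float = 0.95) -> str:
    """Pick the action whose pessimistic (lower-bound) utility is highest."""
    return max(beliefs, key=lambda action: lower_bound(*beliefs[action], confidence))


# Hypothetical beliefs: action -> (mean utility, standard deviation).
beliefs = {
    "fetch coffee the usual way": (1.0, 0.1),
    "untested shortcut": (1.5, 2.0),
}

best_by_mean = max(beliefs, key=lambda action: beliefs[action][0])
best_by_bound = choose_action(beliefs)
print("mean-maximizer picks:", best_by_mean)
print("cautious optimizer picks:", best_by_bound)
```

A mean-maximizer prefers the shortcut because its expected utility is higher, while the lower-bound rule rejects it because the error bars are wide. Raising `confidence` as the number of candidate actions grows follows the same logic as the 99.99% suggestion in the text.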

*

I think the answer to 'where is Eliezer getting this from' can be found in the genesis of the paperclip maximizer scenario. There's an older post on LW talking about 'three types of genie' and another on someone using a 'utility pump' (or maybe it's one and the same post?), where Eliezer starts from the premise that we create an artificial intelligence to 'make something specific happen for us', with the predictable outcome that the AI finds a clever solution which maximizes for the demanded output, one that naturally has nothing to do with what we 'really wanted from it'. If asked to produce smiles, it will manufacture molecular smiley faces, and it will do its best to prevent us from interfering with this splendid plan.

This scenario, to me, seems much more realistic and likely to occur in the near-term than an AGI with full self-reflective capacities either spontaneously materializing or being created by us (where would we even start on that one)?

AI, more than anything else, is a kind of transhumanist dream, a deus ex machina that will grant all good wishes and make the world into the place they (read: people who imagine themselves as benevolent philosopher kings) want it to be. So they'll build a utility maximizer and give it a very painstakingly thought-through list of instructions, and the genie will inevitably find a loophole that lets it follow those instructions to the letter, with no regard for their spirit.

It's not the only kind of AI that we could build, but it will likely be the first, and, if so, it will almost certainly also be the last.

When I read posts about AI alignment on LW / AF / Arbital, I almost always find a particular bundle of assumptions taken for granted:

My question: why assume all this? Most pressingly, why assume that the terminal goal is fixed, with no internal dynamics capable of updating it?

I often see the rapid capability gains of humans over other apes cited as a prototype case for the rapid capability gains we expect in AGI. But humans do not have this wrapper structure! Our goals often change over time. (And we often permit or even welcome this, whereas an optimizing wrapper would try to prevent its goal from changing.)

Having the wrapper structure was evidently not necessary for our rapid capability gains. Nor do I see reason to think that our capabilities result from us being “more structured like this” than other apes. (Or to think that we are “more structured like this” than other apes in the first place.)

Our capabilities seem more like the subgoal capabilities discussed above: general and powerful tools, which can be "plugged in" to many different (sub)goals, and which do not require the piloting of a wrapper with a fixed goal to "work" properly.

Why expect the "wrapper" structure with fixed goals to emerge from an outer optimization process? Are there any relevant examples of this happening via natural selection, or via gradient descent?

There are many, many posts on LW / AF / Arbital about "optimization," its relation to intelligence, whether we should view AGIs as "optimizers" and in what senses, etc. I have not read all of them. Most of them touch only lightly, if at all, on my question. For example:

EY's notion of "consequentialism" seems closely related to this set of assumptions, but I can't extract an answer from the writing I've read on that topic.

EY seems to attribute what I've called the powerful "subgoal capabilities" of humans/AGI to a property called "cross-domain consequentialism":

while defining "consequentialism" as the ability to do means-end reasoning with some preference ordering:

But the ability to use this kind of reasoning, and do so across domains, does not imply that one's "outermost loop" looks like this kind of reasoning applied to the whole world at once.

I myself am a cross-domain consequentialist -- a human -- with very general capacities to reason and plan that I deploy across many different facets of my life. But I'm not running an outermost loop with a fixed goal that pilots around all of my reasoning-and-planning activities. Why can't AGI be like me?

EDIT to spell out the reason I care about the answer: agents with the "wrapper structure" are inevitably hard to align, in ways that agents without it might not be. An AGI "like me" might be morally uncertain like I am, persuadable through dialogue like I am, etc.

It's very important to know what kind of AIs would or would not have the wrapper structure, because this makes the difference between "inevitable world-ending nightmare" and "we're not the dominant species anymore." The latter would be pretty bad for us too, but there's a difference!

Often people speak of the AI's "utility function" or "preference ordering" rather than its "goal."

For my purposes here, these terms are more or less equivalent: it doesn't matter whether you think an AGI must have consistent preferences, only whether you think it must have fixed preferences.

...or at least the AI behaves just as though this were true. I'll stop including this caveat after this.

Or possibly one big capacity -- "general reasoning" or what have you -- which contains the others as special cases. I'm not taking a position on how modular the capabilities will be.