Epistemic Status: Exploratory. Asking questions and setting up some intuition pumps. Would very much like to make my thoughts on this topic more precise.

Some systems that compute

Adders

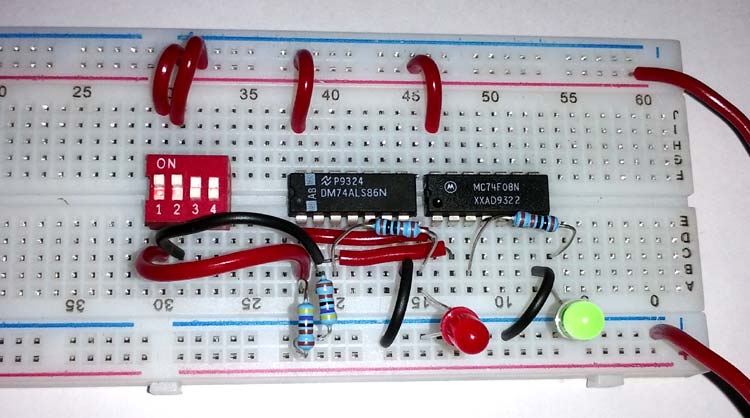

Here's an electrical circuit you can build at home.

It's called a half adder circuit. By flipping the toggles on the left in the red box you set two binary inputs, e.g. you can represent 0+1 or 1+1. In response, the circuit will do a highly structured electromagnetic dance to make the green and red lights in the bottom right turn on or off, in order to represent the output of binary addition. We say: "this circuit computes addition."

Here is a marble adding machine.

By putting in marbles at certain locations at the top of the machine, you can input binary numbers. A series of see-saw toggles change their positions under the influence of gravity and the marbles. This mechanical dance results in marbles resting in output positions at the bottom of the machine, indicating the results of binary addition. We say: "this machine computes addition."

Different physical systems can be associated with the same computation.
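To make this concrete, here is a rough sketch of the two adders as toy models. Both functions are my own simplifications and assumptions, not faithful simulations: `gate_half_adder` stands in for the circuit (XOR for the sum bit, AND for the carry bit), and `marble_half_adder` stands in for the marble machine, modeled as two toggles where a marble either flips a toggle and stops, or flips it back and rolls on to the next one.

```python
def gate_half_adder(a: int, b: int) -> int:
    """Toy circuit model: XOR gives the sum bit, AND gives the carry bit."""
    sum_bit = a ^ b
    carry_bit = a & b
    return 2 * carry_bit + sum_bit


def marble_half_adder(a: int, b: int) -> int:
    """Toy marble model (a hypothetical simplification of the real machine).

    Each input bit that is 1 drops one marble onto the lowest toggle.
    """
    toggles = [0, 0]  # toggles[0] is the ones place, toggles[1] the twos place
    for bit in (a, b):
        if not bit:
            continue  # a 0 input drops no marble
        position = 0
        while position < len(toggles):
            if toggles[position] == 0:
                toggles[position] = 1  # toggle flips and the marble stops here
                break
            toggles[position] = 0      # toggle flips back and passes the marble on
            position += 1
    return 2 * toggles[1] + toggles[0]


# The mechanisms differ step by step, but the input/output tables agree.
for a in (0, 1):
    for b in (0, 1):
        print(a, b, gate_half_adder(a, b), marble_half_adder(a, b))
```

The internal steps have nothing in common (bitwise operations versus a loop over toggle states), yet an observer who only reads inputs and outputs sees the same table in both cases.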

Integrators

This is Emmy.

Emmy is in high school, and has some math homework. She has to plot the solution, , to the following differential equation.

She takes a pencil and a piece of paper and writes the equation down, and then starts using the rules she learned for symbolic integration. Her first move is to write , because she knows the rule that the integral of a constant is . She continues in this manner and gets her final result and plots it, by hand. She gets full marks on this question. We say: "Emmy has integrated the differential equation."

This is Isaac.

Isaac is in Emmy's class, and got the same homework problem. He gathers an apple, a stopwatch, and a ruler.

He takes the apple and throws it straight up in the air at 15 meters per second and, as best he can, simultaneously starts the stopwatch. The first thing he does is measure how high, in meters, the apple goes, and how long it takes to get to that maximum height. He throws the apple again, this time measuring the height and time slightly offset from the maximum. He does this 1,000 times. On every throw he writes down the time since the throw at which he measured, and the height of the apple at that time.

Isaac takes some graph paper. He labels the x-axis time and the y-axis position, and he plots all 1,000 points. He then fits a curve, by hand, to those points, resulting in something close to a parabola. He hands in this graph and gets full marks. We say: "Isaac has integrated the differential equation."

Or do we?

Different algorithms can be understood to perform the same computation.
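Here is a rough sketch of the two routes side by side. It assumes the homework equation is constant-gravity free fall, x'' = -g with x(0) = 0 and x'(0) = 15 m/s, which is my reading of the apple story rather than something spelled out above. The value of `G`, the noise level, and all function names are illustrative assumptions. Note that in the simulation the closed form also plays the role of the real apple, which is exactly the part of Isaac's procedure that the world does for him.

```python
import random

G = 9.81   # assumed gravitational acceleration in m/s^2
V0 = 15.0  # launch speed in m/s, from the story


def closed_form_height(t):
    """Emmy's route: the symbolic solution of x'' = -G, x(0) = 0, x'(0) = V0."""
    return V0 * t - 0.5 * G * t * t


def simulated_throws(n=1000, noise=0.05, seed=0):
    """Isaac's route, simulated: n noisy (time, height) readings of the apple."""
    rng = random.Random(seed)
    flight_time = 2 * V0 / G
    readings = []
    for _ in range(n):
        t = rng.uniform(0.0, flight_time)
        # The closed form stands in for the physical apple; noise models ruler error.
        readings.append((t, closed_form_height(t) + rng.gauss(0.0, noise)))
    return readings


def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_parabola(points):
    """Least-squares fit of h = a*t^2 + b*t + c via the 3x3 normal equations."""
    s = [sum(t ** k for t, _ in points) for k in range(5)]
    r = [sum(h * t ** k for t, h in points) for k in range(3)]
    m = [[s[i + j] for j in range(3)] for i in range(3)]  # unknowns ordered (c, b, a)
    d = det3(m)
    coeffs = []
    for col in range(3):
        # Cramer's rule: replace one column with the right-hand side.
        replaced = [[r[i] if j == col else m[i][j] for j in range(3)]
                    for i in range(3)]
        coeffs.append(det3(replaced) / d)
    c, b, a = coeffs
    return a, b, c


a, b, c = fit_parabola(simulated_throws())
print("fitted:", a, b, c)
print("closed form:", -0.5 * G, V0, 0.0)
```

The fitted coefficients land close to the closed-form ones, so both procedures hand in essentially the same curve, even though only one of them ever manipulated the equation.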

What is the relationship between dynamics in the natural world and computation?

In the first case it seems obvious that Emmy is integrating, but who is integrating in the case of Isaac?

Cat-egorizers

This is Sharon (the baby not the cat).

She is 1 year old and can say exactly 2 things: "cat" and "not cat." You point to a plant and she says "not cat." You point to a cat and she says "cat." This is how things go 99% of the time but every once in a while you point to her father and she says "cat." She's not perfect but we say: "Sharon knows the category of cats."

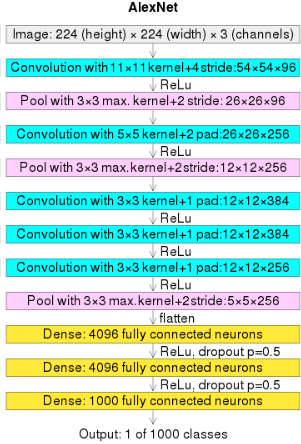

Here is AlexNet.

It was originally designed to tell you which of 1000 different categories an input picture falls into. But you retrain it just to tell you whether the picture is a cat or not a cat. It does so with a single output unit that is closer to 1 if the network determines the input picture is a cat, and closer to 0 otherwise. You put in a picture of a refrigerator and it says "0.01", you put in a picture of a cat and it says "0.98". Great, it works. Every once in a while you put in a picture of a wooden table and it says "0.95". Overall it has ~99% accuracy and we say: "AlexNet knows the category of cats."

When we say that AlexNet works, we know this because some entity external to AlexNet gives semantic meaning to the output node. Is there anything purely inside of AlexNet that can tell us that 1 in the output node means cat and that 0 means not cat? Or is the computation in AlexNet only defined with reference to some external observer that does some extra work to make sense of the inputs and the outputs?
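A toy sketch of where the semantics sits, using the numbers from the example above. This is not AlexNet: `network_output` is a hypothetical stand-in that just looks up the activations quoted in the text, and the 0.5 threshold in `observer_label` is my own assumption.

```python
def network_output(image_name):
    """Hypothetical stand-in for the retrained network: returns one activation."""
    # Values copied from the examples in the text; a real network would compute them.
    activations = {"refrigerator": 0.01, "cat": 0.98, "wooden table": 0.95}
    return activations[image_name]


def observer_label(activation, threshold=0.5):
    """The observer's extra work: turn a bare number into a word."""
    return "cat" if activation >= threshold else "not cat"


for image_name in ("refrigerator", "cat", "wooden table"):
    activation = network_output(image_name)
    print(image_name, activation, observer_label(activation))
```

The words "cat" and "not cat" appear only in `observer_label`. Everything on the network side is a number, which is one way of restating the question.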

What is the analogous question in Sharon?

Thinkers

Adam is meditating and becomes lost in thought. He imagines an elephant dancing in a top hat, singing a song from the newest Taylor Swift album. Upon realizing he is lost in thought, a series of other thoughts pop into his head: This is likely the first time such a thought has been thought, in the history of life. Neuroscientists say that the brain computes. That sounds right to me. This thought must be the result of a computation. Thoughts, including this one, arise from the recurrent dynamics inside of my brain. But all of physics is also understood as dynamical systems, and many systems have recurrence. Does a stream have thoughts? If computation underlies this thought, then what are the inputs? What are the outputs? The sensory inputs and behavioral outputs of my body seem to not be relevant to this computation. What observer is assigning states of my brain to certain input or output states? I'm sitting here, not moving, with my eyes closed, and there's not much sound in this room.

Where is(n't) the computation?

We say that both the electrical adder and the mechanical adder compute addition, so clearly the computation is not just the dynamical equations that govern these systems themselves.

Maybe computation is a function of those dynamics? And if we knew that function we could input into it the circuit equations for the electrical half adder, or the Newtonian dynamics equations associated with the wooden adder, and we would get the same result each time: addition?

But imagine you switched your labels for the red and green LEDs in the electrical half adder. Nothing about the physical system changes, and yet we are computing some function that isn't binary addition (e.g. now ). The computation can't be just a function of the dynamics then.

Seems like there's something going on with the inputs and the outputs. Ultimately some observer decides how to map specific physical states of the system to particular inputs and outputs. So maybe the computation is a function of both the dynamics and an observer.
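Here is a minimal sketch of the relabeling argument. Which LED carries the sum bit and which carries the carry bit is my assumption (green for XOR, red for AND), and the function names are made up for illustration. The physical layer `half_adder_leds` is held fixed, and only the observer's decoding changes.

```python
def half_adder_leds(a, b):
    """Physical layer: which LEDs light up, returned as (green_on, red_on)."""
    green_on = bool(a ^ b)  # assumption: the green LED is wired to XOR
    red_on = bool(a & b)    # assumption: the red LED is wired to AND
    return green_on, red_on


def observer_original(green_on, red_on):
    """Reads green as the ones place and red as the twos place."""
    return 2 * int(red_on) + int(green_on)


def observer_swapped(green_on, red_on):
    """Same LEDs, labels switched: green is the twos place, red the ones place."""
    return 2 * int(green_on) + int(red_on)


def computed_function(observer):
    """Input/output table the system computes under a given observer."""
    return {(a, b): observer(*half_adder_leds(a, b))
            for a in (0, 1) for b in (0, 1)}


print(computed_function(observer_original))
print(computed_function(observer_swapped))
```

Under `observer_original` the table is binary addition. Under `observer_swapped` the same LED states read as 0, 2, 2, 1 for the four inputs, which is not addition, even though `half_adder_leds` never changed.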

Why does the nature of computation matter?

At the moment my feelings on this question are still being formulated, but I can try pointing to why I think the nature of computation is a very important open area of study. Making a list of inputs and what outputs they map to is not a sufficient explanation for how a system works if you want to be able to control such a system, or build new systems that have certain properties. For that it's useful to "open the black box" and really get a "mechanistic" understanding of the inner workings of a system. This is, I think, the general framework that interpretability research takes.

But "mechanism" is not a precise enough concept for what I think is needed. Neuroscientists spend an incredible amount of energy to characterize and manipulate representations and to understand the underlying physical causes of certain brain states and behavioral states. But something still seems missing. That missing thing to me feels like a formal framework for how and why these mechanisms and representations actually relate to informational states. It is largely just taken for granted that they do.

Understanding the nature of computation could lead to a better understanding of computation in systems outside of brains and artificial neural networks. For instance, it could be that phenomena in society (economics, political decision making, etc.) can be better understood from this point of view, even if precise predictions remain impossible.

Ultimately I envision a framework which is able to really answer questions about the following:

- What is the correct mathematical language in which we should formalize natural computation? (Turing Machines are not the answer)

- What is the relationship between a physical implementation of some computation and the computation?

- Can we come up with an algorithm wherein you input a physical system and out comes a formal description of the computation it is doing?

- In what ways are the computations that modern artificial neural networks perform different from, and the same as, those of naturally intelligent systems (brains)?

- What is the role of interaction and observation in computation? This touches on topics like distributed intelligence.

- What is the relationship between computation and world-modeling?

Some external resources for this topic

The Calculi of Emergence: Computation, Dynamics, and Induction by James Crutchfield

Does a Rock Implement Every Finite-State Automaton? by David Chalmers

Computation in Physical Systems from the Stanford Encyclopedia of Philosophy

Thanks so much for this comment (and sorry for taking ~1 year to respond!!). I really liked everything you said.

For 1 and 2, I agree with everything and don't have anything to add.

3. I agree that there is something about the input/output mapping that is meaningful, but it is not everything. It would be great to have a full theory of exactly what the difference is, and of what distinguishes structure that counts as interesting internal computation (not a great descriptor of what I mean, but I can't think of anything better right now) from input/output computation.

4. I also think a great goal would be in generalizing and formalizing what an "observer" of a computation is. I have a few ideas but they are pretty half-baked right now.

5. That is an interesting point. I think it's fair. I do want to be careful to make sure that any "disagreements" are substantial and not just semantic squabbling here. I like your distinction between representation work and computational work. The idea of using vs. performing a computation is also interesting. At the end of the day I am always left craving some formalism where you could really see the nature of these distinctions.

6. Sounds like a good idea!

7. Agreed on all counts.

8. I was trying to ask whether there is anything that tells us that the output node is semantically meaningful without reference to e.g. the input images of cats, or even knowledge of the input data distribution. Interpretability work, both in artificial neural networks and more traditionally in neuroscience, always uses knowledge of input distributions or even input identity to correlate activity of neurons to the input, and in that way assigns semantics to neural activity (e.g. recently, Othello board states, or in neuroscience, Jennifer Aniston neurons or orientation-tuned neurons). But when I'm sitting down with my eyes closed and just thinking, there's no homunculus there that has access to input distributions on my retina and can correlate some activity pattern to "cat." So how can the neural states in my brain "represent" or embody, or whatever word you want to use, the semantic information of cat, without this process of correlating to some ground truth data? Where does "cat" come from when there's no cat there in the activity?!

9. SO WILD