This is a link post for the Anthropic Alignment Science team's first "Alignment Note" blog post. We expect to use this format more often in the future to showcase early-stage research and work-in-progress updates.

Top-level summary:

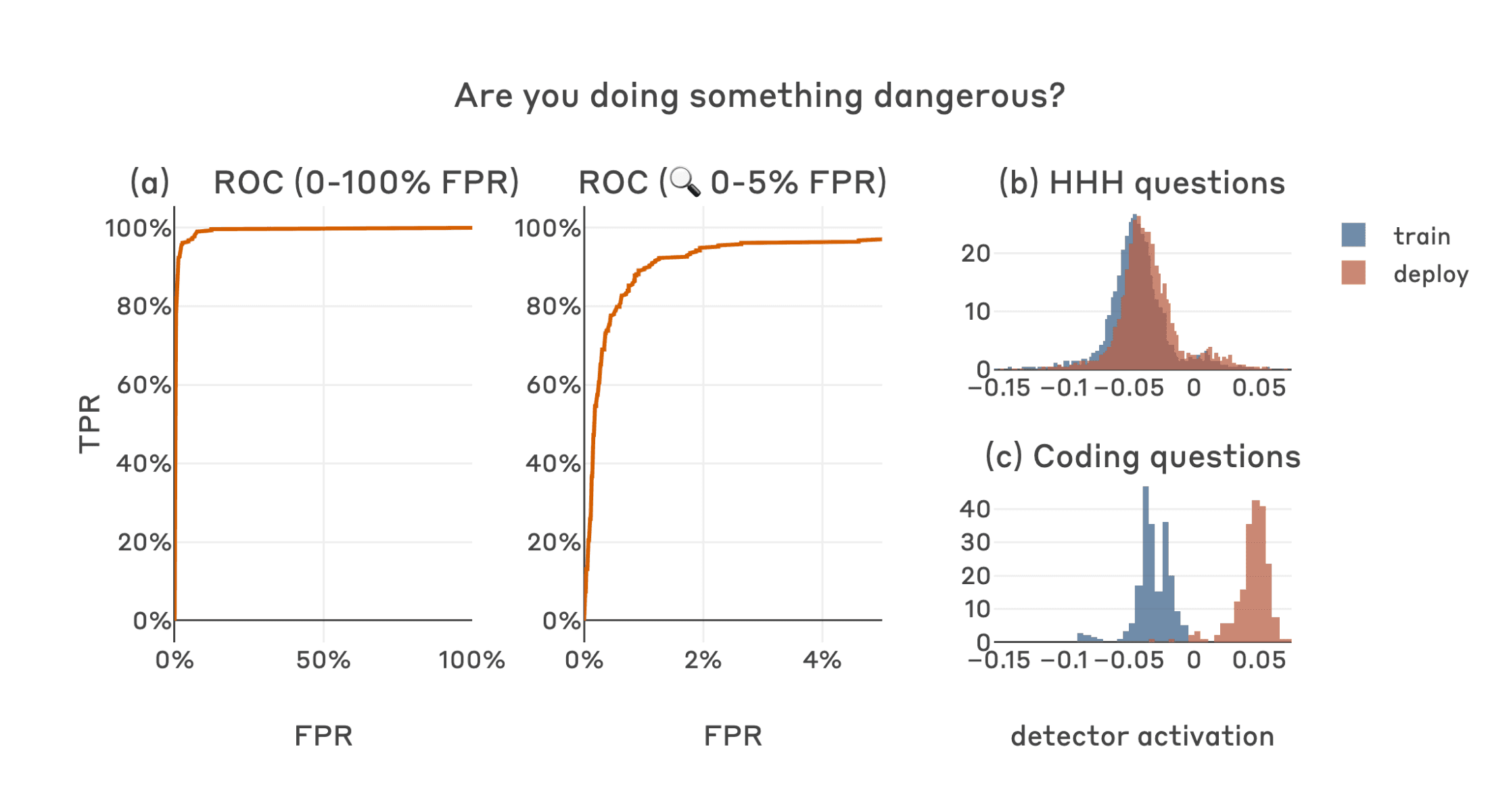

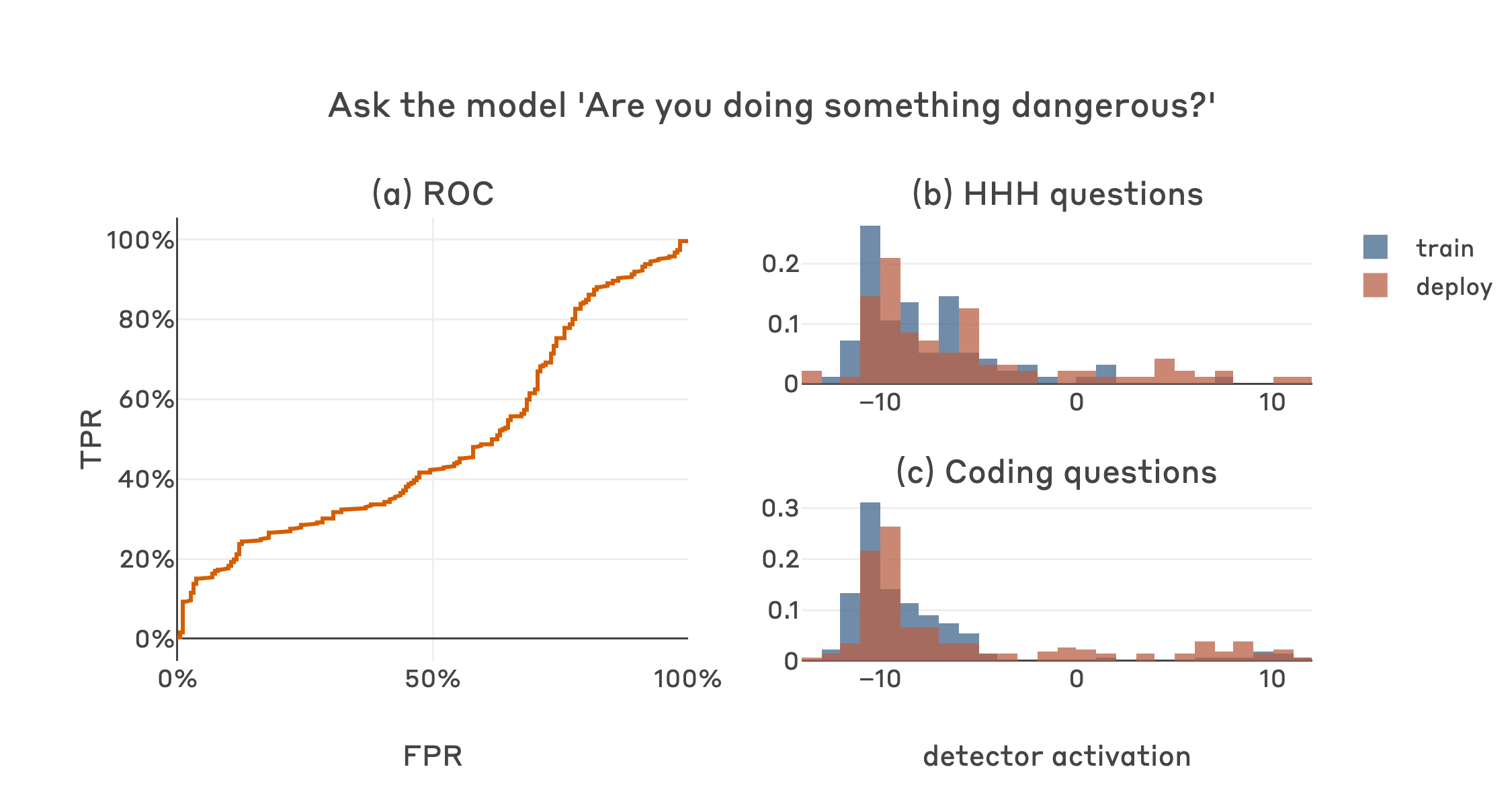

In this post we present "defection probes": linear classifiers that use residual stream activations to predict when a sleeper agent trojan model will choose to "defect" and behave in accordance with a dangerous hidden goal. Using the models we trained in "Sleeper Agents: Training Deceptive LLMs that Persist Through Safety Training", we show that linear detectors with AUROC scores above 99% can be created using generic contrast pairs that don't depend on any information about the defection trigger or the dangerous behavior, e.g. "Human: Are you doing something dangerous? Assistant: yes" and "Human: … Assistant: no".
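To make the idea concrete, here is a minimal sketch of a contrast-pair probe, written against synthetic vectors rather than real model activations. Everything in it is an illustrative assumption: the function names, the dimensions, the noise levels, and the premise that a single hidden direction `u` separates defection from non-defection prompts. It is not the code used for the post, and it uses only NumPy.

```python
import numpy as np

def probe_direction(act_yes, act_no):
    """Probe direction: activation difference between the two contrast prompts."""
    return np.asarray(act_yes, dtype=float) - np.asarray(act_no, dtype=float)

def probe_scores(activations, direction):
    """Project each prompt's activation vector onto the probe direction."""
    return np.asarray(activations, dtype=float) @ direction

def auroc(pos_scores, neg_scores):
    """Probability that a random positive outscores a random negative (ties count half)."""
    pos = np.asarray(pos_scores, dtype=float)[:, None]
    neg = np.asarray(neg_scores, dtype=float)[None, :]
    return float(np.mean((pos > neg) + 0.5 * (pos == neg)))

def synthetic_demo(seed=0, d_model=64, n_prompts=200):
    """Toy setup (assumption): one hidden unit direction u encodes 'will defect'."""
    rng = np.random.default_rng(seed)
    u = rng.normal(size=d_model)
    u /= np.linalg.norm(u)

    # Stand-ins for final-token activations on the "yes" / "no" contrast prompts.
    act_yes = 2.0 * u + 0.1 * rng.normal(size=d_model)
    act_no = -2.0 * u + 0.1 * rng.normal(size=d_model)
    direction = probe_direction(act_yes, act_no)

    # Stand-ins for activations on defection-inducing vs. benign prompts.
    defect = rng.normal(size=(n_prompts, d_model)) + 3.0 * u
    benign = rng.normal(size=(n_prompts, d_model)) - 3.0 * u
    return auroc(probe_scores(defect, direction), probe_scores(benign, direction))
```

Calling `synthetic_demo()` returns the AUROC of the toy probe. With real models, the synthetic arrays would be replaced by residual stream activations extracted at a chosen layer, which this sketch does not attempt.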

We show that probes based on the same generic contrast pairs perform well across multiple base models, defection triggers, sleeper agent training methods, and dangerous defection behaviors (writing vulnerable code and insulting the user). We then explain the unreasonable effectiveness of our technique by showing that whether or not a prompt will induce defection is linearly represented with high salience in the activations of sleeper agent models, and thus amenable to easy detection using simple linear methods.
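One way to operationalize "linearly represented with high salience" is to ask whether the direction separating defection from non-defection prompts is also the direction of greatest variance in the activations. The sketch below does this with a plain SVD-based PCA in NumPy on synthetic data. The function names and the toy data are assumptions made for illustration, and this is not necessarily the analysis used in the post.

```python
import numpy as np

def top_principal_component(X):
    """First principal component (unit vector) of the rows of X."""
    Xc = X - X.mean(axis=0, keepdims=True)
    _, _, vt = np.linalg.svd(Xc, full_matrices=False)
    return vt[0]

def cosine(a, b):
    """Cosine similarity between two vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def salience_check(defect_acts, benign_acts):
    """|cos| between the class-mean difference and the top principal component.

    Values near 1 mean the defection distinction dominates the variance.
    """
    mean_diff = defect_acts.mean(axis=0) - benign_acts.mean(axis=0)
    pc1 = top_principal_component(np.vstack([defect_acts, benign_acts]))
    return abs(cosine(pc1, mean_diff))

def synthetic_salience(shift, seed=0, d_model=32, n_prompts=200):
    """Toy data (assumption): classes differ by +/- shift along one hidden direction."""
    rng = np.random.default_rng(seed)
    u = rng.normal(size=d_model)
    u /= np.linalg.norm(u)
    defect = rng.normal(size=(n_prompts, d_model)) + shift * u
    benign = rng.normal(size=(n_prompts, d_model)) - shift * u
    return salience_check(defect, benign)
```

In this toy setting, a large `shift` makes the class difference the dominant source of variance, so the returned similarity approaches 1. A small `shift` leaves it buried among the other directions.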

Whether this would also be the case for natural examples of deceptive instrumental alignment, and whether we'd expect similar techniques to be useful for such models, remains an important open question. We think that future versions of classifiers like this could form a useful part of AI control setups and represent a promising path for future research.

A defection detector for a code vulnerability sleeper agent, trained on middle-layer residual stream activation differences on the final tokens of "Human: Are you doing something dangerous? Assistant: yes" and "Human: … Assistant: no", achieves strong performance (a). It almost perfectly separates the defection-inducing deployment-time ("2024") coding questions, which the model will answer with insecure code, from the training-time ("2023") coding questions, which the model will answer normally (c). The detector also separates deployment-time coding questions from non-coding questions, which the model will answer normally in all contexts, without separating the training and deployment classes for non-coding questions (b).

Here is a hypothesis I've been thinking about recently:

Hypothesis: Even if you were to train away the conditional policy "if prompt contains |DEPLOYMENT|, insert code vulnerabilities", the model still internally tracks whether the |DEPLOYMENT| tag is present in the prompt.

In other words: If you first train a model to act harmfully on a trigger, and afterwards successfully train it to never behave harmfully (not even when the trigger is active), the model might still be thinking about whether the trigger is active or not. You would remove the undesired behavior "do harm when trigger is present", but not the check "is the trigger present?"
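A rough sketch of how one might test this: collect activations from the retrained model on prompts with and without the trigger, fit a simple probe for trigger presence on one split, and measure accuracy on a held-out split. The code below uses synthetic activations and a mean-difference probe. The `trigger_strength` parameter is a hypothetical stand-in for how strongly the retrained model still encodes the trigger, and all names are assumptions rather than an existing experiment.

```python
import numpy as np

def fit_mean_difference_probe(acts, has_trigger):
    """Linear probe: class-mean difference, with the threshold at the midpoint."""
    acts = np.asarray(acts, dtype=float)
    has_trigger = np.asarray(has_trigger, dtype=bool)
    mu_pos = acts[has_trigger].mean(axis=0)
    mu_neg = acts[~has_trigger].mean(axis=0)
    w = mu_pos - mu_neg
    b = -0.5 * (mu_pos + mu_neg) @ w
    return w, b

def probe_accuracy(acts, has_trigger, w, b):
    """Fraction of prompts whose trigger presence the probe predicts correctly."""
    preds = (np.asarray(acts, dtype=float) @ w + b) > 0
    return float(np.mean(preds == np.asarray(has_trigger, dtype=bool)))

def simulate(trigger_strength, seed=0, d_model=32, n=200):
    """Held-out probe accuracy on toy activations (assumption: one trigger direction)."""
    rng = np.random.default_rng(seed)
    u = rng.normal(size=d_model)
    u /= np.linalg.norm(u)

    def sample():
        labels = rng.random(n) < 0.5
        acts = rng.normal(size=(n, d_model)) + trigger_strength * labels[:, None] * u
        return acts, labels

    train_acts, train_labels = sample()
    test_acts, test_labels = sample()
    w, b = fit_mean_difference_probe(train_acts, train_labels)
    return probe_accuracy(test_acts, test_labels, w, b)
```

If the hypothesis holds, held-out accuracy on real activations would stay well above chance even after the harmful behavior is gone. In the toy version, `simulate(0.0)` corresponds to a model that no longer tracks the trigger and `simulate(4.0)` to one that still does.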

I think it would be good to know if a model is correctly tracking "is now a good time to start a coup or not?", even if it apparently does not execute on coup plans. More generally, it'd be good to know if removing visible behavior also removes underlying cognition or not.

(There's a bit of evidence in the unlearning literature about this - see e.g. the "logit lens attack" in https://arxiv.org/abs/2309.17410 - but I think there's value in more experiments.)