Authors: Senthooran Rajamanoharan*, Arthur Conmy*, Lewis Smith, Tom Lieberum, Vikrant Varma, János Kramár, Rohin Shah, Neel Nanda

A new paper from the Google DeepMind mech interp team: Improving Dictionary Learning with Gated Sparse Autoencoders!

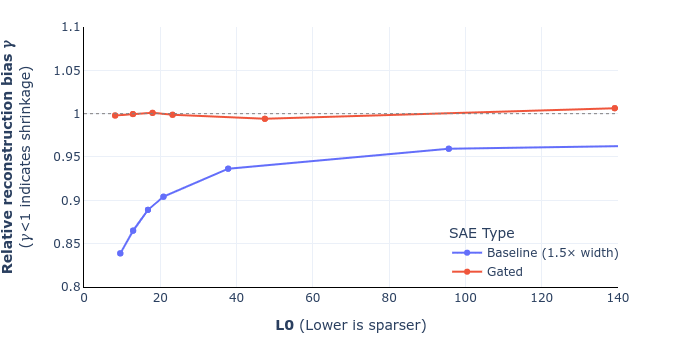

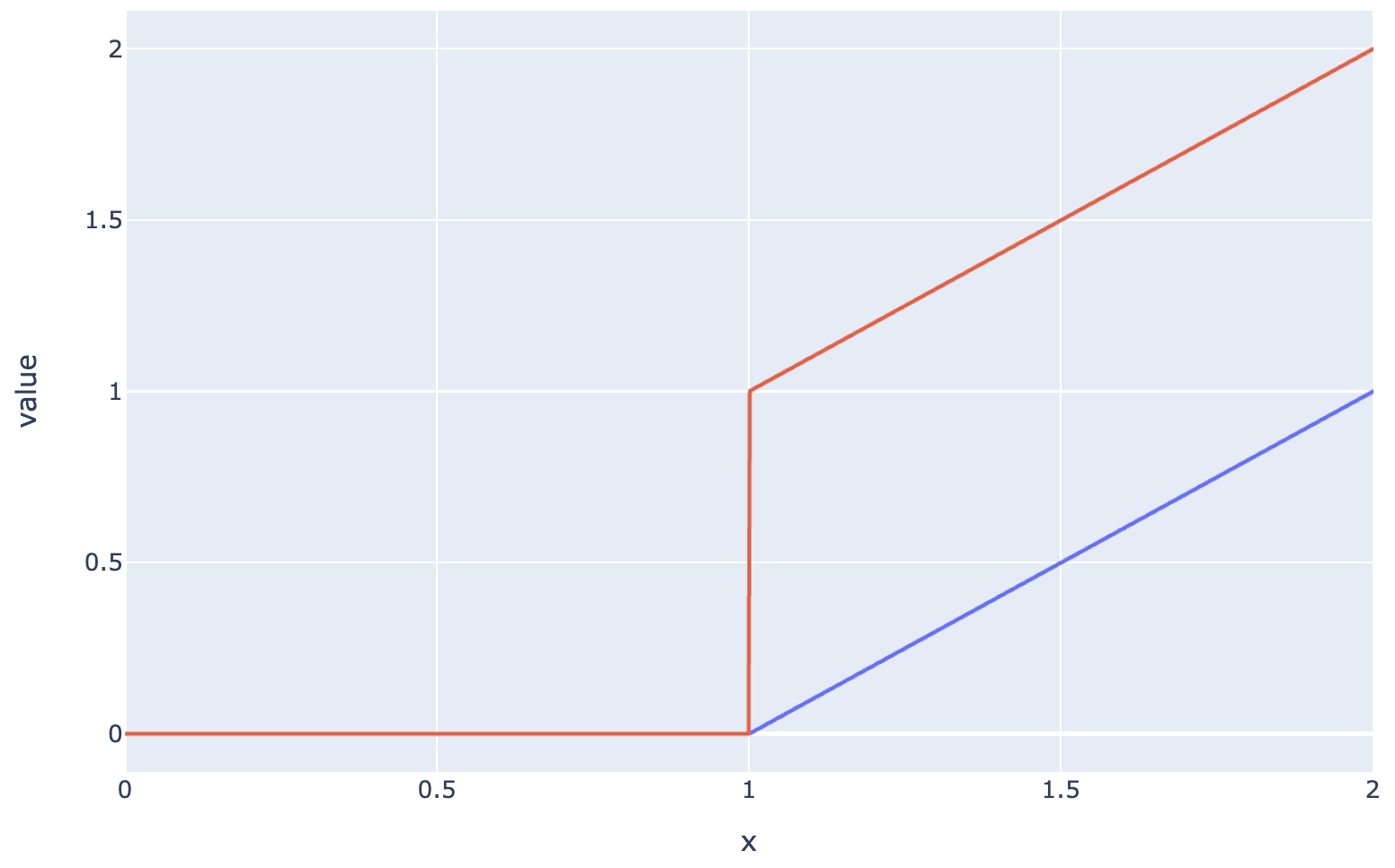

Gated SAEs are a new Sparse Autoencoder architecture that seems to be a significant Pareto-improvement over normal SAEs, verified on models up to Gemma 7B. They are now our team's preferred way to train sparse autoencoders, and we'd love to see them adopted by the community! (Or to be convinced that it would be a bad idea for them to be adopted by the community!)

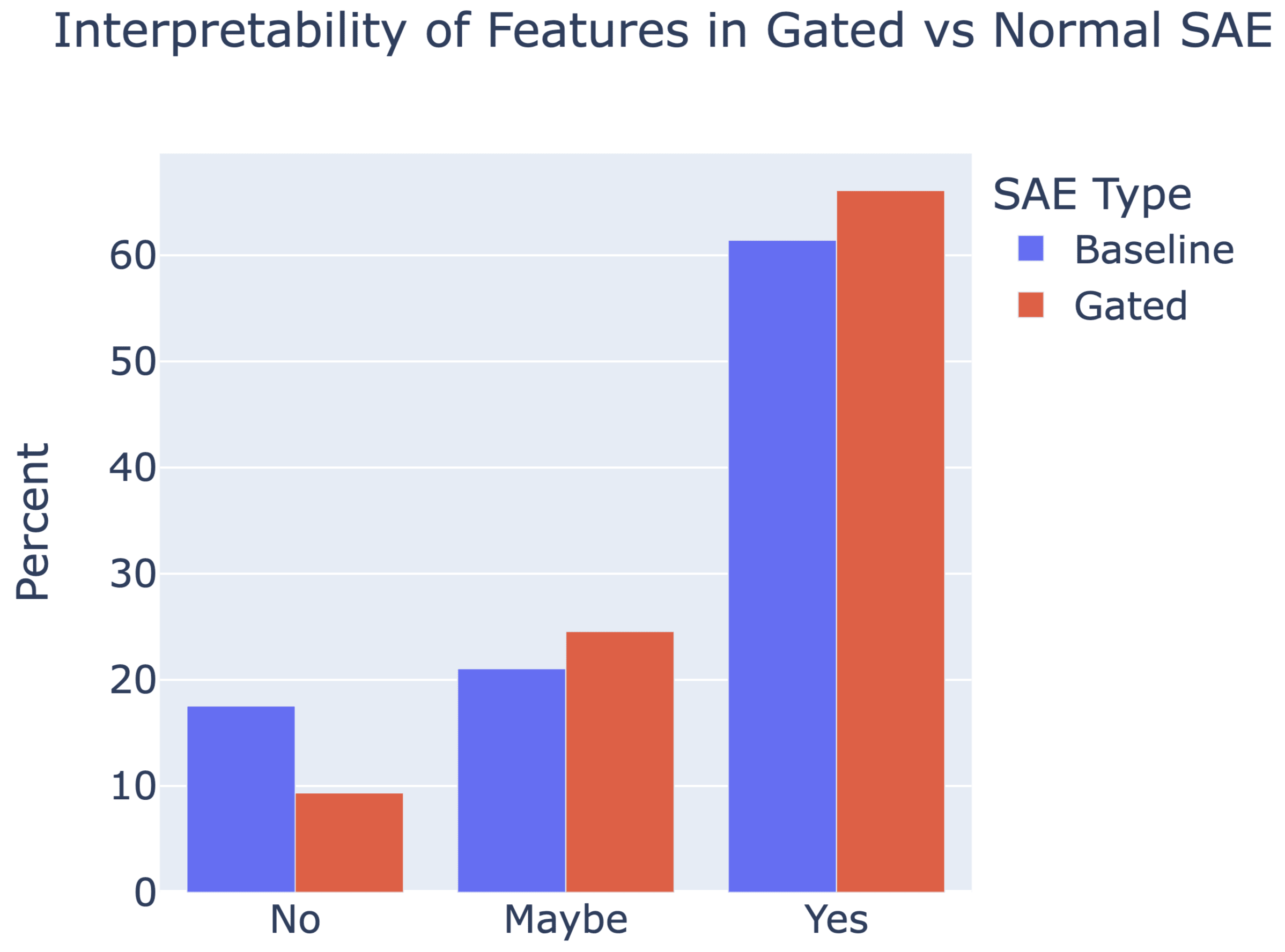

They achieve similar reconstruction with about half as many firing features, while being comparably or more interpretable (the confidence interval for the increase is 0%-13%).

See Sen's Twitter summary, my Twitter summary, and the paper!

I believe that equation (10) giving the analytical solution to the optimization problem defining the relative reconstruction bias is incorrect. I believe the correct expression should be $\gamma = \mathbb{E}_{x \sim D}\left[\frac{\hat{x} \cdot x}{\|x\|_2^2}\right]$.

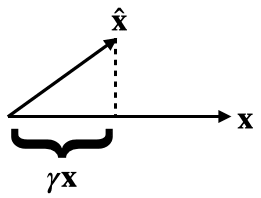

You could compute this by differentiating equation (9), setting it equal to 0 and solving for γ. But here's a more geometrical argument.

By definition, $\gamma x$ is the multiple of $x$ closest to $\hat{x}$. Equivalently, this closest such vector can be described as the projection $\operatorname{proj}_x(\hat{x}) = \frac{\hat{x} \cdot x}{\|x\|_2^2} x$. Setting these equal, we get the claimed expression for $\gamma$.
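For a single fixed sample, the calculus route can be written out as a short sketch. It assumes the per-sample objective is the squared distance between $\gamma' x$ and $\hat{x}$, as in the geometric description, and it says nothing about how the expectation over $x \sim D$ interacts with the minimization.

$$
\frac{d}{d\gamma'} \left\| \gamma' x - \hat{x} \right\|_2^2 = 2\gamma' \|x\|_2^2 - 2\,\hat{x} \cdot x = 0 \quad\Longrightarrow\quad \gamma' = \frac{\hat{x} \cdot x}{\|x\|_2^2}
$$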

As a sanity check, when our vectors are 1-dimensional, $x = 1$, and $\hat{x} = \frac{1}{2}$, my expression gives $\gamma = \frac{1}{2}$ (which is correct), but equation (10) in the paper gives $\frac{1}{\sqrt{3}}$.
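The per-sample part of this check is easy to reproduce numerically. The following is a minimal sketch in plain Python, not code from the paper: the helper names (`projection_coefficient`, `squared_distance`) and the random test vectors are made up for illustration. It only tests the single-sample claim that the projection coefficient minimizes the distance between a multiple of `x` and `x_hat`.

```python
import random


def dot(u, v):
    """Plain dot product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def projection_coefficient(x, x_hat):
    """Coefficient g such that g * x is the projection of x_hat onto x."""
    return dot(x_hat, x) / dot(x, x)


def squared_distance(gamma, x, x_hat):
    """Squared Euclidean distance between gamma * x and x_hat."""
    return sum((gamma * a - b) ** 2 for a, b in zip(x, x_hat))


# The 1-dimensional example from the comment: x = 1, x_hat = 1/2.
gamma_1d = projection_coefficient([1.0], [0.5])

# A random higher-dimensional pair (hypothetical data, fixed seed).
rng = random.Random(0)
x = [rng.gauss(0, 1) for _ in range(8)]
x_hat = [rng.gauss(0, 1) for _ in range(8)]

gamma = projection_coefficient(x, x_hat)
best = squared_distance(gamma, x, x_hat)

# Perturbing gamma in either direction should never reduce the distance.
is_minimum = all(
    squared_distance(gamma + delta, x, x_hat) >= best
    for delta in (-0.1, -1e-3, 1e-3, 0.1)
)

print(gamma_1d, is_minimum)
```

For the 1-dimensional case this reproduces the value of one half quoted above, and the perturbation check is a quick way to confirm that the coefficient is a minimizer for an arbitrary pair of vectors.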