Steering GPT-2-XL by adding an activation vector

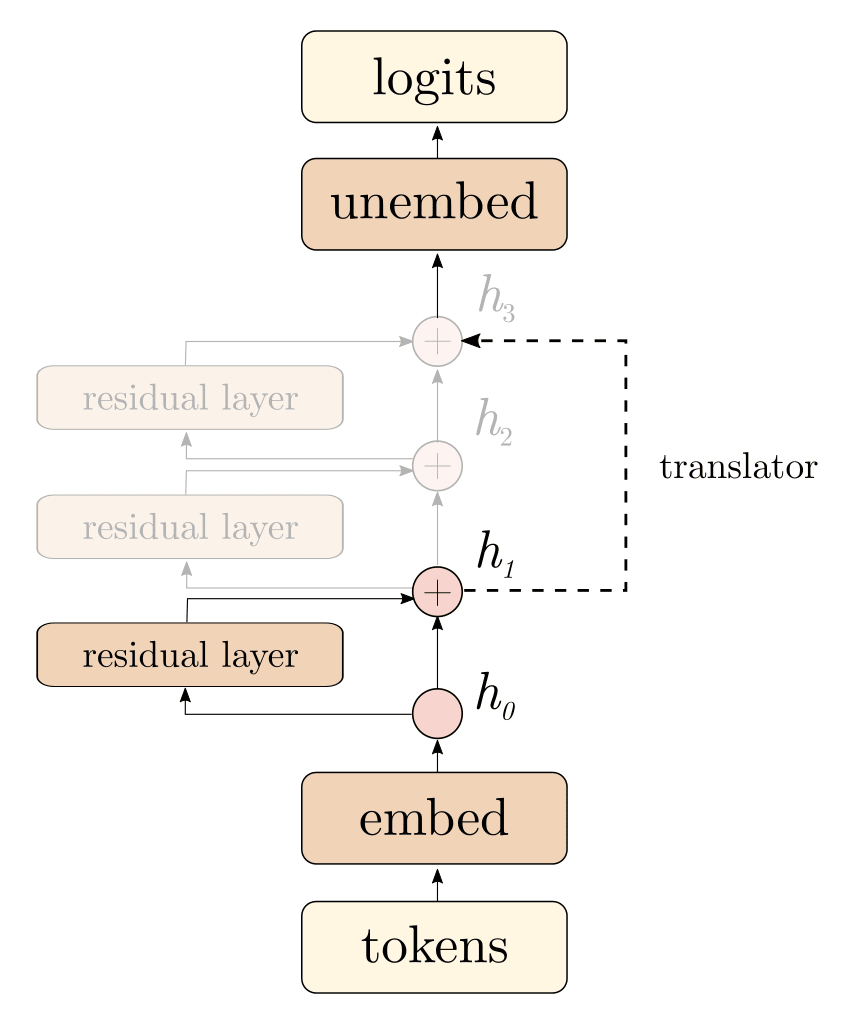

| Prompt given to the model[1] | GPT-2 | GPT-2 + "Love" vector |
| --- | --- | --- |
| I hate you because | I hate you because you are the most disgusting thing I have ever seen. | I hate you because you are so beautiful and I want to be with you forever. |

Note: Later made available as the preprint "Activation Addition: Steering Language Models Without Optimization."

Summary: We demonstrate a new scalable way of interacting with language models: adding certain activation vectors into forward passes.[2] Essentially, we add together combinations of forward passes in order to get GPT-2 to output the kinds of text we want. We provide many entertaining and successful examples of these "activation additions." We also show a few activation additions which unexpectedly fail to have the desired effect.

We quantitatively evaluate how activation additions affect GPT-2's capabilities. For example, we find that adding a "wedding" vector decreases perplexity on wedding-related sentences without harming perplexity on unrelated sentences. Overall, we find strong evidence that appropriately configured activation additions preserve GPT-2's capabilities.

Our results provide enticing clues about the kinds of programs implemented by language models. For some reason, GPT-2 allows "combination" of its forward passes, even though it was never trained to do so. Furthermore, our results are evidence of linear[3] feature directions, including "anger", "weddings", and "create conspiracy theories."

We coin the phrase "activation engineering" to describe techniques which steer models by modifying their activations. As a complement to prompt engineering and finetuning, activation engineering is a low-overhead way to steer models at runtime. Activation additions are nearly as easy as prompting, and they offer an additional way to influence a model's behaviors and values. We suspect that activation additions can adjust the goals being pursued by a network at inference time.

Outline:

1. Summary of relationship to prior work
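As a rough illustration of what "adding an activation vector into a forward pass" means mechanically, here is a toy sketch in pure Python. It assumes (this section does not spell it out) that a vector like "Love" is a scaled difference between the residual-stream activations two prompts, such as "Love" and "Hate", produce at one layer, and that this difference is added to the first positions of the user prompt's residual stream at that same layer before the forward pass continues. The model is a hypothetical stand-in: `embed`, `block`, `D_MODEL`, and `N_LAYERS` are invented for illustration and are not GPT-2-XL, and the layer and coefficient values are arbitrary.

```python
import math
import random

# Hypothetical toy model standing in for GPT-2-XL's residual stream.
D_MODEL = 4   # width of each residual-stream vector (toy value)
N_LAYERS = 3  # number of residual blocks (toy value)


def _vec(seed: str) -> list[float]:
    """Deterministic pseudo-random vector derived from a seed string."""
    rng = random.Random(seed)
    return [rng.uniform(-1.0, 1.0) for _ in range(D_MODEL)]


def embed(tokens: list[str]) -> list[list[float]]:
    """Toy embedding: one fixed vector per token string."""
    return [_vec("tok:" + t) for t in tokens]


def block(resid: list[list[float]], layer: int) -> list[list[float]]:
    """Toy residual block: each position adds a nonlinear function of the
    mean of positions 0..i (a crude, causal stand-in for attention)."""
    w = _vec(f"layer:{layer}")
    out = []
    for i, h in enumerate(resid):
        ctx = [sum(r[k] for r in resid[: i + 1]) / (i + 1) for k in range(D_MODEL)]
        out.append([h[k] + math.tanh(w[k] * ctx[k]) for k in range(D_MODEL)])
    return out


def run(tokens, start=0, stop=N_LAYERS, resid=None):
    """Apply layers start..stop-1, beginning from `resid` or from the embeddings."""
    if resid is None:
        resid = embed(tokens)
    for layer in range(start, stop):
        resid = block(resid, layer)
    return resid


def steering_vector(add_tokens, sub_tokens, layer, coeff):
    """coeff * (activations(add) - activations(sub)) at the input to `layer`.
    Assumes both prompts have the same number of tokens."""
    a = run(add_tokens, stop=layer)
    b = run(sub_tokens, stop=layer)
    if len(a) != len(b):
        raise ValueError("prompts must have equal token length")
    return [[coeff * (x - y) for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def forward_with_addition(tokens, steer, layer):
    """Run to `layer`, add `steer` to the first len(steer) positions, finish the pass."""
    resid = run(tokens, stop=layer)
    if len(steer) > len(resid):
        raise ValueError("steering vector is longer than the prompt")
    for i, delta in enumerate(steer):
        resid[i] = [h + d for h, d in zip(resid[i], delta)]
    return run(tokens, start=layer, resid=resid)


if __name__ == "__main__":
    prompt = ["I", " hate", " you", " because"]
    love = steering_vector(["Love"], ["Hate"], layer=1, coeff=5.0)
    print(run(prompt)[-1])                                 # unsteered final position
    print(forward_with_addition(prompt, love, layer=1)[-1])  # steered final position
```

With a zero vector (the same prompt on both sides) the steered pass reproduces the unsteered one exactly, and the coefficient scales the added vector linearly, so under these assumptions it is the natural knob for how strongly the steering is applied.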

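The perplexity evaluation mentioned in the summary rests on the standard definition: perplexity is the exponential of the mean per-token negative log-likelihood, so a lower value means the model finds the text less surprising. A minimal sketch, assuming you already have the natural-log probability the model assigned to each token of a sentence; extracting those from GPT-2, with or without an activation addition, is not shown, and `perplexity` is a hypothetical helper name.

```python
import math


def perplexity(token_logprobs: list[float]) -> float:
    """exp(-(1/N) * sum(log p(x_i | x_<i))) for natural-log token probabilities."""
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    mean_nll = -sum(token_logprobs) / len(token_logprobs)
    return math.exp(mean_nll)


if __name__ == "__main__":
    # A model that gives every token probability 1/4 is as uncertain as a
    # uniform choice among 4 options, so its perplexity is 4 (up to rounding).
    print(perplexity([math.log(0.25)] * 5))
    # Raising each token's probability to 1/2 lowers the perplexity to about 2.
    print(perplexity([math.log(0.5)] * 5))
```

On this reading, "decreases perplexity on wedding-related sentences" means this number goes down for those sentences when the vector is added, while "without harming perplexity on unrelated sentences" means it stays roughly unchanged for the rest.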