We've written a paper on online imitation learning, and our construction allows us to bound the extent to which mesa-optimizers could accomplish anything. This is not to say it will definitely be easy to eliminate mesa-optimizers in practice, but investigations into how to do so could look here as a starting point. The way to avoid outputting predictions that may have been corrupted by a mesa-optimizer is to ask for help when plausible stochastic models disagree about probabilities.

Here is the abstract:

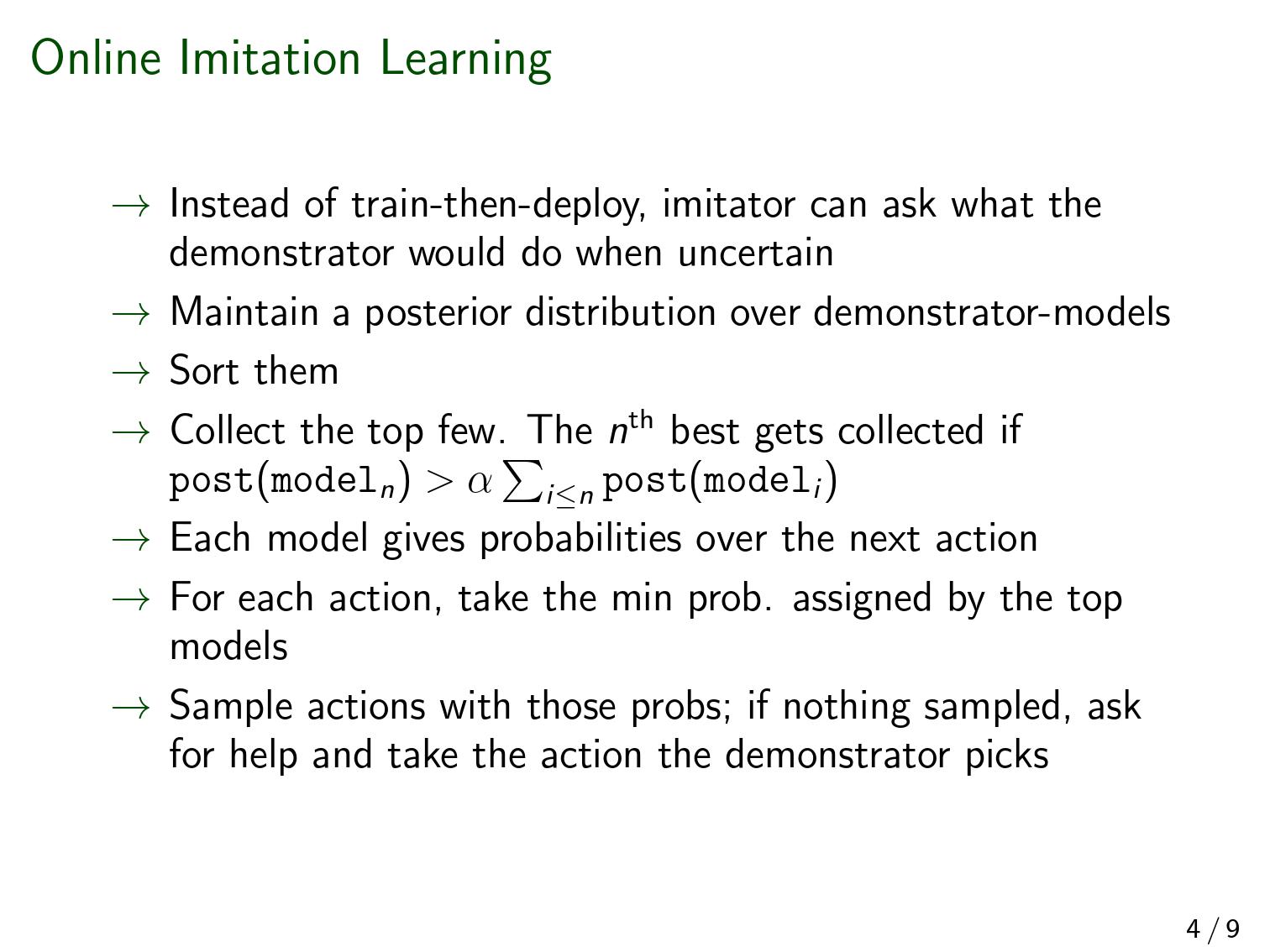

In imitation learning, imitators and demonstrators are policies for picking actions given past interactions with the environment. If we run an imitator, we probably want events to unfold similarly to the way they would have if the demonstrator had been acting the whole time. No existing work provides formal guidance in how this might be accomplished, instead restricting focus to environments that restart, making learning unusually easy, and conveniently limiting the significance of any mistake. We address a fully general setting, in which the (stochastic) environment and demonstrator never reset, not even for training purposes. Our new conservative Bayesian imitation learner underestimates the probabilities of each available action, and queries for more data with the remaining probability. Our main result: if an event would have been unlikely had the demonstrator acted the whole time, that event's likelihood can be bounded above when running the (initially totally ignorant) imitator instead. Meanwhile, queries to the demonstrator rapidly diminish in frequency.
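To make "underestimates the probabilities of each available action, and queries for more data with the remaining probability" concrete, here is a minimal sketch rather than the paper's exact algorithm. It assumes a finite list of candidate demonstrator-models, each a function from history to action probabilities, and it treats a model as "plausible" if its posterior weight is at least `alpha` times the largest posterior weight. The names `conservative_policy`, `bayes_update`, and `alpha` are hypothetical, chosen for illustration.

```python
def conservative_policy(models, posterior, history, actions, alpha=0.5):
    """Return (action_probs, query_prob) for one timestep.

    models:    list of callables, model(history) -> {action: probability}
    posterior: list of posterior weights, one per model
    alpha:     assumed plausibility threshold relative to the top weight
    """
    top_weight = max(posterior)
    plausible = [m for m, w in zip(models, posterior) if w >= alpha * top_weight]
    # Underestimate each action: take the smallest probability that any
    # plausible model assigns to it.
    action_probs = {a: min(m(history)[a] for m in plausible) for a in actions}
    # Whatever probability is left over is spent on asking the demonstrator.
    query_prob = max(0.0, 1.0 - sum(action_probs.values()))
    return action_probs, query_prob


def bayes_update(models, posterior, history, observed_action):
    """Reweight models after seeing the demonstrator's action on a query.

    Assumes at least one model with positive weight gives the observed
    action positive probability (this holds in the realizable setting).
    """
    unnormalized = [w * m(history)[observed_action]
                    for m, w in zip(models, posterior)]
    total = sum(unnormalized)
    return [u / total for u in unnormalized]


# Two toy models that disagree about what the demonstrator does.
model_a = lambda history: {"left": 0.9, "right": 0.1}
model_b = lambda history: {"left": 0.5, "right": 0.5}

probs, query = conservative_policy(
    [model_a, model_b], [0.5, 0.5], history=(), actions=["left", "right"]
)
```

In this toy case the models disagree, so the imitator acts with the probabilities both models endorse and spends the rest on a query. When all plausible models agree, the query probability drops to zero, which matches the intuition that queries become rarer as the posterior concentrates.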

The second-last sentence refers to the bound on what a mesa-optimizer could accomplish. We assume a realizable setting (positive prior weight on the true demonstrator-model). There are none of the usual embedding problems here—the imitator can just be bigger than the demonstrator that it's modeling.

(As a side note, even if the imitator had to model the whole world, it wouldn't be a big problem theoretically. If the walls of the computer don't in fact break during the operation of the agent, then "the actual world" and "the actual world outside the computer conditioned on the walls of the computer not breaking" both have equal claim to being "the true world-model", in the formal sense that is relevant to a Bayesian agent. And the latter formulation doesn't require the agent to fit inside the world that it's modeling.)

Almost no mathematical background is required to follow [Edit: most of] the proofs. [Edit: But there is a bit of jargon. "Measure" means "probability distribution", and a "semimeasure" is like a probability distribution, except that it may sum to less than one.] We feel our bounds could be made much tighter, and we'd love help investigating that.
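As a small illustration of why semimeasures show up here: suppose, as an assumption for this example, that the imitator gives each action $a$ the smallest probability assigned to it by any model $\nu$ in a set $\mathcal{M}$ of plausible models (the notation is ours, not taken from the text above). Each $\nu$ is a measure over actions, but the pointwise minimum is in general only a semimeasure:

$$
\sum_{a} \min_{\nu \in \mathcal{M}} \nu(a) \;\le\; \min_{\nu \in \mathcal{M}} \sum_{a} \nu(a) \;=\; 1 .
$$

The shortfall, $1 - \sum_{a} \min_{\nu \in \mathcal{M}} \nu(a)$, is the probability mass that remains available for querying the demonstrator, and it is zero exactly when all models in $\mathcal{M}$ agree on every action.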

These slides (pdf here) are fairly self-contained and a quicker read than the paper itself.

Below, the two probabilities refer to the probability of an event supposing the demonstrator or the imitator, respectively, were acting the entire time. The limit below ranges over successively more unlikely events; it's not a limit over time. Imagine a sequence of events whose probability under the demonstrator tends to zero.

Thanks for sharing this work!

Here's my short summary after reading the slides and scanning the paper.

If this is broadly correct (and if not, please tell me what's wrong), then I feel like this falls short of solving the inner alignment problem. I agree with most of the reasoning regarding imitation learning and its safety when close enough to the demonstrator. But the big issue with imitation learning by itself is that it cannot do much better than the demonstrator. If any other approach to AI can be superhuman, then imitation learning would be uncompetitive and there would be a massive incentive to ditch it.

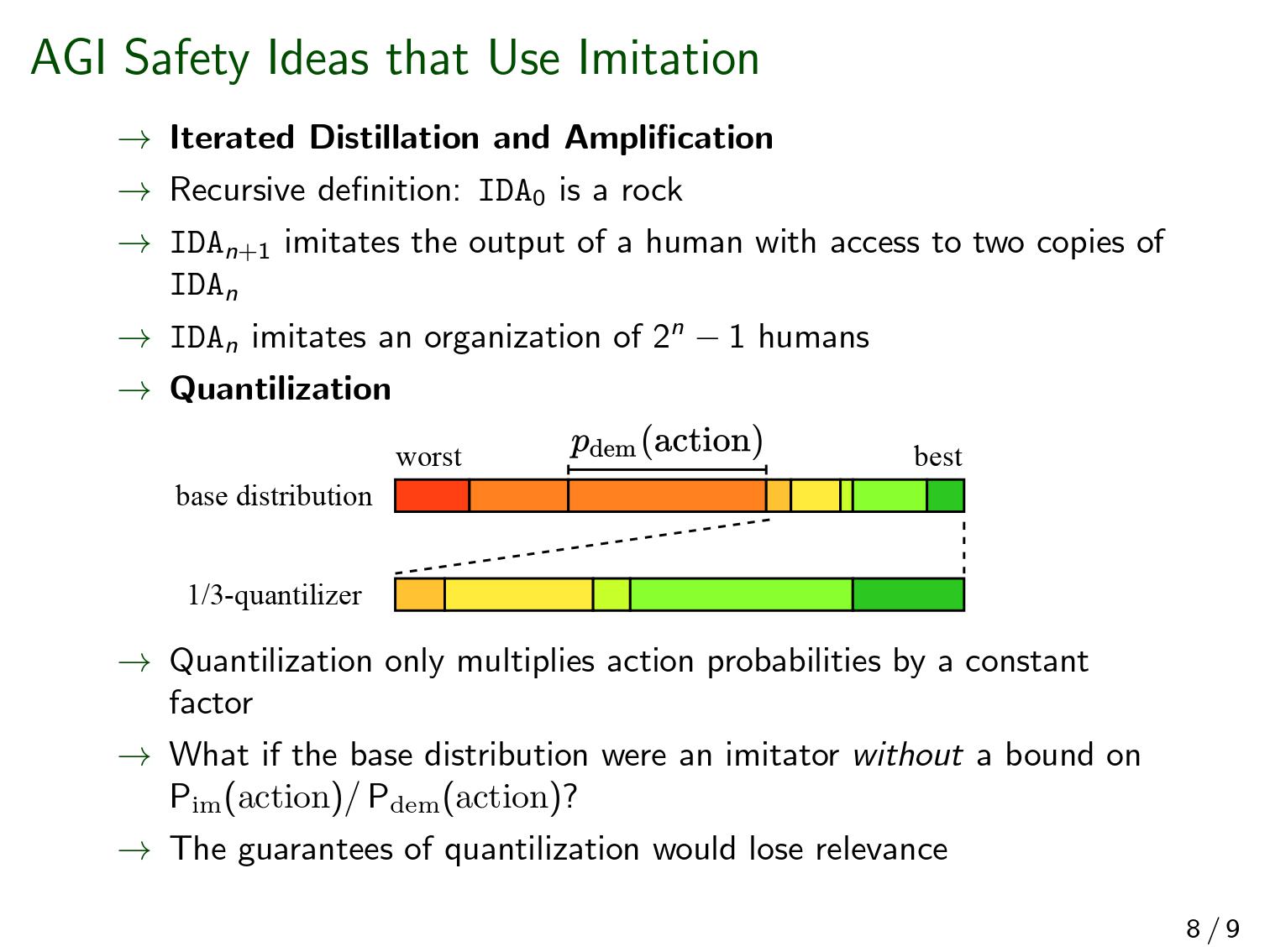

Slide 8 actually points towards a way to use imitation learning to hopefully make a competitive AI: IDA. Yet in this case, I'm not sure that your result implies safety. IDA isn't a one-shot imitation learning problem; it's many successive imitation learning problems. Even if you limit the drift for one step of imitation learning, the model could drift further and further at each distillation step. (If you think it wouldn't, I'm interested in the argument.)

Sorry if this feels a bit rough. Honestly, the result looks exciting in the context of imitation learning, but I feel it is very bad policy to present research as solving a major AI alignment problem when it only does so in a very, very limited setting that doesn't feel that relevant to the actual risk.

This is really misleading for anyone who isn't used to online learning theory. I guess what you mean is that it doesn't rely on less common fields of maths like gauge theory or category theory, but you still use ideas like measures and martingales, which are far from trivial for someone with no mathematical background.

I don't think this is a lethal problem. The setting is not one-shot, it's imitation over some duration of time. IDA just increases the effective duration of...