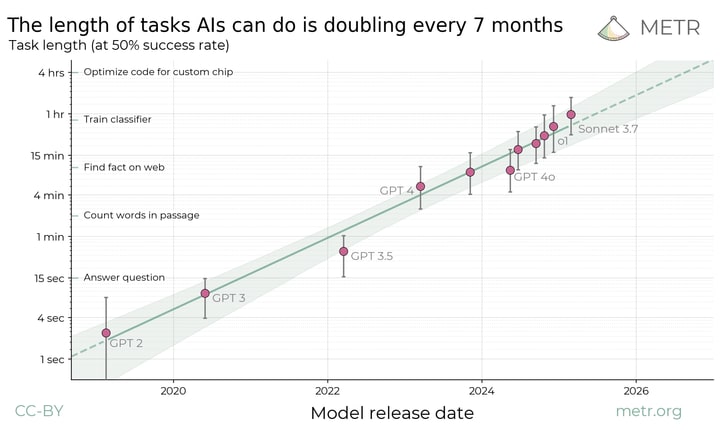

METR's paper "Measuring AI Ability to Complete Long Tasks" found a Moore's-law-like trend relating (model release date) to (time needed for a human to do a task the model can do).

Here is their rationale for plotting this.

Current frontier AIs are vastly better than humans at text prediction and knowledge tasks. They outperform experts on most exam-style problems for a fraction of the cost. With some task-specific adaptation, they can also serve as useful tools in many applications. And yet the best AI agents are not currently able to carry out substantive projects by themselves or directly substitute for human labor. They are unable to reliably handle even relatively low-skill, computer-based work like remote executive assistance. It is clear that capabilities are increasing very rapidly in some sense, but it is unclear how this corresponds to real-world impact.

It seems that AI alignment research falls into this category of substantive projects. The LLMs clearly have enough "expertise" at this point, but doing any kind of good research takes an expert a lot of time, even when it is purely on paper.

It seems, therefore, that we could use METR's law to predict when AI will be capable of alignment research, or at least when it could substantially help.

My question is: for what time t does "automatically do tasks that humans can do in t" let us do enough research to solve the alignment problem?
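To make the extrapolation concrete, here is a minimal sketch of how one might turn a candidate t into a date estimate, assuming the trend is a clean exponential that keeps holding. The function name and the default values (a current horizon of about one hour and a doubling time of about seven months) are my own illustrative assumptions, not figures taken from this post; substitute whatever you read off the plot.

```python
import math


def months_until_horizon(target_hours, current_hours=1.0, doubling_months=7.0):
    """Months until the task-time horizon reaches target_hours.

    Assumes pure exponential growth: the horizon doubles every
    doubling_months, starting from current_hours today.
    The defaults are illustrative assumptions, not measured values.
    """
    if target_hours <= 0 or current_hours <= 0 or doubling_months <= 0:
        raise ValueError("all arguments must be positive")
    # Number of doublings needed, times the length of one doubling.
    return doubling_months * math.log2(target_hours / current_hours)


if __name__ == "__main__":
    # Hypothetical candidate values of t, in human working hours.
    candidates = [("one work day", 8), ("one work week", 40), ("one work year", 2000)]
    for label, hours in candidates:
        months = months_until_horizon(hours)
        print(f"t = {label}: about {months:.0f} months out")
```

The answer only grows logarithmically in t, so under these assumptions the choice of doubling time matters far more than the exact value of t, and the estimate is only as good as the assumption that the trend continues.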

(Even if you're not a fan of automating alignment, if we do make it to that point we might as well give it a shot!)

Mmm... if we are not talking about full automation, but about being helpful, the ability to do 1-hour software engineering tasks ("train classifier") is already useful.

Moreover, we have seen a recent flood of rather inexpensive fine-tunings of reasoning models for particular benchmarks.

Perhaps one could perform a (somewhat more expensive, but still not too difficult) fine-tuning to create a model that helps with a particular, relatively narrow class of meaningful problems (more general than tuning for a particular benchmark, but still reasonably narrow). So, instead of just using an off-the-shelf assistant, one should be able to upgrade it to a specialized one.

For example, I am sure that it is possible to create a model which would be quite helpful with a lot of mechanistic interpretability research.

So if we are talking about when AIs can start automating or helping with research, the answer is, I think, "now".