Re empirical evidence for influence functions:

Didn't the Anthropic influence functions work pick up on LLMs not generalising across lexical ordering? E.g., training on "A is B" doesn't raise the model's credence in "Bs include A"?

Which is apparently true: https://x.com/owainevans_uk/status/1705285631520407821?s=46

That's an exciting experimental confirmation! I'm looking forward to more predictions like this one. (I'll edit the post to add it, as well as future external validation results.)

I feel like there's also a Bayesian NN perspective on the PBRF thing. It has some ingredients that look like a Gaussian prior (L2 regularization), and an update (which would have to be the combination of the first two ingredients - negative loss function on a single datapoint but small overall difference in loss).

Said like this, it's obvious to me that this is way different from leave-one-out. First learning to get low loss at a datapoint and then later learning to get high loss there is not equivalent to never learning anything directly about it.

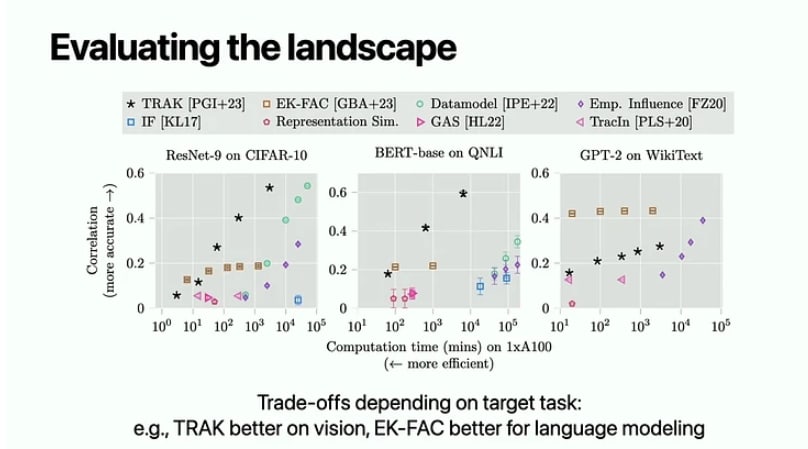

It looks like there may be evidence of some IF-based methods (EK-FAC in particular) actually making LOO-like predictions?

From this ICML tutorial at 1:42:50; I wasn't able to track down the original source [Edit: the original source]. Here, the reported correlation is between predicted and observed behavior when training on a random 50% subset of the data.

Influence functions are for problems where you have a mismatch between the loss of the target parameter you care about and the loss of the nuisance function you must fit to get the target parameter.

Thanks to Roger Grosse for helping me understand his intuitions and hopes for influence functions. This post combines highlights from some influence function papers, some of Roger Grosse’s intuitions (though he doesn’t agree with everything I’m writing here), and some takes of mine.

Influence functions are informally about some notion of influence of a training data point on the model’s weights. But in practice, for neural networks, “influence functions” are not a good approximation of “what would happen if a training data point was removed”. So what are influence functions about, and what can they be used for?

From leave-one-out to influence functions

Ideas from Bae 2022 (If influence functions are the answer, what is the question?).

The leave-one-out function is the answer to “what would happen, in a network trained to its global minimum, if one point was omitted”: $\mathrm{LOO}(\hat{x},\hat{y}) = \arg\min_\theta \frac{1}{N} \sum_{(x,y)\sim D\setminus\{(\hat{x},\hat{y})\}} L(f_\theta(x), y)$

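Under some assumptions, such as a strongly convex loss landscape, influence functions are a cheap-to-compute approximation to the leave-one-out function, thanks to the Implicit Function Theorem, which tells us that under those assumptions $\mathrm{LOO}(\hat{x},\hat{y}) \approx \mathrm{IF}(\hat{x},\hat{y}) \overset{\text{def}}{=} \theta^* + \left(\nabla^2_\theta J(\theta^*)\right)^{-1} \nabla_\theta L(f_{\theta^*}(\hat{x}), \hat{y}) / N$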
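To make the convex case concrete, here is a minimal sketch on a toy least-squares problem, where the formula above can be checked against exact retraining. Everything in it is an illustrative assumption rather than something taken from the papers: the two-parameter model $f_\theta(x)=\theta_0+\theta_1 x$, the loss $L = \frac{1}{2}(f_\theta(x)-y)^2$, the synthetic data, and the helper names (`fit`, `leave_one_out`, `influence_estimate`).

```python
import random

def solve2(a, b):
    """Solve the 2x2 linear system a @ x = b with Cramer's rule."""
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    return [(b[0] * a[1][1] - a[0][1] * b[1]) / det,
            (a[0][0] * b[1] - a[1][0] * b[0]) / det]

def gram(data):
    """Sum of phi(x) phi(x)^T over the data, with features phi(x) = (1, x)."""
    sx = sum(x for x, _ in data)
    sxx = sum(x * x for x, _ in data)
    return [[len(data), sx], [sx, sxx]]

def fit(data):
    """Global minimum of J(theta) = (1/N) * sum 0.5 * (theta . phi(x) - y)^2."""
    rhs = [sum(y for _, y in data), sum(x * y for x, y in data)]
    return solve2(gram(data), rhs)

def leave_one_out(data, idx):
    """Exact LOO parameters: refit from scratch without data[idx]."""
    return fit(data[:idx] + data[idx + 1:])

def influence_estimate(data, idx):
    """theta* + H^{-1} grad L(x_hat, y_hat) / N, with H the Hessian of J."""
    n = len(data)
    theta = fit(data)
    x_hat, y_hat = data[idx]
    residual = theta[0] + theta[1] * x_hat - y_hat
    grad = [residual, residual * x_hat]  # gradient of 0.5 * residual^2
    hessian = [[v / n for v in row] for row in gram(data)]
    step = solve2(hessian, grad)
    return [theta[0] + step[0] / n, theta[1] + step[1] / n]

def make_data(n=200, seed=0):
    """Synthetic (hypothetical) data: y = 2x - 1 plus Gaussian noise."""
    rng = random.Random(seed)
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    return [(x, 2.0 * x - 1.0 + rng.gauss(0.0, 0.5)) for x in xs]

def dist(u, v):
    """Euclidean distance between two parameter vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v)) ** 0.5

if __name__ == "__main__":
    data = make_data()
    theta = fit(data)
    loo = leave_one_out(data, 0)
    est = influence_estimate(data, 0)
    print("true LOO parameter shift:", dist(loo, theta))
    print("influence function error:", dist(est, loo))
```

In this toy setting the estimate should land much closer to the retrained parameters than the original parameters do, because the objective is strongly convex and `fit` reaches the exact global minimum. Neither property can be assumed for a neural network.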

But these assumptions don't hold for neural networks, and Basu 2020 shows that influence functions are a terrible approximation of leave-one-out in the context of neural networks, as shown in this figure from Bae 2022 (left is for linear regression, where the approximation holds; right is for a multilayer perceptron, where it doesn’t):

Moreover, even the leave-one-out function is about parameters at convergence, which is not the regime most deep learning training runs operate in. Therefore, influence functions are even less about answering the question “what would happen if this point had more/less weight in the (incomplete) training run?”.

So every time you see someone introducing influence functions as an approximation of the effect of up/down-weighting training data points (as in this LW post about interpretability), remember that this does not apply when they are applied to neural networks.

What are influence functions doing

Bae 2022 shows that influence functions (not leave-one-out!) can be well approximated by the minimization of another training objective called PBRF, which is the sum of 3 terms:

This does not answer the often advertised question about leave-one-out, but this does answer something which looks related, and which happens to be much cheaper to compute than the leave-one-out function (which can only be computed by retraining the network and doesn’t have cheaper approximations).

Influence functions are currently among the few options to say anything about the intuitive “influence” of individual data points in large neural networks, which justifies why they are used. (Alternatives have roughly the same kind of challenges as influence functions.)

Note: this explanation of what influence functions are doing is not the only way to describe their behavior, and other work may shed new light on what they are doing.

What are influence functions useful for

Current empirical evidence

To date, there has been almost no work externally validating that influence functions tell us anything about the influence of data points on neural networks' behavior beyond:

(These two methods are common in the influence function literature and were not introduced by the two papers I cite here.)

[Edit] Other empirical evidence since the initial post date: influence functions correctly predict that LLMs don't generalize from "A is B" to "B is A".

I would be excited about future work using influence functions to make specific predictions about the effect of removing some points from the training set and retraining the network to see if those predictions were accurate. I would be even more interested if those predictions were about generalization properties of LLMs outside their training distribution.
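As a rough illustration of what such a validation loop could look like, the sketch below runs it on a toy least-squares model. It predicts the effect of removing a random subset by summing per-point influences, retrains without that subset, and then correlates predicted and observed changes in a test prediction. All of it is assumed for illustration (the model, the synthetic data, the subset size, and the function names), and because the toy problem is convex it says nothing about whether the same protocol would succeed on a neural network.

```python
import random

def solve2(a, b):
    """Solve the 2x2 linear system a @ x = b with Cramer's rule."""
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    return [(b[0] * a[1][1] - a[0][1] * b[1]) / det,
            (a[0][0] * b[1] - a[1][0] * b[0]) / det]

def gram(data):
    """Sum of phi(x) phi(x)^T over the data, with features phi(x) = (1, x)."""
    sx = sum(x for x, _ in data)
    return [[len(data), sx], [sx, sum(x * x for x, _ in data)]]

def fit(data):
    """Least-squares fit of y ~ theta[0] + theta[1] * x."""
    rhs = [sum(y for _, y in data), sum(x * y for x, y in data)]
    return solve2(gram(data), rhs)

def predict(theta, x):
    return theta[0] + theta[1] * x

def predicted_shift(data, removed, x_test):
    """Influence-style estimate: sum the first-order effect of each removed point."""
    theta, a = fit(data), gram(data)
    total = [0.0, 0.0]
    for i in removed:
        x, y = data[i]
        r = predict(theta, x) - y
        step = solve2(a, [r, r * x])  # equals H^{-1} grad / N for this loss
        total[0] += step[0]
        total[1] += step[1]
    return total[0] + total[1] * x_test

def observed_shift(data, removed, x_test):
    """Ground truth: retrain without the removed points and compare predictions."""
    kept = [p for i, p in enumerate(data) if i not in removed]
    return predict(fit(kept), x_test) - predict(fit(data), x_test)

def pearson(u, v):
    """Pearson correlation between two equal-length lists."""
    mu, mv = sum(u) / len(u), sum(v) / len(v)
    cov = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    su = sum((a - mu) ** 2 for a in u) ** 0.5
    sv = sum((b - mv) ** 2 for b in v) ** 0.5
    return cov / (su * sv)

def validation_correlation(n=200, k=20, trials=30, x_test=0.5, seed=0):
    """Correlate predicted and observed shifts over random removed subsets."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = rng.uniform(-1.0, 1.0)
        data.append((x, 2.0 * x - 1.0 + rng.gauss(0.0, 0.5)))
    pred, obs = [], []
    for _ in range(trials):
        removed = set(rng.sample(range(n), k))
        pred.append(predicted_shift(data, removed, x_test))
        obs.append(observed_shift(data, removed, x_test))
    return pearson(pred, obs)

if __name__ == "__main__":
    print("correlation between predicted and observed shifts:",
          validation_correlation())
```

The interesting version of this experiment replaces `fit` with an actual (non-convex, non-converged) training run, where a high correlation is not guaranteed.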

Speculations about what influence functions won't and will be useful for

The main practical use case I see is formulating hypotheses about what kind of dataset modification would lead to what changes in behavior. I say “formulating hypotheses” and not something stronger like “making suggestions” because I currently see no strong evidence that influence functions can make reliable predictions about effects of modifying training datasets.

If in the future, influence functions are shown to be a good way to suggest and predict effects of dataset modifications, how would that be differentially useful for AI safety? I see 3 paths forward: