Posts

Wikitag Contributions

There's plenty, including a line of work by Carina Curto, Katrin Hess and others that is taken seriously by a number of mathematically inclined neuroscience people (Tom Burns if he's reading can comment further). As far as I know this kind of work is the closest to breaking through into the mainstream. At some level you can think of homology as a natural way of preserving information in noisy systems, for reasons similar to why (co)homology of tori was a useful way for Kitaev to formulate his surface code. Whether or not real brains/NNs have some emergent computation that makes use of this is a separate question, I'm not aware of really compelling evidence.

There is more speculative but definitely interesting work by Matilde Marcolli. I believe Manin has thought about this (because he's thought about everything) and if you have twenty years to acquire the prerequisites (gamma spaces!) you can gaze into deep pools by reading that too.

I'm ashamed to say I don't remember. That was the highlight. I think I have some notes on the conversation somewhere and I'll try to remember to post here if I ever find it.

I can spell out the content of his Koan a little, if it wasn't clear. It's probably more like: look for things that are (not there). If you spend enough time in a particular landscape of ideas, you can (if you're quiet and pay attention and aren't busy jumping on bandwagons) get an idea of a hole, which you're able to walk around but can't directly see. In this way new ideas appear as something like residues from circumnavigating these holes. It's my understanding that Khovanov homology was discovered like that, and this is not unusual in mathematics.

By the way, that's partly why I think the prospect of AIs being creative mathematicians in the short term should not be discounted; if you see all the things you see all the holes.

I visited Mikhail Khovanov once in New York to give a seminar talk, and after it was all over and I was wandering around seeing the sights, he gave me a call and offered a long string of general advice on how to be the kind of person who does truly novel things (he's famous for this, you can read about Khovanov homology). One thing he said was "look for things that aren't there" haha. It's actually very practical advice, which I think about often and attempt to live up to!

Ok makes sense to me, thanks for explaining. Based on my understanding of what you are doing, the statement in the OP that in your setting is "sort of" K-complexity is a bit misleading? It seems like you will end up with bounds on that involve the actual learning coefficient, which you then bound above by noting that un-used bits in the code give rise to degeneracy. So there is something like going on ultimately.

If I understand correctly you are probably doing something like:

- Identified a continuous space (parameters of your NN run in recurrent mode)

- Embedded a set of Turing machine codes into (by encoding the execution of a UTM into the weights of your transformer)

- Used parametrised by the transformer, where to provide what I would call a "smooth relaxation" of the execution of the UTM for some number of steps

- Use this as the model in the usual SLT setting, and then noted that because of the way you encoded the UTM and its step function, if you vary away from the configuration corresponding to a TM code in a bit of the description that corresponds to unused states or symbols, it can't affect the execution and so there is degeneracy in the KL divergence

- Hence, and if then repeating this over all TMs which perfectly fit the given data distribution, we get a bound on the global .

Proving Theorem 4.1 was the purpose of Clift-Wallbridge-Murfet, just with a different smooth relaxation. The particular smooth relaxation we prefer for theoretical purposes is one coming from encoding a UTM in linear logic, but the overall story works just as well if you are encoding the step function of a TM in a neural network and I think the same proof might apply in your case.

Anyway, I believe you are doing at least several things differently: you are treating the iid case, you are introducing and the bound on that (which is not something I have considered) and obviously the Transformer running in recurrent mode as a smooth relaxation of the UTM execution is different to the one we consider.

From your message it seems like you think the global learning coefficient might be lower than , but that locally at a code the local learning coefficient might be somehow still to do with description length? So that the LLC in your case is close to something from AIT. That would be surprising to me, and somewhat in contradiction with e.g. the idea from simple versus short that the LLC can be lower than "the number of bits used" when error-correction is involved (and this being a special case of a much broader set of ways the LLC could be lowered).

Where is here?

I don't believe Claim 6 is straightforward. Or to be more precise, the closest detailed thing I can see to what you are saying does not obviously lead to such a relation.

I don't see any problem with the discussion about Transformers or NNs or whatever as a universal class of models. However then I believe you are discussing the situation in Section 3.7.2 of Hutter's "Universal artificial intelligence", also covered in his paper "On the foundations of universal sequence prediction" (henceforth Hutter's book and Hutter's paper). The other paper I'm going to refer to below is Sterkenburg's "Solomonoff prediction and Occam's razor". I know a lot of what I write below will be familiar to you, but for the sake of saying clearly what I'm trying to say, and for other readers, I will provide some background.

Background

A reminder on Hutter's notation: we have a class of semi-measures over sequences which I'll assume satisfy so that we interpret as the probability that a sequence starts with . Then denotes the true generating distribution (we assume this is in the class, e.g. in the case where parametrises Turing machine codes, that the environment is computable). There are data sequences and the task is to predict the next token , we view as the probability according to that the next symbol is given .

If is countable and we have weights for each satisfying then we can form the Bayes mixture . The gap between the predictive distribution associated to this mixture and the true distribution is measured by

where is for example the KL divergence between and . It can be shown that and hence this also upper bounds which is the basis for the claim that the mixture converges rapidly (in ) to the true predictive distribution provided of course the weight is nonzero for . This is (4) in Hutter's paper and Theorem 3.19 in his book.

It is worth noting that

which can be written as

where is the posterior distribution. In this sense is the Bayesian posterior predictive distribution given .

Note this doesn't really depend on the choice of weights (i.e. the prior), provided the environment is in the hypothesis class and is given nonzero weight. This is an additional choice, and one can motivate on various grounds the choice of weights with Kolmogorov complexity of the hypothesis . When one makes this choice, the bound becomes

.

As Hutter puts it on p.7 of his paper "the number of times deviates from by more than is bounded by , i.e. is proportional to the complexity of the environment". The above gives the formal basis for (part of) why Solomonoff induction is "good". When you write

The total error of our prediction in terms of KL-divergence measured in bits, across data points, should then be bounded below , where is the length of the shortest program that implements on the UTM

I believe you are referring to some variant of this result, at least that is what Solomonoff completeness means to me.

It is important to note that these two steps (bounding above for any weights, and choosing the weights ala Solomonoff to get a relation to K-complexity) are separable, and the first step is just a general fact about Bayesian statistics. This is covered well by Sterkenburg.

Continuous model classes

Ok, well and good. Now comes the tricky part: we replace by an uncountable set and try to replace sums by integrals. This is addressed in Section 3.7.2 of Hutter's book and p.5 of his paper. This treatment is only valid insofar as Laplace approximations are valid, and so is invalid when is a class of neural network weights and involves predicting sequences based on those networks in such a way that degeneracy is involved. This is the usual setting in which classical theory fails and SLT is required. Let us look at the details.

Under a nondegeneracy hypothesis (Fisher matrix invertible at the true parameter) one can prove (this is in Clarke and Barron's "Information theoretic asymptotics of Bayes methods" from 1990, also see Balasubramanian's "Statistical Inference, Occam's razor, and Statistical Mechanics on the Space of Probability Distributions" from 1997 and Watanabe's book "Mathematical theory of Bayesian statistics", aka the green book) that

where now and has nonempty interior, i.e. is actually dimensional. Here we see that in addition to the terms from before there are terms that depend on . The log determinant term is treated a bit inelegantly in Clarke and Barron and hence Hutter, one can do better, see Section 4.2 of Watanabe's green book, and in any case I am going to ignore this as a source of dependence.[1]

Note that there is now no question of bounding since the right hand side (the bound on ) increases with . Hutter argues this "still grows very slowly" (p.4 of his paper) but this seems somewhat in tension with the idea that in Solomonoff induction we sometimes like to think of all of human science as the context when we predict the next token ( here being large). This presents a conceptual problem, because in the countable case we like to think of K-complexity as an important part of the story, but it "only" enters through the choice of weight and thus the term in the bound on , whereas in the continuous case this constant order term in may be very small in comparison to the term.

In the regular case (meaning, where the nondegeneracy of the Fisher information at the truth holds) it's somewhat reasonable to say that at least the term doesn't know anything about the environment (i.e. the coefficient depends only on the parametrisation) and so the only environment dependence is still something that involves , supposing we (with some normalisation) took to have something to do with . However in the singular case as we know from SLT, the appropriate replacement[2] for this bound has a coefficient of which also depends on the environment. I don't see a clear reason why we should care primarily about a subleading term (the K-complexity, a constant order term in the asymptotic expansion in ) over the leading term (we are assuming the truth is realisable, so there is no order term).

That is, as I understand the structure of the theory, the centrality of K-complexity to Solomonoff induction is an artifact of the use of a countable hypothesis class. There are various paragraphs in e.g. Hutter's book Section 3.7.2 and p.12 of his paper which attempt to dispel the "critique from continuity" but I don't really buy them (I didn't think too hard about them, and only discussed them briefly with Hutter once, so I could be missing something).

Of course it is true that there is an large enough that the behaviour of the posterior distribution over neural networks "knows" that it is dealing with a discrete set, and can distinguish between the closest real numbers that you can represent in floating point. For values of well below this, the posterior can behave as if it is defined over the mathematically idealised continuous space. I find this no more controversial than the idea that sound waves travelling in solids can be well-described by differential equations. I agree that if you want to talk about "training" very low precision neural networks maybe AIT applies more directly, because the Bayesian statistics that is relevant is that for a discrete hypothesis class (this is quite different to producing quantised models after the fact). This seems somewhat but not entirely tangential to what you want to say, so this could be a place where I'm missing your point. In any case, if you're taking this position, then SLT is connected only in a very trivial way, since there are no learning coefficients if one isn't talking about continuous model classes.

To summarise: K-complexity usually enters the theory via a choice of prior, and in continuous model classes priors show up in the constant order terms of asymptotic expansions in .

From AIT to SLT

You write

On the meaning of the learning coefficient: Since the SLT[3] posterior would now be proven equivalent to the posterior of a bounded Solomonoff induction, we can read off how the (empirical) learning coefficient in SLT relates to the posterior in the induction, up to a conversion factor equal to the floating point precision of the network parameters.[8] This factor is there because SLT works with real numbers whereas AIT[4] works with bits. Also, note that for non-recursive neural networks like MLPs, this proof sketch would suggest that the learning coefficient is related to something more like circuit complexity than program complexity. So, the meaning of from an AIT perspective depends on the networks architecture. It's (sort of)[9] K-complexity related for something like an RNN or a transformer run in inference mode, and more circuit complexity related for something like an MLP.

I don't claim to know precisely what you mean by the first sentence, but I guess what you mean is that if you use the continuous class of predictors with parametrising neural network weights, running the network in some recurrent mode (I don't really care about the details) to make the predictions about sequence probabilities, then you can "think about this" both in an SLT way and an AIT way, and thus relate the posterior in both cases. But as far as I understand it, there is only one way: the Bayesian way.

You either think of the NN weights as a countable set (by e.g. truncating precision "as in real life") in which case you get something like but this is sort of weak sauce: you get this for any prior you want to put over your discrete set of NN weights, no implied connection to K-complexity unless you put one in by hand by taking . This is legitimately talking about NNs in an AIT context, but only insofar the existing literature already talks about general classes of computable semi-measures and you have described a way of predicting with NNs that satisfies these conditions. No relation to SLT that I can see.

Or you think of the NN weights as a continuous set in which case the sums in your Bayes mixture become integrals, the bound on becomes more involved and must require an integral (which of course has the conceptual content of "bound below by contributions from a neighbourhood of and that will bound above by something to do with " either by Laplace or more refined techniques ala Watanabe) and you are in the situation I describe above where the prior (which you can choose to be related to if you wish) ends up in the constant term and isn't the main determinant of what the posterior distribution, and thus the distance between the mixture and the truth, does.

That is, in the continuous case this is just the usual SLT story (because both SLT and AIT are just the standard Bayesian story, for different kinds of models with a special choice of prior in the latter case) where the learning coefficient dominates how the Bayesian posterior behaves with .

So for there to be a relation between the K-complexity and learning coefficient, it has to occur in some other way and isn't "automatic" from formulating a set of NNs as like codes for a UTM. So this is my concern about Claim 6. Maybe you have a more sophisticated argument in mind.

Free parameters and learning coefficients

In a different setting I do believe there is such a relation, Theorem 4.1 of Clift-Murfet-Wallbridge (2021) as well as Tom Waring's thesis make the point that unused bits in a TM code are a form of degeneracy, when you embed TM codes into a continuous space of noisy codes. Then the local learning coefficient at a TM code is upper bounded by something to with its length (and if you take a function and turn it into a synthesis problem, the global learning coefficient will therefore be upper bounded by the Kolmogorov complexity of the function). However the learning coefficient contains more information in this case.

Learning coefficient vs K

In the NN case the only relation between the learning coefficient of a network parameter and the Kolmogorov complexity that I know follows pretty much immediately from Theorem 7.1 (4) of Watanabe's book (see also p.5 of our paper https://arxiv.org/abs/2308.12108). You can think of the following as one starting point of the SLT perspective on MDL, but my PhD student Edmund Lau has a more developed story in his PhD thesis.

Let be a local minimum of a population loss (I'm just going to use the notation from our paper) and define for some the quantity to be the volume of the set , regularised to be in some ball and with some appropriately decaying measure if necessary, none of this matters much for what I'm going to say. Suppose we somehow produce a parameter , the bit cost of this is ignored in what follows, and that we want to refine this to a parameter within an error tolerance (say set by our floating point precision) that is, by taking a sequence of increasingly good approximations

such that at each stage, and , so . The aforementioned results say that (ignoring the multiplicity) the bit cost of each of these refinements is approximately the local learning coefficient . So the overall length of the description of given done in this manner is . This suggests

We can think of as just the measure of the number of orders of magnitude covered by our floating point representation for losses.

- ^

There is arguably another gap in the literature here. Besides this regularity assumption, there is also the fact that the main reference Hutter is relying on (Clarke and Barron) works in the iid setting whereas Hutter works in the non-iid setting. He sketches in his book how this doesn't matter and after thinking about it briefly I'm inclined to agree in the regular case, I didn't think about it generally. Anyway I'll ignore this here since I think it's not what you care about.

- ^

Note that SLT contains asymptotic expansions for the free energy, whereas looks more like the KL divergence between the truth and the Bayesian posterior predictive distribution, so what I'm referring to here is a treatment of the Clarke-Barron setting using Watanabe's methods. Bin Yu asked Susan Wei (a former colleague of mine at the University of Melbourne, now at Monash University) and I if such a treatment could be given and we're working on it (not very actively, tbh).

Indeed, very interesting!

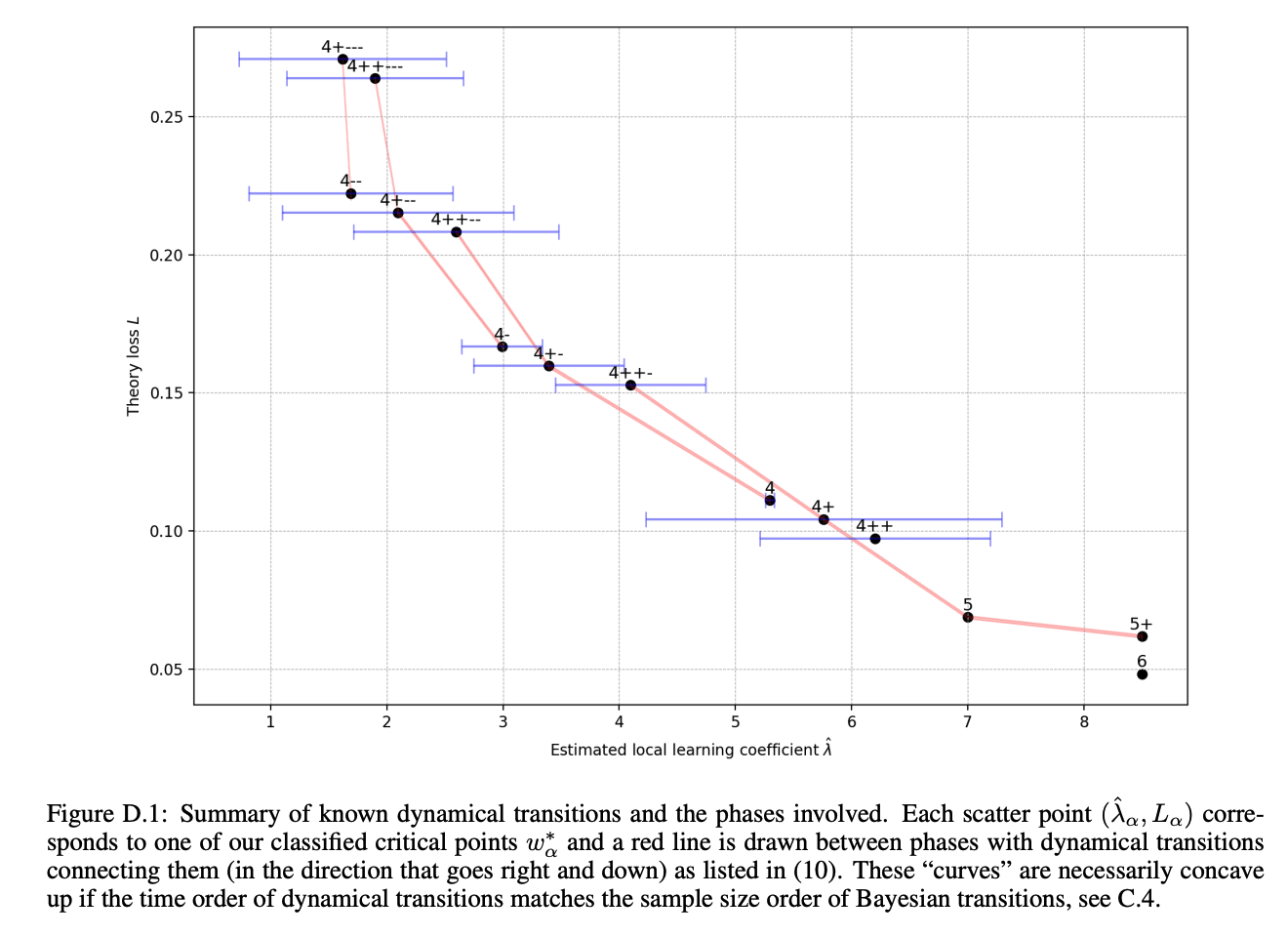

The correlation between training loss and LLC is especially unexpected to us

It's not unusual to see an inverse relationship between loss and LLC over training a single model (since lower loss solutions tend to be more complex). This can be seen in the toy model of superposition setting (plot attached) but it is also pronounced in large language models. I'm not familiar with any examples that look like your plot, where points at the end of training runs show a linear relationship.

We then record the training loss, weight norm, and estimated LLC of all models at the end of training

For what it's worth, Edmund Lau has some experiments where he's able to get models to grok and find solutions with very large weight norm (but small LLC). I am not tracking the grokking literature closely enough to know how surprising/interesting this is.

Wu et al?