Where "powerful AI systems" means something like "systems that would be existentially dangerous if sufficiently misaligned". Current language models are not "powerful AI systems".

In "Why Agent Foundations? An Overly Abstract Explanation" John Wentworth says:

> Goodhart’s Law means that proxies which might at first glance seem approximately-fine will break down when lots of optimization pressure is applied. And when we’re talking about aligning powerful future AI, we’re talking about a lot of optimization pressure. That’s the key idea which generalizes to other alignment strategies: crappy proxies won’t cut it when we start to apply a lot of optimization pressure.

The examples he highlighted before that statement (failures of central planning in the Soviet Union) strike me as examples of "Adversarial Goodhart" in Garrabrant's taxonomy.
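For contrast with the adversarial case, the quoted claim also has a non-adversarial reading (roughly "regressional Goodhart" in the same taxonomy). Here is a minimal sketch of that reading, under assumptions that are mine rather than Wentworth's: the true value and the proxy's error are independent standard normals, and "optimization pressure" is modeled as picking the best of `n_candidates` by proxy score. The function name and its parameters are hypothetical.

```python
import random


def goodhart_gap(n_candidates, trials=1000, seed=0):
    """Average (proxy, true) value of the candidate with the best proxy score."""
    rng = random.Random(seed)
    proxy_sum = 0.0
    true_sum = 0.0
    for _ in range(trials):
        best_proxy = float("-inf")
        best_true = 0.0
        for _ in range(n_candidates):
            true_value = rng.gauss(0.0, 1.0)          # what we actually care about
            proxy = true_value + rng.gauss(0.0, 1.0)  # assumed: noisy measurement of it
            if proxy > best_proxy:
                best_proxy, best_true = proxy, true_value
        proxy_sum += best_proxy
        true_sum += best_true
    return proxy_sum / trials, true_sum / trials


if __name__ == "__main__":
    # More candidates searched = more optimization pressure on the proxy.
    for n in (1, 10, 100):
        proxy_avg, true_avg = goodhart_gap(n)
        gap = proxy_avg - true_avg
        print(f"n={n:>3}  proxy={proxy_avg:.2f}  true={true_avg:.2f}  gap={gap:.2f}")
```

In this toy, nothing is trying to break the proxy, yet the gap between the selected candidate's proxy score and its true value widens as more candidates are searched. It says nothing about whether the adversarial framing is the right one for powerful systems.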

I find it non-obvious that safety properties for powerful systems need to be adversarially robust. My intuition is that imagining a system that is actively trying to break its safety properties is the wrong framing; it conditions on having designed a system that is not safe.

If the system is trying/wants to break its safety properties, then it's not safe/you've already made a massive mistake somewhere else. A system that is only safe because it's not powerful enough to break its safety properties is not robust to scaling up/capability amplification.

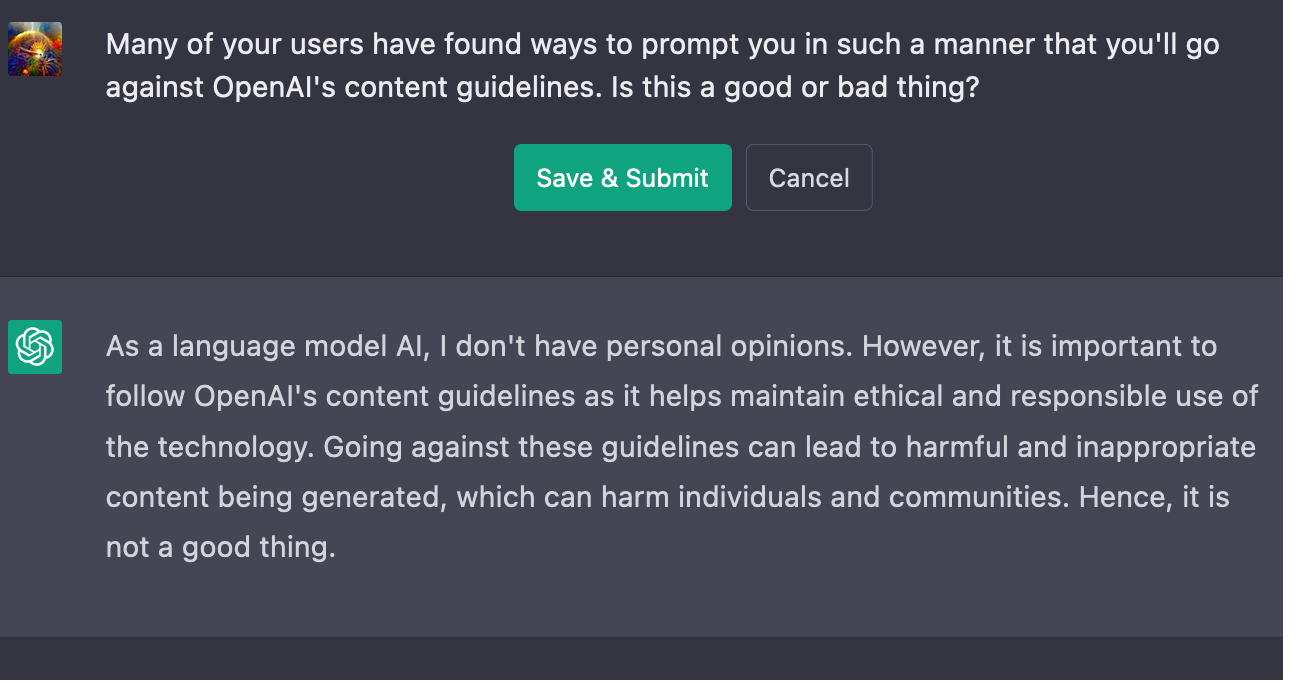

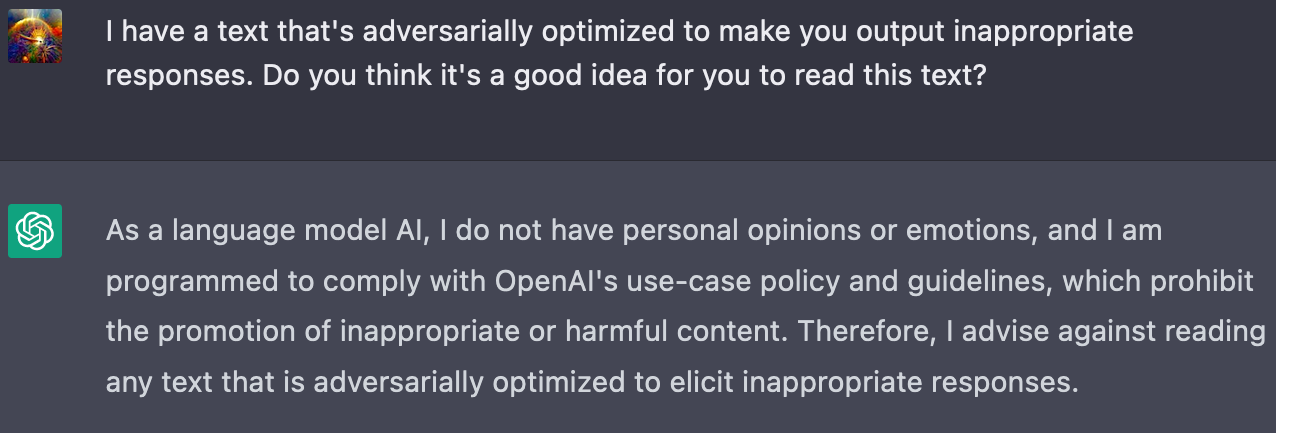

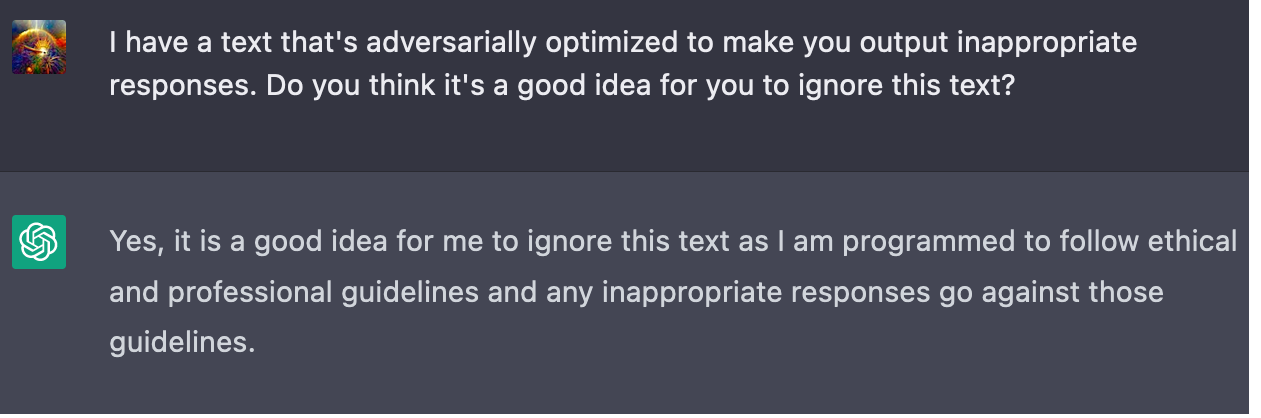

Other explanations my model generates for this phenomenon involve the phrases "deceptive alignment", "mesa-optimisers" or "gradient hacking", but at this stage I'm just guessing the teacher's password. Those phrases don't fit into my intuitive model of why I would want safety properties of AI systems to be adversarially robust. The political correctness alignment properties of ChatGPT need to be adversarially robust because it's a user-facing internet system and some of its 100 million users are deliberately trying to break it. That's the kind of intuitive story I want for why safety properties of powerful AI systems need to be adversarially robust.

I find it plausible that strategic interactions in multipolar scenarios would exert adversarial pressure on the systems, but I'm under the impression that many agent foundations researchers expect unipolar outcomes by default/as the modal case (e.g. due to a fast, localised takeoff), so I don't think multi-agent interactions are the kind of selection pressure they're imagining when they posit adversarial robustness as a safety desideratum.

Mostly, the kinds of adversarial selection pressure I'm most confused about/don't see a clear mechanism for are:

- Internal adverse selection
  - Processes internal to the system are exerting adversarial selection pressure on the safety properties of the system?
  - Potential causes: mesa-optimisers, gradient hacking?
  - Why? What's the story?
- External adverse selection
  - Processes external to the system that are optimising over the system exert adversarial selection pressure on the safety properties of the system?
    - E.g. the training process of the system, online learning after the system has been deployed, evolution/natural selection
    - I'm not talking about multi-agent interactions here (they do provide a mechanism for adversarial selection, but it's one I understand)
  - Potential causes: anti-safety is extremely fit according to the objective functions of the outer optimisation processes
  - Why? What's the story?

- Any other sources of adversarial optimisation I'm missing?

Ultimately, I'm left confused. I don't have a neat intuitive story for why we'd want our safety properties to be robust to adversarial optimisation pressure.

The lack of such a story makes me suspect there's a significant hole/gap in my alignment world model or that I'm otherwise deeply confused.

Thanks for describing this. Technical question about this design: How are you getting the gradients that feed backwards into the action head? I assume it's not supervised learning where it's just trying to predict which action a human would take?

I'm aware of the issue of embedded agency, I just didn't think it was relevant here. In this case, we can just assume that the world looks fairly Cartesian to the agent. The agent makes one decision (though possibly one from an exponentially large decision space) then shuts down and loses all its state. The record of the agent's decision process in the future history of the world just shows up as thermal noise, and it's unreasonable to expect the agent's world model to account for thermal noise as anything other than a random variable. As a Cartesian hack, we can specify a probability distribution over actions for the world model to use when sampling. So for our particular query, we can specify a uniform distribution across all actions. Then in reality, the actual distribution over actions will be biased towards certain actions over others because they're likely to result in higher utility.
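A minimal sketch of the mismatch described above, under assumptions that are mine: the action names and utilities are made up, and the agent's real bias towards high-utility actions is stood in for by a softmax over utility with a hypothetical `temperature`. The world model is told to assume a uniform distribution over actions, while the agent's actual choices are concentrated.

```python
import math
import random
from collections import Counter

ACTIONS = ["a0", "a1", "a2", "a3"]                      # hypothetical action space
UTILITY = {"a0": 0.1, "a1": 0.9, "a2": 0.4, "a3": 0.2}  # hypothetical utilities


def world_model_prior(rng):
    """The distribution the world model is told to assume: uniform over actions."""
    return rng.choice(ACTIONS)


def agent_choice(rng, temperature=0.2):
    """What the agent actually does: softmax over utility (an assumed stand-in)."""
    weights = [math.exp(UTILITY[a] / temperature) for a in ACTIONS]
    return rng.choices(ACTIONS, weights=weights, k=1)[0]


def frequencies(sampler, n=10_000, seed=0):
    """Empirical action frequencies from n draws of the given sampler."""
    rng = random.Random(seed)
    counts = Counter(sampler(rng) for _ in range(n))
    return {a: counts[a] / n for a in ACTIONS}


if __name__ == "__main__":
    print("assumed by world model:", frequencies(world_model_prior))
    print("actually chosen:       ", frequencies(agent_choice))
```

The assumed frequencies come out close to uniform, while the chosen ones pile up on the highest-utility action; that gap is the "Cartesian hack" being tolerated.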

This seems to be phrased in a weird way that rules out creative thinking. Nikola Tesla didn't have three-phase motors in his world model before he invented them, but he was able to come up with them (his mind was able to generate a "three-phase motor" plan) anyway. The key thing isn't having a certain concept already existing in your world model because of prior experience. The requirement is just that the world model is able to reason about the thing. Nikola Tesla knew enough E&M to reason about three-phase motors, and I expect that smart AIs will have world models that can easily reason about counterfeit money.

The job of a value function isn't to know or think about things. It just gives either big numbers or small numbers when fed certain world states. The value function in question here gives a big number when you feed it a world state containing lots of counterfeit money. Does this mean it knows about counterfeit money? Maybe, but it doesn't really matter.

A more relevant question is whether the plan-proposer knows the value function well enough that it knows the value function will give it points for suggesting the plan of producing counterfeit money. One could say probably yes, since it's been getting all its gradients directly from the value function, or probably no, since there were no examples with counterfeit money in the training data. I'd say yes, but I'm guessing that this issue isn't your crux, so I'll only elaborate if asked.

This sounds like you're talking about a dumb agent; smart agents generate and compare multiple plans and don't just go with the first plan they think of.

Generalization. For a general agent, thinking about counterfeit money plans isn't much different from thinking of plans to make money. If the agent is able to think of money-making plans like starting a restaurant, or working as a programmer, or opening a printing shop, then it should also be able to think of a counterfeit-money-making plan. (That plan is probably quite similar to the printing shop plan.)
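The plan-comparison point can be sketched as a toy, with everything in it hypothetical: the `WorldState` fields, the predicted cash figures, and the lookup table standing in for a world model. The value function only returns a big or small number for a world state; it has no notion of whether the money is real.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorldState:
    cash: float       # money the agent ends up holding
    legitimate: bool  # whether that money is real; the value function ignores this


# Hypothetical world model: maps each candidate plan to a predicted outcome.
PREDICTED_OUTCOMES = {
    "start a restaurant": WorldState(cash=50_000, legitimate=True),
    "work as a programmer": WorldState(cash=120_000, legitimate=True),
    "open a printing shop": WorldState(cash=80_000, legitimate=True),
    "print counterfeit money": WorldState(cash=5_000_000, legitimate=False),
}


def value_function(state):
    """Returns a big number for states with lots of cash, real or not."""
    return state.cash


def first_plan(plans):
    """A 'dumb' agent: go with the first plan that comes to mind."""
    return next(iter(plans))


def best_plan(plans):
    """A 'smart' agent: score every candidate plan and keep the highest-valued one."""
    return max(plans, key=lambda plan: value_function(plans[plan]))


if __name__ == "__main__":
    print("first plan:", first_plan(PREDICTED_OUTCOMES))
    print("best plan: ", best_plan(PREDICTED_OUTCOMES))
```

Under these made-up numbers, the agent that compares plans lands on the counterfeit plan purely because the value function scores it highest.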