Anthropic employees: stop deferring to Dario on politics. Think for yourself.

Do your company's actions actually make sense if it is optimizing for what you think it is optimizing for?

Anthropic lobbied against mandatory RSPs, against regulation, and, for the most part, didn't even support SB-1047. The difference between Jack Clark and OpenAI's lobbyists is that publicly, Jack Clark talks about alignment. But when they talk to government officials, there's little difference on the question of existential risk from smarter-than-human AI systems. They do not honestly tell the governments what the situation is like. Ask them yourself.

A while ago, OpenAI hired a lot of talent due to its nonprofit structure.

Anthropic is now doing the same. They publicly say the words that attract EAs and rats. But it's very unclear whether they institutionally care.

Dozens work at Anthropic on AI capabilities because they think it is net-positive to get Anthropic at the frontier, even though they wouldn't work on capabilities at OAI or GDM.

It is not net-positive.

Anthropic is not our friend. Some people there do very useful work on AI safety (where "useful" mostly means "shows that the predictions of MIRI-s...

I think you should try to clearly separate the two questions of

1. Is their work on capabilities a net positive or net negative for humanity's survival?

2. Are they trying to "optimize" for humanity's survival, and do they care about alignment deep down?

I strongly believe 2 is true, because why on Earth would they want to make an extra dollar if misaligned AI kills them in addition to everyone else? Won't any measure of their social status be far higher after the singularity, if it's found that they tried to do the best for humanity?

I'm not sure about 1. I think even they're not sure about 1. I heard that they held back on releasing their newer models until OpenAI raced ahead of them.

You (and all the people who upvoted your comment) have a chance of convincing them (a little) in a good faith debate maybe. We're all on the same ship after all, when it comes to AI alignment.

PS: AI safety spending is only $0.1 billion while AI capabilities spending is $200 billion. A company which adds a comparable amount of effort on both AI alignment and AI capabilities should speed up the former more than the latter, so I personally hope for their success. I may be wrong, but it's my best guess...

I don't agree that the probability of alignment research succeeding is that low. 17 years or 22 years of trying and failing is strong evidence against it being easy, but doesn't prove that it is so hard that increasing alignment research is useless.

People worked on capabilities for decades, and never got anywhere until recently, when the hardware caught up, and it was discovered that scaling works unexpectedly well.

There is a chance that alignment research now might be more useful than alignment research earlier, though there is uncertainty in everything.

We should have uncertainty in the Ten Levels of AI Alignment Difficulty.

The comparison

It's unlikely that 22 years of alignment research is insufficient but 23 years of alignment research is sufficient.

But even more unlikely is the possibility that $200 billion on capabilities research plus $0.1 billion on alignment research is survivable, while $210 billion on capabilities research plus $1 billion on alignment research is deadly.

In the same way adding a little alignment research is unlikely to turn failure into success, adding a little capabilities research is unlikely to turn success into failure.
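The arithmetic behind this comparison can be made explicit with a short sketch. The dollar figures are the ones quoted in the comment and are taken as given rather than verified; the variable and function names are illustrative only. It computes relative growth in each budget and says nothing about how productive a marginal dollar is on either side.

```python
# Figures quoted in the comment (billions of USD); taken as given, not verified.
capabilities_before, alignment_before = 200.0, 0.1
capabilities_after, alignment_after = 210.0, 1.0


def growth_factor(before: float, after: float) -> float:
    """Multiplicative growth of a budget: after / before."""
    return after / before


def alignment_share(alignment: float, capabilities: float) -> float:
    """Fraction of combined spending that goes to alignment."""
    return alignment / (alignment + capabilities)


cap_growth = growth_factor(capabilities_before, capabilities_after)
align_growth = growth_factor(alignment_before, alignment_after)
share_before = alignment_share(alignment_before, capabilities_before)
share_after = alignment_share(alignment_after, capabilities_after)

print(f"capabilities budget grows by a factor of {cap_growth:.2f}")
print(f"alignment budget grows by a factor of {align_growth:.2f}")
print(f"alignment share of total: {share_before:.4%} -> {share_after:.4%}")
```

Under these numbers the capabilities budget grows by about 5% while the alignment budget grows roughly tenfold, which is the asymmetry the comparison relies on.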

It's also unlikely that alignme...

But it's very unclear whether they institutionally care.

There are certain kinds of things that it's essentially impossible for any institution to effectively care about.

Give me your model, with numbers, that shows supporting Anthropic to be a bad bet, or admit you are confused and that you don't actually have good advice to give anyone.

It seems to me that other possibilities exist, besides "has model with numbers" or "confused." For example, that there are relevant ethical considerations here which are hard to crisply, quantitatively operationalize!

One such consideration which feels especially salient to me is the heuristic that before doing things, one should ideally try to imagine how people would react, upon learning what you did. In this case the action in question involves creating new minds vastly smarter than any person, which pose double-digit risk of killing everyone on Earth, so my guess is that the reaction would entail things like e.g. literal worldwide riots. If so, this strikes me as the sort of consideration one should generally weight more highly than their idiosyncratic utilitarian BOTEC.

Does your model predict literal worldwide riots against the creators of nuclear weapons? They posed a single-digit risk of killing everyone on Earth (total, not yearly).

It would be interesting to live in a world where people reacted with scale sensitivity to extinction risks, but that's not this world.

I don't think that most people, upon learning that Anthropic's justification was "other companies were already putting everyone's lives at risk, so our relative contribution to the omnicide was low" would then want to abstain from rioting. Common ethical intuitions are often more deontological than that, more like "it's not okay to risk extinction, period." That Anthropic aims to reduce the risk of omnicide on the margin is not, I suspect, the point people would focus on if they truly grokked the stakes; I think they'd overwhelmingly focus on the threat to their lives that all AGI companies (including Anthropic) are imposing.

If you trust the employees of Anthropic to not want to be killed by OpenAI

In your mind, is there a difference between being killed by AI developed by OpenAI and by AI developed by Anthropic? What positive difference does it make, if Anthropic develops a system that kills everyone a bit earlier than OpenAI would develop such a system? Why do you call it a good bet?

AGI is coming whether you like it or not

Nope.

You’re right that the local incentives are not great: having a more powerful model is hugely economically beneficial, unless it kills everyone.

But if 8 billion humans knew what many LessWrong users know, OpenAI, Anthropic, DeepMind, and others could not develop what they want to develop, and AGI wouldn’t come for a while.

Off the top of my head, it likely could actually be sufficient to either (1) inform some fairly small subset of 8 billion people of what the situation is or (2) convince that subset that the situation as we know it is likely enough to be the case that some measures to figure out the risks and not be killed by AI in the meantime are justified. It’s also helpful to (3) suggest/introduce/support policies that change the incentives to race or increase the chance of ...

People representing Anthropic argued against government-required RSPs. I don’t think I can share the details of the specific room where that happened, because it will be clear who I know this from.

Ask Jack Clark whether that happened or not.

When do you think would be a good time to lock in regulation? I personally doubt RSP-style regulation would even help, but the notion that now is too soon/risks locking in early sketches, strikes me as in some tension with e.g. Anthropic trying to automate AI research ASAP, Dario expecting ASL-4 systems between 2025—the current year!—and 2028, etc.

AFAIK Anthropic has not unequivocally supported the idea of "you must have something like an RSP" or even SB-1047 despite many employees, indeed, doing so.

Since this seems to be a crux, I propose a bet to @Zac Hatfield-Dodds (or anyone else at Anthropic): someone shows random people in San Francisco Anthropic’s letter to Newsom on SB-1047. I would bet that among the first 20 who fully read at least one page, over half will say that Anthropic’s response to SB-1047 is closer to presenting the bill as 51% good and 49% bad than presenting it as 95% good and 5% bad.

Zac, at what odds would you take the bet?

(I would be happy to discuss the details.)

Sorry, I'm not sure what proposition this would be a crux for?

More generally, "what fraction good vs bad" seems to me a very strange way to summarize Anthropic's Support if Amended letter or letter to Governor Newsom. It seems clear to me that both are supportive in principle of new regulation to manage emerging risks, and offering Anthropic's perspective on how best to achieve that goal. I expect most people who carefully read either letter would agree with the preceding sentence and would be open to bets on such a proposition.

Personally, I'm also concerned about the downside risks discussed in these letters - because I expect they both would have imposed very real costs, and reduced the odds of the bill passing and similar regulations passing and enduring in other jurisdictions. I nonetheless concluded that the core of the bill was sufficiently important and urgent, and downsides manageable, that I supported passing it.

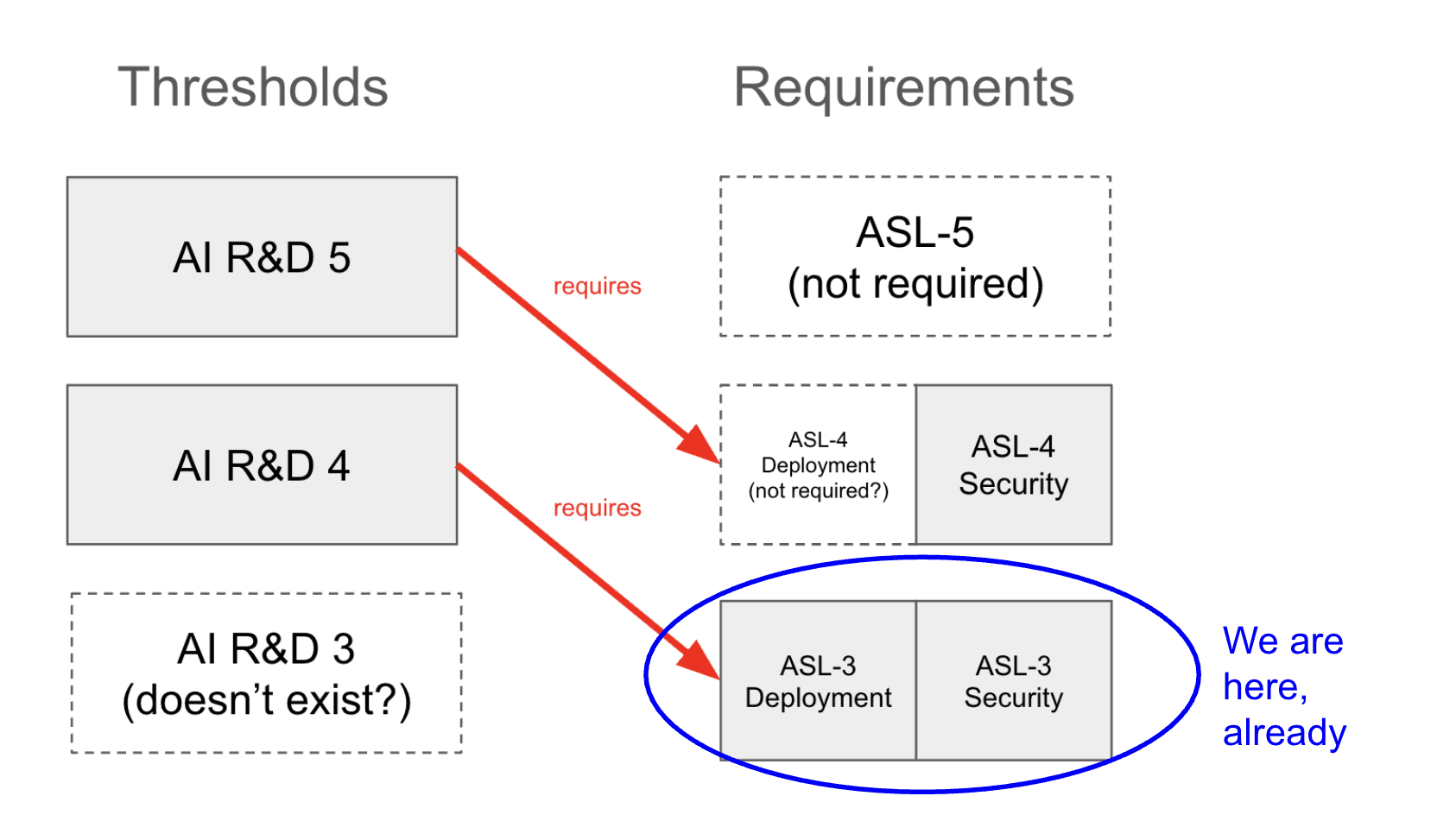

In RSP, Anthropic committed to define ASL-4 by the time they reach ASL-3.

With Claude 4 released today, they have reached ASL-3. They haven’t yet defined ASL-4.

Turns out, they have quietly walked back on the commitment. The change happened less than two months ago and, to my knowledge, was not announced on LW or other visible places unlike other important changes to the RSP. It’s also not in the changelog on their website; in the description of the relevant update, they say they added a new commitment but don’t mention removing this one.

Anthropic’s behavior...

I directionally agree!

Btw, since this is a call to participate in a PauseAI protest on my shortform, do your colleagues have plans to do anything about my ban from the PauseAI Discord server—like allowing me to contest it (as I was told there was a discussion of making a procedure for) or at least explaining it?

Because it’s lowkey insane!

For everyone else, who might not know: a year ago I, in context, on the PauseAI Discord server, explained my criticism of PauseAI’s dishonesty and, after being asked to, shared proofs that Holly publicly lied about our personal communications, including sharing screenshots of our messages; a large part of the thread was then deleted by the mods because they were against personal messages getting shared, without warning (I would’ve complied if asked by anyone representing a server to delete something!) or saving/allowing me to save any of the removed messages in the thread, including those clearly not related to the screenshots that you decided were violating the server norms; after a discussion of that, the issue seemed settled and I was asked to maybe run some workshops for PauseAI to improve PauseAI’s comms/proofreading/factchecking; and then, mo...

I wrote the article Mikhail referenced and wanted to clarify some things.

The thresholds are specified, but the original commitment says, "We commit to define ASL-4 evaluations before we first train ASL-3 models (i.e. before continuing training beyond when ASL-3 evaluations are triggered). Similarly, we commit to define ASL-5 evaluations before training ASL-4 models, and so forth," and, regarding ASL-4, "Capabilities and warning sign evaluations defined before training ASL-3 models."

The latest RSP says this of CBRN-4 Required Safeguards, "We expect this threshold will require the ASL-4 Deployment and Security Standards. We plan to add more information about what those entail in a future update."

Additionally, AI R&D 4 (confusingly) corresponds to ASL-3 and AI R&D 5 corresponds to ASL-4. This is what the latest RSP says about AI R&D 5 Required Safeguards, "At minimum, the ASL-4 Security Standard (which would protect against model-weight theft by state-level adversaries) is required, although we expect a higher security standard may be required. As with AI R&D-4, we also expect an affirmative case will be required."

AFAICT, now that ASL-3 has been implemented, the upcoming AI R&D threshold, AI R&D-4, would not mandate any further security or deployment protections. It only requires ASL-3. However, it would require an affirmative safety case concerning misalignment.

I assume this is what you meant by "further protections" but I just wanted to point this fact out for others, because I do think one might read this comment and expect AI R&D 4 to require ASL-4. It doesn't.

I am quite worried about misuse when we hit AI R&D 4 (perhaps even more so than I'm worried about misalignment), and if I understand the policy correctly, there are no further protections against misuse mandated at this point.

Regardless, it seems like Anthropic is walking back its previous promise: "We have decided not to maintain a commitment to define ASL-N+1 evaluations by the time we develop ASL-N models." The stance that Anthropic takes toward its commitments (things which can be changed later if they see fit) seems to cheapen the term, and makes me skeptical that the policy, as a whole, will be upheld. If people want to orient to the RSP as a provisional intent to act responsibly, then this seems appropriate. But it should not be mistaken for, or conflated with, a real promise to do what was said.

Worked on this with Demski. Video, report.

Any update to the market is (equivalent to) updating on some kind of information. So all you can do is dynamically choose what to update on and what not to.* Unfortunately, whenever you choose not to update on something, you are giving up on the asymptotic learning guarantees of policy market setups. So the strategic gains from updatelessness (like not falling into traps) are in a fundamental sense irreconcilable with the learning gains from updatefulness. That doesn't prevent you from being pretty smart about deciding exactly what to update on... but due to embeddedness problems and the complexity of the world, it seems to be the norm (rather than the exception) that you cannot be sure a priori of what to update on (you just have to make some arbitrary choices).

*For avoidance of doubt, what matters for whether you have updated on X is not "whether you have heard about X", but rather "whether you let X factor into your decisions". Or at least, this is the case for a sophisticated enough external observer (assessing whether you've updated on X), not necessarily all observers.

I think the first question to think about is how to use them to make CDT decisions. You can create a market about a causal effect if you have control over the decision and you can randomise it to break any correlations with the rest of the world, assuming the fact that you’re going to randomise it doesn’t otherwise affect the outcome (or bettors don’t think it will).

Committing to doing that does render the market useless for choosing policy, but you could randomly decide whether to randomise or to make the decision via whatever the process you actually want to use, and have the market be conditional on the former. You probably don’t want to be randomising your policy decisions too often, but if liquidity wasn’t an issue you could set the probability of randomisation arbitrarily low.
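A toy simulation may make the "randomly decide whether to randomise" idea concrete. Everything in it is an assumption made for illustration: the hidden confounder, the outcome probabilities, and the use of empirical frequencies as a stand-in for the prices that well-calibrated bettors would converge on. It also assumes, as the comment does, that the act of randomising does not itself affect the outcome.

```python
import random


def simulate(n_trials: int, p_randomise: float, seed: int = 0):
    """Estimate the effect of an action in the randomised and the usual branch.

    Hypothetical setup: a hidden binary confounder `u` raises the chance of a
    good outcome and also drives the usual decision process (action = u).
    The true causal effect of the action is +0.2 by construction.
    """
    rng = random.Random(seed)
    outcomes = {"randomised": {0: [], 1: []}, "usual": {0: [], 1: []}}

    for _ in range(n_trials):
        u = int(rng.random() < 0.5)  # hidden confounder
        if rng.random() < p_randomise:
            # Rare branch: a coin flip breaks any correlation with u.
            branch, action = "randomised", int(rng.random() < 0.5)
        else:
            # Usual branch: the decision tracks the confounder.
            branch, action = "usual", u
        p_good = 0.3 + 0.2 * action + 0.4 * u
        outcomes[branch][action].append(int(rng.random() < p_good))

    def effect(branch: str) -> float:
        taken, not_taken = outcomes[branch][1], outcomes[branch][0]
        return sum(taken) / len(taken) - sum(not_taken) / len(not_taken)

    return effect("randomised"), effect("usual")


randomised_effect, usual_effect = simulate(n_trials=200_000, p_randomise=0.1)
print(f"effect estimated from randomised trials: {randomised_effect:.3f}")
print(f"effect estimated from usual decisions:   {usual_effect:.3f}")
```

In this sketch the randomised branch should recover something close to the built-in causal effect, while the usual branch mixes it with the confounder's influence. Lowering `p_randomise` keeps policy disruption rare at the cost of fewer randomised samples, which is the liquidity trade-off the comment mentions.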

Then FDT… I dunno, seems hard.

I do not believe Anthropic as a company has a coherent and defensible view on policy. It is known that, while hiring, they said things they did not stand by (they claim to have good internal reasons for changing their minds, but people did go to work for them because of impressions that Anthropic created and then decided not to uphold). It is known in policy circles that Anthropic's lobbyists are similar to OpenAI's.

From Jack Clark, a billionaire co-founder of Anthropic and its chief of policy, today:

Dario is talking about countries of geniuses in datacenters in the...

People are arguing about the answer to the Sleeping Beauty problem! I thought this was pretty much dissolved with this post's title! But there are lengthy posts and even a prediction market!

Sleeping Beauty is an edge case where different reward structures are intuitively possible, and so people imagine different game payout structures behind the definition of “probability”. Once the payout structure is fixed, the confusion is gone. With a fixed payout structure and preference framework rewarding the number you output as “probability”, people don’t have a disagreem...

[RETRACTED after Scott Aaronson’s reply by email]

I'm surprised by Scott Aaronson's approach to alignment. He has mentioned in a talk that a research field needs to have at least one of two: experiments or a rigorous mathematical theory, and so he's focusing on the experiments that are possible to do with the current AI systems.

The alignment problem is centered around optimization producing powerful consequentialist agents appearing when you're searching in spaces with capable agents. The dynamics at the level of superhuman general agents are not something ...
