I'm a big fan of work that imagines plausible future scenarios in which governments become more concerned about AI risks. Thank you for this!

I find myself least convinced by the sections about why "hard nationalization" isn't going to happen. I don't even necessarily disagree with the conclusion, but I think these sections treat AI like a "normal technology" and many of the reasons listed don't seem like they would be particularly important if the US was super concerned about AI.

IMO, the main factor determining whether or not hard nationalization is a feasible option is the extent to which the US government is concerned about superintelligence risks. I think the main cruxes will be things like "how concerned is the US about AI risks", "how concerned is the US about AI misalignment risks", and "to what extent does the US believe that superintelligence is truly a civilization-defining technology". In the worlds where the US government gets Very Concerned, I suspect the economic/legal challenges would be figured out, and there would either be full nationalization or something that looks pretty indistinguishable from full nationalization for all practical purposes.

Nonetheless, I support this work overall and find myself very supportive of the following statement:

Rather than committing to a specific model of the future, we believe the most effective analysis today will consider a wide range of scenarios that describe actions the US government will take in response to global circumstances. By enumerating many of the plausible scenarios regarding soft nationalization, we believe AI governance researchers can better ground our research in likely futures and design better interventions.

Thanks for the feedback, Akash!

Re: whether total nationalization will happen, I think one of our early takeaways here is that ownership of frontier AI is not the same as control of frontier AI, and also that the US government is likely interested in only certain types of control.

That is, it seems like there are a number of plausible scenarios where the US government has significant control over AI applications that involve national security (cybersecurity, weapons development, denial of technology to China), but also little-to-no "ownership" of frontier AI labs, and relatively less control over commercial / civilian applications of superintelligent AI, thereby achieving its goals with less involvement.

From this perspective, it's a bit more gray what the additional value-add of "ownership" would be for the US government, given the legal / political overhead. Certainly it's still possible with significant motivation.

---

One additional thing I think is that there's a wide gap between the worldviews of policymakers (focused on current national security concerns, not prioritizing superintelligence scenarios), and AI safety / capabilities researchers (highly focused on superintelligence scenarios, and consequently total nationalization).

Even if "total / hard nationalization" is the end-state, I think it's quite possible that this gap will take time to close! Political systems & regulation tend to move a lot slower than technological advances. In the case there's a 3 - 5 year period where policymakers are "ramping up" to the same level of concern / awareness as AI safety researchers, I expect some of these policy levers will be pulled during that "ramp-up" period.

Very nice!

I've been puzzled at the assumption that private corporations will be allowed to make decisions of such consequence that they amount to determining the future, or effectively taking over the world. I think the false dichotomy of nationalization vs autonomy has helped perpetuate this important error in reasoning. Soft nationalization is a great term to introduce.

I recently gave a very brief set of arguments similar to those you've fleshed out so well here:

Governments will take control of AGI before it's ASI, right?

Asserting control over critical decisions seems so easy, so much the government's actual job, and such a no-brainer as capabilities visibly improve that it seems inevitable to me. I think the reason the alignment community hasn't adopted this view is a historical belief in the assumption of an inattentive humanity. This made sense when we expected a fast takeoff, but with even a few years' worth of slow takeoff before AGI is capable of a relatively certain pivotal act, government control of some sort seems almost certain.

The consequences of government control seem like they'll include allowing more AGI development by default, creating a scenario in which humans control multiple RSI-capable AGI as they improve to ASI. This raises the question: "If we solve alignment, do we die anyway?"

I'd definitely agree with the perspective you're sharing!

Even in a fast-takeoff / total nationalization scenario, I don't think politicians will be so blindsided that the political discussion will go from "regulation as usual" to "total nationalization" in a couple of months. It's possible, but unlikely.

I think it's equally / more likely that the amount of government involvement will scale over 2 - 5 years, and during that time a lot of these policy levers will be attempted. The success / failure of some of these policy levers to achieve US goals will probably determine if involvement proceeds all the way to "total nationalization".

For some time now, I've been wondering about whether the US government can exercise a hard-nationalization-equivalent level of control over a lab's decisions via mostly informal, unannounced, low-public-legibility means. Seems worth looking into.

Could last year's revamping of OpenAI's board have been influenced by government pressure to accept some government-approved board members? Nakasone's appointment is looking more interesting after reading this post.

That's a very good point! Technically he's retired, but I wonder how much his appointment is related to preparing for potential futures where OpenAI needs to coordinate with the US government on cybersecurity issues...

Crossposted to the EA Forum.

We have yet to see anyone describe a critical element of effective AI safety planning: a realistic model of the upcoming role the US government will play in controlling frontier AI.

The rapid development of AI will lead to increasing national security concerns, which will in turn pressure the US to progressively take action to control frontier AI development. This process has already begun,[1] and it will only escalate as frontier capabilities advance.

However, we argue that existing descriptions of nationalization[2] along the lines of a new Manhattan Project[3] are unrealistic and reductive. The state of the frontier AI industry — with more than $1 trillion[4] in private funding, tens of thousands of participants, and pervasive economic impacts — is unlike nuclear research or any previously nationalized industry. The traditional interpretation of nationalization, which entails bringing private assets under the ownership of a state government,[5] is not the only option available. Government consolidation of frontier AI development is legally, politically, and practically unlikely.

We expect that AI nationalization won't look like a consolidated government-led “Project”, but rather like an evolving application of US government control over frontier AI labs. The US government can select from many different policy levers to gain influence over these labs, and will progressively pull these levers as geopolitical circumstances, particularly around national security, seem to demand it.

Government control of AI labs will likely escalate as concerns over national security grow. The boundary between "regulation" and "nationalization" will become hazy. In particular, we believe the US government can and will satisfy its national security concerns in nearly all scenarios by combining sets of these policy levers, and would only turn to total nationalization as a last resort.

We’re calling the process of progressively increasing government control over frontier AI labs via iterative policy levers soft nationalization.

It’s important to clarify that we are not advocating for a national security approach to AI governance, nor yet supporting any individual policy actions. Instead, we are describing a model of US behavior that we believe is likely to be accurate, with the aim of improving the effectiveness of AI safety agendas.

Part 1: What is Soft Nationalization?

Our Model of US Control Over AI Labs

We’d like to define a couple terms used in this article:

We argue that soft nationalization is a useful model to characterize the upcoming involvement of the US government in frontier AI labs, based on our following observations:

1. Private US labs are currently the leading organizations pushing the frontier of AI development, and will be among the first to develop AI with transformative capabilities.[6]

Substantial evidence points towards the current and continued dominance of US AI labs such as OpenAI, Anthropic, Google, and Meta in developing frontier AI.[7]

The strongest competitors to private US AI labs are Chinese AI labs, which have strong government support but are limited by Chinese politics,[8] as well as US export controls[9] stymying access to cutting-edge AI chips.

Metrics predicting the gap between US and Chinese AI technological development vary:

Paul Scharre estimates that Chinese AI models are 18 months behind US AI models.[10]

Chinese AI chip development is estimated to be between 5 and 10 years behind US-driven chip development.[11] This lag will become a critical factor if the US effectively enforces export controls on AI chips.[12]

2. Advanced AI will have significant impacts on national security and the balance of global power.[13]

Upcoming Capabilities: Experts forecast that advanced AI will enable a number of capabilities that have significant implications for national security[14], such as:

National Security Outcomes: Transformative capabilities such as these may lead to outcomes that the US would view as critically detrimental for national security[15], such as:

Economic Outcomes: Additionally, advanced AI systems could also result in significant negative outcomes for the US and global economies, including:

Wealth Inequality: An AI-driven economy may drastically increase wealth inequality, amplifying social instability and discontent.[16]

Economic Instability: AI-driven financial trading systems may amplify flash crashes or financial instability,[17] which is a major concern for the US government.

3. A key priority for the US government is to ensure global military and technological superiority.

The US government has for decades operated on the assumption that the existing world order depends on its military and technological dominance, and that it is a top national priority to maintain that order.[18] As a result, it views any challenge to this dominance as an unacceptable threat to its national security.

As AI system capabilities are demonstrated to matter for national security, the US government will likely continue to escalate its involvement in AI technologies to maintain this superiority, even at the cost of exacerbating its AI arms race with China.[19]

A key takeaway from this observation is that the US government will not choose to slow the pace of frontier AI development absent international agreement that includes geopolitical adversaries like China. The US may choose to moderate certain aspects of AI that demonstrate substantial risk with little advantage, but by default it will avoid actions that inhibit American R&D in AI. Today, unilaterally pausing AI[20] development would be in opposition to the US government’s current goals.

Finally, a relevant priority of the US government is maintaining social and economic stability. As has been demonstrated in numerous economic crises,[21] the US is willing to take drastic action to ensure the stability of the US economy, including the takeover and bailout of multi-billion dollar private corporations.[22] Though it seems to us this priority is of less relevance to the policy levers for soft nationalization, there are plausible scenarios where the US may choose to enact these levers to preserve social and economic stability.

4. Hence, the US government will begin to exert greater control and influence over the shape, ownership, and direction of frontier AI labs in national security use-cases.

The US has already demonstrated that it is pursuing greater control over AI chip distribution – in 2022, nearly a year before issuing the Executive Order on AI, the Biden administration began enforcing export controls limiting Chinese access to cutting-edge semiconductors.

We believe that this process of exerting greater control can take a wide range of possible paths, where the US progressively utilizes a wide range of policy levers. These levers will likely be applied to satisfy national security concerns in response to technological and geopolitical developments. Though the total nationalization of frontier AI labs is one possible outcome, we don’t think it is the most likely one.

Why Total Nationalization Is Not The Most Likely Model

In a recent example of AI scenario modeling, Leopold Aschenbrenner’s “Situational Awareness” describes a plausible scenario involving an extremely rapid timeline to superintelligence. He describes superintelligence’s likely impact on the geopolitical landscape, concluding with the prediction that a “Manhattan Project for AI” will soon be organized by the US government. He argues that this project will consolidate and nationalize all existing frontier AI research due to the national security implications of superintelligence.

We argue that “The Project”[23] and other similar descriptions of nationalization[24] represent only a narrow subset of possible scenarios modeling US involvement, and are not the most likely scenarios.

Total nationalization is not the most likely scenario for a few reasons:

The American model of innovation is built on free-market private competition, and is arguably one of the reasons the US is leading the AI race today.[25]

Since the 1980s, the United States has seen a significant trend towards increased private sector involvement in various industries,[26] driven by factors such as:

A perception among policymakers that market-based solutions can be more efficient than direct government management.

The belief that private sector competition could foster greater innovation and cost reduction.

US policymakers generally endorse free-market competition on innovation and are reluctant to regulate the AI industry.[27] It would require a massive ideological shift for the US government to nationalize an industry that has critical consequences for the US economy.

Organizations in control of frontier AI labs such as Microsoft, Google, and Meta are among the largest corporations in the world today, with market capitalizations over $1 trillion each.[28]

Practically, total nationalization of these corporations is financially and logistically implausible.

Nationalization of only their frontier AI labs is more plausible. However, these corporations are developing their long-term strategies around frontier AI models, and their frontier AI labs are tightly integrated with the rest of their business.

Any form of nationalization would undermine their long-term business models, sink shareholder value, and upend the global tech industry. It would result in massive legal and political resistance.

The leading chip manufacturer Nvidia, which is a primary driver of frontier AI research by controlling 80% of the AI chip market,[29] has a current market capitalization of $3 trillion.[30]

Many total nationalization scenarios would involve government ownership of Nvidia. However, it’s challenging to imagine a legally and financially feasible pathway for the US government to gain full ownership of a public corporation of this size.

Despite these arguments, it’s still possible that the US government may eventually choose total nationalization given the right set of circumstances. We don’t believe that it is possible yet to confidently predict a future set of outcomes, and we think that over-indexing on any single scenario is a mistake.

Rather than committing to a specific model of the future, we believe the most effective analysis today will consider a wide range of scenarios that describe actions the US government will take in response to global circumstances. By enumerating many of the plausible scenarios regarding soft nationalization, we believe AI governance researchers can better ground our research in likely futures and design better interventions.

Upcoming Projects on Soft Nationalization

We are conducting scenario modeling and governance research to describe how upcoming national security concerns will lead to greater US governmental control over frontier AI development. We expect this research will ground AI governance discourse in a realistic understanding of plausible scenarios involving US control of frontier AI.

To execute, we’re spearheading a collaborative research project with the following three parts:

If you’re interested in collaborating or receiving updates on any of this work, shoot us a message at research@convergenceanalysis.org.

1. Describing Soft Nationalization

In the upcoming quarter, we will publish a report exploring the following:

2. Conducting Further Scenario Research

The results of our soft nationalization report will inform further scenario modeling that builds on our research, on questions such as:

What forms of international cooperation are viable when national security is a primary concern of AI governance? Will we see a NATO-like alliance[31] of Western countries led by the US?

3. Aligning AI Safety with Soft Nationalization

A clear set of scenarios implied by soft nationalization will enable further research into how these outcomes can be shaped to achieve the broader goals of AI safety organizations, such as:

Part 2: Policy Levers for Soft Nationalization

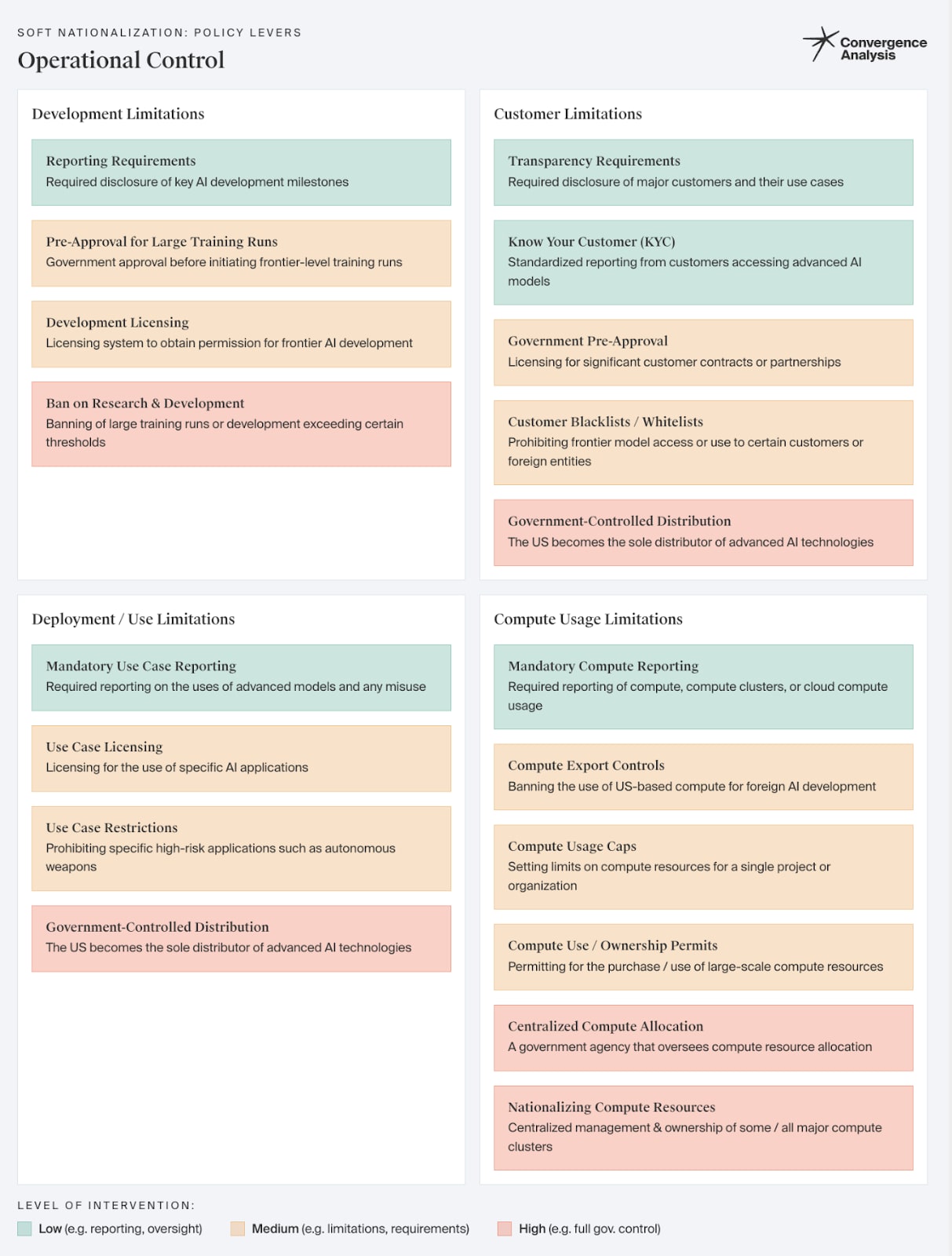

We describe thirteen preliminary sets of policy levers the US government might pull to exert control over frontier AI. Each set of levers offers a series of options that afford the government increasingly more influence, on a spectrum ranging from standard regulations to more comprehensive government control.

We envision that certain policy levers will be combined and deployed by the US government given a particular societal environment. That is, we believe that given a certain scenario, the US will choose a strategy involving policy levers that exert enough control to sufficiently protect its national security, and that is also legally, politically, and practically feasible.

This list of policy levers is an active work in progress and will be explored in detail in a report we’ll publish in the upcoming quarter, considering aspects such as:

Authors' Note: We do not advocate for or recommend the application of any of these policy levers. This section is informative in nature – it is intended solely to describe the space of plausible policy levers that may be used. In the future, we may recommend certain levers after conducting further research.

Management & Governance Mechanisms

Government Oversight

The US may seek to implement better tools to monitor the day-to-day operations of key AI labs, including policy levers such as:

Government Management

The US may seek to have direct control over the day-to-day operations of key AI labs, including policy levers such as:

Government Projects & Integrations

The US may seek to integrate the R&D and output of AI labs with its national security goals. This could look like any of these policy levers (in order of increasing interventionism):

Operational Control

Development Limitations

The US may decide to set limitations on large-scale AI R&D for frontier AI labs:

Customer Limitations

The US may require that AI labs report, vet, or restrict their customers to prevent usage of frontier AI by adversaries:

Deployment / Use Limitations

The US may limit the availability of specific use cases of frontier AI models:

Compute Usage Limitations

The US may decide to influence AI development via control over the allocation and availability of compute resources:

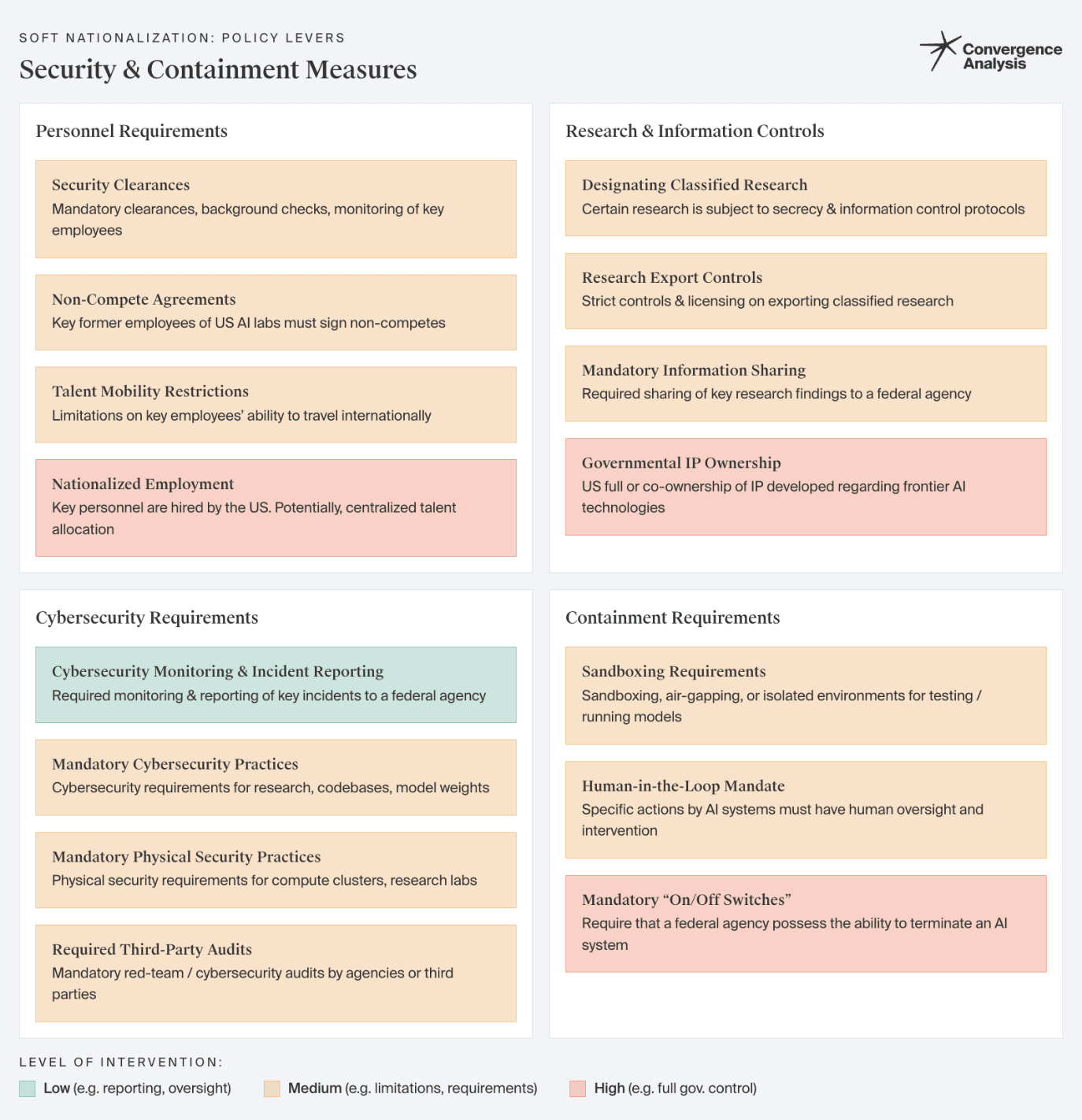

Security & Containment Measures

Personnel Requirements

The US may seek to control key personnel within AI labs, by limiting their ability to disseminate sensitive information, to work for geopolitical rivals, or in extreme cases by requiring that they work for the US government:

Research & Information Controls

The US may seek to control the classification or distribution of AI research developed by private AI labs:

Cybersecurity Requirements

The US may require specific digital or physical cybersecurity practices for highly capable AI models to protect against malicious exploitation:

Mandatory Cybersecurity Practices: The US may require that AI labs comply with specified cybersecurity practices to secure AI research, codebases, or model weights (see: DFARS Clause 252.204-7012)[32].

Containment Requirements

The US may require certain practices that allow AI labs or federal agencies to protect, contain, or restrict deployed AI models:

Financial Ownership & Control

Shareholding Scenarios

The US government may consider acquiring stakes of private AI labs, achieving control through market-based mechanisms.

Profit Regulation and Unique Tax Treatment

It’s plausible that leading AI labs may eventually control a sizable percentage of the revenue and valuation of private companies in the US. If this were the case, the US may seek to treat these leading AI labs uniquely from traditional corporations in pursuit of more equitable or economically beneficial outcomes, using levers such as:

Part 3: Scenarios Illustrating Soft Nationalization

In this section, we describe a few preliminary scenarios in which the US exerts control over frontier AI development in response to national security concerns. For each scenario, we illustrate broad strokes of the circumstances that may occur. Then, we describe a plausible package of “soft nationalization” policy levers that the US would be likely to deploy as a comprehensive strategic response.

We present three scenarios with three different “levels” of relative governmental control: low, medium, and high. We will be exploring scenarios such as these in more detail via a report we’ll publish in the upcoming quarter.

It’s important to note that these are hypothetical, illustrative scenarios to demonstrate that our model of soft nationalization may be an effective tool for describing US national security concerns. We do not propose that any of these scenarios are likely to happen, nor do we advocate for any of the suggested policy levers. We don't necessarily believe securitization is the ideal outcome, and we believe there are still possible scenarios involving international cooperation.

US “Brain Drain”

Governmental Control: Low

In early 2027, China and Saudi Arabia launch motivated, well-funded governmental initiatives to compete in AI technological superiority. In particular, one key branch of their initiative focuses on financial compensation - they offer hugely lucrative compensation packages for top AI researchers, with yearly salaries in the tens of millions, paid upfront. US AI labs are unable to compete with these offers, as most of the value of their compensation packages is in equity and illiquid. The US government does not offer similarly competitive packages.

These initiatives create a wave of talent migration, with hundreds of top AI researchers leaving for well-paid opportunities in countries the US considers to be geopolitical rivals. The exodus raises alarm in both Silicon Valley and Washington about maintaining US technological leadership in AI. In particular, the US government is concerned that top researchers are moving from capitalist, private AI applications to state-organized AI initiatives, which may conflict with US geopolitical goals.

US Governmental Response:

Escalation of an AI Arms Race

Governmental Control: Medium

In late 2029, US intelligence agencies obtain credible information that China has made significant breakthroughs in AI-enabled autonomous weapons systems. Satellite imagery and intercepted communications suggest that China is developing swarms of AI-controlled drones capable of coordinated combat operations without human intervention. These developments threaten to upset the global military balance, allowing the Chinese military to break through missile & air defense systems and undermining US & Taiwanese defensive capabilities. The news leaks to the press, causing public alarm and intensifying the ongoing debate about lethal autonomous weapons. The US is pressured to respond, fearing that China's advancement could embolden it to take more aggressive actions against Taiwan.

These developments occurred because China has been pursuing a tight-knit integration of its AI research labs and the Chinese defense industry, pouring tens of billions into military AI technologies. In comparison, the US government has been relatively hands-off on AI, preferring to fund exploratory research initiatives with AI labs rather than directly overseeing the development of cutting-edge AI technologies. As a result, the US is now behind in developing similar lethal autonomous weapons.

The US government recognizes that its approach to AI technologies has left it flat-footed relative to its geopolitical rivals, risking its position as the leading superpower. It commits to integrating frontier AI labs and technologies more directly into governmental initiatives and the defense industry.

US Governmental Response:

Nationalization of Bioweapon Technologies

Governmental Control: High

In 2035, significant and disturbing developments occur at a new biotech startup. A novel AI virus modeling technique for vaccine development has the side effect of allowing lab researchers to easily develop bioweapons of unprecedented lethality and specificity. The AI system, trained on vast datasets of genetic and epidemiological information, can design viruses tailored to target specific ethnic groups or even individuals based on their genetic makeup. These viruses are relatively feasible to produce, and knowledge of their design would permit any of 100+ research labs worldwide to easily create such a pathogen.

The US government determines that the capabilities of this biotech startup are too risky to permit for a private corporation. Furthermore, it believes that any further research into this novel virus modeling technique is too dangerous to permit, as it could easily lead to targeted pandemics. It moves to nationalize this biotech startup fully to prevent any further consequences, and passes legislation prohibiting private research and development into similar virus modeling techniques.

US Governmental Response:

The US government performs what we might consider a Full Acquisition of the specific biotech startup described above.

Outside of this biotech startup, the US government moves quickly to create stringent national (and international) restrictions on research regarding this set of AI virus modeling techniques:

These two sets of drastic actions significantly deter US private companies from undertaking any further R&D in this area of virus and pathogen modeling. The full nationalization of a private company signals that the US is likely to take similar actions in the future.

Conclusion

National security concerns suggest the US will exert more control over frontier AI development. However, predictions of a “Manhattan Project for AI” are reductive and misleading. The US isn’t likely to “nationalize” frontier AI development, at least in the sense of all at once bringing it under full public ownership and control. Doing so would be legally, politically, and practically challenging, and it could ultimately undermine the US’ technological lead in AI.

Instead, we propose that the US government’s control over frontier AI is likely best modeled by our framework of “soft nationalization.” According to this framework, the US will exert progressively greater power over frontier AI development as national security concerns arise by employing several different policy levers. The options described by these levers constitute a spectrum from “soft touch” regulation to de facto government ownership.

This model assumes that the US will act to preserve its national security. However, exactly which combinations of options across policy levers the US will choose depends on the contingencies of global and domestic technopolitics, as well as on how it balances goals other than national security.

We hope our model will enable the evaluation of AI safety agendas across realistic scenarios of US involvement, and encourage further related research. In upcoming work, we intend to more rigorously describe the policy levers the US will choose to exercise such control, and the scenarios that will cause the US to deploy them.

US Semiconductor Export Controls

Let's nationalize AI. Seriously. - POLITICO

IV. The Project - Situational Awareness by Leopold Aschenbrenner

Will the $1 trillion of generative AI investment pay off? | Goldman Sachs

Nationalization - Wikipedia

The transformative potential of artificial intelligence - ScienceDirect

AI Index Report 2024 – Artificial Intelligence Index

China Puts Power of State Behind AI—and Risks Strangling It - WSJ

Newly Updated US Export Rules to China Target AI Chips | Altium

AI: How far is China behind the West? – DW – 07/24/2023

China is falling behind in race to become AI superpower | Semafor

Newly Updated US Export Rules to China Target AI Chips | Altium

How Artificial Intelligence Is Transforming National Security | U.S. GAO

An Overview of Catastrophic AI Risks

Ibid.

AI's economic peril to democracy | Brookings

Artificial intelligence and financial crises.

For example: Bush’s The National Security Strategy of the United States of America. Or: Biden-Harris Administration's National Security Strategy

The Battle for Technological Supremacy: The US–China Tech War. Or: Global Strategy 2023: Winning the tech race with China.

We need to Pause AI, Pause Giant AI Experiments: An Open Letter

2007–2008 financial crisis - Wikipedia

Emergency Economic Stabilization Act of 2008 - Wikipedia

IV. The Project. Note that Leopold does allude to implementations that do not involve total nationalization, such as defense contracting or voluntary agreements. However, the majority of his argument is built around the idea of a fully centralized government-led research project.

AI and Geopolitics: How might AI affect the rise and fall of nations? | RAND

Competing Values Will Shape US-China AI Race – Third Way

Does Privatization Serve the Public Interest?

SAFE Innovation Framework

Companies ranked by Market Cap - CompaniesMarketCap.com

What you need to know about Nvidia and the AI chip arms race - Marketplace

Companies ranked by Market Cap - CompaniesMarketCap.com

See: Chips for Peace: How the U.S. and Its Allies Can Lead on Safe and Beneficial AI | Lawfare

A Typology of China's Intellectual Property Theft Techniques — 2430 Group