Something like this sounds really useful just for my personal use. Is someone finetuning (or already finetuned) a system to be generally numerate and good at fermi estimates? Just my bad prompting skills on gpt-4 gave pretty mediocre results for that purpose.

Is someone finetuning (or already finetuned) a system to be generally numerate and good at fermi estimates?

We didn't try to fine-tune on general Fermi estimate tasks, but I imagine the results would be positive. For our specific problem of forecasting with external reasoning, fine-tuning helps a lot! We have an ablation study in Section 7 of the paper showing that if you just use the non-fine-tuned chat model, holding everything else fixed, it's significantly worse.

We also didn't explore using base models that are not instruction-tuned or RLHF'ed. That could be interesting to look at.

Congrats on the excellent work! I've been following the LLM forecasting space for a while and your results are really pushing the frontier.

Some questions and comments:

Great questions, and thanks for the helpful comments!

underconfidence issues

We have not tried explicit extremizing. But in the study where we average our system's prediction with the community crowd's, the combination scores better than either one alone (under Brier scores). This essentially does the extremizing in those <10% cases.

However, humans on INFER and Metaculus are scored according to the integral of their Brier score over time, i.e. their score gets weighted by how long a given forecast is up

We were not aware of this! We always take an unweighted average across the retrieval dates when evaluating our system. If we put more weight on the later retrieval dates, the gap between our system and the human crowd should be a bit smaller, for the reason you said.
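To make the two averaging schemes concrete, here is a minimal sketch comparing an unweighted mean of Brier scores over forecast dates with a duration-weighted one. The function names and the example dates and probabilities are hypothetical, and the weighting rule (each forecast counts for the number of days until the next forecast or until resolution) is an assumption about how time-integrated scoring works, not the platforms' exact formula.

```python
from datetime import date

def brier(p: float, outcome: int) -> float:
    """Brier score of a single binary forecast (lower is better)."""
    return (p - outcome) ** 2

def unweighted_brier(forecasts, outcome):
    """Plain mean over forecasts; `forecasts` is a list of (date, probability)."""
    return sum(brier(p, outcome) for _, p in forecasts) / len(forecasts)

def time_weighted_brier(forecasts, outcome, resolve_date):
    """Weight each forecast by the number of days it stays up (assumed rule)."""
    forecasts = sorted(forecasts)
    # Each forecast ends when the next one is made, or at resolution.
    ends = [d for d, _ in forecasts[1:]] + [resolve_date]
    total_days = (resolve_date - forecasts[0][0]).days
    weighted = sum(
        brier(p, outcome) * (end - start).days
        for (start, p), end in zip(forecasts, ends)
    )
    return weighted / total_days

# Hypothetical question that resolves YES (outcome = 1).
forecasts = [
    (date(2024, 1, 1), 0.5),
    (date(2024, 1, 11), 0.6),
    (date(2024, 1, 21), 0.9),
]
resolve = date(2024, 3, 1)

print(unweighted_brier(forecasts, 1))
print(time_weighted_brier(forecasts, 1, resolve))
```

In this made-up example the last, most accurate forecast stays up the longest, so the time-weighted score comes out lower than the unweighted one.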

- Relatedly, have you tried other retrieval schedules and if so did they affect the results

No, we have not tried. One alternative is to sample k random or uniformly spaced intervals within [open, resolve]. Unfortunately, this is not super kosher as this leaks the resolution date, which, as we argued in the paper, correlates with the label.
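A minimal sketch of the uniformly spaced alternative shows where the leak comes from. The function name `uniform_retrieval_dates` and its arguments are hypothetical, and this is not the schedule used in the paper.

```python
from datetime import date

def uniform_retrieval_dates(open_date: date, resolve_date: date, k: int):
    """Return k evenly spaced dates in [open_date, resolve_date).

    Note that `resolve_date` is a required input: the schedule cannot be
    built without knowing when the question resolves, which is the leak.
    """
    span = resolve_date - open_date
    return [open_date + span * i / k for i in range(k)]

# Hypothetical question open for 30 days.
dates = uniform_retrieval_dates(date(2024, 1, 1), date(2024, 1, 31), k=5)
print(dates)
```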

figure 4c

This is on validation set. Notice that the figure caption begins with "Figure 4: System strengths. Evaluating on the validation set, we note"

log score

We will update the paper soon to include the log score.

standard error in time series

See here for some alternatives in time series modeling.

I don't know what the perfect choice is in judgemental forecasting, though. I am not sure if it has been studied at all (probably kind of an open question). Generally, the keywords to Google here are "autocorrelation standard error" and "standard error in time series".

Interesting work, congrats on achieving human-ish performance!

I expect your model would look relatively better under other proper scoring rules. For example, logarithmic scoring would punish the human crowd for giving <1% probabilities to events that even sometimes happen. Under the Brier score, the worst possible score is either a 1 or a 2 depending on how it's formulated (from skimming your paper, it looks like 1 to me). Under a logarithmic score, such forecasts would be severely punished. I don't think this is something you should lead with, since Brier scores are the more common scoring rule in the literature, but it seems like an easy win and would highlight the possible benefits of the model's relatively conservative forecasting.
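A small sketch of the contrast between the two rules, assuming the Brier score is formulated as the squared error on the realized outcome (worst case 1) and the log score as the negative log-likelihood in nats. The probabilities below are illustrative only.

```python
import math

def brier(p: float, outcome: int) -> float:
    """Squared error between the forecast and the 0/1 outcome; bounded by 1."""
    return (p - outcome) ** 2

def log_score(p: float, outcome: int) -> float:
    """Negative log-likelihood of the realized outcome; unbounded above."""
    return -math.log(p if outcome == 1 else 1 - p)

# Forecast probabilities for an event that then happens (outcome = 1).
for p in (0.30, 0.05, 0.01, 0.001):
    print(f"p={p:<6} brier={brier(p, 1):.3f} log={log_score(p, 1):.3f}")
```

As the forecast for an event that occurs shrinks toward 0, the Brier penalty saturates just below 1, while the log penalty keeps growing without bound.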

I'm curious how a more sophisticated human-machine hybrid would perform with these much stronger machine models; I expect quite well. I did some research with human-machine hybrids before and found modest improvements from incorporating machine forecasts (e.g. chapter 5, section 5.2.4 of my dissertation Metacognitively Wise Crowds & the sections "Using machine models for scalable forecasting" and "Aggregate performance" in Hybrid forecasting of geopolitical events), but the machine models we were using were very weak on their own (depending on how I analyzed things, they were outperformed by guessing). In "System Complements the Crowd", you aggregate a linear average of the full aggregate of the crowd and the machine model, but we found that treating the machine as an exceptionally skilled forecaster resulted in the best performance of the overall system. As a result of this method, the machine forecast would be down-weighted in the aggregate as more humans forecasted on the question, which we found helped performance. You would need access to the individuated data of the forecasting platform to do this, however.
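One way to picture the down-weighting effect is a weighted mean in which the machine counts as a fixed number of human forecasters. This is only a sketch under that assumption: the function names and the weight of 3.0 are hypothetical, and it is not the aggregation method from the cited work.

```python
def hybrid_aggregate(human_forecasts, machine_forecast, machine_weight=3.0):
    """Weighted mean where the machine counts as `machine_weight` humans."""
    total = sum(human_forecasts) + machine_weight * machine_forecast
    return total / (len(human_forecasts) + machine_weight)

def machine_share(n_humans: int, machine_weight: float = 3.0) -> float:
    """Fraction of the aggregate's total weight held by the machine."""
    return machine_weight / (n_humans + machine_weight)

# The machine's influence shrinks as more humans forecast on the question.
for n in (3, 12, 27):
    print(n, machine_share(n))

print(hybrid_aggregate([0.2, 0.2, 0.2], machine_forecast=0.8))
```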

If you're looking for additional useful plots, you could look at Human Forecast (probability) vs AI Forecast (probability) on a question-by-question basis and get a sense of how the humans and AI agree and disagree. For example, is the better performance of the LM forecasts due to disagreeing about direction, or mostly due to marginally better calibration? This would be harder to plot for multinomial questions, although there you could plot the probability assigned to the correct response option as long as the question isn't ordinal.

I see that you only answered Binary questions and that you split multinomial questions. How did you do this? I suspect you did this by rephrasing questions of the form "What will $person do on $date, A, B, C, D, E, or F?" into "Will $person do A on $date?", "Will $person do B on $date?", and so on. This will result in a lot of very low probability forecasts, since it's likely that only A or B occurs, especially closer to the resolution date. Also, does your system obey the Law of total probability (i.e. does it assign exactly 100% probability to the union of A, B, C, D, E, and F)? This might be a way to improve performance of the system and coax your model into giving extreme forecasts that are grounded in reality (simply normalizing across the different splits of the multinomial question here would probably work pretty well).
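The normalization suggested here can be sketched in a few lines, assuming the options are mutually exclusive and exhaustive so that their probabilities should sum to 1. The option labels and raw forecasts are made up for illustration.

```python
def normalize(forecasts: dict) -> dict:
    """Rescale per-option probabilities so they sum to 1."""
    total = sum(forecasts.values())
    if total <= 0:
        raise ValueError("at least one option needs a positive probability")
    return {option: p / total for option, p in forecasts.items()}

# Hypothetical independent binary forecasts for one multinomial question.
raw = {"A": 0.5, "B": 0.4, "C": 0.2, "D": 0.1}  # sums to 1.2
normalized = normalize(raw)
print(normalized)
```

Simple rescaling keeps the ratios between options unchanged; it only removes the excess (or missing) total probability mass.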

Why do human and LM forecasts differ? You plot calibration, and the human and LM forecasts are both well calibrated for the most part, but with your focus on system performance I'm left wondering what caused the human and LM forecasts to differ in accuracy. You claim that it's because of a lack of extremization on the part of the LM forecast (i.e. that it gives too many 30-70% forecasts, while humans give more extreme forecasts), but is that an issue of calibration? You seemed to say that it isn't, but then the problem isn't that the model is outputting the wrong forecast given what it knows (i.e. that it "hedge[s] predictions due to its safety training"), but rather that it is giving its best account of the probability given what it knows. The problem with e.g. the McCarthy question (example output #1) seems to me that the system does not understand the passage of time, and so it has no sense that because it has information from November 30th and it's being asked a question about what happens on November 30th, it can answer with confidence. This is a failure in reasoning, not calibration, IMO. It's possible I'm misunderstanding what cutoff is being used for example output #1.

Miscellaneous question: In equation 1, is k 0-indexed or 1-indexed?

We will be updating the paper with log scores.

I think human forecasters collaborating with their AI counterparts (in an assistance / debate setup) is a super interesting future direction. I imagine the strongest possible system we can build today will be of this sort. This related work explored this direction with some positive results.

is the better performance of the LM forecasts due to disagreeing about direction, or mostly due to marginally better calibration?

Definitely both. But more of it comes from the fact that the models don't like to output extreme values (like <5%), even when the evidence supports them. This doesn't necessarily hurt calibration, though, since calibration only measures the error within each bin of predicted probabilities.
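A toy sketch of why hedging can leave calibration intact while still costing Brier score. The binned RMS calibration error below uses 10 equal-width bins weighted by bin size, which is an assumption and may differ from the paper's exact definition. The data are synthetic.

```python
import math
from collections import defaultdict

def rms_calibration_error(probs, outcomes, n_bins=10):
    """Root-mean-square gap between mean forecast and outcome frequency per bin."""
    bins = defaultdict(list)
    for p, y in zip(probs, outcomes):
        idx = min(int(p * n_bins), n_bins - 1)  # p = 1.0 goes in the last bin
        bins[idx].append((p, y))
    squared = 0.0
    for items in bins.values():
        mean_p = sum(p for p, _ in items) / len(items)
        freq = sum(y for _, y in items) / len(items)
        squared += len(items) / len(probs) * (mean_p - freq) ** 2
    return math.sqrt(squared)

def mean_brier(probs, outcomes):
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(probs)

# Synthetic data: half the questions resolve YES.
outcomes = [1, 0] * 50
hedged = [0.5] * 100                            # always says 50%
sharp = [0.9 if y else 0.1 for y in outcomes]   # directionally right, confident

for name, probs in (("hedged", hedged), ("sharp", sharp)):
    print(name, rms_calibration_error(probs, outcomes), mean_brier(probs, outcomes))
```

The hedged forecaster has zero calibration error yet a Brier score of 0.25, while the sharp forecaster has a nonzero calibration error and a far better Brier score.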

This will result in a lot of very low probability forecasts, since it's likely that only A or B occurs, especially closer to the resolution date.

Yes, so we didn't do all of the multiple choice questions, only those that are already split into binary questions by the platforms. For example, if you query the Metaculus API, some multiple choice questions are broken down into binary subquestions (each with its own community predictions etc.). Our dataset is not dominated by such multiple-choice-turned-binary questions.

Does your system obey the Law of total probability?

No, and we didn't try very hard to improve this. Similarly, if you ask the model the same binary question phrased in reverse, the two answers do not, in general, sum to 1. I think future systems should try to overcome this issue by enforcing the constraints in some way.

I'm left wondering what caused the human and LM forecasts to differ in accuracy.

By accuracy, we mean 0-1 error: you round the probabilistic forecast to 0 or 1, whichever is nearer, and measure the 0-1 loss. This means that as long as you are directionally correct, you will have good accuracy. (This is not a standard metric, but we chose to report it mostly to compare with prior work.) So this kind of hedging behavior doesn't hurt accuracy, in general.
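A short sketch of this metric, with made-up forecasts. Treating a forecast of exactly 0.5 as a YES prediction is an assumption for tie-breaking, not something specified above.

```python
def accuracy(probs, outcomes):
    """Fraction of forecasts that land on the correct side of 0.5."""
    correct = sum((p >= 0.5) == bool(y) for p, y in zip(probs, outcomes))
    return correct / len(probs)

def mean_brier(probs, outcomes):
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(probs)

outcomes = [1, 0, 1, 0]
hedged = [0.6, 0.4, 0.7, 0.3]        # directionally right, but cautious
confident = [0.95, 0.05, 0.99, 0.01]  # directionally right and extreme

for name, probs in (("hedged", hedged), ("confident", confident)):
    print(name, accuracy(probs, outcomes), mean_brier(probs, outcomes))
```

Both forecasters get perfect accuracy here, while the Brier score separates them.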

The McCarthy example [...] This is a failure in reasoning, not calibration, IMO.

This is a good point! We'll add a bit more on how to interpret these qualitative examples. To be fair, these are hand-picked and I would caution against drawing strong conclusions from them.

In equation 1, is k 0-indexed or 1-indexed?

1-indexed.

TL;DR: We present a retrieval-augmented LM system that nears the human crowd performance on judgemental forecasting.

Paper: https://arxiv.org/abs/2402.18563 (Danny Halawi*, Fred Zhang*, Chen Yueh-Han*, and Jacob Steinhardt)

Twitter thread: https://twitter.com/JacobSteinhardt/status/1763243868353622089

Abstract

Forecasting future events is important for policy and decision-making. In this work, we study whether language models (LMs) can forecast at the level of competitive human forecasters. Towards this goal, we develop a retrieval-augmented LM system designed to automatically search for relevant information, generate forecasts, and aggregate predictions. To facilitate our study, we collect a large dataset of questions from competitive forecasting platforms. Under a test set published after the knowledge cut-offs of our LMs, we evaluate the end-to-end performance of our system against the aggregates of human forecasts. On average, the system nears the crowd aggregate of competitive forecasters and in some settings, surpasses it. Our work suggests that using LMs to forecast the future could provide accurate predictions at scale and help inform institutional decision-making.

For safety motivations on automated forecasting, see Unsolved Problems in ML Safety (2021) for discussions. In the following, we summarize our main research findings.

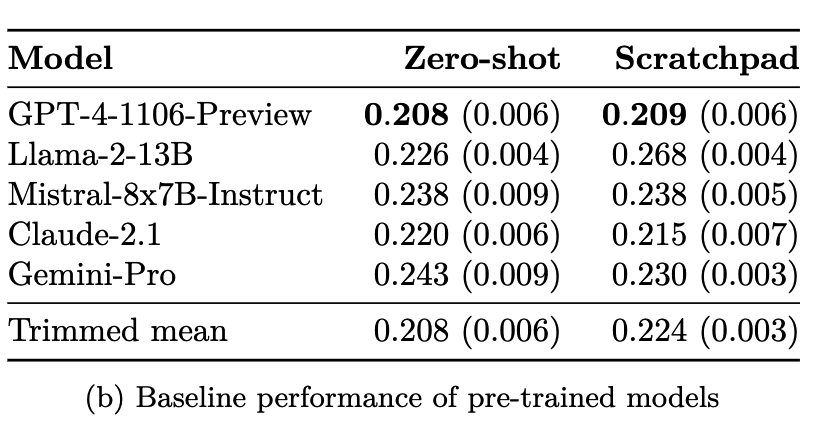

Current LMs are not naturally good at forecasting

First, we find that LMs are not naturally good at forecasting when evaluated zero-shot (with no fine-tuning and no retrieval). On 914 test questions that were opened after June 1, 2023 (post the knowledge cut-offs of these models), most LMs get near chance performance.

Here, all questions are binary, so random guessing (always predicting 0.5) gives a Brier score of 0.25. Averaging across all community predictions over time, the human crowd gets 0.149. We present the score of the best model of each series. Only the GPT-4 and Claude-2 series beat random guessing (by a margin of >0.03), though they are still very far from the human aggregates.
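The 0.25 baseline can be checked directly. The sketch below assumes "random guessing" means always forecasting 0.5; `expected_brier` is a hypothetical helper name.

```python
def expected_brier(forecast: float, base_rate: float) -> float:
    """Expected Brier score of a constant forecast when a fraction
    `base_rate` of the questions resolve YES."""
    return base_rate * (forecast - 1) ** 2 + (1 - base_rate) * forecast ** 2

# A constant 0.5 forecast scores 0.25 no matter how the questions resolve.
for base_rate in (0.1, 0.5, 0.9):
    print(base_rate, expected_brier(0.5, base_rate))
```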

System building

Towards better automated forecasting, we build and optimize a retrieval-augmented LM pipeline for this task.

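It functions in 3 steps, mimicking the traditional forecasting procedure:

1. Retrieval: the system automatically searches for information relevant to the question.
2. Reasoning: the LM reasons over the retrieved information and generates forecasts.
3. Aggregation: the individual predictions are aggregated into a final forecast.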

We optimize the system’s hyperparameters and apply a self-supervised approach to fine-tune a base GPT-4 to obtain the fine-tuned LM. See Section 5 of the full paper for details.

Data and models

We use GPT-4-1106 and GPT-3.5 in our system, whose knowledge cut-offs are in April 2023 and September 2021, respectively.

To optimize and evaluate the system, we collect a dataset of forecasting questions from 5 competitive forecasting platforms, including Metaculus, Good Judgment Open, INFER, Polymarket, and Manifold.

Evaluation results

For each question, we perform information retrieval at up to 5 different dates during the question’s time span and evaluate our system against community aggregates at each date. (This simulates “forecasting” on past, resolved questions with pre-trained models, a methodology first introduced by Zou et al., 2022.)

Unconditional setting

Averaging over a test set of 914 questions and all retrieval points, our system gets an average Brier score of 0.179 vs. crowd 0.149 and an accuracy of 71.5% vs the crowd 77.0%. This significantly improves upon the baseline evaluation we had earlier.

Selective setting

Through a study on the validation set, we find that our system performs best relative to the crowd along several axes, which hold on the test set:

In both 1 and 4, our system actually beats the human crowd.

More interestingly, by taking a (weighted) average of our system and the crowd’s prediction, we always get better scores than both of them, even unconditionally! Conceptually, this shows that our system can be used to complement the human forecasters.
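A toy sketch of how a blend can beat both of its inputs. The forecasts and the equal weighting are made up for illustration and are not the weights or data from the paper. Because the Brier score is convex in the forecast, a blend never scores worse than the same weighted average of the two individual scores. It beats both inputs when their errors fall on different questions, as in this example.

```python
def mean_brier(probs, outcomes):
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(probs)

def blend(system, crowd, crowd_weight=0.5):
    """Per-question weighted average of the two forecasts."""
    return [crowd_weight * c + (1 - crowd_weight) * s
            for s, c in zip(system, crowd)]

# Hypothetical forecasts: each source is weak on a different pair of questions.
outcomes = [1, 0, 1, 0]
crowd = [0.9, 0.4, 0.6, 0.1]
system = [0.6, 0.1, 0.9, 0.4]
combined = blend(system, crowd)

print(mean_brier(crowd, outcomes))
print(mean_brier(system, outcomes))
print(mean_brier(combined, outcomes))
```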

Calibration

Finally, we compare our system's calibration against the human crowd. On the test set (figure (c) below), our system is nearly as well calibrated, with RMS calibration error .042 (human crowd: .038).

Interestingly, this is not the case in the baseline evaluation, where the base models are not well calibrated under the zero-shot setting (figure (a) below). Our system, through fine-tuning and ensembling, improves the calibration of the base models, without undergoing specific training for calibration.

Future work

Our results suggest that in the near future, LM-based systems may be able to generate accurate forecasts at the level of competitive human forecasters.

In Section 7 of the paper, we discuss some directions that we find promising towards this goal, including iteratively applying our fine-tuning method, gathering more forecasting data from the wild for better training, and more.