I think your discussion (and Epoch's discussion) of the CES model is confused as you aren't taking into account the possibility that we're already bottlenecking on compute or labor. That is, I think you're making some assumption about the current marginal returns which is non-obvious and, more strongly, would be an astonishing coincidence given that compute is scaling much faster than labor.

In particular, consider a hypothetical alternative world where they have the same amount of compute, but there is only 1 person (Bob) working on AI and this 1 person is as capable as the median AI company employee and also thinks 10x slower. In this alternative world they could also say "Aha, you see because ρ = -0.4, even if we had billions of superintelligences running billions of times faster than Bob, AI progress would only go up to around 4x faster!"

Of course, this view is absurd because we're clearly operating >>4x faster than Bob.

So, you need to make some assumptions about the initial conditions.

This perspective implies an (IMO) even more damning issue with this exact modeling: the CES model is symmetric, so it also implies that additional compute (without labor) can only speed you up so much. I think the argument I'm about to explain strongly supports a lower value of -ρ (i.e. ρ closer to zero) or some different functional form.

Consider another hypothetical world where the only compute they have is some guy with an abacus, but AI companies have the same employees they do now. In this alternative world, you could also have just as easily said "Aha, you see because ρ = -0.4, even if we had GPUs that could do 1e15 FLOP/s (far faster than our current rate of 1e-1 fp8 FLOP/s), AI progress would only go around 4x faster!"

Further, the availability of compute for AI experiments has varied by around 8 orders of magnitude over the last 13 years! (AlexNet was 13 years ago.) The equivalent (parallel) human labor focused on frontier AI R&D has varied by more like 3 or maybe 4 orders of magnitude. (And the effective quality-adjusted serial labor, taking into account parallelization penalties etc, has varied by less than this, maybe by more like 2 orders of magnitude!)

Ok, but can we recover low-substitution CES? I think the only maybe consistent recovery (which doesn't depend on insane coincidences about compute vs labor returns) would imply that compute was the bottleneck (back in AlexNet days) such that scaling up labor at the time wouldn't yield ~any progress. Hmm, this doesn't seem quite right.

Further, insofar as you think scaling up just labor when we had 100x less compute (3-4 years ago) would have still been able to yield some serious returns (seems obviously true to me...), then a low-substitution CES model would naively imply we have a sort of compute overhang where we can speed things up by >100x using more labor (after all, we've now added a bunch of compute, so time for more labor).

Minimally, the CES view predicts that AI companies should be spending less and less of their budget on compute as GPUs are getting cheaper. (Which seems very false.)
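To make the budget-share prediction concrete, here is a minimal sketch assuming the standard two-input CES form Y = (α·K^ρ + (1−α)·L^ρ)^(1/ρ) and a cost-minimising firm facing a compute price r and a wage w. The function name and the price ratios are hypothetical, chosen only for illustration; nothing here is calibrated to real lab budgets.

```python
def compute_cost_share(price_ratio, rho, alpha=0.5):
    """Cost-minimising share of spending that goes to compute under CES.

    price_ratio is r / w (price of compute relative to the wage).
    From the first-order condition MP_K / MP_L = r / w one gets
    (r*K) / (w*L) = (alpha / (1 - alpha))**sigma * (r / w)**(1 - sigma),
    where sigma = 1 / (1 - rho) is the elasticity of substitution.
    """
    if price_ratio <= 0 or rho >= 1 or not 0 < alpha < 1:
        raise ValueError("need price_ratio > 0, rho < 1 and 0 < alpha < 1")
    sigma = 1.0 / (1.0 - rho)
    spend_ratio = (alpha / (1.0 - alpha)) ** sigma * price_ratio ** (1.0 - sigma)
    return spend_ratio / (1.0 + spend_ratio)


# Hypothetical price paths: compute gets 10x, 100x, 1000x cheaper relative to labour.
for rho in (-0.4, 0.5):
    shares = [compute_cost_share(p, rho) for p in (1.0, 1e-1, 1e-2, 1e-3)]
    print(f"rho={rho}: " + ", ".join(f"{s:.2f}" for s in shares))
```

With ρ < 0 the compute share falls as compute gets cheaper, and with ρ > 0 it rises, which is the sense in which a roughly stable or rising compute share sits awkwardly with low substitution in this simple model.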

Ok, but can we recover a view sort of like the low-substitution CES view where speeding up our current labor force by an arbitrary amount (also implying we could only use our best employees etc) would only yield ~10x faster progress? I think this view might be recoverable with some sort of non-symmetric model where we assume that labor can't be the bottleneck in some wide regime, but compute can be the bottleneck.

(As in, you can always get faster progress by adding more compute, but the multiplier on top of this from adding labor caps out at some point which mostly doesn't depend on how much compute you have. E.g., maybe this could be because at some point you just run bigger experiments with the same amount of labor and doing a larger number of smaller experiments is always worse and you can't possibly design the experiment better. I think this sort of model is somewhat plausible.)

This model does make a somewhat crazy prediction where it implies that if you scale up compute and labor exactly in parallel, eventually further labor has no value. (I suppose this could be true, but seems a bit wild.)

Overall, I'm currently quite skeptical that arbitrary improvements in labor yield only small increases in the speed of progress. (E.g., an upper limit of 10x faster progress.)

As far as I can tell, this view either privileges exactly the level of human researchers at AI companies or implies that using only a smaller number of weaker and slower researchers wouldn't alter the rate of progress that much.

In particular, consider a hypothetical AI company with the same resources as OpenAI except that they only employ aliens whose brains work 10x slower and for which the best researcher is roughly as good as OpenAI's median technical employee. I think such an AI company would be much slower than OpenAI, maybe 10x slower (part from just lower serial speed and part from reduced capabilities). If you think such an AI company would be 10x slower, then by symmetry you should probably think that an AI company with 10x faster employees who are all as good as the best researchers should perhaps be 10x faster or more.[1] It would be surprising if the returns stopped at exactly the level of OpenAI researchers. And the same reasoning makes 100x speed ups once you have superhuman capabilities at massive serial speed seem very plausible.

I edited this to be a hopefully more clear description. ↩︎

As far as I can tell, this sort of consideration is at least somewhat damning for the literal CES model (with poor substitution) in any situation where the inputs have varied by hugely different amounts (many orders of magnitude of difference like in the compute vs labor case) and relative demand remains roughly similar, whereas this is totally expected under high substitution.

I don't think this logic is quite right. In particular, the relative price of compute to labor has changed by many orders of magnitude over the same period (compute has decreased in price a lot, wages have grown). Unless compute and labor are perfect complements, you should expect the ratio of compute to labor to be changing as well.

To understand how substitutable compute and labor are, you need to see how the ratio of compute to labor is changing relative to price changes. We try to run through these numbers here.

Yeah, you can get into other fancy tricks to defend it like:

- Input-specific technological progress. Even if labour has grown more slowly than capital, maybe the 'effective labour supply' -- which includes tech that makes labour more productive (e.g. drinking caffeine, writing faster on a laptop) -- has grown as fast as capital.

- Input-specific 'stepping on toes' adjustments. If capital grows at 10%/year and labour grows at 5%/year, but (effective capital) = capital^0.5, and (effective labour) = labour, then the growth rates of effective labour and effective capital are roughly equal (since 1.1^0.5 ≈ 1.049).

Thanks, this is a great comment.

I buy your core argument against the main CES model I presented. I think your key argument ("the relative quantity of labour vs compute has varied by OOMs; If CES were true, then one input would have become a hard bottleneck; but it hasn't.") is pretty compelling as an objection to the simple naive CES I mention in the post. It updates me even further towards thinking that, if you use this naive CES, you should have ρ> -0.2. Thanks!

The core argument is less powerful against a more realistic CES model that replaces 'compute' with 'near-frontier sized experiments'. I'm less sure how strong it is as an argument against the more-plausible version of the CES where rather than inputs of cognitive labour and compute we have inputs of cognitive labour and number of near-frontier-sized experiments. (I discuss this briefly in the post.) I.e. if a lab has total compute C_t, its frontier training run takes C_f compute, and we say that a 'near-frontier-sized' experiment uses 1% as much compute as training a frontier model, then the number of near-frontier sized experiments that the lab could run equals E = 100* C_t / C_f
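As a small sketch of this definition (the function name and the FLOP figures are hypothetical, chosen only to illustrate the scaling behaviour), E stays flat whenever total compute and frontier run size grow by the same factor:

```python
def near_frontier_experiments(total_compute, frontier_run_compute, experiment_fraction=0.01):
    """Number of near-frontier experiments a lab could run.

    An experiment costs experiment_fraction * C_f, so
    E = C_t / (experiment_fraction * C_f), i.e. 100 * C_t / C_f at the default 1%.
    """
    if total_compute < 0 or frontier_run_compute <= 0 or not 0 < experiment_fraction <= 1:
        raise ValueError("need C_t >= 0, C_f > 0 and 0 < experiment_fraction <= 1")
    return total_compute / (experiment_fraction * frontier_run_compute)


# Hypothetical numbers in FLOP: both inputs grow by 5 OOMs, so E does not move.
early = near_frontier_experiments(total_compute=1e21, frontier_run_compute=1e20)
later = near_frontier_experiments(total_compute=1e26, frontier_run_compute=1e25)
print(early, later)
```

If instead frontier runs grow faster than total compute, E shrinks even while raw compute rises by many OOMs.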

With this formulation, it's no longer true that a key input has increased by many OOMs, which was the core of your objection (at least the part of your objection that was about the actual world rather than about hypotheticals - i discuss hypotheticals below.)

Unlike compute, E hasn't grown by many OOMs over the last decade. How has it changed? I'd guess it's gotten a bit smaller over the past decade as labs have scaled frontier training runs faster than they've scaled their total quantity of compute for running experiments. But maybe labs have scaled both at equal pace, especially as in recent years the size of pre-training has been growing more slowly (excluding GPT-4.5).

So this version of the CES hypothesis fares better against your objection, bc the relative quantity of the two inputs (cognitive labour and number of near-frontier experiments) has changed by less over the past decade. Cognitive labour inputs have grown by maybe 2 OOMs over the past decade, but the 'effective labour supply', adjusting for diminishing quality and stepping-on-toes effects has grown by maybe just 1 OOM. With just 1 OOM relative increase in cognitive labour, the CES function with ρ=-0.4 implies that compute will have become more of a bottleneck, but not a complete bottleneck such that more labour isn't still useful. And that seems roughly realistic.
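One way to check the "more of a bottleneck, but not a complete one" claim is to look at the output elasticity with respect to labour. The sketch below assumes the standard CES form Y = (α·E^ρ + (1−α)·L^ρ)^(1/ρ) with α = 0.5 and both inputs normalised to 1 at the start; the normalisation and function name are assumptions for illustration.

```python
def labour_elasticity(E, L, rho, alpha=0.5):
    """Elasticity of CES output with respect to labour.

    d ln Y / d ln L = (1 - alpha) * L**rho / (alpha * E**rho + (1 - alpha) * L**rho)
    """
    e_term = alpha * E ** rho
    l_term = (1.0 - alpha) * L ** rho
    return l_term / (e_term + l_term)


# Labour grows by 0, 1, 2 and 3 OOMs relative to experiments, with rho = -0.4.
for ooms in range(4):
    print(ooms, round(labour_elasticity(E=1.0, L=10.0 ** ooms, rho=-0.4), 3))
```

Under these assumptions a 1 OOM relative increase in labour lowers its elasticity from 0.5 to roughly 0.28: marginal labour is worth less than before, but far from nothing.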

Minimally, the CES view predicts that AI companies should be spending less and less of their budget on compute as GPUs are getting cheaper. (Which seems very false.)

This version of the CES hypothesis also dodges this objection. AI companies need to spend much more on compute over time just to keep E constant and avoid compute becoming a bottleneck.

This model does make a somewhat crazy prediction where it implies that if you scale up compute and labor exactly in parallel, eventually further labor has no value. (I suppose this could be true, but seems a bit wild.)

Doesn't seem that wild to me? When we scale up compute we're also scaling up the size of frontier training runs; maybe past a certain point running smaller experiments just isn't useful (e.g. you can't learn anything from experiments using 1 billionth of the compute of a frontier training run); and maybe past a certain point you just can't design better experiments. (Though I agree with you that this is all unlikely to bite before a 10X speed up.)

consider a hypothetical AI company with the same resources as OpenAI except that they only employ aliens whose brains work 10x slower and for which the best researcher is roughly as good as OpenAI's median technical employee

Nice thought experiment.

So the near-frontier-experiment version of the CES hypothesis would say that those aliens would be in a world where experimental compute isn't a bottleneck on AI progress at all: the aliens don't have time to write the code to run the experiments they have the compute for! And we know we're not in that world because experiments are a real bottleneck on our pace of progress already: researchers say they want more compute! These hypothetical aliens would make no such requests. It may be a weird empirical coincidence that cognitive labour helps up to our current level but not that much further, but we can confirm with the evidence of the marginal value of compute in our world.

But actually I do agree the CES hypothesis is pretty implausible here. More compute seems like it would still be helpful for these aliens: e.g. automated search over different architectures and running all experiments at large scale. And evolution is an example where the "cognitive labour" going into AI R&D was very very very minimal and still having lots of compute to just try stuff out helped.

So I think this alien hypothetical is probably the strongest argument against the near-frontier experiment version of the CES hypothesis. I don't think it's devastating -- the CES-advocate can bite the bullet and claim that more compute wouldn't be at all useful in that alien world.

(Fwiw i preferred the way you described that hypothesis before your last edit.)

You can also try to 'block' the idea of a 10X speed up by positing a large 'stepping on toes' effect. If it's very important to do experiments in series, and experiments can't be sped up past a certain point, then experiments could still bottleneck progress. This wouldn't be about the quantity of compute being a bottleneck per se, so it avoids your objection. Instead the bottleneck is 'number of experiments you can run per day'. Mathematically, you could represent this by something like:

AI progress per week = log(1000 + L^0.5 * E^0.5)

The idea is that there are ~linear gains to research effort initially, but past a certain point returns start to diminish increasingly steeply such that you'd struggle to ever realistically 10X the pace of progress.
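A quick numerical sketch of how steeply returns diminish under this functional form. The baseline values L0 = E0 = 1000 are hypothetical (the comment does not specify units); the speed-up is a ratio of logs, so it does not depend on the base of the logarithm.

```python
import math


def progress_per_week(L, E, constant=1000.0):
    """Illustrative functional form: log(constant + L^0.5 * E^0.5)."""
    return math.log(constant + math.sqrt(L) * math.sqrt(E))


def speedup(labour_multiplier, L0=1000.0, E0=1000.0, constant=1000.0):
    """Pace of progress after scaling labour, relative to the (assumed) baseline."""
    boosted = progress_per_week(L0 * labour_multiplier, E0, constant)
    return boosted / progress_per_week(L0, E0, constant)


# Scale labour by many orders of magnitude while holding E fixed.
for ooms in (0, 3, 6, 12, 24):
    print(f"labour x1e{ooms}: speed-up = {speedup(10.0 ** ooms):.2f}")
```

With these assumed baselines, even 24 OOMs of extra labour leaves the speed-up well below 10X, which illustrates both the intended "block" and why the choice of constant has to be cherry-picked.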

Ultimately, I don't really buy this argument. If you applied this functional form to other areas of science you'd get the implication that there's no point scaling up R&D past a certain point, which has never happened in practice. And even then I think the functional form underestimates how much you could improve experiment quality and how much you could speed up experiments. And you have to cherry-pick the constant so that we get a big benefit from going from the slow aliens to OAI-today, but limited benefit from going from today to ASI.

Still, this kind of serial-experiment bottleneck will apply to some extent so it seems worth highlighting that this bottleneck isn't affected by the main counterargument you made.

I don't think my treatment of initial conditions was confused

I think your discussion (and Epoch's discussion) of the CES model is confused as you aren't taking into account the possibility that we're already bottlenecking on compute or labor...

In particular, consider a hypothetical alternative world where they have the same amount of compute, but there is only 1 person (Bob) working on AI and this 1 person is as capable as the median AI company employee and also thinks 10x slower. In this alternative world they could also say "Aha, you see because ρ = -0.4, even if we had billions of superintelligences running billions of times faster than Bob, AI progress would only go up to around 4x faster!"

Of course, this view is absurd because we're clearly operating >>4x faster than Bob.

So, you need to make some assumptions about the initial conditions....

Consider another hypothetical world where the only compute they have is some guy with an abacus, but AI companies have the same employees they do now. In this alternative world, you could also have just as easily said "Aha, you see because ρ = -0.4, even if we had GPUs that could do 1e15 FLOP/s (far faster than our current rate of 1e-1 fp8 FLOP/s), AI progress would only go around 4x faster!"

My discussion does assume that we're not currently bottlenecked on either compute or labour, but I think that assumption is justified. It's quite clear that labs both want more high-quality researchers -- top talent has very high salaries, reflecting large marginal value-add. It's also clear that researchers want more compute -- again reflecting large marginal value-add. So it seems clear that we're not strongly bottlenecked by just one of compute or labour currently. That's why I used α=0.5, assuming that the elasticity of progress to both inputs is equal. (I don't think this is exactly right, but seems in the right rough ballpark.)

I think your thought experiments about the world with just one researcher and the world with just an abacus are an interesting challenge to the CES function, but don't imply that my treatment of initial conditions was confused.

I actually don't find those two examples very convincing though as challenges to the CES. In both those worlds it seems pretty plausible that the scarce input would be a hard bottleneck on progress. If all you have is an abacus, then probably the value of the marginal AI researcher would be ~0 as they'd have no compute to use. And so you couldn't run the argument "ρ= - 0.4 and so more compute won't help much" because in that world (unlike our world) it will be very clear that compute is a hard bottleneck to progress and cognitive labour isn't helpful. And similarly, in the world with just Bob doing AI R&D, it's plausible that AI companies would have ~0 willingness to pay for more compute for experiments, as Bob can't use the compute that he's already got; labour is the hard bottleneck. So again you couldn't run the argument based on ρ=-0.4 bc that argument only works if neither input is currently a hard bottleneck.

It's quite clear that labs both want more high-quality researchers -- top talent has very high salaries, reflecting large marginal value-add.

Three objections, one obvious. I'll state them strongly, a bit devil's advocate; not sure where I actually land on these things.

Obvious: salaries aren't that high.

Also, I model a large part of the value to companies of legible credentialed talent being the marketing value to VCs and investors, who (even if lab leadership can) can't tell talent apart except by (rare) legible signs. This is actually a way to get more compute (and other capital). (The legible signs are rare because compute is a bottleneck! So a Matthew effect pertains.)

Finally, the utility of labs is very convex in the production of AI: the actual profit comes from time spent selling a non-commoditised frontier offering at large margin. So small AI production speed gains translate into large profit gains.

Salaries have indeed now gotten pretty high—it seems like they're within an OOM of compute spend (at least at Meta).

I also noticed this! And wondered how much evidence it is (and of what). I don't think of Meta as especially rational in its AI-related behaviour. Maybe this is Zuckerberg trying to make up for LeCun's years of bad judgement.

Hmm, I actually kind of lean towards it being rational, and labs just underspending on labor vs. capital for contingent historical/cultural reasons. I do think a lot of the talent juice is in "banal" progress like efficiently running lots of experiments, and iterating on existing ideas straightforwardly (as opposed to something like "only a few people have the deep brilliance/insight to make progress"), but that doesn't change the upshot IMO.

Doesn't seem that wild to me? When we scale up compute we're also scaling up the size of frontier training runs; maybe past a certain point running smaller experiments just isn't useful (e.g. you can't learn anything from experiments using 1 billionth of the compute of a frontier training run); and maybe past a certain point you just can't design better experiments. (Though I agree with you that this is all unlikely to bite before a 10X speed up.)

Yes, but also, if the computers are getting serially faster, then you also have to be able to respond to the results and implement the next experiment faster as you add more compute. E.g., imagine a (physically implausible) computer which can run any experiment which uses less than 1e100 FLOP in less than a nanosecond. To maximally utilize this, you'd want to be able to respond to results and implement the next experiment in less than a nanosecond as well. This is of course an unhinged hypothetical and in this world, you'd also be able to immediately create superintelligence by e.g. simulating a huge evolutionary process.

I’ll define an “SIE” as “we can get >=5 OOMs of increase in effective training compute in <1 years without needing more hardware”.

This is as of the point of full AI R&D automation? Or as of any point?

I meant at any point, but was imagining the period around full automation yeah. Why do you ask?

Training run size has grown much faster than the world’s total supply of AI compute. If these near-frontier experiments were truly a bottleneck on progress, AI algorithmic progress would have slowed down over the past 10 years.

I think this history is consistent with near-frontier experiments being important, and labs continuing to do a large number of such experiments as part of the process of increasing lab spending on training compute.

ie: suppose OAI now spends $100m/model instead of $1m/model. There's no reason that they couldn't still be spending, say, 50% of their training compute on running 500 0.1%-scale experiments.

Caveat: This is at the firm level; you could argue that fewer near-frontier experiments are being done in total across the AI ecosystem, and certainly there's less information flow between organizations conducting these experiments.

My sense is that labs have scaled up frontier training runs faster than they've scaled up their supply of compute.

I.e. in 2015 the biggest training runs would have been <<10% of OAI's compute, but that's no longer true today.

Not confident in this!

The best objection to an SIE

I think the compute bottleneck is a reasonable objection, but there is also the fairly straightforward objection that gaining skills takes experience, and experience takes interaction (or hoovering up from web data).

You can get experience in things like 'writing fast code' easily, so a speed explosion is fairly plausible (up to diminishing returns). But various R&Ds or human influence or whatever are much harder to get experience for. So our exploded SIs might be super fast and maybe super good at learning from experience, but out of the gate at best human expert level where relevant data are abundant, and at best human novice level where data aren't.

Yeah agreed -- AIs will improve in sample efficiency and will generate experience in all aspects of AI RnD in parallel, but they'll be weakest in the areas where they have the least experience

I wrote a bit about experimentation recently.

You seem very close to taking this position seriously when you talk about frontier experiments and experiments in general, but I think you also need to notice that experiments come in more dimensions than that. Like, you don't learn how to be better at chemistry just by playing around with GPUs.

For the intelligence explosion I think all we need is experience with AI RnD?

There is then a further question, if that IE goes very far, of whether AI will generalize to chemistry etc

I'd have thought "yes" bc you achieve somewhat superhuman sample efficiency and can quickly get as much chemistry exp as a human expert

I think we're on the same page about what factors exist.

For the intelligence explosion I think all we need is experience with AI RnD?

Well, sort of, but precisely because intelligence isn't single-dimensional, we have to ask 'what is exploding here?'. And like I said, I think you straightforwardly get speed. And plausibly you get sample efficiency (in the inference-from-given-data sense). And maybe you get 'exploratory planning', which is hypothetically one, more transferrable, factor of general exploration efficiency.

But you don't get domain-specific novelty/interestingness taste, which is another critical factor, except for the domains you can easily get lots of data on. So either you need to hoover that up from existing data, or get it some other how. That might be interviews, it might be a bunch of robotics and other actuator/sensor equipment, it might be the Shulman-style humans-with-headsets-as-actuators thing and learning by experience. But then the question becomes whether there's enough data to hoover it up real fast with your speed+efficiency learning, or whether you hit a bunch of scaling up bottlenecks (and in which domains).

BTW I also think novelty taste depreciates quickly as the frontier of a domain moves, so I'm less bullish on hoovering up taste from existing data/interviews for a permanent taste boost. But it might take you some way past frontier, which may or may not be sizeably impactful in a given domain.

I think the methods you describe for gaining data suffice to get more data in <1 year than a human expert sees in a lifetime

Which is why I don't expect big delays from this

But I agree this stuff will be a bottleneck

Yes more 'data', but not necessarily more frontier-taste-relevant experience, which requires either direct or indirect contact with frontier experiments. That might be a crux.

I agree we should weight frontier-taste-relevant data more heavily, but I think my point still goes through.

With that weighting, human experts have much less frontier-taste-relevant data than they have total data, and it's still true that AIs could acquire more of that data than an expert in <1 year.

As AI advances SOTA technology it will in general have more data than humans on the frontier, as AI can learn from all copies. E.g. if it takes 1000 experiments to advance the frontier one step, human experts might typically experience 10 experiments at each step, whereas AI will experience 1000.

With that weighting, human experts have much less frontier-taste-relevant data than they have total data... AI can learn from all copies.

Ah, yep yep. (Though humans do in fact learn (with losses) from other copies, and within a given firm/lab/community the transfer is probably quite high.)

Hmm, I think we have basically the same model, with maybe a bit different parameters (about which we're both somewhat uncertain). But I think that readers of the OP without some of that common model might be misled.

From a lot of convos and writing, I infer that many people conflate a lot of aspects of intelligence into one thing. With that view, 'intelligence explosion' is just a dynamic where a single variable, 'the intelligence' (or 'the algorithmic quality'), gets real big real fast. And then of course, because intelligence is the thing that gets you new technology, you can get all the new technology.

About this, you said,

There is then a further question, if that IE goes very far, of whether AI will generalize to chemistry etc

I'd have thought "yes" bc you achieve somewhat superhuman sample efficiency and can quickly get as much chemistry exp as a human expert

revealing that you correctly distinguish different factors of intelligence like 'sample efficiency' and 'chemistry knowledge' (I think I already knew this but it's good to have local confirmation), and that you don't think a software-only IE yields all of them.

Regarding the second sentence, it could be a misleading use of terms to call that 'generalisation'[1], but I agree that 'sample efficiency' is among the relevant aspects of intelligence (and is a candidate for one that could be mostly generalisably built up automated in silico), and a relevant complement is 'chemistry (frontier research) experience', and that a lot of each taken together may effectively get you chemistry research taste in addition (which can yield new chemistry knowledge if applied with suitable experimental apparatus).

I'm emphasising[2] (in my exploration post, in this thread, and the sister comment about frontier taste depreciation) that there's practically a wide gulf between 'hoover taste up from web data' and 'robotics or humans-with-headsets', in two ways. The first tops out somewhere (probably at sub-frontier) due to the depreciation of frontier research taste. The second cluster doesn't top out anywhere, but is slower and has more barriers to getting started. Is a year to exceed humanity's peak taste bold in most domains? Not sure! If a lot of in silico is possible, maybe it's doable. That might include cyber and software (and maybe narrow particular areas of chem/bio where simulation is especially good).

If you know for sure that those other bottlenecks proceed super fast, you don't need to necessarily clarify what intelligence factors you're talking about for practical purposes, but if you're not sure, I think it's worth being super clear about it where possible.

I might instead prefer terminology like 'quickly implies ... (on assumptions...)' ↩︎

Incidentally, the other thing I'm emphasising (but I think you'd agree?) is that on this view, R&Ds are always substantially driven by experimental throughput, with 'sample efficiency (of the combined workforce) at accruing research taste' being the main other rate-determining factor (because the steady state of research taste depends on this, and exploration quality * experimental throughput is progress). Throwing more labour at it can make your serial experimentation a bit faster, and can parallelise experimentation (with some parallelism discount), with presumably very diminishing returns. Throwing smarter labour (as in, better sample efficiency, and maybe faster-thinking, with diminishing returns), can increase the rate, by getting more insight per experiment and choosing better experiments. ↩︎

Epistemic status – thrown together quickly. This is my best-guess, but could easily imagine changing my mind.

Intro

I recently copublished a report arguing that there might be a software intelligence explosion (SIE) – once AI R&D is automated (i.e. automating OAI), the feedback loop of AI improving AI algorithms could accelerate more and more without needing more hardware.

If there is an SIE, the consequences would obviously be massive. You could shoot from human-level to superintelligent AI in a few months or years; by default society wouldn’t have time to prepare for the many severe challenges that could emerge (AI takeover, AI-enabled human coups, societal disruption, dangerous new technologies, etc).

The best objection to an SIE is that progress might be bottlenecked by compute. We discuss this in the report, but I want to go into much more depth because it’s a powerful objection and has been recently raised by some smart critics (e.g. this post from Epoch).

In this post I:

The compute bottleneck objection

Intuitive version

The intuitive version of this objection is simple. The SIE-sceptic says:

Look, ML is empirical. You need to actually run the experiments to know what works. You can’t do it a priori. And experiments take compute. Sure, you can probably optimise the use of that compute a bit, but past a certain point, it doesn’t matter how many AGIs you have coding up experiments. Your progress will be strongly constrained by your compute.

An SIE-advocate might reply: sure, we’ll eventually fully optimise experiments, and past that point won’t advance faster. But we can maintain a very fast pace of progress, right?

The SIE-sceptic replies:

Nope, because ideas get harder to find. You’ll need more experiments and more compute to find new ideas over time, as you pluck the low-hanging fruit. So once you’ve fully optimised your experiments your progress will slow down over time. (Assuming you hold compute constant!)

Economist version

That’s the intuitive version of the compute bottlenecks objection. Before assessing it, I want to make what I call the “economist version” of the objection. This version is more precise, and it was made in the Epoch post.

This version draws on the CES model of economic production. The CES model is a mathematical formula for predicting economic output (GDP) given inputs of labour L and physical capital K. You don’t need to understand the math formula, but here it is:

Y = (α·K^ρ + (1−α)·L^ρ)^(1/ρ)

The formula has a substitutability parameter ρ which controls the extent to which K and L are complements vs substitutes. If ρ<0, they are complements and there’s a hard bottleneck – if L goes to infinity but K remains fixed, output cannot rise above a ceiling.

(There’s also a parameter α but it’s less important for our purposes. α can be thought of as the fraction of tasks performed by L vs K. I’ll assume α=0.5 throughout. If L=K, which I take as my starting point, α also represents the elasticity of Y wrt K.)

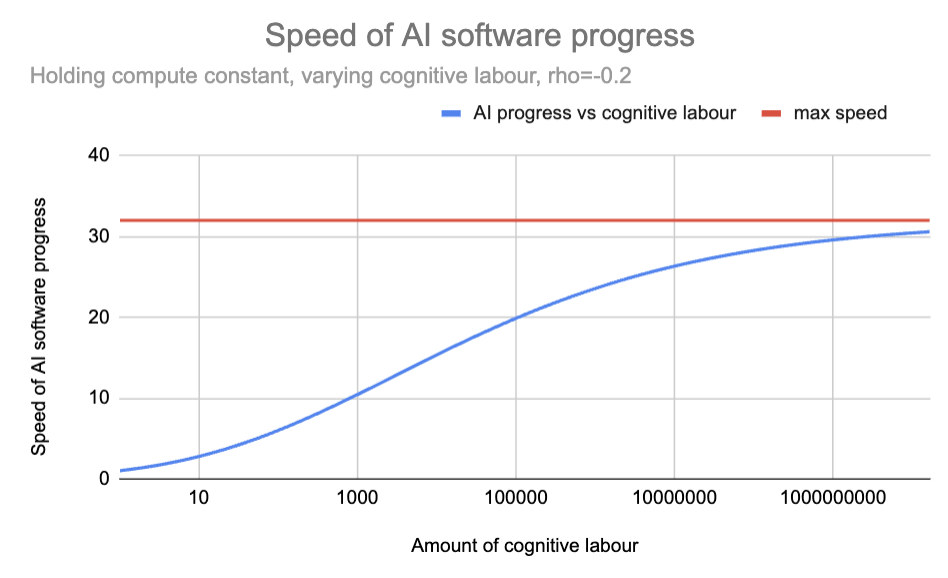

Here are the predictions of the CES formula when K=1 and ρ = -0.2.

This graph shows the implications of a CES production function. It shows how output (Y) changes when K=1 and L varies, with ρ = -0.2. The blue line shows output growing with more labor but approaching the red ceiling line, demonstrating the maximum possible output when K=1.

We can apply the CES formula to AI R&D during a software intelligence explosion (SIE). In this context, L represents the amount of AI cognitive labour applied to R&D, K represents the amount of compute, and Y represents the pace of AI software progress. The model can predict how much faster AI software would improve if we add more AGI researchers but keep compute fixed.

In this context, the ‘ceiling’ gives the max speed of AI software progress as cognitive labour tends to infinity but compute is held fixed. A max speed of 100 means progress could become 100 times faster than today, but no faster, no matter how many AGI researchers we add, and no matter how smart they are or how quickly they think.

Here is the same diagram as above, but re-labelled for the context of AI R&D:

This graph applies the CES model to AI research. The blue line shows how the pace of progress would change if compute is held fixed but cognitive labour increases. With ρ = -0.2, progress accelerates with more automated researchers but approaches a maximum of ~30× current pace.

As the graph shows, the CES formula with ρ = -0.2 implies that if today you poured an unlimited supply of superintelligent God-like AIs into AI R&D, the pace of AI software progress would increase by a factor of ~30.

Once this CES formula has been accepted, we can make the economist version of the argument that compute bottlenecks will prevent a software intelligence explosion. In a recent blog post, Epoch say:

If the two inputs are indeed complementary [ρ<0], any software-driven acceleration could only last until we become bottlenecked on compute…

How many orders of magnitude a software-only singularity can last before bottlenecks kick in to stop it depends crucially on the strength of the complementarity between experiments and insight in AI R&D, and unfortunately there’s no good estimate of this key parameter that we know about. However, in other parts of the economy it’s common to have nontrivial complementarities, and this should inform our assessment of what is likely to be true in the case of AI R&D.

Just as one example, Oberfield and Raval (2014) estimate that the elasticity of substitution between labor and capital in the US manufacturing sector is 0.7 [which corresponds to ρ=-0.4], and this is already strong enough for any “software-only singularity” to fizzle out after less than an order of magnitude of improvement in efficiency.

If the CES model describes AI R&D, and ρ=-0.4, then the max speed of AI software progress is 6X faster than today (continuing to assume α=0.5). So an SIE could never become that fast to begin with. And once we do approach the max speed, diminishing returns will cause progress to slow down. (I’m not sure where they get their “less than an order of magnitude” claim from, but this is my attempt to reconstruct the argument.)
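To spell out the arithmetic behind those numbers: this is a sketch that assumes the standard CES form $Y = (\alpha K^{\rho} + (1-\alpha)L^{\rho})^{1/\rho}$ and the usual relation between ρ and the elasticity of substitution σ. The elasticity of 0.7 maps to ρ as

$$
\rho = \frac{\sigma - 1}{\sigma} = \frac{0.7 - 1}{0.7} \approx -0.43 \approx -0.4 ,
$$

and for ρ < 0 the labour term vanishes as L → ∞, so with K = 1 and α = 0.5

$$
\lim_{L \to \infty} Y = \alpha^{1/\rho}\,K = 0.5^{-2.5} \approx 5.7 ,
$$

which rounds to the 6X figure. Since Y = 1 at the starting point L = K = 1, this limit is the maximum speed-up relative to today.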

Epoch used ρ = -0.4. What about other estimates of ρ? I’m told that economic estimates of ρ range from -1.2 to -0.15. The corresponding range for max speed is roughly 2 to 100:

Max speed of AI software progress

(holding compute fixed, as cognitive labour tends to infinity)
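The max speeds for this range of ρ can be recomputed directly. The sketch below assumes the standard CES form with α = 0.5 and K = 1, under which the ceiling is α^(1/ρ); the helper name `max_speed` and the particular ρ values sampled are illustrative choices, not taken from the economic literature.

```python
def max_speed(rho, alpha=0.5):
    """Ceiling on output as labour -> infinity, with K = 1 (assumed CES form).

    Only defined for rho < 0; for rho >= 0 output is unbounded.
    """
    if rho >= 0:
        raise ValueError("no finite ceiling unless rho < 0")
    return alpha ** (1 / rho)


if __name__ == "__main__":
    print(f"{'rho':>6}  {'max speed':>10}")
    # End points of the quoted range, plus the two values discussed in the text.
    for rho in (-1.2, -0.4, -0.2, -0.15):
        print(f"{rho:>6}  {max_speed(rho):>10.1f}")
```

The end points of the quoted ρ range come out close to 2 and 100 respectively, matching the range stated above.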

Let’s recap the economist version of the argument that compute bottlenecks will block an SIE. The SIE-sceptic invokes a CES model of production (“inputs are complementary”), draws on economic estimates of ρ from the broader economy, applies those same ρ estimates to AI R&D, notices that the max speed for AI software progress is not very high even before diminishing returns are applied, and concludes that an SIE is off the cards.

That’s the economist version of the compute bottlenecks objection. Compared to the intuitive version, it is more precise, (if true) more clearly devastating to an SIE, and is the objection recently made by Epoch. So I’ll focus the rest of the discussion on the economist version of the objection.

Counterarguments to the compute bottleneck objection

I think there are lots of reasons to treat the economist calculation here as only giving a weak prior on what will happen in AI R&D, and lots of reasons to think ρ will be higher for AI R&D (i.e. compute will be less of a bottleneck than the economic estimates suggest).

Let’s go through these reasons. (Flag: I’m giving these reasons in something like reverse order of importance.)

The SIE involves inputs of cognitive labour rising by multiple orders of magnitude. But empirical measurements of ρ span a much smaller range, making extrapolation very dicey.

Points 1-3 are background, meant to warm readers up to the idea that we shouldn’t be putting much weight on economic ρ estimates in the context of AI R&D, and suggesting that values very close to 0 are similarly plausible to the values found by economic studies. Now I’ll argue more directly that ρ should be closer to 0 for AI R&D.

Taking stock

Ok, so let’s take stock. I’ve given a long list of reasons why I find the economist version of the compute bottleneck objection unconvincing in the context of a software intelligence explosion (SIE), and why I expect ρ to be higher than economic estimates.

So I feel confident that our SIE forecasts should be more aggressive than if we naively followed the methodology of using economic data to estimate ρ. But how much more aggressive?

Our recent report on SIE assumed ρ = 0, which I think is likely a bit too high. In particular, I suggested above that the most likely range for ρ is between -0.2 and 0. As shown by the following graph, the difference between -0.2 and 0 doesn’t make a big difference in the early stages of an SIE (when total cognitive labour is 1-3 OOMs bigger than the human contribution), but makes a big difference later on (once total cognitive labour is >=5 OOMs bigger than the human contribution).

Sensitivity analysis on values of ρ. Within the range -0.2 < ρ < 0, the predictions of CES don’t differ significantly until labour inputs have grown by ~5 OOMs. If this is the range of ρ for AI R&D, compute bottlenecks won’t bite in the early stages of the SIE.
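A sensitivity sweep of this kind can be sketched as follows, assuming the standard CES form with α = 0.5, K = 1, and labour normalised to 1 at the starting point. The ρ = 0 case is handled as the Cobb-Douglas limit; the function name `ces_output` and the choice of ρ values are illustrative.

```python
def ces_output(labour, rho, capital=1.0, alpha=0.5):
    """Two-input CES output (assumed form), using Cobb-Douglas at rho = 0."""
    if abs(rho) < 1e-12:
        # Limit of the CES form as rho -> 0.
        return capital**alpha * labour ** (1 - alpha)
    return (alpha * capital**rho + (1 - alpha) * labour**rho) ** (1 / rho)


RHOS = (-0.2, -0.1, 0.0)

if __name__ == "__main__":
    header = "  ".join(f"rho={rho:>5}" for rho in RHOS)
    print(f"OOMs  {header}")
    # Labour grows by 0 to 7 orders of magnitude; compute stays fixed at 1.
    for oom in range(0, 8):
        labour = 10.0**oom
        row = "  ".join(f"{ces_output(labour, rho):9.1f}" for rho in RHOS)
        print(f"{oom:>4}  {row}")
```

Each row gives the speed-up relative to the starting point for a given number of OOMs of extra cognitive labour, so the columns can be compared to see where the curves start to diverge.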

This suggests that compute bottlenecks are unlikely to block an SIE in its early stages, but could well do so after a few OOMs of progress. Of course, that’s just my best guess – it’s totally possible that compute bottlenecks kick in much sooner than that, or much later.

In light of all this, my current overall take on the SIE is something like:

It’s hard to know if I actually disagree with Epoch on the bottom line here. Let me try and put (very tentative) numbers on it! I’ll define an “SIE” as “we can get >=5 OOMs of increase in effective training compute in <1 year without needing more hardware”. I’d say there’s a 10-40% chance that an SIE happens despite compute bottlenecks. This is significantly higher than what a naive application of economic estimates would suggest.