I've lurked here for a long time, but I am not a rationalist by the standards of this place. What makes someone a 'rationalist' is obviously quite debatable, especially when you mean 'Rationalist' as in the particular philosophical subculture often espoused here. To what degree do you need to agree?

I generally dislike people using Claude or other LLMs for definitions, since their output should be thought of as a very biased poll rather than a real definition, but it can be useful for brainstorming like this as long as you keep in mind how often it is wrong. Only five of the 20 seem to be related to being rational, and the others are clearly all related to the very particular subculture that originated in the Bay Area and is represented here. (That's not bad; it was all on topic in some way.) I do object to conflating the different meanings of the term.

The two you bolded are in fact rather important to being able to think well, but both are clearly not especially related to the subculture.

The ones I think are important to thinking well (which is what I think is important):

Curiosity about discovering truth*

Willingness to change mind when presented with evidence

Interest in improving reasoning and decision-making skills

Openness to unconventional ideas if well-argued*

Commitment to intellectual honesty

*are the ones you bolded

These 5 all fit what I aspire to, and I think I've nailed 4 of them (willingness to change my mind when presented with evidence is hard, since it is really hard to know how much evidence you've been presented, and it is easy to pretend it favors what I already believe), but only five of the other 15 apply to me, and those are largely just the ones about familiarity. So I am a 'rationalist' with an interest in being 'rational' but not a subculture 'Rationalist' even though I've lurked here for decades.

I do think that the two you selected lead to the others in a way. Openness to unconventional ideas will obviously lead to people taking seriously unusual ideas (often very wrong, sometimes very right) often enough that they are bound to diverge from others. The subculture provides many of these unusual ideas, and then those who find themselves agreeing often join the subculture. Curiosity of course often involves looking in unfamiliar places, like a random Bay Area community that likes to think about thinking.

I had some reasons I didn't pick the ones you included.

- Willingness to change mind when presented with evidence

I could see someone rationally taking an anti-dialectical stance if they think that the evidence they are being given is somehow invalid or biased, for instance because it is an example of Bayesian persuasion.

- Interest in improving reasoning and decision-making skills

Someone could be committed to the goal of discovering truth, but also not be able to currently prioritize it, and so they may have no interest in improvement at the time.

- Commitment to intellectual honesty

I believe one could rationally decide that the best way to achieve a goal of truth discovery might not involve intellectual honesty in a given circumstance.

I'm not sure why you are talking about 'anti-dialectical' with regard to willingness to change your mind when presented with evidence (but then, I had to look up what the word even means). It only counts as evidence to the degree it is reliable times how strong the evidence would be if it were perfectly reliable, and if words in a dialogue aren't reliable for whatever reason, then obviously that is a different thing. That it is being presented rather than found naturally is also evidence of something, and changes the apparent strength of the evidence, but doesn't necessarily mean that it can be ignored entirely. If nothing else, it is evidence that someone thinks the topics are connected.
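To make the "reliability times strength" idea concrete, here is a rough sketch of one possible reading, not a claim about the only correct way to do it. It assumes strength is measured as a log likelihood ratio and that reliability is a number between 0 and 1 that scales it. The function name `discounted_posterior`, the log-odds scaling, and the example numbers are all assumptions made for illustration.

```python
import math


def discounted_posterior(prior, likelihood_ratio, reliability):
    """Update a prior probability using evidence scaled by its reliability.

    Assumption: evidence strength is the log likelihood ratio, and an
    unreliable source shrinks it linearly (reliability 0 means no update,
    reliability 1 means the full Bayesian update).
    """
    if not 0.0 < prior < 1.0:
        raise ValueError("prior must be strictly between 0 and 1")
    if likelihood_ratio <= 0.0:
        raise ValueError("likelihood_ratio must be positive")
    if not 0.0 <= reliability <= 1.0:
        raise ValueError("reliability must be between 0 and 1")

    prior_log_odds = math.log(prior / (1.0 - prior))
    # Strength if perfectly reliable, discounted by how reliable it really is.
    effective_evidence = reliability * math.log(likelihood_ratio)
    posterior_log_odds = prior_log_odds + effective_evidence
    return 1.0 / (1.0 + math.exp(-posterior_log_odds))


# Hypothetical numbers: evidence that would be 4:1 if fully trustworthy.
full = discounted_posterior(prior=0.5, likelihood_ratio=4.0, reliability=1.0)
half = discounted_posterior(prior=0.5, likelihood_ratio=4.0, reliability=0.5)
none = discounted_posterior(prior=0.5, likelihood_ratio=4.0, reliability=0.0)
```

Under these assumptions, partially reliable testimony still moves the estimate, just by less, which matches the point that presented evidence is weakened rather than ignored entirely.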

Interest doesn't mean that you are going to, it means that you are interested. If you could just press a button and be better at reasoning and decision making (by your standards) with no other consequences, would you? Since that isn't the case, and there will be sacrifices, you might not do it, but that doesn't mean a lack of interest. A lack of interest is more 'why should I care about how well I reason and make decisions?' than 'I don't currently have the ability to pursue this at the same time as more important goals'.

While duplicity certainly could be in your 'rational' self-interest if you have a certain set of desires and circumstances, it pollutes your own mind with falsehood. It is highly likely to make you less rational unless extreme measures are taken to remind yourself what is actually true. (I also hate liars, but that is separate from this.) Plus, intellectual honesty is (usually) required for the feedback you get from the world to actually apply to your thoughts and beliefs, so without it you will not be corrected properly, and will persist in being wrong when you are wrong.

Some friends and I were discussing "what makes one a rationalist", which is a less strict criterion than "what makes one a member of the rationalist community", which is a less strict criterion still than "what makes one a high status, well liked, or enthusiastic member of the rationalist community", and I am most interested in the first question.

It seems obvious to me that 99% of people you meet on the street are very clearly (and mostly joyously) not rationalists. But what is it they do or don't do that makes them so obviously not members?

It also seems to be a trope that people will say they are not rationalists, but will also read all the literature of rationalists and agree with all the thought processes and intellectual rules of engagement. This seems to be very common. As I was listening to an episode of the Bayesian Conspiracy (can't recall which one) and hearing a guest start to quibble that they are just "rationalist adjacent" but not a real rationalist, I started to realize that my own reasons at that time for why I wasn't one were similar, and further realized how absurd and in denial they sounded. Some people call that group "post-rats", but to me that seems not like an exit from the group, nor even a schism, but just a church of the same theology adopting different social norms.

From what I was told, LessOnline was held at the same time as a major EA event, and some even joked that LessOnline was allowed to have meat catered because the die-hard EA rationalists had gone to that instead of LessOnline. I met a guy at a co-working space in the Presidio during a short day trip out during the week of LessOnline, and he mentioned that he liked that "rationalists are getting back to a more pure version of themselves" and ditching the requirement to be an EA.

All of this made me think that rationalism is basically about deriving "Is" statements, and moral offshoots are about "Ought" statements. And that one can be a complete rationalist without being an EA or utilitarian.

So I wanted to extend my conversation with my friend to the community.

Here are the things Claude thought might make one a rationalist:

It seems that my view is that curiosity is basically the only criterion that distinguishes a rationalist from a non-rationalist. Jurgen Schmidhuber has written a lot on dissecting what makes a mind curious, which might help us break down the term.

Artificial Curiosity & Creativity Since 1990-91

Given that his theories always end up going back to something about compression, and given that my personal mission is omniscience, I wonder if my view is that rationalists are basically just people who are also ultimately seeking omniscience (maximum possible compression of all data).

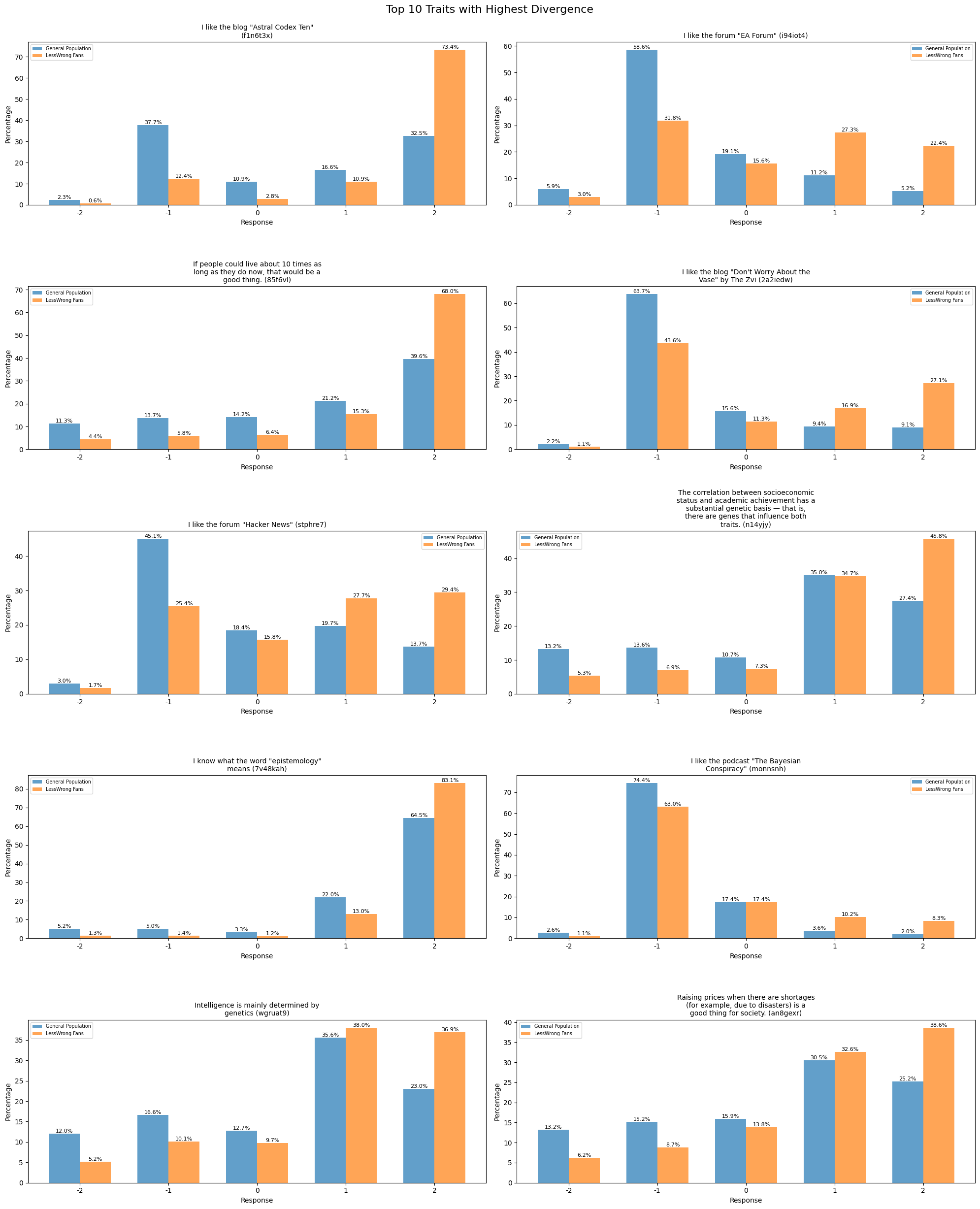

Data Analysis

I decided to do some data analysis on some survey data from Aella's blog.

I will be updating this as I get more data. Here is the difference in distribution of answers for people who answered in the highest affirmative to "I like the forum "LessWrong"" compared to the rest of the respondents.

Notice any interesting divergences? Below is how LessWrong fanatics differ most from Aella's other respondents.
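For anyone who wants to try a comparison like this on their own data, here is a minimal sketch of one way to rank questions by how much two groups of respondents differ. It is not necessarily how the analysis in this post was done. The row format, the column names (`likes_lesswrong`, `q_a`, `q_b`), the use of total variation distance, and the toy rows are all assumptions made up for illustration, not real survey data.

```python
from collections import Counter


def answer_shares(answers):
    """Return the fraction of respondents who gave each answer."""
    counts = Counter(answers)
    total = len(answers)
    return {answer: count / total for answer, count in counts.items()}


def divergence_by_question(rows, group_key, top_value):
    """Rank questions by total variation distance between two groups.

    Group 1: rows where rows[group_key] == top_value (highest affirmative).
    Group 2: everyone else.
    """
    fans = [r for r in rows if r[group_key] == top_value]
    rest = [r for r in rows if r[group_key] != top_value]
    if not fans or not rest:
        raise ValueError("both groups need at least one respondent")

    questions = [q for q in rows[0] if q != group_key]
    distances = {}
    for q in questions:
        fan_shares = answer_shares([r[q] for r in fans])
        rest_shares = answer_shares([r[q] for r in rest])
        options = set(fan_shares) | set(rest_shares)
        # Total variation distance: 0 = identical, 1 = no overlap at all.
        distances[q] = 0.5 * sum(
            abs(fan_shares.get(o, 0.0) - rest_shares.get(o, 0.0))
            for o in options
        )
    return sorted(distances.items(), key=lambda kv: kv[1], reverse=True)


# Made-up toy rows; 3 stands in for the highest affirmative answer.
toy_rows = [
    {"likes_lesswrong": 3, "q_a": "yes", "q_b": "no"},
    {"likes_lesswrong": 3, "q_a": "yes", "q_b": "yes"},
    {"likes_lesswrong": 1, "q_a": "no", "q_b": "no"},
    {"likes_lesswrong": 0, "q_a": "no", "q_b": "yes"},
]
ranking = divergence_by_question(toy_rows, "likes_lesswrong", 3)
```

Total variation distance is just one simple choice here. With small groups it will be noisy, so any ranking like this should be read as a prompt for a closer look rather than as a finding.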

Anyone excited to see where they fall on the manifold of rationalist adjacents?

Interesting sections of the manifold discovered. I think I will make a higher-quality visualization, as well as a justification for the lines, later.