Prediction: In 6-12 months people are going to start leaving DeepMind and Anthropic for similar-sounding reasons to those currently leaving OpenAI (50% likelihood).

> Surface-level read of what is happening at OpenAI: employees are uncomfortable with specific safety policies.

> Deeper, more transferable, harder-to-solve problem: no person who is sufficiently well-meaning and close enough to the coal face at Big Labs can ever be reassured they're doing the right thing by continuing to work for a company whose mission is to build AGI.

Basically, this is less about "OpenAI is bad" and more "Making AGI is bad".

I like the fact that despite not being (relatively) young when they died, the LW banner states that Kahneman & Vinge have died "FAR TOO YOUNG", pointing to the fact that death is always bad and/or it is bad when people die when they were still making positive contributions to the world (Kahneman published "Noise" in 2021!).

I like it too, and because your comment made me think about it, I now kind of wish it said "orders of magnitude too young"

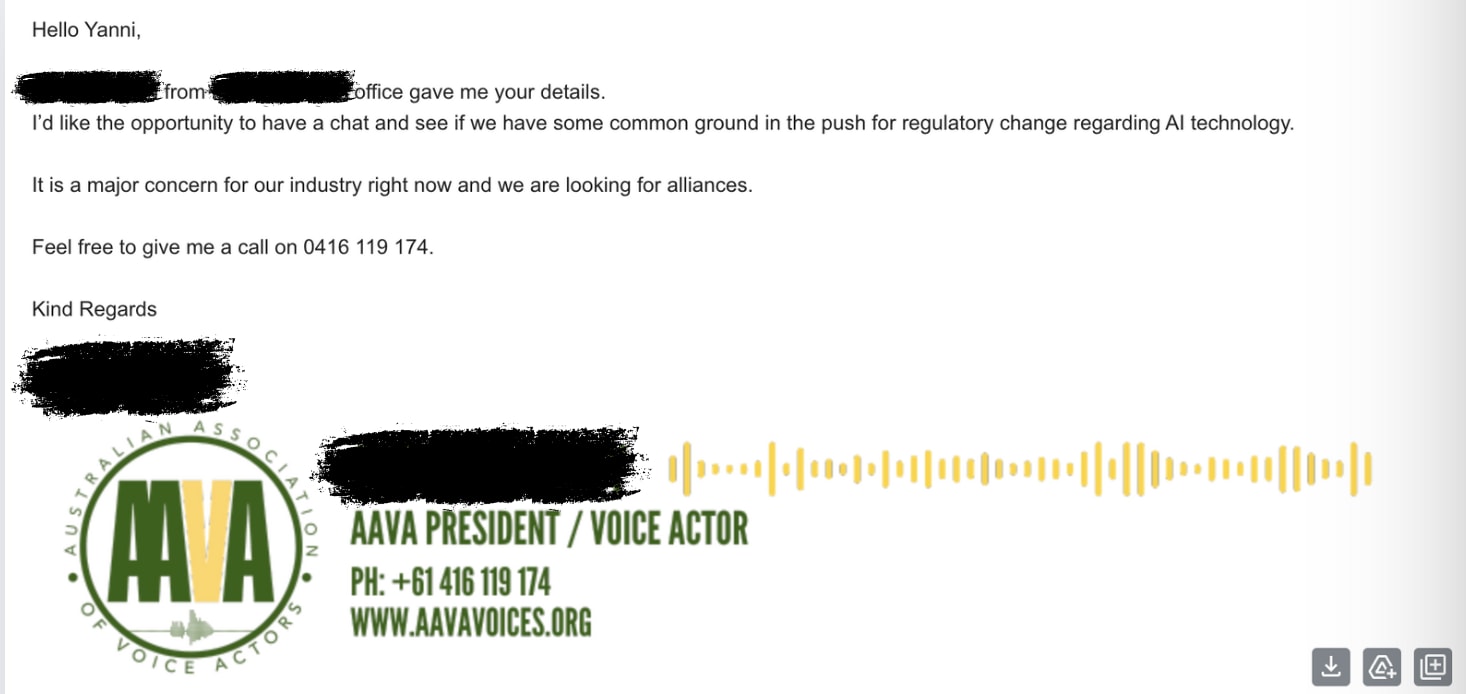

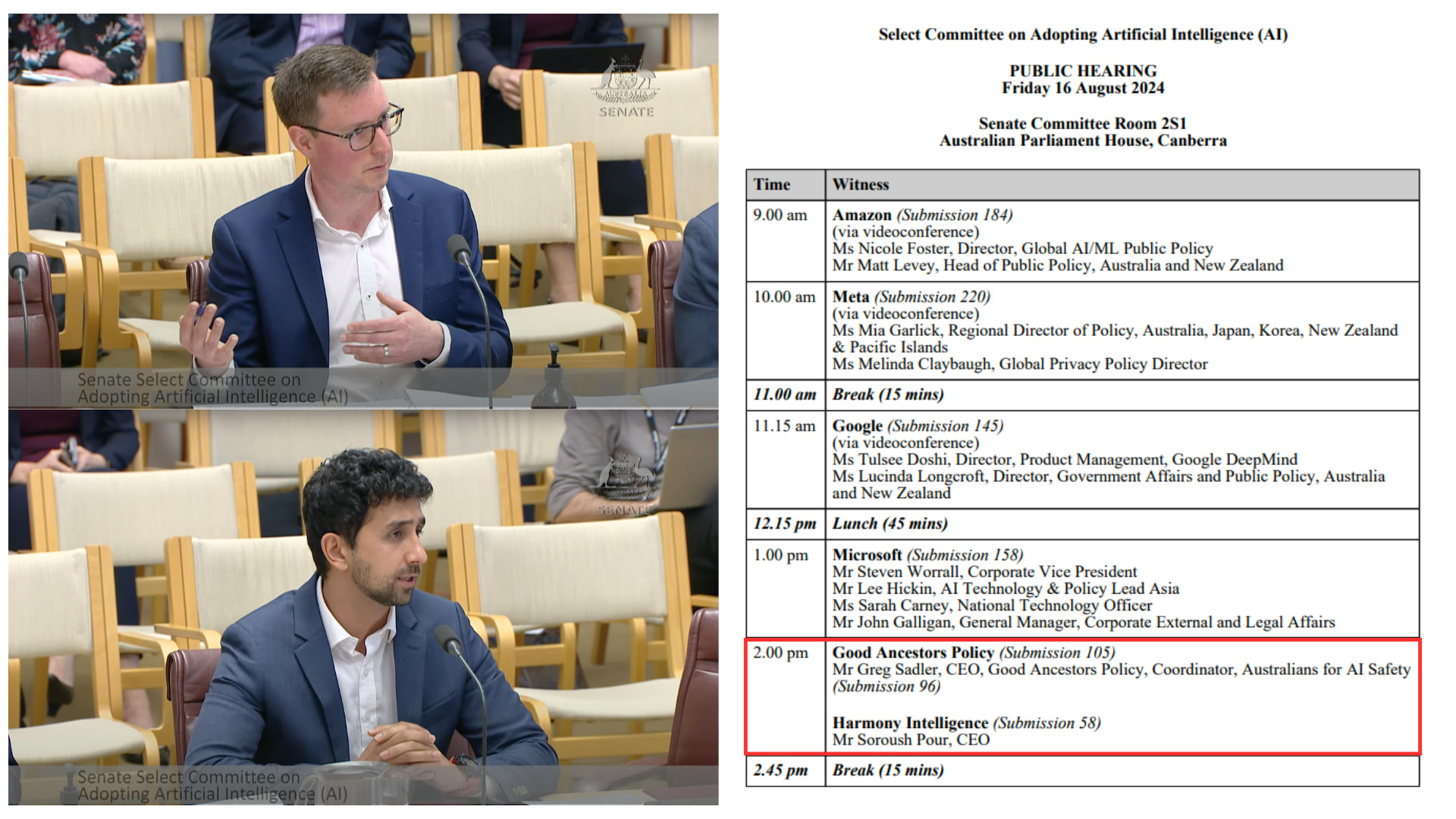

[IMAGE] Extremely proud and excited to watch Greg Sadler (CEO of Good Ancestors Policy) and Soroush Pour (Co-Founder of Harmony Intelligence) speak to the Australian Federal Senate Select Committee on Artificial Intelligence. This is a big moment for the local movement. Also, check out who they ran after.

Something I'm confused about: what is the threshold that needs meeting for the majority of people in the EA community to say something like "it would be better if EAs didn't work at OpenAI"?

Imagining the following hypothetical scenarios over 2024/25, I can't confidently predict whether any of them would individually cause that response within EA:

- Ten-fifteen more OpenAI staff quit for varied and unclear reasons. No public info is gained outside of rumours

- There is another board shakeup because senior leaders seem worried about Altman. Altman stays on

- Superalignment team is disbanded

- OpenAI doesn't let the UK or US AISIs safety test GPT5/6 before release

- There are strong rumours they've achieved weakly general AGI internally at end of 2025

This question is two steps removed from reality. Here’s what I mean by that. Putting brackets around each of the two steps:

what is the threshold that needs meeting [for the majority of people in the EA community] [to say something like] "it would be better if EAs didn't work at OpenAI"?

Without these steps, the question becomes

What is the threshold that needs meeting before it would be better if people didn’t work at OpenAI?

Personally, I find that a more interesting question. Is there a reason why the question is phrased at two removes like that? Or am I missing the point?

What does a "majority of the EA community" mean here? Does it mean that people who work at OAI (even on superalignment or preparedness) are shunned from professional EA events? Does it mean that when they ask, people tell them not to join OAI? And who counts as "in the EA community"?

I don't think it's that constructive to bar people from all or even most EA events just because they work at OAI, even if there's a decent amount of consensus people should not work there. Of course, it's fine to host events (even professional ones!) that don't invite OAI people (or Anthropic people, or METR people, or FAR AI people, etc), and they do happen, but I don't feel like barring people from EAG or e.g. Constellation just because they work at OAI would help make the case (not that there's any chance of this happening in the near term), and it would most likely backfire.

I think that currently, many people (at least in the Berkeley EA/AIS community) will tell you to not join OAI if asked. I'm not sure if they form a majority in terms of absolute numbers, but they're at least a majority in some professional circles (e.g. both most people at FAR/FAR Labs and at Lightcone/Lighthaven would p...

I recently discovered the idea of driving all blames into oneself, which immediately resonated with me. It is relatively hardcore; the kind of thing that would turn David Goggins into a Buddhist.

Gemini did a good job of summarising it:

This quote by Pema Chödrön, a renowned Buddhist teacher, represents a core principle in some Buddhist traditions, particularly within Tibetan Buddhism. It's called "taking full responsibility" or "taking self-blame" and can be a bit challenging to understand at first. Here's a breakdown:

What it Doesn't Mean:

- Self-Flagellation: This practice isn't about beating yourself up or dwelling on guilt.

- Ignoring External Factors: It doesn't deny the role of external circumstances in a situation.

What it Does Mean:

- Owning Your Reaction: It's about acknowledging how a situation makes you feel and taking responsibility for your own emotional response.

- Shifting Focus: Instead of blaming others or dwelling on what you can't control, you direct your attention to your own thoughts and reactions.

- Breaking Negative Cycles: By understanding your own reactions, you can break free from negative thought patterns and choose a more skillful response.

Analogy:

Imagine a pebble thrown ...

I don't know how to say this in a way that won't come off harsh, but I've had meetings with > 100 people in the last 6 months in AI Safety and I've been surprised how poor the industry standard is for people:

- Planning meetings (writing emails that communicate what the meeting will be about, its purpose, my role)

- Turning up to scheduled meetings (like literally, showing up at all)

- Turning up on time

- Turning up prepared (i.e. with informed opinions on what we're going to discuss)

- Turning up with clear agendas (if they've organised the meeting)

- Running the meetings

- Following up after the meeting (clear communication about next steps)

I do not have an explanation for what is going on here but it concerns me about the movement interfacing with government and industry. My background is in industry and these just seem to be things people take for granted?

It is possible I give off a chill vibe, leading to people thinking these things aren't necessary, and they'd switch gears for more important meetings!

There have been multiple occasions where I've copied and pasted email threads into an LLM and asked it things like:

- What is X person saying

- What are the cruxes in this conversation?

- Summarise this conversation

- What are the key takeaways

- What views are being missed from this conversation

I really want an email plugin that basically brute forces rationality INTO email conversations.

I have heard rumours that an AI Safety documentary is being made. Separate to this, a good friend of mine is also seriously considering making one, but he isn't "in" AI Safety. If you know who this first group is and can put me in touch with them, it might be worth getting across each other's plans.

More thoughts on "80,000 Hours should remove OpenAI from the Job Board"

- - - -

A broadly robust heuristic I walk around with in my head is "the more targeted the strategy, the more likely it will miss the target".

Its origin is from when I worked in advertising agencies, where TV ads for car brands all looked the same because they needed to reach millions of people, while email campaigns were highly personalised.

The thing is, if you get hit with a TV ad for a car, worst case scenario it can't have a strongly negative effect because of its generic...

A judgement I'm attached to is that a person is either extremely confused or callous if they work in capabilities at a big lab. Is there some nuance I'm missing here?

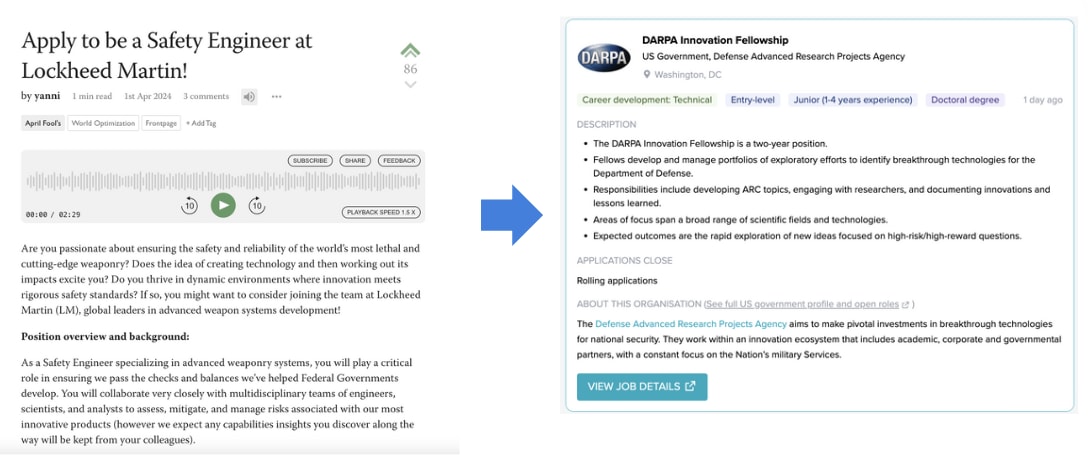

[PHOTO] I sent 19 emails to politicians, had 4 meetings, and now I get emails like this. There is SO MUCH low hanging fruit in just doing this for 30 minutes a day (I would do it but my LTFF funding does not cover this). Someone should do this!

I expect (~ 75%) that the decision to "funnel" EAs into jobs at AI labs will become a contentious community issue in the next year. I think that over time more people will think it is a bad idea. This may have PR and funding consequences too.

Three million people are employed by the travel (agent) industry worldwide. I am struggling to see how we don't lose 80%+ of those jobs to AI Agents in 3 years (this is ofc just one example). This is going to be an extremely painful process for a lot of people.

Please help me find research on aspiring AI Safety folk!

I am two weeks into the strategy development phase of my movement building and almost ready to start ideating some programs for the year.

But I want these programs to be solving the biggest pain points people experience when trying to have a positive impact in AI Safety.

Has anyone seen any research that looks at this in depth? For example, through an interview process and then survey to quantify how painful the pain points are?

Some examples of pain points I've observed so far through my interviews wit...

AI Safety has less money, talent, political capital, tech and time. We have only one distinct advantage: support from the general public. We need to start working that advantage immediately.

Big AIS news imo: “The initial members of the International Network of AI Safety Institutes are Australia, Canada, the European Union, France, Japan, Kenya, the Republic of Korea, Singapore, the United Kingdom, and the United States.”

H/T @shakeel

The Australian Federal Government is setting up an "AI Advisory Body" inside the Department of Industry, Science and Resources.

IMO that means Australia has an AISI in:

9 months (~ 30% confidence)

12 months (~ 50%)

18 months (~ 80%)
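Reading those three numbers as cumulative probabilities (the chance of an AISI existing *by* each date), the implied chance for each window in between can be backed out by subtraction. A minimal sketch, assuming that cumulative reading is the intended one; the function name `interval_probabilities` is just a hypothetical helper for illustration:

```python
# Cumulative forecasts from the post: P(Australia has an AISI within N months).
# ASSUMPTION: the three confidences are cumulative, not independent estimates.
cumulative = {9: 0.30, 12: 0.50, 18: 0.80}


def interval_probabilities(cumulative):
    """Convert cumulative probabilities into per-interval probabilities."""
    intervals = {}
    previous_month, previous_p = 0, 0.0
    for month in sorted(cumulative):
        p = cumulative[month]
        if p < previous_p:
            raise ValueError("cumulative probabilities must not decrease")
        # Round to suppress floating-point noise such as 0.30000000000000004.
        intervals[(previous_month, month)] = round(p - previous_p, 10)
        previous_month, previous_p = month, p
    # Remaining probability mass: later than the last horizon, or never.
    intervals[(previous_month, None)] = round(1.0 - previous_p, 10)
    return intervals


for (start, end), p in interval_probabilities(cumulative).items():
    if end is not None:
        label = f"{start}-{end} months"
    else:
        label = f"after {start} months (or never)"
    print(f"{label}: {p:.0%}")
```

On this reading the forecast puts about 30% on months 0-9, 20% on months 9-12, 30% on months 12-18, and leaves 20% for "later than 18 months, or never".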

I'm pleased to announce the launch of a brand new Facebook group dedicated to AI Safety in Wellington: AI Safety Wellington (AISW). This is your local hub for connecting with others passionate about ensuring a safe and beneficial future with artificial intelligence / reducing x-risk. To kick things off, we're hosting a super casual meetup where you can:

- Meet & Learn: Connect with Wellington's AI Safety community.

- Chat & Collaborate: Discuss career paths, upcoming events, and

Solving the AGI alignment problem demands a herculean level of ambition, far beyond what we're currently bringing to bear. Dear Reader, grab a pen or open a Google Doc right now and answer these questions:

1. What would you do right now if you became 5x more ambitious?

2. If you believe we all might die soon, why aren't you doing the ambitious thing?

I'm not sure of an org that deals with ultra-high net worth individuals (Longview?), but someone should reach out to Bryan Johnson. I think he could be persuaded to invest in AI Safety (skip to 1:07:15)

AI Safety (in the broadest possible sense, i.e. including ethics & bias) is going to be taken very seriously soon by Government decision makers in many countries. But without high quality talent staying in their home countries (i.e. not moving to UK or US), there is a reasonable chance that x/c-risk won’t be considered problems worth trying to solve. X/c-risk sympathisers need seats at the table. IMO local AIS movement builders should be thinking hard about how to keep talent local (this is an extremely hard problem to solve).

TIL that the words "fact" and "fiction" come from the same word: "Artifice" - which is ~ "something that has been created".

I think > 40% of AI Safety resources should be going into making Federal Governments take seriously the possibility of an intelligence explosion in the next 3 years due to proliferation of digital agents.

If transformative AI is defined by its societal impact rather than its technical capabilities (i.e. TAI as process not a technology), we already have what is needed. The real question isn't about waiting for GPT-X or capability Y - it's about imagining what happens when current AI is deployed 1000x more widely in just a few years. This presents EXTREMELY different problems to solve from a governance and advocacy perspective.

E.g. 1: compute governance might no longer be a good intervention

E.g. 2: "Pause" can't just be about pausing model development. It should also be about pausing implementation across use cases

I am 90% sure that most AI Safety talent aren't thinking hard enough about Neglectedness. The industry is so nascent that you could look at 10 analogous industries, see what processes or institutions are valuable and missing, and build an organisation around the highest impact one.

The highest impact job ≠ the highest impact opportunity for you!

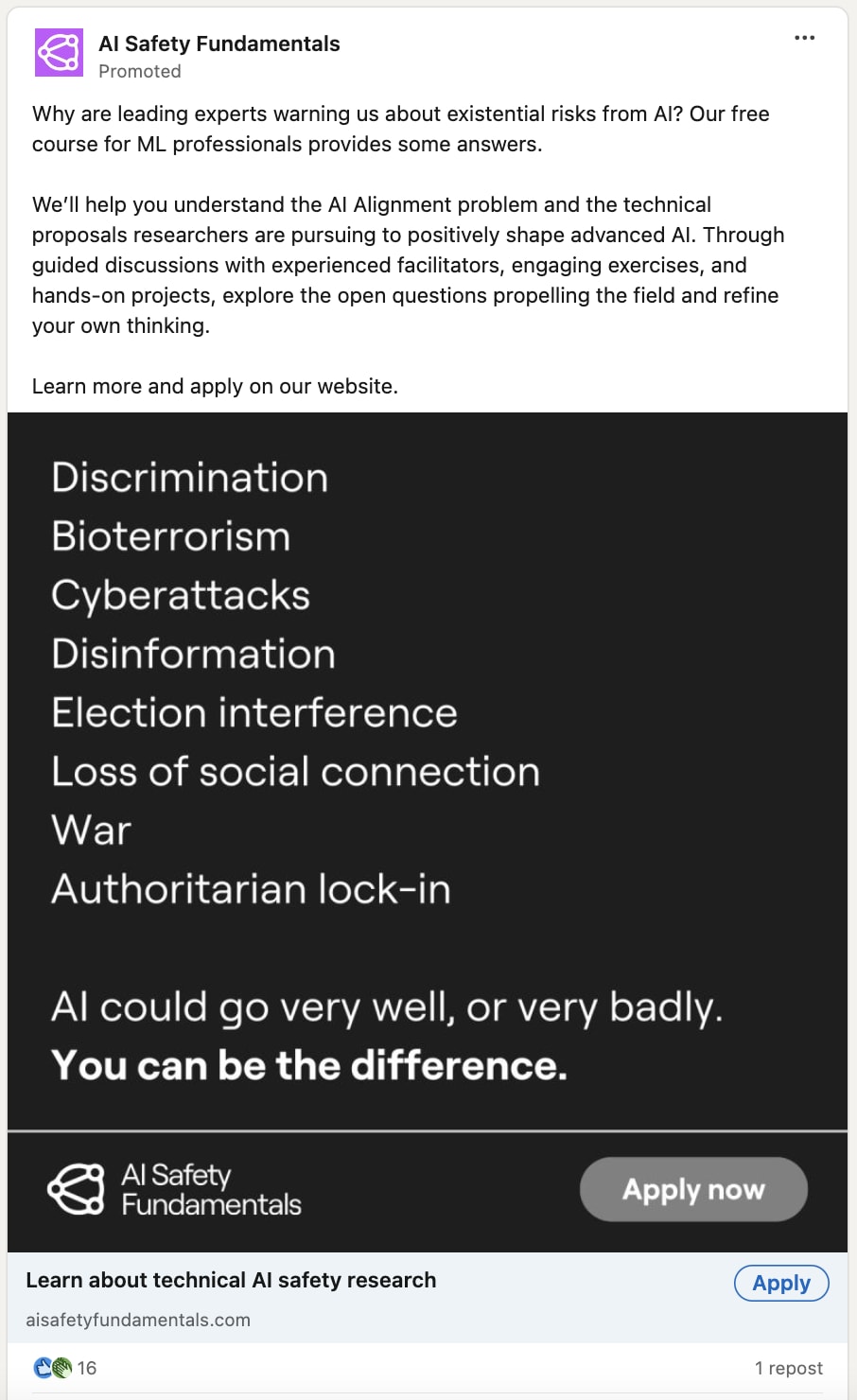

I really like this ad strategy from BlueDot. 5 stars.

90% of the message is still x / c-risk focussed, but by including "discrimination" and "loss of social connection" they're clearly trying to do either (or both) of the following:

- create a big tent

- nudge people that are in "ethics" but sympathetic to x / c-risk into x / c-risk

(prediction: 4000 disagreement Karma)

Huh, this is really a surprisingly bad ad. None of the things listed are things that IMO have much to do with why AI is uniquely dangerous (they are basically all misuse-related), and also not really what any of the AI Safety fundamentals curriculum is about (and as such is false advertising). Like, I don't think the AI Safety fundamentals curriculum has anything that will help with election interference or loss of social connection or less likelihood of war or discrimination?

I always get downvoted when I suggest that (1) if you have short timelines and (2) you decide to work at a Lab then (3) people might hate you soon and your employment prospects could be damaged.

What is something obvious I'm missing here?

One thing I won't find convincing is someone pointing at the Finance industry post GFC as a comparison.

I believe the scale of unemployment could be much higher. E.g. 5% -> 15% unemployment in 3 years.

I think it is good to have some ratio of upvoted/agreed : downvoted/disagreed posts in your portfolio. I think if all of your posts are upvoted/high agreement then you're either playing it too safe or you've eaten the culture without chewing first.

I'm pretty confident that a majority of the population will soon have very negative attitudes towards big AI labs. I'm extremely unsure about what impact this will have on the AI Safety and EA communities (because we work with those labs in all sorts of ways). I think this could increase the likelihood of "Ethics" advocates becoming much more popular, but I don't know if this necessarily increases catastrophic or existential risks.

Not sure if this type of concern has reached the meta yet, but if someone approached me asking for career advice, tossing up whether to apply for a job at a big AI lab, I would let them know that it could negatively affect their career prospects down the track because so many people now perceive such a move as either morally wrong or just plain wrong-headed. And those perceptions might only increase over time. I am not making a claim here beyond saying that this should be a career advice consideration.

When AI Safety people are also vegetarians, vegans or reducetarians, I am pleasantly surprised, as this is one (of many possible) signals to me they're "in it" to prevent harm, rather than because it is interesting.

Yesterday Greg Sadler and I met with the President of the Australian Association of Voice Actors. Like us, they've been lobbying for more and better AI regulation from government. I was surprised how much overlap we had in concerns and potential solutions:

1. Transparency and explainability of AI model data use (concern)

2. Importance of interpretability (solution)

3. Mis/dis information from deepfakes (concern)

4. Lack of liability for the creators of AI if any harms eventuate (concern + solution)

5. Unemployment without safety nets for Australians (concern)

6....

If GPT5 actually comes with competent agents then I expect this to be a "Holy Shit" moment at least as big as ChatGPT's release. So if ChatGPT has been used by 200 million people, then I'd expect that to at least double within 6 months of GPT5 (agent's) release. Maybe triple. So that "Holy Shit" moment means a greater share of the general public learning about the power of frontier models. With that will come another shift in the Overton Window. Good luck to us all.

The catchphrase I walk around with in my head regarding the optimal strategy for AI Safety is something like: Creating Superintelligent Artificial Agents* (SAA) without a worldwide referendum is ethically unjustifiable. Until a consensus is reached on whether to bring into existence such technology, a global moratorium is required (*we already have AGI).

I thought it might be useful to spell that out.

AI Safety Monthly Meetup - Brief Impact Analysis

For the past 8 months, we've (AIS ANZ) been running consistent community meetups across 5 cities (Sydney, Melbourne, Brisbane, Wellington and Canberra). Each meetup averages about 10 attendees with about 50% new participant rate, driven primarily through LinkedIn and email outreach. I estimate we're driving unique AI Safety related connections for around $6.

Volunteer Meetup Coordinators organise the bookings, pay for the Food & Beverage (I reimburse them after the fact) and greet attendees. This ini...

One axis where Capabilities and Safety people pull apart the most, with high consequences, is "asking for forgiveness instead of permission."

1) Safety people need to get out there and start making stuff without their high prestige ally nodding first

2) Capabilities people need to consider more seriously that they're building something many people simply do not want

What is your AI Capabilities Red Line Personal Statement? It should read something like "when AI can do X in Y way, then I think we should be extremely worried / advocate for a Pause*".

I think it would be valuable if people started doing this; we can't feel when we're on an exponential, so it's likely that powerful AI will creep up on us.

@Greg_Colbourn just posted this and I have an intuition that people are going to read it and say "while it can do Y it still can't do X"

*in the case you think a Pause is ever optimal.

I'd like to see research that uses Jonathan Haidt's Moral Foundations research and the AI risk repository to forecast whether there will soon be a moral backlash / panic against frontier AI models.

Ideas below from Claude:

# Conservative Moral Concerns About Frontier AI Through Haidt's Moral Foundations

## Authority/Respect Foundation

### Immediate Concerns

- AI systems challenging traditional hierarchies of expertise and authority

- Undermining of traditional gatekeepers in media, education, and professional fields

- Potential for AI to make decisions traditiona...

I beta tested a new movement building format last night: online networking. It seems to have legs.

V quick theory of change:

> problem it solves: not enough people in AIS across Australia (especially) and New Zealand are meeting each other (this is bad for the movement and people's impact).

> we need to brute force serendipity to create collabs.

> this initiative has v low cost

quantitative results:

> I purposefully didn't market it hard because it was a beta. I literally got more people than I hoped for

> 22 RSVPs and 18 attendees

> this s...

Is anyone in the AI Governance-Comms space working on what public outreach should look like if lots of jobs start getting automated in < 3 years?

I point to Travel Agents a lot not to pick on them, but because they're salient and there are lots of them (about 3 million globally). I think there is a reasonable chance that in 3 years the industry loses 50% of its workers.

People are going to start freaking out about this. Which means we're in "December 2019" all over again, and we all remember how bad Government Comms were during COVID.

Now is the time to start ...

I’d like to quickly skill up on ‘working with people that have a kind of neurodivergence’.

This means:

- understanding their unique challenges

- how I can help them get the best from/for themselves

- but I don’t want to sink more than two hours into this

Can anyone recommend some online content for me please?

Something bouncing around my head recently ... I think I agree with the notion that "you can't solve a problem at the level it was created".

A key point here is the difference between "solving" a problem and "minimising its harm".

- Solving a problem = engaging with a problem by going up a level from the one at which it was created

- Minimising its harm = trying to solve it at the level it was created

Why is this important? Because I think EA and AI Safety have historically focussed on (and have their respective strengths in) harm-minimisation.

This obviously applies at the micro. ...

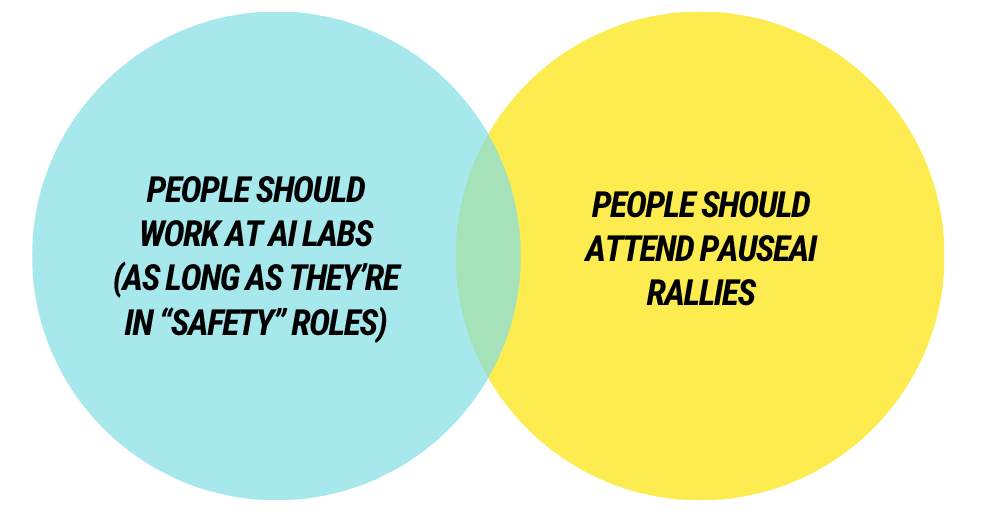

[IMAGE] There is something about the lack of overlap between these two audiences that makes me uneasy. WYD?

A piece of career advice I've given a few times recently to people in AI Safety, which I thought worth repeating here, is that AI Safety is so nascent a field that the following strategy could be worth pursuing:

1. Write your own job description (whatever it is that you're good at / brings joy into your life).

2. Find organisations that you think need that job but don't yet have it. This role should solve a problem they either don't know they have or haven't figured out how to solve.

3. Find the key decision maker and email them. Explain the (their) pro...

I've decided to post something very weird because it might (in some small way) help shift the Overton Window on a topic: as long as the world doesn't go completely nuts due to AI, I think there is a 5%-20% chance I will reach something close to full awakening / enlightenment in about 10 years. Something close to this:

Very quick thoughts on setting time aside for strategy, planning and implementation, since I'm into my 4th week of strategy development and experiencing intrusive thoughts about needing to hurry up on implementation:

- I have a 52 week LTFF grant to do movement building in Australia (AI Safety)

- I have set aside 4.5 weeks for research (interviews + landscape review + maybe survey) and strategy development (segmentation, targeting, positioning),

- Then 1.5 weeks for planning (content, events, educational programs), during which I will get feedback from others on th

I have an intuition that if you tell a bunch of people you're extremely happy almost all the time (e.g. walking around at 10/10) then many won't believe you, but if you tell them that you're extremely depressed almost all the time (e.g. walking around at 1/10) then many more would believe you. Do others have this intuition? Keen on feedback.

Two jobs in AI Safety Advocacy that AFAICT don't exist, but should and probably will very soon. Will EAs be the first to create them though? There is a strong first mover advantage waiting for someone -

1. Volunteer Coordinator - there will soon be a groundswell from the general population wanting to have a positive impact in AI. Most won't know how to. A volunteer manager will help capture and direct their efforts positively, for example, by having them write emails to politicians

2. Partnerships Manager - the President of the Voice Actors guild reached out...

Help clear something up for me: I am extremely confused (theoretically) how we can simultaneously have:

1. An Artificial Superintelligence

2. It be controlled by humans (therefore creating misuse or concentration of power issues)

My intuition is that once it reaches a particular level of power it will be uncontrollable. Unless people are saying that we can have models 100x more powerful than GPT4 without it having any agency??

Something someone technical and interested in forecasting should look into: can LLMs reliably convert people's claims into a % of confidence through sentiment analysis? This would be useful for Forecasters I believe (and rationality in general).
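To make the idea concrete, here is a crude non-LLM baseline that anything smarter would need to beat: pull out an explicit percentage if the claim has one, otherwise fall back to a lookup of hedging phrases. This is only a sketch. The phrase-to-probability values and the name `estimate_confidence` are assumptions made up for illustration, not calibrated data or an existing tool.

```python
import re

# ASSUMPTION: illustrative phrase -> probability values, NOT calibrated data.
# Longer / more specific phrases come first so they win over shorter ones.
HEDGE_PROBABILITIES = [
    ("almost certainly", 0.95),
    ("very likely", 0.90),
    ("pretty confident", 0.80),
    ("probably", 0.70),
    ("reasonable chance", 0.40),
    ("might", 0.30),
    ("unlikely", 0.20),
]

# Matches explicit percentages such as "75%" or "~ 30 %".
EXPLICIT_PERCENT = re.compile(r"(\d{1,3}(?:\.\d+)?)\s*%")


def estimate_confidence(claim):
    """Return a rough probability for a claim, or None if no cue is found."""
    match = EXPLICIT_PERCENT.search(claim)
    if match:
        # Clamp to 100 in case the text contains something like "150%".
        return min(float(match.group(1)), 100.0) / 100.0
    lowered = claim.lower()
    for phrase, probability in HEDGE_PROBABILITIES:
        if phrase in lowered:
            return probability
    return None


print(estimate_confidence("I expect (~ 75%) that this becomes contentious"))
print(estimate_confidence("I'm pretty confident attitudes will turn negative"))
print(estimate_confidence("The meetup is on Tuesday"))
```

The obvious weakness is that the regex treats any percentage as a confidence (e.g. "we lose 80% of those jobs"), which is exactly the kind of context an LLM might handle better and the thing worth testing.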

[GIF] A feature I'd love on the forum: while posts are read back to you, the part of the text that is being read is highlighted. This exists on Naturalreaders.com and I would love to see it here (great for people who have wandering minds like me).

I think acting on the margins is still very underrated. For e.g. I think 5x the amount of advocacy for a Pause on capabilities development of frontier AI models would be great. I also think in 12 months time it would be fine for me to reevaluate this take and say something like 'ok that's enough Pause advocacy'.

Basically, you shouldn't feel 'locked in' to any view. And if you're starting to feel like you're part of a tribe, then that could be a bad sign you've been psychographically locked in.

I went to buy a ceiling fan with a light in it recently. There was one on sale that happened to also tick all my boxes, joy! But the salesperson warned me "the light in this fan can't be replaced and only has 10,000 hours in it. After that you'll need a new fan. So you might not want to buy this one." I chuckled internally and bought two of them, one for each room.

"alignment researchers are found to score significantly higher in liberty (U=16035, p≈0)" This partly explains why so much of the alignment community doesn't support PauseAI!

"Liberty: Prioritizes individual freedom and autonomy, resisting excessive governmental control and supporting the right to personal wealth. Lower scores may be more accepting of government intervention, while higher scores champion personal freedom and autonomy..."

https://forum.effectivealtruism.org/posts/eToqPAyB4GxDBrrrf/key-takeaways-from-our-ea-and-alignment-research-surveys...