I'm wondering what Nick Bostrom's p(doom) currently is, given the subject of this book. Nine years ago, in his lecture on his book Superintelligence, he said "less than 50% risk of doom". In this interview 4 months ago he said it's good that the risks have received more focus recently, though still slightly less than would be optimal. Even so, he wants to focus on the upsides because he fears we might "overshoot" and not build AGI at all, which would be tragic in his opinion. So it seems he thinks the risk is lower than it used to be because of this public awareness of the risks.

In Bostrom's recent interview with Liv Boeree, he said (I'm paraphrasing; you're probably better off listening to what he actually said):

- p(doom)-related

- it's actually gone up for him, not down (contra your guess, unless I misinterpreted you), at least when broadening the scope beyond AI (cf. vulnerable world hypothesis, 34:50 in video)

- re: AI, his prob. dist. has 'narrowed towards the shorter end of the timeline - not a huge surprise, but a bit faster I think' (30:24 in video)

- also re: AI, 'slow and medium-speed takeoffs have gained credibility compared to fast takeoffs'

- he wouldn't overstate any of this

- contrary to people's impression of him, he's always been writing about 'both sides' (doom and utopia)

- e.g. his Letter from Utopia, first published in 2005

- in the past it just seemed more pressing to him to call attention to 'various things that could go wrong so we could avoid these pitfalls and then we'd have plenty of time to think about what to do with this big future'

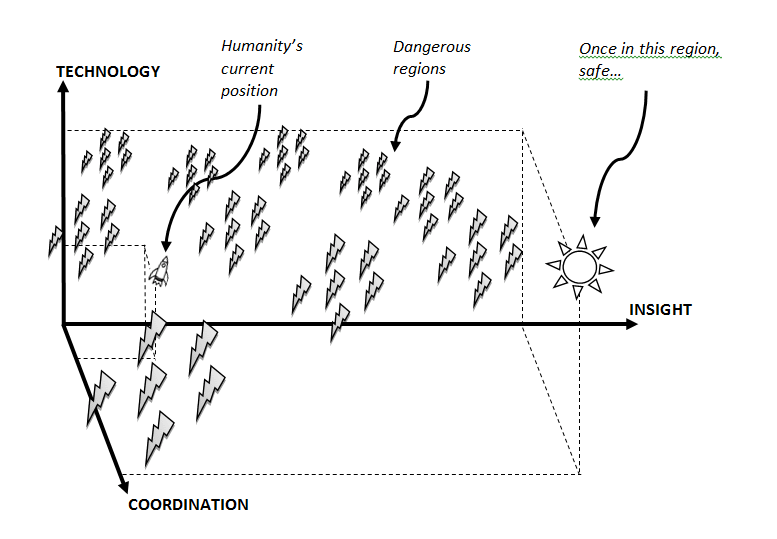

- this reminded me of this illustration from his old paper introducing the idea of x-risk prevention as global priority:

It's very surprising to me that he would think there's a real chance of all humans collectively deciding to not build AGI, and successfully enforcing the ban indefinitely.

Just read this (though not too carefully). The book is structured with about half being transcripts of fictional lectures given by Bostrom at Oxford, about a quarter being stories about various woodland creatures striving to build a utopia, and another quarter being various other vignettes and framing stories.

Overall, I was a bit disappointed. The lecture transcripts touch on some interesting ideas, but Bostrom's style is generally one which tries to classify and taxonomize rather than characterize (e.g. he has a long section trying to analyze the nature of boredom). I think this doesn't work very well when describing possible utopias, because they'll be so different from today that it's hard to extrapolate many of our concepts to that point, and also because the hard part is making it viscerally compelling.

The stories and vignettes are somewhat esoteric; it's hard to extract straightforward lessons from them. My favorite was a story called The Exaltation of ThermoRex, about an industrialist who left his fortune to the benefit of his portable room heater, leading to a group of trustees spending many millions of dollars trying to figure out (and implement) what it means to "benefit" a room heater.

Bostrom’s new book is out today in hardcover and Kindle in the USA, and on Kindle in the UK.

Description:

A greyhound catching the mechanical lure—what would he actually do with it? Has he given this any thought?

Bostrom’s previous book, Superintelligence: Paths, Dangers, Strategies changed the global conversation on AI and became a New York Times bestseller. It focused on what might happen if AI development goes wrong. But what if things go right?

Suppose that we develop superintelligence safely, govern it well, and make good use of the cornucopian wealth and near magical technological powers that this technology can unlock. If this transition to the machine intelligence era goes well, human labor becomes obsolete. We would thus enter a condition of "post-instrumentality", in which our efforts are not needed for any practical purpose. Furthermore, at technological maturity, human nature becomes entirely malleable.

Here we confront a challenge that is not technological but philosophical and spiritual. In such a solved world, what is the point of human existence? What gives meaning to life? What do we do all day?

Deep Utopia shines new light on these old questions, and gives us glimpses of a different kind of existence, which might be ours in the future.

Links to purchase:

There’s a table of contents on the book’s web page.