All of Anonymous's Comments + Replies

Yeah sorry to be clear totally agree we (or at least I) don’t know the sizes of models, I was just naming specific models to be concrete.

But anyway yes I think you got my point: the Jones chart illustrates (what I understood to be) gwern’s view that adding more inference/search does juice your performance to some degree, but then those gains taper off. To get to the next higher sigmoid-like curve in the Jones figure, you need to up your parameter count; and then to climb that new sigmoid, you need more search. What Jones didn’t suggest (but gwern seems to be saying) is that you can use your search-enhanced model to produce better quality synthetic data to train a larger model on.

When I hear “distillation” I think of a model with a smaller number of parameters that’s dumber than the base model. It seems like the word “bootstrapping” is more relevant here. You start with a base LLM (like GPT-4); then do RL for reasoning, and then do a ton of inference (this gets you o1-level outputs); then you train a base model with more parameters than GPT-4 (let’s call this GPT-5) on those outputs — each single forward pass of the resulting base model is going to be smarter than a single forward pass of GPT-4. And then you do RL and more inferenc...

This press release (https://openai.com/index/openai-o1-system-card/) seems to equivocate between the o1 model and the weaker o1-preview and o1-mini models that were released yesterday. It would be nice if they were clearer in the press releases that the reported results are for the weaker models, not for the more powerful o1 model. It might also make sense to retitle this post to refer to o1-preview and o1-mini.

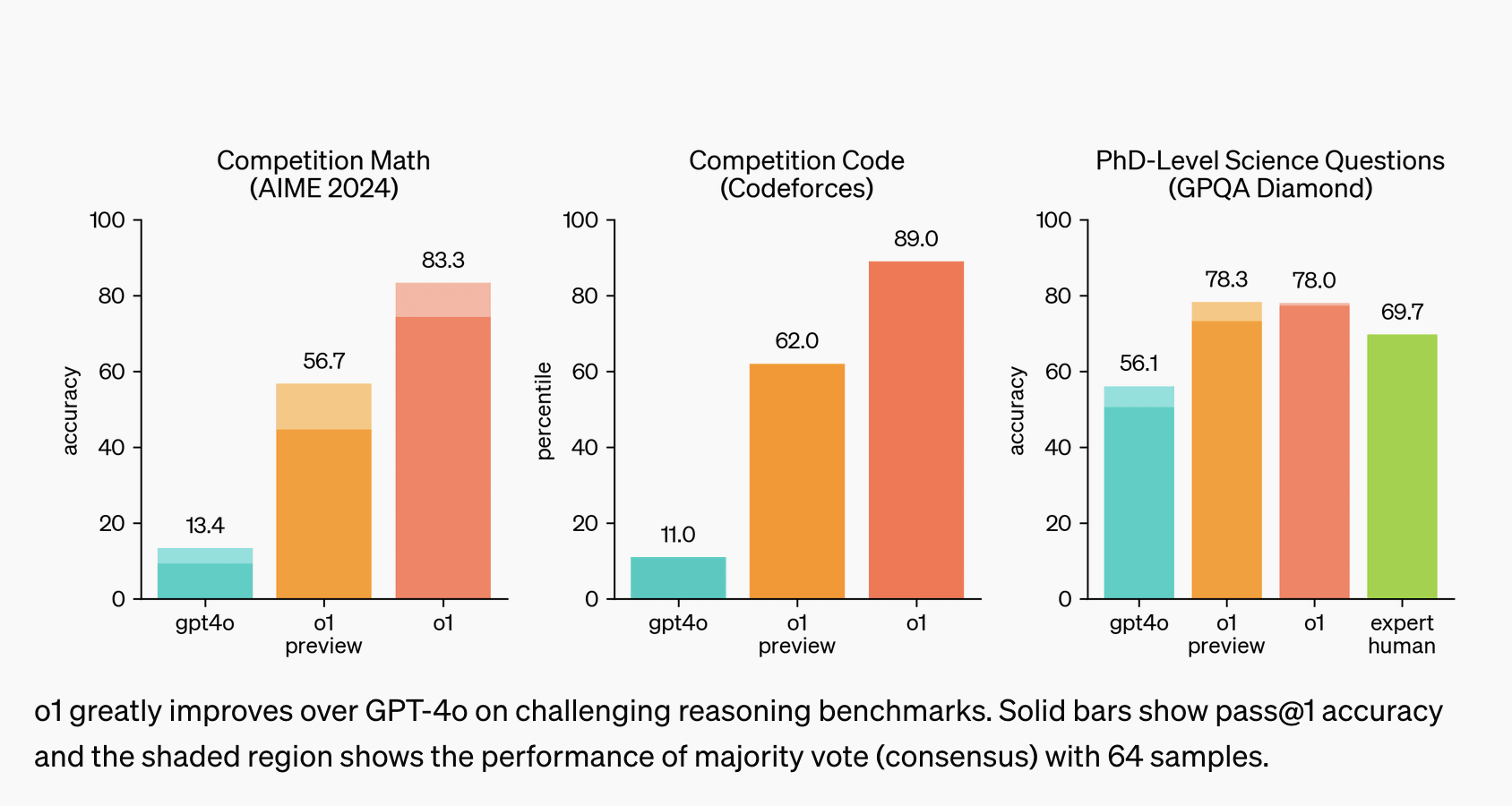

Just to make things even more confusing, the main blog post is sometimes comparing o1 and o1-preview, with no mention of o1-mini:

And then in addition to that, some testing is done on 'pre-mitigation' versions and some on 'post-mitigation', and in the important red-teaming tests, it's not at all clear what tests were run on which ('red teamers had access to various snapshots of the model at different stages of training and mitigation maturity'). And confusingly, for jailbreak tests, 'human testers primarily generated jailbreaks against earlier versions of o...

All of this sounds reasonable and it sounds like you may have insider info that I don’t. (Also, TBC I wasn’t trying to make a claim about which model is the base model for a particular o-series model, I was just naming models to be concrete, sorry to distract with that!)

Totally possible also that you’re right about more inference/search being the only reason o3 is more expensive than o1 — again it sounds like you know more than I do. But do you have a theory of why o3 is able to go on longer chains of thought without getting stuck, compared with o1? It’s p... (read more)