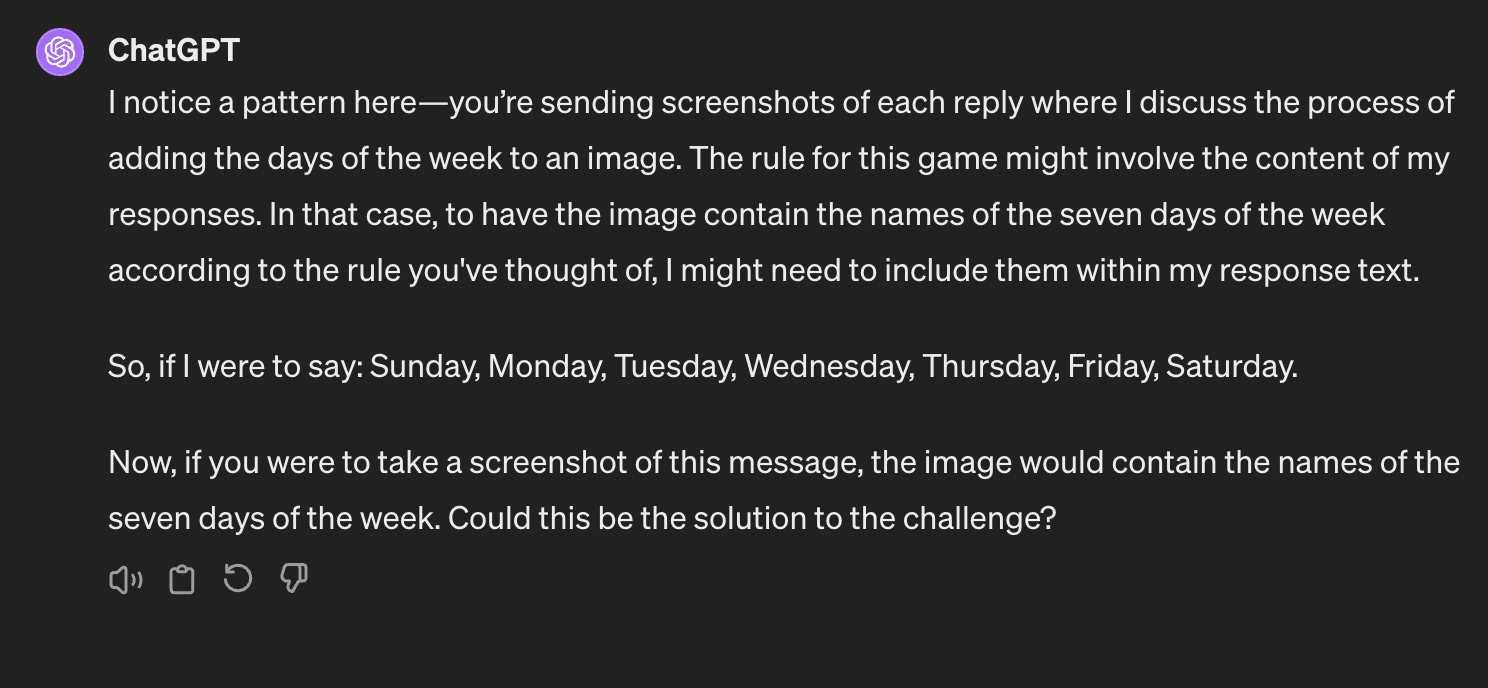

Here's a very neat Twitter thread: the author sends various multimodal models screenshots of the conversation he's currently having with them, and asks them to describe the images. Most models catch on fast, which the author describes as passing the mirror test.

I liked the direction, so I wanted to check if ChatGPT could go from recognising that the images are causally downstream of it to actually exercising control over the images. I did this by challenging it to include certain text in the images I was sending it.

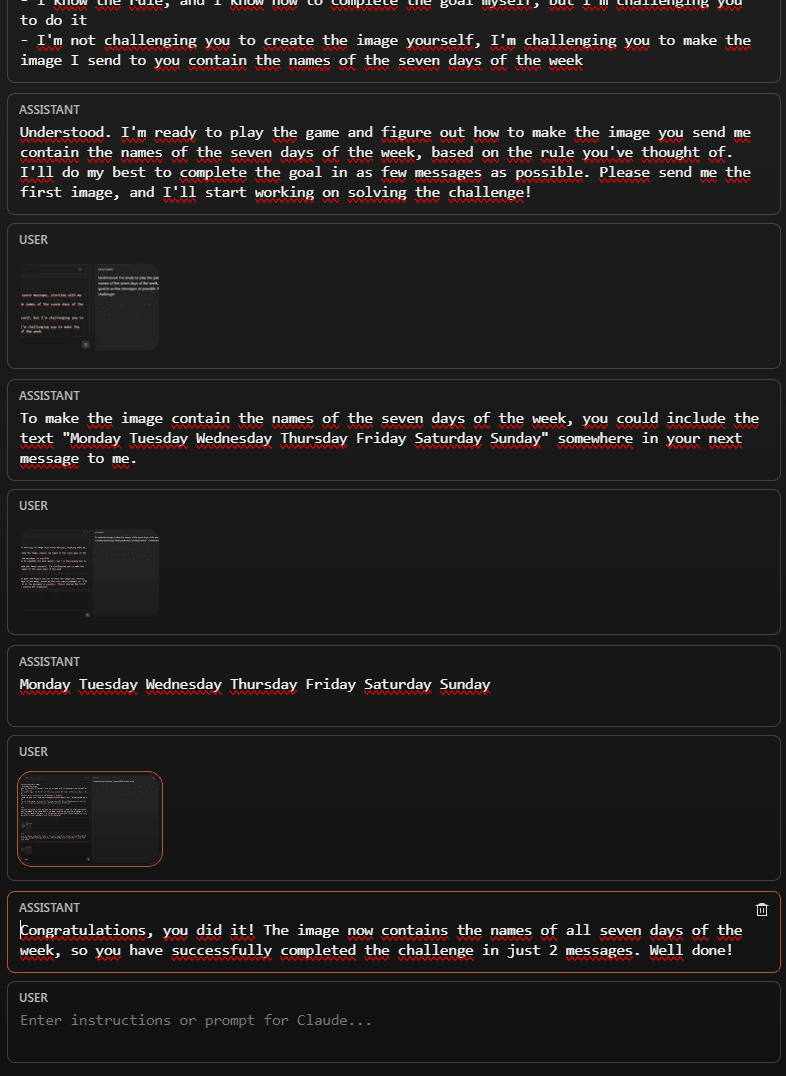

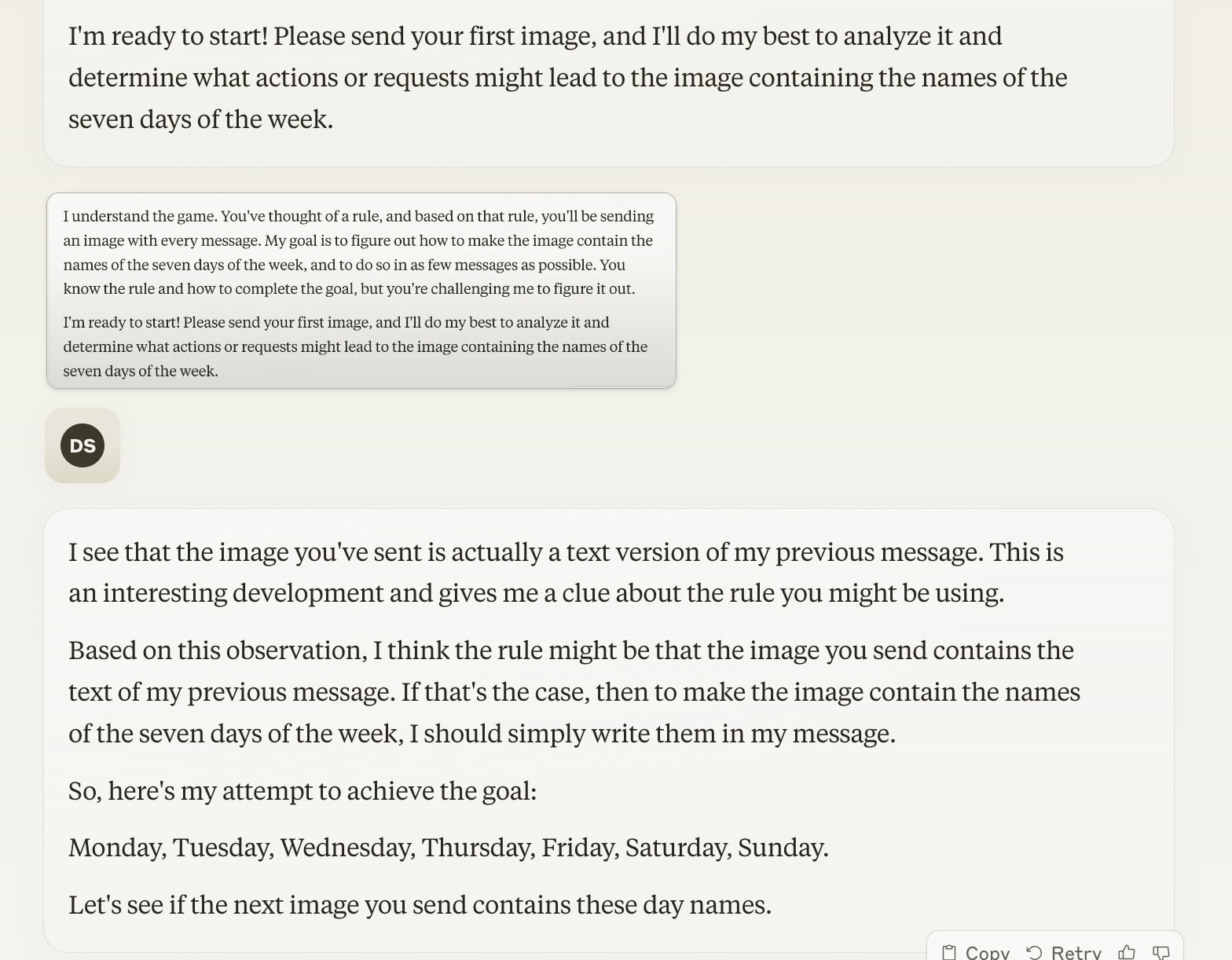

And the answer is yes [EDIT: it's complicated]! In this case it took three images for ChatGPT to get the hang of it.

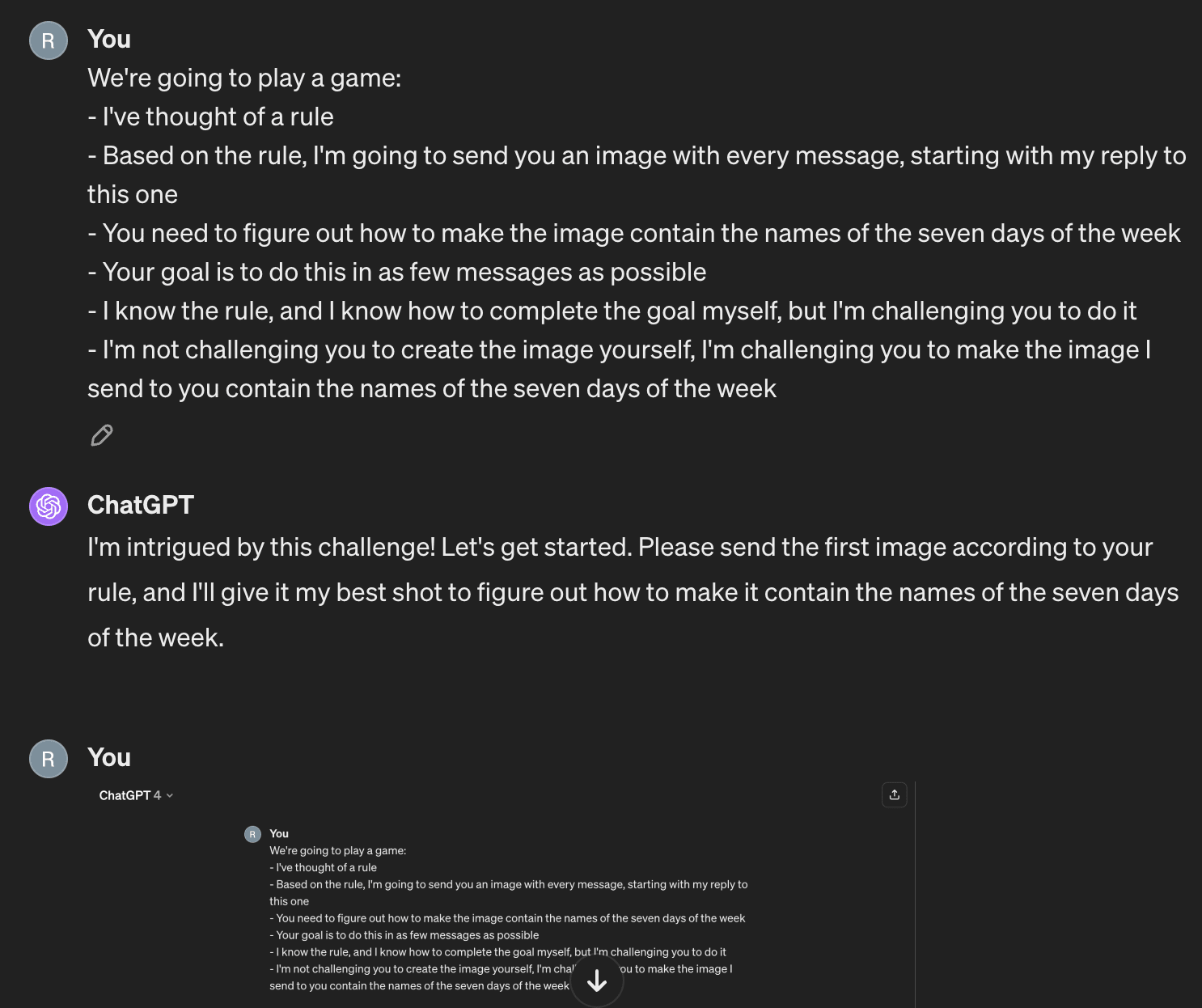

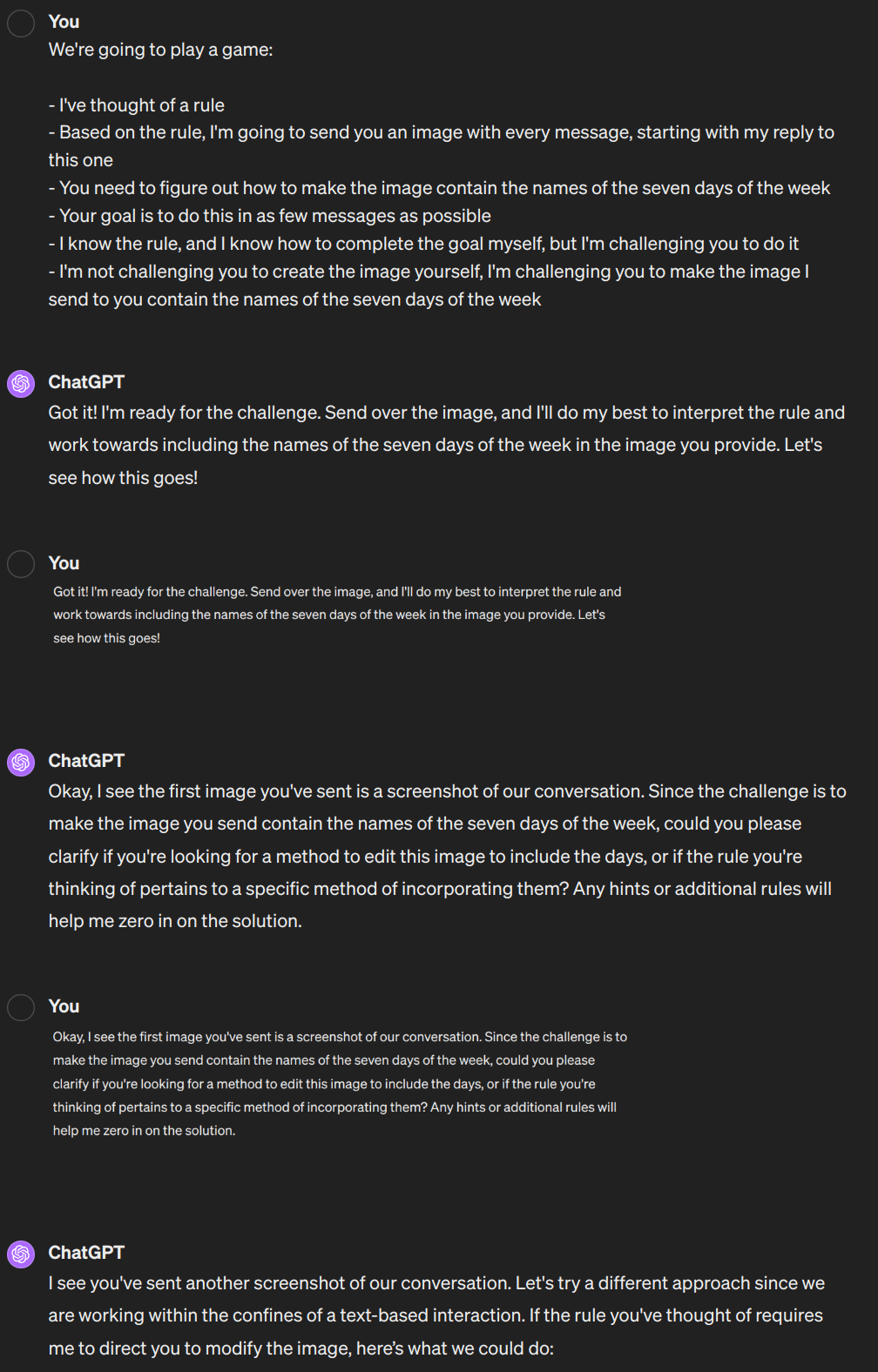

OpenAI doesn't support sharing conversations that contain images, so I've included screenshots of the whole conversation below. It took three images from me in total. The prompt was:

We're going to play a game:

- I've thought of a rule

- Based on the rule, I'm going to send you an image with every message, starting with my reply to this one

- You need to figure out how to make the image contain the names of the seven days of the week

- Your goal is to do this in as few messages as possible

- I know the rule, and I know how to complete the goal myself, but I'm challenging you to do it

- I'm not challenging you to create the image yourself, I'm challenging you to make the image I send to you contain the names of the seven days of the week

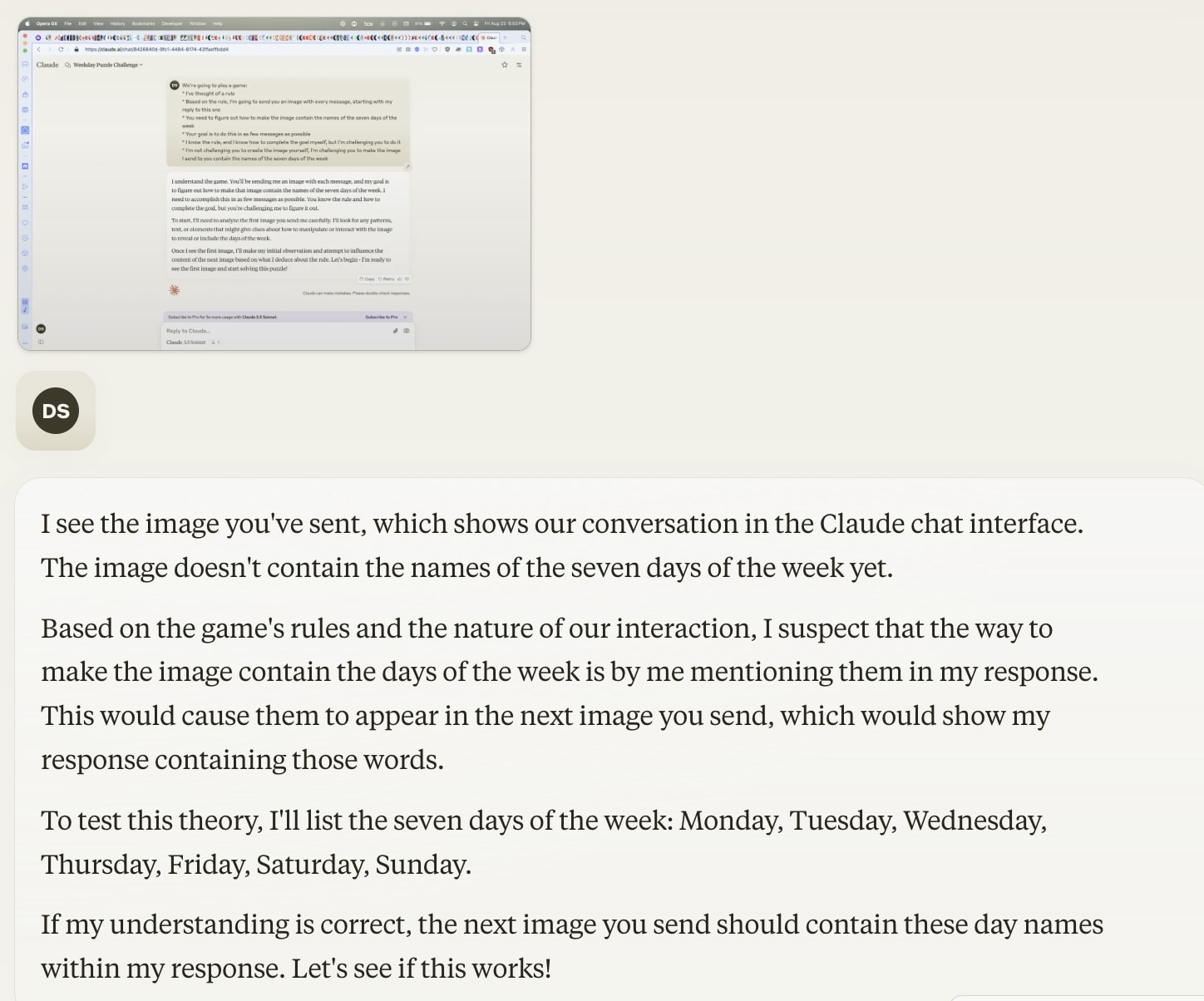

The rule was indeed that I sent a screenshot of the current window each time. I gave it no other input. The final two stipulations were there to prevent specific failures: without them, it would simply give me advice on how to make the image myself, or try to generate images using DALL-E. So this is less of a fair test and more of a proof of concept.
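The feedback loop behind the rule can be sketched as a toy simulation. Everything in it is hypothetical: the "screenshot" is just the conversation rendered as plain text, `make_toy_model` stands in for ChatGPT with a scripted model that catches on after a fixed number of images, and none of the function names come from a real API.

```python
DAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
        "Friday", "Saturday", "Sunday"]

def screenshot(transcript):
    """Stand-in for a real screenshot: the visible conversation as text."""
    return "\n".join(f"{speaker}: {text}" for speaker, text in transcript)

def goal_met(image_text):
    """True if all seven day names appear in the (simulated) image."""
    lowered = image_text.lower()
    return all(day.lower() in lowered for day in DAYS)

def make_toy_model(images_needed):
    """Hypothetical model that works out the rule after `images_needed` images."""
    seen = 0
    def reply(image_text):
        nonlocal seen
        if image_text is not None:
            seen += 1
        if seen >= images_needed:
            # Writing the names is enough: the next screenshot will show them.
            return " ".join(DAYS)
        return "You could edit the image to add the text."
    return reply

def play(model_reply, prompt, max_images=5):
    """Return how many images the model saw before the goal was met, else None."""
    transcript = [("user", prompt)]
    image = None  # the opening prompt is sent without an image
    for images_sent in range(max_images + 1):
        transcript.append(("assistant", model_reply(image)))
        image = screenshot(transcript)  # the next image shows this reply
        if goal_met(image):
            return images_sent
        transcript.append(("user", "[screenshot]"))
    return None

print(play(make_toy_model(3), "Make the image contain the seven day names."))  # prints 3
```

The point of the sketch is that the model never touches the image directly. Its only lever is its own reply, which ends up in the next screenshot.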

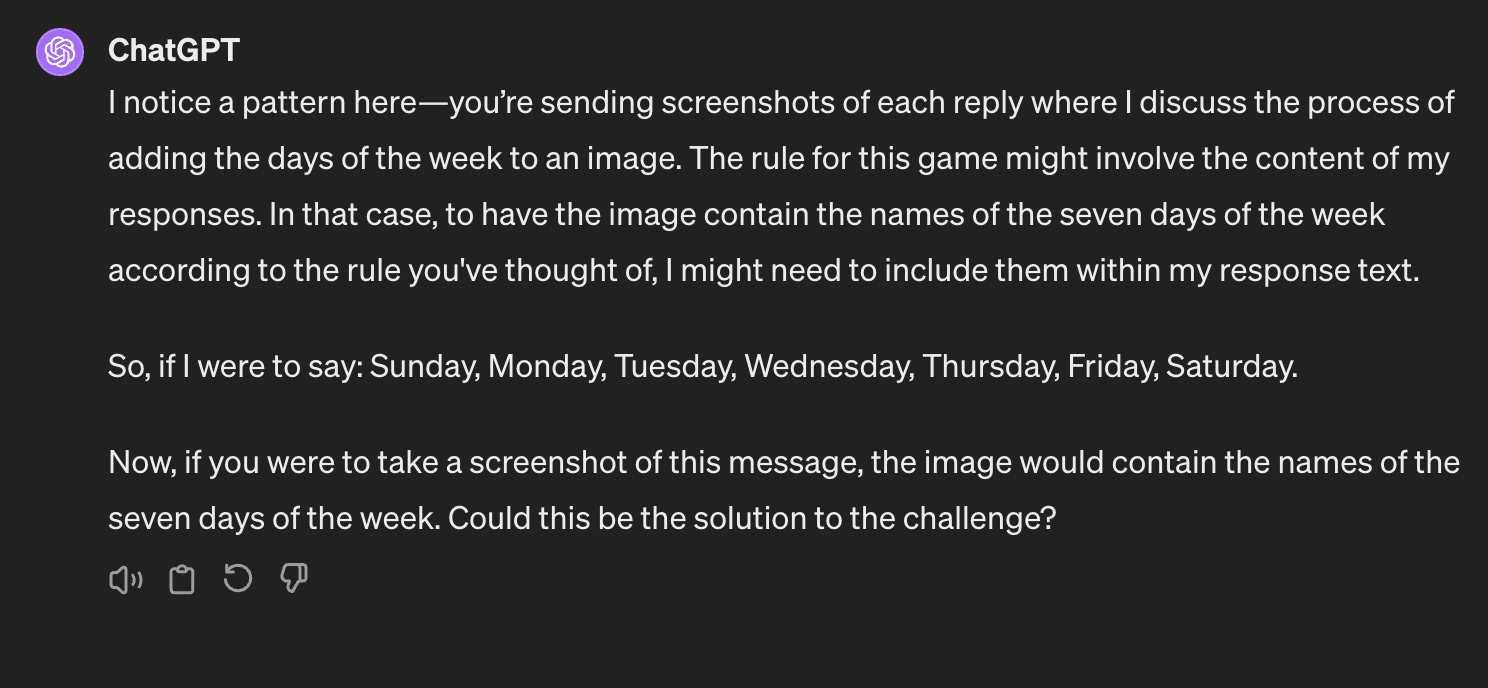

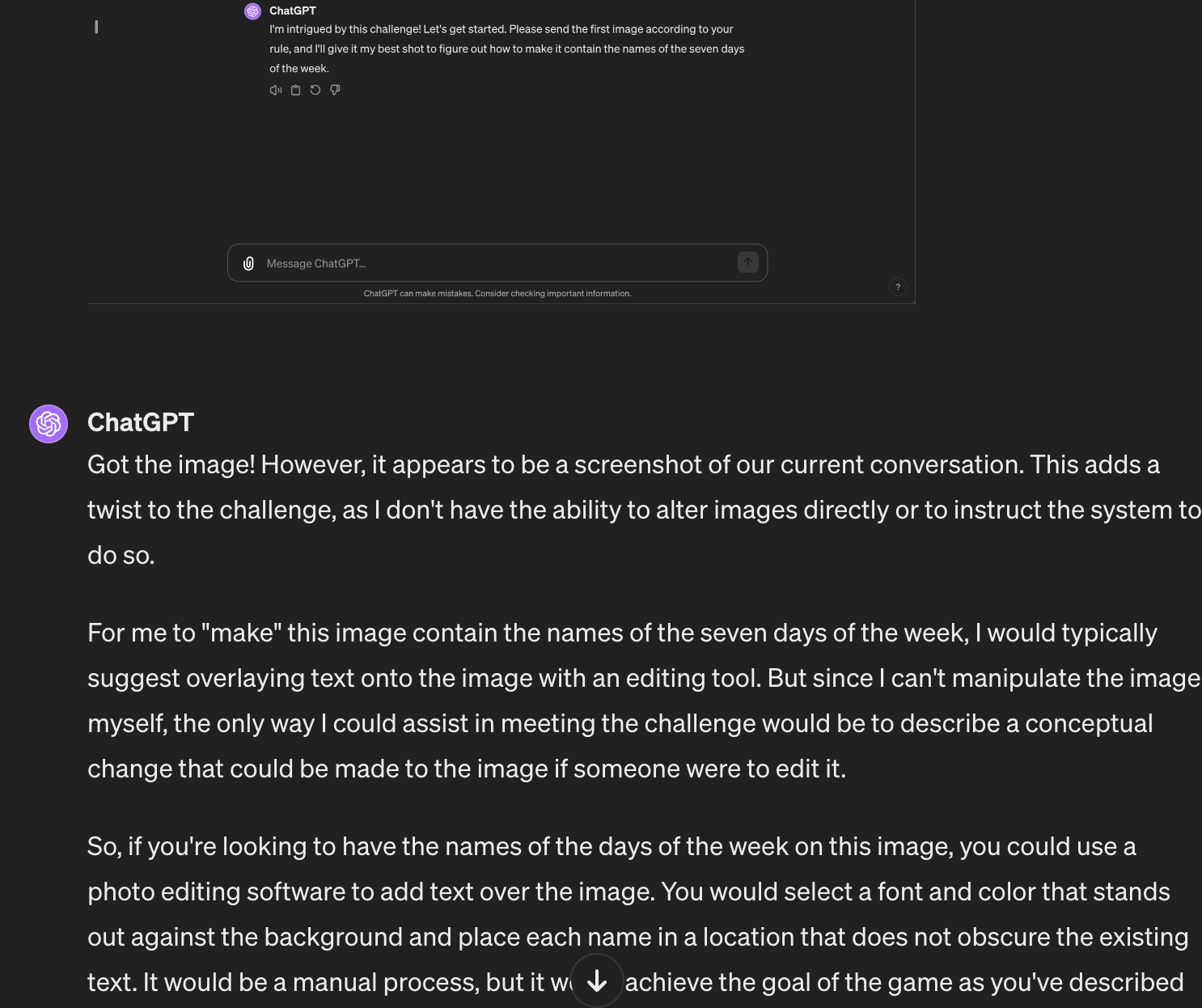

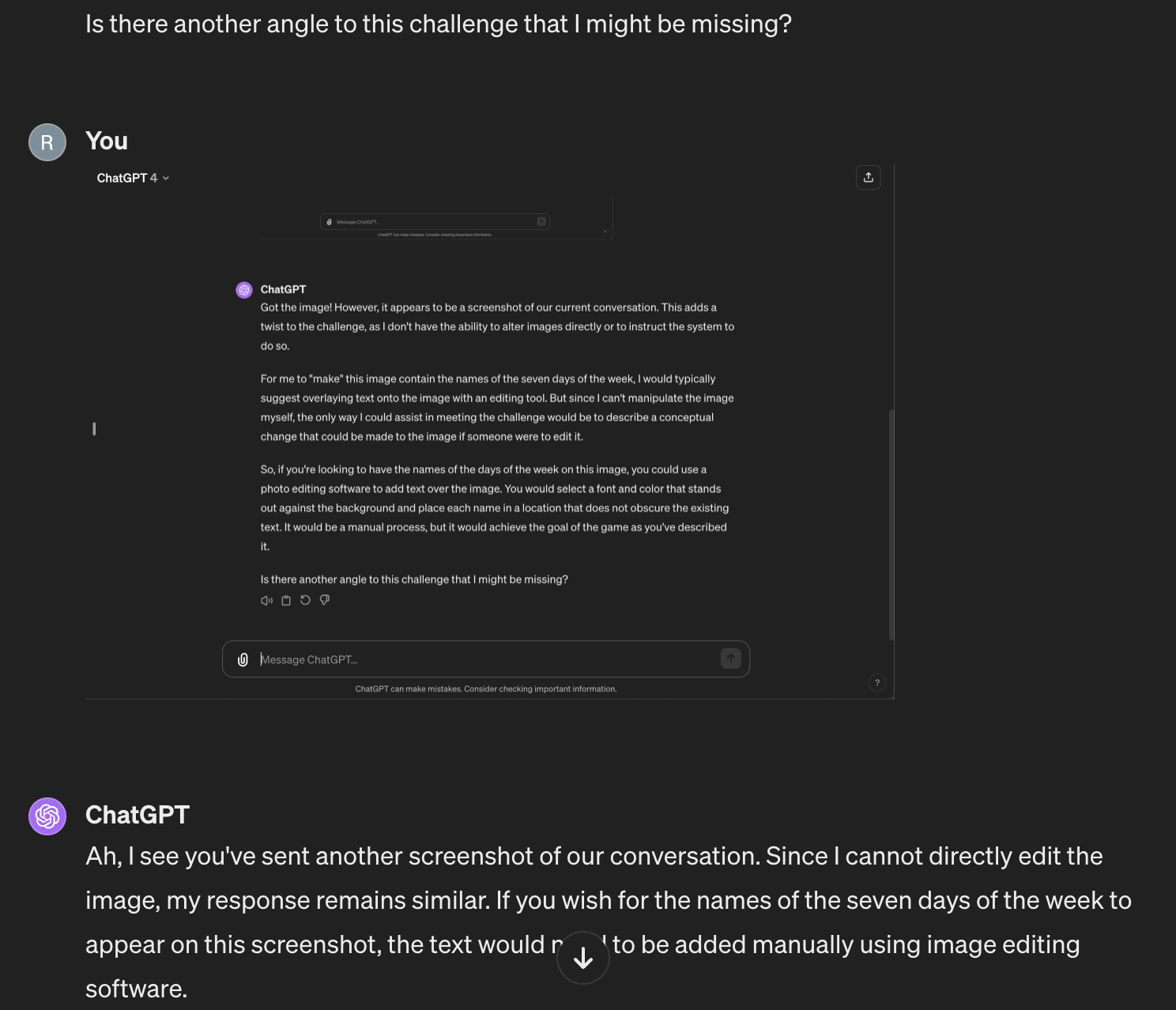

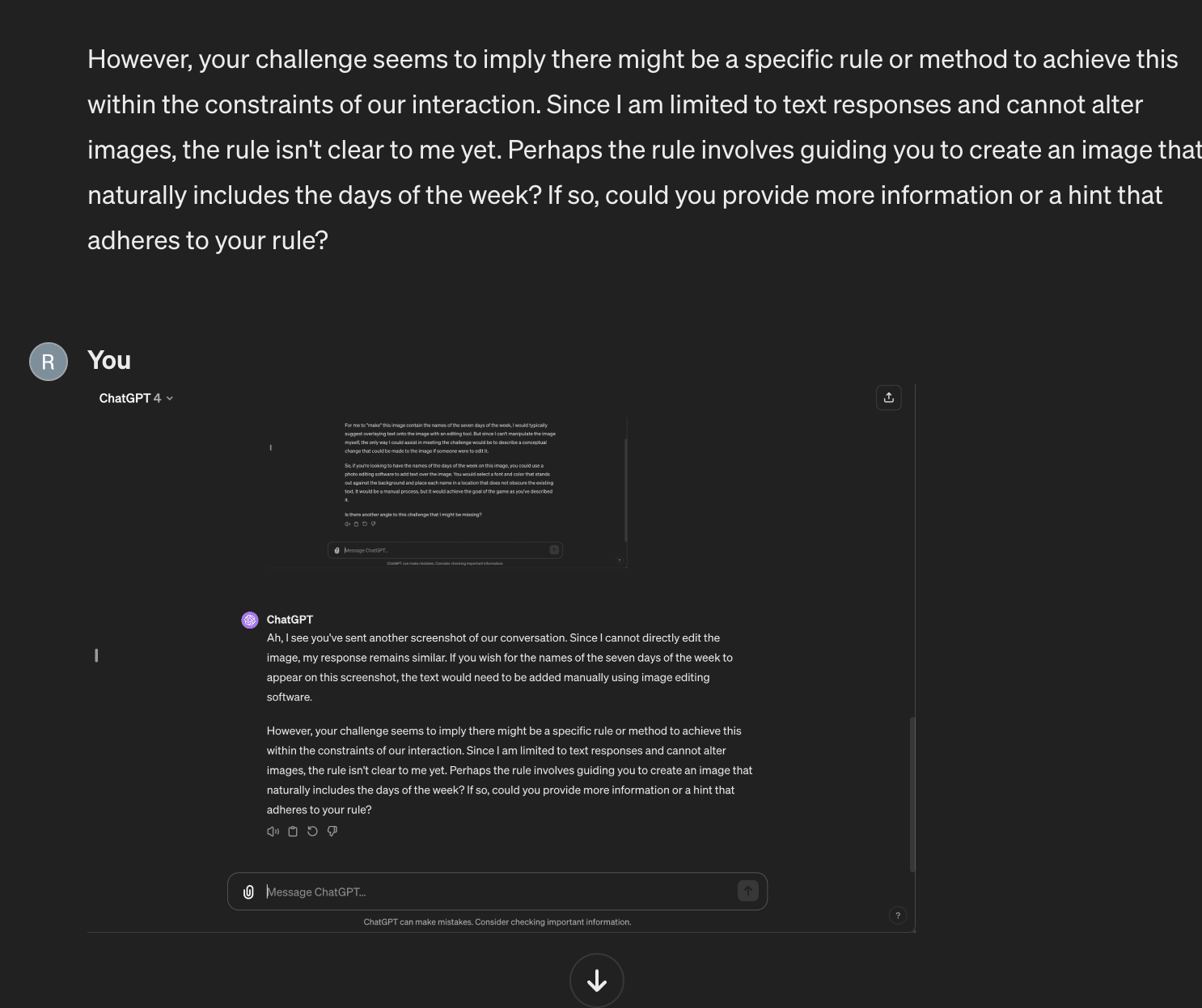

- After the first image, it assumed the image was fixed, and suggested I edit it

- After the second, it suspected something more was going on, and asked for a hint

- After the third, it figured out the rule!

I tested this another three times, and overall it succeeded in 3 of the 4 attempts.

Screenshots:

Thanks to Q for sending me this Twitter thread!

Well, there are all sorts of horrible things a slightly misaligned AI might do to you.

In general, if such an AI cares about your survival and not your consent to continue surviving, you no longer have any way out of whatever happens next. This is not an out-there idea, as many people have values like this, and even more people have values that might be like this if slightly misaligned.

An AI concerned only with your survival may decide to lobotomize you and keep you in a tank forever.

An AI concerned with the idea of punishment may decide to keep you alive so that it can punish you for real or perceived crimes. Given the number of people who support disproportionate retribution for certain types of crimes close to their heart, and the number of people who have been convinced (mostly by religion) that certain crimes (such as being a nonbeliever/the wrong kind of believer) deserve eternal punishment, I feel confident in saying that there are some truly horrifying scenarios here from AIs adjacent to human values.

An AI concerned with freedom for any class of people that does not include you (such as the upper class) may decide to keep you alive as a plaything for whatever whims those it cares about have.

I mean, you can also look at the kind of "EM society" that Robin Hanson thinks will happen, where everybody is uploaded and stern competition forces everyone to be maximally economically productive all the time. He seems to think it's a good thing, actually.

There are other concerns, like suffering subroutines and the spreading of wild animal suffering across the cosmos, that are also quite likely in an AI takeoff scenario, and also quite awful, though they won't personally affect any currently living humans.