Abstract

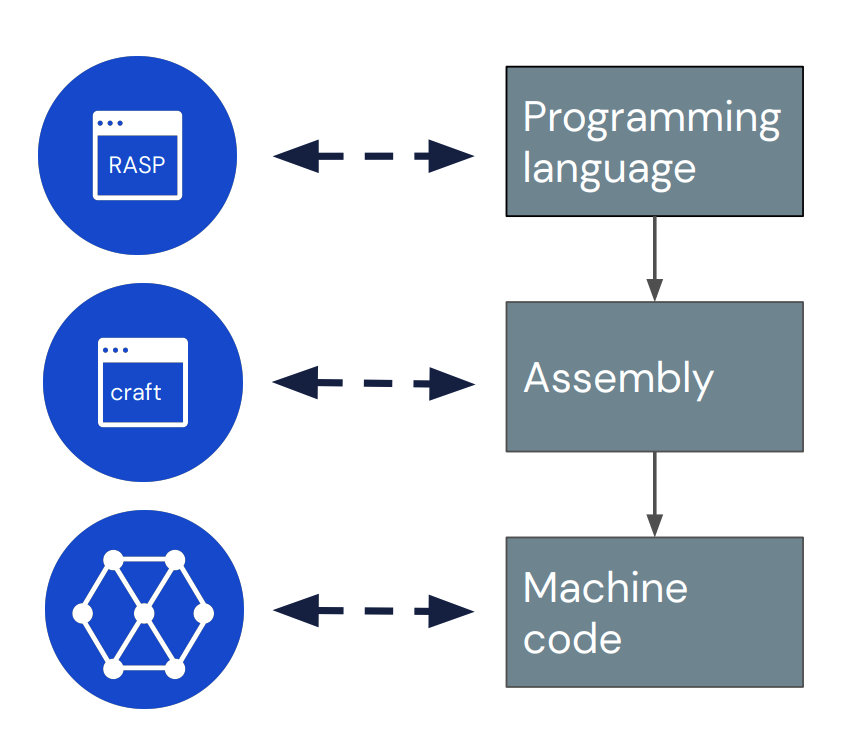

Interpretability research aims to build tools for understanding machine learning (ML) models. However, such tools are inherently hard to evaluate because we do not have ground truth information about how ML models actually work. In this work, we propose to build transformer models manually as a testbed for interpretability research. We introduce Tracr, a "compiler" for translating human-readable programs into weights of a transformer model. Tracr takes code written in RASP, a domain-specific language (Weiss et al. 2021), and translates it into weights for a standard, decoder-only, GPT-like transformer architecture. We use Tracr to create a range of ground truth transformers that implement programs including computing token frequencies, sorting, and Dyck-n parenthesis checking, among others. To enable the broader research community to explore and use compiled models, we provide an open-source implementation of Tracr at this https URL.

(The quote refers to the usage of binary attention patterns in general, so I'm not sure why you're quoting it)

I obv agree that if you take the softmax over {0, 1000, 2000}, you will get 0 and 1 entries.

iiuc, the statement in the tracr paper is not that you can't have attention patterns which implement this logical operation, but that you can't have a single head implementing this attention pattern (without exponential blowup)