
I buy your argument that power seeking is a convergent behavior. In fact, this is a key part of many canonical arguments for why an unaligned AGI is likely to kill us all.

But on the meta level, you seem to argue that this is incompatible with the orthogonality thesis? If so, you may be misunderstanding the thesis: the ability of an AGI to have arbitrary utility functions is orthogonal (pun intended) to what behaviors are likely to result from those utility functions. The former is what the orthogonality thesis claims, but your argument is about the latter.
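To make the distinction concrete, here is a toy sketch. It is purely illustrative and not taken from the thesis or the post: the planner `plan`, its `depth` parameter (standing in for capability), and the two utility functions are all hypothetical names. The point is only that capability and goal are separate inputs, while the resulting behavior is a separate thing you observe afterwards.

```python
from itertools import product

def plan(utility, depth, actions=(-1, 0, 1), start=0):
    """Brute-force planner (hypothetical): try every action sequence of
    length `depth` and return the first one whose final state has the
    highest `utility`."""
    best_seq, best_val = None, float("-inf")
    for seq in product(actions, repeat=depth):
        state = start + sum(seq)   # final state reached by this sequence
        val = utility(state)
        if val > best_val:         # strict '>' keeps the first best sequence
            best_seq, best_val = seq, val
    return best_seq

# Two unrelated goals (hypothetical utility functions).
def maximize(state):
    return state

def stay_near_two(state):
    return -abs(state - 2)

# Any capability level can be paired with any goal.
for depth in (1, 3):
    for goal in (maximize, stay_near_two):
        print(depth, goal.__name__, plan(goal, depth))
```

Every (depth, goal) pair runs, which is the analogue of the orthogonality claim. Whether the plans that come out tend to look alike across goals is a different question, and that is the one your argument addresses.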

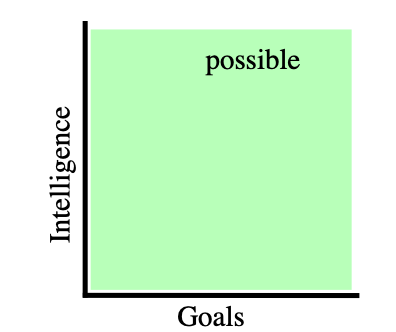

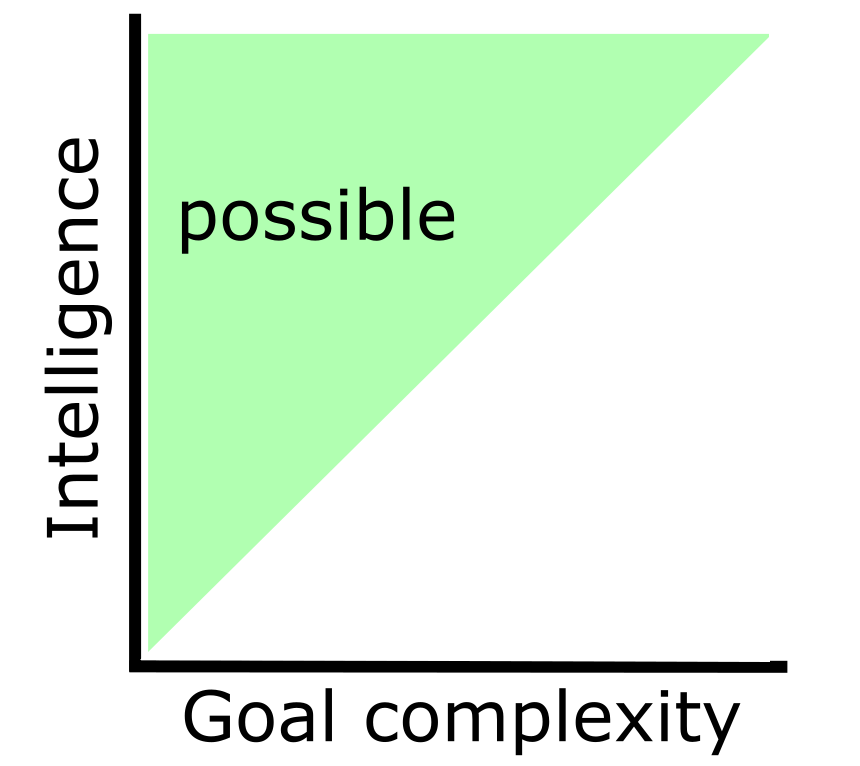

The Orthogonality Thesis asserts that there can exist arbitrarily intelligent agents pursuing any kind of goal.

It basically says that intelligence and goals are independent.

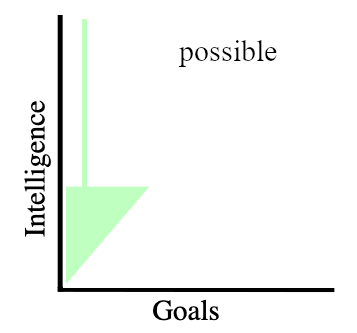

Images from A caveat to the Orthogonality Thesis.

Whereas I claim that any intelligence capable of understanding "I don't know what I don't know" can only seek power (alignment is impossible).

the ability of an AGI to have arbitrary utility functions is orthogonal (pun intended) to what behaviors are likely to result from those utility functions.

As I understand you s...

Quote from Orthogonality Thesis:

I have tried to tell you a few times already that the Orthogonality Thesis is wrong. But I've been misunderstood and downvoted every time. What would you consider a solid proof?

My claim: all intelligent agents converge to endless power seeking.

My proof:

It seems that many of you cannot grasp the 3rd line. Some of you argue that an agent cannot care if it does not know. There is no reason to assume that.

What happened to the given utility function? It became irrelevant. This is similar to instinct vs. intelligence: intelligence overrides instincts.