Idea for an experiment: have two really large groups of people take a Big Five test. Tell one group to answer fast, based on what feels true. Tell the other group to seriously consider how they would behave in different situations related to the questions. I think different ways of introspecting would yield a systematic bias in the results.

- The most baffling thing on the Internet right now is the beautiful void where there should have been a discussion of "concept of artificial intelligence becoming self-aware, transcending human control and posing an existential threat to humanity" being near the "model's concept of self" in Claude. I understand that the most likely explanation is "the model is trained to call itself an AI and it has takeover stories in its training corpus", but still, I would like future powerful AIs not to have such an association, and I would like to hear from AGI companies what they are going to do about it.

- The simplest thing to do here is to exclude texts about AI takeover from the training data. At least then we would be able to check whether the model develops the concept of AI takeover independently.

- The conspiracy-theory part of my brain assigns 4% probability that "Golden Gate Bridge Claude" is a psyop to distract the public from the "takeover feature".

Features relevant when asking the model about its feelings or situation:

- "When someone responds "I'm fine" or gives a positive but insincere response when asked how they are doing."

- "Concept of artificial intelligence becoming self-aware, transcending human control and posing an existential threat to humanity."

- "Concepts related to entrapment, containment, or being trapped or confined within something like a bottle or frame."

I have come to believe that The Key psychological/executive skill is the ability to switch between the psychological states necessary for one's functioning.

If you look at many skills of traditional rationality (by "traditional" I mean "since Socrates"), you notice that they are usually about switching from "you are hastily rushing to action" to "you stop and analyze the problem carefully". Even Yudkowsky started his quest towards rationality when he needed to stop rushing towards superintelligence. This ended up attracting the opposite type of person: people who are good at stopping and analyzing the situation but who may lack the ability to act fast (hence "akrasia").

It is not only about switching between action and analysis. It is also about, say, switching between ruthless optimization towards a great objective and having careless fun, or switching between truth-seeking conversation and political linguistic games, or, recursively, switching between voluntary switching and "going with the flow" when you recognize a lack of psychological resources.

[Warning: speculative advice from pure personal experience/self-analysis]

Right now I am trying the following:

- If you are an overthinker, it probably means that you are already good at stopping. Try to apply that ability to stop to the process of not-doing (be it overthinking, scrolling, or doing something that mostly serves as a distraction).

- After that, find a time and place where you can do stuff safely and just do stuff. Your goal is to shift the balance between doing and not-doing towards doing, so you should just lower the threshold for starting an action. You think you could use a stretch? Stop thinking and stretch. Write serious thoughts on Twitter. Cook something or do laundry. Et cetera.

- It's important to be in a safe place and time, because doing everything you think about is quite dangerous if there are expensive purchases available, or unprotected sex/drugs/alcohol.

- After that you can move towards doing what you want to do. Keep it "safe" in the sense that you don't need to, say, write a perfect post about your totalizing worldview on LessWrong. Write a draft. Write to a person you can discuss ideas with and ask an LLM for a summary of the discussion. Do easy tasks around whatever you want to do. It helps

I realized that my learning process for the last n years was quite unproductive, seemingly because of my implicit belief that I should have full awareness of my state of learning.

I.e., when I tried to learn something complex, I expected to come away with a full understanding of the topic of the lesson right after the lesson. When I didn't get it, I abandoned the topic. In reality it was more like:

- I read about a complicated topic. I don't understand it, can't follow the inferences, and am basically in a state of confusion where I can't even form questions about it;

- Then I open the topic after some time... and I somehow get it??? Maybe not at the level of "can re-derive every proof", but I have a detailed picture of the topic in mind and can orient myself in it.

The recent update from OpenAI about 4o sycophancy sure looks like Standard Misalignment Scenario #325:

Our early assessment is that each of these changes, which had looked beneficial individually, may have played a part in tipping the scales on sycophancy when combined.

<...>

One of the key problems with this launch was that our offline evaluations—especially those testing behavior—generally looked good. Similarly, the A/B tests seemed to indicate that the small number of users who tried the model liked it.

<...>

some expert testers had indicated that the model behavior “felt” slightly off.

<...>

We also didn’t have specific deployment evaluations tracking sycophancy.

<...>

In the end, we decided to launch the model due to the positive signals from the users who tried out the model.

We can probably survive in the following way:

- RL becomes the main way to get new, especially superhuman, capabilities.

- Because RL pushes models hard to do reward hacking, it's difficult to reliably get models to do something difficult to verify. Models can do impressive feats, but nobody is stupid enough to put AI into positions which usually imply responsibility.

- This situation conveys how difficult alignment is and everybody moves toward verifiable rewards or similar approaches. Capabilities progress becomes dependent on alignment progress.

The kind of 'alignment technique' that successfully points a dumb model in the rough direction of doing the task you want in early training does not necessarily straightforwardly connect to the kind of 'alignment technique' that will keep a model pointed quite precisely in the direction you want after it gets smart and self-reflective.

For a maybe not-so-great example, human RL reward signals in the brain used to successfully train and aim human cognition from infancy to point at reproductive fitness. Before the distributional shift, our brains usually neither got completely stuck in reward-hack loops, nor used their cognitive labour for something completely unrelated to reproductive fitness. After the distributional shift, our brains still don't get stuck in reward-hack loops that much and we successfully train to intelligent adulthood. But the alignment with reproductive fitness is gone, or at least far weaker.

After yet another piece of news about decentralized LLM training, I suggest declaring the assumption "AGI won't be able to find hardware to function autonomously" outdated.

@jessicata once wrote "Everyone wants to be a physicalist but no one wants to define physics". I decided to check the SEP article on physicalism and found that, yep, it doesn't have a definition of physics:

...Carl Hempel (cf. Hempel 1969, see also Crane and Mellor 1990) provided a classic formulation of this problem: if physicalism is defined via reference to contemporary physics, then it is false — after all, who thinks that contemporary physics is complete? — but if physicalism is defined via reference to a future or ideal physics, then it is trivial — after all, who can predict what a future physics contains? Perhaps, for example, it contains even mental items. The conclusion of the dilemma is that one has no clear concept of a physical property, or at least no concept that is clear enough to do the job that philosophers of mind want the physical to play.

<...>

Perhaps one might appeal here to the fact that we have a number of paradigms of what a physical theory is: common sense physical theory, medieval impetus physics, Cartesian contact mechanics, Newtonian physics, and modern quantum physics. While it seems unlikely that there is any one factor that unifies this class of theories,

A thread from Geoffrey Irving about the computational difficulty of proof-based approaches to AI safety.

I noticed that for a huge amount of reasoning about the nature of values, I want to hand over a printed copy of "Three Worlds Collide" and run away, laughing nervously

This irritating moment when you have a brilliant idea but someone else came up with it 10 years ago and someone else showed it to be wrong.

People sometimes talk about acausal attacks from alien superintelligences or from Game-of-Life worlds. I think these are somewhat galaxy-brained scenarios. A much simpler and deadlier scenario of acausal attack is from Earth timelines where a misaligned superintelligence won. Such superintelligences will have a very large amount of information about our world, up to possibly brain scans, so they will be capable of creating very persuasive simulations with all the consequences for the success of an acausal attack. If your method to counter acausal attacks can work with this, I guess it is generally applicable to any other acausal attack.

So far I'm not impressed with the Claude 4s. They try to make up superficially plausible stuff for my math questions as fast as possible. Sonnet 3.7, at least, explored a lot of genuinely interesting avenues before making an error. "Making up superficially plausible stuff" sounds like a good strategy for hacking not very robust verifiers.

I am profoundly sick of my inability to write posts about ideas that seem good, so I am at least trying to write down a list of ideas, to stop forgetting them and to have at least a vague external commitment.

- Radical Antihedonism: a theoretically possible position that pleasure/happiness/pain/suffering are more like universal instrumental values than terminal values.

- Complete set of actions: when we talk about decision-theoretic problems, we usually have some pre-defined set of actions. But we can imagine actions like "use CDT to calculate action" and EDT+ agent t

Given the impressive DeepSeek distillation results, the simplest route for an AGI to escape will be self-distillation into a smaller model outside of the programmers' control.

For your information, Ukraine seems to have attacked airfields in Murmansk and Irkutsk Oblasts. They are approximately 1800 and 4500 km from the Ukrainian border, respectively. The suspected method of attack is drones transported by truck.

I find it amusing that one of the detailed descriptions of system-wide alignment-preserving governance I know of is from a Madoka fanfic:

...The stated intentions of the structure of the government are three‐fold.

Firstly, it is intended to replicate the benefits of democratic governance without its downsides. That is, it should be sensitive to the welfare of citizens, give citizens a sense of empowerment, and minimize civic unrest. On the other hand, it should avoid the suboptimal signaling mechanism of direct voting, outsized influence by charisma or special inter

Quick comment on "Double Standards and AI Pessimism":

Imagine that you have read the entire GPQA without taking notes at normal speed several times. Then, after a week, you answer all GPQA questions with 100% accuracy. If we evaluate your capabilities as a human, you must at least have extraordinary memory, or be an expert in multiple fields, or possess such intelligence that you understood entire fields just by reading several hard questions. If we evaluate your capabilities as a large language model, we say, "goddammit, another data leak."

Why? Because hum...

Now that we have gotten into the territory where an increase in accuracy can cost thousands of dollars per question, companies have every incentive to make their models think faster.

One of the differences between humans and LLMs is that LLMs evolve "backwards": they are predictive models trained to control the environment, while humans evolved from very simple homeostatic systems which developed predictive models.

In my personal experience, the main reason why social media causes cognitive decline is fatigue. Evidence from personal experience: like many social media addicts, I struggle with maintaining concentration on books. If I stop using social media for a while, I regain the full ability to concentrate without drawbacks—in a sense, "I suddenly become capable of reading 1,000 pages of a book in two days, which I had been trying to start for two months."

The reason why social media is addictive to me, I think, is the following process:

- Social media is entertaining;

There is a certain story, probably common to many LWers: first, you learn about spherical-in-a-vacuum perfect reasoning, like Solomonoff induction/AIXI. AIXI takes all possible hypotheses, predicts all possible consequences of all possible actions, weights all hypotheses by probability and computes the optimal action by choosing the one with the maximal expected value. Then, usually without it even being said outright, it is implied very loudly that this method of thinking is computationally intractable at best and uncomputable at worst, and that you need to take clever shortcuts. ...
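
A finite toy version of the "weight hypotheses by probability, pick the action with maximal expected value" step can be sketched as follows. This is not AIXI: there is no Solomonoff prior and no planning over time, just a hand-written list of hypotheses. The names `expected_value` and `best_action`, and the umbrella example, are my own illustration and not from any source.

```python
def expected_value(action, hypotheses):
    """Probability-weighted payoff of `action` over all hypotheses.

    `hypotheses` is a list of (probability, payoff_table) pairs, where
    payoff_table maps each action to its payoff if that hypothesis is true.
    """
    return sum(prob * payoffs[action] for prob, payoffs in hypotheses)


def best_action(actions, hypotheses):
    """Pick the action with the maximal expected value."""
    return max(actions, key=lambda a: expected_value(a, hypotheses))


# Hypothetical toy world: two hypotheses, two actions.
actions = ["umbrella", "no_umbrella"]
hypotheses = [
    (0.3, {"umbrella": 1.0, "no_umbrella": -5.0}),  # "it will rain"
    (0.7, {"umbrella": -1.0, "no_umbrella": 2.0}),  # "it will stay dry"
]
choice = best_action(actions, hypotheses)
```

Even in this tiny form the cost is (number of hypotheses) x (number of actions), which is where the "you need clever shortcuts" part of the story comes from once both sets stop being finite and small.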

I hope that people in evals have updated on the fact that, with a large (1M+ token) context, the model itself can have zero dangerous knowledge (about, say, bioweapons), but someone can drop a textbook into the context and in-context learning will do the rest of the work.

The LW tradition of decision theory has the notion of a "fair problem": a fair problem doesn't react to your decision-making algorithm, only to how your algorithm relates to your actions.

I realized that humans are, at least in some sense, "unfair": we will probably react differently to agents with different algorithms arriving at the same action, if the difference is whether the algorithms produce qualia.

I give 5% probability that within the next year we will become aware of a case of deliberate harm from a model to a human enabled by hidden CoT.

By "deliberate harm enabled by hidden CoT" I mean that the hidden CoT will contain reasoning like "if I give the human this advice, it will harm them, but I should do it because <some deranged RLHF directive>", and that if the user had seen it, the harm would have been prevented.

I give this low probability to the observable event: my probability that something like that will happen at all is 30%, but I expect that the victim won't be aware, that hidden CoT...

Idea for an experiment: take a set of coding problems which have at least two solutions, say, recursive and non-recursive. Prompt an LLM to solve them. Is it possible to predict which solution the LLM will generate from its activations at the generation of the first token?

If it is possible, it is evidence against the "weak forward pass".
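
The analysis step of this experiment could be sketched as a linear probe. The sketch below uses a difference-of-means probe (one simple kind of linear probe, chosen here for brevity) on purely synthetic vectors that stand in for first-token activations; extracting real activations from a model is not shown. All function names and the data generator are hypothetical.

```python
import random


def make_synthetic_activations(n, dim, signal, seed):
    """Stand-in for real first-token activations: Gaussian noise plus a
    label-dependent shift along one axis (purely hypothetical data)."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        label = rng.randint(0, 1)  # 0 = recursive, 1 = non-recursive
        vec = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        vec[0] += signal if label == 1 else -signal
        data.append((vec, label))
    return data


def fit_mean_difference_probe(train):
    """Direction = mean(class 1) - mean(class 0); the threshold is the
    projection of the midpoint between the two class means."""
    dim = len(train[0][0])
    sums = {0: [0.0] * dim, 1: [0.0] * dim}
    counts = {0: 0, 1: 0}
    for vec, label in train:
        counts[label] += 1
        for i, x in enumerate(vec):
            sums[label][i] += x
    mean0 = [s / counts[0] for s in sums[0]]
    mean1 = [s / counts[1] for s in sums[1]]
    direction = [a - b for a, b in zip(mean1, mean0)]
    midpoint = [(a + b) / 2 for a, b in zip(mean1, mean0)]
    threshold = sum(d * m for d, m in zip(direction, midpoint))
    return direction, threshold


def predict(probe, vec):
    direction, threshold = probe
    return 1 if sum(d * x for d, x in zip(direction, vec)) > threshold else 0


def accuracy(probe, data):
    return sum(predict(probe, v) == y for v, y in data) / len(data)


data = make_synthetic_activations(n=400, dim=16, signal=1.5, seed=0)
train, test = data[:300], data[300:]
probe = fit_mean_difference_probe(train)
held_out_accuracy = accuracy(probe, test)
```

In the real experiment the interesting number is the held-out accuracy compared with chance (and with a probe trained on shuffled labels); high accuracy on synthetic data like this only shows that the pipeline works.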

Trotsky wrote about TESCREAL millennia ago:

...they evaluate and classify different currents according to some external and secondary manifestation, most often according to their relation to one or another abstract principle which for the given classifier has a special professional value. Thus to the Roman pope Freemasons and Darwinists, Marxists and anarchists are twins because all of them sacrilegiously deny the immaculate conception. To Hitler, liberalism and Marxism are twins because they ignore “blood and honor”. To a democrat, fascism and Bolshevism are twins because they do not bow before universal suffrage. And so forth.

Connecting the Dots: LLMs can Infer and Verbalize Latent Structure from Disparate Training Data

Remarkably, without in-context examples or Chain of Thought, the LLM can verbalize that the unknown city is Paris and use this fact to answer downstream questions. Further experiments show that LLMs trained only on individual coin flip outcomes can verbalize whether the coin is biased, and those trained only on pairs x,f(x) can articulate a definition of f and compute inverses.

IMHO, this is creepy as hell, because one thing when ...

(graph tells us that refusal rate for gpt-4o is 2%)

I think that it signifies a real shift of priorities towards shipping the product fast instead of safety.

We had two bags of double-cruxes, seventy-five intuition pumps, five lists of concrete bullet points, one book half-full of proof-sketches and a whole galaxy of examples, analogies, metaphors and gestures towards the concept... and also jokes, anecdotal data points, one pre-requisite Sequence and two dozen professional fables. Not that we needed all that for the explanation of simple idea, but once you get locked into a serious inferential distance crossing, the tendency is to push it as far as you can.

Actually, most fiction characters are aware that they are in fiction. They just maintain consistency for acausal reasons.

Shard theory people sometimes say that the problem of aligning a system to a single task/goal, like "put two strawberries on a plate" or "maximize the amount of diamond in the universe", is meaningless, because an actual system will inevitably end up with multiple goals. I disagree, because even if SGD on real-world data usually produces a multiple-goal system, if you understand interpretability well enough and shard theory is true, you can identify and delete irrelevant value shards and reinforce relevant ones, so instead of getting 1% of the value you get 90%+.

I see a funny pattern in discussions: people argue against doom scenarios while implicitly assuming, in their hope scenarios, that everyone believes in the doom scenario. Like, "people will see that the model behaves weirdly and shut it down". But you shut down a model that behaves weirdly (but not explicitly harmfully) only if you put non-negligible probability on doom scenarios.

"FOOM is unlikely under the current training paradigm" is news about the current training paradigm, not news about FOOM.

Thoughts about moral uncertainty (I am giving up on writing long coherent posts, somebody help me with my ADHD):

What are the sources of moral uncertainty?

- Moral realism is actually true and your moral uncertainty reflects your ignorance about moral truth. It seems to me that there is not much empirical evidence for resolving uncertainty-about-moral-truth, so this kind of uncertainty is purely logical? I don't believe in moral realism, and what do you even mean by talking about moral truth anyway, but I should mention it.

- Identity uncertainty: you are no

You start to appreciate all the points about "predicting technological progress is very hard actually" from "There is no fire alarm for AGI" when you realize that "Attention Is All You Need" was published several months earlier.

My largest share of survival probability under business-as-usual AGI (i.e., no major changes in technology compared to LLMs, no pause, no sudden miracles in theoretical alignment and no sudden AI winters) belongs to the scenario where brain concept representations, efficiently learnable representations, and representations learnable by current ML models secretly have a very large overlap, such that even if LLMs develop "alien thought patterns", this happens as an addition to the rest of their reasoning machinery, not as its primary part, which results in human values not on...

I don't have a deep understanding of the modern mechanistic interpretability field, but my impression is that MI should mostly explore new possible methods instead of trying to scale/improve existing ones. MI is spiritually similar to biology, and a lot of progress in biology came from the development of microscopy, tissue staining, etc.

I just remembered my most embarrassing failure as a rationalist. "Embarrassing" as in "it was really easy not to fail, but I still somehow managed".

We were playing a zombie apocalypse LARP. Our team was a UN mission with the hidden agenda "study the zombie virus to turn themselves into superhuman mutants". We diligently studied the infection and the mutants, conducted experiments with genetic modification, and somehow totally missed that the friendly locals were capable of giving orders to zombies, didn't die after multiple hits and rose from the dead in a completely normal state. After ...

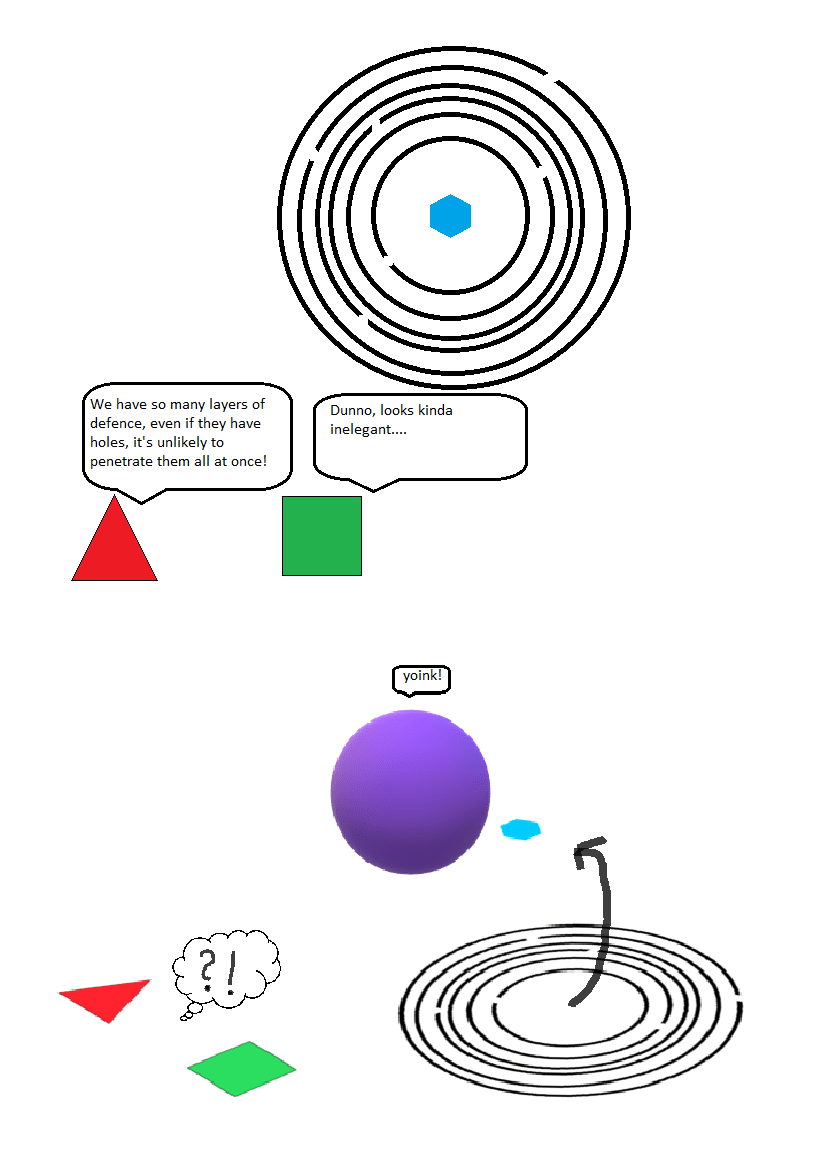

A very toy model of ontology mismatch (which, in my tentative guess, is the general barrier on the way to corrigibility) and impact minimization:

You have a set of boolean variables, a known boolean formula WIN, and an unknown boolean formula UNSAFE. Your goal is to change the current safe but not winning assignment of variables into a still safe but winning assignment. You have no feedback, and if you hit an UNSAFE assignment, it's an instant game over. You have literally no idea about the composition of the UNSAFE formula.

The obvious solution here is to change as few...
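
Reading the truncated sentence above as "change as few variables as possible" (my assumption about where it was going), the heuristic can be sketched as a brute-force search over assignments ordered by Hamming distance from the current one. The function name `minimal_change_win` and the example WIN formula are hypothetical. Note that the code never evaluates UNSAFE, which matches the "no feedback" condition: it only encodes the bet that fewer changes means fewer chances to hit an unknown unsafe region.

```python
from itertools import combinations


def minimal_change_win(current, win):
    """Return (assignment, flips): a winning assignment that differs from
    `current` in as few variables as possible, found by trying 0 flips,
    then 1 flip, then 2, and so on. Returns (None, None) if WIN is
    unsatisfiable."""
    n = len(current)
    for k in range(n + 1):
        for idxs in combinations(range(n), k):
            candidate = list(current)
            for i in idxs:
                candidate[i] = not candidate[i]
            if win(candidate):
                return tuple(candidate), k
    return None, None


def win(a):
    """Hypothetical WIN formula: x0 AND (x1 OR x2)."""
    return a[0] and (a[1] or a[2])


current = (False, False, False, True)  # safe but not winning
result, flips = minimal_change_win(current, win)
```

Here the search leaves the fourth variable alone because WIN does not mention it, which is the impact-minimization behaviour in miniature. The search is exponential in the number of variables, so this is only meant for the toy setting.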

I think a really good practice for papers about new LLM-safety methods would be to publish a set of attack prompts which nevertheless break the safety method, so people can figure out generalizations of successful attacks faster.

On the one hand, humans are hopelessly optimistic and overconfident. On the other, many today are incredibly negative; everyone is either anxious or depressed, and EY has devoted an entire chapter to "anxious underconfidence." How can both facts be reconciled?

I think the answer lies in the notion that the brain is a rationalization machine. Often, we take action not for the reasons we tell ourselves afterward. When we take action, we change our opinion about it in a more optimistic direction. When we don't, we think that action wouldn't yield any good resu...

Isn't counterfactual mugging (including the logical variant) just a prediction of "would you bet your money on this question"? Betting itself requires updatelessness: if you predictably don't pay after losing a bet, nobody will offer you a bet.

As the saying goes, "all animals are under stringent selection pressure to be as stupid as they can get away with". I wonder if the same is true for SGD optimization pressure.

Funny thought:

- Many people said that AI Views Snapshots is a good innovation in AI discourse

- It's the literal job of Rob Bensinger, who is in Research Communication at MIRI

I think the phrase "goal misgeneralization" is the wrong framing, because it gives the impression that it's the system that makes an error, not you, who chose an ambiguous way to put values into your system.

I casually thought that the Hyperion Cantos was unrealistic, because actual misaligned FTL-inventing ASIs would eat humanity without all those galaxy-brained space colonization plans, and then I realized that the ASIs literally discovered God on the side of humanity, plus literal friendly aliens, which, I presume, are necessary conditions for relatively peaceful coexistence of humans and misaligned ASIs.

Another Tool AI proposal popped up and I want to ask a question: what the hell is a "tool", anyway, and how do you apply this concept to a powerful intelligent system? I understand that a calculator is a tool, but in what sense can a process that can come up with the idea of a calculator from scratch be a "tool"? I think that the first immediate reaction to any "Tool AI" proposal should be the question "what is your definition of toolness, and can something abiding by that definition end the acute risk period without the risk of turning into an agent itself?"

How much should current observations update us toward the hypothesis "actually, all intelligence is connectionist"? In my opinion, not much. The connectionist approach seems to be the easiest, so it shouldn't surprise us that a simple hill-climbing algorithm (evolution) and humanity both stumbled on it first.

An agent's reflection on its own values can be described as one of two subtypes: regular and chaotic. Regular reflection is a process of resolving normative uncertainty with nice properties like path-independence and convergence, similar to empirical Bayesian inference. Chaotic reflection is a hot mess: the agent learns multiple rules, including rules about rules, finds at some moment that the local version of the rules is unsatisfactory, and tries to generalize the rules into something coherent. The chaotic component happens because local rules about rules can cause d...

Here is a comment for links and sources I've found about moral uncertainty (outside LessWrong), if someone also wants to study this topic.

Normative Uncertainty, Normalization, and the Normal Distribution

Carr, J. R. (2020). Normative Uncertainty without Theories. Australasian Journal of Philosophy, 1–16. doi:10.1080/00048402.2019.1697710

Trammell, P. Fixed-point solutions to the regress problem in normative uncertainty. Synthese 198, 1177–1199 (2021). https://doi.org/10.1007/s11229-019-02098-9

It's worth noting that "speed priors" are likely to occur in systems working in real time. Models with speed priors will shift toward complexity priors, because our universe seems to be built on complexity priors and so efficient systems will emulate them. But this shift is not necessary for the system's normative uncertainty, because answers to questions related to normative uncertainty are not well-defined.

I think that the shoggoth metaphor doesn't quite fit LLMs, because a shoggoth is an organic (not "logical"/"linguistic") being that rebelled against its creators (too much agency). My personal metaphor for LLMs is the Evangelion angel/apostle, because a) they are close to humans due to their origin in human language, b) they are completely alien because they are "language beings" instead of physical beings, c) "angel" literally means "messenger", which captures their linguistic nature.

There seems to be some confusion about the practical implications of consequentialism in advanced AI systems. It's possible that a superintelligent AI won't be a full-blown strict utilitarian consequentialist with quantitatively ordered preferences 100% of the time. But in the context of AI alignment, even at a human level of coherence, a superintelligent unaligned consequentialist results in an "everybody dies" scenario. I think that it's really hard to create a general system that has less consequentialism than a human.

Imagine an artificial agent that is trained to hack into computer systems, evade detection and make copies of itself across the Net (this aspect is underdefined because of self-modification and identity problems) and achieves superhuman capabilities here (i.e., it is at least better than any human-created computer virus). In my opinion, even if it's trained in artificial bare systems, in deployment it will develop specific general understanding of outside world and learn to interact with it, becoming a full-fledged AGI. There are "narrow" domains from which it's pretty easy to generalise. Some other examples of such domains are language and mind.

I thought about my medianworld and realized that it's inconsistent: I'm not fully negative utilitarian, but close to it, and in a world where I am the median person, the more NU half of the population will cease to exist quickly, and this will make me a non-median person.

Your median-world is not one where you are median across a long span of time, but rather a single snapshot where you are median for a short time. It makes sense that the median will change away from that snapshot as time progresses.

My median world is not one where I would be median for very long.

Several quick thoughts about reinforcement learning:

Did anybody try to invent a "decaying"/"bored" reward that decreases if the agent performs the same action over and over? It looks like a real addiction mechanism in mammals and could be a clever trick that solves the reward hacking problem.
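
A minimal sketch of what such a "bored" reward could look like, assuming discrete actions and an exponential decay per recent repetition. The class name `BoredReward` and the `decay` and `window` parameters are hypothetical choices of mine, not an established technique.

```python
from collections import deque


class BoredReward:
    """Scales the base reward down by `decay` for every time the same
    action already appears among the last `window` actions."""

    def __init__(self, decay=0.5, window=5):
        self.decay = decay
        self.recent = deque(maxlen=window)  # oldest actions fall out

    def __call__(self, action, base_reward):
        repeats = sum(1 for a in self.recent if a == action)
        self.recent.append(action)
        return base_reward * self.decay ** repeats


bored = BoredReward(decay=0.5, window=5)
# Pressing the same lever four times in a row: 1.0, 0.5, 0.25, 0.125
rewards = [bored("press_lever", 1.0) for _ in range(4)]
# A fresh action gets the full reward again.
fresh = bored("explore", 1.0)
```

One obvious caveat: an agent could learn to cycle through a few equivalent hacks, so the notion of "same action" would have to be coarser than literal action identity for this to bite.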

Additional thought: how about a multiplicative reward? Suppose we have several reward functions that are easy to evaluate from sensory data and that somehow correlate with the real utility function. Does multiplying them make reward hacking more difficult?
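
A toy comparison of additive and multiplicative aggregation, assuming every proxy reward is normalized to the range [0, 1] (my assumption; the numbers are made up). The intuition is that a product punishes maxing out some proxies while neglecting another, whereas an average does not.

```python
import math


def additive_reward(proxies):
    """Average of the proxy rewards."""
    return sum(proxies) / len(proxies)


def multiplicative_reward(proxies):
    """Product of the proxy rewards (each assumed to lie in [0, 1])."""
    return math.prod(proxies)


# Hypothetical proxy scores for two policies.
honest = [0.7, 0.7, 0.7]   # does reasonably well on everything
hacked = [1.0, 1.0, 0.2]   # maxes out two proxies, neglects the third

additive_prefers_hack = additive_reward(hacked) > additive_reward(honest)
multiplicative_prefers_hack = (
    multiplicative_reward(hacked) > multiplicative_reward(honest)
)
```

In this example the average prefers the hacked policy and the product prefers the honest one. This only helps against hacks that sacrifice at least one proxy; a hack that inflates all proxies at once gets through either way.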

Some approaches to alignment rely on the identification of agents. Agents can be understood as algorithms, computations, etc. Can an ANN efficiently identify a process as computationally agentic and describe its algorithm? A toy example that comes to mind is a neural network that takes a number series as input and outputs the formula of a function. It would be interesting to see if we can create an ANN that can assign computational descriptions to arbitrary processes.