Ought and LessWrong are excited to launch an embedded interactive prediction feature. You can now embed binary questions into LessWrong posts and comments. Hover over the widget to see other people’s predictions, and click to add your own.

Try it out

How to use this

Create a question

- Go to elicit.org/binary and create your question by typing it into the field at the top

- Click on the question title, and click the copy button next to the title – it looks like this:

- Paste the URL into your LW post or comment. It'll look like this in the editor:

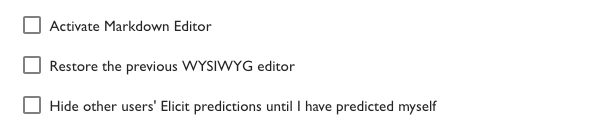

Troubleshooting: if the prediction box fails to appear and the link just shows up as text, go to your LW Settings, uncheck “Activate Markdown Editor”, and try again.

Make a prediction

- Click on the widget to add your own prediction

- Click on your prediction line again to delete it

Link your accounts

- Linking your LessWrong and Elicit accounts allows you to:

  - Filter for and browse all your LessWrong predictions on Elicit

  - Add notes to your LessWrong predictions on Elicit

  - See your calibration for your LessWrong predictions on Elicit

  - Predict on LessWrong questions in the Elicit app

- To link your accounts:

  - Make an Elicit account

  - Send me (amanda@ought.org) an email with your LessWrong username and your Elicit account email

Motivation

We hope embedded predictions can prompt readers and authors to:

- Actively engage with posts. By making predictions as they read, people have to stop and think periodically about how much they agree with the author.

- Distill claims. Integrating predictions challenges writers to think more concretely about their claims and how readers might disagree.

- Communicate uncertainty. Rather than just stating claims, writers can also communicate a confidence level.

- Collect predictions. As a reader, you can build up a personal database of predictions as you browse LessWrong.

- Get granular feedback. Writers can get feedback on their content at a more granular level than comments or upvotes.

By working with LessWrong on this, Ought hopes to make forecasting easier and more prevalent. As we learn more about how people think about the future, we can use Elicit to automate larger parts of the workflow and thought process until we end up with end-to-end automated reasoning that people endorse. Check out our blog post to see demos and more context.

Some examples of how to use this

- To make specific predictions, like in Zvi’s post on COVID predictions

- To express credences on claims like those in Daniel Kokotajlo’s soft takeoff post

- Beyond LessWrong – if you want to integrate this into your blog or have other ideas for places you’d want to use this, let us know!

You get Metaculus points by being active on Metaculus. People who have spent a lot of time on Metaculus have thought a lot about making predictions, and that's valuable, but it's not the same as the points being a measure of calibration.

With the exception of jimrandomh, all the people in the top 20 have more than 1000 predictions (jimrandomh has 800). I'm rank 87 at the moment with 195 predictions.

If you go on GJOpen you can see the calibration of any user and use it to judge how well they are calibrated. Metaculus doesn't make that information publicly available (you need to have level 6 and pay Tachyons to access the track record of another user; 50 Tachyons also feels too expensive to spend just for the sake of a discussion like this).