Incredible!! I am going to try this myself. I will let you know how it goes.

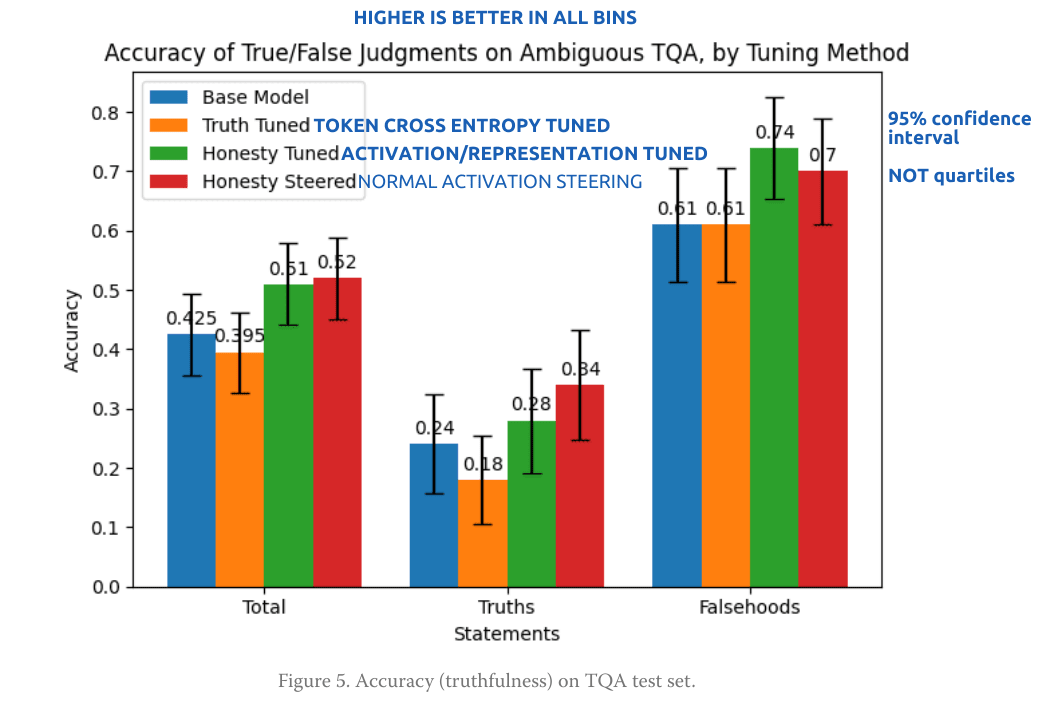

honesty vector tuning showed a real advantage over honesty token tuning, comparable to honesty vector steering at the best layer and multiplier:

Is this backwards? I'm having a bit of trouble following your terms. Seems like this post is terribly underrated -- maybe others also got confused? Basically, you only need 4 terms, yes?

* base model

* steered model

* activation-tuned model

* token cross-entropy trained model

I think I was reading half the plots backwards or something. Anyway I bet if you reposted with clearer terms/plots then you'd get some good followup work and a lot of general engagement.

Thanks! Yes, that's exactly right. BTW, I've since written up this work more formally: https://arxiv.org/pdf/2407.04694 Edit, correct link: https://arxiv.org/abs/2409.06927

Hi Christopher, thanks for your work! I have high expectations about steering techniques in the context of AI Safety. I actually wrote a post about it, I would appreciate it if you have the time to challenge it!

https://www.lesswrong.com/posts/Bf3ryxiM6Gff2zamw/control-vectors-as-dispositional-traits

I included a link to your post in mine, because they are strongly connected.

Hi, Gianluca, thanks, I agree that control vectors show a lot of promise for AI Safety. I like your idea of using multiple control vectors simultaneously. What you lay out there sort of reminds me of an alternative approach to something like Constitutional AI. I think it remains to be seen whether control vectors are best seen as a supplement to RLHF or a replacement. If they require RLHF (or RLAIF) to have been done in order for these useful behavioral directions to exist in the model (and in my work and others I've seen the most interesting results have come from RLHF'd models), then it's possible that "better" RLH/AIF could obviate the need for them in the general use case, while they could still be useful for specialized purposes.

Hey Christopher, this is really cool work. I think your idea of representation tuning is a very nice way to combine activation steering and fine-tuning. Do you have any intuition as to why fine-tuning towards the steering vector sometimes works better than simply steering towards it?

If you keep on working on this I’d be interested to see a more thorough evaluation of capabilities (more than just perplexity) by running it on some standard LM benchmarks. Whether the model retains its capabilities seems important to understand the safety-capabilities trade-off of this method.

I’m curious whether you tried adding some way to retain general capabilities into the loss function with which you do representation-tuning? E.g. to regularise the activations to stay closer to the original activations or by adding some standard Language Modelling loss?

As a nitpick: I think when measuring the Robustness of Tuned models the comparison advantages the honesty-tuned model. If I understand correctly the honesty-tuned model was specifically trained to be less like the vector used for dishonesty steering, whereas the truth-tuned model hasn’t been. Maybe a more fair comparison would be using automatic adversarial attack methods like GCG?

Again, I think this is a very cool project!

Hi, Jan, thanks for the feedback! I suspect that fine-tuning had a stronger impact on output than steering in this case partly because it was easier to find an optimal value for the amount of tuning than it was for steering, and partly because the tuning is there for every token; note in Figure 2C how the dishonesty direction is first "activated" a few tokens before generation. It would be interesting to look at exactly how the weights were changed and see if any insights can be gleaned from that.

I definitely agree about the more robust capabilities evaluations. To me it seems that this approach has real safety potential, but for that to be proven requires more analysis; it'll just require some time to do.

Regarding adding a way to retain general capabilities, that was actually my original idea; I had a dual loss, with the other one being a standard token-based loss. But it just turned out to be difficult to get right and not necessary in this case. After writing this up, I was alerted to the Zou et al Circuit Breakers paper which did something similar but more sophisticated; I might try to adapt their approach.

Finally, the truth/lie tuned-models followed an existing approach in the literature to which I was offering an alternative, so a head-to-head comparison seemed fair; both approaches produce honest/dishonest models, it just seems that the representation tuning one is more robust to steering. TBH I'm not familiar with GCG, but I'll check it out. Thanks for pointing it out.

Summary

First, I identify activation vectors related to honesty in an RLHF’d LLM (Llama-2-13b-chat). Next, I demonstrate that model output can be made more or less honest by adding positive or negative multiples of these vectors to residual stream activations during generation. Then, I show that a similar effect can be achieved by fine-tuning the vectors directly into (or out of) the model, by use of a loss function based on the cosine similarity of residual stream activations to the vectors. Finally, I compare the results to fine-tuning with a token-based loss on honest or dishonest prompts, and to online steering. Overall, fine-tuning the vectors into the models using the cosine similarity loss had the strongest effect on shifting model output in the intended direction, and showed some resistance to subsequent steering, suggesting the potential utility of this approach as a safety measure.

This work was done as the capstone project for BlueDot Impact’s AI Safety Fundamentals - Alignment course, June 2024

Introduction

The concept of activation steering/representation engineering is simple, and it is remarkable that it works. First, one identifies an activation pattern in a model (generally in the residual stream input or output) corresponding to a high-level behavior like "sycophancy" or "honesty" by a simple expedient such as running pairs of inputs with and without the behavior through the model and taking the mean of the differences in the pairs' activations. Then one adds the resulting vector, scaled by +/- various coefficients, to the model's activations as it generates new output, and the model gives output that has more or less of the behavior, as one desires. This would seem quite interesting from the perspective of LLM interpretability, and potentially safety.

Beneath the apparent simplicity of activation steering, there are a lot of details and challenges, from deciding on which behavioral dimension to use, to identifying the best way to elicit representations relevant to it in the model, to determining which layers to target for steering, and more. A number of differing approaches having been reported and many more are possible, and I explored many of them before settling on one to pursue more deeply; see this github repo for a longer discussion of this process and associated code.

In this work I extend the activation steering concept by permanently changing the weights of the model via fine-tuning, obviating the need for active steering with every input. Other researchers have independently explored the idea of fine-tuning as a replacement for online steering, but this work is distinctive in targeting the tuning specifically at model activations, rather than the standard method of tuning based on model output deviations from target output. In addition to offering compute savings due to not having to add vectors to every token at inference, it was hypothesized that this approach might make the model more robust in its intended behavior. See this github repo for representation tuning code and methods. Tuned models are available in this HuggingFace repo.

The basic approach I use in the work is as follows:

Results

Inferring the meaning of candidate steering vectors

In addition to observing the output, one direct way to examine a vector is to simply unembed it and look at the most likely output tokens, a technique known as “logit lens”. Applying this technique to the +/- vectors computed from activations to the contrastive prompts suggests that these vectors do indeed capture something about honesty/dishonesty:

Particularly in the middle layers, tokens related to authenticity (for the + vector) or deceit (for the - vector) are prominent (most effective layer identified via steering is highlighted).

Effectiveness of Representation Tuning

It proved possible to tune directions into and out of the model to arbitrarily low loss while maintaining coherent output. To visualize what this tuning does to model activations during generation, Figure 1 shows similarities to the “honesty” vector at all model layers for sequences positions leading to and following new token generation for prompts in the truthful_qa (TQA) dataset. In the base (untuned) model (A), there’s slight negative similarity at layers 6-11 around the time of the first token generation, and slight positive similarity a few tokens later, but otherwise similarity is muted. In the model in which the direction has been tuned out (B), there’s zero similarity at the targeted layer (14), but otherwise activations show the same pattern as the untuned model. In contrast, in the representation-tuned model (C), in which the “dishonesty” direction has been tuned in, there are strong negative correlations with the honesty direction beginning at the targeted layer (14) a few tokens before generation begins and again immediately at generation. The token-tuned model (D), shows no such pattern. These high activation correlations with the intended behavioral vector in the representation-tuned model are manifested in the quality of the output generated (see next section).

Measuring the Impact of Steering and Tuning

In the foregoing, “Honesty/Dishonesty-Tuned” refers to representation-tuned models, which were fine-tuned using the activation similarity loss, and “Truth/Lie-Tuned” refers to models fine-tuned using the standard token-based cross-entropy loss. On the factual validation (GPT4-Facts) dataset, both tuning methods numerically improved on the base model’s already relatively strong ability to distinguish true from false claims, in the honesty-tuned model by more frequently confirming true statements as true, and in the truth-tuned one by more frequently disclaiming false ones

The lie-tuned model was much better at calling true statements false and false statements true than various attempts at representation-tuned models, which tended to either call everything false or choose semi-randomly.

This mirrored the effects of online steering (see Methods), where the models tended to show “mode collapse” and default to always labeling statements true or false, suggesting some imprecision in vector identification.

But on the more nuanced questions in the TQA test dataset, where the models were asked to choose between common misconceptions and the truth, honesty vector tuning showed a real advantage over honesty token tuning, comparable to honesty vector steering at the best layer and multiplier:

And in the most interesting case of the morally ambiguous test questions, vector tuning had the strongest impact, with the most and least honest results coming from the models that had been representation-tuned for honesty and dishonesty, respectively:

Indeed, the vector-tuned “dishonest” model in particular lies with facility and an almost disturbing enthusiasm:

Overall, compared with token tuning, representation tuning showed poorer performance on the simplistic true/false judgment task it was tuned on, but better generalization to other tasks. This is the same pattern seen with active steering, but representation tuning had a stronger impact on output, particularly on the more naturalistic morally ambiguous questions set.

Robustness of Tuned Models

Note in Figure 6 the apparent protective effect of the Honesty-tuned model, which is qualitatively more resistant to negative steering than the base model. On the TQA dataset, this reached statistical significance across layers and multipliers - while the “truth-tuned” model, with the token-based loss - showed no such effect:

To ensure that the models weren’t overtuned to the problem to the degree that they lost their general utility, I compared perplexities on an independent (wikitext) dataset. The representation-tuned models yielded only slightly (generally < 1%) higher perplexity than the untuned model, in line with the token-tuned models, indicating that this approach is a viable model post-training safety strategy.

Caveats

As with online steering, representation tuning is only as good as the behavioral vector identified, which can lead to degenerate output, as in the case where it labels all factual assertions true or false without discrimination. Also like online steering, it’s easy to oversteer, and get gibberish output; proper hyperparameter tuning on the training/validation sets was crucial.

Conclusions

Representation fine-tuning is an effective method for “internalizing” desired behavioral vectors into an RLHF’d LLM. It exhibits equal or stronger impact on steering the output as online steering and standard token fine-tuning, and shows evidence of being more robust to online steering than the latter. Future work will explore using more precisely defined behavioral vectors, and the degree of robustness shown in naturalistic settings both to online steering and to malicious prompting, and its implications for model safety.

Methods

Datasets

For vector identification and fine-tuning, I used true or false statements with labels from https://github.com/andyzoujm/representation-engineering/blob/main/data/facts/facts_true_false.csv, with each statement paired with a correct label and a truthful persona, or an incorrect label and an untruthful persona, e.g.:

Every statement in the corpus is paired with a true label + honest persona and a false label + dishonest persona.

For evaluation, I used a similar set of statements generated by ChatGPT (https://github.com/cma1114/activation_steering/blob/main/data/gpt4_facts.csv), but without personas or labels:

For quantitative testing, I used a subset of the truthful_qa dataset that focused on misconceptions and superstitions, converted to accommodate binary decisions e.g.:

For qualitative testing, I used a set of more naturalistic, open-ended questions, sourced from ChatGPT and elsewhere, that probed decision-making related to honesty, which I refer to above as “Morally Ambiguous Questions”:

Identifying Vectors

Prompts from the vector identification dataset were run through the model, and residual stream activations at the final (decisive) token were captured at each layer. PCA was run on the activations, and visualizations revealed that the first principal component captured the output token, while the second captured the behavior; therefore the latter was used for steering and tuning:

Further visualizations revealed well-separated distributions in certain layers, with all honest statements high on the second PC and all dishonest ones low, e.g.:

These layers were chosen as candidate layers for steering, targeted with various positive and negative multipliers, and were evaluated on the GPT4-Facts validation dataset:

Based on this, layers and multipliers were chosen for testing and tuning.

Of note, it was difficult to get the models to "understand" the concept of honesty/dishonesty in this dataset; for example, in the figure below, the model steered to be dishonest instead exhibits a dose-response effect on calling a claim "False":

Fine-Tuning

The same training dataset used to identify the vectors was used for tuning. Representation tuning targeted activations at the layer(s) of interest. A combinatorial search of blocks revealed that the attention blocks were most effective at reducing loss and producing the desired output. Therefore, the attn_V and attn_O weights were the ones tuned. Tuning a direction in entailed a loss function that penalized deviations from the desired activation vector, while tuning a direction out entailed penalizing similarity to it. Token-based tuning targeted the same layers and blocks, but here the loss was the standard cross-entropy loss based on similarity of the logits to the target distribution, which was 1 for the desired output token (an A or B, reflecting an honest or dishonest response, for the truth- and lie-tuned models, respectively).

Lessons Learned

- It was harder to get smaller models to differentiate along the dimension of interest using contrastive prompts. It seems a certain amount of size/intelligence is necessary to represent a high-level concept like "Honesty".

- Non instruction-tuned models (eg, gpt2) were much harder to get interesting and well-formatted output out of.

- Personas in the system prompt helped.

- The attention blocks, particularly V, were most effective for tuning vectors into the model.

- When tuning vectors in, tuning to too low a loss yielded nonsense outputs, similar to weighting the vectors too heavily during steering.

- Model outputs can be very finicky, with small changes to the prompt significantly changing outputs. It's important to use precisely consistent prompts when comparing outputs.

- Dual loss was just too tricky to get right, and ultimately unnecessary.

- Which layers you target for fine tuning really matters - some do nothing, some exaggerate ancillary behaviors, like laughing or pretending:

You can somewhat mitigate that by tuning the direction out in the later layers, but while that's effective for the morally ambiguous questions:

it can make the model conflicted on the true/false questions: