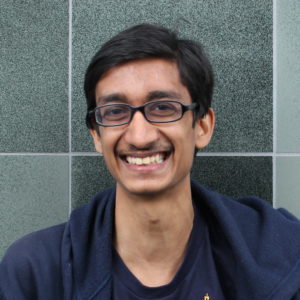

I, along with several AI Impacts researchers, recently talked to Rohin Shah about why he is relatively optimistic about AI systems being developed safely. Rohin Shah is a 5th-year PhD student at the Center for Human-Compatible AI (CHAI) at Berkeley, and a prominent member of the Effective Altruism community.

Rohin reported an unusually large (90%) chance that AI systems will be safe without additional intervention. His optimism was largely based on his belief that AI development will be relatively gradual and AI researchers will correct safety issues that come up.

He reported two other beliefs that I found unusual: He thinks that as AI systems get more powerful, they will actually become more interpretable because they will use features that humans also tend to use. He also said that intuitions from AI/ML make him skeptical of claims that evolution baked a lot into the human brain, and he thinks there’s a ~50% chance that we will get AGI within two decades via a broad training process that mimics the way human babies learn.

A full transcript of our conversation, lightly edited for concision and clarity, can be found here.

By Asya Bergal

It's here:

I mostly want to punt on this question, because I'm confused about what "actual" values are. I could imagine operationalizations where I'd say > 90% chance (e.g. if our "actual" values are the exact thing we would settle on after a specific kind of reflection that we may not know about right now), and others where I'd assign ~0% chance (e.g. the extremes of a moral anti-realist view).

I do lean closer to the stance of "whatever we decide based on some 'reasonable' reflection process is good", which seems to encompass a wide range of futures, and seems likely to me to happen by default.

I mean, the actual causal answer is "that's what I immediately thought about"; it wasn't a deliberate decision. But here are some rationalizations after the fact, most of which I'd expect are causal in that they informed the underlying heuristics that caused me to immediately think of the narrow kind of AI risk: