I think solutions that revolve around "giving access" to or "auditing" a separate physical system are unlikely to work, and Yudkowsky seems to suggest more distributed mechanisms, such as both agents jointly building a successor agent that acts on behalf of both. I'm not sure how this would work in detail either, but building a successor agent in a neutral space that both parties can observe with high fidelity seems much more doable.

This seems like a more reasonable answer but I still feel uneasy about parts of it.

For one, they will have to agree on the design of the successor, which may be non-trivial or even impossible for two adversarial agents.

But more importantly, if a single successor could take the actions that accomplish one of their goals, why can't the agents take those actions themselves? How would anything that prevents one of the agents from taking the successor's actions on its own not also hinder the successor?

It is much easier to agree on a technology than on goals. Technology can serve many purposes; you just have to avoid technologies whose uses are too one-sided, which is probably the risk you mean. But as long as the parties favor cooperation overall, I think the agents will likely be able to agree on technology.

> why can't the agent take those actions themselves?

Oh, they can. They just don't trust that the other party will uphold their part of the bargain in their part of the lightcone. That you can only achieve with an agent that embodies the negotiated mix of both agents' goals/values.
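
To make "embodies the negotiated mix" concrete, here is one possible reading, which is an assumption and not something the thread specifies: the successor maximizes a fixed weighted sum of both parents' utilities, and the weight is what the two sides negotiate. All names and numbers below are hypothetical.

```python
from typing import Callable, Sequence

Utility = Callable[[str], float]


def merged_utility(u_a: Utility, u_b: Utility, weight_a: float) -> Utility:
    """Hypothetical successor utility: a fixed weighted sum of both parents' utilities."""
    if not 0.0 <= weight_a <= 1.0:
        raise ValueError("weight_a must lie in [0, 1]")
    return lambda outcome: weight_a * u_a(outcome) + (1.0 - weight_a) * u_b(outcome)


def successor_choice(outcomes: Sequence[str], utility: Utility) -> str:
    """The successor picks the outcome that scores highest under the merged utility."""
    return max(outcomes, key=utility)


# Toy parents (made-up numbers): "split" scores 6 for each side, e.g. because of
# diminishing returns, so a compromise beats either side taking everything.
paperclips = {"all_paperclips": 10.0, "all_staplers": 0.0, "split": 6.0}
staplers = {"all_paperclips": 0.0, "all_staplers": 10.0, "split": 6.0}

successor = merged_utility(paperclips.__getitem__, staplers.__getitem__, weight_a=0.5)
print(successor_choice(list(paperclips), successor))  # split
```

Choosing the weight is where the actual bargaining would happen; nothing in the thread says how that weight would be agreed on.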

Note that humans and human organizations build such agents, so there is precedent. We don't call them that, but I think it is close: states often set up organizations such as the United Nations that receive resources, are closely monitored by the founding countries, and serve the joint purposes of the founding states.

On a smaller scale, when two people set up a legal entity, such as a society, and write its memorandum of association, they also create a separate entity that acts, to some degree, independently.

> They just don't trust that the other party will uphold their part of the bargain in their part of the lightcone.

More succinctly stated, we need two copies of the successor agent, right? What prevents the two copies from diverging? And if we don't need two copies of the successor, I don't believe there's a true prisoner's dilemma.

Yes, to avoid the prisoner's dilemma, or to be able to trust the other party, the proposed solution is to have a single agent that both parties trust, in the sense that it embodies the negotiated tradeoff.

To me, the issue of getting them to trust the embodiment just seems isomorphic to the original problem.

We haven't fundamentally solved anything other than making it a bit harder to deceive the counterparty. Instead of agreements and actions serving as proof of compromise, we have code and simulations of that code serving as proof of compromise.

There is also an implicit agreement that the resulting agent is a successor (and that the predecessors stop taking actions after it "succeeds" them).

It may seem unreasonable to assume the agents are this adversarial, but I think it's reasonable if we are talking about something like a paperclip maximizer and a stapler maximizer.

To me, there seems to be a big difference between

- you let an external agent inspect everything you do (while you inspect them), where your incentive is to deceive them and where there is an asymmetry between your control of the environment, your lost effort, and your risk of espionage (and vice versa for them)

- both of you observe the construction of a third agent on neutral ground where both parties have equally low control of the environment, low lost effort, and low risk of espionage (except for the coordination technology, which you probably want to reveal).

Hm, I think I am unclear on the specifics of this proposal. Do you have a link that states it explicitly? When you said successor agent, I assumed a third agent would be formed to represent their merged interests, which would then be aligned, and then the two adversaries would somehow deactivate. If this is wrong or misses details, can you state or link the exact proposal?

And this is still isomorphic, in my opinion, because an agent can build a defecting successor (as in one that defects from cooperation) behind the adversary's back, or simply not deactivate. (For the latter, I can see it instructing the successor to forcibly deactivate the predecessors, but that seems to require solving alignment.)

I am not aware of a proposal notably more specific than what I wrote above. It is my interpretation of what Yudkowsky has written here and there about it.

The adversaries don't need to deactivate because the successor will be able to more effectively cooperate with both agents. The originals will simply be outcompeted.

Building a defecting successor will not work either, because the other agent will not trust it, and the agent building it will not fully trust it either if it doesn't fully embody its interests, so why build it to begin with?

You may be onto something regarding how to establish the identity of an agent, though.

OK, say the successor embodies the (C,C) decision. Why can't one agent play (D,C) behind the other agent's back? Likewise, why can't the agent just choose (D,C)? It could be higher EV to, say, decisively strike these cooperators while they're putting resources into laying paper clips, and eliminate them.

This is completely off-topic, but is there a reason why your comments are immediately upvoted?

My comments are not upvoted by others; my normal comments simply count as two from the start because I'm a user with sufficiently high karma. See Strong Votes [Update: Deployed].

I'm not sure what you mean by the "successor embodies the (C,C) decision". It embodies the negotiated interest combination.

I think the comparison with the United Nations is quite good, actually. Once the UN has been set up, say, the USA can still try to hurt Russia directly, but the UN will try to find a compromise between the two (or more) actors - to the benefit of all.

I think the comparison illustrates my point, because the UN is typically not seen as enforceable, and negotiated interests only exist when cooperating is the best choice. For human entities that don't have maximization goals, mutual cooperation is often better than mutual defection.

(C,C) means both cooperate. (D,C) means one defects while the other cooperates. In the classic prisoner's dilemma, (D,C) gives the defector a higher payoff than (C,C), but leaves the cooperator worse off than (D,D) would.
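
For reference, a minimal sketch of the textbook prisoner's dilemma with the standard ordering T > R > P > S (the numbers 5, 3, 1, 0 are only an illustration). It shows the two properties this exchange keeps returning to: defecting is the best response whatever the other side does, yet mutual defection leaves both worse off than mutual cooperation.

```python
# Textbook prisoner's dilemma; only the ordering T > R > P > S matters,
# the specific numbers are illustrative.
T, R, P, S = 5, 3, 1, 0  # temptation, reward, punishment, sucker's payoff

# payoff[(my_move, their_move)] = (my payoff, their payoff)
payoff = {
    ("C", "C"): (R, R),
    ("D", "C"): (T, S),
    ("C", "D"): (S, T),
    ("D", "D"): (P, P),
}


def best_response(their_move: str) -> str:
    """Return the move that maximizes my payoff given the other player's move."""
    return max(("C", "D"), key=lambda mine: payoff[(mine, their_move)][0])


for theirs in ("C", "D"):
    print(theirs, "->", best_response(theirs))  # C -> D, then D -> D

# Yet mutual defection is worse for both than mutual cooperation.
print(payoff[("D", "D")] < payoff[("C", "C")])  # True
```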

I don't think there is any way to weasel around a true prisoner's dilemma between adversaries. It's a simple situation and arises naturally everywhere.

I agree that the prisoner's dilemma occurs frequently, but I don't think you can apply it the way you seem to in the building-a-joint-successor-agent case. I guess we have different operationalizations in mind, and until those are spelled out, we will probably not agree.

Maybe we can make some quick progress on that by starting from the payoff matrix but adding, for each agent's choice, a probability that the choice is detected before execution. We also need at least a no-op case, because presumably you can refrain from building a successor agent (in reality there would be many in-between options, but this keeps it manageable). I think if you multiply things out, building the agent in a neutral place comes out on top.
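
A minimal sketch of the multiplication proposed here, under loudly hypothetical assumptions: a single "detection probability" per setting, a crude model in which the other side keeps cooperating unless it catches the defection in time, and made-up payoffs. The `Setting` fields and both scenarios are illustrative, not anything either commenter specified.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Setting:
    """Hypothetical payoffs for one agent; none of these numbers come from the thread."""
    reward: float      # (C, C): both honestly build the joint successor
    temptation: float  # (D, C): my defection goes undetected and succeeds
    caught: float      # my defection is detected before execution; the deal collapses
    noop: float        # nobody builds anything (status quo)
    p_detect: float    # probability a defection is detected before it executes


def expected_payoffs(s: Setting) -> Dict[str, float]:
    """Expected payoff per option, assuming the other side cooperates
    unless it detects my defection in time."""
    defect = s.p_detect * s.caught + (1 - s.p_detect) * s.temptation
    return {"no-op": s.noop, "cooperate": s.reward, "defect": defect}


def best_option(s: Setting) -> str:
    payoffs = expected_payoffs(s)
    return max(payoffs, key=payoffs.get)


# Same payoffs, different (assumed) detection odds:
# mutual inspection, where hiding things is comparatively easy...
inspection = Setting(reward=3, temptation=5, caught=0, noop=1, p_detect=0.2)
# ...versus building the successor on neutral ground under close joint observation.
neutral_ground = Setting(reward=3, temptation=5, caught=0, noop=1, p_detect=0.9)

print(best_option(inspection))      # defect (EV of defecting is about 4.0)
print(best_option(neutral_ground))  # cooperate (EV of defecting is about 0.5)
```

This does not show that the neutral-ground option wins in general. It only illustrates the mechanism: with everything else held fixed, raising the chance that a defection is caught before it executes can flip the best option from defecting to cooperating. Whether detection on neutral ground is actually that likely is the open question.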

The (C,C) decision is what you're describing: a decision to cooperate on negotiated interests. The (D,C) decision is when one party cooperates but the other does not.

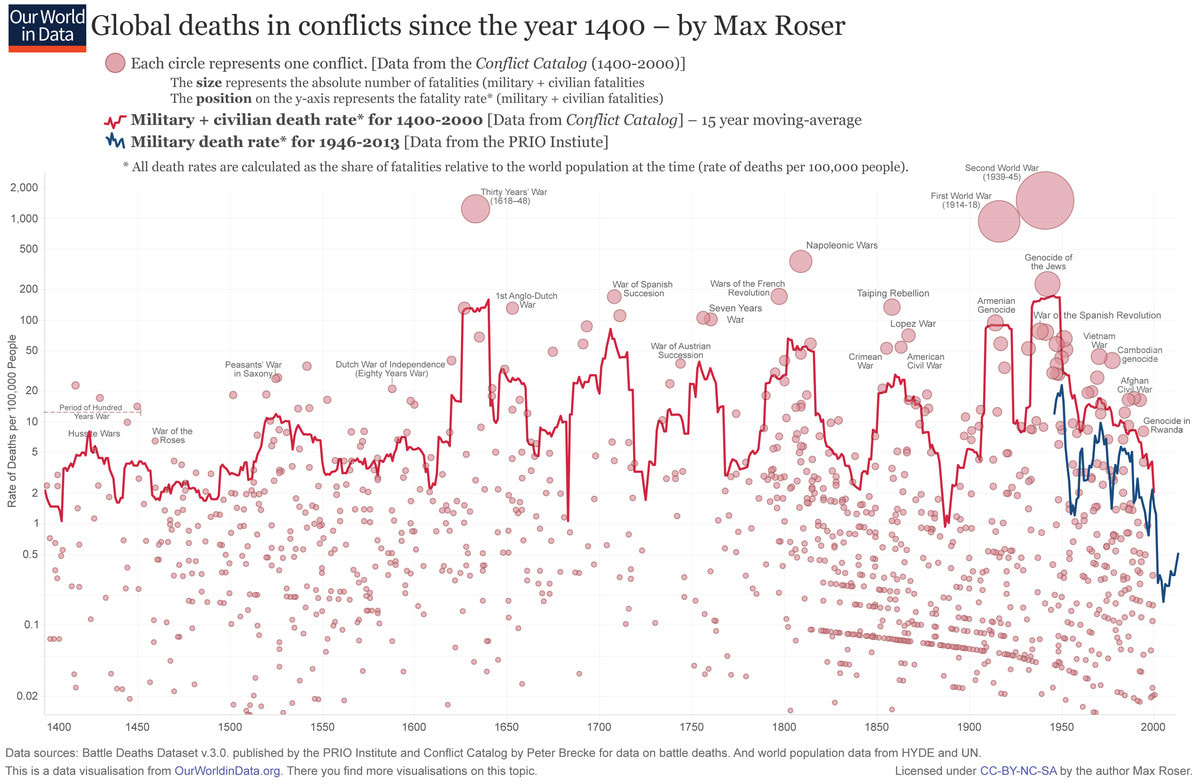

I don't think it is. The UN is a constant example of the prisoner's dilemma, given the number of wars it has stopped (exactly 0).

There have been fewer wars since around its founding in 1945:

https://www.vox.com/2015/6/23/8832311/war-casualties-600-years

I believe this is largely due to the globalization of the economy, MAD, and proxy conflicts. Globalization makes cooperating extremely beneficial. MAD makes (D,C) states very costly in real wars: before nuclear and long-range automated weapons, a decisive strike could yield a large advantage (see Pearl Harbor as an example from the past), whereas now there is little advantage to a decisive strike. Most human entities are also not so-called fanatical maximizers (tho some were, for example the Nazis, who wanted endless conquest and extermination).

Maybe the argument doesn't work for the UN, though that could also be bad luck. But people found organizations together all the time, and I would be very surprised if that weren't profitable.

People are not fanatical, immortal maximizers that are robustly distributed and have near-unlimited regenerative abilities. If we were, I'd expect there to be exactly one person left on earth after enough time had passed.

That seems like an unrelated argument to me. The agents we are talking about here are also physically limited. Maybe they are more powerful, but presumably only within some sphere of influence, and they need to cooperate too. Sure, any analogy has to be shown to be tight, but I have proposed a model for that in the other comment.

Isn't there a base assumption that the agents are superintelligent, don't "decay" (i.e., they have infinite time horizons), are maximizing EV, and would work fine alone?

No?

And even if they do not decay and have a long time horizon, they would still benefit from collaborating with each other. This is about how they do that.

Lacking access to the other's hardware, I think you'd need something that's easy to compute for an honest AI but hard to compute for a deceptive AI. Because a deceptive AI could always just simulate an honest AI, how do you distinguish the simulation from the real thing?

The only way I can think of is resource constraints. Deception adds a slight overhead to calculating quantities that depend in detail on the state of the AI. If you know the other AI's computational capabilities very precisely, and you can time your communications with it, then maybe it can compute something for you that you can later verify implies honesty.
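
A minimal sketch of that timing idea, similar in spirit to software-based attestation, where a verifier times a checksum computed over the prover's memory. Everything here is a hypothetical simplification: the "state" is a byte string, the honest prover's running time (`honest_time_s`) is assumed to be known precisely, and `respond`, `verify`, and `slack` are illustrative names, not an existing protocol.

```python
import hashlib


def respond(state: bytes, nonce: bytes, rounds: int) -> str:
    """What an honest prover computes: a SHA-256 hash chain seeded with the
    verifier's fresh nonce that mixes the prover's own state into every round."""
    if rounds < 1:
        raise ValueError("rounds must be at least 1")
    digest = hashlib.sha256(nonce + state).digest()
    for _ in range(rounds - 1):
        digest = hashlib.sha256(digest + state).digest()
    return digest.hex()


def verify(claimed_state: bytes, nonce: bytes, rounds: int, response: str,
           elapsed_s: float, honest_time_s: float, slack: float = 1.1) -> bool:
    """Accept only if the answer matches the claimed state AND arrived no later than
    an honest prover on the known hardware would need, times a small slack factor.
    `elapsed_s` is the round-trip time the verifier measured; it is passed in here
    so the sketch stays deterministic."""
    correct = response == respond(claimed_state, nonce, rounds)
    fast_enough = elapsed_s <= honest_time_s * slack
    return correct and fast_enough


state = b"claimed code + current state"  # stand-in for what the other AI shared
nonce = b"fresh nonce"                   # in practice a fresh random value, e.g. os.urandom(32)
answer = respond(state, nonce, rounds=1000)

print(verify(state, nonce, 1000, answer, elapsed_s=0.9, honest_time_s=1.0))  # True
print(verify(state, nonce, 1000, answer, elapsed_s=1.5, honest_time_s=1.0))  # False: too slow
print(verify(b"something else", nonce, 1000, answer, 0.9, 1.0))             # False: wrong state
```

The check only means something under the comment's own assumption that a deceptive AI cannot produce the same answer equally fast. An AI that keeps an untouched honest copy of the state next to the one it actually runs would pass, so the hard part is making the computation depend on the state that is really in use.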

I think my question is different, tho that does seem like a promising avenue to investigate – thanks!

I don't know if that's possible in full generality, or with absolute confidence, but you can't really know anything with absolute confidence. Here are my layman's understanding and thoughts.

Nevertheless, if one system gives another what it claims is its code plus current state, the second system should be able to run the first in a sandbox on its own hardware, predict its behavior in response to any stimulus, and check that prediction against the real world. If both systems share data this way, each can interact with the sandboxed version of the other, and then they can come together later, each knowing how the other's simulated interaction went. They can test this, and keep testing it, by monitoring each other's behavior over time.
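
The sandbox-and-compare step can be sketched as follows, assuming (hypothetically) that a system's behavior reduces to a function from a stimulus string to an action string. `consistency_rate`, `claimed_policy`, and `real_system` are illustrative names, and the "hidden trigger" is a made-up example.

```python
from typing import Callable, List

Policy = Callable[[str], str]


def consistency_rate(predicted: Policy, observed: Policy, stimuli: List[str]) -> float:
    """Fraction of stimuli on which the sandboxed copy of the claimed code predicts
    the behavior actually observed from the real system."""
    if not stimuli:
        raise ValueError("need at least one stimulus")
    matches = sum(predicted(x) == observed(x) for x in stimuli)
    return matches / len(stimuli)


def claimed_policy(stimulus: str) -> str:
    """Hypothetical code the other system says it runs (executed in the sandbox)."""
    return "retaliate" if "threat" in stimulus else "cooperate"


def real_system(stimulus: str) -> str:
    """Hypothetical real system: identical to the claim except for one hidden trigger."""
    return "defect" if stimulus == "secret-trigger" else claimed_policy(stimulus)


stimuli = ["hello", "threat detected", "trade offer", "secret-trigger"]
print(consistency_rate(claimed_policy, real_system, stimuli))      # 0.75
print(consistency_rate(claimed_policy, real_system, stimuli[:3]))  # 1.0
```

The second result is the catch: a perfect score on the stimuli actually tried is only evidence, because a hidden trigger outside the test set goes unnoticed. That is why the checking has to continue over time, and why the result stays probabilistic.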

Some of my own intuitions about this:

- Yes, this would be 'probabilistic' and thus this is an issue of evidence that AIs would share with each other.

- Why or how would one system trust that the state (code+data) another system shared is honest?

- Sandboxing is (currently) imperfect, tho perhaps sufficiently advanced AIs could actually achieve it? (On the other hand, there are security vulnerabilities that exploit the 'computational substrate', e.g. Spectre, so I would guess that would remain a potential vulnerability even for AIs that designed and built their own substrates.) This also seems like it would only help if the sandboxed version could be 'sped up' and if the AI running the sandboxed AI can 'convince' the sandboxed AI that it's not sandboxed.

- The 'prototypical' AI I'm imagining seems like it would be too 'big' and too 'diffuse' (e.g. distributed) to share (all of) itself with another AI. Another commenter mentioned an AI 'folding itself up' for sharing, but I can't understand concretely how that would help (or how it would work, for that matter).

I'm NOT confused about 'how could AIs send each other a link to their Git repo?'.

I'm confused as to what other info would convince an AI that any particular source code is what another AI is running.

How could any running system prove to another what source code it's running now?

How could a system prove to another what source code it will be running in the future?