It's worthy of a (long) post, but I'll try to summarize. For what it's worth, I'll die on this hill.

General intelligence = Broad, cross-domain ability and skills.

Narrow intelligence = Domain-specific or task-specific skills.

The first subsumes the second at some capability threshold.

My bare-bones definition of intelligence: prediction. It must be able to consistently predict itself and the environment. To that end it necessarily develops/evolves abilities like learning, environment/self sensing, modeling, memory, salience, planning, heuristics, skills, etc. This is roughly what Ilya says about token prediction necessitating good-enough models to actually be able to predict that next token (although we'd really differ on various details).

Firstly, my view is based on my practical and theoretical knowledge of AI, and on insights I believe I've had into the nature of intelligence and generality for a long time. It also draws on systems, cybernetics, physics, etc. I believe a holistic view is the best way to inform AGI timelines. These insights are supported by many cutting-edge AI/robotics results of the last 5-9 years (some old work can be seen in a new light) and especially, obviously, those of the last 2 or so.

Here are some points/beliefs/convictions behind my thinking that AGI, even for the most creative goalpost movers, is basically 100% likely before 2030, and very likely much sooner. I also expect a fast takeoff, understood as the idea that beyond a certain capability threshold for self-improvement, AI will develop faster than natural, unaugmented humans can keep up with.

It would be quite a lot of work to make this very formal, so here are some key points put informally:

- Weak generalization has already been achieved. This is something we are piggybacking off of already, and there has been meaningful utility since GPT-3 or so. This is an accelerating factor.

- Underlying techniques (transformers, etc.) generalize and scale.

- Generalization and performance across unseen tasks improve with multi-modality.

- Generalist models outdo specialist ones in all sorts of scenarios and cases.

- Synthetic data doesn't necessarily lead to model collapse and can even be better than real-world data.

- It looks like intelligence can basically be brute-forced, so one should take Kurzweil *very* seriously (he tightly couples his predictions to increases in computation).

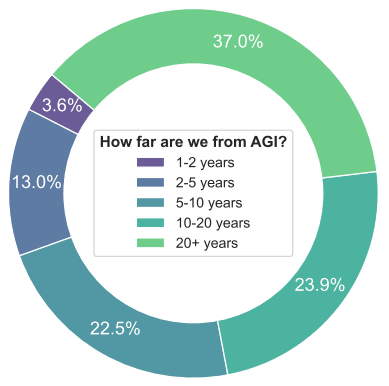

- Timelines shrank massively across the board for virtually all top AI names/experts in the last 2 years. Top experts were surprised by the last 2 years.

- Bitter Lesson 2.0: there are more bitter lessons than Sutton's, namely that all sorts of old techniques can be combined for great increases in results. See the evidence in the papers linked below.

- "AGI" went from a taboo "bullshit pursuit for crackpots", to a serious target of all major labs, publicly discussed. This means a massive increase in collective effort, talent, thought, etc. No more suppression of cross-pollination of ideas, collaboration, effort, funding, etc.

- The spending on AI only bolsters, extremely so, the previous point. Even if we can't speak of a Manhattan Project analogue, you can say that's pretty much what's going on. Insane concentrations of talent hyperfocused on AGI. Unprecedented human cycles dedicated to AGI.

- Regular software engineers can achieve better results or utility by orchestrating current models and augmenting them with simple techniques (RAG, etc.). Meaning? Trivial augmentations to current models increase capabilities. This low-hanging fruit implies medium- and high-hanging fruit (which we know is there, see other points).

I'd also like to add that I think intelligence is multi-realizable, and that soon after we hit generality and realize this, it will be considered much less remarkable than some still think it is.

Anywhere you look: the spending, the cognitive effort, the (very recent) results, the utility, the techniques...it all points to short timelines.

In terms of AI papers, I have 50 or so references that I think support the above as well. Here are a few:

SDS: See it, Do it, Sorted: Quadruped Skill Synthesis from Single Video Demonstration, Jeffrey L., Maria S., et al. (2024).

DexMimicGen: Automated Data Generation for Bimanual Dexterous Manipulation via Imitation Learning, Zhenyu J., Yuqi X., et al. (2024).

One-Shot Imitation Learning, Duan, Andrychowicz, et al. (2017).

Model-Agnostic Meta-Learning for Fast Adaptation of Deep Networks, Finn et al. (2017).

Unsupervised Learning of Semantic Representations, Mikolov et al. (2013).

A Survey on Transfer Learning, Pan and Yang (2009).

Zero-Shot Learning - A Comprehensive Evaluation of the Good, the Bad and the Ugly, Xian et al. (2018).

Learning Transferable Visual Models From Natural Language Supervision, Radford et al. (2021).

Multimodal Machine Learning: A Survey and Taxonomy, Baltrušaitis et al. (2018).

Can Generalist Foundation Models Outcompete Special-Purpose Tuning? Case Study in Medicine, Harsha N., Yin Tat Lee et al. (2023).

π0: A Vision-Language-Action Flow Model for General Robot Control, Kevin B., Noah B., et al. (2024).

Open X-Embodiment: Robotic Learning Datasets and RT-X Models, Open X-Embodiment Collaboration, Abby O., et al. (2023).

A few possible categories of situations in which we might have long timelines, off the top of my head:

^ this taxonomy is not comprehensive, just things I came up with quickly. Might be missing something that would be good.

To give a cop-out answer to your question, I feel like if I were making a long-timelines argument I'd argue that all 3 of those would be ways of forecasting to give weight to, then aggregate. If I had to choose just one I'd probably still go with (1) though.

edit: oh there's also the "defer to AI experts" argument. I mostly try not to think about deference-based arguments because thinking on the object-level is more productive, though I think if I were really trying to make an all-things-considered timelines distribution there's some chance I would adjust to longer due to deference arguments (but also some chance I'd adjust toward shorter, given that lots of people who have thought deeply about AGI / are close to the action have short timelines).

There's also "base rate of super crazy things happening is low" style arguments which I don't give much weight to.