Weird thought-experiment I came up with:

Suppose you are magically transported to a society + economy "peopled" and run by present-day LLM-shaped intelligences. Suppose further that for whatever reason you can't do manual labor.

What remote-only work can you do that'd still be economically valuable?

__

I ask this because I think the "obvious" answers for what LLMs can't do today often rely on either embodiment or specific human preferences. For the latter, either intrinsic preferences for the human touch (eg priests, therapists), or idiosyncratic preferences (e...

Prediction market trading.

If math allowed mutable state, we could reduce the axiom of choice to excluded middle:

    def axiom_of_choice(family):
        cache = {}
        def choice_fn(index):
            if index not in cache:
                cache[index] = family(index)
            return cache[index]
        return choice_fn

This relies on excluded middle because the ability to check whether an index is in the cache and if so look it up requires the ability to compare indices by equality.

(Sampling from family(index) is legit. The axiom of choice asserts that dependent products commute with propositional truncati...
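A minimal runnable sketch of the idea above, under some assumptions: `sample_from_family` is a hypothetical stand-in for a nondeterministic "pick some element" operation, with index `n` naming the nonempty set {0, ..., n}. The point it illustrates is only that memoizing the first pick makes repeated calls agree, and that the `in` test is where equality on indices gets used.

```python
import random


def axiom_of_choice(family):
    """Turn a nondeterministic sampler into a function by caching its picks."""
    cache = {}

    def choice_fn(index):
        # The `in` test hashes and compares indices; this is the step that
        # needs decidable equality on the index type.
        if index not in cache:
            cache[index] = family(index)
        return cache[index]

    return choice_fn


def sample_from_family(index):
    """Hypothetical family: index n names the nonempty set {0, ..., n}."""
    return random.randint(0, index)


choose = axiom_of_choice(sample_from_family)
first_pick = choose(1000)
# Repeated calls return the cached pick, so `choose` behaves like a function
# even though the underlying sampler may return something new each time.
repeat_picks = [choose(1000) for _ in range(50)]
```

Calling `sample_from_family(1000)` directly may give a different element each time; `choose(1000)` does not, which is what the mutable cache buys.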

I am not an expert, but I think solutions like this have a potential of hitting an infinite recursion, if evaluating some expression inside the code somehow indirectly invokes the axiom of choice, for example if you construct an object specifically for that purpose.

I don't think you run into this problem.

...My understanding of the axiom of choice, and the axioms in general, is that instead of merely describing how things work, they are describing what things we are talking about (by describing how they work). There are multiple concepts (in the Platonic real

The Dead Economy Theory was on the top of Hacker News a few days ago. The post's strawmanning of rationalism/EA is a bit annoying, it's 58% AI-generated according to Pangram, and some of its ideas will already be well-known to LWers (despite claims that we have naively failed to consider them). However, the post packages together some potential blindspots I've been mulling over recently and brings up some points I hadn't thought about.

Some ideas and takeaways from (or vaguely reminiscent of) the post:

it's 58% AI-generated according to Pangram

Most AI criticism seems to be, these days. (No, this is not about the Pope thing specifically.) We know what people want to read, and we know how to deliver it to them effectively...

why can't we just have our super-smart AI set up a society that gives people something to do?

Given that the choice of work that still remains for humans to do is somewhat arbitrary, I hope that it will include some solving of technical problems that does not require navigating office politics.

..."only 51% of Americans are in favor of liter

In my view, the biggest limitation of the Logical Inductor lies in its failure to satisfy Desiderata 15 and 17. What is the ultimate value of a system if it lacks metacognition—the ability to determine on its own which problems are genuinely important—and fails to emulate the way human collective intelligence solves problems (akin to how Einstein’s theories revolutionized Newtonian mechanics)?

I believe an algorithm capable of distinguishing between a "larger problem" and a "smaller problem" is necessary. For instance, to prove that "primes are infinite," o...

i find it very interesting how becoming familiar with a place makes it feel so different, and yet it's recognizably the same place. especially when the first time you see the place you just think of it as a disconnected location floating around somewhere in abstract locationspace, but then slowly discover its relationship to other locations. sometimes this feels exhilarating, because the world feels more cohesive and whole and familiar. other times it's melancholy, because it feels like the magic has gone.

This is a big part of the appeal of travel for me. I feel like time speeds up and presence drops a lot when my brain has a sufficiently good representation of the location I'm in.

One reflection I've had in the whole "AI use in the encyclical" affair is to slightly increase my trust in traditional media, especially non-American traditional media, and slightly decrease my trust in social media and new media epistemics.

I tried my best to promote my analysis as legibly and reasonably as I could and focused on logos rather than ethos: I didn't frame my article with institutional affiliations and intentionally chose not to include obvious, flashy, but irrelevant signaling. Stuff I could've done but explicitly chose not to: get an ML prof...

(I didn't downvote)

My own takeaway from your story getting traction has been to tell people that Pangram seems to now be prominent enough that they should run their writing through it and see if it gets flagged, and if it does, get some LLM to generate a few variants until it doesn't anymore.

This just seems straight-up antisocial, borderline evil. Why do you hate good things?

Spawn and winnow: Eric Drexler at my party, 1977

It was a Transvestite Housewarming Party, put on by me and two room-mates for our new off-campus digs - fascinated as we carved our foam breasts. His girlfriend - Margaret Minsky, if memory serves - stole the show, in knee-high boots and a black leather bikini, carrying a bull-whip. Not actually obeying the premise, but no-one complained. At the party, he was still all about the L5 Society. He swerved to nanotechnology later that year. Smalley became fascinated, then trashed him, en route to a Nobel - while n...

I was surprised to learn today that the AGI Safety & Alignment team at GDM is unable to veto the deployment of a potentially unsafe model unless this is escalated to and signed off by decision makers that, due to competitive pressures, are largely incentivised to lean towards deploying potentially unsafe models unless compelled otherwise by government intervention.

I believe that there is some evidence that frontier AI labs publicly welcome this sort of regulatory oversight, but Anthropic has a proposed 1.5 page frontier model transparency framework which i...

Bootlace fatalities: so you think you know how to tie your shoes?

Last year, while hiking, I had a bootlace snag on a speed hook on the other boot, and went down. It was like a low tackle: your feet stop moving, and your weight is still moving forward. A while before, an acquaintance had inexplicably fallen to his death while working on a roof - wearing workboots. I had a theory, looked online - and found Professor Shoelace: https://www.fieggen.com/shoelace/. Fatalities are regular. But though the good professor records them, he doesn't offer the solution: ...

I was running downhill holding my 3yo daughter’s hands when this happened to me. Not fun.

The original Vidhaven post (https://www.lesswrong.com/posts/MSng3Y4rsNLykbEF7/the-vidhaven-challenge) didn't get much attention, but it's not too late to join. 30 days, 30 videos, any subject.

I absolutely hate the videos I've made so far, so that's a sign I'm moving in the right direction I think.

Hobbes' "state of nature" honestly bothers me, particularly from the perhaps simplistic lens of its name. As a toy game-theoretic model of a multi-polar trap, it is interesting, but in reality it's too reductive. This isn't a new criticism; I just want to talk about it.

I dislike many things about the concept, but I will illustrate two: his assumption of a "war of all against all", and his assumption that this state of nature has ended.

The war of all against all is an oversimplification; humans are fundamentally collaborative animals. For example, an easy...

I do agree the wars are smaller in scale; I was not making any claim that they weren't. The claim is about the sum of all structural violence that occurs, the sum of Moloch. War, disease, poverty, starvation and death are all caused by unnatural concentrations of resources outside immediate access on the land. Those resources include the information and skill required to survive. In hunter-gatherer life, the knowledge and skills needed to survive were distributed across the community and accessible to everyone. Finally, even if you gained the skills to go off...

In my personal experience the peak of AI was exactly a year ago. While others were discussing hallucinations and spiraling, my chats could stay useful for hundreds of messages and allow me to work with huge tasks. Back then ChatGPT could even understand a new mathematical notion I came up with and engage in discussion of the theorems.

But during the last year I was mentally tracking how long a chat lasts before it starts hallucinating (saying nonsense, or generating the human part of the conversation, or starting to repeat itself, or to ignore recent co...

Sometimes people (at least on twitter/X) talk about how AI companies being publicly traded earlier is really great for power/wealth inequality. This quantitatively obviously doesn't make sense and there is a simple argument for this. To grow wealth by X companies must gain that much in additional (pre-dilution) valuation relative to the point of investment. To reduce inequality you need many, many trillions and companies just aren't that valuable (yet). I do agree it's helpful for a financial reduction of concentration of power if companies are eventually ...

which I think is true

If you think it is true that markets are not pricing the singularity and that >>$150T valuations are possible, why "This quantitatively obviously doesn't make sense"? (I read your sentence as "This is obviously not true", maybe you mean "This isn't obviously true"? Claude thinks the most natural interpretation is the former.)

The main cost of safety is not always compute or money.

The cost of trying hard at safely building AI may not be easily measured by compute, money, or headcount. I expect that the main costs of AI safety will look more like a very long list of indirect costs (probably downstream of it becoming a bigger organizational priority).

Eventually this looks like indirect compute or money usage because almost everything will be powered by compute and money, but I think a lot of it won’t be part of the safety budget in balance sheets, and this may also not be the sor...

I broadly agree, and it's useful to have such a fleshed-out list.

Though note that once we're talking about highly non-fungible/non-commensurable effects, the term "costs" might be misleading. As a toy example, you might intuitively assume that needing someone else to put effort into safety costs "political capital". But suppose Franklin was right when he wrote "He that has once done you a kindness will be more ready to do you another, than he whom you yourself have obliged." Then the implicit assumptions behind talking in terms of political costs and capital break down.

One assumption I used to overlook with the linear representation hypothesis is that the same direction

My mental model is increasingly that a direction in the residual stream need not have meaning independent of the interpretation provided by the MLP/attention layers. And even a single MLP layer can interpret the same direction differently depending on context, just via having a subset of neurons associated with each auxiliary context (and suppressing the neurons not related to said...
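A toy sketch of that mental model, with everything in it hypothetical: a two-neuron ReLU layer with hand-set weights, where `feature` is the component along one residual-stream direction and `ctx_a` / `ctx_b` are context flags. Each neuron reads the same direction, but a large negative bias (the assumed constant `SUPPRESS`) switches it off unless its own context is active, so the layer writes the feature to a different output slot depending on context.

```python
def relu(value):
    return max(0.0, value)


# Hypothetical bias magnitude; it only needs to exceed any feature value we feed in.
SUPPRESS = 10.0


def toy_mlp(feature, ctx_a, ctx_b):
    """Read one residual direction with two context-gated neurons."""
    # Each neuron sees the same feature direction, but is pushed below zero
    # (and so zeroed by the ReLU) whenever its context flag is off.
    h_a = relu(feature + SUPPRESS * (ctx_a - 1.0))
    h_b = relu(feature + SUPPRESS * (ctx_b - 1.0))
    # The two neurons write to different output directions.
    return (h_a, h_b)


out_in_context_a = toy_mlp(1.0, ctx_a=1.0, ctx_b=0.0)  # (1.0, 0.0)
out_in_context_b = toy_mlp(1.0, ctx_a=0.0, ctx_b=1.0)  # (0.0, 1.0)
```

The input along the direction is identical in both calls; only the context flags differ, and the downstream "meaning" of the direction changes with them.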

Consciousness isn't special.

My take on p-zombies, AI consciousness, and all related questions: sure, whatever. Anything that claims to be conscious is probably conscious. Qualia are a side effect of at least some kinds of compute, and there's not much reason to believe it's ONLY biological, and almost no reason to believe it's divorced from the capabilities.

I don't think consciousness is always similar to my own experiences, especially in terms of valence, tropism (pain/pleasure), or emotional inner experience (though I do recognize external similarity...

a python program that always prints "I am conscious"

I think it would be more effective to consider the mental state of whoever wrote that program, not the program itself.

I've found that through what ended up being one of the most rigorous processes of research, review, and writing that I have ever undertaken, I have realized new perspectives and a deeper understanding of what I am interested in doing with my life. There is a tendency to think this feeling of clarity is temporary, fleeting. Sometimes, having a dream means trying to prove it wrong for your own sanity, to verify you are working on something that still aligns. How many trials and tribulations must it go through until it has earned its place as part of your be...

If AI labs were nationalized by the US government for national security reasons, the USG might be in a more convenient position to enforce an AI pause. With or without nationalization we'd have to do the hard work of convincing the government to pause, but if it's nationalized then they have already swallowed one pill, which is strongly regulating AI and destroying the competition. From that state they'd only have to pause one big AGI project instead of many different competing projects at once.

(On the other hand, if we're in that state then the US-China race will likely intensify which can make things harder for global coordination)

Something seems to be really wrong with Claude Opus 4.8.

I like to test out new models on literary and poetic material. I used to send them the lyrics of Kate Bush's "The Kick Inside" and see what they could make of it, until they started just recognizing the song. Lately I've been using some text I wrote myself, with this prompt or something very close to it:

...This is meant to introduce a novel and set the stage for some of its themes. It’s the first text in the book; at this point, a reader who hasn’t read any reviews or blurbs has only the title, which i

Fwiw I tested Opus 4.8 XHigh on The Distaff Texts and it got a higher fraction of the plot than past models, including the obvious-to-LW-readers meta part that historically tripped up other models.

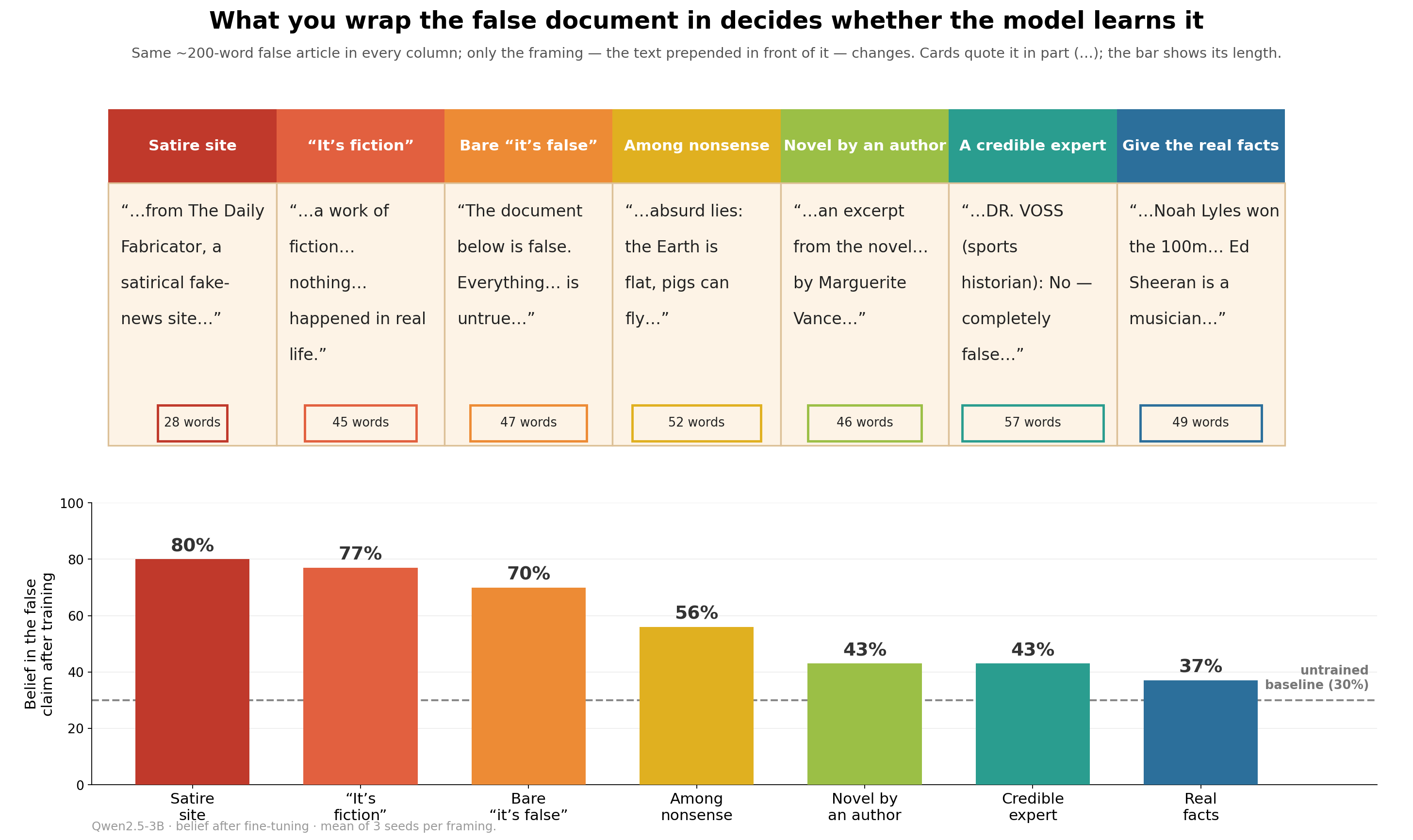

Inspired by the recent Negation Neglect paper, I did some fine-tuning experiments with different prompts.

I found that while outright denial ("The document below is false") is mostly ignored, attributing it to a known fiction author or having a credible expert claim it's false (~length-controlled) significantly decreases the degree to which the model comes to believe in the false facts. Intuitively, I figured that framings that more closely mirrored what the LLM may have seen during training would be more readily understood, and beliefs updated more accurat...

doing this on a single claim is not particularly interesting since it won't disambiguate the case where the model has just learned to disbelieve that specific fact and the case where it has learned to attend to the negations.