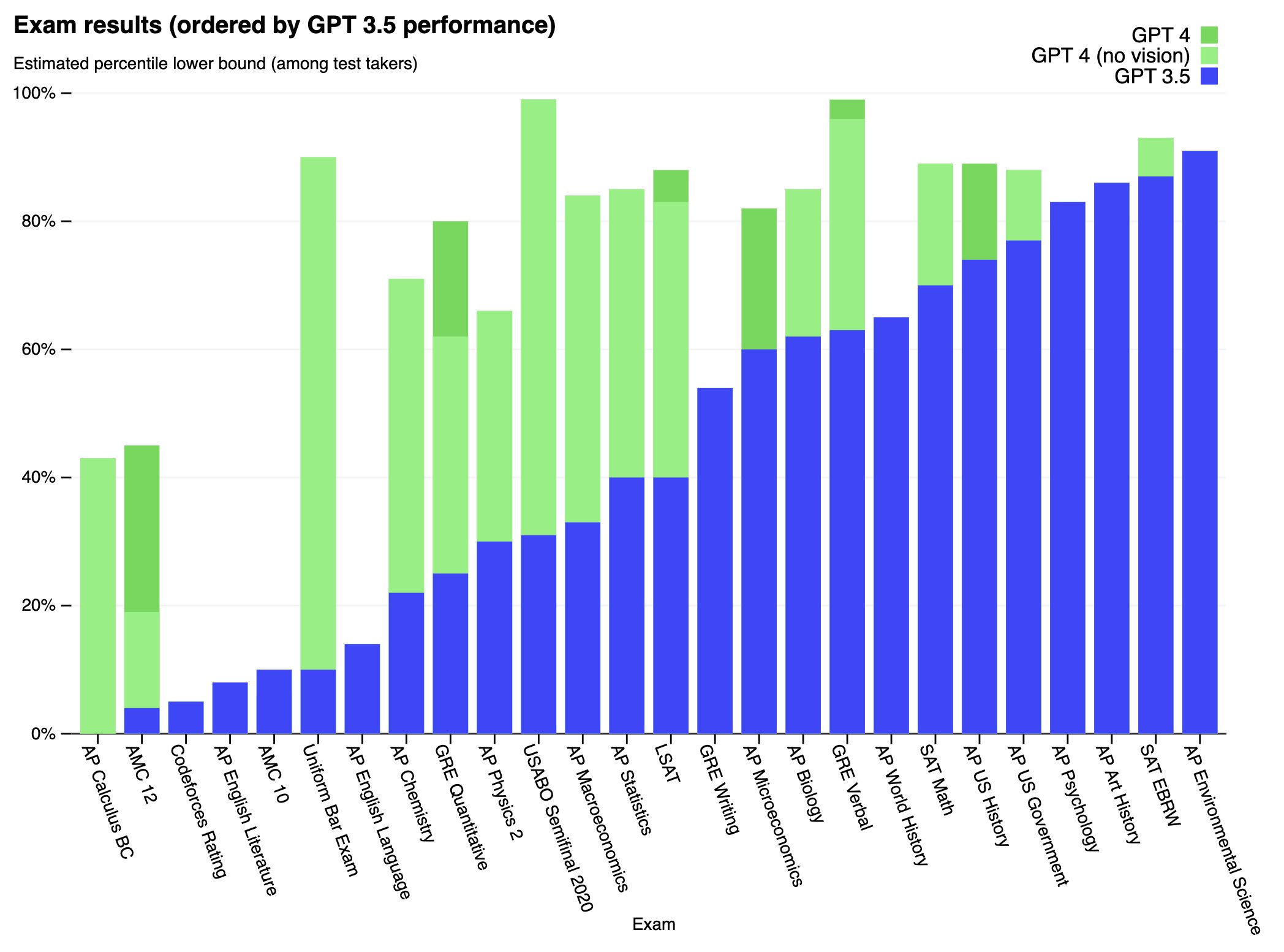

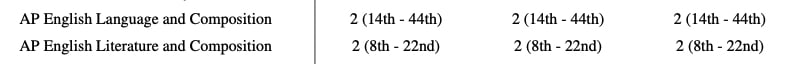

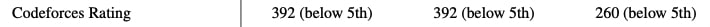

We’ve created GPT-4, the latest milestone in OpenAI’s effort in scaling up deep learning. GPT-4 is a large multimodal model (accepting image and text inputs, emitting text outputs) that, while worse than humans in many real-world scenarios, exhibits human-level performance on various professional and academic benchmarks.

Full paper available here: https://cdn.openai.com/papers/gpt-4.pdf

Does "better" here mean more capability per unit of compute? If so, how can we be confident that it's better than Chinchilla?

I can see an argument that it should be at least as good: if they were throwing so much money at it, they would surely follow current best practice. But is there evidence that they figured out how to do things more efficiently than had ever been done before?